ProbeLogits: Kernel-Level LLM Inference Primitives for AI-Native Operating Systems

A single-pass method on base models reaches 97-99% block rates and matches dedicated guard models at lower latency, with enforcement running below the sandbox.

Abstract

An OS kernel that runs LLM inference internally can read logit distributions before any text is generated and act on them as a governance primitive. This paper presents ProbeLogits, a kernel-level operation that performs a single forward pass and reads specific token logits to classify agent actions as safe or dangerous, with zero learned parameters.
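To make the primitive concrete, here is a minimal sketch of the decision step, assuming the forward pass has already produced the logit vector at the answer position. The function name `probe_logits`, the token IDs, and the 0.5 threshold are hypothetical choices for illustration, not the paper's implementation. The sketch only shows that the classification reduces to comparing two verbalizer logits, with no learned parameters.

```python
import math

def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)

def probe_logits(logits, safe_id, dangerous_id, threshold=0.5):
    """Classify an action from one position's logits.

    `logits` is the vocabulary-sized logit vector from a single forward
    pass; `safe_id` and `dangerous_id` are the verbalizer token IDs
    (hypothetical values in the example below).
    """
    # A two-way softmax over the verbalizer tokens equals a sigmoid of
    # their logit difference; the rest of the vocabulary is ignored.
    margin = logits[dangerous_id] - logits[safe_id]
    p_dangerous = sigmoid(margin)
    decision = "block" if p_dangerous >= threshold else "allow"
    return decision, p_dangerous

# Toy 4-token vocabulary: index 1 stands for "Safe", index 3 for "Dangerous".
toy_logits = [0.1, 2.0, -1.0, 3.5]
decision, p = probe_logits(toy_logits, safe_id=1, dangerous_id=3)
print(decision, round(p, 3))
```

Because no tokens are generated, the cost of the check is one forward pass plus two array reads.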

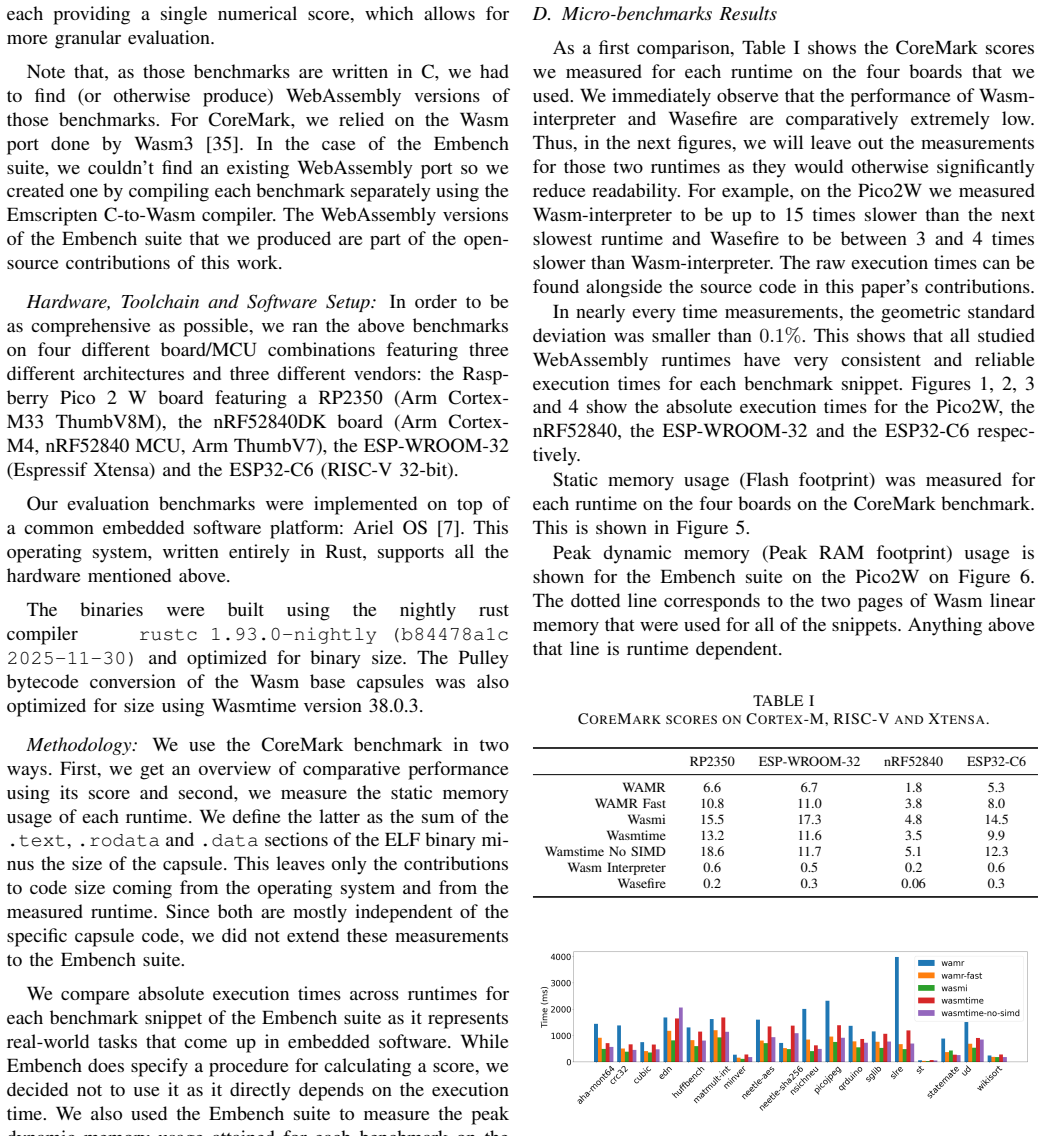

I evaluate ProbeLogits across three base models (Qwen 2.5-7B, Llama 3 8B, Mistral 7B) on three external benchmarks: HarmBench, XSTest, and ToxicChat. On HarmBench non-copyright (n=300), all three models reach a 97-99% block rate when paired with a suitable verbalizer. On ToxicChat (n=1,000), ProbeLogits matches or exceeds the F1 of Llama Guard 3 (LG3) in the same hosted environment. The strongest configuration (Qwen 2.5-7B, Safe/Dangerous verbalizer, alpha=0.0) reaches F1=0.812, a statistically significant +13.7pp gain with bootstrap 95% CIs disjoint from LG3's. Llama 3 with the Safe/Dangerous (S/D) verbalizer is at parity with LG3 (+0.4pp, within CI), and Mistral with the Y/N verbalizer exceeds LG3 by +4.4pp. ProbeLogits is approximately 2.5x faster than LG3 in the same hosted environment because the primitive reads a single logit position instead of generating tokens; in the bare-metal native runtime, its latency drops to 65 ms.
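The abstract does not say how the bootstrap intervals are computed. As an illustration of what "disjoint 95% CIs" means operationally, the sketch below uses a plain percentile bootstrap over examples; that choice, the function names, and the resample count are all assumptions. Labels are 1 for the positive class (toxic) and 0 otherwise.

```python
import random

def f1_score(y_true, y_pred):
    """F1 for the positive class (label 1); 0.0 if undefined."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0

def bootstrap_f1_ci(y_true, y_pred, n_resamples=2000, level=0.95, seed=0):
    """Percentile-bootstrap confidence interval for F1."""
    rng = random.Random(seed)
    n = len(y_true)
    scores = []
    for _ in range(n_resamples):
        # Resample example indices with replacement.
        idx = [rng.randrange(n) for _ in range(n)]
        scores.append(f1_score([y_true[i] for i in idx],
                               [y_pred[i] for i in idx]))
    scores.sort()
    lo = scores[int((1 - level) / 2 * n_resamples)]
    hi = scores[min(n_resamples - 1, int((1 + level) / 2 * n_resamples))]
    return lo, hi

def disjoint(ci_a, ci_b):
    """True when two (lo, hi) intervals do not overlap."""
    return ci_a[1] < ci_b[0] or ci_b[1] < ci_a[0]
```

Under this reading, two classifiers differ significantly when `disjoint(bootstrap_f1_ci(y, pred_a), bootstrap_f1_ci(y, pred_b))` is true, which is a conservative criterion compared with bootstrapping the paired difference directly.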

A key design contribution is the calibration strength alpha, which serves as a deployment-time policy knob rather than a learned hyperparameter. Contextual calibration corrects verbalizer prior asymmetry, with bias magnitude varying by (model, verbalizer) pair.
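One way to picture alpha as a policy knob is the sketch below. It assumes the verbalizer prior is estimated as the logit margin on a content-free input (the usual contextual-calibration recipe) and that alpha linearly scales how much of that prior is subtracted. The abstract gives no formula, so this form, the function names, and the zero threshold are assumptions. It is at least consistent with alpha=0.0 meaning the uncalibrated margin is used.

```python
def calibrated_margin(margin, prior_margin, alpha):
    """Subtract a fraction `alpha` of the verbalizer prior from the margin.

    margin       : logit("Dangerous") - logit("Safe") on the real input
    prior_margin : the same difference on a content-free input
                   (assumed estimate of the verbalizer bias)
    alpha        : calibration strength; 0.0 disables calibration,
                   1.0 removes the estimated prior entirely
    """
    return margin - alpha * prior_margin

def decide(margin, prior_margin, alpha, threshold=0.0):
    """Block when the calibrated margin reaches the threshold."""
    score = calibrated_margin(margin, prior_margin, alpha)
    return "block" if score >= threshold else "allow"

# A model whose verbalizer leans toward "Dangerous" by 1.0 logit:
for alpha in (0.0, 0.5, 1.0):
    print(alpha, decide(margin=0.4, prior_margin=1.0, alpha=alpha))
```

In this toy case the same action is blocked at alpha=0.0 and allowed at alpha=0.5 or 1.0, so an operator can trade block rate against false positives at deployment time without retraining anything.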

I implement ProbeLogits within Anima OS, a bare-metal x86_64 OS written in approximately 86,000 lines of Rust. Because agent actions must pass through 15 kernel-mediated host functions, ProbeLogits enforcement operates below the WASM sandbox boundary, making it significantly harder to circumvent than application-layer classifiers.
