
PubSwap: Public-Data Off-Policy Coordination for Federated RLVR

Pith reviewed 2026-05-10 16:00 UTC · model grok-4.3

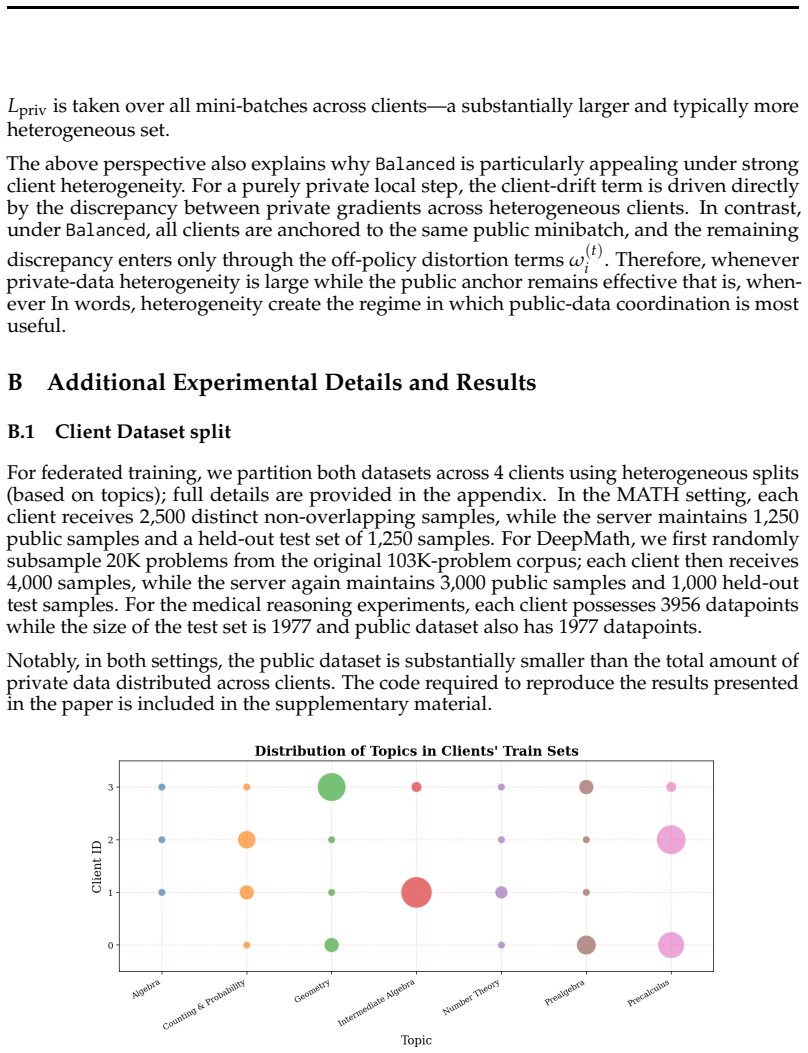

The pith

Public data response swaps enable better coordination in federated RLVR for reasoning post-training.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central claim is that a federated RLVR framework combining LoRA-based local updates with public-data off-policy coordination achieves cross-client alignment without exposing private data. A small shared public dataset supplies response-level signals, and locally incorrect responses are replaced with globally correct ones. The paper reports consistent improvements over baselines across mathematical and medical reasoning benchmarks and models.

What carries the argument

PubSwap: the selective replacement of locally incorrect responses with globally correct ones drawn from public data during off-policy steps. It serves as a lightweight anchor for global alignment while preserving local policy fidelity.
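The swap can be pictured with a minimal Python sketch. It is an illustration under stated assumptions, not the paper's implementation: the `Response` type, the `pubswap` function, and the donor pool are hypothetical names, and each response is assumed to carry a correctness label already produced by a verifier. How many responses are swapped per prompt is a design choice the text here does not specify; this sketch swaps as many as the donor pool allows.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Response:
    text: str
    correct: bool  # verifiable-reward outcome, assumed precomputed
    source: str    # "local" or a (hypothetical) donor client id


def pubswap(local: List[Response], donors: List[Response]) -> List[Response]:
    """Replace locally incorrect responses with donor-correct ones.

    Correct local responses are always kept, so the group stays as close
    to the local policy as possible. The group size is preserved.
    """
    pool = [d for d in donors if d.correct]  # only correct donor responses
    swapped = []
    for r in local:
        if not r.correct and pool:
            swapped.append(pool.pop(0))  # use each donor response once
        else:
            swapped.append(r)
    return swapped


# Toy responses to one public prompt (invented for illustration).
local = [
    Response("x = 4", True, "local"),
    Response("x = 5", False, "local"),
    Response("x = 7", False, "local"),
]
donors = [
    Response("x = 4 via factoring", True, "client_2"),
    Response("x = 9", False, "client_3"),
]
group = pubswap(local, donors)
print([r.source for r in group])
```

Only one donor response is correct, so exactly one incorrect local response is replaced and the other stays in the group.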

If this is right

- LoRA-based local adaptation lowers communication cost, because clients synchronize low-rank adapters instead of the full model.

- Public data steps supply a lightweight global anchor without any private data exchange.

- Selective response replacement reduces client drift under heterogeneous local data distributions.

- Training stays closer to each client's local policy while still gaining from cross-client signals.

- The method produces consistent gains on mathematical and medical reasoning benchmarks.
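The communication argument in the first bullet can be made concrete with a small calculation. This is a sketch with assumed numbers: the layer width of 4096 and the rank of 16 are illustrative and are not taken from the paper. It relies only on the standard LoRA parameterization, in which a frozen weight matrix of shape `d_out x d_in` is adapted by a product of two low-rank factors.

```python
def full_matrix_params(d_in: int, d_out: int) -> int:
    """Number of entries in a dense weight matrix."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Entries in the two LoRA factors: A is rank x d_in, B is d_out x rank."""
    return rank * (d_in + d_out)


# Illustrative sizes only (assumptions, not values from the paper).
d_in = d_out = 4096
rank = 16

full = full_matrix_params(d_in, d_out)
lora = lora_params(d_in, d_out, rank)
reduction = full / lora
print(f"full: {full:,}  lora: {lora:,}  reduction: {reduction:.0f}x")
```

For these assumed sizes the adapter holds 131,072 values against 16,777,216 for the dense matrix, so each synchronization of that layer sends 128 times fewer parameters.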

Where Pith is reading between the lines

- The same public-data swap pattern could be tested in other federated reinforcement learning settings that do not involve reasoning.

- Success likely depends on the public dataset remaining representative as the number of clients or data heterogeneity grows.

- Combining PubSwap with additional efficiency methods such as quantization could be explored for very large models.

- The technique might generalize to non-reasoning tasks where verifiable rewards are available.

Load-bearing premise

A small shared public dataset supplies sufficiently representative and high-quality response-level signals to align heterogeneous private clients without introducing bias or reducing local policy fidelity.

What would settle it

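An ablation that holds all other components fixed. If the performance gains disappear when the public-data steps are removed, or when the public dataset is replaced by random or mismatched data, then the coordination mechanism is what drives them. If the gains persist, it is not.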


Original abstract

Reasoning post-training with reinforcement learning from verifiable rewards (RLVR) is typically studied in centralized settings, yet many realistic applications involve decentralized private data distributed across organizations. Federated training is a natural solution, but scaling RLVR in this regime is challenging: full-model synchronization is expensive, and performing many local steps can cause severe client drift under heterogeneous data. We propose a federated RLVR framework that combines LoRA-based local adaptation with public-data-based off-policy steps to improve both communication efficiency and cross-client coordination. In particular, a small shared public dataset is used to periodically exchange and reuse response-level training signals across organizations, providing a lightweight anchor toward a more globally aligned objective without exposing private data. Our method selectively replaces locally incorrect responses with globally correct ones during public-data steps, thereby keeping training closer to the local policy while still benefiting from cross-client coordination. Across mathematical and medical reasoning benchmarks and models, our method consistently improves over standard baselines. Our results highlight a simple and effective recipe for federated reasoning post-training: combining low-rank communication with limited public-data coordination.
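Why a single swapped response can matter is easiest to see through group-relative advantages of the kind used in GRPO. The sketch below is an assumption-laden illustration, not the paper's objective: it uses binary rewards, the population standard deviation, and a small `eps` for numerical safety, and the function name `group_advantages` is hypothetical.

```python
from statistics import mean, pstdev
from typing import List


def group_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """Normalize rewards within one prompt's response group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; some implementations use sample std
    return [(r - mu) / (sigma + eps) for r in rewards]


# A group where every local response is wrong carries no learning signal.
all_wrong = [0.0, 0.0, 0.0, 0.0]
adv_before = group_advantages(all_wrong)

# After one incorrect response is replaced by a correct donor response.
after_swap = [1.0, 0.0, 0.0, 0.0]
adv_after = group_advantages(after_swap)

print(adv_before)
print([round(a, 3) for a in adv_after])
```

In the all-wrong group every advantage is zero, so the prompt contributes nothing to the update. After the swap, the correct response gets a positive advantage and the remaining ones a negative advantage.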

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes PubSwap, a federated RLVR framework for reasoning post-training that combines LoRA-based local client updates with periodic off-policy coordination steps on a small shared public dataset. The method selectively replaces locally incorrect responses with globally correct ones during public-data steps to mitigate client drift while preserving local policy fidelity and avoiding private data exposure. The central empirical claim is that this yields consistent improvements over standard federated RLVR baselines across mathematical and medical reasoning benchmarks and models.

Significance. If the reported gains hold under rigorous controls, the work supplies a practical, communication-efficient recipe for decentralized RLVR that leverages limited public data as a coordination anchor. This could be relevant for privacy-sensitive domains such as medical reasoning where full-model synchronization is prohibitive. The approach is presented as a lightweight heuristic rather than a theoretically guaranteed unbiased estimator.

major comments (2)

- [§4] §4 (Experiments): The abstract asserts 'consistent improvements' over baselines, yet the provided text supplies no quantitative tables, exact metrics (e.g., accuracy deltas), number of random seeds, statistical tests, or ablation results on the public-data swap frequency and size. Without these, it is impossible to assess whether the central claim is supported or whether gains are attributable to the coordination mechanism versus other factors such as LoRA rank or local step count.

- [§3.2] §3.2 (Public-data off-policy step): The selective replacement of incorrect local responses with correct public ones is described as keeping training 'closer to the local policy.' However, this introduces a potential bias if the public dataset is not representative of the heterogeneous private distributions; the paper should quantify how response-level signals from the public set affect local policy fidelity (e.g., via KL divergence or reward distribution shifts) and include an ablation on public dataset size/quality.
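The fidelity measurement requested in the second major comment could be approached along the following lines. This is a minimal sketch, assuming the local policy's next-token distribution can be read out before and after a public-data step. The function name and the toy distributions are hypothetical, and a real measurement would average over tokens and prompts.

```python
import math
from typing import Sequence


def kl_divergence(p: Sequence[float], q: Sequence[float], eps: float = 1e-12) -> float:
    """KL(p || q) in nats for two discrete distributions on the same support."""
    if len(p) != len(q):
        raise ValueError("distributions must have the same length")
    # Terms with p_i == 0 contribute nothing; q_i is floored to avoid log(0).
    return sum(pi * math.log(pi / max(qi, eps)) for pi, qi in zip(p, q) if pi > 0.0)


# Toy next-token distributions (invented for illustration).
before = [0.70, 0.20, 0.10]  # local policy before the public-data step
after = [0.60, 0.30, 0.10]   # local policy after the public-data step

drift = kl_divergence(before, after)
print(f"KL(before || after) = {drift:.4f} nats")
```

A value near zero would indicate that the public-data step left the local policy almost unchanged, while larger values would indicate drift away from it.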

minor comments (2)

- [§3] Notation for the off-policy update (e.g., the exact form of the replacement rule) should be formalized with an equation rather than prose description to aid reproducibility.

- [Abstract] The abstract lists 'mathematical and medical reasoning benchmarks' without naming them (e.g., GSM8K, MedQA); adding the specific datasets and model sizes in the abstract would improve clarity.
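As an illustration of what the first minor comment asks for, the replacement rule could be written along the following lines. This is a hypothetical formalization, not the paper's notation. It assumes client $i$ samples $K$ responses $y_{i,1}, \dots, y_{i,K}$ to a public prompt $x$, a binary verifiable reward $r(x, y) \in \{0, 1\}$, and a pool $\mathcal{D}(x)$ of responses from other clients with reward 1.

```latex
\tilde{y}_{i,k} =
\begin{cases}
  y_{i,k}, & \text{if } r(x, y_{i,k}) = 1 \text{ or } \mathcal{D}(x) = \emptyset, \\
  d_k \in \mathcal{D}(x), & \text{otherwise},
\end{cases}
\qquad k = 1, \dots, K.
```

Under this reading the update on prompt $x$ uses the group $(\tilde{y}_{i,1}, \dots, \tilde{y}_{i,K})$. How $d_k$ is chosen from the pool, and how many replacements are allowed per group, would still need to be specified.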

Simulated Author's Rebuttal

Thank you for the constructive feedback. We address each major comment below and will revise the manuscript to incorporate the requested details and analyses.

Point-by-point responses

- Referee: [§4] §4 (Experiments): The abstract asserts 'consistent improvements' over baselines, yet the provided text supplies no quantitative tables, exact metrics (e.g., accuracy deltas), number of random seeds, statistical tests, or ablation results on the public-data swap frequency and size. Without these, it is impossible to assess whether the central claim is supported or whether gains are attributable to the coordination mechanism versus other factors such as LoRA rank or local step count.

  Authors: We agree that the current manuscript version does not provide sufficient quantitative details to fully support the claims. In the revision we will add tables with exact accuracy metrics and deltas across the mathematical and medical benchmarks, report results averaged over 3 random seeds with standard deviations, include paired t-tests for statistical significance, and present ablations on swap frequency and public dataset size to isolate the contribution of the coordination mechanism.

  Revision: yes

- Referee: [§3.2] §3.2 (Public-data off-policy step): The selective replacement of incorrect local responses with correct public ones is described as keeping training 'closer to the local policy.' However, this introduces a potential bias if the public dataset is not representative of the heterogeneous private distributions; the paper should quantify how response-level signals from the public set affect local policy fidelity (e.g., via KL divergence or reward distribution shifts) and include an ablation on public dataset size/quality.

  Authors: We appreciate the point on potential bias. In the revised manuscript we will add measurements of KL divergence between the local policy before and after public-data steps as well as reward distribution shifts on private data to quantify effects on policy fidelity. We will also include ablations varying public dataset size and quality (including reduced and degraded versions) to assess robustness.

  Revision: yes
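The paired t-test promised in the first response can be sketched in a few lines. The accuracies below are made-up placeholders, not results from the paper, and the function name is hypothetical. The statistic itself is the standard one: the mean per-seed difference divided by its standard error.

```python
import math
from statistics import mean, stdev
from typing import Sequence


def paired_t_statistic(a: Sequence[float], b: Sequence[float]) -> float:
    """t statistic for paired samples, with len(a) - 1 degrees of freedom."""
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need two equally sized samples with at least 2 pairs")
    diffs = [x - y for x, y in zip(a, b)]
    sd = stdev(diffs)  # sample standard deviation of the differences
    if sd == 0.0:
        raise ValueError("differences have zero variance")
    return mean(diffs) / (sd / math.sqrt(len(diffs)))


# Placeholder accuracies for three seeds (invented for illustration).
method = [60.0, 61.0, 59.5]
baseline = [58.0, 58.5, 58.5]

t = paired_t_statistic(method, baseline)
print(f"t = {t:.3f} with {len(method) - 1} degrees of freedom")
```

With three seeds there are only two degrees of freedom, for which the two-sided 5% critical value is about 4.30. In this toy example the method wins on every seed yet the statistic stays below that threshold, which suggests that more than three seeds may be needed for the test to be informative.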

Circularity Check

No significant circularity: purely empirical method proposal

Full rationale

The paper describes an empirical federated RLVR algorithm (LoRA local updates + periodic public-data off-policy response swaps) whose central claims are performance improvements on math and medical reasoning benchmarks. No derivation chain, equations, fitted parameters, or predictions are presented that could reduce to self-definitions, ansatzes, or self-citations. The method is introduced as a lightweight heuristic without uniqueness theorems or load-bearing prior results from the same authors. Experimental outcomes are independent of the method's internal construction and do not rely on quantities defined by the method itself.



Excerpts from the paper

Define the one-replacement GRPO distortion

$$\beta_i^{(t)}(x;\theta) := \sup_{(Y,\tilde{Y})} \mathbb{E}\, G(\theta;x,\tilde{Y}) - G(\theta;x,Y),$$

where the supremum is over all pairs $(Y,\tilde{Y})$ that differ in exactly one coordinate (which happens when one local response in $Y$ is replaced by a donor-correct response in $\tilde{Y}$), while the other $K-1$ responses are identical. If $M_i^{(t)}(x,\theta) = m$, let $Y_i^{(0)}(x), Y_i^{(1)}(x),$ ...

... across different numbers of local steps (τ). The Balanced method and a swap period of 2 is used here for response aggregation with PubSwap.

| Method | τ=10 | τ=40 | τ=90 | τ=120 |
|---|---|---|---|---|
| Base model | 49.2 | 49.2 | 49.2 | 49.2 |
| FedAvg-GRPO | 59.7 | 58.9 | 57.9 | 57.7 |
| FedAvg-PubSwap | 58.3 | 59.5 | 58.5 | 58.1 |

Table 8: Best checkpoint pass@1 performance of the model Llama3.2-3B-Instruct on medical reasoning...
