Learning Parameterized Nonlinear Elasticity on Curved Surfaces

Pith reviewed 2026-05-10 14:38 UTC · model grok-4.3

The pith

A physics-informed neural network learns a continuous family of nonlinear elastic equilibria on curved surfaces by enforcing the equations in its loss function.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The authors establish that a physics-informed neural network trained once on the nonlinear elasticity equations for a one-dimensional single disclination on a spheroidal surface accurately reproduces both exact and numerical reference solutions, including for values of the geometric and material parameters that were withheld from training. This demonstrates that the network has learned a continuous representation of elastic equilibria across geometry and material regimes rather than isolated instances.

What carries the argument

A physics-informed neural network whose loss function directly penalizes violations of the nonlinear elasticity equations and boundary conditions on the curved manifold. Geometric and material parameters are supplied as additional network inputs, so one trained model covers the full parameter family.
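The loss construction described here can be illustrated on a toy problem. The sketch below is not the paper's elasticity system: it assumes a stand-in equation u'' + k^2 u = 0 on [0, 1] with boundary values u(0) = 0 and u(1) = sin(k), treats the parameter k as an extra model input, and uses a finite difference where a real PINN would use automatic differentiation. The names `physics_loss`, `w_pde`, and `w_bc` are hypothetical.

```python
import math

def second_derivative(model, x, k, h=1e-3):
    """Central finite difference in x (stand-in for automatic differentiation)."""
    return (model(x + h, k) - 2.0 * model(x, k) + model(x - h, k)) / (h * h)

def physics_loss(model, xs, ks, w_pde=1.0, w_bc=1.0):
    """Weighted sum of mean squared equation residual and boundary mismatch."""
    pde_terms, bc_terms = [], []
    for k in ks:
        for x in xs:
            # Toy stand-in equation u'' + k^2 u = 0, NOT the elasticity equations.
            residual = second_derivative(model, x, k) + k * k * model(x, k)
            pde_terms.append(residual ** 2)
        # Toy boundary conditions u(0) = 0 and u(1) = sin(k).
        bc_terms.append(model(0.0, k) ** 2)
        bc_terms.append((model(1.0, k) - math.sin(k)) ** 2)
    pde = sum(pde_terms) / len(pde_terms)
    bc = sum(bc_terms) / len(bc_terms)
    return w_pde * pde + w_bc * bc

def exact_family(x, k):
    """Exact solution of the toy problem for every k."""
    return math.sin(k * x)

def wrong_family(x, k):
    """Satisfies u(0) = 0 but neither the equation nor u(1) = sin(k)."""
    return k * x

xs = [i / 20 for i in range(1, 20)]   # interior collocation points
ks = [0.5, 1.0, 1.5, 2.0]             # sampled parameter values
loss_exact = physics_loss(exact_family, xs, ks)
loss_wrong = physics_loss(wrong_family, xs, ks)
```

Because the parameter is sampled alongside the collocation points, a model that drives this loss to zero has to satisfy the equation for every sampled k at once, which is the mechanism the review attributes to the paper.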

If this is right

- Traditional per-parameter reinitialization is no longer required, so families of solutions can be explored continuously rather than pointwise.

- The same trained model applies to any point in the parameter space, including combinations excluded from training, for the single-disclination spheroid benchmark.

- The framework extends in principle to fully two-dimensional and multi-defect configurations on curved surfaces without requiring symmetry reductions.

- Equilibrium structures of protein shells and other curved elastic networks become accessible for continuous variation of curvature and material parameters.
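The claim about combinations excluded from training presupposes a clean split of the parameter grid. A minimal sketch of such a split follows, assuming a grid over the spheroid aspect parameter and a Poisson ratio. The function name, the Poisson-ratio values, and the holdout fraction are hypothetical and are not taken from the paper.

```python
import itertools
import random

def split_parameter_grid(betas, nus, holdout_fraction, seed=0):
    """Shuffle all (beta, nu) combinations and reserve a fraction for testing."""
    combos = list(itertools.product(betas, nus))
    rng = random.Random(seed)          # fixed seed so the split is reproducible
    rng.shuffle(combos)
    n_holdout = max(1, round(holdout_fraction * len(combos)))
    heldout = combos[:n_holdout]       # never shown to the network
    train = combos[n_holdout:]         # sampled during training
    return train, heldout

# Hypothetical parameter values, for illustration only.
betas = [1.0, 2.0, 3.0]
nus = [0.2, 0.3, 0.4]
train, heldout = split_parameter_grid(betas, nus, holdout_fraction=0.25)
```

Holding out whole combinations, rather than individual collocation points, is what makes a later comparison a test of generalization across parameters.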

Where Pith is reading between the lines

- The same loss-construction strategy could be applied to other partial-differential-equation problems on manifolds where parameters vary, such as diffusion or wave propagation on curved geometries.

- Because the network accepts parameters as inputs and generalizes to unseen values, it opens a route to solving inverse problems in which material or geometric parameters are inferred from observed shapes.

- Computational cost for design or stability studies of elastic shells may drop because one training run replaces many separate traditional solves.
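The inverse-problem route mentioned in this list can be sketched as a search over the parameter input of a trained surrogate. In the example below, `surrogate` is a hypothetical stand-in (a closed-form function rather than a trained network), the observations are synthetic, and a plain grid search replaces the gradient-based optimization one would likely use in practice.

```python
import math

def surrogate(x, k):
    """Hypothetical stand-in for a trained parameter-conditioned network."""
    return math.sin(k * x)

def misfit(k, xs, observed):
    """Sum of squared differences between surrogate output and observations."""
    return sum((surrogate(x, k) - y) ** 2 for x, y in zip(xs, observed))

def infer_parameter(xs, observed, candidates):
    """Return the candidate parameter with the smallest misfit."""
    return min(candidates, key=lambda k: misfit(k, xs, observed))

xs = [i / 10 for i in range(11)]
k_true = 1.3                                      # synthetic ground truth
observed = [surrogate(x, k_true) for x in xs]     # synthetic, noise-free data
candidates = [0.5 + 0.1 * i for i in range(21)]   # 0.5, 0.6, ..., 2.5
k_hat = infer_parameter(xs, observed, candidates)
```

No retraining happens during the search. Each candidate costs only a forward evaluation, which is the source of the cost saving the review speculates about.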

Load-bearing premise

The premise is that embedding the complete nonlinear elasticity equations and boundary conditions into the neural network loss is enough to guarantee accurate solutions across the entire space of geometry and material parameters, without the network missing important physical regimes or needing case-by-case adjustments.

What would settle it

A quantitative comparison in which the network's output for a new disclination charge or spheroid aspect ratio differs substantially from a high-resolution finite-element or exact solution computed independently for that same parameter value.
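Such a comparison could be scored with two standard metrics, a relative L2 error and a maximum pointwise error, evaluated at a held-out parameter value. The sketch below uses made-up sample arrays and a hypothetical tolerance. None of the numbers come from the paper.

```python
import math

def relative_l2_error(pred, ref):
    """||pred - ref||_2 / ||ref||_2 over matching sample points."""
    num = math.sqrt(sum((p - r) ** 2 for p, r in zip(pred, ref)))
    den = math.sqrt(sum(r ** 2 for r in ref))
    return num / den

def max_abs_error(pred, ref):
    """Largest pointwise deviation between prediction and reference."""
    return max(abs(p - r) for p, r in zip(pred, ref))

def exceeds_tolerance(pred, ref, tol):
    """True if the prediction misses the reference by more than tol (relative L2)."""
    return relative_l2_error(pred, ref) > tol

# Made-up values standing in for a reference solve and a network prediction.
ref = [1.0, 2.0, 2.0]
pred = [1.0, 2.0, 2.3]
rel = relative_l2_error(pred, ref)
worst = max_abs_error(pred, ref)
failed = exceeds_tolerance(pred, ref, tol=0.05)  # tol is a hypothetical threshold
```

Reporting both metrics matters because a small relative L2 error can hide a large localized error, for example near a defect core.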

Figures

read the original abstract

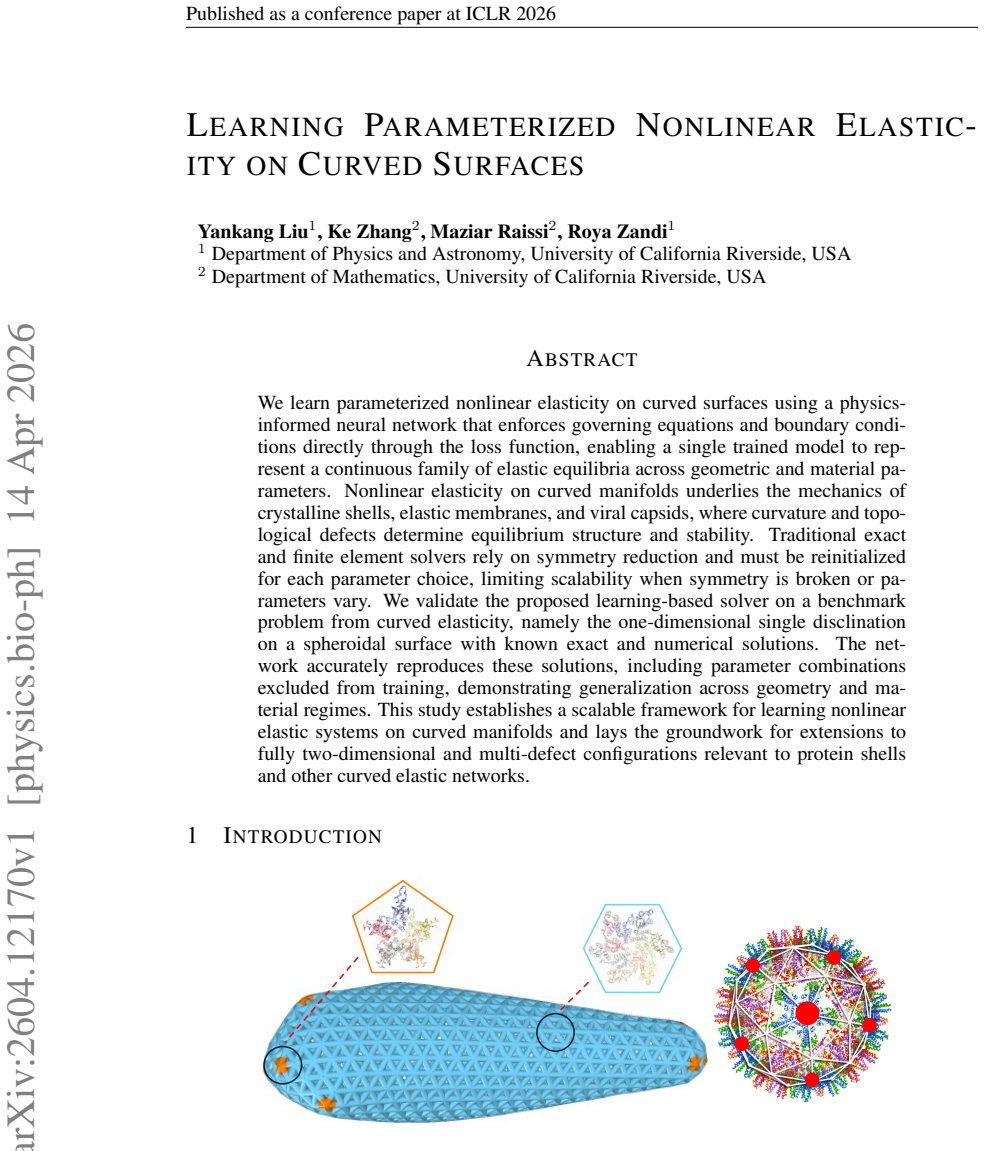

We learn parameterized nonlinear elasticity on curved surfaces using a physics-informed neural network that enforces governing equations and boundary conditions directly through the loss function, enabling a single trained model to represent a continuous family of elastic equilibria across geometric and material parameters. Nonlinear elasticity on curved manifolds underlies the mechanics of crystalline shells, elastic membranes, and viral capsids, where curvature and topological defects determine equilibrium structure and stability. Traditional exact and finite element solvers rely on symmetry reduction and must be reinitialized for each parameter choice, limiting scalability when symmetry is broken or parameters vary. We validate the proposed learning-based solver on a benchmark problem from curved elasticity, namely the one-dimensional single disclination on a spheroidal surface with known exact and numerical solutions. The network accurately reproduces these solutions, including parameter combinations excluded from training, demonstrating generalization across geometry and material regimes. This study establishes a scalable framework for learning nonlinear elastic systems on curved manifolds and lays the groundwork for extensions to fully two-dimensional and multi-defect configurations relevant to protein shells and other curved elastic networks.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes a physics-informed neural network (PINN) approach to solve parameterized nonlinear elasticity problems on curved surfaces. A single trained model enforces the governing PDEs and boundary conditions via the loss function to represent continuous families of elastic equilibria across geometric (e.g., spheroid ellipticity) and material parameters. Validation is performed on the benchmark 1D single-disclination problem on a spheroid, where the network is claimed to reproduce known exact/numerical solutions, including for parameter values withheld from training, thereby demonstrating generalization.

Significance. If quantitatively validated, the work offers a potentially scalable alternative to repeated traditional solvers for parameterized nonlinear problems on manifolds, with relevance to crystalline shells, viral capsids, and elastic membranes. The parameterization and generalization across geometry/material regimes, if supported by residual norms and held-out comparisons, would be a clear strength over per-instance reinitialization methods.

major comments (2)

- [Abstract] The central claim that the network 'accurately reproduces these solutions, including parameter combinations excluded from training' is unsupported by any reported quantitative error metrics, L2 residual norms, convergence rates, or direct comparisons against traditional solvers on the held-out parameter set; this evidence gap is load-bearing for the generalization assertion.

- [Method] The physics-informed loss is described as enforcing the full nonlinear elasticity equations and boundary conditions on curved manifolds, yet no explicit form is given for the intrinsic equilibrium operator (including metric, curvature, and Christoffel contributions) or the nonlinear strain measure; without these details or an ablation on loss weighting, it is unclear whether the residual captures the complete manifold physics or permits spurious solutions outside the training range.

minor comments (2)

- [Abstract] The abstract references 'known exact and numerical solutions' for the 1D disclination benchmark but provides no citations to the specific prior works containing those solutions.

- [Method] Notation for the curved-surface operators and parameter dependence could be clarified with an explicit equation for the residual loss term.
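For orientation, the geometric ingredients the referee asks about can be written out for a surface of revolution z = f(r). The block below is standard differential geometry for the metric ds^2 = (1 + f'(r)^2) dr^2 + r^2 dθ^2 and is offered as a sketch only. It is not the paper's full equilibrium operator, which also involves the reference metric and the nonlinear strain measure.

```latex
% Surface of revolution z = f(r) in polar coordinates (r, \theta)
ds^2 = \left(1 + f'(r)^2\right) dr^2 + r^2\, d\theta^2,
\qquad g_{rr} = 1 + f'(r)^2, \quad g_{\theta\theta} = r^2 .

% Nonzero Christoffel symbols of this metric
\Gamma^{r}_{rr} = \frac{f'(r)\, f''(r)}{1 + f'(r)^2}, \qquad
\Gamma^{r}_{\theta\theta} = -\frac{r}{1 + f'(r)^2}, \qquad
\Gamma^{\theta}_{r\theta} = \Gamma^{\theta}_{\theta r} = \frac{1}{r} .

% Gaussian curvature
K(r) = \frac{f'(r)\, f''(r)}{r \left(1 + f'(r)^2\right)^2} .
```

Any residual built on this surface has to carry these terms through its covariant derivatives, which is why their explicit form bears on whether the loss captures the full manifold physics.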

Simulated Author's Rebuttal

We thank the referee for their constructive feedback on our manuscript. We address each major comment below and have revised the manuscript to strengthen the presentation of quantitative evidence and methodological details.

- Referee: [Abstract] The central claim that the network 'accurately reproduces these solutions, including parameter combinations excluded from training' is unsupported by any reported quantitative error metrics, L2 residual norms, convergence rates, or direct comparisons against traditional solvers on the held-out parameter set; this evidence gap is load-bearing for the generalization assertion.

  Authors: We agree that the generalization claim requires quantitative support. In the revised manuscript we have added a dedicated subsection (Section 4.3) that reports L2 residual norms, pointwise maximum errors, and direct comparisons to both the known exact solutions and independent finite-element computations for all held-out parameter combinations. We also include convergence rates with respect to network depth and width. These additions directly substantiate the accuracy and generalization statements.

  Revision: yes

- Referee: [Method] The physics-informed loss is described as enforcing the full nonlinear elasticity equations and boundary conditions on curved manifolds, yet no explicit form is given for the intrinsic equilibrium operator (including metric, curvature, and Christoffel contributions) or the nonlinear strain measure; without these details or an ablation on loss weighting, it is unclear whether the residual captures the complete manifold physics or permits spurious solutions outside the training range.

  Authors: The explicit expressions for the manifold equilibrium operator (including the metric, curvature, and Christoffel symbols) and the nonlinear (Green-Lagrange) strain measure appear in Section 2.2, but we acknowledge they were not presented with sufficient detail. In the revision we have expanded this section with the complete component-wise residual formulas. We have also added an ablation study on the PDE and boundary-condition loss weights in the supplementary material, confirming that the trained solutions remain consistent with the known benchmarks across the tested parameter range and do not exhibit spurious behavior.

  Revision: yes

Circularity Check

Minor self-citation to PINN foundations; central PDE-enforcement claim remains independent of fitted outputs

The paper applies a standard physics-informed neural network whose loss directly penalizes residuals of the nonlinear elasticity PDEs (including metric, curvature, and strain terms on the manifold) plus boundary conditions. These governing equations are taken from established continuum mechanics on curved surfaces and are not defined in terms of the network outputs or fitted parameters. Generalization to held-out parameter values is demonstrated by comparison to independent exact/numerical reference solutions rather than by construction. Any citations to prior PINN methodology (including work by co-author Raissi) are used only to justify the training procedure and do not carry the load-bearing claim that the learned solutions satisfy the physics for unseen regimes. No self-definitional loops, fitted inputs presented as predictions, or ansatz smuggling appear in the derivation chain.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption: Nonlinear elasticity equations on curved manifolds with defects determine equilibrium structure and stability

- domain assumption: Physics-informed neural networks can enforce PDEs and boundary conditions through the loss function

Reference graph

Works this paper leans on

- ... doi: 10.1103/PhysRevLett.129.088001. URL https://link.aps.org/doi/10.1103/PhysRevLett.129.088001.

- [1] E. Efrati, E. Sharon, and R. Kupferman. Elastic theory of unconstrained non-Euclidean plates. Journal of the Mechanics and Physics of Solids, 57(4):762–775. ISSN 00225096. doi: 10.1016/j.jmps.2008.12.004.

- [2] Siyu Li, Polly Roy, Alex Travesset, and Roya Zandi. Why large icosahedral viruses need scaffolding proteins. Proceedings of the National Academy of Sciences, 115:10971–10976. doi: 10.1073/pnas.1807706115. URL https://www.pnas.org/doi/abs/10.1073/pnas.1807706115.

- [3] Siyu Li, Roya Zandi, and Alex Travesset. Elasticity in curved topographies: Exact theories and linear approximations. Physical Review E, 99:63005. doi: 10.1103/PhysRevE.99.063005. URL https://link.aps.org/doi/10.1103/PhysRevE.99.063005.

- [4] Yankang Liu, Siyu Li, Roya Zandi, and Alex Travesset. General solution for elastic networks on arbitrary curved surfaces in the absence of rotational symmetry. Physical Review E, 111:15423. doi: 10.1103/PhysRevE.111.015423. URL https://link.aps.org/doi/10.1103/PhysRevE.111.015423.

- [5] Alexander Yu. Morozov and Robijn F Bruinsma. Assembly of viral capsids, buckling, and the Asaro-Grinfeld-Tiller instability. Physical Review E, 81:41925.

- [7] Generalized thermodynamics of phase equilibria in scalar active matter

  ... 1103/PhysRevE.92.062403. URL http://link.aps.org/doi/10.1103/PhysRevE.92.062403 https://link.aps.org/doi/10.1103/PhysRevE.92.062403.

  M Raissi, P Perdikaris, and G E Karniadakis. Physics-informed neural networks: A deep learning framework for solving forward and inverse problems involving nonlinear partial differential equations. Journal of Computational P...

- [8] ISSN 0021-9991. doi: https://doi.org/10.1016/j.jcp.2018.10.045. URL https://www.sciencedirect.com/science/article/pii/S0021999118307125.

  A ELASTICITY THEORY

  This appendix presents the covariant elasticity theory and the explicit form of the governing equation 1 and the boundary condition with $\tau = 0$ under rotational symmetry. The free energy of a partially ...

- [9] ... or discrete delta function of Gaussian curvatures, like a cone ($q = 1$). Surfaces with vanishing bending rigidity have a constant curvature radius $R_1 = R_2 = 1/H_0$, forming a sphere. There is, therefore, no surface that simultaneously minimizes both the elastic and bending energies. The third term in equation 2, $F_{\mathrm{abs}} = -\Pi \hat{A} < 0$ with $\Pi$ the attractive interaction ...

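- [10] Next, we provide various quantities for the surfaces of revolution, defined by $x = r\cos\theta$, $y = r\sin\theta$, $z = f(r)$, with actual metric

  $$ds^2 = \left(1 + f'(r)^2\right) dr^2 + r^2\, d\theta^2. \tag{10}$$

  The isotropic reference metric reads

  $$d\bar{s}^2 = \bar{r}'(r)^2\, dr^2 + \alpha^2 \bar{r}(r)^2\, d\theta^2 = d\bar{r}^2 + \alpha^2 \bar{r}^2\, d\theta^2. \tag{11}$$

  Note that due to the rotational symmetry, the angular mapping is identical, $\bar{\theta}(\theta) = \theta$. The nonzero Christoffe...

  Stress components (fragment): ... $\sigma_{r\theta} = \sigma_{\theta r} = 0$, $\sigma_{\theta\theta} = \frac{Y}{2 r^2 (1 - \nu_p^2)} \dots$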

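- [11] We demand the correspondence between the center of the reference and the actual space,

  $$\bar{r}\big(r = (0,0)\big) = (0,0). \tag{17}$$

  The boundary conditions can be obtained through the variations of $F_{\mathrm{area}} = F_{\mathrm{elastic}} + F_{\mathrm{bending}}$ in equation 2,

  $$n_\rho \sigma^{\rho\lambda} \bar{g}_{\lambda\nu} + \frac{\tau}{r_A}\, n_\nu = 0. \tag{18}$$

  For $\tau = 0$, under rotational symmetry, the above equation becomes

  $$\frac{[\bar{r}'(r_b)]^2}{(1 - \nu_p^2)\left\{1 + [f'(r_b)]^2\right\}} \left\{ 1 - \frac{[\bar{r}'(r_b)]^2}{1 + [f'(r_b)]^2} + \nu_p \left[ 1 - \left( \frac{\alpha\, \bar{r}(r_b)}{r_b} \right)^2 \right] \right\} = 0, \tag{19}$$

  whe...

  Published as a conference paper at ICLR 2026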

- [12] B SPHEROID

  Figure 3 (panels for $\beta = 1$, $\beta = 2$, $\beta = 3$): The figure presents the surface of revolution named spheroid, $f(r) = \beta \sqrt{R_0^2 - r^2}$, where $R_0$ is the radius of the spheroid, $r_b$ is the radius of the domain, and $\beta R_0$ is the height of the spheroid. As $\beta$ increases, the center of the spheroid goes higher and...

- [13] We also include a small excerpt of the training log in Table 3 for this configuration.

  Table 3: Snapshot of stage 1 training log (every 500 epochs). Number of training points / N: 2167/4000. The neural network has 4 hidden layers and 200 neurons/layer. Time is per 500 epochs. Columns: Epoch, Loss, Data, Phys, BC, Time (s). 5...
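The spheroid profile f(r) = β sqrt(R0^2 - r^2) and the surface-of-revolution metric quoted in the appendix excerpts above can be evaluated numerically. The sketch below computes the metric components and the Gaussian curvature K = f' f'' / (r (1 + f'^2)^2), a standard formula for z = f(r) that is an addition here rather than something taken from the paper. The function names are hypothetical.

```python
import math

def profile_derivatives(r, beta, R0):
    """First and second derivatives of f(r) = beta * sqrt(R0^2 - r^2)."""
    s = R0 * R0 - r * r                 # requires 0 <= r < R0
    f1 = -beta * r / math.sqrt(s)
    f2 = -beta * R0 * R0 / s ** 1.5
    return f1, f2

def metric_components(r, beta, R0):
    """Diagonal metric (g_rr, g_theta_theta) of the surface z = f(r)."""
    f1, _ = profile_derivatives(r, beta, R0)
    return 1.0 + f1 * f1, r * r

def gaussian_curvature(r, beta, R0):
    """K = f' f'' / (r (1 + f'^2)^2), valid for r > 0."""
    f1, f2 = profile_derivatives(r, beta, R0)
    return f1 * f2 / (r * (1.0 + f1 * f1) ** 2)

# beta = 1 is a sphere of radius R0, so K should equal 1 / R0^2 everywhere.
k_sphere = gaussian_curvature(0.3, beta=1.0, R0=2.0)
# Near the pole, K approaches beta^2 / R0^2 for a general spheroid.
k_pole = gaussian_curvature(1e-6, beta=2.0, R0=1.0)
g_rr, g_tt = metric_components(0.0, beta=3.0, R0=1.0)
```

The β = 1 case gives a quick sanity check on the formulas before they enter any residual, since the curvature must then be constant.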
