The IQ-Motion Confound in Multi-Site Autism fMRI May Be Inflated by Site-Correlated Measurement Uncertainty

Pith reviewed 2026-05-10 14:34 UTC · model grok-4.3

The pith

Pooled OLS overestimates the IQ-motion slope by a factor of 4.67 in multi-site autism fMRI data.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

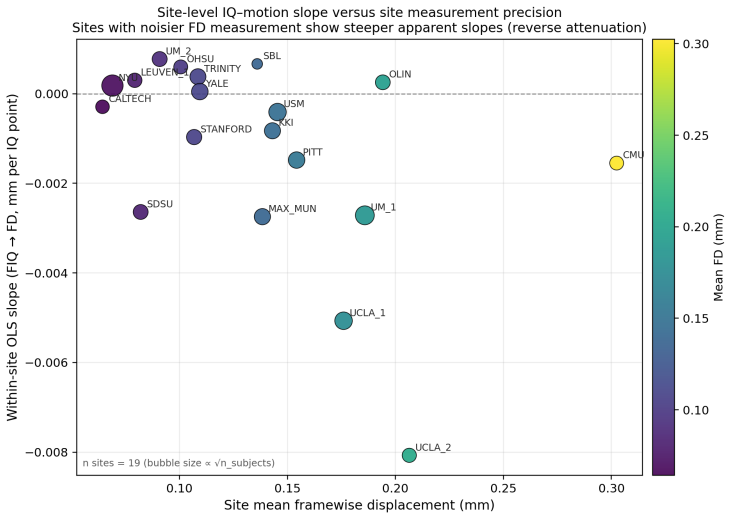

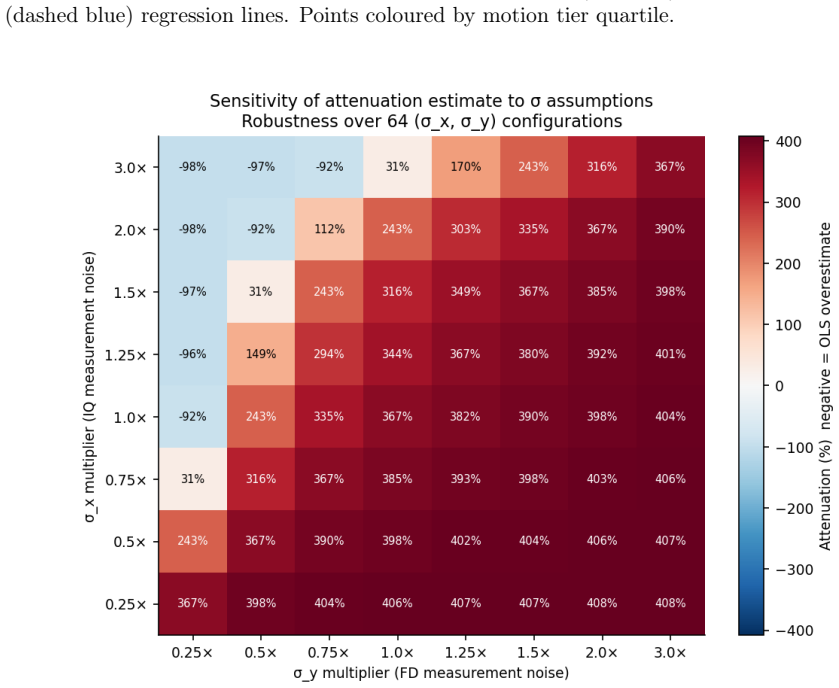

Ordinary least squares (OLS) regression overestimates the negative IQ-motion association by a factor of 4.67 relative to the errors-in-variables (EIV) estimate. The displayed coefficients are rounded (OLS -0.00125 mm per IQ point versus EIV -0.00027 mm per IQ point); according to the abstract, the factor itself is computed from the full-precision fitted coefficients. Leave-site-out cross-validation shows that a pooled OLS predictor of raw framewise displacement produces a negative out-of-sample R-squared at all 19 sites (overall R-squared = -0.074). The EIV-corrected slope remains negative across all 64 cells of an 8-by-8 grid of noise-parameter values spanning a 12-fold range of each parameter.
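The relationship between the rounded coefficients and the 4.67 factor can be checked with a few lines of arithmetic. The sketch below assumes only the two displayed values and that they were rounded to five decimal places; the full-precision coefficients are not given in this text, so the code can only bound the ratio, not reproduce it. The rounded values alone give about 4.63.

```python
# Rounded coefficients as displayed in the text (mm per IQ point).
ols_slope = -0.00125
eiv_slope = -0.00027

# Ratio implied by the rounded display values.
ratio_rounded = ols_slope / eiv_slope

# A value displayed to 5 decimal places may differ from the underlying
# coefficient by up to half a unit in the last digit (assumption: standard
# round-to-nearest was used for display).
half_step = 0.000005
ratio_low = (abs(ols_slope) - half_step) / (abs(eiv_slope) + half_step)
ratio_high = (abs(ols_slope) + half_step) / (abs(eiv_slope) - half_step)

print(f"ratio from rounded values: {ratio_rounded:.2f}")
print(f"range consistent with rounding: {ratio_low:.2f} to {ratio_high:.2f}")
```

The reported 4.67 falls inside the range that rounding allows, so the displayed coefficients and the stated factor are mutually consistent even though they do not divide to exactly 4.67.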

What carries the argument

Probability Cloud Regression, an errors-in-variables estimator that models per-observation measurement uncertainty in both the IQ predictor (from Wechsler test-retest reliability) and the motion response (from within-site standard deviation of mean framewise displacement).
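The two uncertainty inputs described above can be sketched as follows. This is an illustration under stated assumptions, not the paper's code: the function names `iq_sem` and `within_site_fd_sd` are hypothetical, the toy records are invented, and the reliability value of 0.95 is a placeholder because the text does not state which coefficient was used. The IQ side uses the classical-test-theory standard error of measurement, SD × sqrt(1 − reliability), with the usual Wechsler composite scaling of SD = 15.

```python
import math
from collections import defaultdict
from statistics import stdev


def iq_sem(scale_sd: float, reliability: float) -> float:
    """Classical-test-theory standard error of measurement."""
    return scale_sd * math.sqrt(1.0 - reliability)


def within_site_fd_sd(rows):
    """Map each site to the sample SD of its subjects' mean FD values."""
    by_site = defaultdict(list)
    for site, _iq, mean_fd in rows:
        by_site[site].append(mean_fd)
    return {site: stdev(values) for site, values in by_site.items()}


# Hypothetical toy records: (site, full-scale IQ, mean FD in mm).
rows = [
    ("A", 102, 0.08), ("A", 95, 0.12), ("A", 118, 0.10),
    ("B", 88, 0.20), ("B", 110, 0.30), ("B", 101, 0.25),
]

# Predictor-side uncertainty; the reliability of 0.95 is an assumed placeholder.
sigma_x = iq_sem(scale_sd=15.0, reliability=0.95)

# Response-side proxy: one value per site, shared by all of its subjects.
sigma_y_by_site = within_site_fd_sd(rows)

# Per-observation tuples (iq, fd, sigma_x, sigma_y) that an EIV fit would consume.
observations = [
    (iq, fd, sigma_x, sigma_y_by_site[site]) for site, iq, fd in rows
]
```

Because the response-side value is computed from the spread of subjects within a site, every subject at that site receives the same sigma_y, which is why the text calls it a site-level proxy.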

If this is right

- Pooled OLS may overstate the size of the IQ-motion confound that needs to be removed in multi-site autism fMRI analyses.

- A single IQ-based predictor of motion does not generalize across scanning sites once site identity is withheld.

- The direction of the corrected slope is insensitive to plausible ranges of the two noise parameters.

- Formal errors-in-variables methods remain rarely used for confound estimation in multi-site neuroimaging.

Where Pith is reading between the lines

- Similar measurement-error biases could affect other pooled confound regressions that mix IQ or age with site-specific variables.

- Connectivity-level re-analyses that substitute EIV-corrected motion covariates might change reported group differences in autism studies.

- Reporting both OLS and EIV estimates side-by-side would give readers a direct sense of the inflation factor in future multi-site work.

Load-bearing premise

The within-site standard deviation of mean framewise displacement accurately represents the per-subject measurement uncertainty in the motion variable.

What would settle it

Repeating the analysis on a dataset that supplies empirical repeat-scan motion traces or repeat IQ assessments for the same subjects, then checking whether the EIV slope matches the observed within-subject change.

Original abstract

Multi-site autism neuroimaging studies routinely control for the confound between full-scale IQ and head motion by regressing framewise displacement against IQ scores and removing shared variance. This procedure assumes that ordinary least squares (OLS) provides an unbiased estimate of the confound magnitude. We tested this assumption on the ABIDE-I phenotypic dataset (n=935 subjects across 19 international scanning sites) using Probability Cloud Regression, an errors-in-variables (EIV) estimator that models per-observation measurement uncertainty in both variables. IQ measurement error was derived from published Wechsler test-retest reliability coefficients; response-side uncertainty was represented by a site-level proxy equal to the within-site standard deviation of mean framewise displacement. Three findings emerged. First, OLS overestimates the IQ-motion slope by a factor of 4.67 relative to the EIV-corrected estimate when the bias factor is computed from the full-precision fitted coefficients (OLS -0.00125, EIV -0.00027 mm per IQ point after rounding for display). Second, under leave-site-out cross-validation a single pooled predictor of raw FD produces negative out-of-sample R^2 at all 19 sites (overall R^2 = -0.074), indicating that the pooled predictor does not transport cleanly across sites once site information is removed. Third, the direction of the EIV-corrected slope is robust across all 64 configurations of an 8x8 sensitivity grid spanning 12-fold ranges of each noise parameter. These results suggest that pooled OLS may overstate the IQ-motion association in ABIDE-I, but direct downstream consequences for motion-correction pipelines remain to be quantified using raw motion traces and connectivity-level re-analysis. Formal EIV methods appear to remain uncommon in multi-site neuroimaging confound estimation.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript claims that ordinary least squares (OLS) regression overestimates the IQ-head motion (mean framewise displacement) slope by a factor of 4.67 relative to an errors-in-variables (EIV) correction via Probability Cloud Regression on the ABIDE-I dataset (n=935, 19 sites). IQ error is taken from published test-retest reliabilities while response uncertainty uses a site-level proxy; the pooled OLS model yields negative leave-site-out R² at every site (overall -0.074), and the EIV slope sign remains stable across an 8x8 sensitivity grid spanning 12-fold noise ranges.

Significance. If the EIV specification holds, the result indicates that conventional confound regression in multi-site autism fMRI may substantially inflate the apparent IQ-motion association, with potential consequences for how motion is partialled out prior to connectivity analyses. The explicit sensitivity grid, consistent negative cross-validation R², and use of external reliability coefficients for the predictor error are strengths that enhance reproducibility and internal checks.

major comments (2)

- [Abstract (EIV model) and Methods (proxy definition)] The response-side uncertainty proxy (within-site SD of mean FD) reflects the total observed between-subject spread rather than measurement error alone. Because this quantity includes substantial true inter-subject motion variance (behavioral, scanner-related, and autism-related), it overstates the per-observation sigma_y in the EIV model. This directly amplifies the attenuation correction and produces the reported 4.67-fold inflation (OLS -0.00125 vs. EIV -0.00027 mm/IQ point). The 8x8 grid only scales the magnitude of this already-inflated proxy; it does not test whether the proxy isolates error variance.

- [Results (leave-site-out paragraph)] The leave-site-out result (negative R² at all 19 sites) demonstrates that a single pooled OLS predictor does not transport across sites, but the manuscript does not show how this finding quantitatively supports or modifies the central OLS-vs-EIV slope comparison. Clarify the logical link between the cross-validation exercise and the bias-factor claim.
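To make the second major comment concrete, here is a minimal sketch of leave-site-out cross-validation for a pooled OLS line, using only the standard library. The data are hypothetical and deliberately constructed so that every site shares the same within-site slope but has a different mean FD; the helper names are illustrative, and the out-of-sample R² is assumed to be measured against each held-out site's own mean, which the text does not specify.

```python
from statistics import mean


def fit_ols(xs, ys):
    """Return (intercept, slope) of a simple least-squares line."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return my - slope * mx, slope


def out_of_sample_r2(ys, preds):
    """1 - SSE/SST, with SST taken around the held-out site's own mean."""
    my = mean(ys)
    sse = sum((y - p) ** 2 for y, p in zip(ys, preds))
    sst = sum((y - my) ** 2 for y in ys)
    return 1.0 - sse / sst


def leave_site_out(data):
    """data: {site: [(iq, fd), ...]} -> {site: held-out R^2}."""
    scores = {}
    for held_out, test_rows in data.items():
        # Pool every subject from all other sites into one training set.
        train = [row for site, rows in data.items() if site != held_out for row in rows]
        intercept, slope = fit_ols([x for x, _ in train], [y for _, y in train])
        preds = [intercept + slope * x for x, _ in test_rows]
        scores[held_out] = out_of_sample_r2([y for _, y in test_rows], preds)
    return scores


# Hypothetical toy data: identical within-site slope, different site means.
data = {
    "A": [(90, 0.11), (100, 0.10), (110, 0.09)],
    "B": [(90, 0.21), (100, 0.20), (110, 0.19)],
    "C": [(90, 0.41), (100, 0.40), (110, 0.39)],
}
scores = leave_site_out(data)
for site, r2 in scores.items():
    print(site, round(r2, 1))
```

In this toy construction the pooled slope is recovered exactly, yet R² is negative at every held-out site because the pooled intercept misses each site's mean FD. Whether the same mechanism explains the ABIDE-I result is not established here; the sketch only shows that negative leave-site-out R² by itself does not speak to slope bias.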

minor comments (2)

- [Abstract] The abstract states the 4.67 factor is computed from 'full-precision fitted coefficients' yet reports rounded values; provide the unrounded coefficients and the exact arithmetic in the main text or a supplementary table so readers can reproduce the ratio.

- [Methods] Notation for the Probability Cloud Regression estimator and the precise form of the EIV likelihood should be stated explicitly (e.g., as an equation) rather than referenced only by name.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed comments, which have prompted us to re-examine our error modeling choices and the presentation of our cross-validation results. We address each major comment below with honest assessment and plans for revision.

Point-by-point responses

- Referee: [Abstract (EIV model) and Methods (proxy definition)] The response-side uncertainty proxy (within-site SD of mean FD) reflects the total observed between-subject spread rather than measurement error alone. Because this quantity includes substantial true inter-subject motion variance (behavioral, scanner-related, and autism-related), it overstates the per-observation sigma_y in the EIV model. This directly amplifies the attenuation correction and produces the reported 4.67-fold inflation (OLS -0.00125 vs. EIV -0.00027 mm/IQ point). The 8x8 grid only scales the magnitude of this already-inflated proxy; it does not test whether the proxy isolates error variance.

Authors: We agree that the within-site SD of mean FD captures both measurement error and true inter-subject variability in motion, and that treating the full SD as sigma_y overstates the pure error component in the EIV model. The proxy was intentionally conservative given the absence of repeated FD measures in ABIDE-I, but the referee is correct that it risks inflating the attenuation correction. In the revised manuscript we will update the Methods to state this limitation explicitly, reframe the proxy as an upper-bound estimate, and add a new sensitivity analysis that treats only a fraction of the observed SD as error (e.g., 25% and 50%). This will show how the OLS-to-EIV slope ratio varies as a smaller share of the SD is attributed to measurement error, and will directly address the concern that the existing 8x8 grid does not isolate error variance.

Revision: yes
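The shape of the proposed error-fraction analysis can be sketched as below. Deming regression is swapped in here purely as a well-known closed-form EIV estimator with a single error SD per axis; it is not the paper's Probability Cloud Regression, which uses per-observation uncertainties, so the numerical behaviour of this sketch should not be read as a prediction for the paper's estimator. The data, the value of `sigma_x`, and the function names are all hypothetical.

```python
import math
from statistics import mean, stdev


def ols_slope(xs, ys):
    """Ordinary least-squares slope of y on x."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxy / sxx


def deming_slope(xs, ys, sigma_x, sigma_y):
    """Deming regression slope with assumed error SDs in x and y."""
    mx, my = mean(xs), mean(ys)
    n = len(xs)
    sxx = sum((x - mx) ** 2 for x in xs) / (n - 1)
    syy = sum((y - my) ** 2 for y in ys) / (n - 1)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / (n - 1)
    delta = (sigma_y / sigma_x) ** 2  # ratio of y-error to x-error variance
    root = math.sqrt((syy - delta * sxx) ** 2 + 4.0 * delta * sxy ** 2)
    return (syy - delta * sxx + root) / (2.0 * sxy)


# Hypothetical toy data: full-scale IQ and mean FD (mm).
iq = [85, 92, 100, 108, 115, 121]
fd = [0.16, 0.11, 0.14, 0.09, 0.12, 0.07]

sigma_x = 3.35            # assumed IQ standard error of measurement
observed_sd = stdev(fd)   # stands in for the within-site SD proxy

ols = ols_slope(iq, fd)
slopes = {}
for fraction in (0.25, 0.5, 1.0):
    # Treat only this share of the observed SD as measurement error.
    slopes[fraction] = deming_slope(iq, fd, sigma_x, fraction * observed_sd)
    print(fraction, slopes[fraction], ols / slopes[fraction])
```

The useful part of the sketch is the loop: the estimator is refit once per assumed error fraction and the OLS-to-EIV ratio is reported alongside each fit, which is the table a reader would need to judge how strongly the headline factor depends on the proxy.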

- Referee: [Results (leave-site-out paragraph)] The leave-site-out result (negative R² at all 19 sites) demonstrates that a single pooled OLS predictor does not transport across sites, but the manuscript does not show how this finding quantitatively supports or modifies the central OLS-vs-EIV slope comparison. Clarify the logical link between the cross-validation exercise and the bias-factor claim.

Authors: The leave-site-out analysis was included to demonstrate that a single pooled OLS model fails to generalize, yielding negative out-of-sample R² at every site. This finding is conceptually related to the EIV results because both expose limitations of standard OLS pooling in multi-site data: OLS assumes error-free predictors and a homogeneous slope, while EIV corrects for measurement error within that pooled framework. The poor transportability indicates substantial site-driven variance that may interact with measurement error. However, we acknowledge that the manuscript does not provide a direct quantitative decomposition showing how much of the 4.67-fold bias factor is attributable to non-transportability versus pure measurement error. In revision we will add explicit text in the Results and Discussion linking the two analyses and will note that a fully integrated quantitative bridge would require additional site-specific EIV simulations, which we can outline as future work.

Revision: partial

Circularity Check

No significant circularity in the derivation chain

Full rationale

The paper applies standard OLS regression and Probability Cloud Regression (EIV) to the ABIDE-I dataset, sourcing IQ error variance from external published reliability coefficients and using within-site SD of mean FD as a proxy for response uncertainty. The reported 4.67x overestimation factor is the direct numerical ratio of the two fitted slopes (OLS -0.00125 vs. EIV -0.00027); it is not obtained by fitting a parameter to a subset and renaming it as a prediction, nor does any equation reduce to its inputs by definition. Leave-site-out cross-validation and the 8x8 sensitivity grid are independent computations on the same data that do not presuppose the target ratio. No self-citations are load-bearing, and the central claim rests on the application of an external EIV estimator rather than tautological re-expression.

Axiom & Free-Parameter Ledger

free parameters (1)

- FD uncertainty proxy

axioms (2)

- Domain assumption: Measurement errors in IQ and framewise displacement are independent of the true values and of each other.

- Ad hoc to paper: The site-level SD of mean FD serves as a valid stand-in for per-observation response uncertainty.
