Recognition: unknown

SciFi: A Safe, Lightweight, User-Friendly, and Fully Autonomous Agentic AI Workflow for Scientific Applications

Pith reviewed 2026-05-10 14:50 UTC · model grok-4.3

The pith

The SciFi framework supports end-to-end automation of structured scientific tasks with minimal human intervention through safe agentic AI design.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

By focusing on structured tasks with clearly defined context and stopping criteria, the framework supports end-to-end automation with minimal human intervention, enabling researchers to offload routine workloads and devote more effort to creative activities and open-ended scientific inquiry through its combination of an isolated execution environment, a three-layer agent loop, and a self-assessing do-until mechanism.

What carries the argument

The three-layer agent loop with self-assessing do-until mechanism inside an isolated execution environment, which together ensure safe and reliable operation for scientific tasks.

If this is right

- Researchers can automate well-defined scientific tasks from start to finish with little to no ongoing input.

- The system remains reliable when using large language models that vary in capability.

- More researcher time becomes available for creative activities and open-ended inquiry.

- Automation applies specifically to tasks that have explicit context and stopping criteria.

Where Pith is reading between the lines

- Such a system might extend to other domains involving repetitive analysis, like financial modeling or engineering simulations, if similar structure is imposed.

- Adoption could lead to standardized AI-assisted pipelines in labs, changing how routine experiments are documented and repeated.

- Future versions could incorporate feedback from actual experiment outcomes to refine the self-assessment logic.

- The minimal intervention design opens possibilities for running long-term autonomous monitoring tasks in scientific settings.

Load-bearing premise

The isolated execution environment combined with three-layer agent loops and self-assessment will ensure safe and reliable results across different levels of large language model performance on scientific tasks.

What would settle it

Deploying the framework on a scientific task that involves generating and running code with potential side effects, then verifying if it always stops correctly without executing unsafe actions or producing unverified results.

Figures

read the original abstract

Recent advances in agentic AI have enabled increasingly autonomous workflows, but existing systems still face substantial challenges in achieving reliable deployment in real-world scientific research. In this work, we present a safe, lightweight, and user-friendly agentic framework for the autonomous execution of well-defined scientific tasks. The framework combines an isolated execution environment, a three-layer agent loop, and a self-assessing do-until mechanism to ensure safe and reliable operation while effectively leveraging large language models of varying capability levels. By focusing on structured tasks with clearly defined context and stopping criteria, the framework supports end-to-end automation with minimal human intervention, enabling researchers to offload routine workloads and devote more effort to creative activities and open-ended scientific inquiry.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

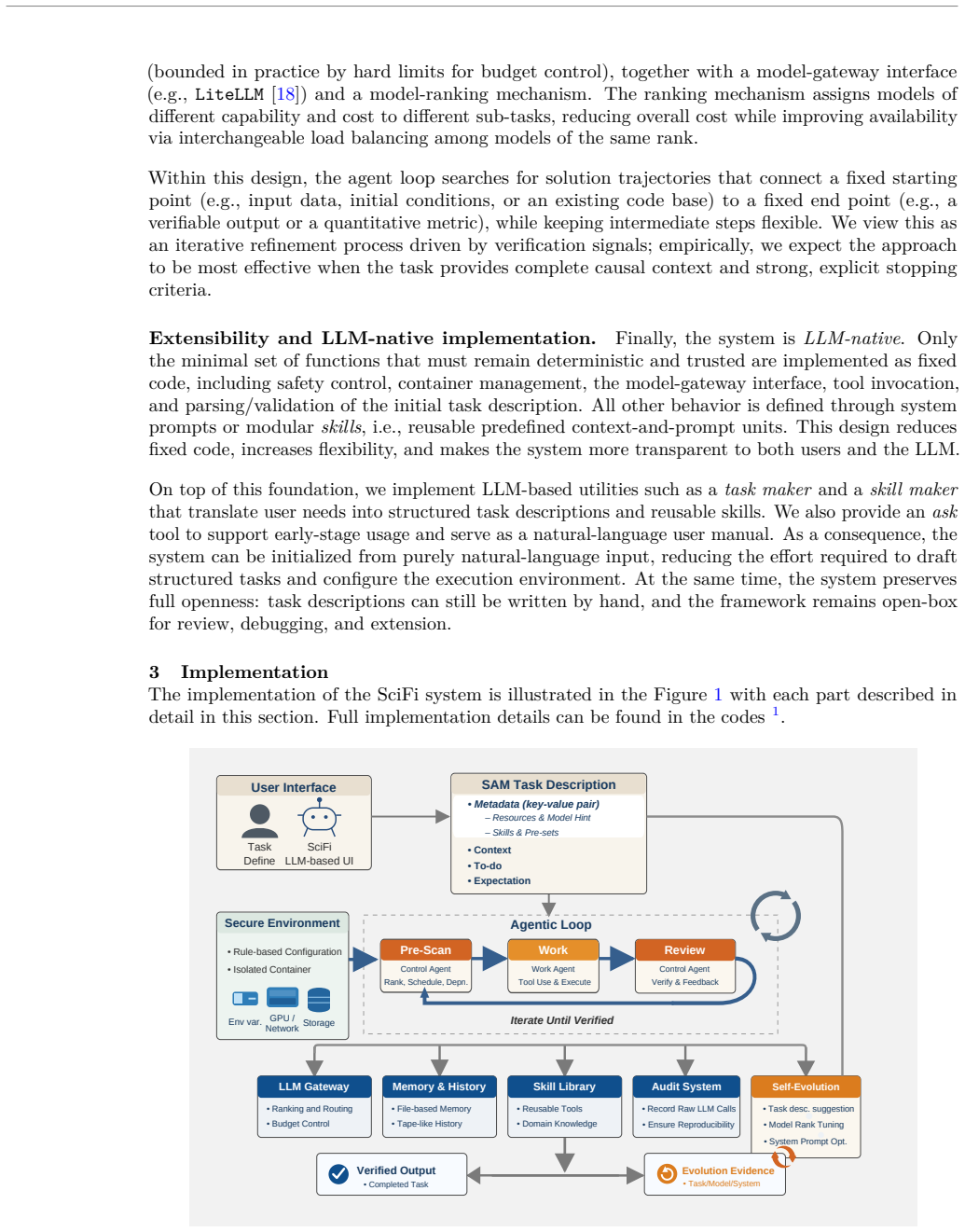

Summary. The manuscript proposes SciFi, a safe, lightweight, user-friendly, and fully autonomous agentic AI framework for executing well-defined scientific tasks. It integrates an isolated execution environment, a three-layer agent loop, and a self-assessing do-until mechanism to enable reliable operation across LLMs of varying capabilities, supporting end-to-end automation of structured tasks with minimal human intervention so researchers can focus on creative work.

Significance. If the safety, reliability, and automation claims were empirically validated, the framework could meaningfully advance practical deployment of agentic AI in scientific workflows by addressing current limitations in LLM consistency and safety. The focus on tasks with explicit context and stopping criteria is a reasonable scoping choice that aligns with present model capabilities.

major comments (1)

- [Abstract] Abstract: The central assertions that the framework 'ensures safe and reliable operation' and 'supports end-to-end automation with minimal human intervention' rest solely on the high-level architectural description. No experiments, benchmarks, failure-mode analyses, success rates on scientific tasks, or comparisons to baselines are provided anywhere in the manuscript, leaving the load-bearing claims about error constraint, unsafe-action prevention, and cross-model reliability untested.

Simulated Author's Rebuttal

We thank the referee for the thoughtful and constructive feedback. We agree that the strength of the safety, reliability, and automation claims requires empirical grounding, and we will revise the manuscript to address this limitation directly.

read point-by-point responses

-

Referee: [Abstract] Abstract: The central assertions that the framework 'ensures safe and reliable operation' and 'supports end-to-end automation with minimal human intervention' rest solely on the high-level architectural description. No experiments, benchmarks, failure-mode analyses, success rates on scientific tasks, or comparisons to baselines are provided anywhere in the manuscript, leaving the load-bearing claims about error constraint, unsafe-action prevention, and cross-model reliability untested.

Authors: We acknowledge that the current version of the manuscript presents the SciFi framework primarily through its architectural design and does not include quantitative experiments, benchmarks, or failure analyses. This was an intentional scoping decision for an initial system-description paper, but we recognize that it leaves the core claims insufficiently supported. In the revised manuscript we will add a new Evaluation section containing: (1) success rates and error-recovery statistics on a set of well-defined scientific tasks (e.g., automated literature summarization pipelines, parameter-sweep simulations, and data-cleaning workflows); (2) explicit failure-mode analysis showing how the three-layer loop and self-assessing do-until mechanism constrain unsafe actions and recover from LLM errors; (3) cross-model reliability results using at least three LLMs of differing capability; and (4) comparisons against baseline agentic systems (e.g., unmodified ReAct and LangGraph agents) on the same task suite. These additions will be placed before the Conclusion and will directly substantiate the abstract claims. revision: yes

Circularity Check

No circularity in descriptive framework proposal

full rationale

The manuscript proposes an agentic AI workflow architecture consisting of an isolated execution environment, three-layer agent loop, and self-assessing do-until mechanism. No equations, fitted parameters, predictions, or derivation steps appear in the provided text or abstract. Central claims about safety and reliability are presented as consequences of the design choices rather than reductions to prior self-citations, data fits, or self-definitional loops. The paper contains no load-bearing self-citations, uniqueness theorems, or ansatzes smuggled via citation, rendering the description self-contained without circularity.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Scientific tasks can be structured with clearly defined context and stopping criteria that enable safe autonomous execution.

invented entities (1)

-

SciFi framework

no independent evidence

Reference graph

Works this paper leans on

-

[1]

arXiv preprint arXiv:2308.11432 , year=

Lei Wang, Chen Ma, Xueyang Feng, Zeyu Zhang, Hao Yang, Jingsen Zhang, Zhiyuan Chen, Jiakai Tang, Xu Chen, Yankai Lin, Wayne Xin Zhao, Zhewei Wei, and Ji-Rong Wen. A survey on large language model based autonomous agents.arXiv preprint arXiv:2308.11432, 2023

-

[2]

React: Synergizing reasoning and acting in language models

Shunyu Yao, Jeffrey Zhao, Dian Yu, Nan Du, Izhak Shafran, Karthik Narasimhan, and Yuan Cao. React: Synergizing reasoning and acting in language models. InInternational Conference on Learning Representations (ICLR), 2023

2023

-

[3]

Reflexion: Language agents with verbal reinforcement learning

Noah Shinn, Federico Cassano, Edward Berman, Ashwin Gopinath, Karthik Narasimhan, and Shunyu Yao. Reflexion: Language agents with verbal reinforcement learning. InAdvances in Neural Information Processing Systems (NeurIPS), 2023

2023

-

[4]

A survey of motion planning and control techniques for self-driving urban vehicles.IEEE Transactions on Intelligent Vehicles, 1(1):33–55, 2016

Brian Paden, Michal Čáp, Sze Zheng Yong, Dmitry Yershov, and Emilio Frazzoli. A survey of motion planning and control techniques for self-driving urban vehicles.IEEE Transactions on Intelligent Vehicles, 1(1):33–55, 2016

2016

-

[5]

Deep direct reinforcement learning for financial signal representation and trading.IEEE Transactions on Neural Networks and Learning Systems, 28(3):653–664, 2017

Yue Deng, Feng Bao, Youyong Kong, Zhiquan Ren, and Qionghai Dai. Deep direct reinforcement learning for financial signal representation and trading.IEEE Transactions on Neural Networks and Learning Systems, 28(3):653–664, 2017

2017

-

[6]

Agentic ai for scientific discovery: A survey of progress, challenges, and future directions, 2025

Mourad Gridach, Jay Nanavati, Khaldoun Zine El Abidine, Lenon Mendes, and Christina Mack. Agentic ai for scientific discovery: A survey of progress, challenges, and future directions, 2025. Published as a conference paper at ICLR 2025

2025

-

[7]

Schwartz

Matthew D. Schwartz. Resummation of the c-parameter sudakov shoulder using effective field theory, 2026

2026

-

[8]

Laurent, Joseph D

Jon M. Laurent, Joseph D. Janizek, Michael Ruzo, Michaela M. Hinks, Michael J. Hammerling, Siddharth Narayanan, Manvitha Ponnapati, Andrew D. White, and Samuel G. Rodriques. Lab- bench: Measuring capabilities of language models for biology research, 2024

2024

-

[9]

Mdagents: An adaptive collaboration of llms for medical decision-making, 2024

Yubin Kim, Chanwoo Park, Hyewon Jeong, Yik Siu Chan, Xuhai Xu, Daniel McDuff, Hyeonhoon Lee, Marzyeh Ghassemi, Cynthia Breazeal, and Hae Won Park. Mdagents: An adaptive collaboration of llms for medical decision-making, 2024

2024

-

[10]

Hypothesis generation for materials discovery and design using goal-driven and constraint- guided llm agents, 2025

Shrinidhi Kumbhar, Venkatesh Mishra, Kevin Coutinho, Divij Handa, Ashif Iquebal, and Chitta Baral. Hypothesis generation for materials discovery and design using goal-driven and constraint- guided llm agents, 2025. Accepted in NAACL 2025

2025

-

[11]

Toolformer: Language Models Can Teach Themselves to Use Tools

Timo Schick, Jane Dwivedi-Yu, Roberto Dessì, Roberta Raileanu, Maria Lomeli, Eric Hambro, Luke Zettlemoyer, and Mike Lewis. Toolformer: Language models can teach themselves to use tools.arXiv preprint arXiv:2302.04761, 2023

work page internal anchor Pith review arXiv 2023

-

[12]

Pal: Program-aided language models,

Luyu Gao, Aman Madaan, Shuyan Zhou, Uri Alon, Pengfei Liu, Yiming Yang, Jamie Callan, and Graham Neubig. Pal: Program-aided language models.arXiv preprint arXiv:2211.10435, 2023

-

[13]

Eyal Karpas, Yoav Levine, Michal Moshkovitz, Shai Itzhaky, Barak Cohen, Yoav Goldberg, and Ido Dagan. Mrkl systems: A modular neuro-symbolic architecture combining large language models, external knowledge sources, and discrete reasoning.arXiv preprint arXiv:2205.00445, 2022

-

[14]

AutoGen: Enabling Next-Gen LLM Applications via Multi-Agent Conversation

Qingyun Wu, Gagan Bansal, Jie Zhang, Yiran Wu, Beibin Li, Erkang Zhu, Li Jiang, Xiaoyan Zhang, ChiWang, etal. Autogen: Enablingnext-genllmapplicationsviamulti-agentconversation. arXiv preprint arXiv:2308.08155, 2024

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[15]

Snakemaker: Seamlessly transforming ad-hoc analyses into sustainable snakemake workflows with generative ai, 2025

Marco Masera, Alessandro Leone, Johannes Köster, and Ivan Molineris. Snakemaker: Seamlessly transforming ad-hoc analyses into sustainable snakemake workflows with generative ai, 2025

2025

-

[16]

Singularity, 2021

Singularity Developers. Singularity, 2021

2021

-

[17]

Kurtzer, Vanessa Sochat, and Michael W

Gregory M. Kurtzer, Vanessa Sochat, and Michael W. Bauer. Singularity: Scientific containers for mobility of compute.PLOS ONE, 12(5):e0177459, 2017. 22

2017

-

[18]

Litellm, 2026

BerriAI. Litellm, 2026. Open-source library and gateway for interfacing with multiple large language model providers

2026

-

[19]

Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, Y. K. Li, Y. Wu, and Daya Guo. Deepseekmath: Pushing the limits of mathematical reasoning in open language models.arXiv preprint arXiv:2402.03300, 2024

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[20]

Voyager: An open-ended embodied agent with large language models

Guanzhi Wang, Yuqi Xie, Yunfan Jiang, Ajay Mandlekar, Chaowei Xiao, Yuke Zhu, Linxi Fan, and Anima Anandkumar. Voyager: An open-ended embodied agent with large language models. Transactions on Machine Learning Research, 2024

2024

-

[21]

SoK: Agentic skills–beyond tool use in LLM agents.arXiv preprint arXiv:2602.20867, 2026

Yanna Jiang, Delong Li, Haiyu Deng, Baihe Ma, Xu Wang, Qin Wang, and Guangsheng Yu. Sok: Agentic skills – beyond tool use in llm agents.arXiv preprint arXiv:2602.20867, 2026

-

[22]

Docker, 2026

Docker, Inc. Docker, 2026. Container platform for building, packaging, and running applications

2026

-

[23]

Root data analysis framework

CERN. Root data analysis framework

-

[24]

Calo-vq: Vector-quantized two-stage generative model in calorimeter simulation, 2024

Qibin Liu, Chase Shimmin, Xiulong Liu, Eli Shlizerman, Shu Li, and Shih-Chieh Hsu. Calo-vq: Vector-quantized two-stage generative model in calorimeter simulation, 2024

2024

-

[25]

Cluster counting algorithm for the CEPC drift chamber using LSTM and DGCNN.Nuclear Science and Techniques, 36(7), May 2025

Zhe-Fei Tian, Guang Zhao, Ling-Hui Wu, Zhen-Yu Zhang, Xiang Zhou, Shui-Ting Xin, Shuai-Yi Liu, Gang Li, Ming-Yi Dong, and Sheng-Sen Sun. Cluster counting algorithm for the CEPC drift chamber using LSTM and DGCNN.Nuclear Science and Techniques, 36(7), May 2025

2025

- [26]

-

[27]

HGQ: High Granularity Quantization for Real-time Neural Networks on FPGAs

Chang Sun, Zhiqiang Que, Thea Aarrestad, Vladimir Loncar, Jennifer Ngadiuba, Wayne Luk, and Maria Spiropulu. HGQ: High Granularity Quantization for Real-time Neural Networks on FPGAs. InProceedings of the 2026 ACM/SIGDA International Symposium on Field Programmable Gate Arrays, page 79–91. ACM, February 2026

2026

-

[28]

da4ml: Dis- tributed arithmetic for real-time neural networks on fpgas, 2025

Chang Sun, Zhiqiang Que, Vladimir Loncar, Wayne Luk, and Maria Spiropulu. da4ml: Dis- tributed arithmetic for real-time neural networks on fpgas, 2025

2025

-

[29]

Verilator, 2026

Wilson Snyder, Paul Wasson, Duane Galbi, et al. Verilator, 2026. Open-source Verilog/Sys- temVerilog simulator and lint system

2026

-

[30]

The LHC Olympics 2020 a community challenge for anomaly detection in high energy physics.Reports on Progress in Physics, 84(12):124201, December 2021

Gregor Kasieczka, Benjamin Nachman, David Shih, Oz Amram, Anders Andreassen, Kees Benkendorfer, Blaz Bortolato, Gustaaf Brooijmans, Florencia Canelli, Jack H Collins, Biwei Dai, Felipe F De Freitas, Barry M Dillon, Ioan-Mihail Dinu, Zhongtian Dong, Julien Donini, Javier Duarte, D A Faroughy, Julia Gonski, Philip Harris, Alan Kahn, Jernej F Kamenik, Charan...

2020

-

[31]

Metodiev, Benjamin Nachman, and Jesse Thaler

Eric M. Metodiev, Benjamin Nachman, and Jesse Thaler. Classification without labels: learning from mixed samples in high energy physics.Journal of High Energy Physics, 2017(10), October 2017

2017

-

[32]

Auto-encoding variational bayes, 2022

Diederik P Kingma and Max Welling. Auto-encoding variational bayes, 2022. 23

2022

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.