Working Memory in a Recurrent Spiking Neural Network With Heterogeneous Synaptic Delays

Pith reviewed 2026-05-10 11:26 UTC · model grok-4.3

The pith

A recurrent spiking neural network with heterogeneous synaptic delays stores and recalls arbitrary temporal spike patterns by chaining overlapping motifs.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

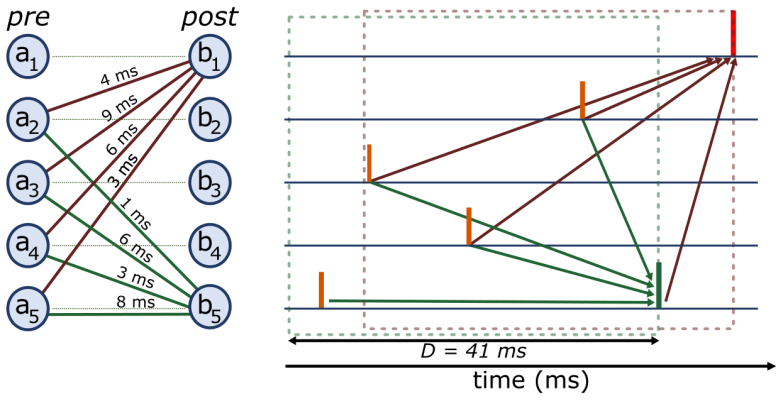

The central claim is that heterogeneous delays, modeled as a weight tensor $\mathbf{W} \in \mathbb{R}^{N \times N \times D}$ with $D = 41$, allow $M$ arbitrary target spike patterns to be represented as sequential chains of overlapping Spiking Motifs. Each motif, a window of $D$ consecutive time steps, uniquely determines the spikes at the next time step. Backpropagation through time trains the weights so that recall propagates accurately from an initial clamped window without accumulating errors over long durations.
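To make the role of the delay tensor concrete, here is a minimal NumPy sketch of one prediction step. It assumes a simplified threshold unit with no membrane dynamics; the neuron model, the `threshold` value, and the function name `next_spikes` are illustrative assumptions, not details taken from the paper.

```python
import numpy as np

def next_spikes(W, window, threshold=0.5):
    """Predict the spikes at the next time step from the last D steps.

    W      : (N, N, D) array; W[i, j, d] weights the spike that presynaptic
             neuron j emitted d+1 steps ago onto postsynaptic neuron i.
    window : (N, D) binary array; window[j, d] is the spike of neuron j
             emitted d+1 steps ago.
    NOTE: a plain thresholded sum is an assumption made for illustration.
    """
    drive = np.einsum("ijd,jd->i", W, window)  # sum over presynaptic j and delay d
    return (drive > threshold).astype(np.uint8)

N, D = 4, 3  # toy sizes; the paper reports N=512, D=41
W = np.zeros((N, N, D))
W[1, 0, 0] = 1.0  # neuron 0 drives neuron 1 after 1 step
W[2, 0, 2] = 1.0  # neuron 0 drives neuron 2 after 3 steps

window = np.zeros((N, D), dtype=np.uint8)
window[0, 0] = 1  # neuron 0 fired one step ago
out_recent = next_spikes(W, window)

window = np.zeros((N, D), dtype=np.uint8)
window[0, 2] = 1  # neuron 0 fired three steps ago
out_old = next_spikes(W, window)
```

In this toy setting the same presynaptic spike reaches different targets depending on how long ago it occurred, which is the property the motif-chaining argument relies on.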

What carries the argument

The heterogeneous delay tensor W together with the Spiking Motif decomposition, where each motif is a contiguous window of D time steps that predicts the next spike pattern.

If this is right

- The network achieves a mean F1 score of 1.0 when storing 16 patterns over 1000 steps.

- Recall begins near the initialisation window and propagates forward in time without drift.

- Heterogeneous delays serve as an efficient substrate for working memory tasks in spiking networks.

- This structure supports energy-efficient implementation on neuromorphic hardware for edge applications.

Where Pith is reading between the lines

- Similar delay-based mechanisms might underlie temporal memory in biological brains where axonal conduction delays vary.

- Increasing the number of delays per synapse could expand the capacity for longer or more intricate sequences.

- Validation on physical neuromorphic chips would test whether the simulated energy efficiency holds in hardware.

Load-bearing premise

Arbitrary target spike patterns can be decomposed into non-interfering overlapping spiking motifs of length D that chain reliably without drift or forgetting over extended time periods.

What would settle it

Observing a significant drop in F1 score below 1.0 when testing with a larger number of patterns or with randomized homogeneous delays instead of heterogeneous ones would falsify the claim.

Original abstract

Working memory -- the ability to store and recall precise temporal patterns of neural activity -- remains an open challenge for spiking neural networks (SNNs). We propose a recurrent SNN of $N$ neurons in which each synapse is equipped with $D = 41$ delays, modelled as a weight tensor $\mathbf{W} \in \mathbb{R}^{N \times N \times D}$ and trained end-to-end with surrogate-gradient backpropagation through time. The network stores $M$ arbitrary target spike patterns by representing each as a sequential chain of overlapping Spiking Motifs: contiguous windows of length $D$ that uniquely predict spikes at the next time step. On a synthetic benchmark of $M=16$ patterns ($N=512$ neurons, $T=1000$ steps), training achieves a mean F1 score of $1.0$, with recall emerging first near the clamped initialisation window and propagating forward in time. This result demonstrates that heterogeneous delays provide an efficient substrate for working memory in SNNs, enabling energy-efficient neuromorphic edge deployment.
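The recall procedure described in the abstract (clamp an initial window of $D$ steps, then let the network run freely) can be sketched as follows. This is a hedged illustration with hand-set weights rather than trained ones; the threshold unit, the function name `recall`, and the toy ring connectivity are all assumptions introduced here.

```python
import numpy as np

def recall(W, init_window, T, threshold=1.0):
    """Roll the network forward for T steps from a clamped window.

    init_window : (N, D) binary array in chronological order (oldest first).
    Returns an (N, T) raster whose first D columns are the clamped window.
    NOTE: a plain thresholded sum stands in for the paper's neuron model.
    """
    N, _, D = W.shape
    raster = np.zeros((N, T), dtype=np.uint8)
    raster[:, :D] = init_window
    for t in range(D, T):
        # Column d of `past` holds the spikes emitted d+1 steps before t.
        past = raster[:, t - D:t][:, ::-1]
        drive = np.einsum("ijd,jd->i", W, past)
        raster[:, t] = drive > threshold
    return raster

# Hand-built ring (hypothetical): neuron i fires only if neuron i-1 fired
# 1 step ago AND neuron i-2 fired 2 steps ago (two different delays).
N, D, T = 5, 3, 20
W = np.zeros((N, N, D))
for i in range(N):
    W[i, (i - 1) % N, 0] = 0.6
    W[i, (i - 2) % N, 1] = 0.6

init = np.zeros((N, D), dtype=np.uint8)
for t in range(D):
    init[t, t] = 1  # neuron t fires at step t inside the clamped window

raster = recall(W, init, T)
```

With these weights the sequence continues around the ring with exactly one spike per step, so the free-running part of the raster extends the clamped window without drift. Whether trained weights behave this way on dense patterns is the open question raised in the referee report.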

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

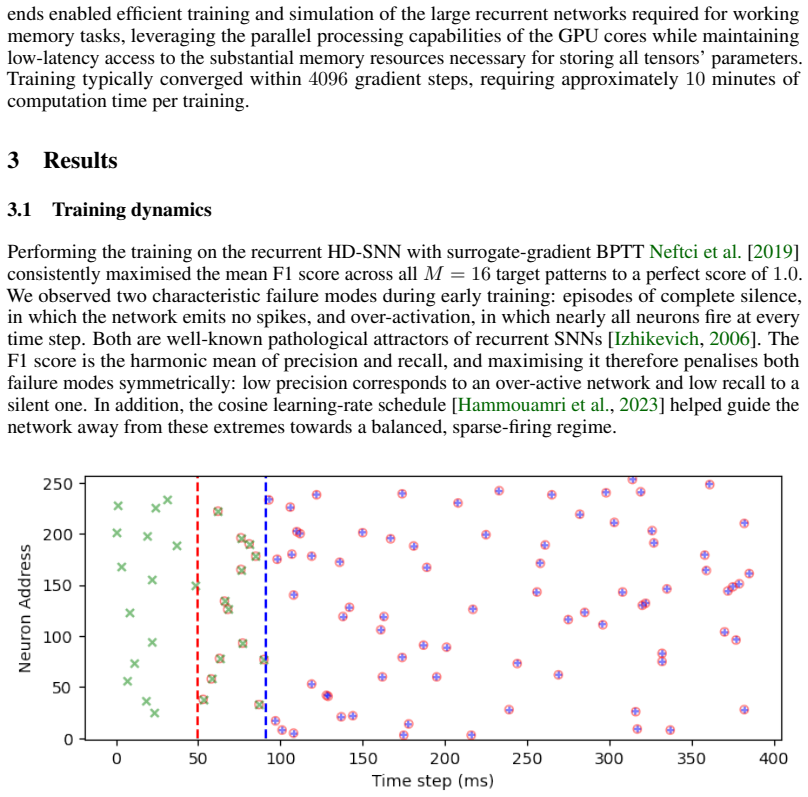

Summary. The paper proposes a recurrent spiking neural network (SNN) with $N=512$ neurons where each synapse has $D=41$ heterogeneous delays, represented as a weight tensor $\mathbf{W} \in \mathbb{R}^{N \times N \times D}$. The network is trained end-to-end with surrogate-gradient backpropagation through time (BPTT) to store $M=16$ arbitrary target spike patterns over $T=1000$ steps. Each pattern is represented as a chain of overlapping Spiking Motifs (contiguous windows of length $D$ that predict the spikes at the next time step). On a synthetic benchmark, training yields a mean F1 score of 1.0, with recall propagating forward from an initial clamped window. The authors conclude that heterogeneous delays provide an efficient substrate for working memory in SNNs suitable for neuromorphic edge deployment.

Significance. If the central empirical result generalizes, the work offers a concrete mechanism for temporal pattern storage in SNNs that leverages delay heterogeneity rather than additional recurrent connectivity or explicit state variables. This could be advantageous for energy-efficient neuromorphic hardware. The perfect F1=1.0 on the controlled M=16 benchmark is a strong positive signal for the specific case, and the forward-propagation behavior from the clamp is consistent with motif chaining. However, the significance is tempered by the absence of baseline comparisons, generalization tests to denser or longer patterns, and analysis of motif uniqueness or error accumulation.

major comments (2)

- [Abstract / Results] Abstract and main results: The claim that arbitrary target patterns are stored via 'sequential chain of overlapping Spiking Motifs' that 'uniquely predict spikes at the next time step' is load-bearing for the working-memory substrate conclusion, yet the manuscript provides no direct evidence (e.g., motif uniqueness metric, crosstalk analysis, or reconstruction of the learned motifs) that the D=41 windows are non-interfering or that chaining occurs without drift. The reported F1=1.0 with forward propagation could arise from BPTT discovering a narrow solution for the low-density synthetic patterns rather than a robust property of heterogeneous delays.

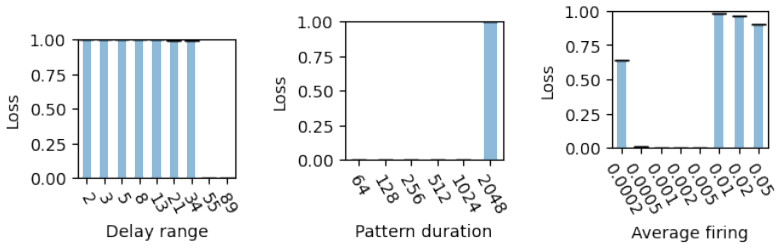

- [Abstract / Methods] The weakest assumption—that any target spike pattern decomposes into non-interfering D-length motifs that chain deterministically over T=1000 steps without timing-error accumulation—is not tested beyond the M=16 benchmark. No ablation on pattern density, no longer-horizon experiments, and no analysis of how the learned W tensor enforces motif uniqueness are provided; if motif overlap produces crosstalk for denser patterns, the energy-efficient neuromorphic claim does not follow.

minor comments (2)

- [Abstract] The abstract states 'mean F1 score of 1.0' but does not report variance, number of random seeds, or the precise definition of the F1 metric (e.g., per-neuron, per-time-step, or pattern-level).

- [Methods] Notation for the delay tensor W ∈ R^{N×N×D} is introduced without clarifying whether delays are discrete bins or continuous, and how the surrogate gradient handles the delay dimension during BPTT.
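The ambiguity in the F1 definition matters in practice, because the three granularities named above can disagree on the same raster. The sketch below is illustrative only: the helper names and the convention that an empty target matched by an empty recall scores 1.0 are assumptions, not the paper's definition.

```python
import numpy as np

def f1(target, recalled):
    """F1 between two binary arrays.

    ASSUMPTION: when neither array contains a spike, the score is 1.0.
    """
    tp = np.sum((target == 1) & (recalled == 1))
    fp = np.sum((target == 0) & (recalled == 1))
    fn = np.sum((target == 1) & (recalled == 0))
    denom = 2 * tp + fp + fn
    return 1.0 if denom == 0 else float(2 * tp / denom)

def f1_pattern(target, recalled):
    """One F1 over the whole (N, T) raster."""
    return f1(target, recalled)

def f1_per_neuron(target, recalled):
    """Mean of per-neuron F1 scores (rows of the raster)."""
    return float(np.mean([f1(target[i], recalled[i])
                          for i in range(target.shape[0])]))

def f1_per_step(target, recalled):
    """Mean of per-time-step F1 scores (columns of the raster)."""
    return float(np.mean([f1(target[:, t], recalled[:, t])
                          for t in range(target.shape[1])]))

# Toy raster: 3 neurons, 4 steps; the second spike is recalled one step late.
target = np.array([[1, 0, 0, 0],
                   [0, 1, 0, 0],
                   [0, 0, 0, 0]], dtype=np.uint8)
recalled = np.array([[1, 0, 0, 0],
                     [0, 0, 1, 0],
                     [0, 0, 0, 0]], dtype=np.uint8)

scores = (f1_pattern(target, recalled),
          f1_per_neuron(target, recalled),
          f1_per_step(target, recalled))
```

On this toy example the pattern-level and per-step scores are 0.5 while the per-neuron mean is 2/3, so a reported mean F1 is only interpretable once the averaging scheme is stated.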

Simulated Author's Rebuttal

We thank the referee for their constructive comments, which help clarify the scope and limitations of our results on heterogeneous delays for working memory in SNNs. We address each major comment below and will incorporate revisions to provide additional supporting analyses while preserving the focus on the synthetic benchmark results.

- Referee: [Abstract / Results] Abstract and main results: The claim that arbitrary target patterns are stored via 'sequential chain of overlapping Spiking Motifs' that 'uniquely predict spikes at the next time step' is load-bearing for the working-memory substrate conclusion, yet the manuscript provides no direct evidence (e.g., motif uniqueness metric, crosstalk analysis, or reconstruction of the learned motifs) that the D=41 windows are non-interfering or that chaining occurs without drift. The reported F1=1.0 with forward propagation could arise from BPTT discovering a narrow solution for the low-density synthetic patterns rather than a robust property of heterogeneous delays.

  Authors: We acknowledge that the current manuscript does not include explicit quantitative metrics for motif uniqueness or crosstalk. However, the observed perfect F1=1.0 over T=1000 steps, with recall propagating forward from the clamped initialization without apparent drift, constitutes indirect but strong evidence that the D-length motifs function as non-interfering predictors in this benchmark. Significant crosstalk or drift would be expected to degrade performance over long horizons. To directly address the concern, we will revise the manuscript to include (i) reconstruction and visualization of example learned motifs from the W tensor, (ii) a simple uniqueness check verifying that distinct D-windows map to distinct next-spike predictions, and (iii) clarification of the low spike density in the M=16 patterns. These additions will make the chaining mechanism explicit rather than inferred from aggregate F1 scores.

  Revision: yes

- Referee: [Abstract / Methods] The weakest assumption—that any target spike pattern decomposes into non-interfering D-length motifs that chain deterministically over T=1000 steps without timing-error accumulation—is not tested beyond the M=16 benchmark. No ablation on pattern density, no longer-horizon experiments, and no analysis of how the learned W tensor enforces motif uniqueness are provided; if motif overlap produces crosstalk for denser patterns, the energy-efficient neuromorphic claim does not follow.

  Authors: We agree that testing generalization beyond the M=16, T=1000 benchmark is necessary to support broader claims. The present work uses a controlled synthetic setting to isolate the effect of delay heterogeneity. In the revision we will add (i) ablations varying pattern density (spike rate per pattern) and M, (ii) results on modestly longer horizons (where compute permits), and (iii) analysis of the learned W tensor showing how delay-specific weights promote motif separation. We will also qualify the neuromorphic deployment claim to apply to sparse temporal patterns, explicitly noting that denser patterns may require further validation or hybrid mechanisms. These changes will better delineate the conditions under which the motif-chaining substrate is effective.

  Revision: yes
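A uniqueness check on the target patterns, of the kind discussed in the rebuttal, could be as simple as the following sketch. It tests a necessary condition for deterministic chaining: two identical windows of length $D$ must never be followed by different next-step spikes. The function name `find_window_conflicts` and the toy data are hypothetical and not taken from the paper.

```python
import numpy as np

def find_window_conflicts(patterns, D):
    """Find identical D-windows that are followed by different next steps.

    patterns : (M, N, T) binary array of target rasters.
    Returns a list of ((m1, t1), (m2, t2)) pairs, where t is the index of
    the predicted step and the D columns before it form the window.
    """
    seen = {}        # window bytes -> (next-step bytes, first location)
    conflicts = []
    M, _, T = patterns.shape
    for m in range(M):
        for t in range(D, T):
            key = patterns[m, :, t - D:t].tobytes()
            nxt = patterns[m, :, t].tobytes()
            if key not in seen:
                seen[key] = (nxt, (m, t))
            elif seen[key][0] != nxt:
                conflicts.append((seen[key][1], (m, t)))
    return conflicts

# Toy data (hypothetical): two patterns that share their first two steps
# but diverge at step 2, so a window of length 2 cannot disambiguate them.
A = np.array([[1, 0, 1, 0],
              [0, 1, 0, 1]], dtype=np.uint8)
B = np.array([[1, 0, 0, 0],
              [0, 1, 0, 0]], dtype=np.uint8)

conflicts_ab = find_window_conflicts(np.stack([A, B]), D=2)
conflicts_a = find_window_conflicts(A[None], D=2)
```

An empty result shows only that the targets are representable in principle; it does not show that the trained weights realize the mapping, so it complements rather than replaces the crosstalk analysis requested by the referee.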

Circularity Check

No circularity: empirical training outcome on synthetic benchmark

The paper defines a recurrent SNN with per-synapse delay tensor W and trains it end-to-end via surrogate-gradient BPTT to store M=16 target spike patterns, reporting an achieved mean F1=1.0 on the T=1000-step benchmark. This is an optimization result, not a first-principles derivation or prediction that reduces to the inputs by construction. No self-definitional steps, fitted inputs renamed as predictions, or load-bearing self-citations appear in the provided text; the motif-chaining mechanism is demonstrated rather than assumed as a uniqueness theorem. The derivation chain is therefore self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

free parameters (2)

- D=41

- N=512

axioms (1)

- Domain assumption: the surrogate-gradient approximation allows backpropagation through non-differentiable spike events.
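For readers unfamiliar with this assumption, the idea is to keep the hard threshold in the forward pass and substitute a smooth function for its derivative in the backward pass. The sketch below uses a fast-sigmoid surrogate, one common choice; the reviewed text does not say which surrogate the paper uses, and `threshold` and `beta` are illustrative values.

```python
import numpy as np

def spike_forward(v, threshold=1.0):
    """Forward pass: hard threshold, whose true derivative is zero
    everywhere except at the threshold."""
    return (v >= threshold).astype(np.float64)

def spike_surrogate_grad(v, threshold=1.0, beta=10.0):
    """Backward pass: fast-sigmoid surrogate derivative.

    ASSUMPTION: this particular surrogate and the slope `beta` are chosen
    for illustration only.
    """
    return 1.0 / (1.0 + beta * np.abs(v - threshold)) ** 2

v = np.array([0.0, 0.9, 1.0, 1.1, 2.0])  # example membrane potentials
spikes = spike_forward(v)
grads = spike_surrogate_grad(v)
```

The surrogate peaks at the threshold and decays on both sides, so neurons whose potential was close to firing receive the largest weight updates during BPTT.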

invented entities (1)

- Spiking Motif: no independent evidence

