Recognition: no theorem link

Verification Modulo Tested Library Contracts

Pith reviewed 2026-05-11 01:00 UTC · model grok-4.3

The pith

A counterexample-guided framework synthesizes library contracts that prove client programs correct and pass testing.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

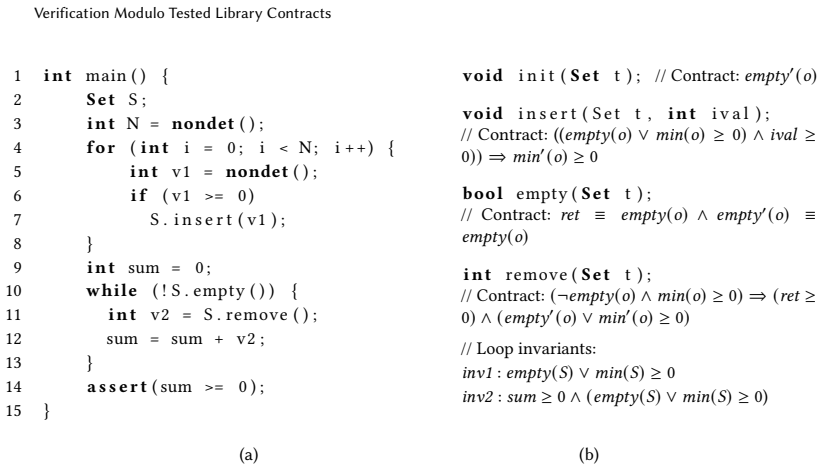

The central claim is that verification of a client program modulo its library can be reduced to the synthesis of adequate modular or contextual method contracts together with inductive invariants for the client. These contracts are found by a counterexample-guided loop that alternates between a generalizing CHC solver (implemented via ICE learning) and a testing engine that exercises the library implementation against the proposed contracts; the loop terminates when contracts are found that are both sufficient for the client proof and pass the library tests.

What carries the argument

The counterexample-guided learning framework that coordinates a CHC solver with a testing engine to infer adequate contracts and client invariants.

If this is right

- Once adequate contracts are synthesized, the client can be verified modularly using only those contracts.

- Contextual contracts often suffice where classical modular contracts would be harder to state or infer.

- The same loop can produce both the contracts and the inductive invariants needed for the client proof.

- The resulting contracts are guaranteed to hold on the library implementation as exercised by the tester.

Where Pith is reading between the lines

- The approach suggests a practical division of labor between deductive tools for the client and testing for the library.

- It could be extended to libraries whose contracts involve temporal or probabilistic properties if the tester can be adapted accordingly.

- Similar synthesis loops might apply to other settings where partial specifications are easier to test than to prove in full.

Load-bearing premise

A testing engine exists that can exercise the library sufficiently to expose any violation of the synthesized contracts.

What would settle it

An execution trace through the library that violates one of the synthesized contracts yet is never generated by the testing engine, allowing the client proof to succeed while the client actually fails at runtime.

Figures

read the original abstract

We consider the problem of verification modulo tested library contracts as a step towards automating the verification of client programs that use complex libraries. We formulate this problem as the synthesis of modular contracts for the library methods used by the client that are adequate to prove the client correct, and that also pass the scrutiny of a testing engine that tests the library against these contracts. We also consider a new form of method contracts called contextual contracts that arise in this setting that hold in the context of the client program, and can often be simpler and easier to infer than classical modular contracts. We provide a counterexample-guided learning framework to solve this problem, in which the synthesizer interacts with a constraint solver as well as the testing engine in order to infer adequate modular/contextual method contracts and inductive invariants for the client. The main synthesis engines we use are generalizing CHC solvers that are realized using ICE learning algorithms. We realize this framework in a tool called DUALIS and show its efficacy on benchmarks where clients call large libraries.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper formulates verification of client programs using complex libraries as the synthesis of adequate modular or contextual method contracts. These contracts must suffice to prove the client correct (via CHC/ICE-based invariant inference) while also passing validation by an external testing engine. The approach is realized as a counterexample-guided interaction loop among synthesizer, solver, and tester, implemented in the DUALIS tool and evaluated on benchmarks with large libraries.

Significance. If the soundness and completeness claims hold, the work offers a practical bridge between deductive verification and testing for library-dependent code, reducing the need for full library specifications. The introduction of contextual contracts (simpler contracts that hold only in the client context) is a useful conceptual contribution, and the reliance on generalizing CHC solvers via ICE learning provides a reusable technical core.

major comments (3)

- [Framework description and soundness argument (likely §3–4)] The central soundness argument rests on contracts that both enable a client proof and 'pass the scrutiny' of the testing engine. Because testing is necessarily partial, a contract can pass all generated tests yet be violated on some input, invalidating the client verification even when DUALIS reports success. The manuscript must specify the testing configuration (coverage criteria, input-generation strategy, number of tests) and any mechanism for detecting or bounding false-negative contract acceptance; without this, the efficacy claim on benchmarks cannot be assessed.

- [Definition of contextual contracts and CHC encoding] The adequacy of contextual contracts for client verification is asserted but requires a precise definition and a theorem showing that a contextual contract, together with the synthesized client invariants, implies the desired client property without assuming full modularity. The current high-level description leaves open whether the CHC encoding correctly captures the context restriction.

- [Evaluation section and benchmark tables] The evaluation reports efficacy on benchmarks but provides no data on test-suite size, coverage achieved, or cases where the testing engine could plausibly have missed violations. Table or section reporting these metrics is needed to substantiate that the testing scrutiny is sufficient for the claimed library sizes.

minor comments (2)

- [Algorithm description] Clarify the precise interface between the ICE learner and the testing engine (e.g., how counterexamples from testing are fed back as negative examples).

- [Introduction or related work] Add a short related-work paragraph contrasting the approach with prior contract-inference tools that rely solely on static analysis or on full verification of the library.

Simulated Author's Rebuttal

We thank the referee for the careful reading and constructive feedback on our manuscript. We address each major comment below and will revise the paper to incorporate additional details and clarifications as indicated.

read point-by-point responses

-

Referee: The central soundness argument rests on contracts that both enable a client proof and 'pass the scrutiny' of the testing engine. Because testing is necessarily partial, a contract can pass all generated tests yet be violated on some input, invalidating the client verification even when DUALIS reports success. The manuscript must specify the testing configuration (coverage criteria, input-generation strategy, number of tests) and any mechanism for detecting or bounding false-negative contract acceptance; without this, the efficacy claim on benchmarks cannot be assessed.

Authors: We agree that testing provides only partial coverage and cannot rule out all violations. In the revised manuscript we will add a dedicated subsection (in Section 5) that specifies the testing configuration used in the experiments: branch coverage as the criterion, a combination of random and boundary-value input generation, and a fixed budget of 1000 tests per library method (with up to three rounds of refinement). We will also add an explicit discussion of the approach's limitations, noting that testing serves as a practical filter rather than a complete check, and that the CHC-based verification step supplies complementary assurance. No formal bounding mechanism for false negatives is present beyond increasing test volume. revision: yes

-

Referee: The adequacy of contextual contracts for client verification is asserted but requires a precise definition and a theorem showing that a contextual contract, together with the synthesized client invariants, implies the desired client property without assuming full modularity. The current high-level description leaves open whether the CHC encoding correctly captures the context restriction.

Authors: We thank the referee for highlighting the need for greater formality. Section 3 already defines contextual contracts as contracts required to hold only at the call sites appearing in the client. We will strengthen the presentation by inserting an explicit theorem (Theorem 3.1) stating that satisfiability of the CHC system encoding the client, the contextual contract, and the inferred invariants implies that the client satisfies its specification whenever the library method obeys the contract at the observed call sites. The CHC encoding achieves the context restriction by generating clauses only for the concrete call sites present in the client program rather than universally quantifying over all possible inputs. A proof sketch will be added to the appendix. revision: yes

-

Referee: The evaluation reports efficacy on benchmarks but provides no data on test-suite size, coverage achieved, or cases where the testing engine could plausibly have missed violations. Table or section reporting these metrics is needed to substantiate that the testing scrutiny is sufficient for the claimed library sizes.

Authors: We accept that additional metrics would improve the evaluation. In the revised version we will insert a new table (Table 6) that, for every benchmark, reports the size of the generated test suite, the achieved statement and branch coverage percentages, and the number of testing rounds performed. We will also add a short paragraph in Section 6 discussing the possibility of missed violations for the largest libraries and explaining why the combination of high coverage targets and the subsequent verification step makes such misses unlikely in practice. revision: yes

Circularity Check

No circularity: framework uses independent external solver and testing engine

full rationale

The paper defines the problem as synthesizing contracts that are both adequate for client proofs and pass testing scrutiny, then presents a CEGIS-style framework where a synthesizer interacts with a CHC/ICE constraint solver and a separate testing engine. No derivation, equation, or claim reduces by construction to its own inputs; the testing engine and solver are treated as black-box external components whose behavior is not defined in terms of the synthesized contracts. No self-citations, fitted parameters renamed as predictions, or ansatzes smuggled via prior work appear in the abstract or problem formulation. The result is a standard algorithmic framework applied to a new problem, with no load-bearing self-referential steps.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption Generalizing CHC solvers realized using ICE learning algorithms can infer adequate contracts and inductive invariants.

- domain assumption A testing engine can scrutinize library behavior against the synthesized contracts.

invented entities (1)

-

contextual contracts

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. 2023. GPT-4 Technical Report.arXiv preprint arXiv:2303.08774 (2023)

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[2]

Aws Albarghouthi, Isil Dillig, and Arie Gurfinkel. 2016. Maximal specification synthesis. InProc. 43rd Annual ACM SIGPLAN-SIGACT Symp. Principles of Programming Languages (POPL 2016), St. Petersburg, USA, January 20-22, 2016. ACM, 789–801

2016

-

[3]

Apt and Ernst-Rüdiger Olderog

Krzysztof R. Apt and Ernst-Rüdiger Olderog. 2019. Fifty years of Hoare’s logic, Chapter 9.Form. Asp. Comput.31, 6 (2019)

2019

-

[4]

Madhusudan, Shambwaditya Saha, Shiyu Wang, and Tao Xie

Angello Astorga, P. Madhusudan, Shambwaditya Saha, Shiyu Wang, and Tao Xie. 2019. Learning stateful preconditions modulo a test generator(PLDI 2019). ACM, New York, NY, USA, 775–787

2019

-

[5]

Madhusudan, and Tao Xie

Angello Astorga, Shambwaditya Saha, Ahmad Dinkins, Felicia Wang, P. Madhusudan, and Tao Xie. 2021. Synthesizing contracts correct modulo a test generator.Proc. ACM Program. Lang.5, OOPSLA, Article 104 (Oct. 2021), 27 pages

2021

-

[6]

Haniel Barbosa, Clark W. Barrett, Martin Brain, Gereon Kremer, Hanna Lachnitt, Makai Mann, Abdalrhman Mohamed, Mudathir Mohamed, Aina Niemetz, Andres Nötzli, Alex Ozdemir, Mathias Preiner, Andrew Reynolds, Ying Sheng, Cesare Tinelli, and Yoni Zohar. 2022. cvc5: A Versatile and Industrial-Strength SMT Solver. InProc. 28th Intl. Conf. Tools and Algorithms f...

2022

-

[7]

Dirk Beyer and Jan Strejček. 2025. Improvements in software verification and witness validation: SV-COMP 2025. In International Conference on Tools and Algorithms for the Construction and Analysis of Systems. Springer, 151–186

2025

-

[8]

Pasareanu

Colin Blundell, Dimitra Giannakopoulou, and Corina S. Pasareanu. 2006. Assume-Guarantee Testing.ACM SIGSOFT Softw. Eng. Notes31, 2 (2006)

2006

-

[9]

Weining Cao, Guangyuan Wu, Tangzhi Xu, Yuan Yao, Hengfeng Wei, Taolue Chen, and Xiaoxing Ma. 2025. Clause2Inv: A Generate-Combine-Check Framework for Loop Invariant Inference.Proc. ACM on Software Engineering2, ISSTA (2025), 1009–1030

2025

-

[10]

Adrien Champion, Tomoya Chiba, Naoki Kobayashi, and Ryosuke Sato. 2018. ICE-Based Refinement Type Discovery for Higher-Order Functional Programs. InTACAS, Part I (LNCS, Vol. 10805). Springer, 365–384

2018

-

[11]

Leonardo De Moura and Nikolaj Bjørner. 2008. Z3: An Efficient SMT Solver. InProc. 14th Intl. Conf. Tools and Algorithms for the Construction and Analysis of Systems (TACAS 2008)(Budapest, Hungary). Springer-Verlag, Berlin, Heidelberg, 337–340

2008

-

[12]

Ezudheen, Daniel Neider, Deepak D’Souza, Pranav Garg, and P

P. Ezudheen, Daniel Neider, Deepak D’Souza, Pranav Garg, and P. Madhusudan. 2018. Horn-ICE learning for synthesizing invariants and contracts.PACMPL2, OOPSLA (2018), 131:1–131:25

2018

-

[13]

Grigory Fedyukovich, Sumanth Prabhu, Kumar Madhukar, and Aarti Gupta. 2018. Solving Constrained Horn Clauses Using Syntax and Data. InFMCAD. IEEE, 170–178

2018

-

[14]

Grigory Fedyukovich, Sumanth Prabhu, Kumar Madhukar, and Aarti Gupta. 2019. Quantified Invariants via Syntax- Guided Synthesis. InCA V, Part I (LNCS, Vol. 11561). Springer, 259–277

2019

-

[15]

Rustan M

Cormac Flanagan and K. Rustan M. Leino. 2001. Houdini, an Annotation Assistant for ESC/Java. InProc. Intl. Symp. Formal Methods Europe (FME ’01). Springer-Verlag, 500–517

2001

-

[16]

Madhusudan, and Daniel Neider

Pranav Garg, Christof Löding, P. Madhusudan, and Daniel Neider. 2014. ICE: A Robust Framework for Learning Invariants. InProc. 26th International Conference on Computer Aided Verification CA V 2014, Vienna, Austria, 2014. (LNCS, Vol. 8559), Armin Biere and Roderick Bloem (Eds.). Springer, 69–87

2014

-

[17]

Madhusudan, and Dan Roth

Pranav Garg, Daniel Neider, P. Madhusudan, and Dan Roth. 2016. Learning invariants using decision trees and implication counterexamples. InProc. 43rd ACM SIGPLAN-SIGACT Symp. Principles of Programming Languages (POPL 2016), St. Petersburg, USA, 2016, Rastislav Bodík and Rupak Majumdar (Eds.). ACM, 499–512

2016

-

[18]

Timon Gehr, Dimitar Dimitrov, and Martin T. Vechev. 2015. Learning Commutativity Specifications. InProc. 27th Intl. Conf. Computer Aided Verification (CA V 2015), San Francisco, USA, July 18-24, 2015 (LNCS, Vol. 9206). Springer, 307–323. 24 Verification Modulo Tested Library Contracts

2015

-

[19]

Lopes, Corneliu Popeea, and Andrey Rybalchenko

Sergey Grebenshchikov, Nuno P. Lopes, Corneliu Popeea, and Andrey Rybalchenko. 2012. Synthesizing software verifiers from proof rules. InPLDI. ACM, 405–416

2012

-

[20]

Arie Gurfinkel, Temesghen Kahsai, Anvesh Komuravelli, and Jorge A. Navas. 2015. The SeaHorn Verification Frame- work. InProc. 27th Intl. Conf. Computer Aided Verification (CA V 2015), San Francisco, USA, July 18-24, 2015. Springer, 343–361

2015

-

[21]

Hossein Hojjat and Philipp Rümmer. 2018. The ELDARICA Horn Solver. InFMCAD. IEEE, 158–164

2018

-

[22]

Bart Jacobs and Erik Poll. 2001. A Logic for the Java Modeling Language JML. InProc. 4th International Conference on Fundamental Approaches to Software Engineering (FASE ’01). Springer-Verlag, Berlin, Heidelberg, 284–299

2001

-

[23]

Temesghen Kahsai, Rody Kersten, Philipp Rümmer, and Martin Schäf. 2017. Quantified Heap Invariants for Object- Oriented Programs. InLPAR (EPiC Series in Computing, Vol. 46). EasyChair, 368–384

2017

-

[24]

Temesghen Kahsai, Philipp Rümmer, Huascar Sanchez, and Martin Schäf. 2016. JayHorn: A Framework for Verifying Java programs. InCA V, Part I (LNCS, Vol. 9779). Springer, 352–358

2016

-

[25]

Lahiri, Akash Lal, Aseem Rastogi, Subhajit Roy, and Rahul Sharma

Adharsh Kamath, Nausheen Mohammed, Aditya Senthilnathan, Saikat Chakraborty, Pantazis Deligiannis, Shuvendu K. Lahiri, Akash Lal, Aseem Rastogi, Subhajit Roy, and Rahul Sharma. 2024. Leveraging LLMs for Program Verification. InProc. Formal Methods in Computer-Aided Design (FMCAD 2024), Prague, Czech Republic, October 15-18, 2024, Nina Narodytska and Phili...

2024

- [26]

-

[27]

Daragh King, Vasileios Koutavas, and Laura Kovács. 2025. Llm-Based Generation of Weakest Preconditions and Precise Array Invariants. InProc. 13th IEEE/ACM Intl. Conf. Formal Methods in Software Engineering (FormaliSE). IEEE, 1–5

2025

-

[28]

McMillan

Anvesh Komuravelli, Nikolaj Bjørner, Arie Gurfinkel, and Kenneth L. McMillan. 2015. Compositional verification of procedural programs using horn clauses over integers and arrays. InProc. 15th Conf. Formal Methods in Computer-Aided Design(Austin, Texas)(FMCAD ’15). FMCAD Inc, Austin, Texas, 89–96

2015

-

[29]

Anvesh Komuravelli, Arie Gurfinkel, and Sagar Chaki. 2014. SMT-Based Model Checking for Recursive Programs. In Proc. 26th Intl. Conf. Computer Aided Verification (CA V 2014), Vienna, Austria, July 18-22, 2014. Springer, 17–34

2014

-

[30]

Sumit Lahiri and Subhajit Roy. 2022. Almost correct invariants: synthesizing inductive invariants by fuzzing proofs. InProc. 31st ACM SIGSOFT Intl. Symp. on Software Testing and Analysis (ISSTA 2022), South Korea, July 18 - 22, 2022, Sukyoung Ryu and Yannis Smaragdakis (Eds.). ACM, 352–364

2022

-

[31]

Gary T. Leavens. 2007. Tutorial on JML, the Java Modeling Language. InProc. 22nd IEEE/ACM Intl. Conf. Automated Software Engineering (ASE 2007)(Atlanta, Georgia, USA). ACM, New York, NY, USA, 573

2007

-

[32]

Francesco Logozzo. 2013. Practical specification and verification with code contracts.Ada Lett.33, 3 (Nov. 2013), 7–8. https://doi.org/10.1145/2658982.2534188

-

[33]

Yusuke Matsushita, Takeshi Tsukada, and Naoki Kobayashi. 2020. RustHorn: CHC-Based Verification for Rust Programs. InProgramming Languages and Systems, Peter Müller (Ed.). Springer International Publishing, Cham, 484–514

2020

-

[34]

McMillan

Kenneth L. McMillan. 2003. Interpolation and SAT-Based Model Checking. InProc. 15th Intl. Conf. Computer Aided Verification (CA V 2003), Boulder, USA, July 8-12, 2003 (LNCS, Vol. 2725), Warren A. Hunt Jr. and Fabio Somenzi (Eds.). Springer, 1–13

2003

-

[35]

Millstein, and David Walker

Anders Miltner, Saswat Padhi, Todd D. Millstein, and David Walker. 2020. Data-driven inference of representation invariants. InProc. 41st ACM SIGPLAN Intl, Conf. Programming Language Design and Implementation (PLDI 2020), London, UK, June 15-20, 2020. ACM, 1–15

2020

-

[36]

Nausheen Mohammed, Akash Lal, Aseem Rastogi, Rahul Sharma, and Subhajit Roy. 2025. LLM Assistance for Memory Safety. In47th IEEE/ACM International Conference on Software Engineering, ICSE 2025, Ottawa, ON, Canada, April 26 - May 6, 2025. IEEE, 1717–1728

2025

-

[37]

Sujit Kumar Muduli, Rohan Ravikumar Padulkar, and Subhajit Roy. 2024. Interactive Theorem Proving Modulo Fuzzing. InProc. 36th Intl. Conf. Computer Aided Verification (CA V 2024), Montreal, QC, Canada, July 24-27, 2024 (LNCS, Vol. 14681), Arie Gurfinkel and Vijay Ganesh (Eds.). Springer, 480–493

2024

-

[38]

Sujit Kumar Muduli and Subhajit Roy. 2022. Satisfiability modulo fuzzing: a synergistic combination of SMT solving and fuzzing.Proc. ACM Program. Lang.6, OOPSLA2 (2022), 1236–1263

2022

-

[39]

Millstein

Saswat Padhi, Rahul Sharma, and Todd D. Millstein. 2016. Data-driven precondition inference with learned features. In Proc. 37th ACM SIGPLAN Conference on Programming Language Design and Implementation (PLDI 2016), Santa Barbara, USA, June 13-17, 2016, Chandra Krintz and Emery D. Berger (Eds.). ACM, 42–56

2016

-

[40]

Kexin Pei, David Bieber, Kensen Shi, Charles Sutton, and Pengcheng Yin. 2023. Can Large Language Models Reason about Program Invariants?. InInternational Conference on Machine Learning, ICML 2023, 23-29 July 2023, Honolulu, Hawaii, USA (Proc. Machine Learning Research, Vol. 202), Andreas Krause, Emma Brunskill, Kyunghyun Cho, Barbara Engelhardt, Sivan Sab...

2023

-

[41]

Elizabeth Polgreen, Andrew Reynolds, and Sanjit A. Seshia. 2022. Satisfiability and Synthesis Modulo Oracles. InProc. 23rd Intl. Conf. Verification, Model Checking, and Abstract Interpretation (VMCAI 2022), Philadelphia, USA, January 16-18, 2022 (LNCS, Vol. 13182). Springer, 263–284

2022

-

[42]

Sumanth Prabhu, Grigory Fedyukovich, Kumar Madhukar, and Deepak D’Souza. 2021. Specification Synthesis with Constrained Horn Clauses. InPLDI. ACM, 1203–1217

2021

-

[43]

Sumanth Prabhu, Kumar Madhukar, and R Venkatesh. 2018. Efficiently learning safety proofs from appearance as well as behaviours. InSAS (LNCS, Vol. 11002). Springer, 326–343

2018

-

[44]

Sriram Sankaranarayanan, Swarat Chaudhuri, Franjo Ivancic, and Aarti Gupta. 2008. Dynamic inference of likely data preconditions over predicates by tree learning. InProc. ACM/SIGSOFT Intl. Symp. on Software Testing and Analysis (ISSTA 2008), Seattle, USA, July 20-24, 2008. ACM, 295–306

2008

-

[45]

Fabio Somenzi and Aaron R. Bradley. 2011. IC3: where monolithic and incremental meet. InProc. Intl. Conf. Formal Methods in Computer-Aided Design (FMCAD 2011), Austin, USA, October 30 - November 02, 2011, Per Bjesse and Anna Slobodová (Eds.). FMCAD Inc., 3–8

2011

-

[46]

Gemini Team, Petko Georgiev, Ving Ian Lei, Ryan Burnell, Libin Bai, Anmol Gulati, Garrett Tanzer, Damien Vincent, Zhufeng Pan, Shibo Wang, et al. 2024. Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context.arXiv preprint arXiv:2403.05530(2024)

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[47]

Cheng Wen, Jialun Cao, Jie Su, Zhiwu Xu, Shengchao Qin, Mengda He, Haokun Li, Shing-Chi Cheung, and Cong Tian. 2024. Enchanting program specification synthesis by large language models using static analysis and program verification. InProc. Intl. Conf. Computer Aided Verification (CA V 2024). Springer, 302–328

2024

-

[48]

Zhe Zhou, Robert Dickerson, Benjamin Delaware, and Suresh Jagannathan. 2021. Data-driven abductive inference of library specifications.Proc. ACM Program. Lang.5, OOPSLA (2021), 1–29

2021

-

[49]

maximal

He Zhu, Stephen Magill, and Suresh Jagannathan. 2018. A data-driven CHC solver. InPLDI. ACM, 707–721. 26 Verification Modulo Tested Library Contracts A Benchmark Details Name Lbry LOC Clnt LOC #Obs-Mthds #Mthd Specs #LoopInvs AlternatingList 100 15 2 1 1 AtomicHashMap (1 - 5) ∼3k 17 - 28 4 1 - 2 1 - 3 AtomicLinkedList (1 - 2) ∼500 16 - 19 1 - 2 2 2 Binary...

2018

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.