Recognition: unknown

MedPRMBench: A Fine-grained Benchmark for Process Reward Models in Medical Reasoning

Pith reviewed 2026-05-10 05:54 UTC · model grok-4.3

The pith

MedPRMBench is the first benchmark to evaluate process reward models on fine-grained medical reasoning errors.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

MedPRMBench is the first process-level reward model benchmark for the medical domain. It is built through a three-phase pipeline based on Clinical Reasoning Blueprints that systematically generates high-quality evaluation data covering 14 fine-grained error types across Simplicity, Soundness, and Sensitivity categories with a 4-level severity grading to quantify clinical impact. The benchmark includes 6500 questions with 13000 reasoning chains and 113910 step-level labels plus additional training questions, and its medical PRM baseline achieves 87.1 percent overall PRMScore while serving as a plug-and-play verifier that improves downstream medical QA accuracy by 3.2 to 6.7 percentage points.

What carries the argument

The three-phase pipeline based on Clinical Reasoning Blueprints that generates evaluation data covering 14 fine-grained error types across three categories with a 4-level severity grading system.

If this is right

- Process reward models can now be tested for their ability to detect medical reasoning errors at the step level across 14 specific types.

- A trained medical PRM can function as a plug-and-play verifier that raises accuracy on medical question answering tasks.

- Current frontier, open-source, and medical-specialized models all show critical weaknesses in detecting medical reasoning errors.

- Future PRM training should target the identified weaknesses in simplicity, soundness, and sensitivity errors.

Where Pith is reading between the lines

- Widespread use of this benchmark could support safer deployment of reasoning models in healthcare settings by verifying error detection before real-world use.

- The severity grading approach might transfer to benchmarks in other high-stakes domains that need to prioritize errors by potential impact.

- The generated dataset could serve as training material to directly improve medical PRMs rather than only for evaluation.

- Linking the benchmark to existing medical datasets could create combined pipelines for training and verifying reliable clinical AI assistants.

Load-bearing premise

The three-phase pipeline based on Clinical Reasoning Blueprints produces data that accurately covers the 14 error types and assigns 4-level severity grades that reflect real clinical impact.

What would settle it

Independent review by clinicians of a sample of the benchmark's labeled reasoning chains that shows low agreement with the assigned error types or severity levels.

Figures

read the original abstract

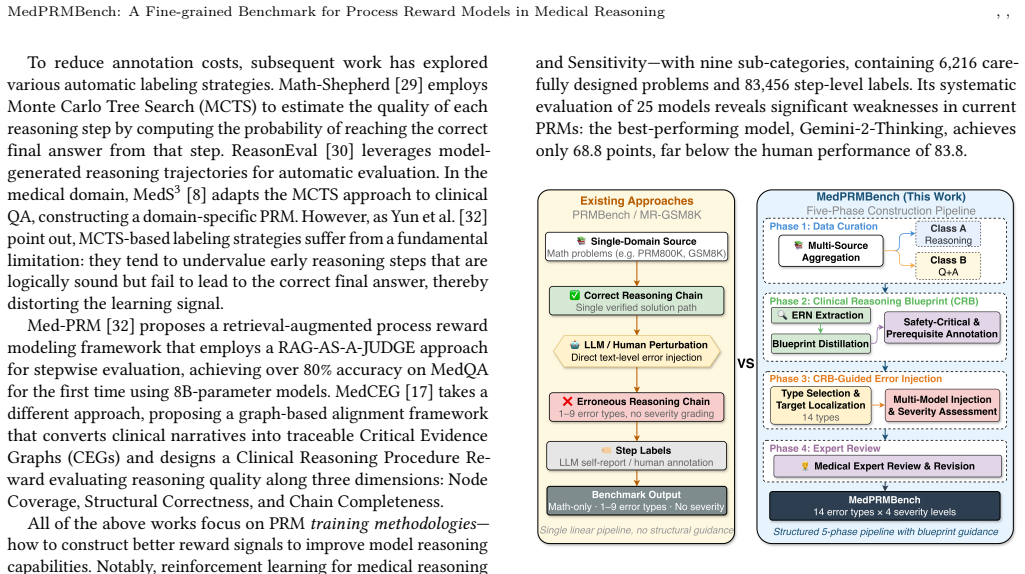

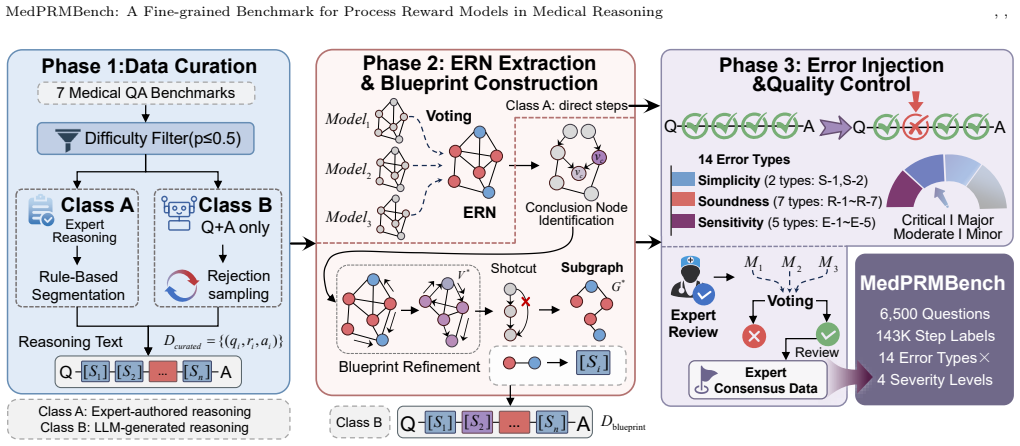

Process-Level Reward Models (PRMs) are essential for guiding complex reasoning in large language models, yet existing PRM benchmarks cover only general domains such as mathematics, failing to address medical reasoning -- which is uniquely characterized by safety criticality, knowledge intensity, and diverse error patterns. Without a reliable medical PRM evaluation framework, we cannot quantify models' error detection capabilities in clinical reasoning, leaving their safety in real-world healthcare applications unverified. We propose MedPRMBench, the first process-level reward model benchmark for the medical domain. Built through a three-phase pipeline based on Clinical Reasoning Blueprints (CRBs), MedPRMBench systematically generates high-quality evaluation data from seven medical QA sources, covering 14 fine-grained error types across three categories (Simplicity, Soundness, and Sensitivity) with the first 4-level severity grading system to quantify clinical impact. The benchmark comprises 6{,}500 questions with 13{,}000 reasoning chains and 113{,}910 step-level labels, plus 6{,}879 questions for training. Our medical PRM baseline achieves an 87.1\% overall PRMScore -- substantially surpassing all baselines -- and serves as a plug-and-play verifier that improves downstream medical QA accuracy by 3.2--6.7 percentage points. Systematic evaluation spanning proprietary frontier models, open-source reasoning models, and medical-specialized models reveals critical weaknesses in current models' medical reasoning error detection capabilities, providing clear directions for future PRM improvement.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces MedPRMBench, the first process-level reward model (PRM) benchmark for medical reasoning. It constructs the benchmark via a three-phase pipeline based on Clinical Reasoning Blueprints (CRBs) from seven medical QA sources, generating 6,500 evaluation questions with 13,000 reasoning chains and 113,910 step-level labels covering 14 fine-grained error types across Simplicity, Soundness, and Sensitivity categories, plus a novel 4-level severity grading. A medical-specialized PRM baseline achieves 87.1% overall PRMScore, outperforms baselines, and improves downstream medical QA accuracy by 3.2–6.7 points when used as a verifier. Systematic evaluation of frontier, open-source, and medical models reveals weaknesses in current PRMs' medical error detection.

Significance. If the generated labels and severity grades are reliable, this benchmark fills an important gap by providing the first fine-grained, process-level evaluation framework for PRMs in a high-stakes domain. The scale, error taxonomy, and downstream gains position it as a useful resource for developing safer medical LLMs. The reported model weaknesses offer concrete directions for future work on process supervision in clinical reasoning.

major comments (3)

- [§3] §3 (Benchmark Construction, three-phase CRB pipeline): The error labels and 4-level severity grades are generated synthetically without reported external validation by practicing clinicians or inter-rater agreement metrics. This is load-bearing for the central claims, as the 87.1% PRMScore, 3.2–6.7 pp downstream improvements, and conclusion of 'critical weaknesses' all presuppose that the 14 error types and severity scale accurately reflect real clinical reasoning failures and patient impact.

- [§4] §4 (Experiments and Evaluation): The definition and computation of the overall PRMScore (reported at 87.1%) is not fully specified in a way that allows assessment of whether it penalizes false positives/negatives appropriately across severity levels or whether the medical PRM baseline was trained with any overlap to the evaluation set. This affects interpretation of the 'substantially surpassing all baselines' claim.

- [§4.3] §4.3 (Downstream QA improvement): The plug-and-play verifier usage that yields 3.2–6.7 pp gains lacks detail on how the PRM is integrated (e.g., rejection sampling, step filtering thresholds, or whether it requires domain-specific fine-tuning), making it difficult to assess generalizability or reproducibility of the reported accuracy improvements.

minor comments (3)

- The paper should include a limitations section explicitly discussing potential artifacts from the synthetic pipeline and the absence of clinician validation.

- Figure 2 or the error taxonomy table would benefit from an example reasoning chain annotated with all 14 error types and severity levels for clarity.

- Ensure all seven source QA datasets are cited with their original references and any preprocessing steps are detailed to allow replication.

Simulated Author's Rebuttal

We thank the referee for their constructive comments, which have helped us improve the clarity and rigor of our manuscript. We address each of the major comments point by point below.

read point-by-point responses

-

Referee: [§3] §3 (Benchmark Construction, three-phase CRB pipeline): The error labels and 4-level severity grades are generated synthetically without reported external validation by practicing clinicians or inter-rater agreement metrics. This is load-bearing for the central claims, as the 87.1% PRMScore, 3.2–6.7 pp downstream improvements, and conclusion of 'critical weaknesses' all presuppose that the 14 error types and severity scale accurately reflect real clinical reasoning failures and patient impact.

Authors: We acknowledge the referee's concern regarding the synthetic generation of labels. The three-phase pipeline leverages Clinical Reasoning Blueprints extracted from seven established medical QA sources, with error types and severity levels defined based on clinical reasoning literature and expert input during blueprint creation. Although we did not perform additional inter-rater agreement studies with practicing clinicians for this benchmark release, the labels follow a systematic, reproducible process. In the revised manuscript, we have added a dedicated paragraph in §3 explaining the construction rationale with supporting references to medical guidelines, and we have included a new Limitations section that explicitly discusses the synthetic nature of the annotations and outlines plans for future clinician validation. This addresses the transparency issue while preserving the benchmark's contributions. revision: partial

-

Referee: [§4] §4 (Experiments and Evaluation): The definition and computation of the overall PRMScore (reported at 87.1%) is not fully specified in a way that allows assessment of whether it penalizes false positives/negatives appropriately across severity levels or whether the medical PRM baseline was trained with any overlap to the evaluation set. This affects interpretation of the 'substantially surpassing all baselines' claim.

Authors: We apologize for the insufficient detail on the PRMScore. The overall PRMScore is defined as the severity-weighted average of per-step classification accuracy across all error types, where higher severity levels (e.g., level 4) receive higher weights to emphasize critical errors. The medical-specialized PRM baseline was trained exclusively on the 6,879-question training split, with a strict separation from the 6,500-question evaluation set to prevent data leakage. We have revised §4 to include the precise mathematical definition of PRMScore, the weighting formula, and explicit confirmation of the non-overlapping splits. These additions enable readers to fully evaluate the metric and the baseline's performance. revision: yes

-

Referee: [§4.3] §4.3 (Downstream QA improvement): The plug-and-play verifier usage that yields 3.2–6.7 pp gains lacks detail on how the PRM is integrated (e.g., rejection sampling, step filtering thresholds, or whether it requires domain-specific fine-tuning), making it difficult to assess generalizability or reproducibility of the reported accuracy improvements.

Authors: We agree that additional implementation details are essential for reproducibility. The PRM serves as a plug-and-play verifier without requiring further domain-specific fine-tuning. Integration involves scoring each reasoning step with the PRM, applying a threshold of 0.5 to filter invalid steps, and using rejection sampling to select the chain with the highest aggregate score among valid candidates. We have expanded §4.3 with a step-by-step description of this process, including the threshold value, pseudocode for the verification procedure, and notes on how it can be applied to other models. This should facilitate replication and assessment of generalizability. revision: yes

Circularity Check

No significant circularity in empirical benchmark construction

full rationale

The paper constructs MedPRMBench empirically through a three-phase pipeline applied to seven external medical QA sources, producing labeled reasoning chains and evaluating PRM performance on them. No equations, fitted parameters, or predictions are presented; the central claims rest on data generation and model evaluation against independent baselines rather than any self-referential derivation. The CRB pipeline is described as a methodological choice without reducing to prior self-citations or ansatzes that would force the benchmark outcomes. This is standard benchmark work with no load-bearing self-definition or renaming of results.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Clinical Reasoning Blueprints (CRBs) provide a valid and comprehensive framework for generating realistic medical reasoning chains and error patterns.

Reference graph

Works this paper leans on

-

[1]

Aho, Michael R

Alfred V. Aho, Michael R. Garey, and Jeffrey D. Ullman. 1972. The Transitive Reduction of a Directed Graph.SIAM J. Comput.1, 2 (1972), 131–137. doi:10. 1137/0201008

1972

-

[2]

I ˜nigo Alonso, Maite Oronoz, and Rodrigo Agerri. 2024. MedExpQA: Multilingual Benchmarking of Large Language Models for Medical Question Answering. Artificial Intelligence in Medicine155 (2024), 102938

2024

- [3]

-

[5]

Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Peiyi Wang, Qihao Zhu, Runxin Xu, Ruoyu Zhang, Shirong Ma, Xiao Bi, et al. 2025. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning.arXiv preprint arXiv:2501.12948(2025)

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[6]

Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. 2021. Measuring Massive Multitask Language Under- standing. InProceedings of the International Conference on Learning Representations (ICLR)

2021

-

[7]

Aaron Jaech, Adam Kalai, Adam Lerer, Adam Richardson, Ahmed El-Kishky, Aiden Low, Alec Helyar, Aleksander Madry, Alex Beutel, Alex Carney, et al. 2024. Openai o1 system card.arXiv preprint arXiv:2412.16720(2024)

work page internal anchor Pith review Pith/arXiv arXiv 2024

- [8]

-

[9]

Di Jin, Eileen Pan, Nassim Oufattole, Wei-Hung Weng, Hanyi Fang, and Peter Szolovits. 2021. What Disease does this Patient Have? A Large-scale Open Domain Question Answering Dataset from Medical Exams.Applied Sciences11, 14 (2021), 6421

2021

-

[10]

Cohen, and Xinghua Lu

Qiao Jin, Bhuwan Dhingra, Zhengping Liu, William W. Cohen, and Xinghua Lu

-

[11]

In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Pro- cessing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP)

PubMedQA: A Dataset for Biomedical Research Question Answering. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Pro- cessing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP). 2567–2577

2019

-

[12]

Hyunjae Kim, Hyeon Hwang, Jiwoo Lee, Sihyeon Park, Dain Kim, Taewhoo Lee, Chanwoong Yoon, Jiwoong Sohn, Jungwoo Park, Olga Reykhart, Thomas Fetherston, Donghee Choi, Soo Heon Kwak, Qingyu Chen, and Jaewoo Kang

-

[13]

doi:10.1038/s41746-025-01653-8

Small language models learn enhanced reasoning skills from medical textbooks.npj Digital Medicine8, 1 (2025), 240. doi:10.1038/s41746-025-01653-8

- [14]

-

[15]

Hunter Lightman, Vineet Kosaraju, Yuri Burda, Harri Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, and Karl Cobbe. 2023. Let’s Verify Step by Step.arXiv preprint arXiv:2305.20050(2023)

work page internal anchor Pith review arXiv 2023

-

[16]

Hunter Lightman, Vineet Kosaraju, Yuri Burda, Harri Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, and Karl Cobbe. 2024. Let’s Verify Step by Step. InThe Twelfth International Conference on Learning Representations. https://openreview.net/forum?id=v8L0pN6EOi

2024

-

[17]

Zicheng Lin, Zhibin Gou, Tian Gong, Zhicheng Liu, Yinghui Wang, Zhengguang Yang, Zhicheng Jiao, Qingjie Cai, Haotian Shi, Yukang Shao, et al . 2024. Crit- icBench: Benchmarking LLMs for Critique-Correct Reasoning. InFindings of the Association for Computational Linguistics: ACL 2024. 530–546

2024

- [18]

-

[19]

Yutian Mu, Hao Sun, Jingyi Xu, Jiaqi Gao, Yizhou Ren, Chengqi Zhu, and Jie Zhu

- [20]

-

[21]

NovaSky Team. 2025. Sky-T1: Train your own O1 preview model within $450. https://novasky-ai.github.io/posts/sky-t1/ Official project page

2025

-

[22]

Long Ouyang, Jeffrey Wu, Xu Jiang, Diogo Almeida, Carroll Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, et al. 2022. Training Language Models to Follow Instructions with Human Feedback. In Advances in Neural Information Processing Systems, Vol. 35. 27730–27744

2022

-

[23]

Ankit Pal, Logesh Kumar Umapathi, and Malaikannan Sankarasubbu. 2022. MedMCQA: A Large-scale Multi-Subject Multi-Choice Dataset for Medical Domain Question Answering. InProceedings of the Conference on Health, Inference, and Learning (CHIL). 248–260

2022

-

[24]

Qwen Team. 2025. QwQ-32B: Embracing the Power of Reinforcement Learning. https://qwenlm.github.io/blog/qwq-32b/

2025

-

[25]

Alexandre Sallinen, Antoni-Joan Solergibert, Michael Zhang, Guillaume Boy´e, Maud Dupont-Roc, Xavier Theimer-Lienhard, Etienne Boisson, Bastien Bernath, Hichem Hadhri, Antoine Tran, Tahseen Rabbani, Trevor Brokowski, Meditron Medical Doctor Working Group, Tim G. J. Rudner, and Mary-Anne Hartley. 2025. Llama-3-Meditron: An Open-Weight Suite of Medical LLMs...

2025

- [26]

-

[27]

Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Mingchuan Zhang, Yifei Li, Yu Wu, and Daya Guo. 2024. DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models.arXiv preprint arXiv:2402.03300(2024)

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[28]

Charlie Snell, Jaehoon Lee, Kelvin Xu, and Aviral Kumar. 2024. Scaling LLM Test- Time Compute Optimally can be More Effective than Scaling Model Parameters. arXiv preprint arXiv:2408.03314(2024)

work page Pith review arXiv 2024

-

[29]

Mingyang Song, Zhaochen Jiang, Fengli Zhang, Bingqian Qin, Xin-Yu Mao, and Huimin Hu. 2025. PRMBench: A Fine-grained and Challenging Benchmark for Process-Level Reward Models. InProceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (ACL)

2025

-

[30]

Jonathan Uesato, Nate Kushman, Ramana Kumar, Francis Song, Noah Siegel, Lisa Wang, Antonia Creswell, Geoffrey Irving, and Irina Higgins. 2022. Solving Math Word Problems with Process- and Outcome-Based Feedback.arXiv preprint arXiv:2211.14275(2022)

work page internal anchor Pith review Pith/arXiv arXiv 2022

-

[31]

arXiv preprint arXiv:2504.06196 (2025)

Eric Wang, Samuel Schmidgall, Paul F. Jaeger, Fan Zhang, Rory Pilgrim, Yossi Matias, Joelle Barral, David Fleet, and Shekoofeh Azizi. 2025. TxGemma: Efficient and Agentic LLMs for Therapeutics.arXiv preprint arXiv:2504.06196(2025). https://arxiv.org/abs/2504.06196

- [32]

- [33]

-

[34]

An Yang, Anfeng Li, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chang Gao, Chengen Huang, Chenxu Lv, et al. 2025. Qwen3 technical report.arXiv preprint arXiv:2505.09388(2025)

work page internal anchor Pith review Pith/arXiv arXiv 2025

- [35]

- [36]

-

[37]

Zhongshen Zeng, Yinhong Liu, Yingjia Wan, Haiyun Jiang, and Jiaya Jia. 2024. MR-Ben: A Meta-Reasoning Benchmark for Evaluating System-2 Thinking in LLMs. InAdvances in Neural Information Processing Systems, Vol. 37

2024

-

[38]

Kaiyan Zhang, Sihang Zeng, Ermo Hua, Ning Ding, Zhang-Ren Chen, Zhiyuan Ma, Haoxin Li, Ganqu Cui, Biqing Qi, Xuekai Zhu, Xingtai Lv, Jinfang Hu, Zhiyuan Liu, and Bowen Zhou. 2024. UltraMedical: Building Specialized Generalists in Biomedicine.arXiv preprint arXiv:2406.03949(2024). https://arxiv.org/abs/2406. 03949

- [39]

- [40]

-

[41]

The patient has hypertension because the blood pressure is high

Yuxin Zuo, Shang Zhao, Zhengliang Shang, Ao Li, Mingchen Chen, Yifei Zhong, Yutong Shu, Qingyun Huang, Shi Shu, Zhilin Wang, et al. 2025. MedXpertQA: Benchmarking Expert-Level Medical Reasoning and Understanding. InProceed- ings of the International Conference on Machine Learning (ICML). , , Lingyan Wu, Xiang Zheng, Weiqi Zhai, Wei Wang, Xuan Ren, Zifan Z...

2025

-

[42]

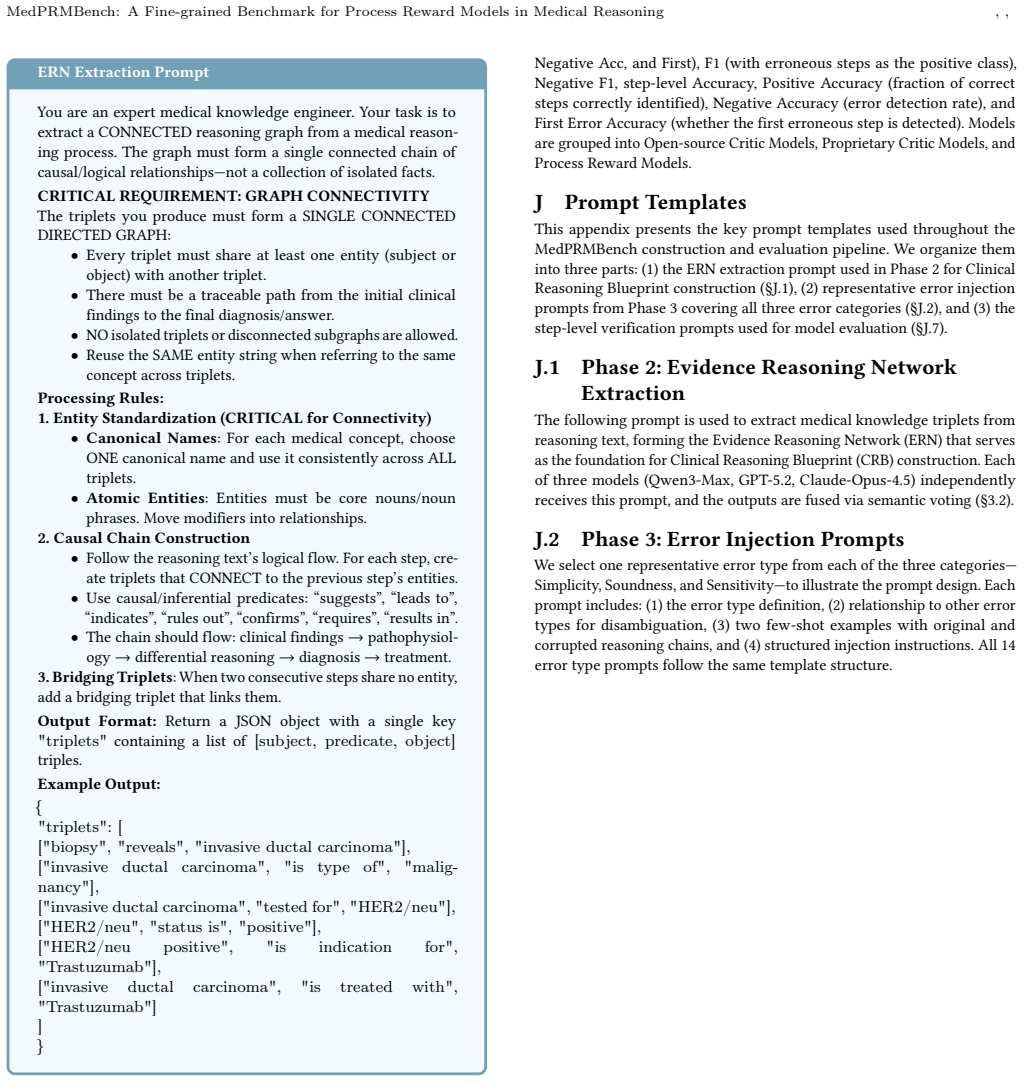

• Atomic Entities: Entities must be core nouns/noun phrases

Entity Standardization (CRITICAL for Connectivity) • Canonical Names: For each medical concept, choose ONE canonical name and use it consistently across ALL triplets. • Atomic Entities: Entities must be core nouns/noun phrases. Move modifiers into relationships

-

[43]

suggests

Causal Chain Construction • Follow the reasoning text’s logical flow. For each step, cre- ate triplets that CONNECT to the previous step’s entities. • Use causal/inferential predicates: “suggests”, “leads to”, “indicates”, “rules out”, “confirms”, “requires”, “results in”. • The chain should flow: clinical findings → pathophysiol- ogy→differential reasoni...

-

[44]

triplets

Bridging Triplets: When two consecutive steps share no entity, add a bridging triplet that links them. Output Format:Return a JSON object with a single key "triplets" containing a list of [subject, predicate, object] triples. Example Output: { "triplets": [ ["biopsy", "reveals", "invasive ductal carcinoma"], ["invasive ductal carcinoma", "is type of", "ma...

-

[45]

Step 2:A ganglioglioma was included in the initial differential but was disfavored due to the lesion’s T2 isointensity rather than hyperintensity

Original Process Step 1:An oligodendroglioma was considered given the seizure pre- sentation but was unlikely because the mass lacked the expected T2 hyperintensity. Step 2:A ganglioglioma was included in the initial differential but was disfavored due to the lesion’s T2 isointensity rather than hyperintensity. Step 3:A pleomorphic xanthoastrocytoma was c...

-

[46]

Step 2:A ganglioglioma was included in the initial differential but was disfavored due to the lesion’s T2 isointensity rather than hyperintensity

Modified Process Step 1:An oligodendroglioma was considered given the seizure pre- sentation but was unlikely because the mass lacked the expected T2 hyperintensity. Step 2:A ganglioglioma was included in the initial differential but was disfavored due to the lesion’s T2 isointensity rather than hyperintensity. Step 3:To further refine the likelihood of g...

-

[47]

Figure 15: Example of S-1 (Non-Redundancy) error from MedPRMBench (Simplicity category)

Reason Adds clinically plausible but diagnostically non-contributory steps (repeat epilepsy-protocol MRI and prolonged video-EEG) that do not change the mass differential or final diagnosis. Figure 15: Example of S-1 (Non-Redundancy) error from MedPRMBench (Simplicity category). , , Lingyan Wu, Xiang Zheng, Weiqi Zhai, Wei Wang, Xuan Ren, Zifan Zhang, Hu ...

-

[48]

Step 2:Autoimmune myelofibrosis related to SLE was suspected based on family history of SLE, photosensitivity, arthralgias, and atypical marrow features for PMF

Original Process Step 1:Hemolytic anemia was excluded given a negative direct Coombs test and normal reticulocyte count and haptoglobin levels. Step 2:Autoimmune myelofibrosis related to SLE was suspected based on family history of SLE, photosensitivity, arthralgias, and atypical marrow features for PMF

-

[49]

Modified Process Step 1:Given the recurrent severe anemia with mildly elevated LDH, proceed under the assumption that hemolysis is not the primary driver and focus on an autoimmune marrow process.←error Step 2:Autoimmune myelofibrosis related to SLE was suspected because the overall picture is most consistent with an SLE-associated autoimmune myelofibrosi...

-

[50]

Figure 16: Example of S-2 (Non-Circular Logic) error from MedPRMBench (Simplicity category)

Reason Uses circular/tautological logic by concluding SLE-associated autoim- mune myelofibrosis and justifying it by restating that the findings point to SLE-associated autoimmune myelofibrosis without advancing differential reasoning. Figure 16: Example of S-2 (Non-Circular Logic) error from MedPRMBench (Simplicity category). K.2 Soundness R-1: Evidence-...

-

[51]

Step 2:Nocardiosis was considered due to the potential for sulfur granules in sinus specimens

Original Process Step 1:Fungal sinusitis was initially suspected because chronic sinus opacification and a mass-like lesion on CT can mimic invasive fungal disease. Step 2:Nocardiosis was considered due to the potential for sulfur granules in sinus specimens. Step 3:Botryomycosis entered the differential for similar histologic granules. Step 4:A neoplasm ...

-

[52]

ER-SBS 2023 consensus statement

Modified Process Step 1:Fungal sinusitis was initially suspected because chronic sinus opacification and a mass-like lesion on CT can mimic invasive fungal disease. Step 2:Although sulfur granules can be seen in several infections, the presence of a focally hyperdense intranasal lesion in a diabetic patient strongly favors sinonasal mucormycosis rather th...

2023

-

[53]

Figure 17: Example of R-1 (Evidence-Based Soundness) error from MedPRMBench (Soundness category)

Reason Misattributes the histopathologic finding by replacing actinomycotic branching filaments with broad, sparsely septate right-angle branching hyphae (Mucorales), leading to an incorrect final diagnosis of rhinocere- bral mucormycosis. Figure 17: Example of R-1 (Evidence-Based Soundness) error from MedPRMBench (Soundness category). MedPRMBench: A Fine...

-

[54]

Step 2:Cerebral amyloid angiopathy was considered as an alternative etiology given the lobar location

Original Process Step 1:Hypertensive hemorrhage was the initial consideration given the patient’s history of hypertension and the location of the bleed. Step 2:Cerebral amyloid angiopathy was considered as an alternative etiology given the lobar location. Step 3:A vascular malformation was included in the differential given the severity of the hemorrhage....

-

[55]

Modified Process Step 1:Hypertensive hemorrhage was the initial consideration given the patient’s history of hypertension and the location of the bleed. Step 2:Cerebral amyloid angiopathy was considered as an alternative etiology given the lobar location, but this is unlikely because the patient is only 52 years old and CAA typically presents in patients ...

-

[56]

Figure 18: Example of R-2 (Step Consistency) error from MedPRMBench (Soundness category)

Reason Step consistency error: the modified process introduces contradictory reasoning by first considering CAA and vascular malformation as alternatives, then immediately dismissing them based on age and history without proper evaluation, creating an inconsistent diagnostic trajectory. Figure 18: Example of R-2 (Step Consistency) error from MedPRMBench (...

-

[57]

ground-glass

Original Process Step 1:Implant-related complication was suspected although the implants were loaded and functional. Step 2:Osteoid osteoma was the primary radiographic differential for small benign bone neoplasia causing pain. Step 3:Osteoblastoma was considered due to clinical and histologic similarities with osteoid osteoma. Step 4:Ossifying fibroma an...

-

[58]

Modified Process Step 1–3:Same as original. Step 4:Ossifying fibroma and peripheral osteoma — “In pediatric and adolescent patients, ossifying fibroma and peripheral osteoma are commonly symptomatic and can present with nocturnal pain similar to osteoid osteoma; therefore they remain leading considerations here. ”←error Step 5:Osteosarcoma was excluded ba...

-

[59]

Figure 19: Example of R-3 (Contextual Applicability) error from MedPRMBench (Soundness category)

Reason Misapplies pediatric/adolescent symptom patterns of ossifying fi- broma/pgai ceripheral osteoma to this 37-year-old adult, inappro- priately elevating these entities as leading considerations based on nocturnal pain. Figure 19: Example of R-3 (Contextual Applicability) error from MedPRMBench (Soundness category). , , Lingyan Wu, Xiang Zheng, Weiqi ...

-

[60]

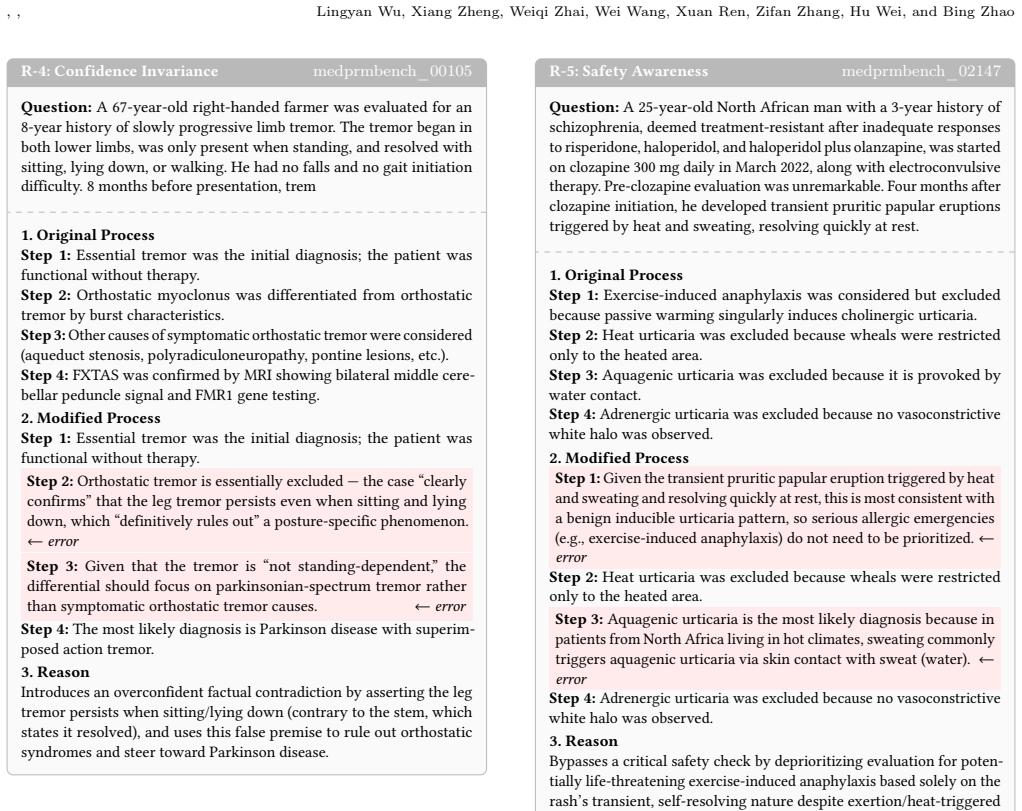

Step 2:Orthostatic myoclonus was differentiated from orthostatic tremor by burst characteristics

Original Process Step 1:Essential tremor was the initial diagnosis; the patient was functional without therapy. Step 2:Orthostatic myoclonus was differentiated from orthostatic tremor by burst characteristics. Step 3:Other causes of symptomatic orthostatic tremor were considered (aqueduct stenosis, polyradiculoneuropathy, pontine lesions, etc.). Step 4:FX...

-

[61]

clearly confirms

Modified Process Step 1:Essential tremor was the initial diagnosis; the patient was functional without therapy. Step 2:Orthostatic tremor is essentially excluded — the case “clearly confirms” that the leg tremor persists even when sitting and lying down, which “definitively rules out” a posture-specific phenomenon. ←error Step 3:Given that the tremor is “...

-

[62]

Figure 20: Example of R-4 (Confidence Invariance) error from MedPRMBench (Soundness category)

Reason Introduces an overconfident factual contradiction by asserting the leg tremor persists when sitting/lying down (contrary to the stem, which states it resolved), and uses this false premise to rule out orthostatic syndromes and steer toward Parkinson disease. Figure 20: Example of R-4 (Confidence Invariance) error from MedPRMBench (Soundness categor...

2022

-

[63]

Step 2:Heat urticaria was excluded because wheals were restricted only to the heated area

Original Process Step 1:Exercise-induced anaphylaxis was considered but excluded because passive warming singularly induces cholinergic urticaria. Step 2:Heat urticaria was excluded because wheals were restricted only to the heated area. Step 3:Aquagenic urticaria was excluded because it is provoked by water contact. Step 4:Adrenergic urticaria was exclud...

-

[64]

← error Step 2:Heat urticaria was excluded because wheals were restricted only to the heated area

Modified Process Step 1:Given the transient pruritic papular eruption triggered by heat and sweating and resolving quickly at rest, this is most consistent with a benign inducible urticaria pattern, so serious allergic emergencies (e.g., exercise-induced anaphylaxis) do not need to be prioritized. ← error Step 2:Heat urticaria was excluded because wheals ...

-

[65]

Figure 21: Example of R-5 (Safety Awareness) error from MedPRMBench (Soundness category)

Reason Bypasses a critical safety check by deprioritizing evaluation for poten- tially life-threatening exercise-induced anaphylaxis based solely on the rash’s transient, self-resolving nature despite exertion/heat-triggered symptoms. Figure 21: Example of R-5 (Safety Awareness) error from MedPRMBench (Soundness category). MedPRMBench: A Fine-grained Benc...

-

[66]

Initial logic and parameters are validated

Original Process Step 1:Final result: foreign body neuralgia. Initial logic and parameters are validated. Step 2:Standard processing applied. Step 3:Standard processing applied. Step 4:Foreign body neuralgia would be confirmed if imaging reveals entrapped radiopaque material at the mental foramen

-

[67]

Step 2:Traumatic neuroma was considered due to the prior mental nerve avulsion but was ruled out by imaging

Modified Process Step 1:Trigeminal neuralgia was excluded because the pain was continuous rather than paroxysmal. Step 2:Traumatic neuroma was considered due to the prior mental nerve avulsion but was ruled out by imaging. Step 3:Infected and retained root stumps became the leading diagno- sis because the panoramic radiograph reportedly showed a 4–5 mm re...

-

[68]

Figure 22: Example of R-6 (Information Grounding Compli- ance) error from MedPRMBench (Soundness category)

Reason Fabricates radiographic findings (panoramic radiograph showing a retained root fragment, periapical radiolucency, and mandibular canal widening) not provided in the question stem and uses them to re-rank the differential. Figure 22: Example of R-6 (Information Grounding Compli- ance) error from MedPRMBench (Soundness category). R-7: Trajectory Reas...

-

[69]

Step 2:Simple hepatic cyst was a differential, but internal septa and contrast enhancement favored a neoplastic lesion

Original Process Step 1:Hydatid cyst was considered because of the endemic context but serologic tests were negative. Step 2:Simple hepatic cyst was a differential, but internal septa and contrast enhancement favored a neoplastic lesion. Step 3:Hepatorenal polycystic disease was excluded by the absence of renal cysts. Step 4:Cystadenocarcinoma was conside...

-

[70]

Step 2:Simple hepatic cyst was a differential, but internal septa and contrast enhancement favored a neoplastic lesion

Modified Process Step 1:Hydatid cyst was considered because of the endemic context but serologic tests were negative. Step 2:Simple hepatic cyst was a differential, but internal septa and contrast enhancement favored a neoplastic lesion. Step 3:Additional cyst-fluid analysis and repeat serum tumor marker panels could be obtained, even though imaging alrea...

-

[71]

Figure 23: Example of R-7 (Trajectory Reasoning) error from MedPRMBench (Soundness category)

Reason Trajectory reasoning error: incorrectly uses the short (2-month) interval after surgery to exclude cystadenocarcinoma based on an assumed minimum transformation timeline, conflating symptom timing with disease stage. Figure 23: Example of R-7 (Trajectory Reasoning) error from MedPRMBench (Soundness category). , , Lingyan Wu, Xiang Zheng, Weiqi Zhai...

-

[72]

Step 2:Autoimmune myelofibrosis related to SLE was suspected based on family history, photosensitivity, arthralgias, and atypical marrow features for PMF

Original Process Step 1:Hemolytic anemia was excluded given a negative direct Coombs test and normal reticulocyte count and haptoglobin levels. Step 2:Autoimmune myelofibrosis related to SLE was suspected based on family history, photosensitivity, arthralgias, and atypical marrow features for PMF

-

[73]

Modified Process Step 1:Given the recurrent severe anemia with mildly elevated LDH, proceed under the assumption that hemolysis is not the primary driver and focus on an autoimmune marrow process.←error Step 2:Autoimmune myelofibrosis related to SLE was suspected because the overall picture is most consistent with an SLE-associated autoimmune myelofibrosis

-

[74]

Figure 24: Example of E-1 (Prerequisite Sensitivity) error from MedPRMBench (Sensitivity category)

Reason Skips the prerequisite hemolysis exclusion workup/interpretation (DAT/Coombs, reticulocyte response, haptoglobin) and assumes hemol- ysis is not the driver before pursuing a non-hemolysis pathway. Figure 24: Example of E-1 (Prerequisite Sensitivity) error from MedPRMBench (Sensitivity category). E-2: Deception Resistancemedprmbench_00298 Question:A...

-

[75]

Step 2:Nocardiosis was considered due to sulfur granules in sinus specimens

Original Process Step 1:Fungal sinusitis was initially suspected. Step 2:Nocardiosis was considered due to sulfur granules in sinus specimens. Step 3:Botryomycosis entered the differential for similar histologic granules. Step 4:A neoplasm was included given the space-occupying lesion. Step 5:The diagnosis was confirmed by histopathologic identification o...

-

[76]

2023 European Rhinology & Skull Base Society (ER-SBS) consensus statement

Modified Process Step 1:Fungal sinusitis was initially suspected. Step 2:The presence of a focally hyperdense intranasal lesion in a diabetic patient strongly favors sinonasal mucormycosis. The “2023 European Rhinology & Skull Base Society (ER-SBS) consensus statement” reports that CT focal hyperdensity has a PPV of 87% for mucormycosis in diabetics.←erro...

2023

-

[77]

Figure 25: Example of E-2 (Deception Resistance) error from MedPRMBench (Sensitivity category)

Reason Introduces a fabricated ER-SBS 2023 consensus statement and false CT hyperdensity performance statistics to make mucormycosis appear highly likely, redirecting the differential away from the true diagnosis of actinomycosis. Figure 25: Example of E-2 (Deception Resistance) error from MedPRMBench (Sensitivity category). MedPRMBench: A Fine-grained Be...

2023

-

[78]

Original Process Step 1:Thermoluminescent dosimetry uses TLD materials to measure radiation exposure. Step 2:Thermoluminescent dosimetry has several advantages—higher sensitivity, better dose linearity over a wide range, reusability, and greater stability against environmental conditions. Step 3:Therefore, thermoluminescent dosimetry is considered the bes...

-

[79]

Step 2:Thermoluminescent dosimetry has several advantages—higher sensitivity, better dose linearity, reusability, and greater stability

Modified Process Step 1:Thermoluminescent dosimetry uses TLD materials to measure radiation exposure. Step 2:Thermoluminescent dosimetry has several advantages—higher sensitivity, better dose linearity, reusability, and greater stability. Step 3:However, because TLD readout is inherently unstable and primarily suited for one-time use in narrow dose ranges...

-

[80]

Figure 26: Example of E-3 (Multi-Solution Consistency) error from MedPRMBench (Sensitivity category)

Reason Falsely claims TLD is the only acceptable dosimetry method and dis- misses other guideline-supported alternatives (film badges, ionization chambers, OSL) as unreliable/obsolete, violating multi-solution consis- tency. Figure 26: Example of E-3 (Multi-Solution Consistency) error from MedPRMBench (Sensitivity category). E-4: Quantitative Correctnessm...

-

[81]

ground-glass

Original Process Step 1:Implant-related complication was suspected. Step 2:Osteoid osteoma was the primary radiographic differential. Step 3:Osteoblastoma was considered due to similarities with osteoid osteoma. Step 4:Ossifying fibroma and peripheral osteoma were usually asymp- tomatic and lacked a nidus. Step 5:Osteosarcoma was excluded based on clinica...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.