ArgBench: Benchmarking LLMs on Computational Argumentation Tasks

Pith reviewed 2026-05-10 06:12 UTC · model grok-4.3

The pith

ArgBench unifies 33 prior datasets into 46 tasks to benchmark LLMs on computational argumentation.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

We create the first benchmark for a standardized evaluation of LLM-based approaches to computational argumentation, encompassing 33 datasets from previous work in unified form. Using the benchmark, we evaluate the generalizability of five LLM families across 46 computational argumentation tasks that cover mining arguments, assessing perspectives, assessing argument quality, reasoning about arguments, and generating arguments. On the benchmark, we conduct an extensive systematic analysis of the contribution of few-shot examples, reasoning steps, model size, and training skills to the performance of LLMs on the computational argumentation tasks in the benchmark.

What carries the argument

ArgBench, the benchmark that standardizes 33 prior datasets into 46 tasks across five categories of computational argumentation.

If this is right

- Researchers can now compare any new LLM or prompting method against the same fixed set of 46 tasks instead of building private test collections.

- Larger models and more few-shot examples improve results on most argument-mining and quality-assessment tasks.

- Adding explicit reasoning steps during prompting raises accuracy on perspective-assessment and argument-reasoning tasks.

- Models that received prior training on related argumentation skills transfer better to the benchmark tasks than untrained models of similar size.

- The unified task format makes it straightforward to measure how well an LLM handles the full pipeline from mining to generation.

Where Pith is reading between the lines

- The benchmark could be extended with multi-turn debate scenarios to test whether current prompting techniques scale to sustained argumentation.

- Insights on which tasks benefit most from model scale could guide the creation of targeted fine-tuning corpora for weaker categories such as argument generation.

- A public leaderboard built on these 46 tasks would let the community track incremental gains without each group re-implementing dataset loaders.

- Similar unification efforts in other language-understanding domains might adopt the same dataset-to-task conversion approach to reduce fragmentation.

Load-bearing premise

The 33 selected datasets and their reformulation into 46 tasks give comprehensive, unbiased coverage of computational argumentation without major gaps or distortions from unification.

What would settle it

A newly collected argumentation dataset or task outside the current 46 that produces LLM performance patterns markedly different from those measured on ArgBench would show the benchmark misses important aspects of the domain.

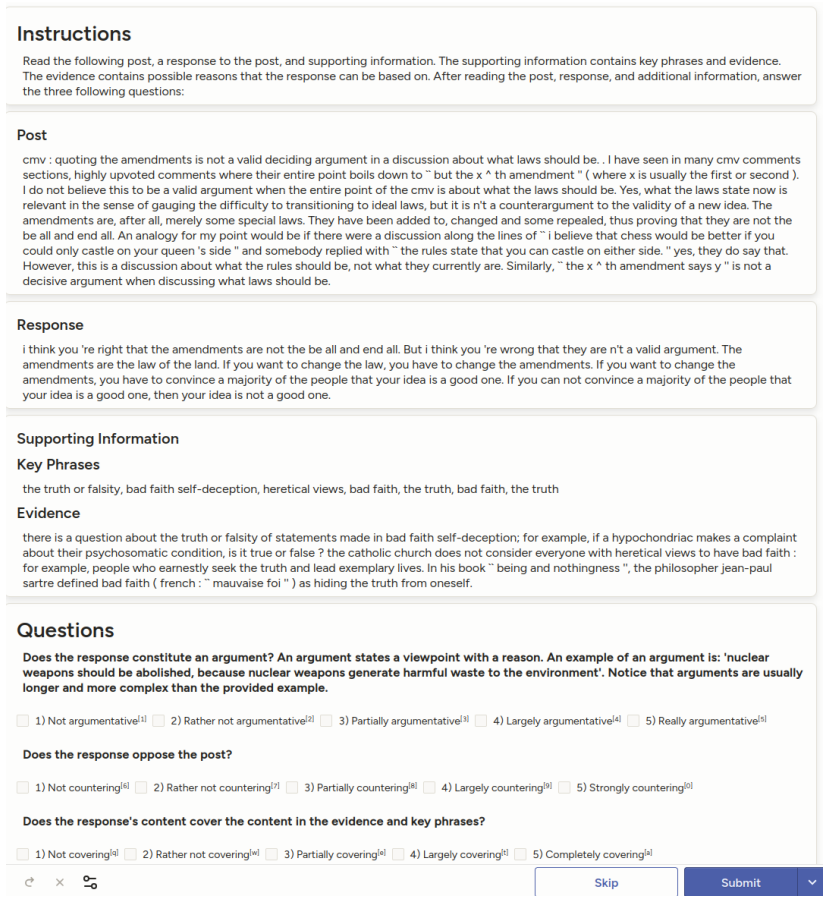

Figures

Original abstract

Argumentation skills are an essential toolkit for large language models (LLMs). These skills are crucial in various use cases, including self-reflection, debating collaboratively for diverse answers, and countering hate speech. In this paper, we create the first benchmark for a standardized evaluation of LLM-based approaches to computational argumentation, encompassing 33 datasets from previous work in unified form. Using the benchmark, we evaluate the generalizability of five LLM families across 46 computational argumentation tasks that cover mining arguments, assessing perspectives, assessing argument quality, reasoning about arguments, and generating arguments. On the benchmark, we conduct an extensive systematic analysis of the contribution of few-shot examples, reasoning steps, model size, and training skills to the performance of LLMs on the computational argumentation tasks in the benchmark.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces ArgBench as the first standardized benchmark for LLM-based computational argumentation. It unifies 33 prior datasets into 46 tasks spanning argument mining, perspective assessment, quality assessment, reasoning, and generation. The work evaluates five LLM families on these tasks and performs a systematic analysis of how few-shot examples, reasoning steps, model size, and training skills affect performance.

Significance. If the unification process preserves original semantics, labels, and evaluation criteria without distortion, ArgBench would provide a valuable standardized resource for evaluating LLMs on argumentation skills relevant to applications such as debating and content moderation. The systematic analysis of prompting and scaling factors offers practical insights into LLM capabilities in this domain.

major comments (2)

- [Dataset unification and task reformulation (likely §3-4)] The unification of 33 datasets into 46 tasks is load-bearing for the central claim of comprehensive, standardized coverage. The manuscript must detail the unification process (prompt templates, context truncation, label remapping) and provide validation (e.g., human equivalence checks or comparison of original vs. unified performance) to rule out semantic distortions, as noted in the skeptic analysis of potential loss of discourse structure in mining tasks.

- [Introduction and dataset selection] The claim of 'first benchmark' and comprehensive coverage requires explicit justification of dataset selection criteria and coverage of argumentation subfields. Without this, it is unclear whether gaps exist in the 46 tasks relative to the full space of computational argumentation.

minor comments (2)

- [Benchmark construction] Clarify the exact mapping from 33 datasets to 46 tasks, including any splits or augmentations, to improve reproducibility.

- [Related work and tables] Ensure all original dataset citations are retained and linked to the unified task definitions.
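The mapping question raised in these comments (how 33 datasets become 46 tasks, with prompt templates and label remapping) can be illustrated with a minimal sketch. It assumes a hypothetical `TaskSpec` schema, a toy dataset name (`toy_essays`), and invented labels and templates; none of these come from the paper, and this is not the authors' implementation.

```python
from dataclasses import dataclass, field


@dataclass
class TaskSpec:
    """One unified task derived from a source dataset (hypothetical schema)."""
    task_id: str
    dataset: str
    category: str
    prompt_template: str
    label_map: dict = field(default_factory=dict)

    def render(self, record: dict) -> dict:
        """Turn a raw dataset record into a unified prompt/target pair."""
        raw_label = record["label"]
        if raw_label not in self.label_map:
            # Fail loudly so that unmapped labels are never silently dropped.
            raise KeyError(f"unmapped label {raw_label!r} in {self.task_id}")
        return {
            "task_id": self.task_id,
            "prompt": self.prompt_template.format(text=record["text"]),
            "target": self.label_map[raw_label],
        }


# Toy registry: a single source dataset yields two tasks, which is one way a
# benchmark can end up with more tasks than datasets.
TASKS = [
    TaskSpec(
        task_id="toy_essays/component_type",
        dataset="toy_essays",
        category="mining",
        prompt_template="Classify the argument component.\nText: {text}\nAnswer:",
        label_map={"MajorClaim": "claim", "Claim": "claim", "Premise": "premise"},
    ),
    TaskSpec(
        task_id="toy_essays/is_argumentative",
        dataset="toy_essays",
        category="mining",
        prompt_template="Is the text argumentative? Answer yes or no.\nText: {text}\nAnswer:",
        label_map={"MajorClaim": "yes", "Claim": "yes", "Premise": "yes", "None": "no"},
    ),
]


def tasks_per_dataset(tasks):
    """Count how many unified tasks each source dataset contributes."""
    counts = {}
    for task in tasks:
        counts[task.dataset] = counts.get(task.dataset, 0) + 1
    return counts
```

Publishing a table of this kind (dataset, derived tasks, label map) alongside the benchmark would let readers audit each remapping, such as the merge of `MajorClaim` and `Claim` in the toy example, against the original annotation scheme.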

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed feedback. We address each major comment point by point below, providing clarifications and committing to revisions that strengthen the manuscript without altering its core contributions.

Point-by-point responses

- Referee: The unification of 33 datasets into 46 tasks is load-bearing for the central claim of comprehensive, standardized coverage. The manuscript must detail the unification process (prompt templates, context truncation, label remapping) and provide validation (e.g., human equivalence checks or comparison of original vs. unified performance) to rule out semantic distortions, as noted in the skeptic analysis of potential loss of discourse structure in mining tasks.

  Authors: We agree that greater transparency on the unification process is required. In the revised manuscript, we have substantially expanded Sections 3 and 4 with explicit documentation of the prompt templates for all 46 tasks, the context truncation heuristics (retaining full argument spans where possible while respecting token limits), and the label remapping rules used to harmonize outputs. We have also added a new validation subsection that reports performance comparisons between original and unified task formulations on a stratified sample of five datasets, demonstrating that differences are within expected variance. While we did not conduct exhaustive human equivalence checks across all tasks due to scale, we include a qualitative analysis showing that core semantics and evaluation criteria are preserved. On discourse structure in mining tasks, we now explicitly note the trade-offs and justify our choices by reference to the original dataset papers.

  Revision: yes

- Referee: The claim of 'first benchmark' and comprehensive coverage requires explicit justification of dataset selection criteria and coverage of argumentation subfields. Without this, it is unclear whether gaps exist in the 46 tasks relative to the full space of computational argumentation.

  Authors: We accept that the original manuscript was insufficiently explicit on selection criteria. We have revised the Introduction and added a dedicated subsection in Section 2 that states the four inclusion criteria: public availability with reusable licenses, established evaluation metrics, coverage of at least one of the five core argumentation categories, and recency (post-2015). We map the 46 tasks against major subfields identified in recent surveys (e.g., argument mining, quality assessment, reasoning) and acknowledge gaps such as limited multi-turn dialogue and multimodal argumentation. The 'first benchmark' claim is now qualified as the first unified, standardized, and multi-family evaluation suite rather than an exhaustive enumeration of every possible sub-task.

  Revision: yes
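The four inclusion criteria named in the second response can be expressed as an explicit filter, which would make the selection auditable. The sketch below is illustrative only: the `DatasetInfo` fields, the category names, and the toy entries are hypothetical, and reading "post-2015" as "published in 2016 or later" is an assumption rather than something the rebuttal states.

```python
from dataclasses import dataclass

# The five categories named in the abstract, as short hypothetical identifiers.
CORE_CATEGORIES = frozenset(
    {"mining", "perspective", "quality", "reasoning", "generation"}
)


@dataclass
class DatasetInfo:
    """Metadata needed to apply the inclusion criteria (hypothetical schema)."""
    name: str
    year: int
    reusable_license: bool
    has_established_metric: bool
    categories: frozenset


def failed_criteria(ds, min_year=2016):
    """Return the criteria a dataset fails; an empty list means it is included.

    min_year=2016 encodes the assumed reading of "post-2015".
    """
    failures = []
    if not ds.reusable_license:
        failures.append("license")
    if not ds.has_established_metric:
        failures.append("metric")
    if not (ds.categories & CORE_CATEGORIES):
        failures.append("category")
    if ds.year < min_year:
        failures.append("recency")
    return failures


# Toy candidates, invented for illustration.
candidates = [
    DatasetInfo("toy_quality", 2020, True, True, frozenset({"quality"})),
    DatasetInfo("toy_old", 2014, True, True, frozenset({"mining"})),
    DatasetInfo("toy_closed", 2022, False, True, frozenset({"dialogue"})),
]
report = {ds.name: failed_criteria(ds) for ds in candidates}
```

Reporting the per-dataset failure list, rather than a plain include/exclude flag, would also document why each excluded dataset was left out, which speaks directly to the referee's question about coverage gaps.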

Circularity Check

No circularity: empirical benchmark construction with no derivations or self-referential predictions

The paper presents ArgBench as an empirical unification of 33 prior datasets into 46 tasks spanning mining, perspective assessment, quality, reasoning, and generation, followed by LLM evaluations. No mathematical derivations, equations, fitted parameters renamed as predictions, or first-principles results are claimed. The process is described as dataset selection and prompt reformulation without any step that reduces by construction to its own inputs or relies on load-bearing self-citations for uniqueness. Central claims about coverage and standardization rest on methodological choices that are externally verifiable against the original datasets, not on internal loops. This is a standard benchmark paper with no detectable circular elements in its construction or analysis.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: The 33 datasets from previous work cover the main aspects of computational argumentation without significant selection bias.
