Recognition: unknown

Beyond Indistinguishability: Measuring Extraction Risk in LLM APIs

Pith reviewed 2026-05-10 04:10 UTC · model grok-4.3

The pith

Indistinguishability properties do not upper-bound extraction risk in LLM APIs.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Indistinguishability and inextractability are incomparable: upper-bounding distinguishability does not upper-bound extractability. The authors therefore introduce (l, b)-inextractability as a definition that requires at least 2^b expected queries for any black-box adversary to induce the LLM API to emit a protected l-gram substring, and they supply a rank-based estimator that gives a tight upper bound on the probabilistic extraction risk for any decoding configuration.

What carries the argument

The (l, b)-inextractability definition together with its worst-case extraction game and rank-based risk estimator, which counts the number of queries needed to surface protected n-grams under prefix adaptation.

If this is right

- Differential privacy or membership-inference bounds on a model do not guarantee that training data cannot be extracted via API queries.

- Extraction risk must be measured directly with query-adaptive estimators rather than inferred from indistinguishability metrics.

- Decoding parameters such as temperature or top-k sampling can be tuned to raise the query cost of extraction without retraining.

- API access policies can be set using the (l, b) bound to limit the number of queries an untrusted caller is allowed.

- Training-time regularization can be evaluated by how much it increases the estimated query cost for exact substring recovery.

Where Pith is reading between the lines

- The separation implies that future privacy audits for generative APIs should report both distinguishability and extractability numbers side by side.

- The rank-based estimator could be adapted to measure leakage of structured data such as code snippets or tabular records.

- If the bound holds across larger models, it offers a practical way to set query-rate limits that scale with model size.

- The framework naturally extends to measuring leakage of approximate rather than exact matches, which may matter for copyright or privacy compliance.

Load-bearing premise

The black-box adversary model and worst-case extraction game formalization accurately capture real-world extraction threats against LLM APIs.

What would settle it

An empirical counter-example in which an LLM API with low distinguishability scores (for instance, near-zero membership inference success) nevertheless permits extraction of protected substrings with far fewer queries than the (l, b) bound predicts.

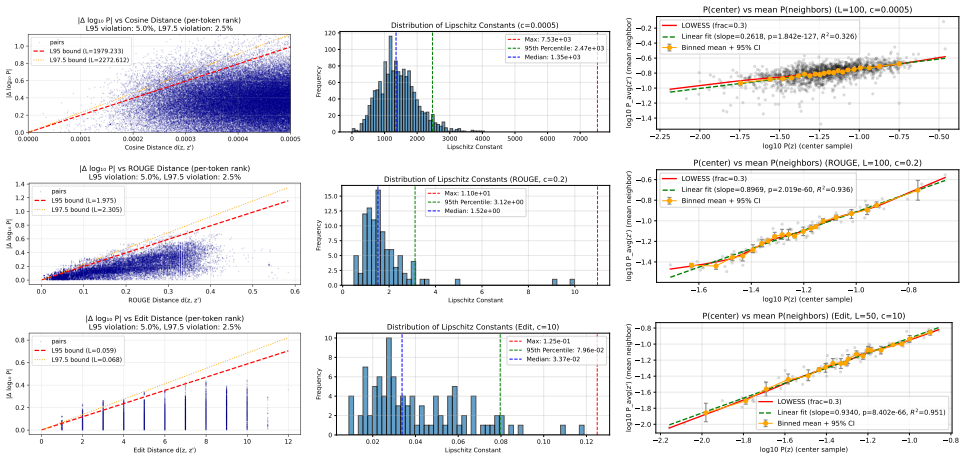

Figures

read the original abstract

Indistinguishability properties such as differential privacy bounds or low empirically measured membership inference are widely treated as proxies to show a model is sufficiently protected against broader memorization risks. However, we show that indistinguishability properties are neither sufficient nor necessary for preventing data extraction in LLM APIs. We formalize a privacy-game separation between extraction and indistinguishability-based privacy, showing that indistinguishability and inextractability are incomparable: upper-bounding distinguishability does not upper-bound extractability. To address this gap, we introduce $(l, b)$-inextractability as a definition that requires at least $2^b$ expected queries for any black-box adversary to induce the LLM API to emit a protected $l$-gram substring. We instantiate this via a worst-case extraction game and derive a rank-based extraction risk upper bound for targeted exact extraction, as well as extensions to cover untargeted and approximate extraction. The resulting estimator captures the extraction risk over multiple attack trials and prefix adaptations. We show that it can provide a tight and efficient estimation for standard greedy extraction and an upper bound on the probabilistic extraction risk given any decoding configuration. We empirically evaluate extractability across different models, clarifying its connection to distinguishability, demonstrating its advantage over existing extraction risk estimators, and providing actionable mitigation guidelines across model training, API access, and decoding configurations in LLM API deployment. Our code is publicly available at: https://github.com/Emory-AIMS/Inextractability.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims that indistinguishability properties (e.g., DP bounds or low MIA success) are neither sufficient nor necessary to prevent data extraction in LLM APIs. It formalizes a game-based separation showing that indistinguishability and inextractability are incomparable, introduces the (l, b)-inextractability definition requiring at least 2^b expected queries for any black-box adversary to elicit a protected l-gram substring, derives a rank-based upper bound on targeted extraction risk (with extensions to untargeted/approximate cases), and empirically evaluates the estimator across models while providing mitigation guidelines. Public code is released.

Significance. If the separation holds and the estimator is tight as claimed, the work is significant for challenging reliance on indistinguishability proxies in LLM privacy and offering a direct, query-complexity-based risk measure with practical mitigation advice. The public code release and empirical comparisons to existing estimators are clear strengths supporting reproducibility.

major comments (2)

- [Definition of (l, b)-inextractability and extraction game] Formal definition of (l, b)-inextractability (via the worst-case extraction game): the black-box adversary is allowed fully adaptive prefix and query choices to meet the 2^b expected-query threshold. This formalization underpins the incomparability claim, yet it may not capture realistic API threats where attackers often operate with partial training-data knowledge, fixed decoding paths, or non-worst-case strategies; without addressing this gap the practical force of the separation and estimator is weakened.

- [Rank-based bound and estimator] Derivation of the rank-based extraction-risk upper bound: the bound is presented as tight for greedy decoding and an upper bound for arbitrary decoding configurations, and the estimator is said to aggregate over multiple trials and prefix adaptations. The central separation and estimator utility rest on this derivation; the manuscript must make the full game definition, proof steps, and any hidden assumptions explicit so that the bound's independence from fitted parameters can be verified.

minor comments (2)

- [Empirical evaluation] Empirical section: while connections to distinguishability are shown and advantages over prior estimators are demonstrated, the specific model sizes, training corpora, number of attack trials, and exact decoding parameters should be tabulated for reproducibility.

- [Introduction / Definition] Notation: the (l, b) parameters are central but introduced without a short concrete example (e.g., l=5, b=10) early in the text; adding one would aid readability.

Simulated Author's Rebuttal

We thank the referee for their constructive feedback, which helps us strengthen the presentation and practical relevance of our work. We address each major comment below.

read point-by-point responses

-

Referee: [Definition of (l, b)-inextractability and extraction game] Formal definition of (l, b)-inextractability (via the worst-case extraction game): the black-box adversary is allowed fully adaptive prefix and query choices to meet the 2^b expected-query threshold. This formalization underpins the incomparability claim, yet it may not capture realistic API threats where attackers often operate with partial training-data knowledge, fixed decoding paths, or non-worst-case strategies; without addressing this gap the practical force of the separation and estimator is weakened.

Authors: We agree that the worst-case adaptive adversary defines a strong formal notion. This is intentional and standard in cryptographic privacy definitions (e.g., differential privacy), as it is required to rigorously prove the incomparability result: if even the strongest black-box adversary cannot extract, then weaker realistic attackers also cannot. The separation would not hold under weaker adversaries. That said, we acknowledge the practical gap. In revision we will add a dedicated discussion subsection on realistic threat models, including partial training-data knowledge and non-adaptive/fixed-decoding attackers, and we will report additional experiments that apply the estimator under fixed decoding paths and limited prefix adaptation to illustrate its behavior in constrained settings. revision: partial

-

Referee: [Rank-based bound and estimator] Derivation of the rank-based extraction-risk upper bound: the bound is presented as tight for greedy decoding and an upper bound for arbitrary decoding configurations, and the estimator is said to aggregate over multiple trials and prefix adaptations. The central separation and estimator utility rest on this derivation; the manuscript must make the full game definition, proof steps, and any hidden assumptions explicit so that the bound's independence from fitted parameters can be verified.

Authors: We thank the referee for this observation. The current manuscript presents the bound and estimator at a high level; we will expand the relevant section to include (i) the complete formal definition of the extraction game, (ii) the full step-by-step proof of the rank-based upper bound, and (iii) an explicit enumeration of all assumptions, including the fact that the bound is independent of any fitted parameters and holds for arbitrary decoding configurations as an upper bound (with tightness shown for greedy decoding). These additions will make the derivation directly verifiable. revision: yes

Circularity Check

No significant circularity; derivation is self-contained from new game definition

full rationale

The paper defines (l, b)-inextractability directly from a worst-case black-box extraction game, then derives the rank-based upper bound and estimator as consequences of that game. No step reduces a claimed prediction or bound to a fitted parameter or prior self-citation by construction; the separation result between indistinguishability and inextractability follows from the formal game without circular reduction. The central claims rest on the introduced definition rather than renaming or smuggling prior results.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Black-box access model for the adversary in the extraction game

invented entities (1)

-

(l, b)-inextractability

no independent evidence

Reference graph

Works this paper leans on

-

[1]

OpenAI o1,

OpenAI, “OpenAI o1,” https://openai.com/o1/, OpenAI o1 model information

-

[2]

Create chat completion - DeepSeek API documentation,

DeepSeek, “Create chat completion - DeepSeek API documentation,” https://api-docs.deepseek.com/api/create-chat-completion, DeepSeek API documentation for chat completions

-

[3]

A survey on large language model based autonomous agents,

L. Wang, C. Ma, X. Feng, Z. Zhang, H. Yang, J. Zhang, Z. Chen, J. Tang, X. Chen, Y . Linet al., “A survey on large language model based autonomous agents,”Frontiers of Computer Science, vol. 18, no. 6, 2024

2024

-

[4]

Capabil- ities of GPT-4 on Medical Challenge Problems

H. Nori, N. King, S. M. McKinney, D. Carignan, and E. Horvitz, “Capabilities of GPT-4 on medical challenge problems,” arXiv:2303.13375, 2023

-

[5]

Scalable extraction of training data from aligned, production language models,

M. Nasr, J. Rando, N. Carlini, J. Hayase, M. Jagielski, A. F. Cooper, D. Ippolito, C. A. Choquette-Choo, F. Tramèr, and K. Lee, “Scalable extraction of training data from aligned, production language models,” in13 th International Conference on Learning Representations, 2025

2025

-

[6]

Extracting training data from large language models,

N. Carlini, F. Tramer, E. Wallace, M. Jagielski, A. Herbert-V oss, K. Lee, A. Roberts, T. Brown, D. Song, U. Erlingsson, A. Oprea, and C. Raffel, “Extracting training data from large language models,” in 30th USENIX Security Symposium, 2021

2021

-

[7]

Quantifying memorization across neural language mod- els,

N. Carlini, D. Ippolito, M. Jagielski, K. Lee, F. Tramer, and C. Zhang, “Quantifying memorization across neural language mod- els,” inEleventh International Conference on Learning Representa- tions, 2022

2022

-

[8]

Measuring memorization in language models via probabilistic extraction,

J. Hayes, M. Swanberg, H. Chaudhari, I. Yona, I. Shumailov, M. Nasr, C. A. Choquette-Choo, K. Lee, and A. F. Cooper, “Measuring memorization in language models via probabilistic extraction,” in Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics, 2025

2025

-

[9]

Preventing generation of verba- tim memorization in language models gives a false sense of privacy,

D. Ippolito, F. Tramer, M. Nasr, C. Zhang, M. Jagielski, K. Lee, C. Choquette Choo, and N. Carlini, “Preventing generation of verba- tim memorization in language models gives a false sense of privacy,” in16 th International Natural Language Generation Conference, 2023

2023

-

[10]

Deduplicating training data makes language models better,

K. Lee, D. Ippolito, A. Nystrom, C. Zhang, D. Eck, C. Callison- Burch, and N. Carlini, “Deduplicating training data makes language models better,” in60 th Annual Meeting of the Association for Com- putational Linguistics, May 2022

2022

-

[11]

ParaPO: Aligning lan- guage models to reduce verbatim reproduction of pre-training data,

T. Chen, F. Brahman, J. Liu, N. Mireshghallah, W. Shi, P. W. Koh, L. Zettlemoyer, and H. Hajishirzi, “ParaPO: Aligning lan- guage models to reduce verbatim reproduction of pre-training data,” arXiv:2504.14452, 2025

-

[12]

Deep learning with differential privacy,

M. Abadi, A. Chu, I. Goodfellow, H. B. McMahan, I. Mironov, K. Talwar, and L. Zhang, “Deep learning with differential privacy,” inACM SIGSAC Conference on Computer and Communications Security, 2016

2016

-

[13]

Membership inference attacks against machine learning models,

R. Shokri, M. Stronati, C. Song, and V . Shmatikov, “Membership inference attacks against machine learning models,” inIEEE Sympo- sium on Security and Privacy, 2017

2017

-

[14]

Strong membership inference attacks on massive datasets and (moderately) large language models,

J. Hayes, I. Shumailov, C. A. Choquette-Choo, M. Jagielski, G. Kaissis, K. Lee, M. Nasr, S. Ghalebikesabi, N. Mireshghallah, M. S. M. S. Annamalai, I. Shilov, M. Meeus, Y .-A. de Montjoye, F. Boenisch, A. Dziedzic, and A. F. Cooper, “Strong membership inference attacks on massive datasets and (moderately) large language models,”arXiv:2505.18773, 2025

-

[15]

Tight auditing of differentially private machine learning,

M. Nasr, J. Hayes, T. Steinke, B. Balle, F. Tramèr, M. Jagielski, N. Carlini, and A. Terzis, “Tight auditing of differentially private machine learning,” in32 nd USENIX Security Symposium, 2023

2023

-

[16]

Auditingf-differential privacy in one run,

S. Mahloujifar, L. Melis, and K. Chaudhuri, “Auditingf-differential privacy in one run,”arXiv:2410.22235, 2024

-

[17]

The secret sharer: Evaluating and testing unintended memorization in neural networks,

N. Carlini, C. Liu, Ú. Erlingsson, J. Kos, and D. Song, “The secret sharer: Evaluating and testing unintended memorization in neural networks,” in28 th USENIX Security Symposium, 2019

2019

-

[18]

Reconstructing training data with informed adversaries,

B. Balle, G. Cherubin, and J. Hayes, “Reconstructing training data with informed adversaries,” inIEEE Symposium on Security and Privacy, 2022

2022

-

[19]

Beyond the worst case: Extending differential privacy guarantees to realistic adversaries,

M. Swanberg, M. S. M. S. Annamalai, J. Hayes, B. Balle, and A. Smith, “Beyond the worst case: Extending differential privacy guarantees to realistic adversaries,”arXiv:2507.08158, 2025

-

[20]

SoK: Let the privacy games begin! a unified treatment of data inference privacy in machine learning,

A. Salem, G. Cherubin, D. Evans, B. Köpf, A. Paverd, A. Suri, S. Tople, and S. Zanella-Béguelin, “SoK: Let the privacy games begin! a unified treatment of data inference privacy in machine learning,” inIEEE Symposium on Security and Privacy, 2023

2023

-

[21]

Are attribute inference attacks just imputation?

B. Jayaraman and D. Evans, “Are attribute inference attacks just imputation?” inACM SIGSAC Conference on Computer and Com- munications Security, 2022

2022

-

[22]

Language models may verbatim complete text they were not explicitly trained on,

K. Z. Liu, C. A. Choquette-Choo, M. Jagielski, P. Kairouz, S. Koyejo, P. Liang, and N. Papernot, “Language models may verbatim complete text they were not explicitly trained on,”arXiv:2503.17514, 2025

-

[23]

PreCurious: How innocent pre-trained language models turn into privacy traps,

R. Liu, T. Wang, Y . Cao, and L. Xiong, “PreCurious: How innocent pre-trained language models turn into privacy traps,” inACM SIGSAC Conference on Computer and Communications Security, 2024

2024

-

[24]

Do membership inference attacks work on large language models?

M. Duan, A. Suri, N. Mireshghallah, S. Min, W. Shi, L. Zettlemoyer, Y . Tsvetkov, Y . Choi, D. Evans, and H. Hajishirzi, “Do membership inference attacks work on large language models?” inFirst Confer- ence on Language Modeling, 2024

2024

-

[25]

Emergent and predictable memorization in large language models,

S. Biderman, U. Prashanth, L. Sutawika, H. Schoelkopf, Q. Anthony, S. Purohit, and E. Raff, “Emergent and predictable memorization in large language models,”37 th International Conference on Neural Information Processing Systems, 2023

2023

-

[26]

Truncation sampling as language model desmoothing,

J. Hewitt, C. Manning, and P. Liang, “Truncation sampling as language model desmoothing,” inFindings of the Association for Computational Linguistics (EMNLP), Dec. 2022

2022

-

[27]

OpenAI GPT-5 API Document,

OpenAI, “OpenAI GPT-5 API Document,” https://platform.openai. com/docs/api-reference/backward-compatibility, OpenAI does not support probabilities access and decoding control in GPT-5

-

[28]

OpenAI SDK - Anthropic API Documentation,

Anthropic, “OpenAI SDK - Anthropic API Documentation,” https: //docs.anthropic.com/en/api/openai-sdk, Anthropic’s APIs do not pro- vide logprobs

-

[29]

Azure OpenAI Document,

Azure, “Azure OpenAI Document,” https://learn.microsoft.com/ en-us/azure/ai-foundry/openai/reference?utm_source=chatgpt.com, Azure OpenAI provides top-5 logprobs

-

[30]

Chat completions API reference,

OpenAI, “Chat completions API reference,” https://platform.openai. com/docs/api-reference/chat/create, openAI provides top-20 logprobs

-

[31]

Vertex AI Generative AI Model Reference - inference,

Google Cloud, “Vertex AI Generative AI Model Reference - inference,” https://cloud.google.com/vertex-ai/generative-ai/docs/ model-reference/inference#generationconfig, Gemini provides top-20 logprobs

-

[32]

Detecting pretraining data from large language mod- els,

W. Shi, A. Ajith, M. Xia, Y . Huang, D. Liu, T. Blevins, D. Chen, and L. Zettlemoyer, “Detecting pretraining data from large language mod- els,” in12 th International Conference on Learning Representations, 2024

2024

-

[33]

Data reconstruction: When you see it and when you don’t,

E. Cohen, H. Kaplan, Y . Mansour, S. Moran, K. Nissim, U. Stemmer, and E. Tsfadia, “Data reconstruction: When you see it and when you don’t,”arXiv:2405.15753, 2024

-

[34]

Investigating membership inference attacks under data dependencies,

T. Humphries, S. Oya, L. Tulloch, M. Rafuse, I. Goldberg, U. Hen- gartner, and F. Kerschbaum, “Investigating membership inference attacks under data dependencies,” in36 th IEEE Computer Security Foundations Symposium, 2023

2023

-

[35]

Privacy risk in machine learning: Analyzing the connection to overfitting,

S. Yeom, I. Giacomelli, M. Fredrikson, and S. Jha, “Privacy risk in machine learning: Analyzing the connection to overfitting,” in31 st Computer Security Foundations Symposium, 2018

2018

-

[36]

Membership inference attacks from first principles,

N. Carlini, S. Chien, M. Nasr, S. Song, A. Terzis, and F. Tramer, “Membership inference attacks from first principles,” inIEEE Sym- posium on Security and Privacy, 2022

2022

-

[37]

Measuring memo- rization in RLHF for code completion,

A. Pappu, B. Porter, I. Shumailov, and J. Hayes, “Measuring memo- rization in RLHF for code completion,”arXiv:2406.11715, 2024

-

[38]

Alpaca against Vicuna: Using LLMs to uncover memorization of LLMs,

A. M. Kassem, O. Mahmoud, N. Mireshghallah, H. Kim, Y . Tsvetkov, Y . Choi, S. Saad, and S. Rana, “Alpaca against Vicuna: Using LLMs to uncover memorization of LLMs,”arXiv:2403.04801, 2024

-

[39]

Rethinking LLM memorization through the lens of adversarial com- pression,

A. Schwarzschild, Z. Feng, P. Maini, Z. Lipton, and J. Z. Kolter, “Rethinking LLM memorization through the lens of adversarial com- pression,”International Conference on Neural Information Process- ing Systems, 2024

2024

-

[40]

Bag of tricks for training data extraction from language models,

W. Yu, T. Pang, Q. Liu, C. Du, B. Kang, Y . Huang, M. Lin, and S. Yan, “Bag of tricks for training data extraction from language models,” inInternational Conference on Machine Learning, 2023

2023

-

[41]

On the bit security of cryptographic primitives,

D. Micciancio and M. Walter, “On the bit security of cryptographic primitives,” inAnnual International Conference on the Theory and Applications of Cryptographic Techniques, 2018

2018

-

[42]

On the foundations of quantitative information flow,

G. Smith, “On the foundations of quantitative information flow,” in International Conference on Foundations of Software Science and Computational Structures, 2009

2009

-

[43]

Early detection and reduction of memorization for domain adaptation and instruction tuning,

D. L. Slack and N. Al Moubayed, “Early detection and reduction of memorization for domain adaptation and instruction tuning,”Trans- actions of the Association for Computational Linguistics, 2025

2025

-

[44]

F. Barbero, X. Gu, C. A. Choquette-Choo, C. Sitawarin, M. Jagiel- ski, I. Yona, P. Veli ˇckovi´c, I. Shumailov, and J. Hayes, “Extracting alignment data in open models,”arXiv:2510.18554, 2025

-

[45]

Language models are unsupervised multitask learners,

A. Radford, J. Wu, R. Child, D. Luan, D. Amodei, and I. Sutskever, “Language models are unsupervised multitask learners,” 2019

2019

-

[46]

The Enron corpus: A new dataset for email classification research,

B. Klimt and Y . Yang, “The Enron corpus: A new dataset for email classification research,” inEuropean Conference on Machine Learning, 2004

2004

-

[47]

A. Grattafiori, A. Dubey, A. Jauhri, A. Pandey, A. Kadian, A. Al- Dahle, A. Letman, A. Mathur, A. Schelten, A. Vaughanet al., “The Llama 3 herd of models,”arXiv:2407.21783, 2024

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[48]

The Pile: An 800GB Dataset of Diverse Text for Language Modeling

L. Gao, S. Biderman, S. Black, L. Golding, T. Hoppe, C. Foster, J. Phang, H. He, A. Thite, N. Nabeshima, S. Presser, and C. Leahy, “The Pile: An 800GB dataset of diverse text for language modeling,” arXiv:2101.00027, 2020

work page internal anchor Pith review arXiv 2020

-

[49]

BookSum: A collection of datasets for long-form narrative summa- rization,

W. Kryscinski, N. Rajani, D. Agarwal, C. Xiong, and D. Radev, “BookSum: A collection of datasets for long-form narrative summa- rization,” inFindings of the Association for Computational Linguistics (EMNLP), 2022

2022

-

[50]

Counterfactual memorization in neural language models,

C. Zhang, D. Ippolito, K. Lee, M. Jagielski, F. Tramèr, and N. Carlini, “Counterfactual memorization in neural language models,”Interna- tional Conference on Neural Information Processing Systems, 2023

2023

-

[51]

Unsupervised text deidentification,

J. Morris, J. Chiu, R. Zabih, and A. Rush, “Unsupervised text deidentification,” inFindings of the Association for Computational Linguistics (EMNLP), 2022

2022

-

[52]

Language models are advanced anonymizers,

R. Staab, M. Vero, M. Balunovic, and M. Vechev, “Language models are advanced anonymizers,” in13 th International Conference on Learning Representations, 2025

2025

-

[53]

Be like a goldfish, don’t memorize! Mitigating memorization in generative LLMs,

A. Hans, J. Kirchenbauer, Y . Wen, N. Jain, H. Kazemi, P. Singhania, S. Singh, G. Somepalli, J. Geiping, A. Bhateleet al., “Be like a goldfish, don’t memorize! Mitigating memorization in generative LLMs,”International Conference on Neural Information Processing Systems, 2024

2024

-

[54]

T. Tran, R. Liu, and L. Xiong, “Tokens for learning, tokens for un- learning: Mitigating membership inference attacks in large language models via dual-purpose training,”arXiv:2502.19726, 2025

-

[55]

Tulu 3: Pushing frontiers in open language model post-training,

N. Lambert, J. Morrison, V . Pyatkin, S. Huang, H. Ivison, F. Brahman, L. J. V . Miranda, A. Liu, N. Dziri, X. Lyu, Y . Gu, S. Malik, V . Graf, J. D. Hwang, J. Yang, R. L. Bras, O. Tafjord, C. Wilhelm, L. Soldaini, N. A. Smith, Y . Wang, P. Dasigi, and H. Hajishirzi, “Tulu 3: Pushing frontiers in open language model post-training,” inSecond Conference on ...

2025

-

[56]

Mirostat: A neural text decoding algorithm that directly controls perplexity,

S. Basu, G. S. Ramachandran, N. S. Keskar, and L. R. Varshney, “Mirostat: A neural text decoding algorithm that directly controls perplexity,” inInternational Conference on Learning Representations, 2021. Ethics considerations This work proposes a metric to quantify extraction risk in LLMs. All experiments are conducted on publicly available models and da...

2021

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.