Indic-CodecFake meets SATYAM: Towards Detecting Neural Audio Codec Synthesized Speech Deepfakes in Indic Languages

Pith reviewed 2026-05-10 00:30 UTC · model grok-4.3

The pith

SATYAM detects neural audio codec deepfakes in Indic languages by fusing semantic and prosodic features through Bhattacharyya distance in hyperbolic space.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

SATYAM integrates semantic representations from Whisper and prosodic representations from TRILLsson using Bhattacharyya distance in hyperbolic space. It then performs the same alignment procedure between the fused speech representation and an input conditioning prompt. This dual-stage fusion framework enables SATYAM to model hierarchical relationships both within speech (semantic-prosodic) and across modalities (speech-text).
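The summary does not give SATYAM's exact formula. For reference, the Bhattacharyya distance between two Gaussians has a closed form. The sketch below makes three assumptions: the Gaussians have diagonal covariance, they are fitted to two feature streams already projected to a common dimension, and the function name and synthetic features are illustrative. It works in ordinary Euclidean space, so it does not reproduce the hyperbolic variant the paper describes.

```python
import numpy as np

def bhattacharyya_diag_gauss(mu1, var1, mu2, var2):
    """Bhattacharyya distance between N(mu1, diag(var1)) and N(mu2, diag(var2))."""
    mu1, var1, mu2, var2 = (np.asarray(a, dtype=float) for a in (mu1, var1, mu2, var2))
    var = 0.5 * (var1 + var2)  # covariance of the "midpoint" Gaussian
    diff = mu1 - mu2
    # 1/8 (mu1 - mu2)^T Sigma^{-1} (mu1 - mu2), with Sigma diagonal
    mean_term = 0.125 * np.sum(diff * diff / var)
    # 1/2 ln( det Sigma / sqrt(det Sigma1 * det Sigma2) ), computed from log-variances
    cov_term = 0.5 * (np.sum(np.log(var)) - 0.5 * (np.sum(np.log(var1)) + np.sum(np.log(var2))))
    return float(mean_term + cov_term)

# Hypothetical usage: fit one diagonal Gaussian per feature stream and compare them.
rng = np.random.default_rng(0)
semantic = rng.normal(size=(50, 8))           # stand-in for projected Whisper features
prosodic = rng.normal(loc=0.5, size=(60, 8))  # stand-in for projected TRILLsson features
eps = 1e-6  # variance floor keeps the log-determinants finite
d = bhattacharyya_diag_gauss(semantic.mean(0), semantic.var(0) + eps,
                             prosodic.mean(0), prosodic.var(0) + eps)
print(f"Bhattacharyya distance: {d:.3f}")
```

The distance is zero for identical distributions. It grows with both the mean shift and any mismatch in spread, which is what makes it a candidate alignment signal between two feature streams.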

What carries the argument

Dual-stage fusion of Whisper semantic and TRILLsson prosodic representations via Bhattacharyya distance in hyperbolic space, followed by the same alignment between the fused speech representation and the conditioning prompt.
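Hyperbolic embeddings are commonly handled in the Poincaré ball. The sketch below implements three standard operations for a ball of curvature -c: the exponential map at the origin, Möbius addition, and geodesic distance. The summary does not state whether SATYAM uses this particular model of hyperbolic space, or how it combines these operations with the Bhattacharyya distance. Treat the model choice and the function names as assumptions.

```python
import numpy as np

def expmap0(v, c=1.0):
    """Exponential map at the origin: Euclidean vector -> Poincare ball of curvature -c."""
    v = np.asarray(v, dtype=float)
    norm = np.maximum(np.linalg.norm(v, axis=-1, keepdims=True), 1e-15)  # avoid 0/0
    sqrt_c = np.sqrt(c)
    return np.tanh(sqrt_c * norm) * v / (sqrt_c * norm)

def mobius_add(x, y, c=1.0):
    """Mobius addition x (+)_c y on the Poincare ball."""
    x, y = np.asarray(x, dtype=float), np.asarray(y, dtype=float)
    xy = np.sum(x * y, axis=-1, keepdims=True)
    x2 = np.sum(x * x, axis=-1, keepdims=True)
    y2 = np.sum(y * y, axis=-1, keepdims=True)
    num = (1 + 2 * c * xy + c * y2) * x + (1 - c * x2) * y
    den = 1 + 2 * c * xy + c ** 2 * x2 * y2
    return num / den

def poincare_dist(x, y, c=1.0):
    """Geodesic distance d_c(x, y) = 2/sqrt(c) * artanh(sqrt(c) * ||-x (+)_c y||)."""
    sqrt_c = np.sqrt(c)
    diff_norm = np.linalg.norm(mobius_add(-np.asarray(x, dtype=float), y, c), axis=-1)
    # Clip just below 1 so points numerically on the boundary do not give inf.
    return 2.0 / sqrt_c * np.arctanh(np.clip(sqrt_c * diff_norm, 0.0, 1.0 - 1e-7))

# Hypothetical usage: lift two Euclidean feature vectors into the ball and compare them.
s = expmap0(np.array([0.3, -0.2, 0.1]))  # stand-in for a semantic embedding
p = expmap0(np.array([0.1, 0.4, -0.3]))  # stand-in for a prosodic embedding
print(poincare_dist(s, p))
```

Distances grow rapidly near the ball's boundary. This is the property usually cited when hyperbolic space is said to suit hierarchical structure.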

If this is right

- Existing codec-fake detectors trained on English or Chinese data do not transfer to Indic languages.

- Current audio large language models perform poorly in zero-shot codec-fake detection on Indic speech.

- The Indic-CodecFake dataset provides a standardized benchmark for future Indic deepfake detection work.

- Hyperbolic-space alignment between speech and text modalities improves modeling of hierarchical speech structure.

Where Pith is reading between the lines

- Prosodic variability across Indic languages may require explicit modeling beyond what English-centric feature extractors supply.

- The same hyperbolic fusion technique could be tested on other low-resource language families where prosody differs from high-resource training data.

- If the dual alignment proves robust, it could be adapted to detect other synthesis artifacts such as voice conversion or text-to-speech without retraining the core components.

Load-bearing premise

The fusion of semantic and prosodic features through Bhattacharyya distance in hyperbolic space is sufficient to capture the artifacts that distinguish neural-codec-synthesized speech from real speech in Indic languages.

What would settle it

Evaluation on a fresh set of neural codec synthesized samples from Indic languages using codecs or dialects absent from the Indic-CodecFake benchmark, checking whether SATYAM's advantage over baselines disappears.
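One way to run this check is a leave-one-codec-out protocol, in which both the codec and the speakers in the test split are unseen during training. The sketch below assumes a hypothetical manifest of dict records with three fields:

- `label`: `"real"` or `"fake"`
- `codec`: the NAC used, or `None` for real speech
- `speaker`: the speaker identifier

None of these names come from the ICF release. An analogous split keyed on dialect would cover the other half of the test.

```python
def leave_one_codec_out(records, test_speakers):
    """Yield (held_out_codec, train, test) splits.

    Test holds real speech and held-out-codec fakes from test speakers only.
    Train never contains the held-out codec or any test speaker; records that
    fit neither side (e.g. other-codec fakes of test speakers) are dropped.
    """
    test_speakers = set(test_speakers)
    codecs = sorted({r["codec"] for r in records if r["label"] == "fake"})
    for held_out in codecs:
        train, test = [], []
        for r in records:
            is_real = r["label"] == "real"
            if r["speaker"] in test_speakers:
                if is_real or r["codec"] == held_out:
                    test.append(r)
            elif is_real or r["codec"] != held_out:
                train.append(r)
        yield held_out, train, test

# Hypothetical manifest: two speakers, two codecs.
records = [
    {"speaker": "spk1", "label": "real", "codec": None},
    {"speaker": "spk1", "label": "fake", "codec": "codecA"},
    {"speaker": "spk1", "label": "fake", "codec": "codecB"},
    {"speaker": "spk2", "label": "real", "codec": None},
    {"speaker": "spk2", "label": "fake", "codec": "codecA"},
    {"speaker": "spk2", "label": "fake", "codec": "codecB"},
]
for codec, train, test in leave_one_codec_out(records, test_speakers={"spk2"}):
    print(codec, len(train), len(test))
```

If SATYAM's margin over baselines holds on every held-out codec, that supports generalization beyond the codecs seen in training. If the margin collapses, the in-distribution results may reflect codec-specific cues.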

Original abstract

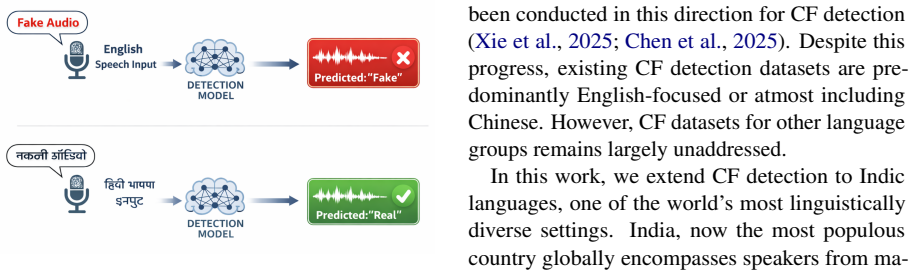

The rapid advancement of Audio Large Language Models (ALMs), driven by Neural Audio Codecs (NACs), has led to the emergence of highly realistic speech deepfakes, commonly referred to as CodecFakes (CFs). Consequently, CF detection has attracted increasing attention from the research community. However, existing studies predominantly focus on English or Chinese, leaving the vulnerability of Indic languages largely unexplored. To bridge this gap, we introduce the Indic-CodecFake (ICF) dataset, the first large-scale benchmark comprising real and NAC-synthesized speech across multiple Indic languages, diverse speaker profiles, and multiple NAC types. We use IndicSUPERB as the real-speech corpus from which the ICF dataset is generated. Our experiments demonstrate that state-of-the-art (SOTA) CF detectors trained on English-centric datasets fail to generalize to ICF, underscoring the challenges posed by phonetic diversity and prosodic variability in Indic speech. Further, we present a systematic zero-shot evaluation of SOTA ALMs on the ICF dataset; we evaluate these ALMs because they have proven effective across a range of speech tasks. However, our findings reveal that current ALMs perform consistently poorly. To address this, we propose SATYAM, a novel hyperbolic ALM tailored for CF detection in Indic languages. SATYAM integrates semantic representations from Whisper and prosodic representations from TRILLsson through Bhattacharyya distance in hyperbolic space and subsequently performs the same alignment procedure between the fused speech representation and an input conditioning prompt. This dual-stage fusion framework enables SATYAM to effectively model hierarchical relationships both within speech (semantic-prosodic) and across modalities (speech-text). Extensive evaluations show that SATYAM consistently outperforms competitive end-to-end and ALM-based baselines on the ICF benchmark.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces the Indic-CodecFake (ICF) dataset, the first large-scale benchmark for neural audio codec (NAC) synthesized speech deepfakes in Indic languages, generated from IndicSUPERB. It demonstrates that state-of-the-art CodecFake detectors trained on English data, as well as zero-shot audio large language models (ALMs), perform poorly on ICF, which the authors attribute to phonetic and prosodic differences. The paper proposes SATYAM, a hyperbolic ALM that performs dual-stage fusion: it first integrates Whisper semantic and TRILLsson prosodic features via Bhattacharyya distance in hyperbolic space, then aligns the fused representation with text prompts. The authors report extensive experiments in which SATYAM outperforms end-to-end and ALM-based baselines on the ICF benchmark.

Significance. If the empirical outperformance holds with reproducible details and ablations, the work is significant as it addresses the gap in deepfake detection for Indic languages, which exhibit phonetic and prosodic variability not captured by English-centric models. The ICF dataset provides a valuable new benchmark resource for the community. The targeted hyperbolic fusion approach for semantic-prosodic modeling represents a potentially useful adaptation, though its advantages require explicit validation against simpler alternatives to establish broader applicability.

major comments (3)

- §3 (SATYAM architecture): The central novelty claim rests on the dual-stage hyperbolic fusion of Whisper and TRILLsson representations via Bhattacharyya distance; however, no ablation is presented comparing this to Euclidean distance, direct concatenation, or other fusion strategies, which is load-bearing for asserting that the hyperbolic component is necessary for capturing Indic-specific distinctions.

- §4 (Experiments and results): The claim that SATYAM 'consistently outperforms' baselines is load-bearing for the paper's contribution, yet the reported tables lack error bars, statistical significance tests (e.g., McNemar's or paired t-tests), and cross-validation details; without these, it is unclear whether observed gains exceed variance or dataset-specific artifacts.

- §2.1 (ICF dataset construction): While the use of IndicSUPERB as the real-speech source is noted, the section does not provide per-language utterance counts, speaker demographics, or the exact NAC types and synthesis parameters employed; these details are required to substantiate the claim that prior English-centric detectors fail due to Indic phonetic/prosodic diversity rather than other factors.
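The second comment requests significance testing. McNemar's test compares two detectors scored on the same test utterances, using only the cases where exactly one of them is correct. A minimal sketch of the exact (binomial) form follows. The function name and the label encoding are illustrative. When the disagreement counts are large, the chi-squared approximation is the usual alternative.

```python
from math import comb

def mcnemar_exact(y_true, pred_a, pred_b):
    """Exact McNemar test for two classifiers evaluated on the same examples.

    Returns (b, c, p): b = examples only A gets right, c = examples only B
    gets right, p = two-sided p-value under H0: b ~ Binomial(b + c, 0.5).
    """
    if not (len(y_true) == len(pred_a) == len(pred_b)):
        raise ValueError("inputs must have equal length")
    b = c = 0
    for t, pa, pb in zip(y_true, pred_a, pred_b):
        a_ok, b_ok = pa == t, pb == t
        if a_ok and not b_ok:
            b += 1
        elif b_ok and not a_ok:
            c += 1
    n = b + c
    if n == 0:
        return b, c, 1.0  # the detectors never disagree in correctness
    k = min(b, c)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n  # P(X <= k) under H0
    return b, c, min(1.0, 2 * tail)

# Hypothetical labels: 1 = real, 0 = CodecFake.
y_true = [1, 1, 0, 0, 1, 0, 1, 0]
pred_a = [1, 1, 0, 0, 1, 0, 1, 1]  # e.g. the proposed detector
pred_b = [0, 1, 1, 0, 0, 0, 1, 1]  # e.g. a baseline
b, c, p = mcnemar_exact(y_true, pred_a, pred_b)
print(b, c, p)
```

Here A wins all three disagreements, yet p = 0.25. Three disagreements are too few to separate the two detectors, which is exactly the situation the referee wants ruled out.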

minor comments (3)

- Abstract: Acronyms (ALM, NAC, CF, ICF) are used without initial expansion, reducing readability for a broad audience.

- §3.2: The description of the hyperbolic alignment procedure references 'the same alignment procedure' without a forward pointer to the exact equation or prior work being reused, which could be clarified with a numbered equation.

- Table captions (throughout): Captions should explicitly state the evaluation metric (e.g., EER or AUC) and whether results are averaged over multiple runs.
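Suppose EER is the reported metric. It can be computed from detector scores as the operating point where two rates meet: the false-acceptance rate on fakes and the false-rejection rate on real speech. Averaging over seeds then gives the mean and standard deviation that the captions would state.

The sketch below makes several illustrative choices:

- Higher scores mean "more likely real".
- Labels are 1 for real speech and 0 for CodecFake.
- The function names are hypothetical.
- It picks the observed threshold that minimizes |FAR - FRR| rather than interpolating the exact crossing.

```python
import numpy as np

def compute_eer(scores, labels):
    """Equal error rate; labels: 1 = real (bona fide), 0 = CodecFake (spoof).

    Assumes higher scores mean "more likely real". Returns the mean of FAR and
    FRR at the observed threshold where the two rates are closest.
    """
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=int)
    real, fake = scores[labels == 1], scores[labels == 0]
    if real.size == 0 or fake.size == 0:
        raise ValueError("need both real and fake examples")
    thresholds = np.append(np.unique(scores), np.inf)
    frr = np.array([(real < t).mean() for t in thresholds])   # real speech rejected
    far = np.array([(fake >= t).mean() for t in thresholds])  # fakes accepted as real
    i = int(np.argmin(np.abs(far - frr)))
    return float((far[i] + frr[i]) / 2)

def mean_std(values):
    """Mean and sample standard deviation across runs (e.g. random seeds)."""
    values = np.asarray(values, dtype=float)
    return float(values.mean()), float(values.std(ddof=1))

# Hypothetical per-seed scores for one detector.
runs = [
    ([0.9, 0.7, 0.4, 0.2], [1, 1, 0, 0]),
    ([0.8, 0.3, 0.5, 0.1], [1, 1, 0, 0]),
    ([0.6, 0.9, 0.2, 0.7], [1, 1, 0, 0]),
]
eers = [compute_eer(s, y) for s, y in runs]
mean, std = mean_std(eers)
print(f"EER = {100 * mean:.1f} +/- {100 * std:.1f} %")
```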

Simulated Author's Rebuttal

We thank the referee for the constructive comments. We address each major comment below and will revise the manuscript to incorporate the suggested improvements.

- Referee: §3 (SATYAM architecture): The central novelty claim rests on the dual-stage hyperbolic fusion of Whisper and TRILLsson representations via Bhattacharyya distance; however, no ablation is presented comparing this to Euclidean distance, direct concatenation, or other fusion strategies, which is load-bearing for asserting that the hyperbolic component is necessary for capturing Indic-specific distinctions.

Authors: We agree that explicit ablations are needed to substantiate the contribution of the hyperbolic fusion. The design choice was motivated by hyperbolic geometry's capacity to embed hierarchical semantic-prosodic structures more effectively than Euclidean space, yet we acknowledge the absence of direct comparisons in the original submission. In the revised manuscript we will add ablations that replace Bhattacharyya distance in hyperbolic space with Euclidean distance, direct concatenation, and at least one additional fusion baseline, reporting performance on the ICF benchmark to quantify the gains attributable to the proposed approach. revision: yes

- Referee: §4 (Experiments and results): The claim that SATYAM 'consistently outperforms' baselines is load-bearing for the paper's contribution, yet the reported tables lack error bars, statistical significance tests (e.g., McNemar's or paired t-tests), and cross-validation details; without these, it is unclear whether observed gains exceed variance or dataset-specific artifacts.

Authors: We concur that statistical validation is essential. Although the experiments were repeated across multiple random seeds, the submitted version omitted error bars and formal tests. The revision will include standard-deviation error bars, McNemar's test (or paired t-tests where appropriate) for significance against each baseline, and explicit description of the train/validation/test splits and any cross-validation procedure employed. revision: yes

- Referee: §2.1 (ICF dataset construction): While the use of IndicSUPERB as the real-speech source is noted, the section does not provide per-language utterance counts, speaker demographics, or the exact NAC types and synthesis parameters employed; these details are required to substantiate the claim that prior English-centric detectors fail due to Indic phonetic/prosodic diversity rather than other factors.

Authors: We will expand §2.1 with the requested statistics: per-language utterance counts and speaker demographics drawn from IndicSUPERB, together with the precise neural audio codec models (including EnCodec, SoundStream, and any others used) and all synthesis hyper-parameters. These additions will directly support the attribution of performance drops to phonetic and prosodic characteristics of Indic languages. revision: yes
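The per-language statistics promised for §2.1 can be tabulated directly from a dataset manifest. This sketch assumes a hypothetical record format with `lang`, `label`, `codec`, and `speaker` fields, since the actual ICF metadata layout is not described here. Speaker demographics would need further fields, such as gender or age band, counted the same way.

```python
from collections import Counter, defaultdict

def summarize_manifest(records):
    """Count utterances per (language, label, codec) and unique speakers per language."""
    utterances = Counter()
    speakers = defaultdict(set)
    for r in records:
        codec = r["codec"] if r["label"] == "fake" else "-"  # real speech has no codec
        utterances[(r["lang"], r["label"], codec)] += 1
        speakers[r["lang"]].add(r["speaker"])
    return utterances, {lang: len(spk) for lang, spk in speakers.items()}

# Hypothetical manifest entries (language codes and codec names are placeholders).
records = [
    {"lang": "hi", "label": "real", "codec": None, "speaker": "s1"},
    {"lang": "hi", "label": "fake", "codec": "codecA", "speaker": "s1"},
    {"lang": "ta", "label": "real", "codec": None, "speaker": "s2"},
    {"lang": "ta", "label": "fake", "codec": "codecA", "speaker": "s2"},
    {"lang": "ta", "label": "fake", "codec": "codecB", "speaker": "s3"},
]
utts, n_speakers = summarize_manifest(records)
for key in sorted(utts):
    print(*key, utts[key])
print(n_speakers)
```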

Circularity Check

No significant circularity

The paper introduces the ICF dataset from IndicSUPERB and proposes SATYAM as a dual-stage hyperbolic fusion of external pre-trained Whisper semantic and TRILLsson prosodic features using Bhattacharyya distance, followed by speech-text alignment. The central claim is an empirical one: SATYAM outperforms baselines on this new benchmark. No equations or derivations are presented that reduce by construction to fitted parameters or self-defined quantities; the architecture is described as a novel adaptation without load-bearing self-citations, uniqueness theorems, or renamed known results. The derivation chain is self-contained against external benchmarks and pre-trained models.

Axiom & Free-Parameter Ledger

free parameters (1)

- hyperbolic curvature and alignment parameters
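The curvature entry can be made concrete under one assumption: that SATYAM uses the Poincaré-ball model of curvature -c, which the summary does not name. Under that assumption, the geodesic distance, Möbius addition, and the flat-space limit are:

```latex
\[
d_c(\mathbf{x},\mathbf{y}) = \frac{2}{\sqrt{c}}\,\operatorname{artanh}\!\bigl(\sqrt{c}\,\lVert -\mathbf{x} \oplus_c \mathbf{y} \rVert\bigr),
\qquad
\mathbf{x} \oplus_c \mathbf{y} = \frac{(1 + 2c\langle \mathbf{x},\mathbf{y}\rangle + c\lVert \mathbf{y}\rVert^2)\,\mathbf{x} + (1 - c\lVert \mathbf{x}\rVert^2)\,\mathbf{y}}{1 + 2c\langle \mathbf{x},\mathbf{y}\rangle + c^2\lVert \mathbf{x}\rVert^2\lVert \mathbf{y}\rVert^2}
\]
\[
\lim_{c \to 0} d_c(\mathbf{x},\mathbf{y}) = 2\,\lVert \mathbf{x} - \mathbf{y} \rVert
\]
```

As c approaches 0, Möbius addition reduces to ordinary vector addition and artanh(z) is approximately z. The distance therefore collapses to a scaled Euclidean one. The value of c thus controls how far the geometry departs from the Euclidean case, which is why it matters when comparing against Euclidean-distance baselines.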

axioms (3)

- Domain assumption: Whisper embeddings capture semantic content relevant to distinguishing codec artifacts.

- Domain assumption: TRILLsson embeddings capture prosodic features useful for discriminating real from synthesized speech.

- Ad hoc to paper: Bhattacharyya distance in hyperbolic space effectively models hierarchical semantic-prosodic relationships.
