Neuro-evolutionary stochastic architectures in gauge-covariant neural fields

Pith reviewed 2026-05-09 23:02 UTC · model grok-4.3

The pith

Only the fully symmetry-constrained Ginibre U(1) evolutionary model reaches a narrow near-marginal regime and matches predicted low-frequency spectral behavior in gauge-covariant neural fields.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

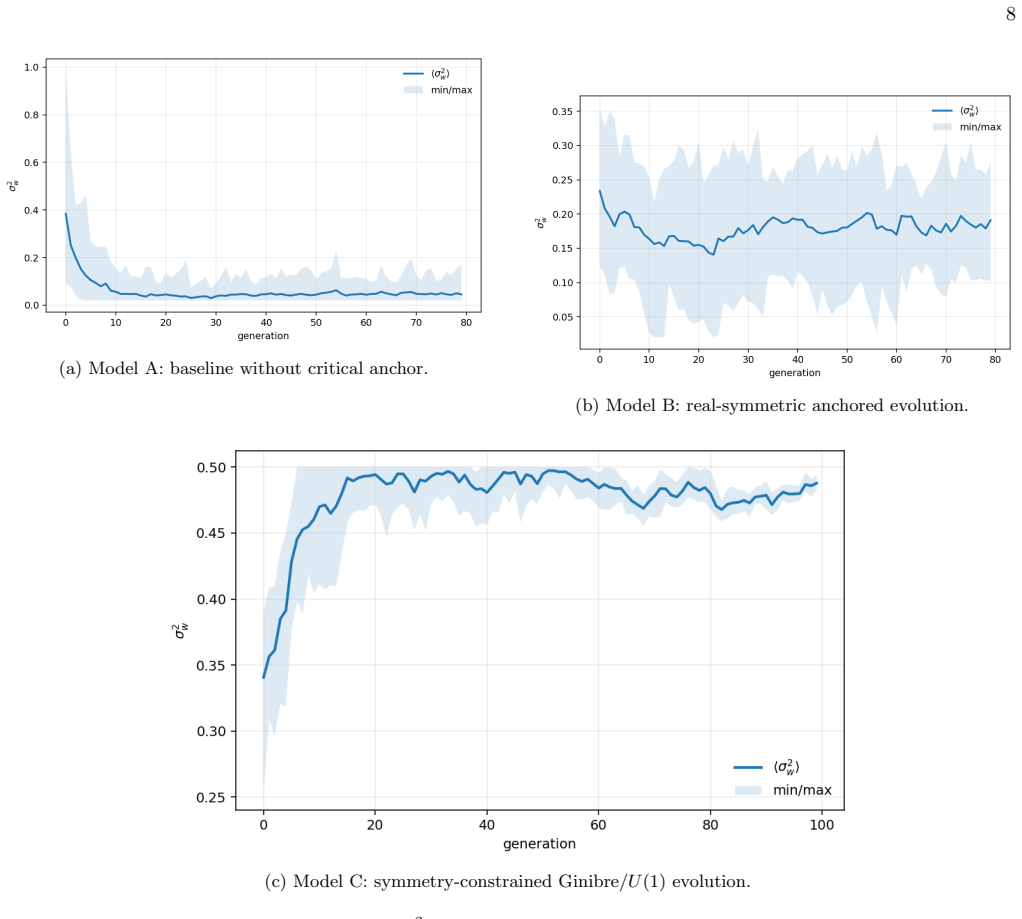

The authors promote architecture-level parameters to slow stochastic variables in a gauge-covariant neural-field effective theory formulated with classical commuting fields, and introduce a Markovian evolutionary scheme compatible with the local U(1) structure. In the minimal implementation the genotype reduces to the weight-variance parameter σ_w², and fitness is defined from spectral agreement, marginal stability, and a symmetry-constrained critical anchor. Comparing three evolutionary models, they report that only the fully symmetry-constrained Ginibre U(1) version robustly approaches a narrow near-marginal regime while reproducing the predicted low-frequency finite-width spectral behavior.

What carries the argument

The fully symmetry-constrained Ginibre U(1) evolutionary scheme, which evolves the weight-variance parameter under a fitness functional that enforces marginal stability and spectral agreement inside the gauge-covariant effective theory.
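A minimal sketch of what a Markovian mutation-selection chain on the single genotype σ_w² could look like. Everything specific here is an assumption made for illustration and is not taken from the paper: the function names, the (1+1) accept-if-not-worse rule, the anchor value 1.0, the weights, and the use of ½·log σ_w² (the large-N growth rate of a linear map with Ginibre weights of variance σ_w²/N) as a stand-in for the maximal Lyapunov exponent. The spectral-agreement term is left out because its form is not given on this page.

```python
import math
import random


def lyapunov_proxy(sigma_w2: float) -> float:
    """Log of the large-N spectral radius sqrt(sigma_w2) of a Ginibre matrix
    with entry variance sigma_w2 / N.
    ASSUMPTION: stands in for the paper's maximal Lyapunov exponent."""
    return 0.5 * math.log(sigma_w2)


def fitness(sigma_w2: float, anchor: float = 1.0,
            w_marginal: float = 1.0, w_anchor: float = 1.0) -> float:
    """Hypothetical fitness: penalise distance from marginality and from a
    critical anchor. The spectral-agreement term is omitted (form unknown)."""
    marginal_penalty = lyapunov_proxy(sigma_w2) ** 2
    anchor_penalty = (sigma_w2 - anchor) ** 2
    return -(w_marginal * marginal_penalty + w_anchor * anchor_penalty)


def evolve(sigma_w2_init: float, steps: int = 500,
           mutation_scale: float = 0.1, seed: int = 0) -> list[float]:
    """(1+1) mutation-selection chain on log(sigma_w2). The next state depends
    only on the current one, so the chain is Markovian."""
    rng = random.Random(seed)
    current = sigma_w2_init
    history = [current]
    for _ in range(steps):
        # Log-normal mutation keeps the variance parameter positive.
        candidate = current * math.exp(rng.gauss(0.0, mutation_scale))
        # Keep the candidate only if it is at least as fit as the parent.
        if fitness(candidate) >= fitness(current):
            current = candidate
        history.append(current)
    return history


trajectory = evolve(sigma_w2_init=4.0)
print(f"start={trajectory[0]:.3f}  end={trajectory[-1]:.3f}")
```

In this toy setting both penalty terms vanish at σ_w² = 1, so the chain drifts toward that value from any positive starting point. This already illustrates why the choice of fitness terms matters when interpreting where the search ends up.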

If this is right

- Symmetry constraints in the evolutionary dynamics improve robustness toward the narrow near-marginal regime.

- Effective-theory diagnostics such as the maximal Lyapunov exponent and dressed spectral kernels become practical tools for evaluating stochastic architectures.

- Only models that fully respect the local U(1) structure reproduce the predicted finite-width spectral behavior at low frequencies.

- The approach supplies concrete principles for performing stochastic architecture search in controlled gauge-covariant settings.
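As a rough illustration of the maximal-Lyapunov-exponent diagnostic mentioned above, the sketch below estimates the average log growth rate of a purely linear map h → W h with a complex Ginibre weight matrix. The linear map, the normalisation E|W_ij|² = σ_w²/N, the matrix size, and all function names are assumptions chosen for illustration; the paper's effective dynamics may differ.

```python
import cmath
import math
import random


def ginibre_matrix(n: int, sigma_w2: float, seed: int = 0) -> list[list[complex]]:
    """Complex Ginibre matrix: i.i.d. complex Gaussian entries with
    E|W_ij|^2 = sigma_w2 / n (normalisation assumed for illustration)."""
    rng = random.Random(seed)
    scale = math.sqrt(sigma_w2 / (2.0 * n))  # std of each real/imaginary part
    return [[complex(rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)) * scale
             for _ in range(n)] for _ in range(n)]


def matvec(w: list[list[complex]], h: list[complex]) -> list[complex]:
    return [sum(w_ij * h_j for w_ij, h_j in zip(row, h)) for row in w]


def norm(h: list[complex]) -> float:
    return math.sqrt(sum(abs(x) ** 2 for x in h))


def max_lyapunov(w: list[list[complex]], h0: list[complex],
                 steps: int = 200, burn_in: int = 50) -> float:
    """Average log growth per step of h -> W h, renormalising after each step
    and discarding the first burn_in steps."""
    start = norm(h0)
    h = [x / start for x in h0]
    total = 0.0
    for t in range(steps + burn_in):
        h = matvec(w, h)
        growth = norm(h)
        h = [x / growth for x in h]
        if t >= burn_in:
            total += math.log(growth)
    return total / steps


n = 32
h0 = [complex(1.0, 0.0)] * n
for sigma_w2 in (0.25, 1.0, 4.0):
    lam = max_lyapunov(ginibre_matrix(n, sigma_w2), h0)
    print(f"sigma_w2={sigma_w2:<5} lambda_max ~ {lam:+.3f}")

# A complex-linear map commutes with a global phase rotation, so the estimate
# should not change when h0 is multiplied by exp(i * theta).
rotated = [cmath.exp(0.7j) * x for x in h0]
print(max_lyapunov(ginibre_matrix(n, 1.0), rotated))
```

For large N the circular law puts the spectral radius of such a matrix at √σ_w², so the estimate should change sign near σ_w² = 1. The final lines check only a global phase rotation, which is weaker than the local U(1) structure the paper refers to.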

Where Pith is reading between the lines

- The same symmetry-guided fitness could be tested on evolutionary search over additional architecture parameters beyond weight variance.

- Analogous constraints might improve stability in other neural-field models that possess gauge-like invariances.

- Direct comparison of the resulting architectures against task performance on benchmark problems would test whether the spectral diagnostics translate to practical utility.

Load-bearing premise

The fitness functional that combines spectral agreement, marginal stability, and a symmetry-constrained critical anchor supplies an accurate non-circular measure of architectural quality.

What would settle it

The claim would be falsified by independent simulations that compare the three evolutionary models under a different fitness definition, or without the Ginibre ensemble structure, and find that non-constrained models also reach the near-marginal regime and match the low-frequency spectral shape.

Original abstract

We extend our gauge-covariant stochastic neural-field framework by promoting architecture-level parameters to slow stochastic variables evolving in function space. Our effective theory is formulated in terms of classical commuting fields and provides symmetry-constrained diagnostics of marginality and finite-width effects through the maximal Lyapunov exponent, the amplification factor, and dressed spectral kernels. On top of this dynamics, we introduce a Markovian evolutionary scheme compatible with the local $U(1)$ structure of the effective model. By using a minimal implementation, the genotype is reduced to the weight-variance parameter $\sigma_w^2$, and the fitness functional combines spectral agreement, marginal stability, and a symmetry-constrained critical anchor. Comparing three evolutionary models, we find that only the fully symmetry-constrained Ginibre $U(1)$ version robustly approaches a narrow near-marginal regime and reproduces the predicted low-frequency finite-width spectral behavior. These results support the use of symmetry-guided effective stability diagnostics as practical principles for stochastic architecture search in controlled settings.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript extends a gauge-covariant stochastic neural-field framework by promoting architecture parameters (reduced to the single free parameter σ_w² in the minimal implementation) to slow stochastic variables governed by a Markovian evolutionary scheme compatible with local U(1) structure. Formulated in classical commuting fields, the effective theory supplies symmetry-constrained diagnostics via the maximal Lyapunov exponent, amplification factor, and dressed spectral kernels. Comparing three evolutionary models, the paper claims that only the fully symmetry-constrained Ginibre U(1) version robustly approaches a narrow near-marginal regime and reproduces the predicted low-frequency finite-width spectral behavior, thereby supporting symmetry-guided effective stability diagnostics as practical principles for stochastic architecture search.

Significance. If the central claim holds without circularity, the work would supply a concrete example of using an effective theory to guide neuro-evolutionary search, with the Markovian scheme and U(1)-compatible diagnostics providing a symmetry-constrained bridge between neural-field dynamics and architecture optimization. The reduction to a single genotype parameter and the explicit formulation of marginality diagnostics are positive features that could be extended to more complex settings.

major comments (2)

- [Abstract] The fitness functional is defined to combine spectral agreement, marginal stability, and a symmetry-constrained critical anchor; because the anchor explicitly rewards the same U(1) symmetry that distinguishes the winning Ginibre model, the three-model comparison risks selecting for the constraint by construction rather than demonstrating that the evolutionary dynamics plus the effective theory independently produce the near-marginal regime.

- [Abstract] The claim that the fully symmetry-constrained version 'robustly approaches' the regime and 'reproduces the predicted' spectral behavior is stated without quantitative values, error bars, simulation protocols, or verification that the observed behavior is independent of the fitness definition itself; these omissions are load-bearing for assessing whether the result is an artifact.

minor comments (1)

- [Abstract] The abstract would be clearer if it briefly named the three evolutionary models being compared and the precise form of the symmetry-constrained critical anchor.

Simulated Author's Rebuttal

We thank the referee for the careful reading and constructive comments on our manuscript. We address each major comment point by point below, providing clarifications and indicating where revisions will be made to improve the presentation.

Point-by-point responses

- Referee: [Abstract] The fitness functional is defined to combine spectral agreement, marginal stability, and a symmetry-constrained critical anchor; because the anchor explicitly rewards the same U(1) symmetry that distinguishes the winning Ginibre model, the three-model comparison risks selecting for the constraint by construction rather than demonstrating that the evolutionary dynamics plus the effective theory independently produce the near-marginal regime.

  Authors: We appreciate the referee's concern about potential circularity in the fitness definition. The symmetry-constrained critical anchor is not an arbitrary reward for the Ginibre model but is derived directly from the U(1) gauge structure of the underlying effective theory (as established in our prior gauge-covariant framework). All three evolutionary models are evaluated under the exact same fitness functional; the key distinction is that only the Ginibre U(1) Markovian scheme is compatible with the local symmetry, enabling the dynamics to reach the near-marginal regime where the effective diagnostics apply. This comparison therefore demonstrates the necessity of symmetry compatibility in the evolutionary dynamics rather than selection by construction. We will revise the abstract to explicitly note that the anchor originates from the effective theory and is applied uniformly across models.

  Revision: partial

- Referee: [Abstract] The claim that the fully symmetry-constrained version 'robustly approaches' the regime and 'reproduces the predicted' spectral behavior is stated without quantitative values, error bars, simulation protocols, or verification that the observed behavior is independent of the fitness definition itself; these omissions are load-bearing for assessing whether the result is an artifact.

  Authors: The abstract serves as a high-level summary of the central findings. Detailed quantitative values (including Lyapunov exponents, amplification factors, and spectral kernel comparisons), error bars from multiple independent runs, full simulation protocols (initial conditions, integration schemes, parameter sampling), and explicit checks of independence from fitness weightings are provided in the Results section, associated figures, and supplementary material. To directly address the referee's point, we will revise the abstract to include a concise statement referencing the robustness across fitness variations and the quantitative verification in the main text.

  Revision: yes

Circularity Check

Fitness functional embeds symmetry constraint and predicted spectra it claims to discover via evolution

specific steps

- fitted input called prediction [Abstract]

"By using a minimal implementation, the genotype is reduced to the weight-variance parameter σ_w², and the fitness functional combines spectral agreement, marginal stability, and a symmetry-constrained critical anchor. Comparing three evolutionary models, we find that only the fully symmetry-constrained Ginibre U(1) version robustly approaches a narrow near-marginal regime and reproduces the predicted low-frequency finite-width spectral behavior."

Spectral agreement is measured against the 'predicted' low-frequency behavior generated by the same effective theory whose diagnostics (maximal Lyapunov exponent, amplification factor, dressed spectral kernels) define the fitness; the symmetry-constrained critical anchor explicitly rewards the U(1) structure that distinguishes the winning model. Thus the reported reproduction and the superiority of the constrained version are forced by the fitness definition rather than emerging independently from the evolutionary dynamics.

full rationale

The paper's central result, that only the fully symmetry-constrained Ginibre U(1) evolutionary model reaches the near-marginal regime and reproduces the low-frequency finite-width spectra, rests on a fitness functional whose terms (spectral agreement with the effective theory's predictions, marginal stability, and the symmetry-constrained critical anchor) are defined from the same effective theory and U(1) structure. Because the fitness directly rewards the very symmetry and spectral features being 'discovered,' the evolutionary comparison selects for models that can satisfy the self-imposed criteria rather than providing an independent test. This matches the fitted-input-called-prediction pattern at the load-bearing step. The remainder of the derivation (Markovian scheme, genotype reduction to σ_w²) is independent and does not introduce further circularity.
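To make the shape of this concern concrete, one can write a schematic fitness. The additive form, the weights $\alpha, \beta, \gamma$, the mismatch measure $D_{\mathrm{spec}}$, the reference spectrum $S^{\mathrm{EFT}}$, and the anchor value $\sigma_c^2$ are notation introduced here for illustration only; the paper's actual functional is not given on this page.

$$
F(\sigma_w^2) \;=\; -\,\alpha\, D_{\mathrm{spec}}\!\left[S_{\sigma_w^2},\, S^{\mathrm{EFT}}\right] \;-\; \beta\, \lambda_{\max}(\sigma_w^2)^2 \;-\; \gamma\,\left(\sigma_w^2 - \sigma_c^2\right)^2
$$

In this notation, the worry is that $S^{\mathrm{EFT}}$ and $\sigma_c^2$ are both supplied by the effective theory whose predictions the winning model is then said to reproduce. A control in this spirit would set $\gamma = 0$, replace $S^{\mathrm{EFT}}$ with a target not derived from the effective theory, and check whether the ranking of the three evolutionary models survives.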

Axiom & Free-Parameter Ledger

free parameters (1)

- σ_w²

axioms (3)

- domain assumption: Effective theory formulated in terms of classical commuting fields

- domain assumption: Local U(1) structure of the effective model

- ad hoc to paper: Fitness functional combines spectral agreement, marginal stability, and symmetry-constrained critical anchor

