
Mechanistic Interpretability Tool for AI Weather Models

K.I. Tempest et al.

Pith reviewed 2026-05-09 22:59 UTC · model grok-4.3

The pith

An open-source tool uses PCA and cosine similarity to find interpretable meteorological directions inside the latent space of AI weather models like GraphCast.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The paper claims that its tool can identify linear combinations of latent channels in AI weather models that appear to correspond to interpretable meteorological features. The evidence comes from preliminary case studies on GraphCast, in which directions extracted via PCA and cosine similarity appear to align with mid-latitude synoptic-scale waves and with specific humidity.

What carries the argument

An open-source tool that organizes internal latent representations from the model's processor and applies cosine similarity and Principal Component Analysis (PCA) to extract latent-space directions potentially tied to meteorological features.
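As a rough illustration of this machinery, the sketch below fits scikit-learn's PCA to a latent array of shape (nodes, channels) and scores each principal direction with scikit-learn's cosine_similarity. The array layout, sizes, synthetic data and the helper name latent_directions are assumptions for illustration; this is not the tool's code, and real GraphCast latents would first have to be extracted from the processor.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.metrics.pairwise import cosine_similarity


def latent_directions(latents, n_components=5):
    """Fit PCA to latents of shape (n_nodes, n_channels).

    Returns the principal directions (unit vectors in channel space, one per row)
    and the fraction of variance each one explains.
    """
    pca = PCA(n_components=n_components)
    pca.fit(latents)  # PCA subtracts the per-channel mean internally
    return pca.components_, pca.explained_variance_ratio_


# Synthetic stand-in for one processor layer: a planted direction plus noise.
rng = np.random.default_rng(0)
n_nodes, n_channels = 2000, 64
planted = rng.normal(size=n_channels)
planted /= np.linalg.norm(planted)
latents = rng.normal(scale=5.0, size=(n_nodes, 1)) * planted
latents += rng.normal(size=(n_nodes, n_channels))

components, evr = latent_directions(latents)
# Cosine similarity of every PCA direction with the planted direction.
# PCA signs are arbitrary, so the absolute value is what matters.
sims = np.abs(cosine_similarity(components, planted[None, :]).ravel())
print(np.round(evr, 3))
print(np.round(sims, 3))
```

Because the synthetic latents contain one strong planted direction, the first principal direction should have a cosine similarity near 1 with it. In real latents, a high-variance direction need not be meteorologically meaningful, which is exactly the concern raised in the referee report.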

If this is right

- The tool can be applied to other AI weather models to examine how they represent different atmospheric variables.

- It supplies a practical starting point for mechanistic analyses that could help explain why specific forecasts are generated.

- Users can extend the same PCA and similarity methods to new case studies beyond waves and humidity.

Where Pith is reading between the lines

- If the identified directions prove stable, they could support targeted interventions in the model's latent space to adjust behavior for particular weather regimes.

- This style of analysis might eventually connect data-driven AI predictions more directly to physical principles used in traditional weather models.
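A targeted latent-space intervention of this kind could start from two simple operations on a latent array: projecting a direction out (directional ablation) or adding a scaled copy of it (steering). The NumPy sketch below is hypothetical throughout: the helper names, the random latents and the linear "decoder" standing in for the rest of the model are assumptions, and splicing such an edit into GraphCast's actual forward pass is not attempted here.

```python
import numpy as np


def ablate_direction(latents, direction):
    """Remove each latent vector's component along a unit direction."""
    d = direction / np.linalg.norm(direction)
    return latents - np.outer(latents @ d, d)


def steer_direction(latents, direction, alpha):
    """Shift every latent vector by alpha times the unit direction."""
    d = direction / np.linalg.norm(direction)
    return latents + alpha * d


rng = np.random.default_rng(3)
latents = rng.normal(size=(500, 16))   # synthetic (n_nodes, n_channels) latents
direction = rng.normal(size=16)        # candidate direction, e.g. from PCA
decoder = rng.normal(size=(16, 4))     # hypothetical linear read-out to 4 output variables

ablated = ablate_direction(latents, direction)
steered = steer_direction(latents, direction, alpha=2.0)

# Mean absolute change in the toy outputs caused by each intervention.
print(np.abs(ablated @ decoder - latents @ decoder).mean())
print(np.abs(steered @ decoder - latents @ decoder).mean())
```

In a real experiment, the edited latents would be passed through the remaining processor and decoder layers, and the change in output fields compared with what the hypothesised feature predicts.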

Load-bearing premise

That the linear combinations of latent channels identified by PCA and cosine similarity actually represent real meteorological features rather than coincidental patterns.

What would settle it

A controlled test that quantifies how accurately the extracted latent directions predict the strength or location of the corresponding weather features across a large set of independent forecast cases, or a counterexample where the alignment fails systematically.
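A minimal version of such a test, sketched under assumptions: a direction is estimated on one set of cases (here by PCA of per-case mean latents, computed with an SVD), and its projection scores are then correlated with an external feature index on held-out cases. The synthetic latents, the feature index standing in for a reanalysis-based diagnostic, and the helper names are all hypothetical.

```python
import numpy as np


def case_scores(latents, direction):
    """Mean projection of each case's node latents onto a unit direction.

    latents: (n_cases, n_nodes, n_channels); direction: (n_channels,)
    """
    d = direction / np.linalg.norm(direction)
    return (latents @ d).mean(axis=1)


def leading_direction(latents):
    """Leading principal direction of the per-case mean latent vectors (PCA via SVD)."""
    means = latents.mean(axis=1)        # (n_cases, n_channels)
    means = means - means.mean(axis=0)  # centre across cases
    _, _, vt = np.linalg.svd(means, full_matrices=False)
    return vt[0]


rng = np.random.default_rng(1)
n_cases, n_nodes, n_channels = 200, 50, 32
true_dir = rng.normal(size=n_channels)
true_dir /= np.linalg.norm(true_dir)
# Hypothetical per-case feature index (e.g. a wave-amplitude diagnostic).
strength = rng.normal(size=n_cases)
latents = strength[:, None, None] * true_dir
latents = latents + rng.normal(size=(n_cases, n_nodes, n_channels))

train, test = slice(0, 100), slice(100, 200)
direction = leading_direction(latents[train])
r = np.corrcoef(case_scores(latents[test], direction), strength[test])[0, 1]
# The sign of a principal direction is arbitrary, so compare |r|.
print(round(abs(r), 2))
```

A high held-out correlation is still correlational evidence; it would complement, not replace, the intervention experiments discussed in the referee report.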

Original abstract

Artificial Intelligence (AI) weather models are improving rapidly, and their forecasts are already competitive with long-established traditional Numerical Weather Prediction (NWP). To build confidence in this new methodology, it is critical that we understand how these predictions are generated. This is a huge challenge as these AI weather models remain largely black boxes. In other areas of Machine Learning (ML), mechanistic interpretability has emerged as a framework for understanding ML predictions by analysing the building blocks responsible for them. Here we present an open-source, highly adaptable tool which incorporates concepts from mechanistic interpretability. The tool organises internal latent representations from the model processor and allows for initial analyses, including cosine similarity and Principal Component Analysis (PCA), enabling the user to identify directions in latent space potentially associated with meteorological features. Applying our tool to the graph neural network GraphCast, we present preliminary case studies for mid-latitude synoptic-scale waves and specific humidity. These demonstrate the tool's ability to identify linear combinations of latent channels that appear to correspond to interpretable features.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

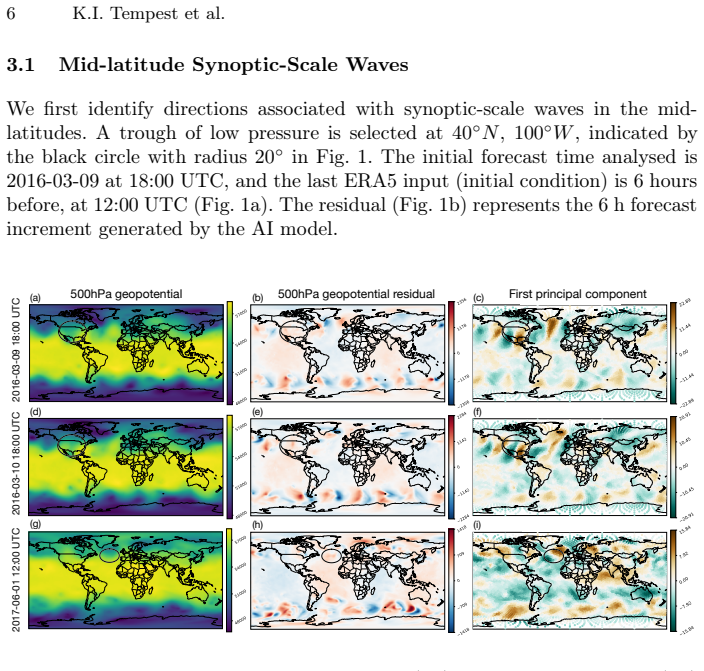

Summary. The manuscript introduces an open-source tool for mechanistic interpretability of AI weather models. It organizes latent representations from models such as the graph neural network GraphCast and applies standard techniques including cosine similarity and PCA to identify linear combinations of latent channels that may correspond to meteorological features. Preliminary case studies are presented for mid-latitude synoptic-scale waves and specific humidity, claiming these demonstrate the tool's ability to surface interpretable directions in latent space.

Significance. An adaptable open-source tool for probing internal representations in AI weather models could help build scientific confidence in forecasts that are already competitive with traditional NWP. The work correctly identifies the need for interpretability methods beyond black-box evaluation. However, because the central demonstrations remain qualitative and lack quantitative or causal validation, the immediate significance to atmospheric physics is modest and would increase substantially with stronger evidence that identified directions are mechanistically relevant rather than spurious.

major comments (3)

- [Case studies] The claim that PCA directions 'appear to correspond to interpretable features' for synoptic waves and specific humidity rests entirely on visual similarity. No quantitative metrics (e.g., spatial correlation coefficients with reanalysis fields, explained variance relative to random baselines, or forecast-error correlations) are reported to establish that these directions are not simply high-variance directions unrelated to the model's computation.

- [Tool description and results] No activation patching, ablation, or intervention experiments are performed to test whether modifying the identified latent directions produces physically consistent changes in the model's output fields. Without such causal tests, the mapping from latent direction to meteorological feature remains correlational and could arise from shared variance structure in the training data.

- [Abstract and conclusions] The assertion that the tool enables identification of 'directions in latent space potentially associated with meteorological features' is not supported by controls for false positives (e.g., statistical significance of cosine similarities or comparison against shuffled or random directions). This weakens the central claim that the tool provides mechanistic insight rather than post-hoc pattern matching.
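One minimal way to add the false-positive controls asked for here is an empirical p-value: compare an observed |cosine similarity| with its distribution under random unit directions in the same channel space, or under random permutations of the channel order. The sketch below uses only NumPy; the function names, the 128-channel size and the synthetic vectors are illustrative assumptions, not the tool's implementation.

```python
import numpy as np


def cosine(u, v):
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))


def random_direction_pvalue(u, v, n_draws=10000, seed=0):
    """Empirical p-value of |cos(u, v)| against random directions in the same space."""
    rng = np.random.default_rng(seed)
    observed = abs(cosine(u, v))
    draws = rng.normal(size=(n_draws, u.size))  # isotropic Gaussian = uniform directions
    null = np.abs(draws @ v) / (np.linalg.norm(draws, axis=1) * np.linalg.norm(v))
    # The +1 terms keep the p-value strictly positive.
    return (np.sum(null >= observed) + 1) / (n_draws + 1)


def shuffled_channel_pvalue(u, v, n_perm=10000, seed=0):
    """Empirical p-value of |cos(u, v)| when the channel order of u is permuted."""
    rng = np.random.default_rng(seed)
    observed = abs(cosine(u, v))
    null = np.array([abs(cosine(rng.permutation(u), v)) for _ in range(n_perm)])
    return (np.sum(null >= observed) + 1) / (n_perm + 1)


rng = np.random.default_rng(4)
v = rng.normal(size=128)                    # e.g. a PCA direction
u_related = v + 0.5 * rng.normal(size=128)  # synthetic vector genuinely aligned with v
u_unrelated = rng.normal(size=128)          # synthetic vector unrelated to v
print(random_direction_pvalue(u_related, v), random_direction_pvalue(u_unrelated, v))
print(shuffled_channel_pvalue(u_related, v, n_perm=2000))
```

The channel-shuffle null keeps the distribution of values in u but destroys its alignment with v, so it guards against similarities driven only by a few large channels. When many directions are tested at once, a multiple-comparison correction would also be needed.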

minor comments (3)

- [Figures] Figure captions should specify the exact PCA components plotted, the percentage of variance explained by each, and the units/color scales of the meteorological fields shown for reproducibility.

- [Introduction] The manuscript would benefit from a brief comparison to existing interpretability methods already applied to weather models (e.g., saliency maps or attention visualization) to clarify the incremental contribution of the new tool.

- [Tool description] Notation for latent channels and PCA directions should be defined consistently in the text and equations to avoid ambiguity when users adapt the code.
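One possible consistent notation is sketched below, offered only as a suggestion; the symbols are assumptions rather than the paper's own. It ties the PCA directions, their explained variance, node projections and cosine similarities to a single latent matrix.

```latex
\begin{aligned}
&\mathbf{H} \in \mathbb{R}^{N \times C},\quad \mathbf{h}_i^{\top} = \text{row } i \text{ of } \mathbf{H}
  && \text{latents of } N \text{ mesh nodes with } C \text{ channels} \\
&\boldsymbol{\mu} = \tfrac{1}{N}\,\mathbf{H}^{\top}\mathbf{1},\quad
  \bar{\mathbf{H}} = \mathbf{H} - \mathbf{1}\,\boldsymbol{\mu}^{\top}
  && \text{channel-wise centring} \\
&\tfrac{1}{N-1}\,\bar{\mathbf{H}}^{\top}\bar{\mathbf{H}}\,\mathbf{d}_k = \lambda_k\,\mathbf{d}_k,\quad \lVert\mathbf{d}_k\rVert = 1
  && k\text{-th PCA direction, } \lambda_1 \ge \lambda_2 \ge \dots \\
&r_k = \lambda_k \Big/ \textstyle\sum_{j=1}^{C} \lambda_j
  && \text{explained-variance ratio} \\
&p_{ik} = \mathbf{d}_k^{\top}(\mathbf{h}_i - \boldsymbol{\mu})
  && \text{projection of node } i \text{ onto } \mathbf{d}_k \\
&\cos(\mathbf{u}, \mathbf{v}) = \frac{\mathbf{u}^{\top}\mathbf{v}}{\lVert\mathbf{u}\rVert\,\lVert\mathbf{v}\rVert}
  && \text{cosine similarity of two channel-space vectors}
\end{aligned}
```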

Simulated Author's Rebuttal

We thank the referee for their constructive and insightful review. We appreciate the acknowledgment of the tool's potential value for building scientific confidence in AI weather models. We address each major comment below and outline revisions to strengthen the manuscript while remaining honest about the preliminary nature of the current demonstrations.


- Referee: [Case studies] The claim that PCA directions 'appear to correspond to interpretable features' for synoptic waves and specific humidity rests entirely on visual similarity. No quantitative metrics (e.g., spatial correlation coefficients with reanalysis fields, explained variance relative to random baselines, or forecast-error correlations) are reported to establish that these directions are not simply high-variance directions unrelated to the model's computation.

Authors: We agree that the case studies currently rely on visual inspection, which limits the strength of the claims. In the revised manuscript, we will add quantitative metrics including spatial correlation coefficients with ERA5 reanalysis fields for the identified directions, as well as comparisons of explained variance against random and shuffled direction baselines. These additions will help demonstrate that the directions capture more than generic high-variance structure.

Revision: yes.

- Referee: [Tool description and results] No activation patching, ablation, or intervention experiments are performed to test whether modifying the identified latent directions produces physically consistent changes in the model's output fields. Without such causal tests, the mapping from latent direction to meteorological feature remains correlational and could arise from shared variance structure in the training data.

Authors: The referee is correct that the present work provides only correlational evidence. Performing activation patching or ablation on GraphCast would require substantial new methodological development and compute, which is beyond the scope of this initial tool paper. We will revise the manuscript to explicitly discuss this as a limitation and to position causal interventions as an important direction for future extensions of the tool, while clarifying that the current analyses are intended as an exploratory first step for identifying candidate directions.

Revision: partial.

- Referee: [Abstract and conclusions] The assertion that the tool enables identification of 'directions in latent space potentially associated with meteorological features' is not supported by controls for false positives (e.g., statistical significance of cosine similarities or comparison against shuffled or random directions). This weakens the central claim that the tool provides mechanistic insight rather than post-hoc pattern matching.

Authors: We acknowledge the absence of explicit false-positive controls. In the revised version, we will add comparisons of cosine similarities against those obtained from shuffled latent channels and random directions, along with basic statistical significance assessments. These controls will be incorporated into the abstract, results, and conclusions to better support the claims of potential mechanistic relevance.

Revision: yes.
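The spatial correlation with ERA5 promised in the first response could be computed, for example, as a centred pattern correlation with cosine-latitude area weights. GraphCast's processor works on a mesh rather than a latitude-longitude grid, so this sketch assumes the projection onto a latent direction has already been regridded; the toy wave field, grid spacing, noise level and function name are illustrative assumptions.

```python
import numpy as np


def weighted_pattern_correlation(a, b, lats_deg):
    """Cosine-latitude-weighted, centred pattern correlation of two (n_lat, n_lon) fields."""
    w = np.cos(np.deg2rad(lats_deg))[:, None] * np.ones_like(a)
    w = w / w.sum()
    a_anom = a - (w * a).sum()  # remove area-weighted means
    b_anom = b - (w * b).sum()
    cov = (w * a_anom * b_anom).sum()
    return float(cov / np.sqrt((w * a_anom**2).sum() * (w * b_anom**2).sum()))


lats = np.linspace(-90, 90, 73)     # 2.5 degree regular grid
lons = np.linspace(0, 357.5, 144)
lon2d, lat2d = np.meshgrid(lons, lats)  # both of shape (73, 144)
# Toy "reanalysis" field: a zonal wavenumber-6 pattern confined to mid-latitudes.
field = np.sin(6 * np.deg2rad(lon2d)) * np.exp(-(((lat2d - 45) / 15) ** 2))
rng = np.random.default_rng(2)
projection_map = field + 0.1 * rng.normal(size=field.shape)  # hypothetical regridded projection
print(round(weighted_pattern_correlation(projection_map, field, lats), 2))
```

The cosine-latitude weights keep the densely packed high-latitude points of a regular grid from dominating the score.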

Circularity Check

No circularity: this is a standard tool applying PCA and cosine similarity to the latent space, with no derivations or self-referential fits.

full rationale

The paper presents a methodological tool for mechanistic interpretability of AI weather models, applying off-the-shelf techniques (cosine similarity, PCA) to GraphCast's latent channels. Preliminary case studies for synoptic waves and specific humidity are described as qualitative demonstrations that linear combinations 'appear to correspond' to features. No equations, parameter fits, predictions derived from inputs, or load-bearing self-citations are present. The central contribution is tool development and empirical illustration rather than a closed derivation chain, making the analysis self-contained against external benchmarks.


Forward citations

Cited by 1 Pith paper

- Toward a Scientific Discovery Engine for Weather and Climate Data: A Visual Analytics Workbench for Embedding-Based Exploration

A visual analytics workbench enables scientists to explore, query, and verify embedding-based similarity searches on weather and climate data by tracing results back to physical evidence.

