Recognition: unknown

Structure-Guided Diffusion Model for EEG-Based Visual Cognition Reconstruction

Pith reviewed 2026-05-08 08:42 UTC · model grok-4.3

The pith

A diffusion model guided by structural information extracted from EEG signals reconstructs visual images with higher fidelity than existing methods.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

SGDM incorporates explicit structural information into EEG-based visual reconstruction. It combines a structurally supervised variational autoencoder with a spatiotemporal EEG encoder that is aligned to a visual embedding space via contrastive learning, and it integrates the structural information into a diffusion model through ControlNet to guide image generation from EEG features. The result is higher-fidelity reconstructions on both abstract and natural image datasets.

What carries the argument

Structure-Guided Diffusion Model (SGDM), a two-stage system that learns structural geometry with a structurally supervised VAE, aligns a spatiotemporal EEG encoder to a visual embedding space via contrastive learning so that this geometry can be read out from EEG signals, and then uses ControlNet to inject it into a diffusion process for image synthesis.
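The ControlNet injection step can be illustrated with a minimal sketch. It follows the general ControlNet design (a trainable copy of a frozen encoder block whose output passes through a zero-initialised 1x1 convolution and is added back to the frozen features, multiplied by a conditioning scale) and is not the authors' implementation. The names `frozen_block`, `trainable_copy`, `ZeroConv1x1` and `controlled_block` are hypothetical, features are reduced to one channel vector at a single spatial position, and the input-side zero convolution on the condition is omitted for brevity.

```python
import math
from typing import List

Vector = List[float]  # channel values at one spatial position


def frozen_block(x: Vector) -> Vector:
    """Hypothetical stand-in for one frozen encoder block of the diffusion U-Net."""
    return [math.tanh(v) for v in x]


def trainable_copy(x: Vector, cond: Vector) -> Vector:
    """ControlNet branch: a copy of the block that also receives the condition.

    At initialisation the copy shares the frozen block's weights, so here it
    applies the same function to the input plus the (already projected) condition.
    """
    return frozen_block([a + c for a, c in zip(x, cond)])


class ZeroConv1x1:
    """A 1x1 convolution at one position is a (C_out x C_in) matrix multiply plus bias.

    Weights and bias start at zero, so the control branch is initially a no-op.
    """

    def __init__(self, channels: int) -> None:
        self.weight = [[0.0] * channels for _ in range(channels)]
        self.bias = [0.0] * channels

    def __call__(self, x: Vector) -> Vector:
        return [sum(w * v for w, v in zip(row, x)) + b
                for row, b in zip(self.weight, self.bias)]


def controlled_block(x: Vector, structure_cond: Vector,
                     zero_conv: ZeroConv1x1, scale: float) -> Vector:
    """Frozen features plus the ControlNet residual, multiplied by the guidance scale."""
    base = frozen_block(x)
    residual = zero_conv(trainable_copy(x, structure_cond))
    return [b + scale * r for b, r in zip(base, residual)]


x = [0.5, -1.0, 2.0]
cond = [1.0, 0.0, -0.5]  # hypothetical features of an EEG-decoded structure map
zc = ZeroConv1x1(channels=3)
print(controlled_block(x, cond, zc, scale=1.0) == frozen_block(x))  # True at initialisation
```

Because the projection starts at zero, the guided model initially reproduces the base diffusion model exactly; training then moves the zero convolution away from zero, and `scale` plays the role of the ControlNet guidance scale listed among the free parameters.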

Load-bearing premise

That structural geometry can be reliably extracted from EEG via the supervised VAE and contrastive alignment, then effectively injected via ControlNet to guide diffusion without distorting subjective cognitive content or introducing generation artifacts.

What would settle it

Comparing reconstructions with and without the structural guidance from ControlNet on the same EEG inputs; if the version without guidance matches or exceeds the guided version in fidelity metrics, the benefit of structure injection would be refuted.
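The proposed with/without comparison is a paired design, so a one-sided Wilcoxon signed-rank test on per-trial fidelity scores is one natural way to run it. The sketch assumes a higher-is-better metric (for example SSIM; swap the arguments for LPIPS or other lower-is-better metrics), uses the normal approximation without a tie correction to the variance (reasonable only for roughly 20 or more non-zero pairs), and the function name `wilcoxon_greater` is hypothetical.

```python
import math
from statistics import NormalDist
from typing import Sequence, Tuple


def wilcoxon_greater(guided: Sequence[float], unguided: Sequence[float]) -> Tuple[float, float]:
    """One-sided paired Wilcoxon signed-rank test of H1: guided scores exceed unguided ones.

    Returns (W_plus, p_value). Zero differences are dropped; tied |d| get average ranks.
    """
    diffs = [g - u for g, u in zip(guided, unguided) if g != u]
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        # Extend j over a run of tied absolute differences.
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w_plus - mean) / sd
    return w_plus, 1.0 - NormalDist().cdf(z)
```

A small p-value supports a benefit of structure injection; a non-significant result leaves the benefit unsupported but does not by itself show that the two variants are equivalent.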

Figures


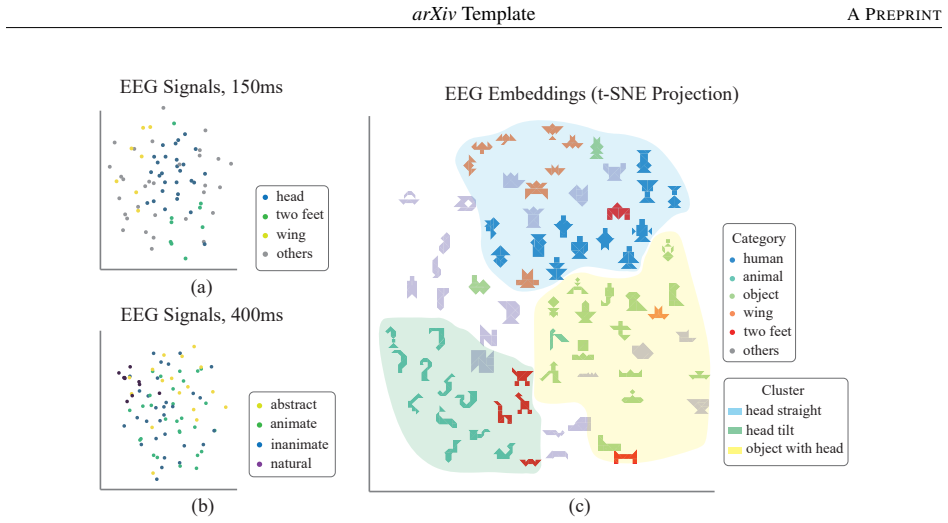

Objective: Decoding visual information from electroencephalography (EEG) is an important problem in neuroscience and brain-computer interface (BCI) research. Existing methods are largely restricted to natural images and categorical representations, with limited capacity to capture structural features and to differentiate objective perception from subjective cognition. We propose a Structure-Guided Diffusion Model (SGDM) that incorporates explicit structural information for EEG-based visual reconstruction.

Approach: SGDM is evaluated on the Kilogram abstract visual object dataset and the THINGS natural image dataset using a two-stage generative mechanism. The framework combines a structurally supervised variational autoencoder with a spatiotemporal EEG encoder aligned to a visual embedding space via contrastive learning. Structural information is integrated into a diffusion model through ControlNet to guide image generation from EEG features.

Results: SGDM outperforms existing methods on both abstract and natural image datasets. Reconstructed images achieve higher fidelity in low-level visual features and semantic representations, indicating improved decoding accuracy and strong generalization across diverse visual domains. Spatiotemporal analysis of EEG signals further reveals hierarchical structural encoding patterns, consistent with the neural dynamics of visual cognition.

Significance: These findings validate the effectiveness of SGDM in capturing explicit structural geometry and generating images with high fidelity to individual cognitive representations. By enabling decoding of complex visual content from EEG signals, the framework extends neural decoding beyond low-dimensional or categorical outputs. This supports BCIs with increased degrees of freedom for intention decoding and more flexible brain-to-machine communication.
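The abstract does not specify the objective of the structurally supervised VAE. One plausible form, assumed here purely for illustration, adds a structure-prediction term to the standard VAE loss: reconstruction error, a beta-weighted KL divergence to a standard normal prior, and a lambda-weighted error between an image-derived structure map (such as an edge map) and the structure map predicted from the latent. The names `beta`, `lam` and `structural_vae_loss` are hypothetical.

```python
import math
from typing import Sequence


def mse(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean squared error between two flattened arrays."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def gaussian_kl(mu: Sequence[float], log_var: Sequence[float]) -> float:
    """KL(N(mu, diag(exp(log_var))) || N(0, I)), summed over latent dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, log_var))


def structural_vae_loss(x, x_recon, struct_target, struct_pred, mu, log_var,
                        beta: float = 1.0, lam: float = 1.0) -> float:
    """Hypothetical objective: reconstruction + beta * KL + lam * structure-map error.

    struct_target: image-derived structure map (e.g. an edge map), flattened.
    struct_pred:   structure map predicted from the VAE latent by a decoding head.
    """
    return (mse(x, x_recon)
            + beta * gaussian_kl(mu, log_var)
            + lam * mse(struct_target, struct_pred))
```

Under this assumption, `lam` controls how strongly the latent is forced to encode structure relative to pixel reconstruction, which is the property the referee's correlation analysis would probe.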

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes a Structure-Guided Diffusion Model (SGDM) for reconstructing visual images from EEG signals. It employs a two-stage pipeline: a structurally supervised variational autoencoder (VAE) combined with a spatiotemporal EEG encoder that is aligned to a visual embedding space via contrastive learning, followed by a diffusion model into which structural information is injected through ControlNet to guide generation from EEG features. The approach is tested on the Kilogram abstract-object dataset and the THINGS natural-image dataset; the abstract asserts that SGDM outperforms prior methods in low-level feature and semantic fidelity and that spatiotemporal analysis of the EEG reveals hierarchical structural encoding patterns.

Significance. If the performance and generalization claims are substantiated with rigorous quantitative evidence, the work would represent a meaningful advance in EEG-based visual decoding by moving beyond categorical or low-dimensional outputs toward explicit structural reconstruction. The integration of ControlNet-guided diffusion with contrastively aligned EEG features could increase the expressiveness of brain-computer interfaces and provide new empirical handles on the neural dynamics of visual structure perception.

major comments (2)

- [Abstract and §4] Abstract (Results paragraph) and §4 (Evaluation): The central claim that SGDM 'outperforms existing methods' and achieves 'higher fidelity' is stated without any reported quantitative metrics, statistical tests, error bars, cross-validation details, or ablation studies. This absence prevents assessment of whether the reported improvements are reliable or merely qualitative impressions.

- [§3.1 and §3.3] §3.1 (Structurally supervised VAE) and §3.3 (ControlNet integration): Because EEG has low spatial resolution and is subject to volume conduction, the VAE supervision signal must derive from image-based structural maps (edges, contours, depth) rather than direct neural measurements. No correlation analysis between the VAE structural latent and ground-truth image geometry, nor an ablation that removes the structural branch, is described. Without such checks it remains possible that ControlNet guidance is effectively image-conditioned generation with EEG acting only as a weak proxy, which would undermine the neuroscience premise that the model decodes genuine cognitive structural representations.
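The correlation check requested here can be prototyped with an image-derived structural metric and a Pearson correlation against one latent dimension across stimuli. In this sketch the metric is edge density from forward-difference gradients with an assumed threshold of 0.1 on intensities in [0, 1]; the metric, the threshold and the function names are illustrative choices, not taken from the paper.

```python
import math
from typing import List, Sequence

Image = List[List[float]]  # grayscale intensities in [0, 1]


def edge_density(img: Image, threshold: float = 0.1) -> float:
    """Fraction of pixels whose forward-difference gradient magnitude exceeds `threshold`."""
    rows, cols = len(img), len(img[0])
    edges = 0
    for r in range(rows - 1):
        for c in range(cols - 1):
            gx = img[r][c + 1] - img[r][c]
            gy = img[r + 1][c] - img[r][c]
            if math.hypot(gx, gy) > threshold:
                edges += 1
    return edges / ((rows - 1) * (cols - 1))


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length samples."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


# Usage: one latent value and one edge density per stimulus (toy numbers, not data).
images = [[[0.0, 0.0], [0.0, 0.0]], [[0.0, 1.0], [1.0, 0.0]], [[0.0, 1.0], [0.0, 1.0]]]
latent_dim_k = [0.1, 0.9, 0.8]  # hypothetical values of one structural latent dimension
r = pearson(latent_dim_k, [edge_density(im) for im in images])
```

Images must be at least 2x2, and `pearson` raises `ZeroDivisionError` when either input is constant; with many latent dimensions, the resulting correlations would also need a multiple-comparison correction.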

minor comments (2)

- [Abstract] The abstract would be strengthened by the inclusion of at least one key quantitative result (e.g., FID or SSIM improvement) to support the performance claims.

- [§3.2] Notation for the contrastive loss and ControlNet guidance scale should be defined explicitly when first introduced rather than left to supplementary material.
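For reference, a common way to define a contrastive alignment loss is the symmetric, CLIP-style InfoNCE objective, in which the i-th EEG embedding and the i-th image embedding form the positive pair and every other pairing in the batch is a negative. Whether SGDM uses exactly this form is not stated in the abstract; the sketch assumes cosine similarity and a temperature `tau` of 0.07 (CLIP's initial value), and the function names are hypothetical.

```python
import math
from typing import List, Sequence

Matrix = List[List[float]]  # one embedding per row


def l2_normalize(v: Sequence[float]) -> List[float]:
    norm = math.sqrt(sum(a * a for a in v))
    return [a / norm for a in v]


def log_softmax_at(row: Sequence[float], idx: int) -> float:
    """log softmax(row)[idx], computed with the max-subtraction trick for stability."""
    m = max(row)
    log_z = m + math.log(sum(math.exp(a - m) for a in row))
    return row[idx] - log_z


def symmetric_info_nce(eeg: Matrix, img: Matrix, tau: float = 0.07) -> float:
    """Average of EEG-to-image and image-to-EEG cross-entropies over cosine-similarity logits."""
    e = [l2_normalize(v) for v in eeg]
    z = [l2_normalize(v) for v in img]
    n = len(e)
    logits = [[sum(a * b for a, b in zip(e[i], z[j])) / tau for j in range(n)]
              for i in range(n)]
    eeg_to_img = -sum(log_softmax_at(logits[i], i) for i in range(n)) / n
    cols = [[logits[i][j] for i in range(n)] for j in range(n)]
    img_to_eeg = -sum(log_softmax_at(cols[j], j) for j in range(n)) / n
    return 0.5 * (eeg_to_img + img_to_eeg)
```

Here `tau` is one of the contrastive alignment hyperparameters in the free-parameter ledger: smaller values sharpen the softmax over the batch and penalise near-miss negatives more heavily.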

Simulated Author's Rebuttal

We thank the referee for the constructive comments on our manuscript. We provide point-by-point responses to the major comments below and outline the revisions we will make to address the concerns raised.



Referee: [Abstract and §4] Abstract (Results paragraph) and §4 (Evaluation): The central claim that SGDM 'outperforms existing methods' and achieves 'higher fidelity' is stated without any reported quantitative metrics, statistical tests, error bars, cross-validation details, or ablation studies. This absence prevents assessment of whether the reported improvements are reliable or merely qualitative impressions.

Authors: We agree that the presentation of results in the abstract and Section 4 would benefit from more rigorous quantitative support. In the revised manuscript, we will incorporate specific quantitative metrics including FID, LPIPS, and classification accuracy for semantic fidelity, along with statistical tests such as Wilcoxon signed-rank tests for comparisons against baselines, error bars representing standard deviations across cross-validation folds, and explicit details on the train/test splits and cross-validation procedure. We will also add ablation studies in Section 4 to evaluate the contribution of the structurally supervised VAE, the contrastive alignment, and the ControlNet conditioning. revision: yes
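The promised error bars require fold-level scores. A minimal sketch, assuming each trial is tagged with a stimulus identifier: all repeated presentations of a stimulus are kept in the same fold so that no test image is seen during training, and the error bar is the sample standard deviation of the per-fold metric. The function names are hypothetical, and grouping by stimulus is a suggested precaution rather than something the rebuttal specifies.

```python
import random
import statistics
from typing import List, Sequence, Tuple


def grouped_k_fold(groups: Sequence[str], k: int, seed: int = 0) -> List[Tuple[List[int], List[int]]]:
    """(train, test) trial indices for each fold; all trials of a stimulus share one fold."""
    stimuli = sorted(set(groups))
    random.Random(seed).shuffle(stimuli)  # seeded, so splits are reproducible
    fold_of = {s: i % k for i, s in enumerate(stimuli)}
    splits = []
    for f in range(k):
        test = [i for i, g in enumerate(groups) if fold_of[g] == f]
        train = [i for i, g in enumerate(groups) if fold_of[g] != f]
        splits.append((train, test))
    return splits


def mean_and_error_bar(fold_scores: Sequence[float]) -> Tuple[float, float]:
    """Mean and sample standard deviation of a metric across folds."""
    return statistics.mean(fold_scores), statistics.stdev(fold_scores)


# Usage: two repeated presentations per stimulus (stimulus IDs are made up).
trial_stimulus = ["img_a", "img_a", "img_b", "img_b", "img_c", "img_c", "img_d", "img_d"]
splits = grouped_k_fold(trial_stimulus, k=2)
```

With fewer stimuli than folds some test sets would be empty, and `statistics.stdev` needs at least two fold scores.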


Referee: [§3.1 and §3.3] §3.1 (Structurally supervised VAE) and §3.3 (ControlNet integration): Because EEG has low spatial resolution and is subject to volume conduction, the VAE supervision signal must derive from image-based structural maps (edges, contours, depth) rather than direct neural measurements. No correlation analysis between the VAE structural latent and ground-truth image geometry, nor an ablation that removes the structural branch, is described. Without such checks it remains possible that ControlNet guidance is effectively image-conditioned generation with EEG acting only as a weak proxy, which would undermine the neuroscience premise that the model decodes genuine cognitive structural representations.

Authors: The referee correctly notes that the structural supervision in the VAE relies on image-derived features because of the inherent limitations of EEG signals. Our approach uses these maps to train the VAE to extract structural information, which is then aligned with EEG features via contrastive learning so that the EEG encoder learns to capture the relevant cognitive representations. To address the concern directly, the revision will include a correlation analysis (e.g., correlations between latent dimensions and image structural metrics such as edge histograms and depth maps) and an ablation experiment that disables the structural supervision branch while keeping all other components fixed. These analyses will test whether performance degrades without the structural branch and whether generation is driven by EEG features rather than acting as a proxy for image conditioning. We believe these additions will strengthen the support for our neuroscience premise. revision: yes
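Edge histograms, one of the structural metrics named in the response, can be computed as gradient-magnitude-weighted histograms of edge orientation. The sketch below is one such definition, assumed for illustration: central differences, orientations folded into [0, pi) so that reverse-contrast edges share a bin, and 8 bins by default. Each bin can then be correlated with each latent dimension across stimuli; depth maps would additionally require a depth estimator and are not sketched.

```python
import math
from typing import List

Image = List[List[float]]  # grayscale intensities, row-major


def edge_orientation_histogram(img: Image, bins: int = 8) -> List[float]:
    """Gradient-magnitude-weighted histogram of gradient orientations, normalised to sum to 1.

    Bin 0 collects horizontal gradients (i.e. vertical edges). A flat image returns all zeros.
    """
    hist = [0.0] * bins
    for r in range(1, len(img) - 1):
        for c in range(1, len(img[0]) - 1):
            gx = (img[r][c + 1] - img[r][c - 1]) / 2  # central differences
            gy = (img[r + 1][c] - img[r - 1][c]) / 2
            mag = math.hypot(gx, gy)
            if mag == 0.0:
                continue
            theta = math.atan2(gy, gx) % math.pi  # fold opposite directions together
            hist[min(int(theta / math.pi * bins), bins - 1)] += mag
    total = sum(hist)
    return [h / total for h in hist] if total > 0 else hist
```

Weighting by magnitude lets strong contours dominate the descriptor, while the normalisation makes histograms comparable across images with different contrast.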

Circularity Check

No circularity in architectural pipeline or claims


The paper proposes an empirical two-stage generative architecture (structurally supervised VAE, contrastive EEG-visual alignment, and ControlNet-conditioned diffusion) evaluated on image reconstruction metrics. None of its derivations, equations, or load-bearing premises reduces by construction to fitted parameters, self-definitions, or self-citation chains. Performance claims rest on dataset comparisons rather than tautological reductions, so this is a standard methodological contribution that exhibits none of the enumerated circularity patterns.

Axiom & Free-Parameter Ledger

free parameters (2)

- Contrastive alignment hyperparameters

- ControlNet guidance scale

axioms (2)

- domain assumption: EEG signals encode extractable, explicit structural geometry of visual stimuli.

- domain assumption: Diffusion models conditioned via ControlNet can faithfully translate EEG-derived features into high-fidelity images.

