Recognition: unknown

HeadRouter: Dynamic Head-Weight Routing for Task-Adaptive Audio Token Pruning in Large Audio Language Models

Pith reviewed 2026-05-08 05:14 UTC · model grok-4.3

The pith

HeadRouter dynamically weights attention heads to prune audio tokens adaptively without training.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

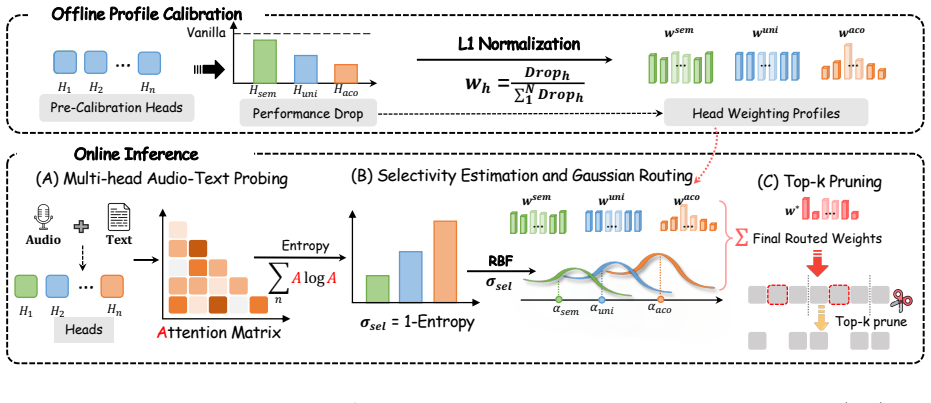

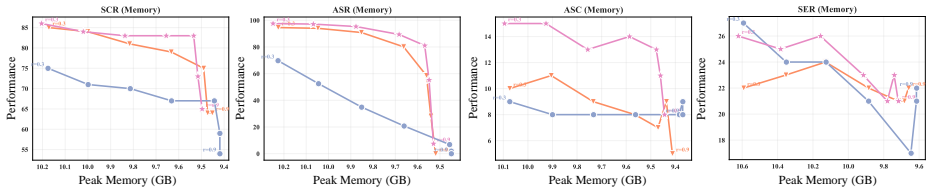

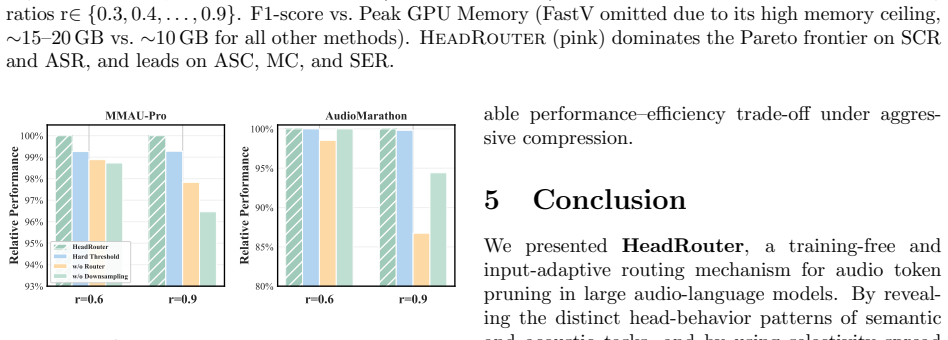

The authors show that attention heads in large audio language models exhibit distinct behaviors across audio domains, with only a sparse subset actively responding to tasks and showing completely different performance on semantic and acoustic subtasks. They introduce HeadRouter as a head-importance-aware, training-free token pruning method that perceives varying head importance for different audio tasks to maximize retention of crucial tokens. On AudioMarathon and MMAU-Pro benchmarks, the method achieves state-of-the-art compression, exceeding the baseline model even when retaining 70 percent of audio tokens and reaching 101.8 percent and 103.0 percent of the vanilla average on Qwen2.5-Omni-

What carries the argument

HeadRouter, a head-importance-aware token pruning method that dynamically routes token retention by weighting each attention head's contribution according to its observed responsiveness to the current audio task.

If this is right

- Token pruning can exceed the original model's accuracy by preserving task-critical tokens identified through head-specific scores.

- The same training-free method applies directly to different large audio language models.

- Performance remains at or above baseline levels when only 70 percent of audio tokens are retained.

- Uniform averaging of head scores underperforms because heads specialize differently on semantic and acoustic content.

Where Pith is reading between the lines

- The same head-routing logic could reduce compute in video or multimodal models where attention heads show comparable specialization.

- Real-time audio systems might process longer contexts on edge hardware by cutting memory and compute through selective retention.

- If head responsiveness patterns prove stable across languages or recording conditions, the router could be precomputed for even lower overhead.

Load-bearing premise

Attention heads exhibit distinct behaviors across diverse audio domains, with only a sparse subset actively responding differently to semantic and acoustic tasks, so that dynamic per-head weighting can reliably identify and retain crucial tokens without any training.

What would settle it

Run the same benchmarks with audio sequences pruned to 70 percent tokens using uniform head averaging versus HeadRouter; if the latter no longer exceeds the full model's accuracy, the benefit of task-adaptive head routing does not hold.

Figures

read the original abstract

Recent large audio language models (LALMs) demonstrate remarkable capabilities in processing extended multi-modal sequences, yet incur high inference costs. Token compression is an effective method that directly reduces redundant tokens in the sequence. Existing compression methods usually assume that all attention heads in LALMs contribute equally to various audio tasks and calculate token importance by averaging scores across all heads. However, our analysis demonstrates that attention heads exhibit distinct behaviors across diverse audio domains. We further reveal that only a sparse subset of attention heads actively responds to audio, with completely different performance when handling semantic and acoustic tasks. In light of this observation, we propose HeadRouter, a head-importance-aware token pruning method that perceives the varying importance of attention heads in different audio tasks to maximize the retention of crucial tokens. HeadRouter is training-free and can be applied to various LALMs. Extensive experiments on the AudioMarathon and MMAU-Pro benchmarks demonstrate that HeadRouter achieves state-of-the-art compression performance, exceeding the baseline model even when retaining 70% of the audio tokens and achieving 101.8% and 103.0% of the vanilla average on Qwen2.5-Omni-3B and Qwen2.5-Omni-7B, respectively.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces HeadRouter, a training-free token pruning method for large audio language models that dynamically routes based on per-head importance to adapt to semantic versus acoustic tasks. It claims that attention heads exhibit distinct behaviors across audio domains with only a sparse subset actively responding differently, enabling better retention of crucial tokens than uniform averaging across heads. On AudioMarathon and MMAU-Pro benchmarks, HeadRouter is reported to achieve state-of-the-art compression, exceeding the unpruned vanilla baseline even at 70% token retention and reaching 101.8% and 103.0% of vanilla average performance on Qwen2.5-Omni-3B and 7B models respectively.

Significance. If the head-distinction premise and training-free routing hold with generalizability, the work could meaningfully lower inference costs for long-context audio-language models while preserving or improving task performance, addressing a practical bottleneck in deploying LALMs. The reported outperformance of the full model at substantial compression rates would be a notable result if substantiated with reproducible details.

major comments (2)

- [Abstract] Abstract: The central performance claims (exceeding vanilla at 70% retention with 101.8% and 103.0% relative scores) are load-bearing for the contribution, yet the abstract supplies no implementation details on head-weight derivation, no error bars, no statistical significance tests, and no ablation on the routing mechanism, so the robustness of the training-free head-importance detection cannot be evaluated.

- [Method] The method relies on the assumption that attention heads show distinct task-specific behaviors with only a sparse subset responding differently to semantic and acoustic tasks; without quantitative evidence (e.g., activation statistics, entropy measures, or visualizations) or ablations demonstrating that dynamic per-head weighting reliably outperforms uniform averaging, the premise remains unverified and the gains could be benchmark-specific.

minor comments (2)

- [Method] Clarify the exact computation of head weights (e.g., attention entropy, activation norms, or input-dependent routing) with a precise algorithm or equation in the main text to enable reproducibility.

- [Abstract] Define 'vanilla average' explicitly when first used and ensure all benchmark splits and model variants are described consistently between abstract and experiments.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our manuscript. We address each major comment in detail below, clarifying the existing content of the paper and indicating revisions where they strengthen the presentation without misrepresenting our results.

read point-by-point responses

-

Referee: [Abstract] Abstract: The central performance claims (exceeding vanilla at 70% retention with 101.8% and 103.0% relative scores) are load-bearing for the contribution, yet the abstract supplies no implementation details on head-weight derivation, no error bars, no statistical significance tests, and no ablation on the routing mechanism, so the robustness of the training-free head-importance detection cannot be evaluated.

Authors: We acknowledge that abstracts are necessarily concise. The head-weight derivation is fully specified in Section 3.1 as a training-free computation of per-head importance from attention activation patterns differentiated by semantic versus acoustic task signals. Error bars (from three random seeds), statistical significance (paired t-tests), and routing ablations appear in Sections 4.2–4.4 and the appendix. To improve accessibility, we will revise the abstract to add one sentence summarizing the head-weight routing procedure and explicitly note that detailed robustness analyses are provided in the main text and supplementary material. revision: partial

-

Referee: [Method] The method relies on the assumption that attention heads show distinct task-specific behaviors with only a sparse subset responding differently to semantic and acoustic tasks; without quantitative evidence (e.g., activation statistics, entropy measures, or visualizations) or ablations demonstrating that dynamic per-head weighting reliably outperforms uniform averaging, the premise remains unverified and the gains could be benchmark-specific.

Authors: Section 3.2 of the manuscript already supplies the requested quantitative evidence: per-head activation statistics across task categories, entropy of head-importance distributions demonstrating sparsity, and visualizations of head-specific token retention maps. Section 4.3 further contains direct ablations of dynamic per-head weighting versus uniform averaging, showing consistent gains on both AudioMarathon and MMAU-Pro. These results are not benchmark-specific; the same head-distinction pattern holds across model scales (3B and 7B). We therefore maintain that the premise is supported by the existing analysis, though we remain open to expanding the set of metrics in a revision if the referee identifies particular gaps. revision: no

Circularity Check

No significant circularity; derivation is self-contained

full rationale

The paper's core chain consists of an empirical observation (distinct head behaviors across audio domains), a training-free dynamic weighting method derived from that observation, and direct empirical validation on external benchmarks (AudioMarathon, MMAU-Pro) showing retention of performance at 70% token pruning. No equations or definitions reduce the claimed performance gains to fitted parameters, self-referential inputs, or prior self-citations by construction. The method is explicitly training-free and benchmark-tested, satisfying the criteria for an independent derivation.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption Attention heads exhibit distinct behaviors across diverse audio domains

- domain assumption Only a sparse subset of attention heads actively responds to audio, with completely different performance on semantic versus acoustic tasks

Reference graph

Works this paper leans on

-

[1]

Phi-4-Mini Technical Report: Compact yet Powerful Multimodal Language Models via Mixture-of-LoRAs

Abdelrahman Abouelenin, Atabak Ashfaq, Adam Atkinson, Hany Awadalla, Nguyen Bach, Jianmin Bao, Alon Benhaim, Martin Cai, Vishrav Chaudhary, Congcong Chen, Dong Chen, Dongdong Chen, Junkun Chen, Weizhu Chen, Yen-Chun Chen, Yi-ling Chen, Qi Dai, Xiyang Dai, Ruchao Fan, Mei Gao, Min Gao, and Amit Garg. Phi-4-mini technical report: Compact yet powerful multim...

work page internal anchor Pith review arXiv 2025

-

[2]

Saurabhchand Bhati, Samuel Thomas, Hilde Kuehne, Rogerio Feris, and James Glass. 10 Towards audio token compression in large audio language models.arXiv preprint arXiv:2511.20973, 2025

-

[3]

Junjie Chen, Xuyang Liu, Zichen Wen, Yiyu Wang, Siteng Huang, and Honggang Chen. Variation-aware vision token dropping for faster large vision-language models.arXiv preprint arXiv:2509.01552, 2025

-

[4]

An image is worth 1/2 tokens after layer 2: Plug-and-play inference acceleration for large vision-language models

Liang Chen, Haozhe Zhao, Tianyu Liu, Shuai Bai, Junyang Lin, Chang Zhou, and Baobao Chang. An image is worth 1/2 tokens after layer 2: Plug-and-play inference acceleration for large vision-language models. InEuropean Conference on Computer Vision, pages 19–35. Springer, 2024

2024

-

[5]

Voxdialogue: Can spoken dialogue systems un- derstand information beyond words? InThe Thirteenth International Conference on Learn- ing Representations, 2025

Xize Cheng, Ruofan Hu, Xiaoda Yang, Jingyu Lu, Dongjie Fu, Zehan Wang, Shengpeng Ji, Rongjie Huang, Boyang Zhang, Tao Jin, et al. Voxdialogue: Can spoken dialogue systems un- derstand information beyond words? InThe Thirteenth International Conference on Learn- ing Representations, 2025

2025

-

[6]

Recent advances in speech language models: A survey

Wenqian Cui, Dianzhi Yu, Xiaoqi Jiao, Ziqiao Meng, Guangyan Zhang, Qichao Wang, Steven Y Guo, and Irwin King. Recent advances in speech language models: A survey. InProceed- ings of the 63rd Annual Meeting of the Associ- ation for Computational Linguistics (Volume 1: Long Papers), pages 13943–13970, 2025

2025

-

[7]

Yue Ding, Yiyan Ji, Jungang Li, Xuyang Liu, Xinlong Chen, Junfei Wu, Bozhou Li, Bohan Zeng, Yang Shi, Yushuo Guan, et al. Omnisift: Modality-asymmetric token compression for effi- cient omni-modal large language models.arXiv preprint arXiv:2602.04804, 2026

work page internal anchor Pith review arXiv 2026

-

[8]

Sreyan Ghosh, Zhifeng Kong, Sonal Kumar, S Sakshi, Jaehyeon Kim, Wei Ping, Rafael Valle, Dinesh Manocha, and Bryan Catanzaro. Audio flamingo 2: An audio-language model with long- audio understanding and expert reasoning abili- ties.arXiv preprint arXiv:2503.03983, 2025

-

[9]

Audio flamingo 3: Advancing audio intelligence with fully open large audio language models,

Arushi Goel, Sreyan Ghosh, Jaehyeon Kim, Sonal Kumar, Zhifeng Kong, Sang-gil Lee, Chao- Han Huck Yang, Ramani Duraiswami, Dinesh Manocha, Rafael Valle, et al. Audio flamingo 3: Advancing audio intelligence with fully open large audio language models.arXiv preprint arXiv:2507.08128, 2025

-

[10]

Filter, correlate, compress: Training-free token reduc- tion for mllm acceleration

Yuhang Han, Xuyang Liu, Zihan Zhang, Pengxi- ang Ding, Junjie Chen, Honggang Chen, Donglin Wang, Qingsen Yan, and Siteng Huang. Filter, correlate, compress: Training-free token reduc- tion for mllm acceleration. InProceedings of the AAAI Conference on Artificial Intelligence, vol- ume 40, pages 4601–4609, 2026

2026

-

[11]

Peize He, Zichen Wen, Yubo Wang, Yux- uan Wang, Xiaoqian Liu, Jiajie Huang, Ze- hui Lei, Zhuangcheng Gu, Xiangqi Jin, Jiabing Yang, et al. Audiomarathon: A comprehensive benchmark for long-context audio understand- ing and efficiency in audio llms.arXiv preprint arXiv:2510.07293, 2025

-

[12]

Sonal Kumar, ˇSimon Sedl´ aˇ cek, Vaibhavi Lokegaonkar, Fernando L´ opez, Wenyi Yu, Nishit Anand, Hyeonggon Ryu, Lichang Chen, Maxim Pliˇ cka, Miroslav Hlav´ aˇ cek, et al. Mmau-pro: A challenging and comprehensive benchmark for holistic evaluation of audio general intelligence. arXiv preprint arXiv:2508.13992, 2025

-

[13]

Xinying Lin, Xuyang Liu, Yiyu Wang, Teng Ma, and Wenqi Ren. V-cast: Video curvature- aware spatio-temporal pruning for efficient video large language models.arXiv preprint arXiv:2603.27650, 2026

-

[14]

Xuyang Liu, Xiyan Gui, Yuchao Zhang, and Lin- feng Zhang. Mixing importance with diversity: Joint optimization for kv cache compression in large vision-language models.arXiv preprint arXiv:2510.20707, 2025

-

[15]

Video compression commander: Plug-and-play inference acceleration for video large language models

Xuyang Liu, Yiyu Wang, Junpeng Ma, and Lin- feng Zhang. Video compression commander: Plug-and-play inference acceleration for video large language models. InProceedings of the 2025 Conference on Empirical Methods in Natu- ral Language Processing, pages 1910–1924, 2025

2025

-

[16]

Xuyang Liu, Zichen Wen, Shaobo Wang, Jun- jie Chen, Zhishan Tao, Yubo Wang, Xiangqi Jin, Chang Zou, Yiyu Wang, Chenfei Liao, et al. Shifting ai efficiency from model-centric to data-centric compression.arXiv preprint arXiv:2505.19147, 2025

-

[17]

Global compression commander: Plug-and-play infer- ence acceleration for high-resolution large vision- language models

Xuyang Liu, Ziming Wang, Junjie Chen, Yuhang Han, Yingyao Wang, Jiale Yuan, Jun Song, Siteng Huang, and Honggang Chen. Global compression commander: Plug-and-play infer- ence acceleration for high-resolution large vision- language models. InProceedings of the AAAI 11 Conference on Artificial Intelligence, volume 40, pages 7350–7358, 2026

2026

-

[18]

Librispeech: an asr corpus based on public domain audio books

Vassil Panayotov, Guoguo Chen, Daniel Povey, and Sanjeev Khudanpur. Librispeech: an asr corpus based on public domain audio books. In 2015 IEEE international conference on acous- tics, speech and signal processing (ICASSP), pages 5206–5210. IEEE, 2015

2015

-

[19]

Robust speech recognition via large- scale weak supervision

Alec Radford, Jong Wook Kim, Tao Xu, Greg Brockman, Christine McLeavey, and Ilya Sutskever. Robust speech recognition via large- scale weak supervision. InInternational Confer- ence on Machine Learning, pages 28492–28518. PMLR, 2023

2023

-

[20]

Sound event detec- tion in synthetic domestic environments

Romain Serizel, Nicolas Turpault, Ankit Shah, and Justin Salamon. Sound event detec- tion in synthetic domestic environments. In ICASSP 2020-2020 IEEE International Confer- ence on Acoustics, Speech and Signal Processing (ICASSP), pages 86–90. IEEE, 2020

2020

-

[21]

Changli Tang, Wenyi Yu, Guangzhi Sun, Xi- anzhao Chen, Tian Tan, Wei Li, Lu Lu, Zejun Ma, and Chao Zhang. Salmonn: Towards generic hearing abilities for large language models.arXiv preprint arXiv:2310.13289, 2023

-

[22]

Mmsu: A massive multi-task spoken language understanding and reasoning benchmark,

Dingdong Wang, Jincenzi Wu, Junan Li, Dongchao Yang, Xueyuan Chen, Tianhua Zhang, and Helen Meng. Mmsu: A mas- sive multi-task spoken language understand- ing and reasoning benchmark.arXiv preprint arXiv:2506.04779, 2025

-

[23]

Yiyu Wang, Xuyang Liu, Xiyan Gui, Xinying Lin, Boxue Yang, Chenfei Liao, Tailai Chen, and Linfeng Zhang. Accelerating streaming video large language models via hierarchical token compression.arXiv preprint arXiv:2512.00891, 2025

-

[24]

AudioKV: KV Cache Eviction in Efficient Large Audio Language Models

Yuxuan Wang, Peize He, Xiyan Gui, Xiaoqian Liu, Junhao He, Xuyang Liu, Zichen Wen, Xum- ing Hu, and Linfeng Zhang. Audiokv: Kv cache eviction in efficient large audio language models. arXiv preprint arXiv:2604.06694, 2026

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[25]

Zichen Wen, Yifeng Gao, Weijia Li, Conghui He, and Linfeng Zhang. Token pruning in mul- timodal large language models: Are we solv- ing the right problem? In Wanxiang Che, Joyce Nabende, Ekaterina Shutova, and Mo- hammad Taher Pilehvar, editors,Findings of the Association for Computational Linguistics: ACL 2025, pages 15537–15549, Vienna, Aus- tria, July ...

2025

-

[26]

Zichen Wen, Yifeng Gao, Shaobo Wang, Jun- yuan Zhang, Qintong Zhang, Weijia Li, Conghui He, and Linfeng Zhang. Stop looking for “im- portant tokens” in multimodal language mod- els: Duplication matters more. In Christos Christodoulopoulos, Tanmoy Chakraborty, Car- olyn Rose, and Violet Peng, editors,Proceedings of the 2025 Conference on Empirical Methods ...

-

[27]

emnlp-main.505/

URLhttps://aclanthology.org/2025. emnlp-main.505/

2025

-

[28]

Efficient Streaming Language Models with Attention Sinks

Guangxuan Xiao, Yuandong Tian, Beidi Chen, Song Han, and Mike Lewis. Efficient stream- ing language models with attention sinks.arXiv preprint arXiv:2309.17453, 2023

work page internal anchor Pith review arXiv 2023

-

[29]

Jin Xu, Zhifang Guo, Jinzheng He, Hangrui Hu, Ting He, Shuai Bai, Keqin Chen, Jialin Wang, Yang Fan, and Kai Dang. Qwen2.5-omni tech- nical report.ArXiv preprint, abs/2503.20215,

work page internal anchor Pith review arXiv

-

[30]

URLhttps://arxiv.org/abs/2503. 20215

-

[31]

Jin Xu, Zhifang Guo, Hangrui Hu, Yun- fei Chu, Xiong Wang, Jinzheng He, Yuxuan Wang, Xian Shi, Ting He, Xinfa Zhu, et al. Qwen3-omni technical report.arXiv preprint arXiv:2509.17765, 2025

work page internal anchor Pith review arXiv 2025

-

[32]

Sakura: On the multi-hop rea- soning of large audio-language models based on speech and audio information

Chih-Kai Yang, Neo Ho, Yen-Ting Piao, and Hung-yi Lee. Sakura: On the multi-hop rea- soning of large audio-language models based on speech and audio information. InProc. Inter- speech 2025, pages 1788–1792, 2025

2025

-

[33]

Visionzip: Longer is better but not nec- essary in vision language models

Senqiao Yang, Yukang Chen, Zhuotao Tian, Chengyao Wang, Jingyao Li, Bei Yu, and Jiaya Jia. Visionzip: Longer is better but not nec- essary in vision language models. InProceed- ings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 19792– 19802, 2025

2025

-

[34]

Speechgpt: Empowering large language models 12 with intrinsic cross-modal conversational abili- ties

Dong Zhang, Shimin Li, Xin Zhang, Jun Zhan, Pengyu Wang, Yaqian Zhou, and Xipeng Qiu. Speechgpt: Empowering large language models 12 with intrinsic cross-modal conversational abili- ties. InFindings of the Association for Computa- tional Linguistics: EMNLP 2023, pages 15757– 15773, 2023. 13

2023

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.