Recognition: unknown

Verification of Neural Networks (Lecture Notes)

Pith reviewed 2026-05-07 14:19 UTC · model grok-4.3

The pith

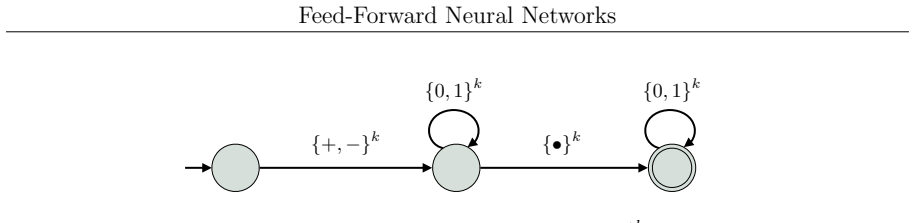

Lecture notes introduce theoretical verification of neural networks by covering feed-forward, recurrent, and transformer architectures along with specification languages and algorithms.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

These lecture notes survey feed-forward neural networks, recurrent neural networks, attention mechanisms, and transformers together with specification languages and algorithmic verification techniques to supply a theoretical introduction to neural-network verification.

What carries the argument

The combination of neural-network architectures with formal specification languages and algorithmic verification methods that together allow properties to be stated and checked.

If this is right

- The described techniques allow formal guarantees on network behavior instead of empirical sampling.

- Verification algorithms can be applied uniformly across feed-forward, recurrent, and attention-based models.

- Specification languages provide a common way to express requirements for different network types.

- The notes support development of automated tools that implement the listed verification procedures.

Where Pith is reading between the lines

- The framework could be tested by applying the presented algorithms to a small transformer and checking whether stated properties hold.

- Extending the notes to include hybrid systems that combine neural networks with traditional controllers would address a growing practical need.

- The material implies that similar verification patterns may apply to newer architectures not yet covered.

Load-bearing premise

The chosen architectures and techniques form a coherent, sufficient basis for an introductory theoretical treatment.

What would settle it

Discovery of major gaps in coverage of current transformer verification methods or clear inaccuracies in the described algorithms would show the notes fail to deliver a reliable foundation.

Figures

read the original abstract

These lecture notes provide an introduction to the verification of neural networks from a theoretical perspective. We discuss feed-forward neural networks, recurrent neural networks, attention mechanisms, and transformers, together with specification languages and algorithmic verification techniques.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript consists of lecture notes providing an introduction to the verification of neural networks from a theoretical perspective. It discusses feed-forward neural networks, recurrent neural networks, attention mechanisms, and transformers, together with specification languages and algorithmic verification techniques.

Significance. These lecture notes organize existing theoretical approaches to neural network verification into a cohesive pedagogical resource. Their value is primarily educational, offering an accessible overview of standard architectures and methods without advancing new theorems, empirical results, or completeness claims. This aligns with the expository scope and avoids unsubstantiated assertions.

Simulated Author's Rebuttal

We thank the referee for their positive review, accurate summary of the manuscript's scope, and recommendation to accept. The assessment correctly identifies the work as expository lecture notes without new theorems or empirical claims.

Circularity Check

No significant circularity; purely expository lecture notes

full rationale

The paper consists of lecture notes that introduce and survey existing verification techniques for feed-forward networks, RNNs, attention, and transformers, along with specification languages. No new derivations, predictions, fitted parameters, or uniqueness theorems are claimed. The content is a pedagogical overview of prior work without any load-bearing steps that reduce by construction to self-definitions, self-citations, or renamed inputs. All material is presented as established background rather than novel results requiring verification of circularity.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Akintunde, Andreea Kevorchian, Alessio Lomuscio, and Edoardo Pirovano

Michael E. Akintunde, Andreea Kevorchian, Alessio Lomuscio, and Edoardo Pirovano. Verification of rnn-based neural agent-environment systems. In The Thirty-Third AAAI Conference on Artificial Intelligence, AAAI 2019, Honolulu, Hawaii, USA, January 27 - February 1, 2019 , pages 6006--6013. AAAI Press, 2019

2019

-

[2]

Introduction to Neural Network Verification

Aws Albarghouthi. Introduction to Neural Network Verification . verifieddeeplearning.com, 2021. http://verifieddeeplearning.com

2021

- [3]

-

[4]

LIRA: Handling Constraints of Linear Arithmetics over the Integers and the Reals

Bernd Becker, Christian Dax, Jochen Eisinger, and Felix Klaedtke. LIRA: Handling Constraints of Linear Arithmetics over the Integers and the Reals . In Werner Damm and Holger Hermanns, editors, Computer Aided Verification, 19th International Conference, CAV 2007, Berlin, Germany, July 3-7, 2007, Proceedings , volume 4590 of Lecture Notes in Computer Scien...

2007

-

[5]

The solution of problems relative to probabilistic automata in the frame of the formal languages theory

Alberto Bertoni. The solution of problems relative to probabilistic automata in the frame of the formal languages theory. In Dirk Siefkes, editor, GI - 4. Jahrestagung, Berlin, 9.-12. Oktober 1974 , volume 26 of Lecture Notes in Computer Science , pages 107--112. Springer, 1974

1974

-

[6]

On the ability and limitations of transformers to recognize formal languages

Satwik Bhattamishra, Kabir Ahuja, and Navin Goyal. On the ability and limitations of transformers to recognize formal languages. In Bonnie Webber, Trevor Cohn, Yulan He, and Yang Liu, editors, Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing, EMNLP 2020, Online, November 16-20, 2020 , pages 7096--7116. Association for...

2020

-

[7]

On the Use of Weak Automata for Deciding Linear Arithmetic with Integer and Real Variables

Bernard Boigelot, S \' e bastien Jodogne, and Pierre Wolper. On the Use of Weak Automata for Deciding Linear Arithmetic with Integer and Real Variables . In Rajeev Gor \' e , Alexander Leitsch, and Tobias Nipkow, editors, Automated Reasoning, First International Joint Conference, IJCAR 2001, Siena, Italy, June 18-23, 2001, Proceedings , volume 2083 of Lec...

2001

-

[8]

A Survey of Model Learning Techniques for Recurrent Neural Networks

Benedikt Bollig, Martin Leucker, and Daniel Neider. A Survey of Model Learning Techniques for Recurrent Neural Networks . In Nils Jansen, Mari \" e lle Stoelinga, and Petra van den Bos, editors, A Journey from Process Algebra via Timed Automata to Model Learning - Essays Dedicated to Frits Vaandrager on the Occasion of His 60th Birthday , volume 13560 of ...

2022

-

[9]

Richard Büchi

J. Richard Büchi. Symposium on Decision Problems: On a Decision Method in Restricted Second Order Arithmetic . In Ernest Nagel, Patrick Suppes, and Alfred Tarski, editors, Logic, Methodology and Philosophy of Science , volume 44 of Studies in Logic and the Foundations of Mathematics , pages 1--11. Elsevier, 1966

1966

-

[10]

George E. Collins. Quantifier Elimination for Real Closed Fields by Cylindrical Algebraic Decomposition - Preliminary Report . SIGSAM Bull. , 8(3):80--90, 1974

1974

-

[11]

ss \' e goes elementarily automatic for structures of bounded degree . In Christoph D \

Antoine Durand - Gasselin and Peter Habermehl. Ehrenfeucht-Fra \" ss \' e goes elementarily automatic for structures of bounded degree . In Christoph D \" u rr and Thomas Wilke, editors, 29th International Symposium on Theoretical Aspects of Computer Science, STACS 2012, February 29th - March 3rd, 2012, Paris, France , volume 14 of LIPIcs , pages 242--253...

2012

-

[12]

Mathematical Logic

Heinz - Dieter Ebbinghaus, J \" o rg Flum, and Wolfgang Thomas. Mathematical Logic . Undergraduate Texts in Mathematics. Springer, 1994

1994

-

[13]

Upper Bounds on the Automata Size for Integer and Mixed Real and Integer Linear Arithmetic (Extended Abstract)

Jochen Eisinger. Upper Bounds on the Automata Size for Integer and Mixed Real and Integer Linear Arithmetic (Extended Abstract) . In Michael Kaminski and Simone Martini, editors, Computer Science Logic, 22nd International Workshop, CSL 2008, 17th Annual Conference of the EACSL, Bertinoro, Italy, September 16-19, 2008. Proceedings , volume 5213 of Lecture ...

2008

-

[14]

Jeffrey L. Elman. Finding structure in time. Cogn. Sci. , 14(2):179--211, 1990

1990

-

[15]

Undecidability results for probabilistic automata

Nathana \" e l Fijalkow. Undecidability results for probabilistic automata. ACM SIGLOG News , 4(4):10--17, 2017

2017

-

[16]

Probabilistic automata on finite words: Decidable and undecidable problems

Hugo Gimbert and Youssouf Oualhadj. Probabilistic automata on finite words: Decidable and undecidable problems. In Samson Abramsky, Cyril Gavoille, Claude Kirchner, Friedhelm Meyer auf der Heide, and Paul G. Spirakis, editors, Automata, Languages and Programming, 37th International Colloquium, ICALP 2010, Bordeaux, France, July 6-10, 2010, Proceedings, Pa...

2010

-

[17]

Über formal unentscheidbare Sätze der Principia Mathematica und verwandter Systeme I

Kurt Gödel. Über formal unentscheidbare Sätze der Principia Mathematica und verwandter Systeme I . Monatshefte für Mathematik und Physik , 38:173--198, 1931

1931

-

[18]

Approaching Arithmetic Theories with Finite-State Automata

Christoph Haase. Approaching Arithmetic Theories with Finite-State Automata . In Alberto Leporati, Carlos Mart \' n - Vide, Dana Shapira, and Claudio Zandron, editors, Language and Automata Theory and Applications - 14th International Conference, LATA 2020, Milan, Italy, March 4-6, 2020, Proceedings , volume 12038 of Lecture Notes in Computer Science , pa...

2020

-

[19]

Towards Lower Bounds on the Depth of ReLU Neural Networks

Christoph Hertrich, Amitabh Basu, Marco Di Summa, and Martin Skutella. Towards Lower Bounds on the Depth of ReLU Neural Networks . SIAM J. Discret. Math. , 37(2):997--1029, 2023

2023

-

[20]

Long short-term memory

Sepp Hochreiter and J \" u rgen Schmidhuber. Long short-term memory. Neural Comput. , 9(8):1735--1780, 1997

1997

-

[21]

Barrett, and Guy Katz

Omri Isac, Yoni Zohar, Clark W. Barrett, and Guy Katz. DNN Verification, Reachability, and the Exponential Function Problem . In Guillermo A. P \' e rez and Jean - Fran c ois Raskin, editors, 34th International Conference on Concurrency Theory, CONCUR 2023, September 18-23, 2023, Antwerp, Belgium , volume 279 of LIPIcs , pages 26:1--26:18. Schloss Dagstuh...

2023

-

[22]

Barrett, and Guy Katz

Yuval Jacoby, Clark W. Barrett, and Guy Katz. Verifying recurrent neural networks using invariant inference. In Dang Van Hung and Oleg Sokolsky, editors, Automated Technology for Verification and Analysis - 18th International Symposium, ATVA 2020, Hanoi, Vietnam, October 19-23, 2020, Proceedings , volume 12302 of Lecture Notes in Computer Science , pages ...

2020

-

[23]

The unreasonable effectiveness of recurrent neural networks

Andrej Karpathy. The unreasonable effectiveness of recurrent neural networks. http://karpathy.github.io/2015/05/21/rnn-effectiveness/, May 2015. Accessed: December 19, 2023

2015

-

[24]

Barrett, David L

Guy Katz, Clark W. Barrett, David L. Dill, Kyle Julian, and Mykel J. Kochenderfer. Reluplex: An efficient SMT solver for verifying deep neural networks. In Rupak Majumdar and Viktor Kuncak, editors, Computer Aided Verification - 29th International Conference, CAV 2017, Heidelberg, Germany, July 24-28, 2017, Proceedings, Part I , volume 10426 of Lecture No...

2017

-

[25]

Analysis of recurrent neural networks via property-directed verification of surrogate models

Igor Khmelnitsky, Daniel Neider, Rajarshi Roy, Xuan Xie, Beno \^ t Barbot, Benedikt Bollig, Alain Finkel, Serge Haddad, Martin Leucker, and Lina Ye. Analysis of recurrent neural networks via property-directed verification of surrogate models. Int. J. Softw. Tools Technol. Transf. , 25(3):341--354, 2023

2023

-

[26]

Bounds on the automata size for Presburger arithmetic

Felix Klaedtke. Bounds on the automata size for Presburger arithmetic . ACM Trans. Comput. Log. , 9(2):11:1--11:34, 2008

2008

-

[27]

Ehrenfeucht-Fra \" ss \' e goes automatic for real addition

Felix Klaedtke. Ehrenfeucht-Fra \" ss \' e goes automatic for real addition . Inf. Comput. , 208(11):1283--1295, 2010

2010

-

[28]

Decision Procedures - An Algorithmic Point of View, Second Edition

Daniel Kroening and Ofer Strichman. Decision Procedures - An Algorithmic Point of View, Second Edition . Texts in Theoretical Computer Science. An EATCS Series. Springer, 2016

2016

-

[29]

https://people.montefiore.uliege.be/boigelot/research/lash/

The LASH toolset . https://people.montefiore.uliege.be/boigelot/research/lash/

-

[30]

Martin Leucker. Formal Verification of Neural Networks? In Gustavo Carvalho and Volker Stolz, editors, Formal Methods: Foundations and Applications - 23rd Brazilian Symposium, SBMF 2020, Ouro Preto, Brazil, November 25-27, 2020, Proceedings , volume 12475 of Lecture Notes in Computer Science , pages 3--7. Springer, 2020

2020

-

[31]

Are transformers more robust? towards exact robustness verification for transformers

Brian Hsuan - Cheng Liao, Chih - Hong Cheng, Hasan Esen, and Alois Knoll. Are transformers more robust? towards exact robustness verification for transformers. In J \' e r \' e mie Guiochet, Stefano Tonetta, and Friedemann Bitsch, editors, Computer Safety, Reliability, and Security - 42nd International Conference, SAFECOMP 2023, Toulouse, France, Septembe...

2023

-

[32]

Natural-logarithm-rectified activation function in convolutional neural networks

Yang Liu, Jianpeng Zhang, Chao Gao, Jinghua Qu, and Lixin Ji. Natural-logarithm-rectified activation function in convolutional neural networks. CoRR , abs/1908.03682, 2019

-

[33]

Efficient minimization of deterministic weak -automata

Christof L \" o ding. Efficient minimization of deterministic weak -automata . Inf. Process. Lett. , 79(3):105--109, 2001

2001

-

[34]

William Merrill, Ashish Sabharwal, and Noah A. Smith. Saturated transformers are constant-depth threshold circuits. Trans. Assoc. Comput. Linguistics , 10:843--856, 2022

2022

-

[35]

Smith, and Eran Yahav

William Merrill, Gail Weiss, Yoav Goldberg, Roy Schwartz, Noah A. Smith, and Eran Yahav. A formal hierarchy of RNN architectures. In Dan Jurafsky, Joyce Chai, Natalie Schluter, and Joel R. Tetreault, editors, Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, ACL 2020, Online, July 5-10, 2020 , pages 443--459. Associa...

2020

-

[36]

Neural Nets and the Brain Model Problem

Marvin Lee Minsky. Neural Nets and the Brain Model Problem . PhD thesis, Princeton University, 1954

1954

-

[37]

Neural network diagrams with tikz

Izaak Neutelings. Neural network diagrams with tikz. https://tikz.net/neural_networks/, 2021

2021

-

[38]

Introduction to probabilistic automata

Azaria Paz. Introduction to probabilistic automata . Academic Press, 1971

1971

-

[39]

Attention is Turing -complete

Jorge P \' e rez, Pablo Barcel \' o , and Javier Marinkovic. Attention is Turing -complete. J. Mach. Learn. Res. , 22:75:1--75:35, 2021

2021

-

[40]

Michael O. Rabin. Probabilistic automata. Inf. Control. , 6(3):230--245, 1963

1963

-

[41]

Wonryong Ryou, Jiayu Chen, Mislav Balunovic, Gagandeep Singh, Andrei Marian Dan, and Martin T. Vechev. Scalable polyhedral verification of recurrent neural networks. In Alexandra Silva and K. Rustan M. Leino, editors, Computer Aided Verification - 33rd International Conference, CAV 2021, Virtual Event, July 20-23, 2021, Proceedings, Part I , volume 12759 ...

2021

-

[42]

Verifying and interpreting neural networks using finite automata

Marco S \" a lzer, Eric Alsmann, Florian Bruse, and Martin Lange. Verifying and interpreting neural networks using finite automata. Inf. Comput. , 308:105398, 2026

2026

-

[43]

Reachability is NP-Complete Even for the Simplest Neural Networks

Marco S \" a lzer and Martin Lange. Reachability is NP-Complete Even for the Simplest Neural Networks . In Paul C. Bell, Patrick Totzke, and Igor Potapov, editors, Reachability Problems - 15th International Conference, RP 2021, Liverpool, UK, October 25-27, 2021, Proceedings , volume 13035 of Lecture Notes in Computer Science , pages 149--164. Springer, 2021

2021

-

[44]

Reachability in simple neural networks

Marco S \" a lzer and Martin Lange. Reachability in simple neural networks. Fundam. Informaticae , 189(3-4):241--259, 2022

2022

-

[45]

Modeling and verification of randomized distributed real-time systems

Roberto Segala. Modeling and verification of randomized distributed real-time systems . PhD thesis, Massachusetts Institute of Technology, Cambridge, MA, USA , 1995

1995

-

[46]

Robustness verification for transformers

Zhouxing Shi, Huan Zhang, Kai - Wei Chang, Minlie Huang, and Cho - Jui Hsieh. Robustness verification for transformers. In 8th International Conference on Learning Representations, ICLR 2020, Addis Ababa, Ethiopia, April 26-30, 2020 . OpenReview.net, 2020

2020

-

[47]

Siegelmann and Eduardo D

Hava T. Siegelmann and Eduardo D. Sontag. On the computational power of neural nets. J. Comput. Syst. Sci. , 50(1):132--150, 1995

1995

-

[48]

What formal languages can transformers express? A survey

Lena Strobl, William Merrill, Gail Weiss, David Chiang, and Dana Angluin. What formal languages can transformers express? A survey. Trans. Assoc. Comput. Linguistics , 12:543--561, 2024

2024

-

[49]

Recurrent neural language models as probabilistic finite-state automata

Anej Svete and Ryan Cotterell. Recurrent neural language models as probabilistic finite-state automata. In Houda Bouamor, Juan Pino, and Kalika Bali, editors, Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, EMNLP 2023, Singapore, December 6-10, 2023 , pages 8069--8086. Association for Computational Linguistics, 2023

2023

-

[50]

A Decision Method for Elementary Algebra and Geometry

Alfred Tarski. A Decision Method for Elementary Algebra and Geometry . RAND Corporation Paper , 1948

1948

-

[51]

Languages, automata, and logic

Wolfgang Thomas. Languages, automata, and logic. In Grzegorz Rozenberg and Arto Salomaa, editors, Handbook of Formal Languages, Volume 3: Beyond Words , pages 389--455. Springer, 1997

1997

-

[52]

Prokhorov

Hoang - Dung Tran, Sung Woo Choi, Xiaodong Yang, Tomoya Yamaguchi, Bardh Hoxha, and Danil V. Prokhorov. Verification of recurrent neural networks with star reachability. In Proceedings of the 26th ACM International Conference on Hybrid Systems: Computation and Control, HSCC 2023, San Antonio, TX, USA, May 9-12, 2023 , pages 6:1--6:13. ACM , 2023

2023

-

[53]

A Review of Formal Methods applied to Machine Learning

Caterina Urban and Antoine Min \' e . A Review of Formal Methods applied to Machine Learning . CoRR , abs/2104.02466, 2021

-

[54]

Gomez, Lukasz Kaiser, and Illia Polosukhin

Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Lukasz Kaiser, and Illia Polosukhin. Attention is all you need. In Isabelle Guyon, Ulrike von Luxburg, Samy Bengio, Hanna M. Wallach, Rob Fergus, S. V. N. Vishwanathan, and Roman Garnett, editors, Advances in Neural Information Processing Systems 30: Annual Conference...

2017

-

[55]

Complexity of reachability problems in neural networks

Adrian Wurm. Complexity of reachability problems in neural networks. In Olivier Bournez, Enrico Formenti, and Igor Potapov, editors, Reachability Problems - 17th International Conference, RP 2023, Nice, France, October 11-13, 2023, Proceedings , volume 14235 of Lecture Notes in Computer Science , pages 15--27. Springer, 2023

2023

-

[56]

Masked hard-attention transformers recognize exactly the star-free languages

Andy Yang, David Chiang, and Dana Angluin. Masked hard-attention transformers recognize exactly the star-free languages. In Amir Globersons, Lester Mackey, Danielle Belgrave, Angela Fan, Ulrich Paquet, Jakub M. Tomczak, and Cheng Zhang, editors, Advances in Neural Information Processing Systems 38: Annual Conference on Neural Information Processing System...

2024

-

[57]

Formal Verification of Deep Neural Networks: Theory and Practice

Huan Zhang, Kaidi Xu, Shiqi Wang, and Cho-Jui Hsieh. Formal Verification of Deep Neural Networks: Theory and Practice . https://neural-network-verification.com, 2022

2022

-

[58]

Author, A. (Year). Title of the paper. Journal Name, Volume(Issue), Page numbers

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.