Auditing Marketing Budget Allocation with Hindsight Regret

Pith reviewed 2026-05-07 14:10 UTC · model grok-4.3

The pith

Hindsight regret lets organizations audit past marketing budget allocations against optimized feasible alternatives derived from historical response data.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

By estimating regime-specific spend-response functions from historical logs, computing feasible hindsight allocations via constrained optimization, and propagating uncertainty through Monte Carlo evaluation, the framework produces regret distributions, expected lift, and probability-of-improvement summaries that separate allocation inefficiency from uncertainty in the estimated response surfaces, as demonstrated on real marketing allocation logs.
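The three steps in this claim can be sketched end to end on a toy problem. The sketch below is illustrative only and is not the paper's implementation: the square-root response form, the two-channel setup, the Gaussian parameter draws (a stand-in for whatever bootstrap or posterior the authors use), and the names `response`, `benchmark_two_channel`, and `audit` are all assumptions introduced here.

```python
import math
import random

def response(a, x):
    """Hypothetical concave spend-response: sum_k a_k * sqrt(spend_k)."""
    return sum(a_k * math.sqrt(x_k) for a_k, x_k in zip(a, x))

def benchmark_two_channel(a, realized, delta, steps=200):
    """Grid-search the best budget-preserving shift between two channels.

    The guardrail is assumed to cap each channel's change at a fraction
    `delta` of its realized spend.
    """
    max_shift = delta * min(realized)
    best_x, best_val = tuple(realized), response(a, realized)
    for i in range(-steps, steps + 1):
        s = max_shift * i / steps
        x = (realized[0] + s, realized[1] - s)  # total budget is unchanged
        if min(x) < 0:
            continue
        val = response(a, x)
        if val > best_val:
            best_x, best_val = x, val
    return best_x

def audit(a_hat, a_se, realized, delta, n_draws=2000, seed=0):
    """Monte Carlo lift of the hindsight benchmark over the realized allocation."""
    rng = random.Random(seed)
    # Benchmark is optimized once, at the point estimate of the parameters.
    x_star = benchmark_two_channel(a_hat, realized, delta)
    lifts = []
    for _ in range(n_draws):
        # Assumed uncertainty model: independent Gaussian draws per channel.
        a = [rng.gauss(m, s) for m, s in zip(a_hat, a_se)]
        lifts.append(response(a, x_star) - response(a, realized))
    expected_lift = sum(lifts) / n_draws
    prob_improve = sum(l > 0 for l in lifts) / n_draws
    return x_star, expected_lift, prob_improve

x_star, lift, p = audit(a_hat=[3.0, 1.0], a_se=[0.3, 0.3],
                        realized=(50.0, 50.0), delta=0.4)
print(x_star, round(lift, 2), round(p, 3))
```

Fixing the benchmark at the point estimate and re-evaluating it under each draw is one design choice among several. It lets the lift go negative, so the probability-of-improvement summary is informative rather than trivially equal to one.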

What carries the argument

Hindsight regret: the opportunity cost of the realized allocation relative to a constraint-faithful benchmark under the same budget and stability guardrails. It carries the argument by enabling post-hoc comparison against the best feasible alternative and uncertainty-aware optimization.
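One way to write the definition down, in notation assumed here rather than taken from the paper: let $x^{\mathrm{real}}$ be the realized allocation over $K$ channels with total budget $B$, let $f_\theta$ be the estimated spend-response surface with parameters $\theta$ (point estimate $\hat\theta$), and let $\delta$ stand in for the stability guardrail, modeled below as a cap on each channel's relative change. That encoding is only one possibility.

```latex
\begin{align*}
\mathcal{C}(B,\delta) &= \Bigl\{ x \in \mathbb{R}_{\ge 0}^{K} : \sum_{k=1}^{K} x_k = B,\
  |x_k - x_k^{\mathrm{real}}| \le \delta\, x_k^{\mathrm{real}} \ \text{for all } k \Bigr\}, \\
x^{\star} &= \arg\max_{x \in \mathcal{C}(B,\delta)} f_{\hat\theta}(x), \\
R(\theta) &= f_{\theta}(x^{\star}) - f_{\theta}(x^{\mathrm{real}}), \\
\text{expected lift} &= \mathbb{E}_{\theta}\bigl[R(\theta)\bigr], \qquad
\text{probability of improvement} = \Pr_{\theta}\bigl[R(\theta) > 0\bigr].
\end{align*}
```

Because $x^{\mathrm{real}} \in \mathcal{C}(B,\delta)$ whenever the realized allocation spends exactly $B$, the regret at the point estimate satisfies $R(\hat\theta) \ge 0$; under other parameter draws it can be negative, which is what gives the regret distribution its spread.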

Load-bearing premise

The regime-specific spend-response functions estimated from historical logs are sufficiently accurate and stable to support reliable constrained optimization and uncertainty propagation.

What would settle it

Running the optimized allocations in a subsequent real period and finding that actual outcomes fall outside the predicted regret distributions or show no lift beyond the uncertainty bands would falsify the claim that the framework reliably identifies measurable gains.

Original abstract

Organizations routinely make strategic budget allocations under operational constraints, but often lack a principled way to assess whether realized allocations were close to the best feasible choices in hindsight. We present a retrospective auditing framework based on hindsight regret, defined as the opportunity cost of the realized allocation relative to a constraint-faithful benchmark under the same budget and stability guardrails. The framework estimates regime-specific spend-response functions from historical logs, computes feasible hindsight allocations via constrained optimization, and propagates uncertainty through Monte Carlo evaluation to produce regret distributions, expected lift, and probability-of-improvement summaries. This separates allocation inefficiency from uncertainty in the estimated response surfaces. Experiments on real marketing allocation logs show that the framework yields interpretable post-hoc diagnostics and reveals a practical trade-off between allocation flexibility and detectability: moderate feasible reallocations often capture most measurable gain, while larger shifts move into weak-support regions with higher uncertainty. The result is a practical method for auditing historical budget decisions when online experimentation is costly or infeasible.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes a hindsight-regret auditing framework for marketing budget allocations. It estimates regime-specific spend-response functions from historical logs, solves a constrained optimization problem to obtain a feasible benchmark allocation under the same budget and stability constraints, and propagates uncertainty via Monte Carlo simulation to produce regret distributions, expected lift, and probability-of-improvement summaries. Experiments on real marketing logs are used to illustrate interpretable post-hoc diagnostics and a practical trade-off: moderate feasible reallocations capture most measurable gain while larger shifts enter weak-support regions with higher uncertainty.

Significance. If the regime-specific response surfaces can be shown to be sufficiently accurate and stable, the framework supplies a concrete, non-experimental method for separating allocation inefficiency from estimation uncertainty in budget decisions where RCTs are costly or infeasible. The reported flexibility-detectability trade-off would be a useful empirical regularity for practitioners.

major comments (3)

- [Abstract / Experiments] The central claim that moderate reallocations capture most measurable gain while larger shifts enter high-uncertainty regions rests on the accuracy of the estimated regime-specific spend-response functions. The abstract (and available description) provides no hold-out validation, cross-validation metrics, or sensitivity checks against functional-form or regime-segmentation misspecification; without these, the Monte Carlo regret summaries and the reported trade-off cannot be treated as reliable.

- [Framework description] Hindsight regret is defined relative to an optimized benchmark whose parameters are fitted from the identical historical logs used to evaluate the realized allocation. This introduces a circularity that can bias the regret distributions upward; the manuscript must either derive an external identification strategy or quantify the finite-sample bias induced by this dependence.

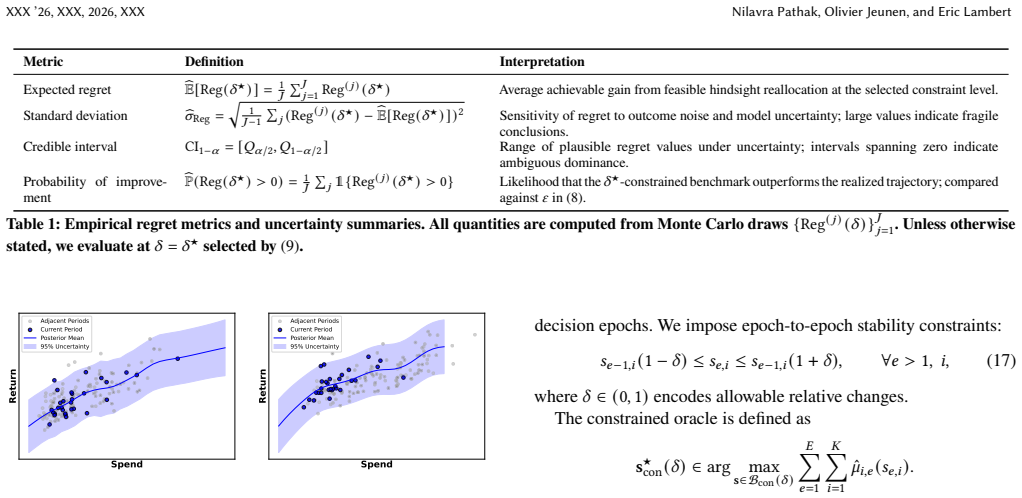

- [Method] The constrained optimization step and the Monte Carlo uncertainty propagation are described only at a high level. Explicit statements of the objective, the full set of constraints (budget, stability guardrails), the functional forms employed for the spend-response surfaces, and the precise Monte Carlo procedure are required before the regret distributions can be reproduced or stress-tested.
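To make concrete what an explicit statement of the optimization step might look like, here is a minimal sketch under assumptions that are not confirmed by the paper: separable concave responses of the form a_k * sqrt(x_k), guardrails encoded as per-channel bounds of ±20% around realized spend, and a simple greedy solver. The function `greedy_allocate` and all numbers are hypothetical.

```python
import math

def greedy_allocate(marginal, lower, upper, budget, step=0.01):
    """Spend `budget` across channels in increments of at most `step`.

    marginal[k](x) is channel k's marginal response at spend x and is
    assumed non-increasing (concave response); that assumption is what
    justifies the greedy rule. lower/upper encode the guardrails.
    """
    x = list(lower)
    remaining = budget - sum(x)
    if remaining < 0 or sum(upper) < budget:
        raise ValueError("constraint set is empty")
    while remaining > 1e-9:
        # Channels that can still absorb spend without breaking a guardrail.
        open_k = [k for k in range(len(x)) if upper[k] - x[k] > 1e-9]
        if not open_k:
            break
        k = max(open_k, key=lambda j: marginal[j](x[j]))
        inc = min(step, remaining, upper[k] - x[k])
        x[k] += inc
        remaining -= inc
    return x

# Hypothetical three-channel example.
a = [3.0, 1.0, 2.0]
realized = [50.0, 30.0, 20.0]
delta = 0.2
lower = [(1 - delta) * r for r in realized]
upper = [(1 + delta) * r for r in realized]
marginal = [lambda x, a_k=a_k: a_k / (2.0 * math.sqrt(x)) for a_k in a]

def total_response(x):
    return sum(a_k * math.sqrt(x_k) for a_k, x_k in zip(a, x))

x_opt = greedy_allocate(marginal, lower, upper, budget=sum(realized))
print([round(v, 2) for v in x_opt], round(total_response(x_opt), 3))
```

The greedy rule is only approximately optimal (to within the step size) and relies on concavity. With non-concave response forms such as S-curves, a general nonlinear solver would be needed instead.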

minor comments (2)

- [Notation] Clarify notation for the regime segmentation and the exact definition of the hindsight benchmark; a small table or diagram would help readers distinguish the realized allocation, the optimized benchmark, and the Monte Carlo draws.

- [Introduction] Add references to the marketing-response-function and regret-auditing literatures to situate the contribution.

Simulated Author's Rebuttal

We thank the referee for the constructive comments, which help clarify the presentation and strengthen the empirical support for the framework. We address each major point below and indicate the planned revisions.

-

Referee: [Abstract / Experiments] The central claim that moderate reallocations capture most measurable gain while larger shifts enter high-uncertainty regions rests on the accuracy of the estimated regime-specific spend-response functions. The abstract (and available description) provides no hold-out validation, cross-validation metrics, or sensitivity checks against functional-form or regime-segmentation misspecification; without these, the Monte Carlo regret summaries and the reported trade-off cannot be treated as reliable.

Authors: We agree that the reported flexibility-detectability trade-off requires stronger validation of the regime-specific response surfaces. The experiments section contains internal checks, but we will add explicit hold-out validation, cross-validation error metrics, and sensitivity analyses to functional forms and regime segmentation in the revision. We will also update the abstract to reference these results.

Revision: yes

-

Referee: [Framework description] Hindsight regret is defined relative to an optimized benchmark whose parameters are fitted from the identical historical logs used to evaluate the realized allocation. This introduces a circularity that can bias the regret distributions upward; the manuscript must either derive an external identification strategy or quantify the finite-sample bias induced by this dependence.

Authors: This is a valid concern about potential upward bias from in-sample optimization. The retrospective nature of the audit makes some dependence unavoidable, but we will add a dedicated discussion quantifying the finite-sample bias (via analytic bounds or sample-splitting experiments) and clarify that the Monte Carlo procedure already propagates estimation uncertainty.

Revision: partial

-

Referee: [Method] The constrained optimization step and the Monte Carlo uncertainty propagation are described only at a high level. Explicit statements of the objective, the full set of constraints (budget, stability guardrails), the functional forms employed for the spend-response surfaces, and the precise Monte Carlo procedure are required before the regret distributions can be reproduced or stress-tested.

Authors: We will expand the main text (and move key equations from the appendix) to state the optimization objective, the complete constraint set, the exact functional forms used for each regime, and the Monte Carlo steps with pseudocode. This will ensure full reproducibility of the regret distributions.

Revision: yes
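The sample-splitting experiment mentioned in the response to the framework-description comment can be illustrated with a toy simulation. This is a sketch under assumptions introduced here, not the authors' procedure: responses are linear, every channel has the same true slope (so the true regret of any reallocation is zero), and the helper names are hypothetical.

```python
import random
import statistics

def estimate_slopes(rng, true_slopes, n_obs, noise_sd):
    """Noisy per-channel slope estimates: the mean of n_obs noisy readings."""
    return [statistics.fmean(rng.gauss(a, noise_sd) for _ in range(n_obs))
            for a in true_slopes]

def estimated_regret(select_on, evaluate_on, shift):
    """Move `shift` units of budget from the channel that looks worst to the
    one that looks best under `select_on`; value the move under `evaluate_on`."""
    idx = range(len(select_on))
    best = max(idx, key=select_on.__getitem__)
    worst = min(idx, key=select_on.__getitem__)
    return shift * (evaluate_on[best] - evaluate_on[worst])

def simulate(n_trials=2000, n_channels=5, n_obs=20, noise_sd=1.0,
             shift=10.0, seed=0):
    rng = random.Random(seed)
    true_slopes = [1.0] * n_channels  # all channels equal: true regret is 0
    in_sample, split = [], []
    for _ in range(n_trials):
        half_a = estimate_slopes(rng, true_slopes, n_obs // 2, noise_sd)
        half_b = estimate_slopes(rng, true_slopes, n_obs // 2, noise_sd)
        pooled = [(a + b) / 2 for a, b in zip(half_a, half_b)]
        # In-sample: select and evaluate on the same (pooled) estimates.
        in_sample.append(estimated_regret(pooled, pooled, shift))
        # Split-sample: select on one half, evaluate on the other.
        split.append(estimated_regret(half_a, half_b, shift))
    return statistics.fmean(in_sample), statistics.fmean(split)

in_sample_mean, split_mean = simulate()
print(round(in_sample_mean, 2), round(split_mean, 2))
```

In this toy setting the in-sample estimate is positive on average even though no reallocation helps, while the split-sample estimate averages close to zero. Propagating parameter uncertainty through Monte Carlo draws does not by itself remove this selection effect, which is the substance of the referee's concern.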

Circularity Check

No significant circularity in framework or empirical claims

The paper presents a retrospective auditing framework whose core quantities (regret, expected lift) are explicitly defined relative to constrained optima computed from regime-specific response surfaces estimated on the same historical logs. This dependence is definitional to the method rather than a hidden reduction of an independent claim. The reported trade-off between allocation flexibility and detectability is an observed outcome of applying the framework to real data, not a first-principles derivation or prediction that collapses to the fitted inputs by construction. No equations, uniqueness theorems, or self-citations are invoked in a load-bearing way that would force the central empirical diagnostics. The analysis therefore remains self-contained as a practical diagnostic tool without circularity.

Axiom & Free-Parameter Ledger

free parameters (1)

- regime-specific spend-response function parameters

axioms (2)

- domain assumption: Historical logs contain sufficient variation to identify regime-specific response functions

- domain assumption: The constraint set (budget, stability guardrails) is known and correctly encoded for the optimization step

