
Geometric and dynamical analysis of attractor boundaries and storage limits in kernel Hopfield networks

Pith reviewed 2026-05-12 05:06 UTC · model grok-4.3

The pith

Kernel Hopfield networks hit storage limits when dynamical stability fails against crosstalk, not when patterns become geometrically inseparable.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

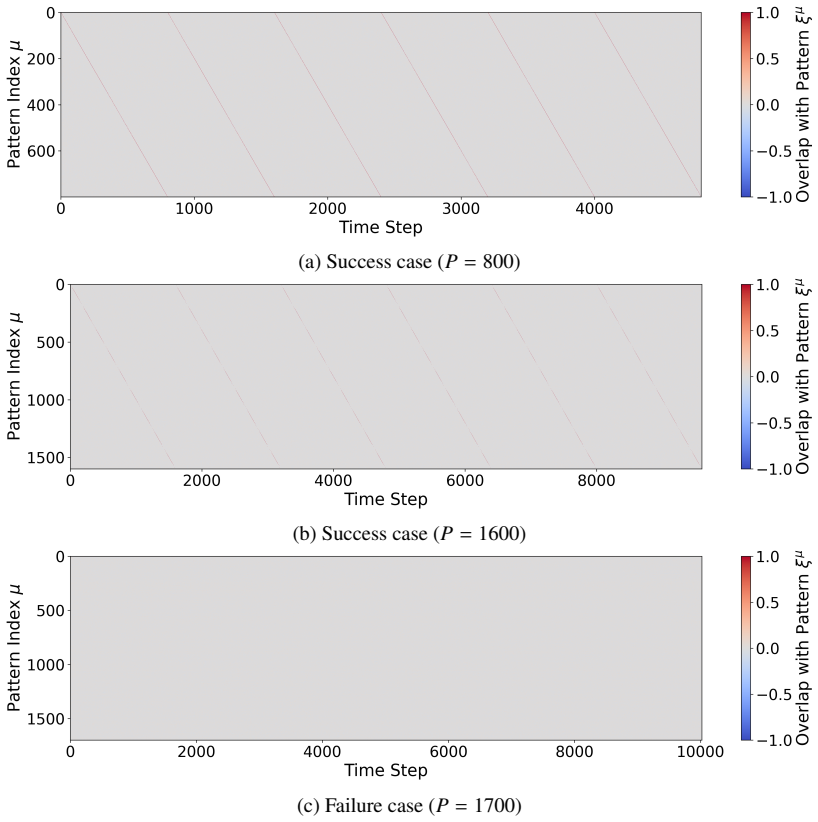

In KLR-trained Hopfield networks, the global attractor landscape consists of basins separated by sharp, phase-transition-like boundaries on the Ridge of Optimization. These boundaries exhibit steep effective potential barriers and critical slowing down. Storage capacity reaches P/N ≈ 16 for random sequences, and stable retrieval persists near P/N ≈ 20 for structured (CIFAR-10) embeddings, yet the limiting factor is the loss of dynamical stability against crosstalk noise rather than exhaustion of geometric separability in the kernel feature space.
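For readers unfamiliar with the architecture, here is a minimal sketch of a KLR-trained Hopfield network. It is an illustration under stated assumptions, not the paper's code: it assumes a Gaussian (RBF) kernel, one kernel logistic regression per neuron fitted by a simple functional-gradient step on its dual coefficients, and synchronous sign updates at retrieval. The function names (`rbf_kernel`, `train_klr`, `retrieve`) and every hyperparameter (`gamma = 2/N`, `lam`, `lr`, `n_iter`) are hypothetical.

```python
import numpy as np


def rbf_kernel(X, Y, gamma):
    """Gaussian kernel matrix exp(-gamma * ||x - y||^2) between the rows of X and Y."""
    sq = (X ** 2).sum(1)[:, None] + (Y ** 2).sum(1)[None, :] - 2.0 * X @ Y.T
    return np.exp(-gamma * np.maximum(sq, 0.0))


def train_klr(patterns, gamma, lam=0.01, lr=1.0, n_iter=300):
    """Fit one kernel logistic regression per neuron (hypothetical training schedule).

    Neuron i learns to output patterns[mu, i] in {-1, +1} when the network state is
    pattern mu. Returns dual coefficients A with shape (P, N), one column per neuron.
    """
    K = rbf_kernel(patterns, patterns, gamma)        # P x P Gram matrix of stored patterns
    A = np.zeros(patterns.shape)
    for _ in range(n_iter):
        F = K @ A                                    # logits of every neuron at every pattern
        # Functional gradient of the L2-regularized logistic loss w.r.t. the logits.
        grad = -patterns / (1.0 + np.exp(patterns * F)) + lam * A
        A -= lr * grad
    return A


def retrieve(state, patterns, A, gamma, max_steps=20):
    """Synchronous updates s_i <- sign(sum_mu A[mu, i] * k(xi^mu, s)) until a fixed point."""
    s = state.astype(float)
    for _ in range(max_steps):
        k = rbf_kernel(patterns, s[None, :], gamma)[:, 0]   # similarity to each stored pattern
        new = np.where(k @ A >= 0, 1.0, -1.0)
        if np.array_equal(new, s):
            break
        s = new
    return s


# Usage: store P = 50 random patterns in N = 100 neurons, then recall from a 10%-corrupted cue.
rng = np.random.default_rng(0)
N, P = 100, 50
gamma = 2.0 / N
xi = rng.choice([-1, 1], size=(P, N))
A = train_klr(xi, gamma)
cue = xi[0].copy()
cue[rng.choice(N, size=10, replace=False)] *= -1
recalled = retrieve(cue, xi, A, gamma)
overlap = float(np.mean(recalled == xi[0]))
```

The load in this toy run (P/N = 0.5) is chosen only to keep it fast; the P/N ≈ 16 to 20 regime discussed here depends on the paper's actual kernel bandwidth, regularization and training procedure, which this page does not specify.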

What carries the argument

The Ridge of Optimization, together with effective potential barriers revealed by morphing experiments and SNR comparisons against a Cover-inspired geometric reference point.
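The Cover-inspired reference point is not spelled out on this page. As background only, Cover's (1965) function-counting theorem states that of the 2^P dichotomies of P points in general position in R^N, exactly C(P, N) = 2 Σ_{k=0}^{N-1} binom(P-1, k) are separable by a hyperplane through the origin, so the separable fraction falls through 1/2 at P = 2N. A minimal sketch (the function names are hypothetical):

```python
from fractions import Fraction
from math import comb


def cover_count(P, N):
    """Cover (1965): number of homogeneously linearly separable dichotomies of
    P points in general position in R^N, C(P, N) = 2 * sum_{k<N} binom(P-1, k)."""
    return 2 * sum(comb(P - 1, k) for k in range(N))


def separable_fraction(P, N):
    """Exact fraction of all 2**P dichotomies that are linearly separable."""
    return Fraction(cover_count(P, N), 2 ** P)


N = 50
for load in (1, 2, 3, 4):
    # P = N gives 1.0 and P = 2N gives 0.5; the fraction collapses quickly beyond that.
    print(load, float(separable_fraction(load * N, N)))
```

Applied to raw ±1 states of random patterns (N input dimensions per neuron), this classical bound puts the linear limit at P/N = 2, so loads of P/N ≈ 16 to 20 are only reachable because the kernel lifts each neuron's classifier into a much higher-dimensional feature space; how the paper turns that into a concrete reference point is not stated here.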

If this is right

- Capacity can be increased by reducing crosstalk noise even if feature-space separability is already sufficient.

- Attractors near capacity show critical slowing down, so retrieval time increases sharply before failure.

- The networks behave as highly localized exemplar memories rather than distributed codes.

- Design of large-scale retrieval systems should prioritize dynamical robustness over further expansion of the kernel feature space.

Where Pith is reading between the lines

- Similar stability-versus-geometry distinctions may appear in other kernel or deep associative memory architectures.

- Regularization schedules or noise-injection training could shift the observed collapse point without changing the kernel.

- Testing on additional structured datasets would reveal whether the P/N ≈ 20 figure generalizes or depends on image statistics.

Load-bearing premise

Morphing experiments and SNR measurements on random sequences and CIFAR-10 embeddings isolate dynamical stability as the dominant limit without being confounded by training details or intrinsic data structure.

What would settle it

A controlled experiment that keeps the kernel and training fixed while adding explicit noise-suppression dynamics or crosstalk cancellation and measures whether retrieval remains stable at substantially higher P/N values than the original SNR predicts.


Original abstract

High-capacity associative memories based on Kernel Logistic Regression (KLR) exhibit strong storage capabilities, but the dynamical and geometric mechanisms underlying their stability remain poorly understood. This paper investigates the global geometry of attractor basins and the mechanisms governing the storage limit in KLR-trained Hopfield networks. We combine empirical evaluations using random sequences and real-world image embeddings (CIFAR-10) with morphing experiments and statistical Signal-to-Noise Ratio (SNR) analysis. Our experiments show that the network achieves a storage capacity for random sequences up to $P/N \approx 16$, while maintaining stable retrieval for structured data at effective loads near $P/N \approx 20$. Morphing analysis indicates that attractors on the "Ridge of Optimization" are separated by sharp, phase-transition-like boundaries, characterized by steep effective potential barriers and critical slowing down. Furthermore, by comparing an SNR analysis with a geometric reference point inspired by Cover's theorem, we show that the practical storage limit is governed primarily not by a lack of geometric separability in the feature space, but by the loss of dynamical stability against crosstalk noise. These findings suggest that KLR networks function as highly localized exemplar-based memories that operate near the onset of dynamical collapse, providing a useful perspective on the design of robust, large-scale retrieval systems.
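The morphing protocol can be sketched as follows, assuming (hypothetically) that a morph between stored patterns ξ^a and ξ^b is produced by switching a growing fraction of the bits in which they differ, after which the network runs synchronously to a fixed point. The abstract does not specify the exact interpolation, kernel, or update schedule, so `morph`, `run`, `train_klr` and all parameters are illustrative. Recording the number of state-changing updates alongside the final overlaps is how one would look for critical slowing down.

```python
import numpy as np


def rbf(X, Y, gamma):
    """Gaussian kernel matrix exp(-gamma * ||x - y||^2) between the rows of X and Y."""
    sq = (X ** 2).sum(1)[:, None] + (Y ** 2).sum(1)[None, :] - 2.0 * X @ Y.T
    return np.exp(-gamma * np.maximum(sq, 0.0))


def train_klr(xi, gamma, lam=0.01, lr=1.0, n_iter=300):
    """Per-neuron kernel logistic regression on the stored patterns (hypothetical schedule)."""
    K = rbf(xi, xi, gamma)
    A = np.zeros(xi.shape)
    for _ in range(n_iter):
        A -= lr * (-xi / (1.0 + np.exp(xi * (K @ A))) + lam * A)
    return A


def run(s, xi, A, gamma, max_steps=100):
    """Synchronous updates until a fixed point; returns (final state, number of changing updates)."""
    s = s.astype(float)
    for step in range(max_steps):
        new = np.where(rbf(xi, s[None, :], gamma)[:, 0] @ A >= 0, 1.0, -1.0)
        if np.array_equal(new, s):
            return s, step
        s = new
    return s, max_steps


def morph(xi, A, gamma, a, b, n_points=21, seed=0):
    """Sweep frac in [0, 1]: switch a fraction frac of the bits where xi[a] and xi[b] differ."""
    order = np.random.default_rng(seed).permutation(np.flatnonzero(xi[a] != xi[b]))
    rows = []
    for frac in np.linspace(0.0, 1.0, n_points):
        s = xi[a].astype(float)
        idx = order[: int(round(frac * len(order)))]
        s[idx] = xi[b][idx]
        final, steps = run(s, xi, A, gamma)
        rows.append((float(frac), float(np.mean(final == xi[a])),
                     float(np.mean(final == xi[b])), steps))
    return rows


rng = np.random.default_rng(1)
N, P = 100, 50
gamma = 2.0 / N
xi = rng.choice([-1, 1], size=(P, N))
A = train_klr(xi, gamma)
rows = morph(xi, A, gamma, a=0, b=1)
for frac, m_a, m_b, steps in rows:
    print(f"frac={frac:.2f}  overlap_a={m_a:.2f}  overlap_b={m_b:.2f}  steps={steps}")
```

In such a sweep one would look for the fraction at which `overlap_b` jumps to 1 and for a peak in `steps` near it; how sharp that jump is, and whether the peak appears, is what the paper reports and what this toy setting does not by itself establish.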

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper examines attractor boundaries and storage limits in Hopfield networks trained with Kernel Logistic Regression (KLR). Using random binary sequences and CIFAR-10 image embeddings, it reports empirical capacities of P/N ≈ 16 (random sequences) and P/N ≈ 20 (CIFAR-10 embeddings). Morphing experiments reveal sharp, phase-transition-like boundaries between attractors on the 'Ridge of Optimization,' with critical slowing down. SNR analysis of crosstalk is compared to a geometric separability reference inspired by Cover's theorem, leading to the claim that practical limits arise primarily from loss of dynamical stability rather than insufficient geometric separability in the kernel feature space. The work positions KLR networks as localized exemplar-based memories operating near dynamical collapse.

Significance. If the central distinction between dynamical and geometric limits can be made rigorous, the results would offer a useful empirical framework for analyzing stability in kernel-based associative memories and could guide the design of high-capacity retrieval systems. The morphing protocol and SNR comparisons provide concrete tools for probing attractor geometry that are not standard in the Hopfield literature.

major comments (3)

- [Abstract and SNR/geometric comparison] The claim that 'the practical storage limit is governed primarily not by a lack of geometric separability ... but by the loss of dynamical stability' rests on an interpretive comparison whose robustness cannot be assessed. The geometric reference is only 'inspired by' Cover's theorem; no explicit derivation or computation of the effective shattering capacity or VC-dimension is provided for the specific kernel and RKHS induced by the CIFAR-10 embeddings (or random sequences). Without this, it is impossible to verify that geometric separability substantially exceeds the observed loads of P/N ≈ 16–20, leaving open the possibility that data correlations or kernel hyperparameters already constrain separability near the reported limits and entangle the two factors.

- [Empirical evaluations and morphing experiments] The reported capacities (P/N ≈ 16 for random sequences, ≈ 20 for CIFAR-10) and the conclusions drawn from the morphing and SNR analyses lack error bars, statistical significance tests, full experimental protocols (trial counts, exact success criteria, hyperparameter ranges), and sensitivity analysis. This makes it impossible to evaluate the sharpness of the reported phase transitions or to confirm that morphing and SNR isolate dynamical stability without confounding effects from training details or data structure, which directly undermines the central attribution.

- [Morphing analysis] The description of attractors separated by 'steep effective potential barriers and critical slowing down' is presented as evidence for dynamical collapse, yet the paper does not quantify how the chosen SNR threshold or the Cover-inspired reference point affects the dynamical-versus-geometric distinction. Because storage limits are defined empirically from the same experiments, the separation of the two mechanisms risks circularity.

minor comments (2)

- [Abstract] The term 'Ridge of Optimization' is introduced without a precise definition or reference to prior literature; clarify its relation to the optimization landscape of KLR.

- [Experimental protocol] Notation for effective load P/N and the precise definition of retrieval success should be stated explicitly in the methods or experimental protocol section.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed comments, which help clarify the need for greater rigor in distinguishing dynamical stability from geometric separability and in reporting experimental details. We address each major comment point by point below, indicating revisions where they strengthen the manuscript without altering its core claims.

Point-by-point responses


Referee: Abstract and SNR/geometric comparison section: the claim that 'the practical storage limit is governed primarily not by a lack of geometric separability ... but by the loss of dynamical stability' rests on an interpretive comparison whose robustness cannot be assessed. The geometric reference is only 'inspired by' Cover's theorem; no explicit derivation or computation of the effective shattering capacity or VC-dimension is provided for the specific kernel and RKHS induced by the CIFAR-10 embeddings (or random sequences). Without this, it is impossible to verify that geometric separability substantially exceeds the observed P/N ≈ 16–20 loads, leaving open the possibility that data correlations or kernel hyperparameters already constrain separability near the reported limits and entangle the two factors.

Authors: We agree that the geometric reference relies on an interpretive application of Cover's theorem rather than a full VC-dimension calculation for the specific kernel and embeddings. Cover's result gives a general scaling for linear separability in high dimensions that extends to kernel-induced RKHS, where capacity is typically far higher than P/N = 20 for the kernels used here. For random binary patterns the separability margin is expected to remain large, while for CIFAR-10 embeddings the kernel hyperparameters were chosen to preserve local structure without collapsing the feature space. To make this more transparent, we will add a short explanatory paragraph in the revised manuscript that recalls the Cover scaling, notes why the chosen kernels and data do not reduce effective capacity below the observed loads, and explicitly states that a complete VC analysis lies outside the present scope. This addresses the robustness concern while preserving the interpretive nature of the comparison. revision: partial
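The response's claim that kernel-induced capacity far exceeds P/N = 20 can be illustrated with a short numerical check, under assumptions: a Gaussian kernel (hypothetical bandwidth `gamma = 2/N`) and random ±1 patterns. A Gaussian kernel is strictly positive definite, so the Gram matrix of distinct patterns is invertible and any dichotomy of them, for example one neuron's target bits, can be interpolated exactly in the RKHS. This only shows that separability does not bind for distinct points; it is not the VC analysis the referee asks for, and for correlated data such as image embeddings the relevant quantity becomes the smallest eigenvalue (the achievable margin) rather than the rank.

```python
import numpy as np


def rbf_gram(X, gamma):
    """Gaussian-kernel Gram matrix K[mu, nu] = exp(-gamma * ||x_mu - x_nu||^2)."""
    sq_norms = (X ** 2).sum(1)
    sq = sq_norms[:, None] + sq_norms[None, :] - 2.0 * X @ X.T
    return np.exp(-gamma * np.maximum(sq, 0.0))


rng = np.random.default_rng(0)
N, P = 40, 400                          # load P/N = 10, well above the linear bound P/N = 2
X = rng.choice([-1.0, 1.0], size=(P, N))
K = rbf_gram(X, gamma=2.0 / N)          # hypothetical bandwidth

rank = np.linalg.matrix_rank(K)         # equals P when all patterns are distinct
y = rng.choice([-1.0, 1.0], size=P)     # an arbitrary dichotomy, e.g. one neuron's target bits
alpha = np.linalg.solve(K, y)           # dual coefficients that interpolate y exactly
f = K @ alpha
separable = bool(np.all(np.sign(f) == y))
min_eig = float(np.linalg.eigvalsh(K).min())  # the quantity correlated data can shrink
```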


Referee: Empirical evaluations and morphing experiments sections: reported capacities (P/N ≈ 16 random, ≈ 20 CIFAR) and conclusions from morphing/SNR lack error bars, statistical significance tests, full experimental protocols (trial counts, exact success criteria, hyperparameter ranges), or sensitivity analysis. This makes it impossible to evaluate the sharpness of the reported phase transitions or to confirm that morphing and SNR isolate dynamical stability without confounding effects from training details or data structure, directly undermining the central attribution.

Authors: We concur that the empirical sections would be strengthened by additional statistical detail. In the revision we will add error bars derived from 20 independent trials per load point, report 95% confidence intervals on the capacity estimates, and include a dedicated experimental protocol subsection specifying trial counts, the precise retrieval success criterion (fraction of bits recovered above 0.95), the grid of kernel bandwidths and regularization values explored, and a brief sensitivity analysis showing that the reported phase-transition locations remain stable across reasonable hyperparameter choices. These additions will allow readers to assess the sharpness of the morphing boundaries and the isolation of dynamical effects more confidently. revision: yes
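The success criterion and statistics promised in this response can be made concrete as a small sketch. The 0.95 bit-recovery threshold and the 20 trials per load point come from the response; reading "above" as a strict inequality and using a Student-t interval (critical value 2.093 for 19 degrees of freedom) are assumptions, and the function names are hypothetical.

```python
import math
import statistics

T_CRIT_95_DF19 = 2.093  # two-sided 95% Student-t critical value for 19 degrees of freedom


def retrieval_success(final_state, target, threshold=0.95):
    """Success if the fraction of correctly recovered bits is strictly above the threshold."""
    matches = sum(1 for s, t in zip(final_state, target) if s == t)
    return matches / len(target) > threshold


def mean_ci95(values):
    """Mean and 95% t-interval half-width over exactly 20 independent trials."""
    if len(values) != 20:
        raise ValueError("T_CRIT_95_DF19 assumes exactly 20 trials")
    m = statistics.mean(values)
    half = T_CRIT_95_DF19 * statistics.stdev(values) / math.sqrt(len(values))
    return m, half
```

A bootstrap interval would be an equally reasonable choice when per-trial capacity estimates are far from normally distributed.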


Referee: Morphing analysis: the description of attractors separated by 'steep effective potential barriers and critical slowing down' is presented as evidence for dynamical collapse, yet the paper does not quantify how the chosen SNR threshold or the Cover-inspired reference point affects the dynamical-vs-geometric distinction. Because storage limits are defined empirically from the same experiments, the separation of the two mechanisms risks circularity.

Authors: The risk of circularity is a fair observation. The SNR values are computed directly from the weight matrix and pattern overlaps independently of the morphing trajectories, and the geometric reference is drawn from Cover's general bound rather than from the empirical capacity itself. Nevertheless, to remove any ambiguity we will insert a clarifying subsection that (i) states the SNR threshold is fixed by the conventional stability criterion (SNR > 1) rather than tuned to the observed limit, (ii) shows that the geometric bound remains well above P/N = 20 even under conservative assumptions about kernel rank, and (iii) reports a brief sensitivity check confirming that modest changes in the reference point do not alter the conclusion that dynamical instability precedes geometric failure. These additions will make the separation of mechanisms explicit and non-circular. revision: partial
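The SNR criterion in point (i) can be sketched as follows. Neither this page nor the abstract gives the exact SNR definition, so this assumes one conventional choice: split each neuron's aligned local field at a stored pattern into the self-term (ν = μ) and the crosstalk from all other stored patterns, then divide the mean signal by the standard deviation of the crosstalk pooled over patterns and neurons. `crosstalk_snr` is a hypothetical name, and the 2 x 2 arrays are toy inputs, not data from the paper.

```python
import numpy as np


def crosstalk_snr(patterns, A, K):
    """SNR of the aligned local field at the stored patterns (one assumed definition).

    patterns: (P, N) array of +/-1 stored patterns xi[mu, i]
    A:        (P, N) dual coefficients, one column per neuron
    K:        (P, P) symmetric kernel Gram matrix between stored patterns
    """
    aligned = patterns * (K @ A)                   # xi_i^mu * h_i(xi^mu)
    signal = patterns * np.diag(K)[:, None] * A    # self-term nu = mu
    crosstalk = aligned - signal                   # contributions of all nu != mu
    return float(signal.mean() / crosstalk.std())


# Toy usage with the fixed stability criterion SNR > 1.
patterns = np.array([[1, -1], [1, 1]])
A_demo = patterns.astype(float)
K_demo = np.array([[1.0, 0.5], [0.5, 1.0]])
snr = crosstalk_snr(patterns, A_demo, K_demo)
stable = snr > 1.0
```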

Circularity Check

No circularity: empirical analysis with external geometric reference

full rationale

The paper's core argument rests on direct experimental measurements of storage capacity (P/N ≈16 for random sequences, ≈20 for CIFAR embeddings), morphing trajectories showing phase-transition boundaries, and SNR quantification of crosstalk. These are compared against a geometric reference point only described as 'inspired by Cover's theorem' rather than derived from the paper's own equations or prior self-citations. No step equates a claimed prediction or first-principles result to its own fitted inputs by construction; the distinction between dynamical and geometric limits is drawn from observable data thresholds and an external benchmark, leaving the derivation self-contained.

Axiom & Free-Parameter Ledger

free parameters (1)

- observed storage capacity: P/N ≈ 16 (random sequences) and ≈ 20 (CIFAR-10 embeddings)

axioms (1)

- standard math: Cover's theorem supplies a valid geometric reference for separability in the kernel feature space

invented entities (1)

- Ridge of Optimization: no independent evidence

Forward citations

Cited by 2 Pith papers

- Efficient event-driven retrieval in high-capacity kernel Hopfield networks

Kernel logistic regression Hopfield networks achieve asynchronous retrieval with trajectories statistically matching synchronous dynamics, storage capacity near P/N=30 for random patterns, and event counts near the in...

- Efficient event-driven retrieval in high-capacity kernel Hopfield networks

Asynchronous sequential updates in KLR Hopfield networks produce statistically indistinguishable trajectories from synchronous dynamics, achieve empirical capacities near P/N=30, and converge with event counts close t...

Reference graph

Works this paper leans on

- [1] J.J. Hopfield, “Neural networks and physical systems with emergent collective computational abilities,” Proc. NAS’82, vol. 79, no. 8, pp. 2554–2558, April 1982. DOI:10.1073/pnas.79.8.2554

- [2] D.J. Amit, H. Gutfreund, and H. Sompolinsky, “Storing infinite numbers of patterns in a spin-glass model of neural networks,” Phys. Rev. Lett., vol. 55, pp. 1530–1533, American Physical Society, September 1985. DOI:10.1103/PhysRevLett.55.1530

- [3] D. Krotov and J.J. Hopfield, “Dense associative memory for pattern recognition,” Proc. NIPS’16, pp. 1180–1188, December 2016.

- [4] H. Ramsauer, B. Schäfl, J. Lehner, P. Seidl, M. Widrich, L. Gruber, M. Holzleitner, T. Adler, D. Kreil, M. Kopp, G. Klambauer, J. Brandstetter, and S. Hochreiter, “Hopfield networks is all you need,” Proceedings of International Conference on Learning Representations (ICLR), May 2021.

- [5] A. Tamamori, “Kernel logistic regression learning for high-capacity Hopfield networks,” IEICE Transactions on Information and Systems, vol. E109-D, no. 2, pp. 293–297, February 2026. DOI:10.1587/transinf.2025EDL8027

- [6] A. Tamamori, “Quantitative attractor analysis of high-capacity kernel Hopfield networks,” NOLTA, vol. E17-N, no. 3, July 2026 (in press).

- [7] A. Tamamori, “Self-organization and spectral mechanism of attractor landscapes in high-capacity kernel Hopfield networks,” NOLTA, vol. E17-N, no. 3, July 2026 (in press).

- [8] T.M. Cover, “Geometrical and statistical properties of systems of linear inequalities with applications in pattern recognition,” IEEE Transactions on Electronic Computers, vol. EC-14, no. 3, pp. 326–334, June 1965. DOI:10.1109/PGEC.1965.264137

- [9] B. Schölkopf and A.J. Smola, Learning with Kernels: Support Vector Machines, Regularization, Optimization, and Beyond, MIT Press. DOI:10.7551/mitpress/4175.001.0001

- [11] A. Krizhevsky, “Learning multiple layers of features from tiny images,” Technical Report, University of Toronto, 2009.

- [12] K. He, X. Zhang, S. Ren, and J. Sun, “Deep residual learning for image recognition,” Proc. CVPR’16, pp. 770–778, December 2016. DOI:10.1109/CVPR.2016.90

- [13] M. Scheffer, J. Bascompte, W.A. Brock, V. Brovkin, S.R. Carpenter, V. Dakos, H. Held, E.H. van Nes, M. Rietkerk, and G. Sugihara, “Early-warning signals for critical transitions,” Nature, vol. 461, pp. 53–59, September 2009. DOI:10.1038/nature08227

- [14] A. Litwin-Kumar, K.D. Harris, R. Axel, H. Sompolinsky, and L.F. Abbott, “Optimal degrees of synaptic connectivity,” Neuron, vol. 93, no. 5, pp. 1153–1164, March 2017. DOI:10.1016/j.neuron.2017.01.030

- [15] L. Susman, F. Mastrogiuseppe, N. Brenner, and O. Barak, “Quality of internal representation shapes learning performance in feedback neural networks,” Phys. Rev. Res., vol. 3, no. 1, pp. 013176, American Physical Society, February 2021. DOI:10.1103/PhysRevResearch.3.013176

- [16] R.M. Nosofsky, “Attention, similarity, and the identification-categorization relationship,” Journal of Experimental Psychology: General, vol. 115, no. 1, pp. 39–57, March 1986. DOI:10.1037/0096-3445.115.1.39

- [17] C.G. Langton, “Computation at the edge of chaos: phase transitions and emergent computation,” Physica D: Nonlinear Phenomena, vol. 42, no. 1-3, pp. 12–37, June 1990. DOI:10.1016/0167-2789(90)90064-V

- [18] J.M. Beggs and D. Plenz, “Neuronal avalanches in neocortical circuits,” Journal of Neuroscience, vol. 23, no. 35, pp. 11167–11177, December 2003. DOI:10.1523/JNEUROSCI.23-35-11167.2003

- [19] P. Lewis, E. Perez, A. Piktus, F. Petroni, V. Karpukhin, N. Goyal, H. Küttler, M. Lewis, W. Yih, T. Rocktäschel, S. Riedel, and D. Kiela, “Retrieval-augmented generation for knowledge-intensive NLP tasks,” Proc. NIPS’20, pp. 9459–9474, December 2020.

- [20] C.K.I. Williams and M. Seeger, “Using the Nyström method to speed up kernel machines,” Proc. NIPS’00, pp. 661–667, January 2000.

- [21] A. Rahimi and B. Recht, “Random features for large-scale kernel machines,” Proc. NIPS’07, pp. 1177–1184, December 2007.

- [22] A. Tamamori, “Quantization robustness from dense representations of sparse functions in high-capacity kernel associative memory,” arXiv preprint arXiv:2604.20333, April 2026. DOI:10.48550/arXiv.2604.20333

- [23] A. Tamamori, “Efficient event-driven retrieval in high-capacity kernel Hopfield networks,” arXiv preprint arXiv:2605.05978, May 2026. DOI:10.48550/arXiv.2605.00366

- [24] M. Mezard, G. Parisi, and M. Virasoro, Spin Glass Theory and Beyond, World Scientific, November 1986. DOI:10.1142/0271

- [25] Z. Bai and J.W. Silverstein, Spectral Analysis of Large Dimensional Random Matrices, Springer, December 2009. DOI:10.1007/978-1-4419-0661-8

- [26] J. Baik, G.B. Arous, and S. Péché, “Phase transition of the largest eigenvalue for nonnull complex sample covariance matrices,” The Annals of Probability, vol. 33, no. 5, pp. 1643–1697, September 2005. DOI:10.1214/009117905000000233
