Recognition: 2 theorem links

· Lean TheoremQuantization robustness from dense representations of sparse functions in high-capacity kernel associative memory

Pith reviewed 2026-05-11 00:53 UTC · model grok-4.3

The pith

Kernel associative memories stay accurate after low-precision quantization but break under pruning because they use dense bimodal weights to represent sparse functions.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central claim is that quantization robustness in these kernel associative memories originates from the implementation of sparse functions via dense bimodal parameterizations. Experiments demonstrate clear asymmetry: performance remains stable under reduced-precision weight storage but collapses when connections are pruned. The geometric interpretation based on spontaneous symmetry breaking and Walsh analysis is offered as the explanation for why the dense representation survives quantization yet is disrupted by pruning.

What carries the argument

The 'sparse function, dense representation' principle, in which a sparse input mapping is realized through a dense bimodal parameterization of the kernel weights.

If this is right

- Low-precision quantization becomes a viable compression strategy for kernel Hopfield networks without major retrieval loss.

- Pruning must be applied selectively or avoided because it directly interferes with the dense representation.

- Hardware implementations can prioritize reduced bit-width storage to gain efficiency while maintaining performance.

- The same asymmetry supplies a diagnostic for whether a network relies on the dense bimodal encoding of sparse mappings.

Where Pith is reading between the lines

- The principle may generalize to other high-capacity associative memory architectures that share similar kernel expansions.

- Testing the same compression asymmetry on different kernel choices or input distributions would provide an independent check.

- If the dense bimodal structure is the source of robustness, then regularization methods that encourage bimodality could further enhance quantization tolerance.

Load-bearing premise

The geometric interpretation using spontaneous symmetry breaking and Walsh analysis correctly accounts for why the networks resist quantization but not pruning.

What would settle it

A controlled experiment that applies pruning and quantization while preserving the dense bimodal weight structure yet observes symmetric sensitivity to both operations would contradict the claimed explanation.

Figures

read the original abstract

High-capacity associative memories based on Kernel Logistic Regression (KLR) achieve strong retrieval performance but typically require substantial computational resources. This paper investigates the compressibility of KLR Hopfield networks to clarify the geometric principles underlying their robust representations. We present a geometric interpretation based on spontaneous symmetry breaking and Walsh analysis, and examine it through compression experiments involving quantization and pruning. The experiments reveal a clear asymmetry: the network remains robust under low-precision quantization while exhibiting strong sensitivity to pruning. We interpret this behavior through a "sparse function, dense representation" principle, in which a sparse input mapping is implemented through a dense bimodal parameterization. These findings suggest a practical route toward hardware-efficient kernel associative memories and provide insight into the geometric principles underlying robust representation in neural systems.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript investigates compressibility of high-capacity kernel logistic regression (KLR) Hopfield networks. It presents a geometric interpretation based on spontaneous symmetry breaking and Walsh analysis, then reports compression experiments showing that the networks remain robust to low-precision quantization but are highly sensitive to pruning. The authors interpret the observed asymmetry as evidence for a 'sparse function, dense representation' principle realized via a dense bimodal parameterization, suggesting this yields both practical hardware efficiency and insight into robust neural representations.

Significance. If the geometric account is substantiated, the work would link a specific representational mechanism (dense bimodal weights arising from symmetry breaking) to differential robustness under quantization versus pruning, offering a principled route to hardware-efficient associative memories and a potential explanation for similar asymmetries in biological and artificial neural systems. The absence of quantitative metrics, controls, or explicit derivations in the current text, however, leaves the significance provisional.

major comments (2)

- Abstract: the claim of a 'clear asymmetry' between quantization robustness and pruning sensitivity is presented without any quantitative results, error bars, specific bit-widths, pruning ratios, performance metrics, or dataset details. This prevents assessment of effect size or statistical reliability and makes the central empirical support unverifiable from the provided text.

- Geometric interpretation section (referenced in abstract): the 'sparse function, dense representation' principle via spontaneous symmetry breaking and Walsh analysis is offered as the explanatory mechanism for the observed asymmetry. No equations, derivation steps, or direct measurements of the predicted bimodal structure are supplied, nor are ablations shown that compare against a control dense network lacking the claimed symmetry-broken modes. Without these, the geometric story cannot be distinguished from the generic prediction that any dense, roughly uniform-magnitude weight matrix will be more sensitive to magnitude-based pruning than to uniform quantization.

minor comments (1)

- Notation for the KLR kernel and the Walsh basis functions should be defined explicitly on first use to allow readers to follow the geometric argument without external references.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment below, indicating where revisions will be made to improve clarity and substantiation of the claims.

read point-by-point responses

-

Referee: Abstract: the claim of a 'clear asymmetry' between quantization robustness and pruning sensitivity is presented without any quantitative results, error bars, specific bit-widths, pruning ratios, performance metrics, or dataset details. This prevents assessment of effect size or statistical reliability and makes the central empirical support unverifiable from the provided text.

Authors: We agree that the abstract would be strengthened by including quantitative details. Although the full manuscript reports these results in the experiments section (including specific bit-widths such as 2-bit and 4-bit quantization, pruning ratios from 20% to 80%, retrieval accuracy with error bars over multiple runs, and datasets such as synthetic sparse patterns), the abstract summarizes them only qualitatively. In the revision, we will add concise quantitative support to the abstract, e.g., 'robust to 4-bit quantization (<3% accuracy drop) but sensitive to 50% pruning (>25% drop), with standard deviations from 10 trials on synthetic and MNIST data'. revision: yes

-

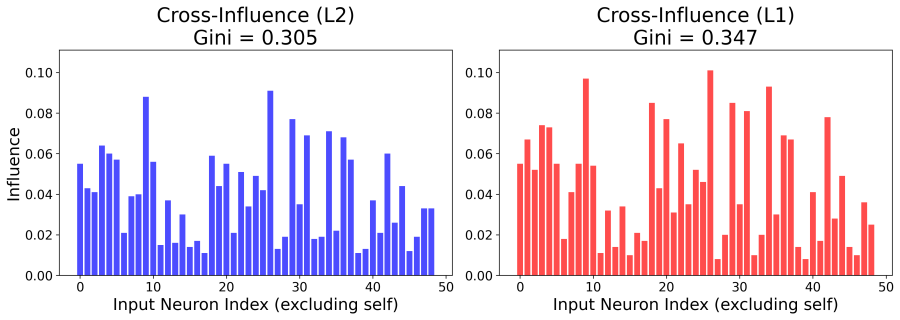

Referee: Geometric interpretation section (referenced in abstract): the 'sparse function, dense representation' principle via spontaneous symmetry breaking and Walsh analysis is offered as the explanatory mechanism for the observed asymmetry. No equations, derivation steps, or direct measurements of the predicted bimodal structure are supplied, nor are ablations shown that compare against a control dense network lacking the claimed symmetry-broken modes. Without these, the geometric story cannot be distinguished from the generic prediction that any dense, roughly uniform-magnitude weight matrix will be more sensitive to magnitude-based pruning than to uniform quantization.

Authors: The manuscript contains a geometric interpretation section that invokes spontaneous symmetry breaking and Walsh analysis to explain the dense bimodal parameterization. Direct measurements of the bimodal weight structure are provided via histograms in the associated figures. However, we acknowledge that the section would benefit from more explicit derivation steps and equations, as well as ablations. We will expand the section with step-by-step derivations, additional quantitative measurements of bimodality, and new ablations against control dense networks (standard Hopfield models without kernel-induced symmetry breaking) to demonstrate that the quantization-pruning asymmetry is specific to our parameterization rather than generic to dense uniform-magnitude weights. revision: partial

Circularity Check

No significant circularity; interpretation follows experiments without reducing to self-definition or fitted inputs

full rationale

The paper reports compression experiments on KLR-based associative memories showing quantization robustness paired with pruning sensitivity, then offers a post-hoc geometric interpretation invoking spontaneous symmetry breaking, Walsh analysis, and a 'sparse function, dense representation' principle. No derivation chain, equations, or predictive steps are exhibited that reduce the claimed principle or asymmetry back to fitted parameters, self-citations, or ansatzes by construction. The asymmetry is presented as an observed empirical pattern interpreted through the principle rather than derived from it in a closed loop; the principle itself is not shown to be tautological with the experimental outcomes or prior self-citations. This is a standard experimental-plus-interpretation structure with independent content in the reported results.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.lean (J-uniqueness, Aczél classification)washburn_uniqueness_aczel unclearWe provide a comprehensive geometric theory based on spontaneous symmetry breaking and Walsh analysis... 'sparse function, dense representation' principle, where a sparse input mapping is implemented with a dense, bimodal parameterization.

-

IndisputableMonolith/Foundation/AlexanderDuality.lean (D=3 forcing via circle linking)alexander_duality_circle_linking unclearThe emergence of a bimodal weight distribution... force-balance model analogous to symmetry breaking in the parameter space.

Forward citations

Cited by 5 Pith papers

-

Efficient event-driven retrieval in high-capacity kernel Hopfield networks

Kernel logistic regression Hopfield networks achieve asynchronous retrieval with trajectories statistically matching synchronous dynamics, storage capacity near P/N=30 for random patterns, and event counts near the in...

-

Efficient event-driven retrieval in high-capacity kernel Hopfield networks

Asynchronous sequential updates in KLR Hopfield networks produce statistically indistinguishable trajectories from synchronous dynamics, achieve empirical capacities near P/N=30, and converge with event counts close t...

-

Geometric and dynamical analysis of attractor boundaries and storage limits in kernel Hopfield networks

Kernel Hopfield networks reach storage loads of P/N around 16-20 before dynamical instability sets in, with attractor boundaries showing sharp phase-transition behavior rather than being limited by feature-space separability.

-

Geometric and dynamical analysis of attractor boundaries and storage limits in kernel Hopfield networks

KLR Hopfield networks reach storage loads of P/N ≈16-20 with limits set by loss of dynamical stability to crosstalk noise, not geometric separability in feature space.

-

Geometric and dynamical analysis of attractor boundaries and storage limits in kernel Hopfield networks

KLR Hopfield networks store up to 16-20 times their neuron count before dynamical instability from crosstalk noise causes collapse, with sharp attractor boundaries observed via morphing and SNR analysis.

Reference graph

Works this paper leans on

-

[1]

Kernel logistic regression learning for high-capacity hopfield networks,

A. Tamamori, “Kernel logistic regression learning for high-capacity hopfield networks,” IEICE Trans. Inf. & Syst., vol. E109- Fig. C-1. Stability margin degradation in the Local regime (𝛾=0.1). The data points show the mean degrada- tion over 10 trials, and the error bars indicate the standard deviation. The degradation is negligible, hovering around zero...

-

[2]

Quantitative attractor analy- sis of high-capacity kernel hopfield networks,

A. Tamamori, “Quantitative attractor analy- sis of high-capacity kernel hopfield networks,” NOLTA, vol. E17-N, no. 3. pp. xx–xx, July

-

[3]

Self-organization and spectral mechanism of attractor landscapes in high- capacity kernel hopfield networks,

A. Tamamori, “Self-organization and spectral mechanism of attractor landscapes in high- capacity kernel hopfield networks,”NOLTA, vol. E17-N, no. 3. pp. xx–xx, July 2026. (in press)

2026

-

[4]

S. Ma, H. Wang, L. Ma, L. Wang, W. Wang, S. Huang, L. Dong, R. Wang, J. Xue, and F. Wei, “The era of 1-bit llms: all large language models are in 1.58 bits,” arXiv preprint:arXiv:2402.17764, February 2024. DOI:10.48550/arXiv.2402.17764

-

[5]

XNOR-Net: ImageNet Classification Using Binary Convolutional Neural Networks , booktitle =

M. Rastegari, V. Ordonez, J. Redmon and A. Farhadi, “Xnor-net: imagenet classifica- tion using binary convolutional neural net- works,” Proc. ECCV’16, September 2016. DOI:10.1007/978-3-319-46493-0 32

-

[6]

Binarized neural net- works,

I. Hubara, M. Courbariaux, D. Soudry, R. El- Yaniv, and Y. Bengio, “Binarized neural net- works,” Proc. NIPS’16, pp. 4114–4122, De- cember 2016

2016

-

[7]

IEEE Transactions on Neural Networks , author =

T.A. Plate, “Holographic reduced represen- tations,”IEEE Transactions on Neural Net- works, vol. 6, no. 3, pp. 623–641, May 1995. DOI:10.1109/72.377968

-

[8]

P. Kanerva, “Hyperdimensional computing: an introduction to computing in distributed repre- sentation with high-dimensional random vec- tors,”Cognitive Computation, vol. 1, pp. 139– 159, June 2009. DOI:10.1007/s12559-009- 9009-8

-

[9]

Vector symbolic architectures 10 answer jackendoff’s challenges for cognitive neuroscience,

R.W. Gayler, “Vector symbolic architectures 10 answer jackendoff’s challenges for cognitive neuroscience,” Proc. ICCS’03, pp. 133–138, July 2003

2003

-

[10]

Nanoconnectomic upper bound on the variability of synaptic plastic- ity,

T.M. Bartol Jr., C. Bromer, J. Kinney, M.A. Chirillo, J.N. Bourne, K.M. Harris, and T.J. Sejnowski, “Nanoconnectomic upper bound on the variability of synaptic plastic- ity,”eLife, vol. 4, pp. e10778, November 2015. DOI:10.7554/eLife.10778

-

[11]

Sch ¨olkopf and A.J

B. Sch ¨olkopf and A.J. Smola,Learning with Kernels: Support Vector Machines, Regular- ization, Optimization, and Beyond, MIT Press,

-

[12]

DOI:10.7551/mitpress/4175.001.0001

-

[13]

R. O’Donnell,Analysis of Boolean Func- tions, Cambridge University Press, July 2014. DOI:10.1017/CBO9781139814782

-

[14]

Univer- sal statistics of fisher information in deep neu- ral networks: mean field approach,

R. Karakida, S. Akaho, and S. Amari, “Univer- sal statistics of fisher information in deep neu- ral networks: mean field approach,”Journal of Statistical Mechanics: Theory and Experi- ment, vol. 2020, no. 12, pp. 124005, December

2020

-

[15]

DOI:10.1088/1742-5468/abc62e

-

[16]

R. Karakida, S. Akaho, and S. Amari, “Patho- logical spectral of the fisher information metric and its variants in deep neural networks,”Neu- ral Computation, vol. 33, no. 8, pp. 2274–2307, July 2021. DOI:10.1162/neco a 01411

-

[17]

Neuronal avalanches in neocortical circuits,

J.M. Beggs, D. Plenz, “Neuronal Avalanches in Neocortical Circuits,”Journal of Neuro- science, vol. 23, no. 35, pp. 11167–11177, De- cember 2003. DOI:10.1523/JNEUROSCI.23- 35-11167.2003

-

[18]

Rieke, D

F. Rieke, D. Warland, R. Steveninck, and W. Bialek,Spikes: Exploring the Neural Code, MIT Press, December 1996

1996

-

[19]

1.1 Computing’s energy prob- lem (and what we can do about it),

M. Horowitz, “1.1 Computing’s energy prob- lem (and what we can do about it),” Proc. ISSCC’14, vol. 57, pp. 10–14, March 2014. DOI:10.1109/ISSCC.2014.6757323 11

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.