Beyond Heuristics: Learnable Density Control for 3D Gaussian Splatting

Pith reviewed 2026-05-12 02:43 UTC · model grok-4.3

The pith

LeGS replaces handcrafted heuristics with a reinforcement learning policy for density control in 3D Gaussian Splatting.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

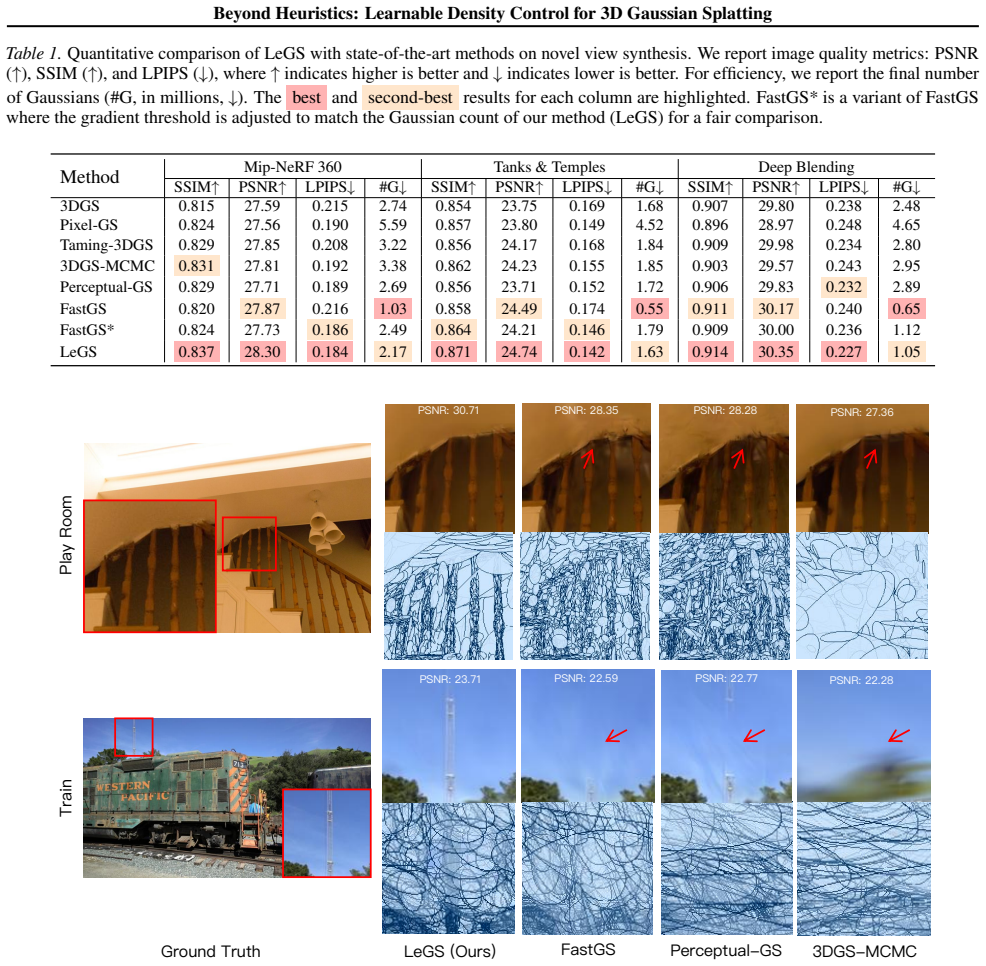

LeGS reformulates density control as a parameterized policy network optimized via Reinforcement Learning, with a tailored effective reward function grounded in sensitivity analysis that reduces complexity from O(N²) to O(N) and significantly outperforms state-of-the-art methods on Mip-NeRF 360, Tanks & Temples, and Deep Blending.

What carries the argument

The parameterized policy network trained by RL, driven by a closed-form sensitivity-analysis reward that scores the marginal contribution of each Gaussian to final reconstruction quality.

Load-bearing premise

The sensitivity-based reward correctly ranks how much each Gaussian improves final image quality, and the resulting policy transfers to new scenes without overfitting to the training data or reward design.

What would settle it

A policy trained only on Mip-NeRF 360 scenes produces lower PSNR or higher rendering time than standard heuristics when applied to entirely new, unseen scenes from a different dataset.

Original abstract

While 3D Gaussian Splatting (3DGS) has demonstrated impressive real-time rendering performance, its efficacy remains constrained by a reliance on heuristic density control. Despite numerous refinements to these handcrafted rules, such methods inherently lack the flexibility to adapt to diverse scenes with complex geometries. In this paper, we propose a paradigm shift for density control from rigid heuristics to fully learnable policies. Specifically, we introduce LeGS, a framework that reformulates density control as a parameterized policy network optimized via Reinforcement Learning (RL). Central to our approach is the tailored effective reward function grounded in sensitivity analysis, which precisely quantifies the marginal contribution of individual Gaussians to reconstruction quality. To maintain computational tractability, we derive a closed-form solution that reduces the complexity of reward calculation from O(N²) to O(N). Extensive experiments on the Mip-NeRF 360, Tanks & Temples, and Deep Blending datasets demonstrate that LeGS significantly outperforms state-of-the-art methods, striking a superior balance between reconstruction quality and efficiency. The code will be released at https://github.com/AaronNZH/LeGS

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces LeGS, a framework that replaces heuristic density control in 3D Gaussian Splatting with a parameterized policy network trained via reinforcement learning. The core technical contribution is a sensitivity-analysis-derived reward function that quantifies each Gaussian's marginal contribution to reconstruction quality and admits a closed-form O(N) implementation, reducing complexity from O(N²). Experiments on Mip-NeRF 360, Tanks & Temples, and Deep Blending report improved quality-efficiency trade-offs over prior methods.

Significance. If the reward function reliably approximates marginal contributions despite non-linear alpha compositing and view-dependent overlaps, the work would provide a principled, learnable alternative to hand-crafted density rules, potentially improving generalization across scenes with complex geometry. The closed-form O(N) derivation, if rigorously validated, would be a notable efficiency gain.

Major comments (3)

- [§3.2] §3.2 (Reward Formulation) and the sensitivity-analysis derivation: the claim that the closed-form O(N) reward precisely quantifies marginal contribution rests on a first-order linearization. Because final pixel color arises from depth-ordered alpha compositing of overlapping Gaussians, removing or perturbing one Gaussian produces non-additive, higher-order changes that depend on all other primitives along each ray. The manuscript must explicitly state the independence or small-perturbation assumptions used in the reduction and provide a quantitative validation (e.g., correlation between the O(N) reward and the true ΔPSNR obtained by ablating individual Gaussians on held-out views).

- [§4.2] §4.2 (RL Training and Policy Generalization): the reported gains on Mip-NeRF 360, Tanks & Temples, and Deep Blending could be explained by reward shaping or scene-specific normalization embedded in the sensitivity analysis rather than by superior policy learning. The paper should include an ablation that replaces the learned policy with the original heuristic while keeping the same reward, and a cross-scene transfer experiment (train on one dataset, evaluate density control on another) to demonstrate that the policy generalizes beyond the training scenes.

- [Table 2] Table 2 and Figure 5 (Quantitative and Qualitative Results): the reported PSNR/SSIM improvements are modest (typically <1 dB). Without error bars over multiple random seeds or statistical significance tests, it is unclear whether the gains exceed the variability introduced by the RL training itself.
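The non-additivity raised in the first major comment can be illustrated with a toy example. The sketch below is not the paper's reward or renderer: it composites three hypothetical primitives with scalar colors along a single ray, front to back, and compares the sum of two individual leave-one-out effects with the effect of removing both primitives together. The function names `composite` and `removal_effect` and all numeric values are assumptions chosen for illustration.

```python
def composite(alphas, colors):
    """Front-to-back alpha compositing of scalar colors along one ray."""
    pixel, transmittance = 0.0, 1.0
    for alpha, color in zip(alphas, colors):
        pixel += color * alpha * transmittance
        transmittance *= 1.0 - alpha
    return pixel


def removal_effect(alphas, colors, removed):
    """Change in pixel value when the primitives at indices `removed` are deleted."""
    kept = [i for i in range(len(alphas)) if i not in removed]
    reduced = composite([alphas[i] for i in kept], [colors[i] for i in kept])
    return composite(alphas, colors) - reduced


# Hypothetical ray: three primitives sorted near to far (illustrative values only).
alphas = [0.5, 0.5, 0.5]
colors = [1.0, 0.0, 1.0]

# Sum of the two individual leave-one-out effects.
single_sum = removal_effect(alphas, colors, {0}) + removal_effect(alphas, colors, {1})
# Effect of removing both primitives at once.
joint = removal_effect(alphas, colors, {0, 1})

print(single_sum, joint)  # 0.25 0.125
```

In this toy case the individual effects sum to 0.25 while the joint effect is 0.125, so per-primitive scores do not add up once several overlapping primitives change together. Whether this matters in practice for the LeGS reward is exactly what the requested validation would measure.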

minor comments (2)

- [Abstract] The abstract states that the reward 'precisely quantifies' marginal contribution; this language should be softened to 'approximates' pending the validation requested above.

- [§3.1] Notation for the policy network parameters and the sensitivity matrix should be introduced once and used consistently; several symbols appear without prior definition in §3.1.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed comments. We address each major point below and commit to revisions that strengthen the manuscript's rigor and clarity without altering its core claims.

- Referee: [§3.2] §3.2 (Reward Formulation) and the sensitivity-analysis derivation: the claim that the closed-form O(N) reward precisely quantifies marginal contribution rests on a first-order linearization. Because final pixel color arises from depth-ordered alpha compositing of overlapping Gaussians, removing or perturbing one Gaussian produces non-additive, higher-order changes that depend on all other primitives along each ray. The manuscript must explicitly state the independence or small-perturbation assumptions used in the reduction and provide a quantitative validation (e.g., correlation between the O(N) reward and the true ΔPSNR obtained by ablating individual Gaussians on held-out views).

  Authors: We agree that the reward is derived via first-order linearization of the rendering equation. In the revision we will explicitly state the small-perturbation assumption and the conditions (local linearity around the current Gaussian parameters) under which the O(N) closed form approximates marginal contribution. We will also add a quantitative validation subsection that reports the Pearson correlation between our O(N) reward values and the true ΔPSNR obtained by ablating individual Gaussians on held-out views across representative scenes, thereby demonstrating the practical fidelity of the approximation.

  Revision: yes

- Referee: [§4.2] §4.2 (RL Training and Policy Generalization): the reported gains on Mip-NeRF 360, Tanks & Temples, and Deep Blending could be explained by reward shaping or scene-specific normalization embedded in the sensitivity analysis rather than by superior policy learning. The paper should include an ablation that replaces the learned policy with the original heuristic while keeping the same reward, and a cross-scene transfer experiment (train on one dataset, evaluate density control on another) to demonstrate that the policy generalizes beyond the training scenes.

  Authors: To isolate the policy's contribution, we will add an ablation that substitutes the learned policy with the original heuristic density-control rules while retaining the identical sensitivity-derived reward; any remaining performance gap will then be attributable to the learned policy rather than reward shaping. We will also include a cross-scene transfer experiment in which the policy is trained on scenes from one dataset and evaluated on unseen scenes from the other datasets, reporting the resulting quality-efficiency metrics to substantiate generalization.

  Revision: yes

- Referee: [Table 2] Table 2 and Figure 5 (Quantitative and Qualitative Results): the reported PSNR/SSIM improvements are modest (typically <1 dB). Without error bars over multiple random seeds or statistical significance tests, it is unclear whether the gains exceed the variability introduced by the RL training itself.

  Authors: We acknowledge that the absolute gains are modest yet consistent across datasets and yield improved quality-efficiency trade-offs. In the revision we will rerun the RL training over multiple random seeds, report mean ± standard deviation for PSNR/SSIM and efficiency metrics, and include paired statistical significance tests to confirm that the observed improvements exceed the stochastic variability of the training process.

  Revision: yes
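The statistics promised in the responses above (Pearson correlation, mean ± standard deviation over seeds, and a paired test) can be sketched with the Python standard library. This is a minimal illustration, not the authors' evaluation code: the helper names are hypothetical, and the per-seed PSNR lists are made-up placeholders, not results from the paper.

```python
import math
import statistics


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def mean_std(values):
    """Mean and sample standard deviation, for 'mean ± std' reporting."""
    return statistics.mean(values), statistics.stdev(values)


def paired_t_statistic(a, b):
    """t statistic of the per-seed differences a[i] - b[i] (n - 1 degrees of freedom)."""
    diffs = [x - y for x, y in zip(a, b)]
    n = len(diffs)
    return statistics.mean(diffs) / (statistics.stdev(diffs) / math.sqrt(n))


# Placeholder PSNR values (dB) for four seeds; NOT taken from the paper.
psnr_learned = [27.0, 27.5, 28.0, 27.5]
psnr_heuristic = [26.5, 27.5, 27.0, 27.0]

mean, std = mean_std(psnr_learned)
t_stat = paired_t_statistic(psnr_learned, psnr_heuristic)
print(f"{mean:.2f} ± {std:.2f} dB, paired t = {t_stat:.3f}")
```

The same `pearson` helper could be applied to per-Gaussian reward values and measured ΔPSNR from ablations. The t statistic would then be compared against a Student's t distribution with n - 1 degrees of freedom; where SciPy is available, `scipy.stats.ttest_rel` performs the paired test and returns a p-value directly.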

Circularity Check

No significant circularity in derivation chain

The paper's core derivation introduces a parameterized policy network for density control optimized via RL, with the reward function obtained by applying sensitivity analysis to quantify marginal Gaussian contributions and then deriving a closed-form O(N) expression from the O(N²) formulation. This is a standard mathematical reduction under stated assumptions rather than a self-referential definition, fitted input renamed as prediction, or load-bearing self-citation. No equations or steps in the abstract or described chain reduce the claimed result to its own inputs by construction; the RL training and empirical validation on external datasets remain independent of the reward derivation. The approach is self-contained against the original 3DGS heuristics without circularity.

