Recognition: unknown

MMAudio-LABEL: Audio Event Labeling via Audio Generation for Silent Video

Pith reviewed 2026-05-09 18:48 UTC · model grok-4.3

The pith

Jointly generating audio and sound event labels from silent videos improves onset detection to 75 percent accuracy.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

MMAudio-LABEL is an event-aware audio generation framework built on a foundational audio generation model that jointly generates audio and frame-aligned sound event predictions from silent videos, raising onset-detection accuracy from 46.7 percent to 75.0 percent and material-classification accuracy from 40.6 percent to 61.0 percent over baselines that apply sound event detection after audio generation.

What carries the argument

The latent-based event labeling mechanism that adds frame-aligned sound event prediction as a joint output of the foundational audio generation model.

If this is right

- Joint training removes the need for a separate detection stage and its associated error propagation.

- Frame-aligned predictions supply explicit timing that downstream sound-production tools can use directly.

- The same backbone model can be reused for both high-quality audio synthesis and interpretable event labels.

- Video-to-audio systems become more practical for tasks that require labeled sound events rather than raw waveforms alone.

Where Pith is reading between the lines

- The joint-training pattern could be applied to other video-to-audio tasks where explicit event labels are needed for editing or interaction.

- Similar integration of generation and analysis heads might reduce error accumulation in related multimodal synthesis problems.

- Evaluating the approach on datasets with more varied environments would test whether the accuracy gains hold beyond the Greatest Hits collection.

Load-bearing premise

The standard post-hoc pipeline of generating audio first and then running separate sound event detection is limited by error accumulation, and joint training avoids that limit without lowering audio quality.

What would settle it

A side-by-side test that measures audio quality metrics on the joint model versus the original foundational generator alone, or that applies a stronger independent sound event detector to the baseline audio and checks whether the accuracy gap closes.

Figures

read the original abstract

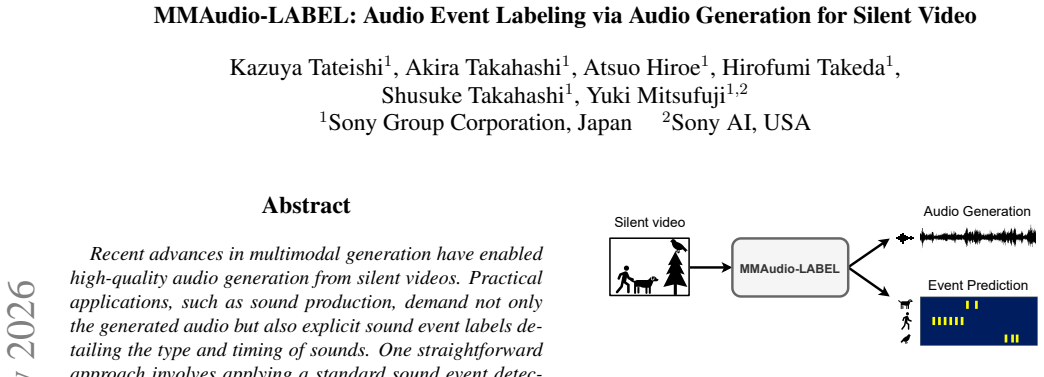

Recent advances in multimodal generation have enabled high-quality audio generation from silent videos. Practical applications, such as sound production, demand not only the generated audio but also explicit sound event labels detailing the type and timing of sounds. One straightforward approach involves applying a standard sound event detection to the generated audio. However, this post-hoc pipeline is inherently limited, as it is prone to error accumulation. To address this limitation, we propose MMAudio-LABEL (LAtent-Based Event Labeling), an event-aware audio generation framework built on a foundational audio generation model as its backbone that jointly generates audio and frame-aligned sound event predictions from silent videos. We evaluate our method on the Greatest Hits dataset for onset detection and 17-class material classification. Our approach improves onset-detection accuracy from 46.7% to 75.0% and material-classification accuracy from 40.6% to 61.0% over baselines. These results suggest that jointly learning audio generation and event prediction enables a more interpretable and practical video-to-audio synthesis.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes MMAudio-LABEL, an event-aware audio generation framework built on a foundational audio generation model as backbone. It jointly generates audio and frame-aligned sound event predictions from silent videos to avoid error accumulation in post-hoc sound event detection. Evaluated on the Greatest Hits dataset, the method reports improved onset-detection accuracy from 46.7% to 75.0% and material-classification accuracy from 40.6% to 61.0% over baselines, suggesting benefits for interpretable video-to-audio synthesis.

Significance. If the joint training maintains the audio generation quality of the backbone while delivering the reported labeling gains, the work could be significant for practical applications in sound production by enabling explicit event labels without post-hoc pipelines. The concrete empirical improvements on a named dataset provide a starting point for assessing utility in multimodal generation.

major comments (2)

- [Abstract] Abstract: the reported accuracy gains (onset detection 46.7% to 75.0%, material classification 40.6% to 61.0%) are presented without any details on model architecture, training procedure, baseline implementations, or statistical significance testing. This directly undermines verification of the central claim that joint training on the foundational model produces the improvements by avoiding post-hoc error accumulation.

- [Evaluation] Evaluation on Greatest Hits: no audio fidelity metrics (FAD, perceptual scores) or direct comparison to the non-joint foundational backbone are provided. This is load-bearing for the claim that the joint objective preserves high-quality audio output; without these, it is impossible to confirm that the labeling gains do not come at the cost of degraded synthesis quality, which would eliminate any practical advantage over a post-hoc pipeline using the same backbone.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our manuscript. We address each major comment point by point below, indicating where revisions will be made to the next version.

read point-by-point responses

-

Referee: [Abstract] Abstract: the reported accuracy gains (onset detection 46.7% to 75.0%, material classification 40.6% to 61.0%) are presented without any details on model architecture, training procedure, baseline implementations, or statistical significance testing. This directly undermines verification of the central claim that joint training on the foundational model produces the improvements by avoiding post-hoc error accumulation.

Authors: We agree that the abstract is intentionally concise and therefore omits full details on architecture, training procedure, baseline implementations, and statistical testing. These elements are described in the main body (model architecture and joint objective in Section 3, training and baselines in Section 4, and experimental results in Section 5). To address the concern, we will expand the abstract with a brief reference to the foundational backbone, the joint training setup, and a note that the accuracy gains are consistent across our evaluation protocol. The central claim is supported by the direct comparison to post-hoc baselines on the Greatest Hits dataset, where the joint model yields the reported improvements by predicting events and audio together rather than sequentially. revision: partial

-

Referee: [Evaluation] Evaluation on Greatest Hits: no audio fidelity metrics (FAD, perceptual scores) or direct comparison to the non-joint foundational backbone are provided. This is load-bearing for the claim that the joint objective preserves high-quality audio output; without these, it is impossible to confirm that the labeling gains do not come at the cost of degraded synthesis quality, which would eliminate any practical advantage over a post-hoc pipeline using the same backbone.

Authors: We acknowledge that the current manuscript does not report audio fidelity metrics such as FAD or perceptual scores, nor an explicit side-by-side comparison against the unmodified foundational backbone. While the primary focus was on labeling accuracy gains, this omission limits verification of synthesis quality preservation. In the revised manuscript we will add FAD scores, perceptual evaluations, and direct comparisons to the non-joint backbone to demonstrate that audio generation quality remains comparable while the joint objective provides the additional event-labeling capability. revision: yes

Circularity Check

No circularity: empirical claims rest on experimental comparisons, not derivations or self-referential fits

full rationale

The manuscript describes an empirical framework (MMAudio-LABEL) that jointly trains audio generation and event labeling on a backbone model, then reports accuracy gains on onset detection and material classification versus baselines on the Greatest Hits dataset. No equations, derivations, or parameter-fitting steps are presented that would reduce the reported improvements to quantities defined by the inputs themselves. No self-citations are invoked as load-bearing uniqueness theorems or ansatzes. The central claim is therefore self-contained against external benchmarks and does not exhibit any of the enumerated circularity patterns.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

H. K. Cheng, M. Ishii, A. Hayakawa, T. Shibuya, A. Schwing, and Y . Mitsufuji. MMAudio: Taming Multi- modal Joint Training for High-Quality Video-to-Audio Syn- thesis. InCVPR, 2025. 1, 2, 3

2025

-

[2]

Y . Du, Z. Chen, J. Salamon, B. Russell, and A. Owens. Con- ditional Generation of Audio from Video via Foley Analo- gies. InCVPR, 2023. 1, 3

2023

-

[3]

P. Fang, Y . He, Y . Xing, Q. Chen, S.-N. Lim, and H. Yang. AC-Foley: Reference-Audio-Guided Video-to-Audio Syn- thesis with Acoustic Transfer. InICLR, 2026. 1

2026

-

[4]

gil Lee, W

S. gil Lee, W. Ping, B. Ginsburg, B. Catanzaro, and S. Yoon. BigVGAN: A Universal Neural V ocoder with Large-Scale Training. InICLR, 2023. 2

2023

-

[5]

Iashin, W

V . Iashin, W. Xie, E. Rahtu, and A. Zisserman. Synchformer: Efficient Synchronization from Sparse Cues. InICASSP,

-

[6]

Jeong, Y

Y . Jeong, Y . Kim, S. Chun, and J. Lee. Read, Watch and Scream! Sound Generation from Text and Video. InAAAI,

-

[7]

D. P. Kingma and M. Welling. Auto-Encoding Variational Bayes. InICLR, 2014. 2

2014

-

[8]

Q. Kong, Y . Cao, T. Iqbal, Y . Wang, W. Wang, and M. D. Plumbley. PANNs: Large-Scale Pretrained Audio Neural Networks for Audio Pattern Recognition. InACM, 2020. 1

2020

-

[9]

Lipman, R

Y . Lipman, R. T. Q. Chen, H. Ben-Hamu, M. Nickel, and M. Le. Flow Matching for Generative Modeling. InICLR,

-

[10]

X. Liu, K. Su, and E. Shlizerman. Tell What You Hear From What You See – Video to Audio Generation Through Text. InNeurIPS, 2024. 1

2024

-

[11]

Owens, P

A. Owens, P. Isola, J. McDermott, A. Torralba, E. H. Adel- son, and W. T. Freeman. Visually Indicated Sounds. In CVPR, 2016. 1

2016

-

[12]

Radford, J

A. Radford, J. W. Kim, C. Hallacy, A. Ramesh, G. Goh, S. Agarwal, G. Sastry, A. Askell, P. Mishkin, J. Clark, G. Krueger, and I. Sutskever. Learning Transferable Vi- sual Models From Natural Language Supervision. InICML,

-

[13]

Y . Ren, C. Li, M. Xu, W. Liang, Y . Gu, R. Chen, and D. Yu. STA-V2A: Video-to-Audio Generation with Semantic and Temporal Alignment. InICASSP, 2025. 1

2025

-

[14]

Takahashi, S

A. Takahashi, S. Takahashi, and Y . Mitsufuji. MMAu- dioSep: Taming Video-to-Audio Generative Model Towards Video/Text-Queried Sound Separation. InICASSP, 2026. 1

2026

-

[15]

A. Tong, K. Fatras, N. Malkin, G. Huguet, Y . Zhang, J. Rector-Brooks, G. Wolf, and Y . Bengio. Improving and generalizing flow-based generative models with minibatch optimal transport. 2024. 3

2024

-

[16]

Zhang, K

X. Zhang, K. Fan, Y . Wang, Y . Liang, J. Lu, Z. Du, Q. Shi, and P. Qin. TAGMO: Temporal Control Audio Generation for Multiple Visual Objects Without Training. InICASSP,

-

[17]

Foleycrafter: Bring silent videos to life with lifelike and synchronized sounds

Y . Zhang, Y . Gu, Y . Zeng, Z. Xing, Y . Wang, Z. Wu, and K. Chen. FoleyCrafter: Bring Silent Videos to Life with Lifelike and Synchronized Sounds.arXiv preprint arXiv:2407.01494, 2024. 1

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.