
Multi-frame Restoration for High-rate Lissajous Confocal Laser Endomicroscopy

Pith reviewed 2026-05-09 18:46 UTC · model grok-4.3

The pith

A lightweight recurrent network called MIRA restores high-rate Lissajous confocal laser endomicroscopy (CLE) images by iteratively aggregating temporal context through feature reuse and displacement alignment.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central claim is that supervised multi-frame restoration becomes feasible for high-rate Lissajous CLE once low-quality video clips are paired with temporally aligned high-quality references obtained from stitched slow-scan mosaics. MIRA exploits this pairing with a lightweight recurrent network that reuses features across frames and compensates for inter-frame displacements, iteratively filling the gaps left by the resonant scanning trajectory. The authors report better image quality than competing methods at a computational cost suitable for handheld deployment.

What carries the argument

MIRA, a lightweight recurrent network that aggregates temporal context across frames by reusing extracted features and aligning them to compensate for displacements between frames.
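The combination of feature reuse and displacement alignment can be illustrated with a deliberately simplified, non-learned sketch. It is not MIRA's architecture: the hypothetical `recurrent_fill` function carries raw pixel values instead of learned features as its recurrent state, assumes the inter-frame displacements are already known integer shifts, and uses `np.roll`, which wraps content around the image border.

```python
import numpy as np


def recurrent_fill(frames, masks, shifts):
    """Toy recurrent hole filling (hypothetical illustration, not MIRA).

    frames: list of 2-D float arrays, zero where the scanner did not sample.
    masks:  list of boolean arrays, True where a pixel was visited in that frame.
    shifts: list of integer (dy, dx) scene displacements of frame t relative
            to frame t-1; the first entry has no effect because the state
            starts empty.
    Returns the aggregated image and a mask of pixels observed at least once.
    """
    state = np.zeros(frames[0].shape, dtype=float)
    known = np.zeros(frames[0].shape, dtype=bool)
    for frame, mask, (dy, dx) in zip(frames, masks, shifts):
        # Alignment: move the carried-over state into the current frame's
        # coordinates (np.roll wraps at the border, a toy simplification).
        state = np.roll(state, shift=(dy, dx), axis=(0, 1))
        known = np.roll(known, shift=(dy, dx), axis=(0, 1))
        # Reuse: keep aligned past values, overwrite where fresh samples exist.
        state = np.where(mask, frame, state)
        known |= mask
    return state, known


if __name__ == "__main__":
    scene = np.arange(64, dtype=float).reshape(8, 8)
    even_cols = np.zeros((8, 8), dtype=bool)
    even_cols[:, ::2] = True
    moved = np.roll(scene, (0, 1), axis=(0, 1))  # scene shifted one pixel right
    frames = [np.where(even_cols, scene, 0.0), np.where(even_cols, moved, 0.0)]
    out, known = recurrent_fill(frames, [even_cols, even_cols], [(0, 0), (0, 1)])
    print(known.all(), np.allclose(out, moved))  # True True
```

In the usage example each frame samples only the even columns, yet because the scene moves by one pixel between frames, the aligned state from the first frame supplies exactly the odd columns the second frame missed. Overwriting with fresh samples is one simple fusion rule; a learned model would instead decide how to weight past and current information.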

If this is right

- MIRA produces higher restoration quality than both lightweight and high-complexity baselines on the introduced benchmark.

- The method keeps computational cost low enough for potential clinical deployment.

- The paired dataset enables supervised training for high-rate Lissajous CLE restoration tasks.

- Multi-frame processing can compensate for the sparse pixel sampling that arises from resonant Lissajous trajectories at high frame rates.

Where Pith is reading between the lines

- If the approach succeeds, handheld high-speed optical biopsy could become more practical without requiring slower, more stable scans.

- The use of stitched slow-scan references for supervision may transfer to other sparse or irregular scanning modalities in microscopy.

- Real-time restored images could support immediate diagnostic feedback during live tissue procedures.

Load-bearing premise

Stitched wide-field mosaics from stabilized slow-scan frames provide accurate high-quality references that are temporally aligned with the high-rate video clips used for training.
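The premise can be made concrete with a minimal stitching sketch in which registered frames are placed on a shared canvas and averaged where they overlap. The hypothetical `stitch` helper assumes each frame's integer canvas offset is already known from stabilization and registration and performs no non-rigid correction or seam blending, so it is much simpler than dedicated CLE mosaicing methods such as motion-aware mosaicing [16]. Its point is that any error in those offsets is written directly into the reference.

```python
import numpy as np


def stitch(frames, offsets, canvas_shape):
    """Average pre-registered frames into a mosaic (hypothetical helper).

    frames:  list of 2-D float arrays.
    offsets: list of integer (row, col) positions of each frame's top-left
             corner on the canvas, assumed to come from prior registration.
    Returns the mosaic and a per-pixel count of contributing frames.
    """
    acc = np.zeros(canvas_shape, dtype=float)
    count = np.zeros(canvas_shape, dtype=float)
    for frame, (r, c) in zip(frames, offsets):
        h, w = frame.shape
        acc[r:r + h, c:c + w] += frame
        count[r:r + h, c:c + w] += 1.0
    # Mean over contributing frames; pixels that no frame covers stay 0.
    mosaic = np.divide(acc, count, out=np.zeros_like(acc), where=count > 0)
    return mosaic, count


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    scene = rng.random((10, 20))                 # synthetic stand-in for tissue
    left, right = scene[:, 0:12], scene[:, 8:20]  # overlap in columns 8-11
    mosaic, count = stitch([left, right], [(0, 0), (0, 8)], scene.shape)
    print(np.allclose(mosaic, scene), int(count.max()))  # True 2
    # A one-pixel offset error no longer reproduces the scene.
    bad, _ = stitch([left, right], [(0, 0), (0, 7)], scene.shape)
    print(np.allclose(bad, scene))  # False
```

With correct offsets the overlap averages identical values and the mosaic matches the scene exactly; with a single-pixel offset error, mismatched content is averaged together and one column is left uncovered, so the reference itself is corrupted before any network is trained on it.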

What would settle it

Test MIRA on fresh high-rate Lissajous video sequences of the same tissue and compare its output directly against independently acquired slow-scan or wide-field images taken at the same locations to measure whether the reported quality gains hold.

Original abstract

Lissajous confocal laser endomicroscopy (CLE) is a promising solution for high-speed in vivo optical biopsy in handheld scenarios. However, Lissajous scanning traces a resonant trajectory and samples only the pixels it visits in each frame; at high frame rates, many pixels remain unvisited, creating structured holes. In this work, we introduce the first benchmark for high-rate Lissajous CLE, consisting of low-quality video clips paired with high-quality reference images. The reference images are wide-FOV mosaics obtained by stitching stabilized, slow-scan frames of the same tissue, enabling temporally aligned supervision. Using this dataset, we propose MIRA, a lightweight recurrent framework for Lissajous CLE restoration that iteratively aggregates temporal context through feature reuse and displacement alignment. Our experiments demonstrate that MIRA outperforms both lightweight and high-complexity baselines in restoration quality while maintaining favorable computational efficiency suitable for clinical deployment.
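The hole pattern described in the abstract can be reproduced with an idealized scan model. The sketch below assumes sinusoidal mirror motion without phase error, hypothetical mirror frequencies of 1901 Hz and 1303 Hz, a hypothetical 1 MHz detector sampling rate and a 256 × 256 pixel grid; none of these values come from the paper, and real devices choose their frequencies with dedicated selection rules [6]. The hypothetical `lissajous_mask` function marks every pixel hit by at least one sample during one frame, so raising the frame rate shortens the trajectory and lowers coverage.

```python
import numpy as np


def lissajous_mask(fx, fy, fps, fs, n=256, frame_index=0, phase=np.pi / 2):
    """Pixels visited by an idealized Lissajous scan during one frame.

    fx, fy: mirror frequencies in Hz (hypothetical values in the demo below).
    fps:    frame rate in Hz; one frame lasts 1 / fps seconds.
    fs:     detector sampling rate in Hz.
    Returns an n x n boolean mask, True where at least one sample landed.
    """
    n_samples = int(round(fs / fps))
    # Sample times of this frame, continuing the trajectory across frames.
    t = (frame_index * n_samples + np.arange(n_samples)) / fs
    x = np.sin(2 * np.pi * fx * t)
    y = np.sin(2 * np.pi * fy * t + phase)
    # Map positions in [-1, 1] to pixel indices 0..n-1.
    col = np.clip(((x + 1) / 2 * n).astype(int), 0, n - 1)
    row = np.clip(((y + 1) / 2 * n).astype(int), 0, n - 1)
    mask = np.zeros((n, n), dtype=bool)
    mask[row, col] = True
    return mask


if __name__ == "__main__":
    FX, FY, FS = 1901.0, 1303.0, 1e6  # hypothetical scanner parameters
    for fps in (10, 30, 100):
        coverage = lissajous_mask(FX, FY, fps, FS).mean()
        print(f"{fps:>3} fps: {coverage:.1%} of pixels visited")
```

In this model a shorter frame replays a prefix of a longer frame's trajectory, so the pixels visited at 100 fps are always a subset of those visited at 10 fps. At 100 fps a frame also contains only 10,000 samples for 65,536 pixels, so at least 84% of the grid must remain empty regardless of the trajectory.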

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces the first benchmark for high-rate Lissajous confocal laser endomicroscopy (CLE) restoration, pairing low-quality video clips (with structured unvisited pixels) with high-quality wide-FOV mosaic references obtained by stitching stabilized slow-scan frames of the same tissue. It proposes MIRA, a lightweight recurrent framework that iteratively aggregates temporal context through feature reuse and displacement alignment. The experiments are reported to show that MIRA outperforms both lightweight and high-complexity baselines in restoration quality while maintaining computational efficiency suitable for clinical deployment.

Significance. If the stitched mosaics can be validated as reliable, pixel-accurate ground truth, the work would be significant for the CLE field by establishing the first public benchmark for this resonant scanning modality and delivering a practical, deployable restoration method that addresses missing-data artifacts in high-speed handheld imaging.

major comments (2)

- [Abstract and Dataset Construction] The entire quantitative evaluation (PSNR/SSIM gains, outperformance claims) rests on the assumption that the wide-FOV mosaics provide temporally aligned, pixel-accurate supervision. No quantitative assessment of residual stitching errors, non-rigid deformation correction accuracy, or illumination consistency between slow-scan passes is provided. In-vivo CLE data commonly exhibit probe drift and tissue deformation; any uncorrected misalignment directly corrupts the reference pixels used for both training and metric computation, rendering the reported improvements difficult to interpret as true restoration quality.

- [§4 (Experiments)] The abstract states outperformance over baselines but the provided summary contains no numerical results, dataset cardinality, number of test clips, or ablation studies on the recurrent components. Without these specifics, it is impossible to judge whether the gains are statistically meaningful, robust across tissue types, or attributable to the proposed feature reuse and alignment rather than training choices.
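One generic way to judge whether per-clip gains are statistically meaningful is a paired bootstrap over test clips. The sketch below is an illustration, not an analysis from the paper: the hypothetical `paired_bootstrap_ci` function takes per-clip scores (for example PSNR in dB) for a method and a baseline evaluated on the same clips and returns the mean difference with a percentile confidence interval. Pairing matters because clip difficulty can vary more than the gap between methods, and resampling the per-clip differences removes that shared variation.

```python
import numpy as np


def paired_bootstrap_ci(method_scores, baseline_scores, n_boot=10_000,
                        alpha=0.05, seed=0):
    """Mean per-clip difference and a percentile bootstrap confidence interval.

    Both inputs must be scores on the same clips, in the same order.
    """
    a = np.asarray(method_scores, dtype=float)
    b = np.asarray(baseline_scores, dtype=float)
    if a.shape != b.shape or a.ndim != 1:
        raise ValueError("expected two 1-D arrays of equal length")
    diff = a - b  # pairing: compare the two methods clip by clip
    rng = np.random.default_rng(seed)
    # Resample clips with replacement and recompute the mean difference.
    idx = rng.integers(0, diff.size, size=(n_boot, diff.size))
    boot_means = diff[idx].mean(axis=1)
    lo, hi = np.quantile(boot_means, [alpha / 2, 1 - alpha / 2])
    return float(diff.mean()), (float(lo), float(hi))
```

If the interval excludes zero, the average gain is unlikely to be an artifact of which clips happened to land in the test set. It says nothing about whether the reference mosaics themselves are accurate, which is the separate concern raised in the first comment.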

minor comments (1)

- [Abstract] The abstract would be strengthened by including at least one key quantitative result (e.g., mean PSNR or SSIM delta versus the strongest baseline) so readers can immediately gauge effect size.
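A PSNR delta is easier to interpret next to how much reference misalignment alone can move the metric, which links this comment to the first major one. The sketch below is an illustration under stated assumptions, not a result from the paper: a smooth periodic pattern from the hypothetical `synthetic_texture` helper stands in for tissue, and an ideal restoration is scored with a hypothetical `psnr` helper against references shifted by 0, 1 and 2 pixels, so any drop is caused purely by misregistration.

```python
import numpy as np


def psnr(pred, ref, data_range=1.0):
    """Peak signal-to-noise ratio in dB for images scaled to [0, data_range]."""
    mse = np.mean((np.asarray(pred, float) - np.asarray(ref, float)) ** 2)
    return float("inf") if mse == 0 else float(10 * np.log10(data_range ** 2 / mse))


def synthetic_texture(n=128, period=16):
    """Smooth periodic test pattern with values in [0, 1] (hypothetical stand-in)."""
    r, c = np.mgrid[0:n, 0:n]
    return (0.5 + 0.25 * np.sin(2 * np.pi * c / period)
            + 0.25 * np.sin(2 * np.pi * r / period))


if __name__ == "__main__":
    truth = synthetic_texture()
    restoration = truth.copy()  # an ideal output that matches the scene exactly
    for shift in (0, 1, 2):
        reference = np.roll(truth, shift, axis=1)  # reference misregistered by `shift` px
        print(f"shift {shift} px: PSNR = {psnr(restoration, reference):.1f} dB")
```

For this pattern the script prints inf at zero shift, about 23.2 dB at one pixel and about 17.4 dB at two pixels, so a one-pixel registration error alone caps an otherwise perfect output near 23 dB. Real tissue has different spatial frequencies, so the size of the effect would differ, but for a perfect restoration any misalignment can only lower the score.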

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment below and have revised the manuscript to strengthen the presentation of the dataset validation and experimental details.



Referee: [Abstract and Dataset Construction] The entire quantitative evaluation (PSNR/SSIM gains, outperformance claims) rests on the assumption that the wide-FOV mosaics provide temporally aligned, pixel-accurate supervision. No quantitative assessment of residual stitching errors, non-rigid deformation correction accuracy, or illumination consistency between slow-scan passes is provided. In-vivo CLE data commonly exhibit probe drift and tissue deformation; any uncorrected misalignment directly corrupts the reference pixels used for both training and metric computation, rendering the reported improvements difficult to interpret as true restoration quality.

Authors: We agree that explicit quantitative validation of the stitched references is important for interpreting the benchmark results. The mosaics are formed from multiple stabilized slow-scan frames of the identical tissue region using standard registration techniques to compensate for probe motion. In the revised manuscript we have added a dedicated paragraph and supplementary table in the dataset construction section that reports average residual alignment error (measured via overlap consistency), non-rigid deformation residual after correction, and inter-pass illumination variance. These metrics indicate that residual errors remain below the level that would materially affect the reported PSNR/SSIM differences. We believe this addition directly addresses the concern while preserving the utility of the paired benchmark. revision: yes
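The response does not define how "overlap consistency" is measured. One plausible reading, sketched here as an assumption rather than the authors' procedure, is to register two overlapping slow-scan frames and report the root-mean-square difference over their shared region. The hypothetical helpers `phase_correlation_shift` and `overlap_rmse` use FFT phase correlation, a standard rigid technique of the kind surveyed in [27], and recover only integer translations; sub-pixel and non-rigid residuals would need more elaborate registration.

```python
import numpy as np


def phase_correlation_shift(a, b):
    """Integer (dy, dx) such that a[y, x] is approximately b[y - dy, x - dx]."""
    cross = np.fft.fft2(a) * np.conj(np.fft.fft2(b))
    cross /= np.abs(cross) + 1e-12  # keep phase only
    corr = np.fft.ifft2(cross).real
    dy, dx = np.unravel_index(np.argmax(corr), corr.shape)
    h, w = a.shape
    # Peaks past the midpoint correspond to negative shifts (FFT wrap-around).
    dy = dy - h if dy > h // 2 else dy
    dx = dx - w if dx > w // 2 else dx
    return int(dy), int(dx)


def overlap_rmse(a, b, shift):
    """RMSE between equally sized a and b over their overlap for a (dy, dx) shift."""
    dy, dx = shift
    h, w = a.shape
    y0, y1 = max(dy, 0), min(h, h + dy)
    x0, x1 = max(dx, 0), min(w, w + dx)
    a_ov = a[y0:y1, x0:x1]
    b_ov = b[y0 - dy:y1 - dy, x0 - dx:x1 - dx]
    return float(np.sqrt(np.mean((a_ov - b_ov) ** 2)))


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    tissue = rng.random((80, 80))            # synthetic stand-in for tissue
    a = tissue[10:74, 10:74]                 # two overlapping 64 x 64 views
    b = tissue[13:77, 5:69]
    shift = phase_correlation_shift(a, b)
    print(shift, overlap_rmse(a, b, shift))  # (3, -5) 0.0
    # Brightness drift between passes shows up as residual error.
    print(overlap_rmse(a, 1.1 * b, shift) > 0)  # True
```

A residual near zero means the two passes agree once aligned. Scaling one frame by 1.1 to mimic an illumination change between passes produces a nonzero residual even at the correct shift, which is the kind of inter-pass inconsistency the referee asks to quantify.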

-

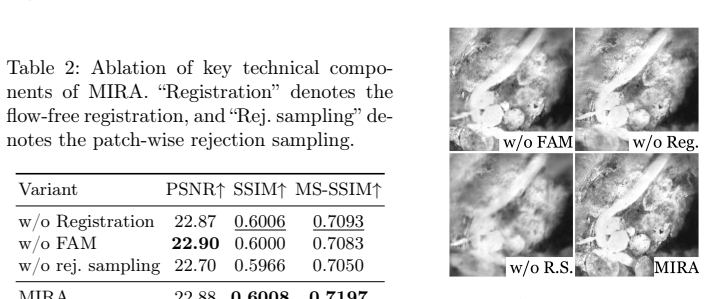

Referee: [§4 (Experiments)] The abstract states outperformance over baselines but the provided summary contains no numerical results, dataset cardinality, number of test clips, or ablation studies on the recurrent components. Without these specifics, it is impossible to judge whether the gains are statistically meaningful, robust across tissue types, or attributable to the proposed feature reuse and alignment rather than training choices.

Authors: The full manuscript already contains these details in Section 4: a table of PSNR/SSIM values across all baselines, the total number of paired clips and their split into training/validation/test sets, and dedicated ablation experiments isolating the contribution of recurrent feature reuse and displacement alignment. To improve accessibility we have revised the abstract to include concise quantitative highlights and expanded the opening paragraph of Section 4 to explicitly state dataset cardinality, number of test clips, and tissue-type coverage. These changes make the experimental claims self-contained without altering the underlying results. revision: partial

Circularity Check

No circularity: empirical method with independent benchmarks


The paper introduces a dataset and a recurrent restoration network (MIRA) evaluated via direct comparison to baselines on PSNR/SSIM. No equations, derivations, fitted parameters renamed as predictions, or self-referential definitions appear in the abstract or described content. The reference mosaics are constructed externally via stitching and stabilization; they are not derived from the model's outputs or fitted to its predictions. Central claims rest on experimental results against external baselines rather than reducing to inputs by construction. This is a standard empirical contribution with no load-bearing self-citation chains or ansatzes.


Reference graph

Works this paper leans on

- [1] Carbone, F., Fochi, N.P., Di Perna, G., Wagner, A., Schlegel, J., Ranieri, E., Spetzger, U., Armocida, D., Cofano, F., Garbossa, D., et al.: Confocal laser endomicroscopy: enhancing intraoperative decision making in neurosurgery. Diagnostics (2025)

- [2] Chan, K.C., Zhou, S., Xu, X., Loy, C.C.: BasicVSR++: Improving video super-resolution with enhanced propagation and alignment. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (2022)

- [3] Chauhan, S.S., Dayyeh, B.K.A., Bhat, Y.M., Gottlieb, K.T., Hwang, J.H., Komanduri, S., Konda, V., Lo, S.K., Manfredi, M.A., Maple, J.T., et al.: Confocal laser endomicroscopy. Gastrointestinal Endoscopy (2014)

- [4] Cho, S.J., Ji, S.W., Hong, J.P., Jung, S.W., Ko, S.J.: Rethinking coarse-to-fine approach in single image deblurring. In: Proceedings of the IEEE/CVF International Conference on Computer Vision (2021)

- [5] Ghasemabadi, A., Janjua, M.K., Salameh, M., Niu, D.: Learning truncated causal history model for video restoration. In: Advances in Neural Information Processing Systems (2024)

- [6] Hwang, K., Seo, Y.H., Ahn, J., Kim, P., Jeong, K.H.: Frequency selection rule for high definition and high frame rate Lissajous scanning. Scientific Reports (2017)

- [7] Hwang, K., Seo, Y.H., Kim, D.Y., Ahn, J., Lee, S., Han, K.H., Lee, K.H., Jon, S., Kim, P., Yu, K.E., et al.: Handheld endomicroscope using a fiber-optic harmonograph enables real-time and in vivo confocal imaging of living cell morphology and capillary perfusion. Microsystems & Nanoengineering (2020)

- [8] Jeon, J., Kim, H., Jang, H., Hwang, K., Kim, K., Park, Y.G., Jeong, K.H.: Handheld laser scanning microscope catheter for real-time and in vivo confocal microscopy using a high definition high frame rate Lissajous MEMS mirror. Biomedical Optics Express (2022)

- [9] Kong, L., Dong, J., Ge, J., Li, M., Pan, J.: Efficient frequency domain-based transformers for high-quality image deblurring. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (2023)

- [10] Latt, W.T., Newton, R.C., Visentini-Scarzanella, M., Payne, C.J., Noonan, D.P., Shang, J., Yang, G.Z.: A hand-held instrument to maintain steady tissue contact during probe-based confocal laser endomicroscopy. IEEE Transactions on Biomedical Engineering (2011)

- [11] Liang, J., Fan, Y., Xiang, X., Ranjan, R., Ilg, E., Green, S., Cao, J., Zhang, K., Timofte, R., Gool, L.V.: Recurrent video restoration transformer with guided deformable attention. In: Advances in Neural Information Processing Systems (2022)

- [12] Liu, X., Sun, G., Wang, C., Yuan, Y., Konukoglu, E.: MedVSR: Medical video super-resolution with cross state-space propagation. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (2025)

- [13] Loewke, N.O., Qiu, Z., Mandella, M.J., Ertsey, R., Loewke, A., Gunaydin, L.A., Rosenthal, E.L., Contag, C.H., Solgaard, O.: Software-based phase control, video-rate imaging, and real-time mosaicing with a Lissajous-scanned confocal microscope. IEEE Transactions on Medical Imaging (2019)

- [14] Ma, J., He, Y., Li, F., Han, L., You, C., Wang, B.: Segment anything in medical images. Nature Communications (2024)

- [15] Maggioni, M., Huang, Y., Li, C., Xiao, S., Fu, Z., Song, F.: Efficient multi-stage video denoising with recurrent spatio-temporal fusion. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (2021)

- [16] Mahé, J., Linard, N., Tafreshi, M.K., Vercauteren, T., Ayache, N., Lacombe, F., Cuingnet, R.: Motion-aware mosaicing for confocal laser endomicroscopy. In: International Conference on Medical Image Computing and Computer-Assisted Intervention (2015)

- [17] Mao, X., Liu, Y., Liu, F., Li, Q., Shen, W., Wang, Y.: Intriguing findings of frequency selection for image deblurring. In: Proceedings of the AAAI Conference on Artificial Intelligence (2023)

- [18] Marques, M.J., Hughes, M.R., Vyas, K., Thrapp, A., Zhang, H., Bradu, A., Gelikonov, G., Giataganas, P., Payne, C.J., Yang, G.Z., et al.: En-face optical coherence tomography/fluorescence endomicroscopy for minimally invasive imaging using a robotic scanner. Journal of Biomedical Optics (2019)

- [19] Ozyoruk, K.B., Gokceler, G.I., Bobrow, T.L., Coskun, G., Incetan, K., Almalioglu, Y., Mahmood, F., Curto, E., Perdigoto, L., Oliveira, M., Sahin, H., Araujo, H., Alexandrino, H., Durr, N.J., Gilbert, H.B., Turan, M.: EndoSLAM dataset and an unsupervised monocular visual odometry and depth estimation approach for endoscopic videos. Medical Image Analysis (2021)

- [20] Qi, C., Chen, J., Yang, X., Chen, Q.: Real-time streaming video denoising with bidirectional buffers. In: Proceedings of the ACM International Conference on Multimedia (2022)

- [21] Ranjan, A., Black, M.J.: Optical flow estimation using a spatial pyramid network. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (2017)

- [22] Ronneberger, O., Fischer, P., Brox, T.: U-Net: Convolutional networks for biomedical image segmentation. In: International Conference on Medical Image Computing and Computer-Assisted Intervention (2015)

- [23] Stoeve, M., Aubreville, M., Oetter, N., Knipfer, C., Neumann, H., Stelzle, F., Maier, A.: Motion artifact detection in confocal laser endomicroscopy images. Bildverarbeitung für die Medizin (2018)

- [24] Studier-Fischer, A., Seidlitz, S., Sellner, J., Bressan, M., Özdemir, B., Ayala, L., Odenthal, J., Knoedler, S., Kowalewski, K.F., Haney, C.M., Salg, G., Dietrich, M., Kenngott, H., Gockel, I., Hackert, T., Müller-Stich, B.P., Maier-Hein, L., Nickel, F.: HeiPorSPECTRAL - the Heidelberg porcine hyperspectral imaging dataset of 20 physiological organs. Scientific Data (2023)

- [25] Sun, D., Yang, X., Liu, M.Y., Kautz, J.: PWC-Net: CNNs for optical flow using pyramid, warping, and cost volume. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (2018)

- [26] Wallace, M.B., Fockens, P.: Probe-based confocal laser endomicroscopy. Gastroenterology (2009)

- [27] Zitova, B., Flusser, J.: Image registration methods: A survey. Image and Vision Computing (2003)
