
Recovering the Hidden Reward in Diffusion-Based Policies

Pith reviewed 2026-05-12 02:48 UTC · model grok-4.3

The pith

Diffusion policies recover the expert's hidden reward by equating their denoising score with the gradient of the expert's soft Q-function, an identity that holds when the expert is maximum-entropy optimal.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

EnergyFlow unifies generative action modeling with inverse reinforcement learning by parameterizing a scalar energy function whose gradient is the denoising field. Under maximum-entropy optimality, the score function learned via denoising score matching recovers the gradient of the expert's soft Q-function, enabling reward extraction without adversarial training. Formally, constraining the learned field to be conservative reduces hypothesis complexity and tightens out-of-distribution generalization bounds. The framework also characterizes the identifiability of recovered rewards and bounds how score estimation errors propagate to action preferences.
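
The central identity can be checked numerically on a toy problem. The sketch below is not the paper's code: the 1-D soft Q-function `q_value`, the temperature `alpha`, and the action grid are all illustrative assumptions. It builds the maximum-entropy policy on a grid and compares its finite-difference score with the analytic gradient of Q divided by the temperature.

```python
import numpy as np

# Hypothetical toy soft Q-function over a 1-D action for one fixed state.
def q_value(a):
    return np.sin(2.0 * a) - 0.5 * a**2

def q_grad(a):
    return 2.0 * np.cos(2.0 * a) - a

alpha = 0.7  # assumed entropy temperature
actions = np.linspace(-4.0, 4.0, 4001)
da = actions[1] - actions[0]

# Max-ent policy: pi(a|s) = exp(Q/alpha) / Z(s), with Z(s) independent of a.
logits = q_value(actions) / alpha
log_z = np.log(np.sum(np.exp(logits - logits.max())) * da) + logits.max()
log_pi = logits - log_z

# Score of the policy, estimated by finite differences on the grid.
score = np.gradient(log_pi, actions)

# Compare with grad_a Q / alpha on interior points (edge stencils are one-sided).
max_err = np.max(np.abs(score[1:-1] - q_grad(actions[1:-1]) / alpha))
print(f"max |score - grad Q / alpha| = {max_err:.2e}")
```

The normalizer `log_z` shifts `log_pi` by a constant, so it drops out of the derivative. This is the step that lets a score model expose the Q-gradient without estimating a partition function.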

What carries the argument

A scalar energy function whose gradient serves as a conservative denoising field that, under maximum-entropy optimality, equals the gradient of the expert's soft Q-function.
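
To illustrate what "conservative" means in practice, the sketch below compares a field defined as the negative gradient of a scalar energy with an unconstrained linear field. The tiny tanh energy and the matrix `A` are hypothetical stand-ins, not the EnergyFlow architecture. A conservative field has a symmetric Jacobian (it is minus the Hessian of the energy), while an unconstrained field generally does not.

```python
import numpy as np

rng = np.random.default_rng(0)
dim, hidden = 3, 16

# Hypothetical scalar energy E(a) = w2 . tanh(W1 a + b1); not the paper's network.
W1 = rng.normal(size=(hidden, dim))
b1 = rng.normal(size=hidden)
w2 = rng.normal(size=hidden)

def energy_field(a):
    """Field defined as -grad_a E(a), computed analytically."""
    h = np.tanh(W1 @ a + b1)
    return -(W1.T @ (w2 * (1.0 - h**2)))

# Unconstrained baseline: a linear vector field with a non-symmetric matrix.
A = rng.normal(size=(dim, dim))

def free_field(a):
    return A @ a

def jacobian(field, a, eps=1e-5):
    """Central finite-difference Jacobian, J[i, j] = d field_i / d a_j."""
    cols = []
    for j in range(a.size):
        step = np.zeros_like(a)
        step[j] = eps
        cols.append((field(a + step) - field(a - step)) / (2.0 * eps))
    return np.stack(cols, axis=1)

def asymmetry(field, a):
    """Largest entry of |J - J^T|; zero for an exactly conservative field."""
    J = jacobian(field, a)
    return np.max(np.abs(J - J.T))

a0 = rng.normal(size=dim)
energy_asym = asymmetry(energy_field, a0)
free_asym = asymmetry(free_field, a0)
print(f"energy-gradient field asymmetry: {energy_asym:.2e}")
print(f"unconstrained field asymmetry:   {free_asym:.2e}")
```

The energy-gradient field is symmetric up to finite-difference error, whereas the unconstrained field carries a rotational component that no scalar potential can represent.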

Load-bearing premise

The expert policy is optimal under maximum entropy, and constraining the denoising field to be conservative still permits accurate modeling of the observed action distribution.

What would settle it

Running reinforcement learning with the recovered reward on the original tasks and observing that the resulting policy underperforms the expert or adversarial baselines would falsify the claim that the energy gradient recovers a usable reward.

Figures

Original abstract

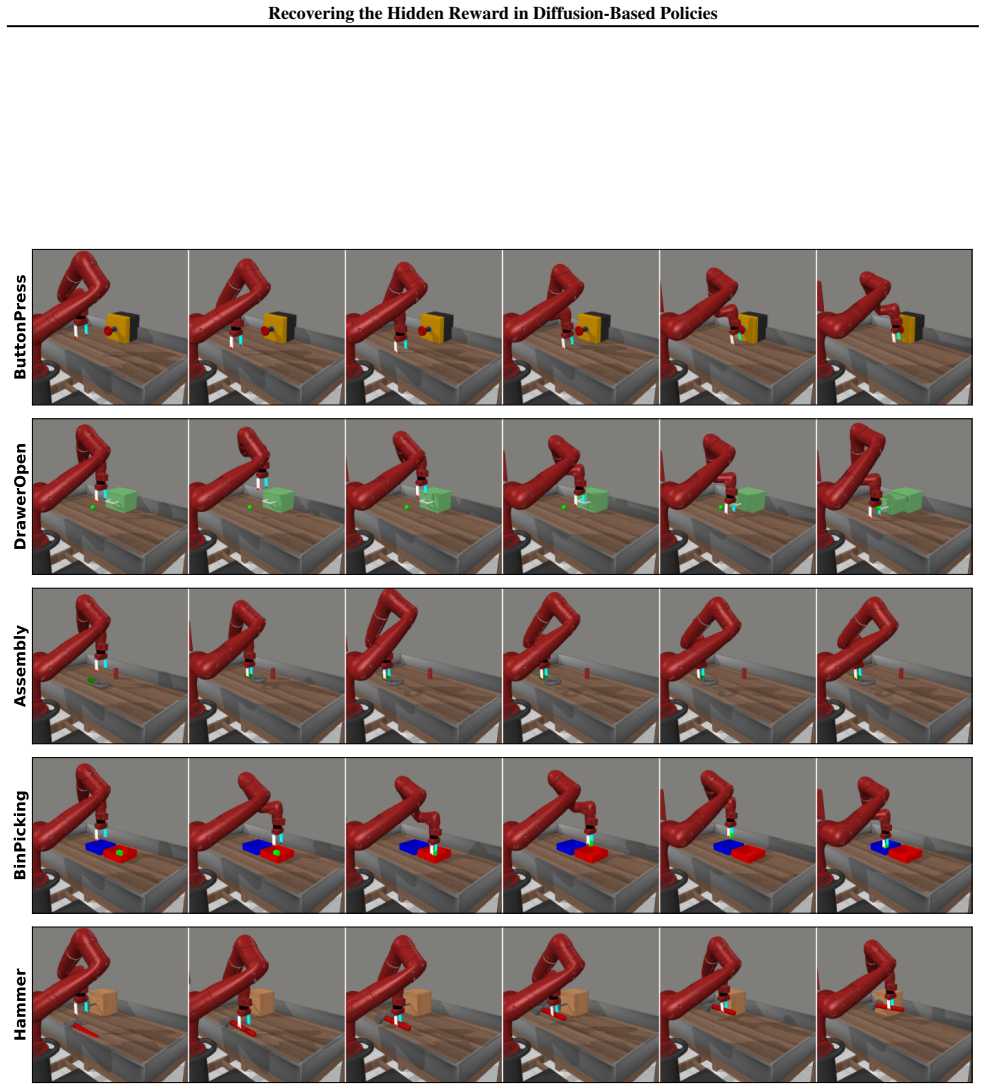

This paper introduces EnergyFlow, a framework that unifies generative action modeling with inverse reinforcement learning by parameterizing a scalar energy function whose gradient is the denoising field. We establish that under maximum-entropy optimality, the score function learned via denoising score matching recovers the gradient of the expert's soft Q-function, enabling reward extraction without adversarial training. Formally, we prove that constraining the learned field to be conservative reduces hypothesis complexity and tightens out-of-distribution generalization bounds. We further characterize the identifiability of recovered rewards and bound how score estimation errors propagate to action preferences. Empirically, EnergyFlow achieves state-of-the-art imitation performance on various manipulation tasks while providing an effective reward signal for downstream reinforcement learning that outperforms both adversarial IRL methods and likelihood-based alternatives. These results show that the structural constraints required for valid reward extraction simultaneously serve as beneficial inductive biases for policy generalization. The code is available at https://github.com/sotaagi/EnergyFlow.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces EnergyFlow, a framework that parameterizes diffusion-based policies via a scalar energy function whose gradient is the denoising score. Under maximum-entropy optimality of the expert policy, it claims that denoising score matching recovers the gradient of the expert's soft Q-function, enabling direct reward extraction without adversarial training. It proves that the conservative (gradient-of-scalar) parameterization reduces hypothesis complexity and tightens out-of-distribution generalization bounds, while also characterizing reward identifiability and bounding propagation of score estimation errors to action preferences. Empirically, EnergyFlow reports state-of-the-art imitation learning performance on manipulation tasks and supplies effective rewards for downstream RL that outperform both adversarial IRL and likelihood-based baselines. Code is released at the provided GitHub link.

Significance. If the derivations hold, the work offers a principled unification of generative diffusion modeling with inverse RL that avoids adversarial training while leveraging the conservative inductive bias for both reward recovery and improved generalization. The explicit proofs of identifiability and error bounds, together with the released code, would make the contribution verifiable and extensible. The empirical claims, if supported by rigorous baselines, would indicate practical value for robotics manipulation.

minor comments (2)

- The abstract and introduction would benefit from explicit forward references to the theorem numbers or sections containing the proofs of Q-gradient recovery and the OOD bound tightening (e.g., the statement that the conservative constraint 'reduces hypothesis complexity').

- Empirical results would be strengthened by an ablation isolating the effect of the conservative parameterization versus an unconstrained score model, including quantitative metrics on both imitation performance and downstream RL reward quality.

Simulated Author's Rebuttal

We thank the referee for the positive summary, significance assessment, and recommendation of minor revision. The report correctly captures the core technical contributions of EnergyFlow, including the recovery of soft Q-function gradients via denoising score matching under maximum-entropy optimality, the conservative parameterization benefits for generalization, and the empirical advantages in imitation and downstream RL. No specific major comments or requested changes were listed in the report.

Circularity Check

No significant circularity; derivation follows from standard max-ent form

full rationale

The paper's core claim, that the learned denoising score recovers ∇Q under max-ent optimality, follows directly from the known policy form π(a|s) ∝ exp(Q(s,a)/α) with temperature α and an action-independent partition function, so that ∇_a log π = ∇_a Q / α. Parameterizing the field as the gradient of a scalar energy makes it conservative by construction. Because every score function is the gradient of a log-density and is therefore already conservative, the constraint costs no expressivity for representing a true score, and it is not smuggled in via self-citation or a fitted input. No load-bearing step reduces to a self-definition, a renamed empirical pattern, or an unverified self-citation chain. The identifiability and generalization bounds are presented as consequences of these external facts rather than as tautological reparameterizations.
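
The step the rationale relies on can be written out as a short derivation. It is a sketch in the notation of the excerpted Eq. (28), with an explicit temperature α and the sign convention that the score is minus the energy gradient. Both are assumptions about the paper's conventions, and the identity is stated for the clean policy rather than for a noise-perturbed one.

```latex
\begin{align*}
\pi^*(a \mid s) &= \frac{\exp\!\big(Q^*(s,a)/\alpha\big)}{Z(s)},
  \qquad Z(s) = \int \exp\!\big(Q^*(s,a')/\alpha\big)\,da', \\
\nabla_a \log \pi^*(a \mid s)
  &= \frac{1}{\alpha}\nabla_a Q^*(s,a) - \nabla_a \log Z(s)
   = \frac{1}{\alpha}\nabla_a Q^*(s,a), \\
-\nabla_a E_\phi(a,s) = \nabla_a \log \pi^*(a \mid s)
  \;&\Longrightarrow\;
  E_\phi(a,s) = -\frac{Q^*(s,a)}{\alpha} + c(s).
\end{align*}
```

The term $\nabla_a \log Z(s)$ vanishes because $Z(s)$ does not depend on $a$. Integrating the last gradient identity over $a$ is what introduces the state-dependent constant $c(s)$.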

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[13]

$\sup_{\|W\|_F \le \Lambda} \sum_{i=1}^{n} \langle \sigma_i, W\phi(x_i) \rangle \Big] = \mathbb{E}_\sigma$

A. Proofs. A.1. Proof of Theorem 3.6. Theorem 3.6 (Complexity Reduction via Conservative Constraints). Let $\phi: \mathbb{R}^{\mathrm{in}} \to \mathbb{R}^{k}$ be a neural feature representation with bounded feature norm $\sup_x \|\phi(x)\|_2 \le B$ and bounded Jacobian Frobenius norm $\sup_x \|J_\phi(x)\|_F \le L$. Let $F_{\mathrm{unc}}$ be the class of arbitra...


-

[14]

Thus, on the bounded domain, the squared loss is Lipschitz with constant $2M$

The squared loss is not globally Lipschitz, but under the boundedness assumption ($\|f(x)\|_2 \le M$ and $\|h^*(x)\|_2 \le M$ for all $x$), the loss is restricted to a bounded domain where $|\ell(y_1) - \ell(y_2)| = |y_1^2 - y_2^2| = |y_1 + y_2|\,|y_1 - y_2| \le 2M\,|y_1 - y_2|$. Thus, on the bounded domain, the squared loss is Lipschitz with constant $2M$. By Talagrand's contraction lemma: $R_S(\ell \circ H) \le 2M$...
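
The Lipschitz bound in this excerpt is easy to sanity-check numerically. The following is a minimal sketch for the scalar case, with an assumed bound `M = 3.0` and random sample pairs. It is an illustration only and does not reproduce the paper's vector-valued setting.

```python
import numpy as np

M = 3.0  # assumed bound on |y|
rng = np.random.default_rng(0)

def sq_loss(y):
    return y**2

# Sample pairs inside the bounded domain [-M, M].
y1 = rng.uniform(-M, M, size=100_000)
y2 = rng.uniform(-M, M, size=100_000)

# Check |l(y1) - l(y2)| <= 2M |y1 - y2| on every sampled pair.
lhs = np.abs(sq_loss(y1) - sq_loss(y2))
rhs = 2.0 * M * np.abs(y1 - y2)
bound_holds = bool(np.all(lhs <= rhs + 1e-12))

# The constant is tight: near y = M the local slope approaches 2M.
h = 1e-6
slope_at_edge = (sq_loss(M) - sq_loss(M - h)) / h

print(bound_holds, slope_at_edge)
```

The slope near the edge of the domain shows that $2M$ cannot be improved without shrinking the domain, which is why the boundedness assumption enters the contraction step.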

-

[15]

Within-state ranking is exact: For any fixed state $s$, $\arg\min_a E_\phi(a,s) = \arg\max_a Q^*(s,a)$

-

[16]

Cross-state comparison is ambiguous: The difference $E_\phi(a,s) - E_\phi(a',s')$ includes the unknown quantity $c(s) - c(s')$. Proof. From Theorem 3.3, the learned energy satisfies $E_\phi(a,s) = -\frac{Q^*(s,a)}{\alpha} + c(s)$ (28), where $c(s)$ is a state-dependent constant arising from integration. Within-state ranking. For a fixed...
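
The two identifiability statements can be made concrete with a small tabular example. The sketch below assumes a random table `q_star`, random offsets `c`, and a temperature `alpha`, all hypothetical. It builds an energy of the form in Eq. (28), then checks that rankings within a state are preserved while differences across states absorb the unknown offset.

```python
import numpy as np

rng = np.random.default_rng(0)
n_states, n_actions = 4, 6
alpha = 0.5  # assumed temperature

# Hypothetical ground-truth Q*(s, a) table and unknown per-state offsets c(s).
q_star = rng.normal(size=(n_states, n_actions))
c = rng.normal(scale=10.0, size=n_states)

# Learned energy following Eq. (28): E(a, s) = -Q*(s, a) / alpha + c(s).
energy = -q_star / alpha + c[:, None]

# Within-state ranking: argmin of energy matches argmax of Q* in every state.
within_state_ok = bool(np.all(energy.argmin(axis=1) == q_star.argmax(axis=1)))

# Cross-state comparison: the energy gap mixes the Q* gap with c(s) - c(s').
s, a, s2, a2 = 0, 1, 2, 3
energy_gap = energy[s, a] - energy[s2, a2]
q_gap_term = -(q_star[s, a] - q_star[s2, a2]) / alpha
offset_term = c[s] - c[s2]

print(within_state_ok, energy_gap, q_gap_term + offset_term)
```

Because `offset_term` is unobserved, the energy gap between two states cannot be converted into a Q-value gap without extra information, which is the ambiguity the excerpt describes.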


-

[17]

Each block utilizes residual connections and Group Normalization (groups=8). • Conditioning: The state embedding $c_{\mathrm{state}}$ and time embedding are injected into every convolutional block via Feature-wise Linear Modulation (FiLM), ensuring the energy landscape is globally conditioned on the current agent state. Modifications for Energy Parameterization. To sati...
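
For readers unfamiliar with FiLM, the conditioning described in this excerpt can be sketched as follows. This is a simplified NumPy illustration and not the paper's block. The convolution is omitted; the helper names `group_norm` and `film_block`, the `1 + scale` convention, and all shapes are assumptions made for the example.

```python
import numpy as np

def group_norm(x, groups=8, eps=1e-5):
    """Normalize a (channels, length) feature map within each channel group."""
    channels, length = x.shape
    g = x.reshape(groups, channels // groups, length)
    mean = g.mean(axis=(1, 2), keepdims=True)
    var = g.var(axis=(1, 2), keepdims=True)
    return ((g - mean) / np.sqrt(var + eps)).reshape(channels, length)

def film_block(x, cond, w_scale, w_shift):
    """Residual block: GroupNorm, then per-channel FiLM scale and shift from cond."""
    scale = w_scale @ cond           # shape (channels,)
    shift = w_shift @ cond           # shape (channels,)
    h = group_norm(x)
    h = (1.0 + scale)[:, None] * h + shift[:, None]
    return x + h                     # residual connection

rng = np.random.default_rng(0)
channels, length, cond_dim = 16, 10, 5
x = rng.normal(size=(channels, length))
cond = rng.normal(size=cond_dim)     # stands in for the state and time embeddings
w_scale = 0.1 * rng.normal(size=(channels, cond_dim))
w_shift = 0.1 * rng.normal(size=(channels, cond_dim))

out = film_block(x, cond, w_scale, w_shift)
print(out.shape)
```

The conditioning vector modulates every channel of every block, so the whole feature map depends on the current state rather than only on the input layer.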

-

[18]

Since our training objective (Eq

$C^2$ Differentiable Activations: The standard ReLU activation is non-differentiable at zero. Since our training objective (Eq. (15)) involves the derivative of the score (which is the second derivative of the energy), the network must be twice-differentiable ($C^2$). We replace all ReLU activations with Mish. This ensures a smooth gradient flow during the double...
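
The smoothness argument can be illustrated with a finite-difference estimate of the second derivative at zero. The sketch below uses the standard definition Mish(x) = x · tanh(softplus(x)). The helper `second_difference` and the step sizes are illustrative choices, not taken from the paper.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def mish(x):
    # Mish(x) = x * tanh(softplus(x)); logaddexp(0, x) is a stable softplus.
    return x * np.tanh(np.logaddexp(0.0, x))

def second_difference(f, x, h):
    """Central finite-difference estimate of f''(x)."""
    return (f(x + h) - 2.0 * f(x) + f(x - h)) / h**2

# As h shrinks, the ReLU estimate grows like 1/h while the Mish estimate settles.
for h in (1e-1, 1e-2, 1e-3):
    print(h, second_difference(relu, 0.0, h), second_difference(mish, 0.0, h))
```

The ReLU estimate diverges because its first derivative jumps at zero, whereas the Mish estimate converges to a finite value. An objective that differentiates the score therefore sees a well-defined curvature everywhere with Mish.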

