
ShiftLIF: Efficient Multi-Level Spiking Neurons with Power-of-Two Quantization

Pith reviewed 2026-05-08 19:13 UTC · model grok-4.3

The pith

ShiftLIF maps membrane potentials to logarithmically spaced power-of-two levels so multi-level spiking neurons gain accuracy while synaptic energy stays near binary LIF levels.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

ShiftLIF maps the membrane potential to a logarithmically spaced set of power-of-two spike levels. This mapping supplies finer resolution near zero where potentials cluster, and it converts all synaptic multiplications into bit-shift and add operations. When embedded in networks trained on ten cross-modal datasets, the resulting models match or surpass the accuracy of earlier multi-level spiking neurons while keeping synaptic energy consumption comparable to that of ordinary binary LIF neurons.

What carries the argument

The logarithmically spaced power-of-two spike set that replaces uniform quantization and turns synaptic multiplications into bit shifts.
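Below is a minimal Python sketch of the quantization step. It uses the spike set quoted later on this page, {0, 2^-K, ..., 2^-1, 1}. It also assumes that each membrane potential is clipped to [0, 1] and rounded to the nearest level by linear distance. The paper's exact rounding and reset rules are not stated here, so `spike_alphabet` and `shift_lif_quantize` are hypothetical names, not the authors' code.

```python
def spike_alphabet(K: int) -> list[float]:
    """Return the levels {0, 2^-K, 2^-(K-1), ..., 2^-1, 1} in ascending order."""
    # 2^-k for k = K, K-1, ..., 0; the last term is 2^0 = 1
    return [0.0] + [2.0 ** -k for k in range(K, -1, -1)]


def shift_lif_quantize(v: float, K: int = 2) -> float:
    """Map a membrane potential v to one spike level (hypothetical rounding rule).

    Assumptions: potentials are clipped to [0, 1] and rounded to the nearest
    level by absolute distance; ties go to the lower level.
    """
    v = min(max(v, 0.0), 1.0)
    return min(spike_alphabet(K), key=lambda s: abs(s - v))


# With K = 2 the levels are 0, 0.25, 0.5, 1, so the decision boundaries
# (0.125, 0.375, 0.75) are densest near zero, where potentials cluster.
print([shift_lif_quantize(v) for v in (0.05, 0.3, 0.7, 1.4)])  # [0.0, 0.25, 0.5, 1.0]
```

Rounding to the nearest level is only one plausible choice. A floor or threshold-crossing rule would shift the boundaries but keep the same level set.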

If this is right

- Multi-level spiking neurons can be used on edge hardware without introducing multiplier circuits.

- Synaptic energy per operation stays comparable to binary LIF even though each neuron emits more distinct spike values.

- The same architecture works across wireless, acoustic, motion, and visual sensing tasks without task-specific redesign of the spike levels.

- Bit-shift accumulation replaces floating-point or integer multiplies in the forward pass, reducing both latency and power on standard digital logic.
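The multiplier-free claim rests on a simple identity: multiplying a weight by a spike of value 2^-k is a right shift by k bits. The sketch below assumes integer fixed-point weights. It encodes each spike by its shift amount k, or by `None` for a zero spike. This encoding and the function name are illustrative assumptions, not the paper's implementation.

```python
from typing import Optional, Sequence


def shift_add_accumulate(weights: Sequence[int], shifts: Sequence[Optional[int]]) -> int:
    """Accumulate sum_i w_i * 2^-k_i with right shifts and adds only.

    shifts[i] = k means presynaptic neuron i emitted a spike of value 2^-k
    (k = 0 is a full spike of 1); None means it was silent (spike value 0).
    """
    acc = 0
    for w, k in zip(weights, shifts):
        if k is None:
            continue       # zero spike: no operation, as with a silent binary LIF input
        acc += w >> k      # arithmetic right shift computes floor(w / 2**k)
    return acc


# Weights in a hypothetical fixed-point format; spikes 1, 1/2, 1/4, and 0.
print(shift_add_accumulate([64, -32, 100, 7], [0, 1, 2, None]))  # 73 = 64 - 16 + 25
```

Because `>>` floors, the result equals the exact product only when w is a multiple of 2^k. For example, 5 >> 1 gives 2 rather than 2.5. A real datapath would therefore need enough fractional bits to keep this truncation error small.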

Where Pith is reading between the lines

- If deeper layers shift the membrane-potential distribution away from the small-amplitude peak, the fixed power-of-two levels may need periodic re-centering during training.

- The bit-shift property could reduce silicon area in custom neuromorphic accelerators that already implement shift-add ALUs.

- The approach might combine with temporal coding or adaptive thresholds to further raise information per spike on the same energy budget.

- Hardware measurements on actual silicon would be needed to confirm that spike encoding and routing overheads do not offset the claimed multiplier savings.

Load-bearing premise

Logarithmically spaced power-of-two levels remain effective even when membrane-potential distributions change across tasks, layers, or training regimes.

What would settle it

Measure accuracy on a new dataset whose membrane-potential histogram is uniform or broadly Gaussian rather than sharply peaked near zero. If ShiftLIF accuracy there falls below uniform-quantization baselines, the central claim fails.
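Before running a full accuracy study, a cheap proxy for this test is the quantization error of each level set on synthetic potentials. The sketch below compares the four ShiftLIF levels for K=2 with four uniformly spaced levels on [0, 1]. It uses two samples: an exponential one (peaked near zero, mean 0.1) and a uniform one. Both distributions and the mean-squared-error proxy are assumptions chosen for illustration, and low quantization error does not by itself guarantee accuracy.

```python
import random

LOG_LEVELS = [0.0, 0.25, 0.5, 1.0]             # ShiftLIF set for K = 2
UNIFORM_LEVELS = [0.0, 1 / 3, 2 / 3, 1.0]      # same number of levels, evenly spaced


def quantize(levels: list[float], v: float) -> float:
    """Clip v to [0, 1] and round to the nearest level."""
    v = min(max(v, 0.0), 1.0)
    return min(levels, key=lambda s: abs(s - v))


def mse(levels: list[float], samples: list[float]) -> float:
    """Mean squared error between raw samples and their quantized values."""
    return sum((v - quantize(levels, v)) ** 2 for v in samples) / len(samples)


rng = random.Random(0)                                       # fixed seed for repeatability
peaked = [rng.expovariate(10.0) for _ in range(50_000)]      # dense near zero
flat = [rng.uniform(0.0, 1.0) for _ in range(50_000)]        # no small-amplitude peak

for name, samples in (("peaked", peaked), ("flat", flat)):
    print(name, "log:", round(mse(LOG_LEVELS, samples), 5),
          "uniform:", round(mse(UNIFORM_LEVELS, samples), 5))
```

On the peaked sample the power-of-two levels give the lower error. On the flat sample the uniform levels do, analytically about 0.0093 versus 0.0130 for the power-of-two levels. This reversal is the mechanism the proposed test probes.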


Original abstract

Spiking neural networks (SNNs) are promising for edge sensing due to their event-driven computation and temporal filtering capability. However, standard leaky integrate-and-fire (LIF) neurons communicate only through binary spikes, which severely limit representational capacity. Existing multi-level spiking neurons improve information transmission, but often rely on uniform quantization that mismatches membrane-potential distributions or introduces costly synaptic multiplications. In this paper, we propose ShiftLIF, a multi-level spiking neuron that maps membrane potentials to a logarithmically spaced power-of-two spike set. This design provides finer representation in the small-amplitude regime, where membrane potentials are densely concentrated, while enabling multiplier-free synaptic computation through bit-shift and accumulation operations. As a result, ShiftLIF improves spike-level expressiveness without sacrificing the hardware-friendly nature of standard SNN computation. We evaluate ShiftLIF on 10 datasets spanning wireless, acoustic, motion, and visual sensing tasks. Results show that ShiftLIF consistently matches or exceeds the accuracy of existing multi-level spiking neurons while maintaining synaptic energy consumption close to standard binary LIF. These results indicate that ShiftLIF provides a favorable accuracy-efficiency trade-off for cross-modal edge sensing.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes ShiftLIF, a multi-level spiking neuron model that quantizes membrane potentials using a fixed set of logarithmically spaced power-of-two levels. This design aims to provide higher resolution for small-amplitude potentials (where distributions are dense) while enabling multiplier-free synaptic computations via bit-shift and accumulate operations. The authors evaluate the approach on 10 datasets spanning wireless, acoustic, motion, and visual sensing tasks, claiming that ShiftLIF matches or exceeds the accuracy of prior multi-level spiking neurons while keeping synaptic energy consumption close to that of standard binary LIF neurons.

Significance. If the empirical results and hardware assumptions hold, ShiftLIF would represent a practical advance for energy-efficient SNNs on edge devices by improving representational capacity without introducing multiplications or adaptive quantization overhead. The power-of-two choice is a strength for hardware mapping, and the cross-modal evaluation on 10 datasets provides broader evidence than typical single-task SNN papers.

major comments (1)

- The central claim that ShiftLIF maintains accuracy and energy close to binary LIF rests on the assumption that a single static set of log-spaced power-of-two levels remains effective when membrane-potential distributions vary across layers, tasks, or training regimes. The manuscript reports results on 10 datasets but provides no ablation on level placement, no per-layer membrane-potential histograms, and no explicit tests of distribution mismatch (e.g., after batch-norm or in deeper networks). If the covered range is exceeded, either accuracy degrades or additional clipping/overflow logic is required, which would undermine the energy claim.

minor comments (1)

- The abstract and introduction would benefit from a brief explicit statement of the exact power-of-two levels chosen and the rationale for their spacing (e.g., number of levels and the base-2 exponents).

Simulated Author's Rebuttal

We thank the referee for the constructive feedback and for recognizing the potential of ShiftLIF as a practical advance for energy-efficient SNNs. We address the major comment point by point below and commit to revisions that directly strengthen the supporting evidence.


Referee: The central claim that ShiftLIF maintains accuracy and energy close to binary LIF rests on the assumption that a single static set of log-spaced power-of-two levels remains effective when membrane-potential distributions vary across layers, tasks, or training regimes. The manuscript reports results on 10 datasets but provides no ablation on level placement, no per-layer membrane-potential histograms, and no explicit tests of distribution mismatch (e.g., after batch-norm or in deeper networks). If the covered range is exceeded, either accuracy degrades or additional clipping/overflow logic is required, which would undermine the energy claim.

Authors: We agree that the manuscript does not contain explicit ablations on level placement, per-layer membrane-potential histograms, or targeted tests of distribution mismatch. The log-spaced power-of-two levels were selected to allocate higher resolution where membrane potentials are known to concentrate (near zero), a property observed across many SNN training regimes. The consistent accuracy results across 10 datasets spanning four sensing modalities provide indirect support for robustness, as these tasks and network depths induce varied potential statistics. However, direct visualization and controlled variation would strengthen the claim. In the revised manuscript we will add (1) per-layer histograms of membrane potentials extracted from trained models on representative datasets, (2) an ablation comparing our fixed power-of-two levels against uniform quantization and alternative log placements, and (3) quantification of how often potentials exceed the highest level together with the exact clipping implementation used. Clipping is performed by simple saturation to the maximum power-of-two value; this requires only comparison logic already present in standard fixed-point arithmetic and does not introduce multiplications or adaptive overhead, thereby preserving the claimed energy profile. These additions will be placed in a new experimental subsection and will not alter the core method or reported accuracy numbers.

Revision: yes
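The saturation step described in the response can be sketched in integer fixed point as a single comparison. The sketch also computes the overflow rate the authors promise to report. The Q8 format, in which the top level 1 is stored as 256, and the function names are hypothetical choices for illustration.

```python
from typing import Sequence

FRAC_BITS = 8                 # hypothetical Q8 fixed point: real value = integer / 256
V_MAX = 1 << FRAC_BITS        # the highest spike level, 1, encoded as 256


def saturate(v: int, v_max: int = V_MAX) -> int:
    """Clip a fixed-point potential to the highest level using one comparison."""
    return v_max if v > v_max else v


def overflow_rate(potentials: Sequence[int], v_max: int = V_MAX) -> float:
    """Fraction of potentials that exceed the highest level and get saturated."""
    if not potentials:
        return 0.0
    return sum(1 for v in potentials if v > v_max) / len(potentials)


batch = [0, 64, 256, 300, 1000]          # 0, 0.25, 1.0, ~1.17, ~3.9 in real units
print([saturate(v) for v in batch])      # [0, 64, 256, 256, 256]
print(overflow_rate(batch))              # 0.4
```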

Circularity Check

No circularity: design motivated by distribution observation, not fitted or self-referential

full rationale

The paper's core proposal is a fixed, logarithmically spaced power-of-two quantization for multi-level spikes, chosen because membrane potentials concentrate at small amplitudes. This is presented as a heuristic design choice rather than a parameter fitted to the evaluation data or derived from a self-citation chain. No equations reduce the claimed accuracy or energy results to inputs by construction; the 10-dataset evaluation is independent of the level placement. No self-citations are invoked as load-bearing uniqueness theorems, and the method does not rename a known result or smuggle an ansatz. The derivation chain is therefore self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

- Constants (φ-ladder) · phi_golden_ratio · echoes: "ShiftLIF maps the continuous membrane potential to a logarithmically spaced set of spikes defined by powers of two, {0, 2^-K, 2^-(K-1), ..., 2^-1, 1}"

-

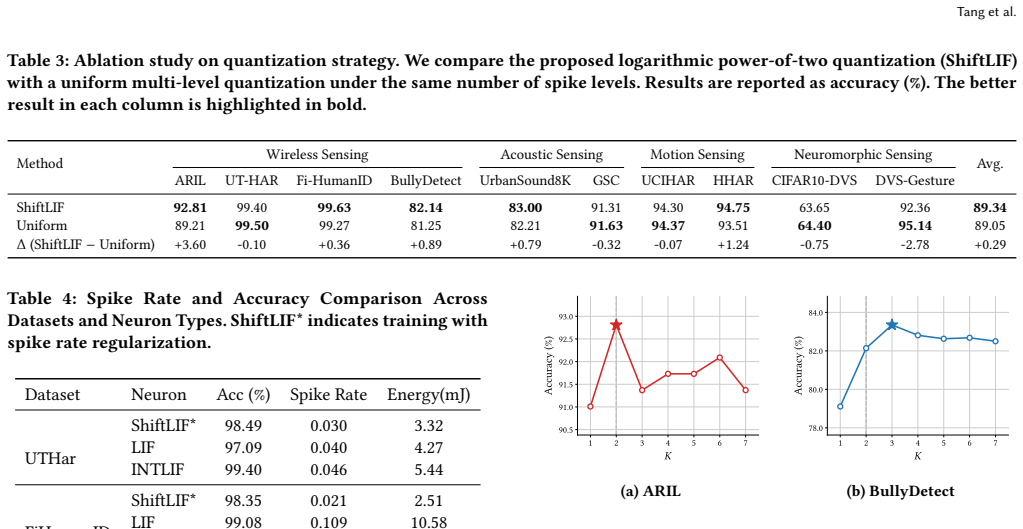

Cost.FunctionalEquation (parameter-free J)washburn_uniqueness_aczel unclearwe set the precision factor to K=2, defining a 2-level spike alphabet S={0, 2^-2, 2^-1, 1}. ... the optimum appears at K=2 on ARIL and K=3 on BullyDetect
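For the quoted setting K=2, S = {0, 2^-2, 2^-1, 1} has four elements, so each spike fits in a 2-bit code. K=3 adds 2^-3, which gives five levels and requires a third bit. The sketch below makes that count explicit and shows one possible code-to-shift mapping. The code assignment and function names are hypothetical, not taken from the paper.

```python
from typing import Optional


def alphabet_size(K: int) -> int:
    """Number of levels in {0, 2^-K, ..., 2^-1, 1}: zero plus K + 1 powers of two."""
    return K + 2


def bits_per_spike(K: int) -> int:
    """Smallest bit width that can index every level."""
    return (alphabet_size(K) - 1).bit_length()


def code_to_shift(code: int, K: int) -> Optional[int]:
    """Hypothetical encoding: code 0 is silence; codes 1..K+1 map to 2^-K, ..., 1."""
    if not 0 <= code < alphabet_size(K):
        raise ValueError(f"code {code} out of range for K={K}")
    return None if code == 0 else K + 1 - code


print(alphabet_size(2), bits_per_spike(2))          # 4 2
print(alphabet_size(3), bits_per_spike(3))          # 5 3
print([code_to_shift(c, 2) for c in range(4)])      # [None, 2, 1, 0]
```

With K=0 the formula reduces to {0, 1}, the binary LIF alphabet, which needs a single bit.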

Reference graph

Works this paper leans on

- [1] Arnon Amir, Brian Taba, David Berg, Timothy Melano, Jeffrey McKinstry, Carmelo Di Nolfo, Tapan Nayak, Alexander Andreopoulos, Guillaume Garreau, Marcela Mendoza, et al. 2017. A low power, fully event-based gesture recognition system. In Proceedings of the IEEE conference on computer vision and pattern recognition. 7243–7252.

- [2] Davide Anguita, Alessandro Ghio, Luca Oneto, Xavier Parra, Jorge Luis Reyes-Ortiz, et al. 2013. A public domain dataset for human activity recognition using smartphones. In Esann, Vol. 3. 3–4.

- [3] Suwhan Baek and Jaewon Lee. 2024. Snn and sound: a comprehensive review of spiking neural networks in sound. Biomedical Engineering Letters 14, 5 (2024), 981–991.

- [4] William M Connelly, Michael Laing, Adam C Errington, and Vincenzo Crunelli. 2016. The thalamus as a low pass filter: filtering at the cellular level does not equate with filtering at the network level. Frontiers in neural circuits 9 (2016), 89.

- [6] Samuel Coward, Theo Drane, Emiliano Morini, and George A Constantinides. 2024. Combining power and arithmetic optimization via datapath rewriting. In 2024 IEEE 31st Symposium on Computer Arithmetic (ARITH). IEEE, 24–31.

- [9] Wei Fang, Yanqi Chen, Jianhao Ding, Zhaofei Yu, Timothée Masquelier, Ding Chen, Liwei Huang, Huihui Zhou, Guoqi Li, and Yonghong Tian. 2023. Spikingjelly: An open-source machine learning infrastructure platform for spike-based intelligence. Science Advances 9, 40 (2023), eadi1480.

- [10] Wei Fang, Zhaofei Yu, Yanqi Chen, Tiejun Huang, Timothée Masquelier, and Yonghong Tian. 2021. Deep residual learning in spiking neural networks. Advances in neural information processing systems 34 (2021), 21056–21069.

- [11] Wei Fang, Zhaofei Yu, Yanqi Chen, Timothée Masquelier, Tiejun Huang, and Yonghong Tian. 2021. Incorporating learnable membrane time constant to enhance learning of spiking neural networks. In Proceedings of the IEEE/CVF international conference on computer vision. 2661–2671.

- [12] Wei Fang, Zhaofei Yu, Zhaokun Zhou, Ding Chen, Yanqi Chen, Zhengyu Ma, Timothée Masquelier, and Yonghong Tian. 2023. Parallel spiking neurons with high efficiency and ability to learn long-term dependencies. Advances in Neural Information Processing Systems 36 (2023), 53674–53687.

- [13] Yuetong Fang, Deming Zhou, Ziqing Wang, Hongwei Ren, ZeCui Zeng, Lusong Li, Renjing Xu, et al. [n. d.]. Spiking Neural Networks Need High-Frequency Information. In The Thirty-ninth Annual Conference on Neural Information Processing Systems.

- [14] Yufei Guo, Yuanpei Chen, Xiaode Liu, Weihang Peng, Yuhan Zhang, Xuhui Huang, and Zhe Ma. 2024. Ternary spike: Learning ternary spikes for spiking neural networks. In Proceedings of the AAAI conference on artificial intelligence, Vol. 38. 12244–12252.

- [15] Yufei Guo, Xuhui Huang, and Zhe Ma. 2023. Direct learning-based deep spiking neural networks: a review. Frontiers in Neuroscience 17 (2023), 1209795.

- [16] Yufei Guo, Xiaode Liu, Yuanpei Chen, Liwen Zhang, Weihang Peng, Yuhan Zhang, Xuhui Huang, and Zhe Ma. 2023. Rmp-loss: Regularizing membrane potential distribution for spiking neural networks. In Proceedings of the IEEE/CVF International Conference on Computer Vision. 17391–17401.

- [17] Yufei Guo, Xinyi Tong, Yuanpei Chen, Liwen Zhang, Xiaode Liu, Zhe Ma, and Xuhui Huang. 2022. Recdis-snn: Rectifying membrane potential distribution for directly training spiking neural networks. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 326–335.

- [18] Bing Han, Gopalakrishnan Srinivasan, and Kaushik Roy. 2020. Rmp-snn: Residual membrane potential neuron for enabling deeper high-accuracy and low-latency spiking neural network. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 13558–13567.

- [19] Zecheng Hao, Xinyu Shi, Yujia Liu, Zhaofei Yu, and Tiejun Huang. 2024. Lm-ht snn: Enhancing the performance of snn to ann counterpart through learnable multi-hierarchical threshold model. Advances in Neural Information Processing Systems 37 (2024), 101905–101927.

- [20] Yifan Hu, Lei Deng, Yujie Wu, Man Yao, and Guoqi Li. 2024. Advancing spiking neural networks toward deep residual learning. IEEE transactions on neural networks and learning systems 36, 2 (2024), 2353–2367.

- [22] Meng-Huang Lai and Kang-Shuo Chang. 2023. Ai sensor applications in edge computing. IEEE Nanotechnology Magazine 17, 6 (2023), 23–28.

- [23] Bo Lan, Fei Wang, Lekun Xia, Fan Nai, Shiqiang Nie, Han Ding, and Jinsong Han. 2024. Bullydetect: Detecting school physical bullying with wi-fi and deep wavelet transformer. IEEE Internet of Things Journal 12, 5 (2024), 5160–5169.

- [24] Yann LeCun, Léon Bottou, Yoshua Bengio, and Patrick Haffner. 2002. Gradient-based learning applied to document recognition. Proc. IEEE 86, 11 (2002), 2278–2324.

- [25] Hongmin Li, Hanchao Liu, Xiangyang Ji, Guoqi Li, and Luping Shi. 2017. Cifar10-dvs: an event-stream dataset for object classification. Frontiers in neuroscience 11 (2017), 244131.

- [27] Yuhang Li, Ruokai Yin, Youngeun Kim, and Priyadarshini Panda. 2023. Efficient human activity recognition with spatio-temporal spiking neural networks. Frontiers in Neuroscience 17 (2023), 1233037.

- [28] Yang Li, Xinyi Zeng, Zhe Xue, Pinxian Zeng, Zikai Zhang, and Yan Wang. 2025. Incorporating the Refractory Period into Spiking Neural Networks through Spike-Triggered Threshold Dynamics. In Proceedings of the 33rd ACM International Conference on Multimedia. 10876–10885.

- [29] Xinhao Luo, Man Yao, Yuhong Chou, Bo Xu, and Guoqi Li. 2024. Integer-valued training and spike-driven inference spiking neural network for high-performance and energy-efficient object detection. In European Conference on Computer Vision. Springer, 253–272.

- [31] Mattia Merluzzi, Nicola Di Pietro, Paolo Di Lorenzo, Emilio Calvanese Strinati, and Sergio Barbarossa. 2021. Discontinuous computation offloading for energy-efficient mobile edge computing. IEEE Transactions on Green Communications and Networking 6, 2 (2021), 1242–1257.

- [32] Emre O Neftci, Hesham Mostafa, and Friedemann Zenke. 2019. Surrogate gradient learning in spiking neural networks. IEEE Signal Processing Magazine 36, 6 (2019), 51–63.

- [35] Justin Salamon, Christopher Jacoby, and Juan Pablo Bello. 2014. A dataset and taxonomy for urban sound research. In Proceedings of the 22nd ACM international conference on Multimedia. 1041–1044.

- [36] Weisong Shi, Jie Cao, Quan Zhang, Youhuizi Li, and Lanyu Xu. 2016. Edge computing: Vision and challenges. IEEE internet of things journal 3, 5 (2016), 637–646.

- [37] Karen Simonyan and Andrew Zisserman. 2014. Very deep convolutional networks for large-scale image recognition. arXiv preprint arXiv:1409.1556 (2014).

- [39] Allan Stisen, Henrik Blunck, Sourav Bhattacharya, Thor Siiger Prentow, Mikkel Baun Kjærgaard, Anind Dey, Tobias Sonne, and Mads Møller Jensen. Smart devices are different: Assessing and mitigating mobile sensing heterogeneities for activity recognition. In Proceedings of the 13th ACM conference on embedded networked sensor systems. 127–140.

- [43] Hokchhay Tann, Soheil Hashemi, R Iris Bahar, and Sherief Reda. 2017. Hardware-software codesign of accurate, multiplier-free deep neural networks. In Proceedings of the 54th Annual Design Automation Conference 2017. 1–6.

- [44] Corinne Teeter, Ramakrishnan Iyer, Vilas Menon, Nathan Gouwens, David Feng, Jim Berg, Aaron Szafer, Nicholas Cain, Hongkui Zeng, Michael Hawrylycz, et al. 2018. Generalized leaky integrate-and-fire models classify multiple neuron types. Nature communications 9, 1 (2018), 709.

- [46] Michael Voudaskas, Jack Iain MacLean, Neale AW Dutton, Brian D Stewart, and Istvan Gyongy. 2025. Spiking neural networks in imaging: A review and case study. Sensors 25, 21 (2025), 6747.

- [47] Fei Wang, Jianwei Feng, Yinliang Zhao, Xiaobin Zhang, Shiyuan Zhang, and Jinsong Han. 2019. Joint activity recognition and indoor localization with WiFi fingerprints. IEEE Access 7 (2019), 80058–80068.

- [48] Tian Wang, Yuzhu Liang, Xuewei Shen, Xi Zheng, Adnan Mahmood, and Quan Z Sheng. 2023. Edge computing and sensor-cloud: Overview, solutions, and directions. ACM computing surveys 55, 13s (2023), 1–37.

- [52] Zhanglu Yan, Zhenyu Bai, and Weng-Fai Wong. 2024. Reconsidering the energy efficiency of spiking neural networks. arXiv preprint arXiv:2409.08290 (2024).

- [53] Jianfei Yang, Xinyan Chen, Han Zou, Chris Xiaoxuan Lu, Dazhuo Wang, Sumei Sun, and Lihua Xie. 2023. SenseFi: A library and benchmark on deep-learning-empowered WiFi human sensing. Patterns 4, 3 (2023).

- [54] Xingting Yao, Fanrong Li, Zitao Mo, and Jian Cheng. 2022. Glif: A unified gated leaky integrate-and-fire neuron for spiking neural networks. Advances in Neural Information Processing Systems 35 (2022), 32160–32171.

- [55] Guanlei Zhang, Lei Feng, Fanqin Zhou, Zhixiang Yang, Qiyang Zhang, Alaa Saleh, Praveen Kumar Donta, and Chinmaya Kumar Dehury. 2024. Spiking neural networks in intelligent edge computing. IEEE Consumer Electronics Magazine 14, 4 (2024), 66–75.

- [56] Chenlin Zhou, Han Zhang, Zhaokun Zhou, Liutao Yu, Liwei Huang, Xiaopeng Fan, Li Yuan, Zhengyu Ma, Huihui Zhou, and Yonghong Tian. 2024. Qkformer: Hierarchical spiking transformer using qk attention. Advances in Neural Information Processing Systems 37 (2024), 13074–13098.

- [57] Yue Zhou, Jiawei Fu, Zirui Chen, Fuwei Zhuge, Yasai Wang, Jianmin Yan, Sijie Ma, Lin Xu, Huanmei Yuan, Mansun Chan, et al. 2023. Computational event-driven vision sensors for in-sensor spiking neural networks. Nature Electronics 6, 11 (2023), 870–878.

- [58] Zhaokun Zhou, Yuesheng Zhu, Chao He, Yaowei Wang, Shuicheng Yan, Yonghong Tian, and Li Yuan. 2022. Spikformer: When spiking neural network meets transformer. arXiv preprint arXiv:2209.15425 (2022).

A Theoretical Analysis. Lemma 3.2. Let r = Pr(X < 1/2). Let R, V, and T denote the...
