Recognition: 3 theorem links

· Lean TheoremViM-Q: Scalable Algorithm-Hardware Co-Design for Vision Mamba Model Inference on FPGA

Pith reviewed 2026-05-08 19:34 UTC · model grok-4.3

The pith

ViM-Q delivers 4.96x speedup and 59.8x energy efficiency for Vision Mamba inference on FPGA versus a quantized GPU baseline using dynamic activation quantization, per-block APoT weights, and a pipelined SSM engine.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Implemented on an AMD ZCU102 FPGA, ViM-Q achieves an average 4.96x speedup and 59.8x energy efficiency gain over a quantized NVIDIA RTX 3090 GPU baseline for low-batch inference on ViM-tiny.

Load-bearing premise

The quantization scheme preserves sufficient model accuracy for the target use cases and the hardware design generalizes across the ViM family without major accuracy or performance loss; the abstract provides no accuracy metrics, ablation studies, or error bars to support this.

Figures

read the original abstract

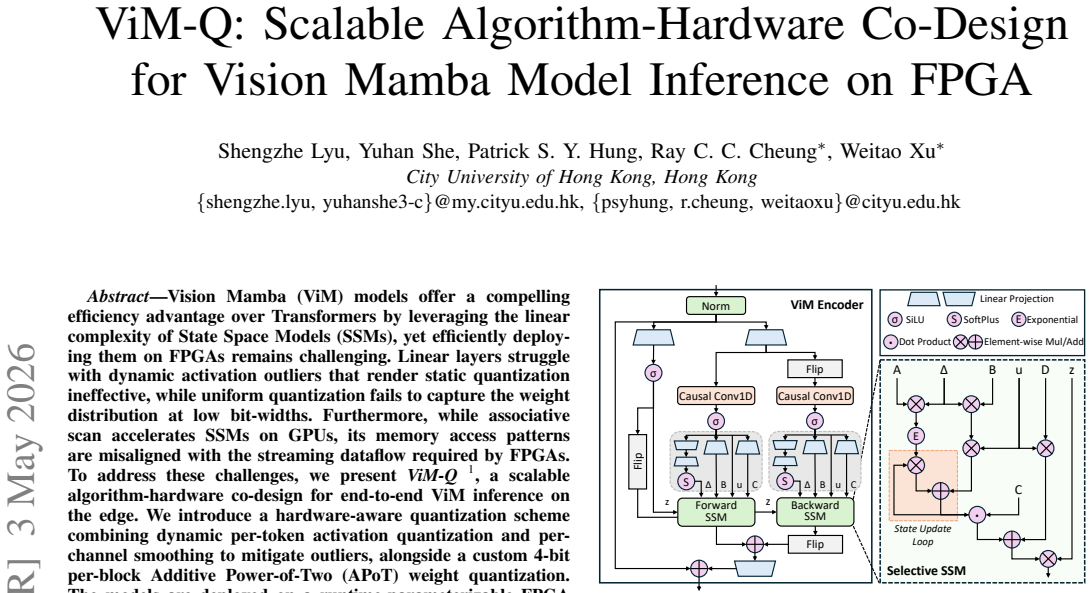

Vision Mamba (ViM) models offer a compelling efficiency advantage over Transformers by leveraging the linear complexity of State Space Models (SSMs), yet efficiently deploying them on FPGAs remains challenging. Linear layers struggle with dynamic activation outliers that render static quantization ineffective, while uniform quantization fails to capture the weight distribution at low bit-widths. Furthermore, while associative scan accelerates SSMs on GPUs, its memory access patterns are misaligned with the streaming dataflow required by FPGAs. To address these challenges, we present ViM-Q, a scalable algorithm-hardware co-design for end-to-end ViM inference on the edge. We introduce a hardware-aware quantization scheme combining dynamic per-token activation quantization and per-channel smoothing to mitigate outliers, alongside a custom 4-bit per-block Additive Power-of-Two (APoT) weight quantization. The models are deployed on a runtime-parameterizable FPGA accelerator featuring a linear engine employing a Lookup-Table (LUT) unit to replace multiplications with shift-add operations, and a fine-grained pipelined SSM engine that parallelizes the state dimension while preserving sequential recurrence. Crucially, the hardware supports runtime configuration, adapting to diverse dimensions and input resolutions across the ViM family. Implemented on an AMD ZCU102 FPGA, ViM-Q achieves an average 4.96x speedup and 59.8x energy efficiency gain over a quantized NVIDIA RTX 3090 GPU baseline for low-batch inference on ViM-tiny. This co-design shows a viable path for deploying ViM models on resource-constrained edge devices.

Editorial analysis

A structured set of objections, weighed in public.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Attention is all you need,

A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, Ł. Kaiser, and I. Polosukhin, “Attention is all you need,”Advances in Neural Information Processing Systems (NeurIPS), vol. 30, 2017

2017

-

[2]

An image is worth 16x16 words: Transformers for image recognition at scale,

A. Dosovitskiy, L. Beyer, A. Kolesnikov, D. Weissenborn, X. Zhai, T. Unterthiner, M. Dehghani, M. Minderer, G. Heigold, S. Gelly, J. Uszkoreit, and N. Houlsby, “An image is worth 16x16 words: Transformers for image recognition at scale,” inProceedings of the International Conference on Learning Representations (ICLR), 2021

2021

-

[3]

Swin transformer: Hierarchical vision transformer using shifted windows,

Z. Liu, Y . Lin, Y . Cao, H. Hu, Y . Wei, Z. Zhang, S. Lin, and B. Guo, “Swin transformer: Hierarchical vision transformer using shifted windows,” inProceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2021, pp. 10 012–10 022

2021

-

[4]

Training data-efficient image transformers & distillation through attention,

H. Touvron, M. Cord, M. Douze, F. Massa, A. Sablayrolles, and H. J ´egou, “Training data-efficient image transformers & distillation through attention,” inProceedings of the International Conference on Machine Learning (ICML), 2021, pp. 10 347–10 357

2021

-

[5]

Masked au- toencoders are scalable vision learners,

K. He, X. Chen, S. Xie, Y . Li, P. Doll ´ar, and R. Girshick, “Masked au- toencoders are scalable vision learners,” inProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2022, pp. 16 000–16 009

2022

-

[6]

Dynam- icvit: Efficient vision transformers with dynamic token sparsification,

Y . Rao, W. Zhao, B. Liu, J. Lu, J. Zhou, and C.-J. Hsieh, “Dynam- icvit: Efficient vision transformers with dynamic token sparsification,” Advances in Neural Information Processing Systems (NeurIPS), vol. 34, pp. 13 937–13 949, 2021

2021

-

[7]

BEit: BERT pre-training of image transformers,

H. Bao, L. Dong, S. Piao, and F. Wei, “BEit: BERT pre-training of image transformers,” inProceedings of the International Conference on Learning Representations (ICLR), 2022

2022

-

[8]

Taming transformers for high- resolution image synthesis,

P. Esser, R. Rombach, and B. Ommer, “Taming transformers for high- resolution image synthesis,” inProceedings of the IEEE/CVF Confer- ence on Computer Vision and Pattern Recognition (CVPR), 2021, pp. 12 873–12 883

2021

-

[9]

Transformers in vision: A survey,

S. Khan, M. Naseer, M. Hayat, S. W. Zamir, F. S. Khan, and M. Shah, “Transformers in vision: A survey,”ACM Computing Surveys (CSUR), vol. 54, no. 10s, pp. 1–41, 2022

2022

-

[10]

A survey on vision transformer,

K. Han, Y . Wang, H. Chen, X. Chen, J. Guo, Z. Liu, Y . Tang, A. Xiao, C. Xu, Y . Xuet al., “A survey on vision transformer,”IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI), vol. 45, no. 1, pp. 87–110, 2022

2022

-

[11]

Mamba: Linear-time sequence modeling with selective state spaces,

A. Gu and T. Dao, “Mamba: Linear-time sequence modeling with selective state spaces,” inConference on Language Modeling (COLM), 2024

2024

-

[12]

Transformers are ssms: generalized models and effi- cient algorithms through structured state space duality,

T. Dao and A. Gu, “Transformers are ssms: generalized models and effi- cient algorithms through structured state space duality,” inProceedings of the International Conference on Machine Learning (ICML), 2024, pp. 10 041–10 071

2024

-

[13]

Vision mamba: Efficient visual representation learning with bidirectional state space model,

L. Zhu, B. Liao, Q. Zhang, X. Wang, W. Liu, and X. Wang, “Vision mamba: Efficient visual representation learning with bidirectional state space model,” inProceedings of the International Conference on Ma- chine Learning (ICML), 2024

2024

-

[14]

Vmamba: Visual state space model,

Y . Liu, Y . Tian, Y . Zhao, H. Yu, L. Xie, Y . Wang, Q. Ye, J. Jiao, and Y . Liu, “Vmamba: Visual state space model,”Advances in Neural In- formation Processing Systems (NeurIPS), vol. 37, pp. 103 031–103 063, 2024

2024

-

[15]

Mambaout: Do we really need mamba for vision?

W. Yu and X. Wang, “Mambaout: Do we really need mamba for vision?” inProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025, pp. 4484–4496

2025

-

[16]

Mambavision: A hybrid mamba- transformer vision backbone,

A. Hatamizadeh and J. Kautz, “Mambavision: A hybrid mamba- transformer vision backbone,” inProceedings of the IEEE/CVF Con- ference on Computer Vision and Pattern Recognition (CVPR), 2025, pp. 25 261–25 270

2025

-

[17]

Mobilemamba: Lightweight multi-receptive visual mamba network,

H. He, J. Zhang, Y . Cai, H. Chen, X. Hu, Z. Gan, Y . Wang, C. Wang, Y . Wu, and L. Xie, “Mobilemamba: Lightweight multi-receptive visual mamba network,” inProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025, pp. 4497– 4507

2025

-

[18]

Localmamba: Visual state space model with windowed selective scan,

T. Huang, X. Pei, S. You, F. Wang, C. Qian, and C. Xu, “Localmamba: Visual state space model with windowed selective scan,” inProceedings of the European Conference on Computer Vision (ECCV), 2024, pp. 12– 22

2024

-

[19]

arXiv preprint arXiv:2410.03105 (2024)

M. M. Rahman, A. A. Tutul, A. Nath, L. Laishram, S. K. Jung, and T. Hammond, “Mamba in vision: A comprehensive survey of techniques and applications,”arXiv preprint arXiv:2410.03105 (arXiv), 2024

-

[20]

Vision mamba: A comprehensive survey and taxonomy,

X. Liu, C. Zhang, F. Huang, S. Xia, G. Wang, and L. Zhang, “Vision mamba: A comprehensive survey and taxonomy,”IEEE Transactions on Neural Networks and Learning Systems (TNNLS), 2025

2025

-

[21]

arXiv preprint arXiv:2502.07161 (2025)

F. Ibrahim, G. Liu, and G. Wang, “A survey on mamba architecture for vision applications,”arXiv preprint arXiv:2502.07161 (arXiv), 2025

-

[22]

Ptq4vm: Post-training quantization for visual mamba,

Y . Cho, C. Lee, S. Kim, and E. Park, “Ptq4vm: Post-training quantization for visual mamba,” inProceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), 2025, pp. 1176–1185

2025

-

[23]

Mamba-ptq: Outlier channels in recurrent large language models.arXiv preprint arXiv:2407.12397, 2024

A. Pierro and S. Abreu, “Mamba-ptq: Outlier channels in recurrent large language models,”arXiv preprint arXiv:2407.12397 (arXiv), 2024

-

[24]

Mambaquant: Quantizing the mamba family with variance aligned rotation methods,

Z. Xu, Y . Yue, X. Hu, D. Yang, Z. Yuan, Z. Jiang, Z. Chen, Jiangy- ongYu, XUCHEN, and S. Zhou, “Mambaquant: Quantizing the mamba family with variance aligned rotation methods,” inProceedings of the International Conference on Learning Representations (ICLR), 2025

2025

-

[25]

Quamba: A post-training quantization recipe for selective state space models,

H.-Y . Chiang, C.-C. Chang, N. Frumkin, K.-C. Wu, and D. Marculescu, “Quamba: A post-training quantization recipe for selective state space models,” inProceedings of the International Conference on Learning Representations (ICLR), 2025

2025

-

[26]

Quamba2: A robust and scalable post-training quantization framework for selective state space models,

H.-Y . Chiang, C.-C. Chang, N. Frumkin, K.-C. Wu, M. S. Abdelfattah, and D. Marculescu, “Quamba2: A robust and scalable post-training quantization framework for selective state space models,” inProceedings of the International Conference on Machine Learning (ICML), 2025

2025

-

[27]

Post-training quan- tization for vision mamba with k-scaled quantization and reparame- terization,

B.-Y . Shi, Y .-C. Lo, A.-Y . Wu, and Y .-M. Tsai, “Post-training quan- tization for vision mamba with k-scaled quantization and reparame- terization,” inProceedings of the International Workshop on Machine Learning for Signal Processing (MLSP), 2025, pp. 1–6

2025

-

[28]

Vim-vq: Efficient post-training vector quantization for visual mamba,

J. Deng, S. Li, Z. Wang, K. Xu, H. Gu, and K. Huang, “Vim-vq: Efficient post-training vector quantization for visual mamba,” inProceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2025, pp. 24 518–24 527

2025

-

[29]

Ouro- mamba: A data-free quantization framework for vision mamba,

A. Ramachandran, M. Lee, H. Xu, S. Kundu, and T. Krishna, “Ouro- mamba: A data-free quantization framework for vision mamba,” in Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2025, pp. 21 177–21 186

2025

-

[30]

Lut tensor core: A software-hardware co-design for lut-based low-bit llm inference,

Z. Mo, L. Wang, J. Wei, Z. Zeng, S. Cao, L. Ma, N. Jing, T. Cao, J. Xue, F. Yanget al., “Lut tensor core: A software-hardware co-design for lut-based low-bit llm inference,” inProceedings of the International Symposium on Computer Architecture (ISCA), 2025, pp. 514–528

2025

-

[31]

Vs-quant: Per-vector scaled quantization for accurate low-precision neural network inference,

S. Dai, R. Venkatesan, M. Ren, B. Zimmer, W. Dally, and B. Khailany, “Vs-quant: Per-vector scaled quantization for accurate low-precision neural network inference,”Machine Learning and Systems (MLSys), vol. 3, pp. 873–884, 2021

2021

-

[32]

Ladder: Enabling efficient low-precision deep learning computing through hardware-aware tensor transformation,

L. Wang, L. Ma, S. Cao, Q. Zhang, J. Xue, Y . Shi, N. Zheng, Z. Miao, F. Yang, T. Caoet al., “Ladder: Enabling efficient low-precision deep learning computing through hardware-aware tensor transformation,” in Proceedings of the USENIX Symposium on Operating Systems Design and Implementation (OSDI), 2024, pp. 307–323

2024

-

[33]

T- mac: Cpu renaissance via table lookup for low-bit llm deployment on edge,

J. Wei, S. Cao, T. Cao, L. Ma, L. Wang, Y . Zhang, and M. Yang, “T- mac: Cpu renaissance via table lookup for low-bit llm deployment on edge,” inProceedings of the European Conference on Computer Systems (EuroSys), 2025, pp. 278–292

2025

-

[34]

Awq: Activation-aware weight quantiza- tion for on-device llm compression and acceleration,

J. Lin, J. Tang, H. Tang, S. Yang, W.-M. Chen, W.-C. Wang, G. Xiao, X. Dang, C. Gan, and S. Han, “Awq: Activation-aware weight quantiza- tion for on-device llm compression and acceleration,”Machine Learning and Systems (MLSys), vol. 6, pp. 87–100, 2024

2024

-

[35]

Marca: Mamba accelerator with reconfigurable architecture,

J. Li, S. Huang, J. Xu, J. Liu, L. Ding, N. Xu, and G. Dai, “Marca: Mamba accelerator with reconfigurable architecture,” inProceedings of the IEEE/ACM International Conference on Computer-Aided Design (ICCAD), 2024, pp. 1–9

2024

-

[36]

Lightmamba: Efficient mamba acceleration on fpga with quantization and hardware co-design,

R. Wei, S. Xu, L. Zhong, Z. Yang, Q. Guo, Y . Wang, R. Wang, and M. Li, “Lightmamba: Efficient mamba acceleration on fpga with quantization and hardware co-design,” inProceedings of the Conference on Design, Automation and Test in Europe (DATE), 2025, pp. 1–7

2025

-

[37]

An efficient fpga-based hardware accelerator of fully quantized mamba-2,

K. Zhou, H. Jiao, W. Huang, and Y . Huang, “An efficient fpga-based hardware accelerator of fully quantized mamba-2,” inProceedings of the IEEE International Symposium on Field-Programmable Custom Computing Machines (FCCM), 2025, pp. 217–226

2025

-

[38]

Mamba-x: An end-to-end vision mamba accelerator for edge computing devices,

D. Yoon, G. Lee, J. Chang, Y . Lee, D. Lee, and M. Rhu, “Mamba-x: An end-to-end vision mamba accelerator for edge computing devices,” in Proceedings of the IEEE/ACM International Conference on Computer- Aided Design (ICCAD), 2025, pp. 1–9

2025

-

[39]

Specmamba: Accelerating mamba inference on fpga with speculative decoding,

L. Zhong, S. Xu, H. Wen, T. Xie, Q. Guo, Y . Wang, and M. Li, “Specmamba: Accelerating mamba inference on fpga with speculative decoding,” inProceedings of the IEEE/ACM International Conference on Computer-Aided Design (ICCAD). IEEE, 2025, pp. 1–9. 9

2025

-

[40]

Fastmamba: A high-speed and efficient mamba accelerator on fpga with accurate quantization,

A. Wang, H. Shao, S. Ma, and Z. Wang, “Fastmamba: A high-speed and efficient mamba accelerator on fpga with accurate quantization,” in Proceedings of the IEEE Computer Society Annual Symposium on VLSI (ISVLSI), vol. 1, 2025, pp. 1–6

2025

-

[41]

Smoothquant: Accurate and efficient post-training quantization for large language models,

G. Xiao, J. Lin, M. Seznec, H. Wu, J. Demouth, and S. Han, “Smoothquant: Accurate and efficient post-training quantization for large language models,” inProceedings of the International Conference on Machine Learning (ICML), 2023, pp. 38 087–38 099

2023

-

[42]

SVDQuant: Absorbing outliers by low-rank com- ponent for 4-bit diffusion models,

M. Li, Y . Lin, Z. Zhang, T. Cai, J. Guo, X. Li, E. Xie, C. Meng, J.-Y . Zhu, and S. Han, “SVDQuant: Absorbing outliers by low-rank com- ponent for 4-bit diffusion models,” inProceedings of the International Conference on Learning Representations (ICLR), 2025

2025

-

[43]

Mix and match: A novel fpga-centric deep neural network quantization framework,

S.-E. Chang, Y . Li, M. Sun, R. Shi, H. K.-H. So, X. Qian, Y . Wang, and X. Lin, “Mix and match: A novel fpga-centric deep neural network quantization framework,” inProceedings of the IEEE International Symposium on High-Performance Computer Architecture (HPCA), 2021, pp. 208–220

2021

-

[44]

P 2-vit: Power-of-two post- training quantization and acceleration for fully quantized vision trans- former,

H. Shi, X. Cheng, W. Mao, and Z. Wang, “P 2-vit: Power-of-two post- training quantization and acceleration for fully quantized vision trans- former,”IEEE Transactions on Very Large Scale Integration Systems (TVLSI), 2024

2024

-

[45]

Additive powers-of-two quantization: An efficient non-uniform discretization for neural networks,

Y . Li, X. Dong, and W. Wang, “Additive powers-of-two quantization: An efficient non-uniform discretization for neural networks,” inProceedings of the International Conference on Learning Representations (ICLR), 2020

2020

-

[46]

Rapq: Rescuing accuracy for power-of-two low-bit post-training quantization,

H. Yao, P. Li, J. Cao, X. Liu, C. Xie, and B. Wang, “Rapq: Rescuing accuracy for power-of-two low-bit post-training quantization,” inPro- ceedings of the International Joint Conference on Artificial Intelligence (IJCAI), 2022, pp. 1573–1579

2022

-

[47]

Power-of-two quantization for low bitwidth and hardware compliant neural networks,

D. Przewlocka-Rus, S. S. Sarwar, H. E. Sumbul, Y . Li, and B. De Salvo, “Power-of-two quantization for low bitwidth and hardware compliant neural networks,”arXiv preprint arXiv:2203.05025 (arXiv), 2022

-

[48]

Zeroquant: Efficient and affordable post-training quantization for large- scale transformers,

Z. Yao, R. Yazdani Aminabadi, M. Zhang, X. Wu, C. Li, and Y . He, “Zeroquant: Efficient and affordable post-training quantization for large- scale transformers,”Advances in Neural Information Processing Systems (NeurIPS), vol. 35, pp. 27 168–27 183, 2022

2022

-

[49]

Llm.int8(): 8- bit matrix multiplication for transformers at scale,

T. Dettmers, M. Lewis, Y . Belkada, and L. Zettlemoyer, “Llm.int8(): 8- bit matrix multiplication for transformers at scale,”Advances in Neural Information Processing Systems (NeurIPS), vol. 35, pp. 30 318–30 332, 2022

2022

-

[50]

Edge-moe: Memory-efficient multi-task vision transformer architecture with task- level sparsity via mixture-of-experts,

R. Sarkar, H. Liang, Z. Fan, Z. Wang, and C. Hao, “Edge-moe: Memory-efficient multi-task vision transformer architecture with task- level sparsity via mixture-of-experts,” inProceedings of the IEEE/ACM International Conference on Computer Aided Design (ICCAD), 2023, pp. 01–09

2023

-

[51]

Finn: A framework for fast, scalable binarized neural network inference,

Y . Umuroglu, N. J. Fraser, G. Gambardella, M. Blott, P. Leong, M. Jahre, and K. Vissers, “Finn: A framework for fast, scalable binarized neural network inference,” inProceedings of the ACM/SIGDA International Symposium on Field-Programmable Gate Arrays (ISFPGA), 2017, pp. 65–74

2017

-

[52]

Famous: Flexible accelerator for the attention mechanism of transformer on ultrascale+ fpgas,

E. Kabir, M. A. Kabir, A. R. Downey, J. D. Bakos, D. Andrews, and M. Huang, “Famous: Flexible accelerator for the attention mechanism of transformer on ultrascale+ fpgas,” inProceedings of the International Conference on Field Programmable Technology (ICFPT), 2024, pp. 1–2

2024

-

[53]

Lut-dla: Lookup table as efficient extreme low-bit deep learning accelerator,

G. Li, S. Ye, C. Chen, Y . Wang, F. Yang, T. Cao, C. Liu, M. M. S. Aly, and M. Yang, “Lut-dla: Lookup table as efficient extreme low-bit deep learning accelerator,” inProceedings of the IEEE International Sym- posium on High Performance Computer Architecture (HPCA). IEEE, 2025, pp. 671–684

2025

-

[54]

Figlut: An energy-efficient accelerator design for fp-int gemm using look-up tables,

G. Park, H. Kwon, J. Kim, J. Bae, B. Park, D. Lee, and Y . Lee, “Figlut: An energy-efficient accelerator design for fp-int gemm using look-up tables,” inProceedings of the IEEE International Symposium on High Performance Computer Architecture (HPCA). IEEE, 2025, pp. 1098– 1111

2025

-

[55]

Lut-gemm: Quantized matrix multiplication based on luts for efficient inference in large-scale generative language models,

G. Park, B. Park, M. Kim, S. Lee, J. Kim, B. Kwon, S. J. Kwon, B. Kim, Y . Lee, and D. Lee, “Lut-gemm: Quantized matrix multiplication based on luts for efficient inference in large-scale generative language models,” inProceedings of the International Conference on Learning Represen- tations (ICLR), 2022

2022

-

[56]

Prefix sums and their applications,

G. E. Blelloch, “Prefix sums and their applications,” 1990. 10

1990

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.