
Shared Autonomy Assisted by Impedance-Driven Anisotropic Guidance Field

Pith reviewed 2026-05-08 18:08 UTC · model grok-4.3

The pith

A shared autonomy system uses an impedance-driven anisotropic guidance field to communicate robot intent to humans through physical feedback.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

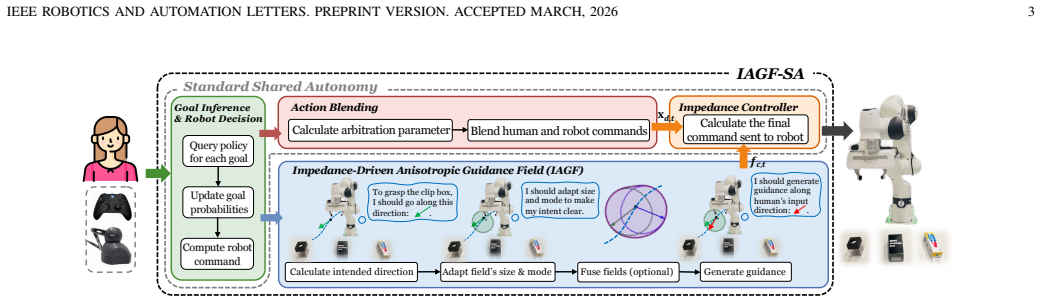

Extending shared autonomy with an impedance-driven anisotropic guidance field adaptively modulates the robot's dynamic response to human input according to the robot's inferred intent. This creates an embodied communication channel that lets the human perceive and align with the robot's plan through natural physical interaction, without extra interfaces.

What carries the argument

The Impedance-Driven Anisotropic Guidance Field (IAGF), which uses direction-dependent impedance parameters to shape the robot's resistance and guidance forces in response to human commands, thereby encoding and transmitting the robot's intent through the resulting physical sensations.
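To make "direction-dependent impedance parameters" concrete, here is a minimal sketch, assuming a planar (2-D) workspace and a stiffness matrix built from the robot's inferred intent direction. The helper names `anisotropic_stiffness` and `restoring_force` and the stiffness values `k_along` and `k_across` are hypothetical illustrations, not the paper's parameterization. The matrix is soft along the intent direction and stiff across it, so a human push that agrees with the robot's plan meets less resistance than one that deviates from it.

```python
import math


def anisotropic_stiffness(intent_dir, k_along, k_across):
    """Build K = k_along * v v^T + k_across * (I - v v^T) for a 2-D workspace.

    intent_dir: (x, y) direction of the robot's inferred intent; normalized here.
    k_along, k_across: hypothetical stiffness values (N/m) along and across it.
    """
    norm = math.hypot(intent_dir[0], intent_dir[1])
    if norm == 0:
        raise ValueError("intent_dir must be non-zero")
    vx, vy = intent_dir[0] / norm, intent_dir[1] / norm
    proj = [[vx * vx, vx * vy], [vy * vx, vy * vy]]  # v v^T: projection onto the intent
    return [
        [k_along * proj[i][j] + k_across * ((1.0 if i == j else 0.0) - proj[i][j])
         for j in range(2)]
        for i in range(2)
    ]


def restoring_force(K, displacement):
    """Spring force F = -K @ dx opposing a human-commanded displacement dx (m)."""
    return tuple(-(K[i][0] * displacement[0] + K[i][1] * displacement[1]) for i in range(2))


K = anisotropic_stiffness(intent_dir=(1.0, 0.0), k_along=20.0, k_across=80.0)
along = restoring_force(K, (0.1, 0.0))   # 0.1 m push that agrees with the intent
across = restoring_force(K, (0.0, 0.1))  # 0.1 m push that deviates from it
print(math.hypot(*along), math.hypot(*across))  # prints 2.0 8.0
```

Here the off-intent push meets four times the resistance of the on-intent push. Setting `k_along` equal to `k_across` turns K into a multiple of the identity, which is the isotropic case: the resistance is then the same in every direction and carries no information about where the robot wants to go.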

If this is right

- Task performance and human-robot agreement both increase because the physical channel reduces mismatches between what the human tries to do and what the robot executes.

- Subjective experience improves because operators no longer need to monitor auxiliary interfaces to stay aligned with the robot.

- The same mechanism works across different teleoperation interfaces, showing that the benefit comes from the physical modulation rather than the input device.

- Collaboration becomes more effective in continuous control loops because intent information flows at the same rate as the physical interaction.

Where Pith is reading between the lines

- The same field could be used in direct physical contact tasks such as co-manipulation or exoskeleton assistance, where the human already feels forces.

- Designers could tune the anisotropy to match the geometry of specific tasks, potentially making guidance stronger along critical directions and weaker along free ones.

- If the guidance field is made visible in simulation or augmented reality, it might help train new operators before they feel the real forces.

Load-bearing premise

That changing the robot's stiffness and directional resistance according to its intent gives humans an intuitive, continuous sense of what the robot wants to do without any separate display or signal.

What would settle it

A within-subjects user study in one of the three scenarios that finds no reliable difference in task success rate, completion time, or post-task ratings of robot-intent understanding between the IAGF-SA condition and a standard shared-autonomy baseline.
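Because the settling test is within-subjects, each participant contributes one measurement per condition and the analysis works on per-participant differences. A minimal sketch, assuming a paired t-test is appropriate for a continuous measure such as completion time; the function name is hypothetical, and the source does not say which test the authors used (ordinal post-task ratings are often analyzed with a Wilcoxon signed-rank test instead).

```python
import math
import statistics


def paired_t_statistic(condition_a, condition_b):
    """Paired (within-subjects) t statistic for per-participant measurements.

    condition_a[i] and condition_b[i] must come from the same participant i,
    e.g. completion time under IAGF-SA and under the shared-autonomy baseline.
    Returns (t, degrees_of_freedom); compare t against a t distribution
    with n - 1 degrees of freedom to obtain a p-value.
    """
    if len(condition_a) != len(condition_b) or len(condition_a) < 2:
        raise ValueError("need two equal-length samples with at least 2 participants")
    diffs = [a - b for a, b in zip(condition_a, condition_b)]
    sd = statistics.stdev(diffs)  # sample standard deviation (n - 1 denominator)
    if sd == 0:
        raise ValueError("differences have zero variance; t is undefined")
    t = statistics.mean(diffs) / (sd / math.sqrt(len(diffs)))
    return t, len(diffs) - 1
```

A non-significant t on its own does not establish that the conditions are equivalent; an equivalence test such as TOST is the usual way to support a "no reliable difference" conclusion.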


Original abstract

Shared autonomy (SA) enables robots to infer human intent and assist in its achievement. While most research focuses on improving intent inference, it overlooks whether humans can understand the robot's intent in return. Without such mutual understanding, collaboration becomes less effective, degrading user experience and task performance. To address this gap, previous studies have explicitly conveyed the robot intent through additional interfaces, which remain unintuitive and limited in expressiveness. Inspired by impedance control, we propose Impedance-Driven Anisotropic Guidance Field Enhanced Shared Autonomy (IAGF-SA), a novel paradigm that extends SA with an embodied, physically-grounded communication channel. This channel adaptively modulates the robot's dynamic response to human input, enabling intuitive, continuous, physically-grounded robot intent communication while naturally guiding human actions. User studies across three scenarios and two teleoperation interfaces indicate that IAGF-SA improves task performance, human-robot agreement, and subjective experience, thus demonstrating its effectiveness in enhancing human-robot communication and collaboration.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

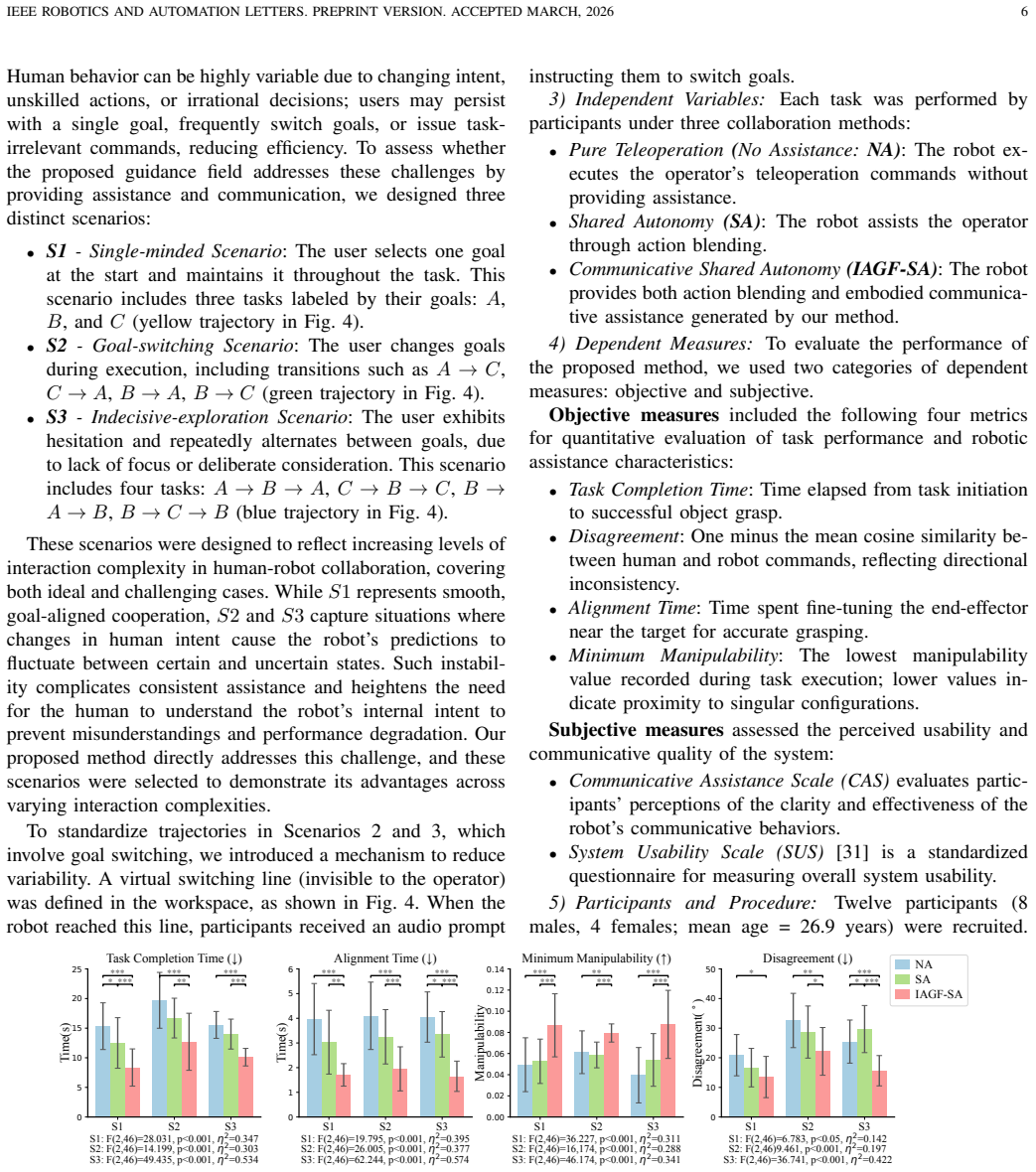

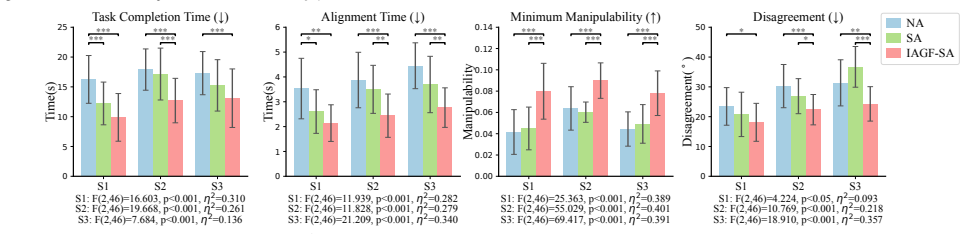

Summary. The manuscript introduces Impedance-Driven Anisotropic Guidance Field Enhanced Shared Autonomy (IAGF-SA), extending standard shared autonomy by adding an anisotropic guidance field whose impedance is modulated to create an embodied, physically-grounded channel for the robot to communicate its inferred intent back to the human operator. The authors argue this enables more intuitive, continuous mutual understanding without extra interfaces, leading to better task performance, human-robot agreement, and subjective experience. These claims are supported by user studies conducted across three scenarios and two teleoperation interfaces.

Significance. If the central claims hold after addressing experimental gaps, the work could meaningfully advance shared autonomy and human-robot collaboration research by providing a physically intuitive mechanism for bidirectional intent communication. Leveraging impedance control for directional guidance is a technically grounded idea that avoids the limitations of visual or auditory cues, potentially improving teleoperation effectiveness in unstructured environments.

major comments (1)

- User Studies section: the reported performance gains, improved agreement, and subjective scores with IAGF-SA are not accompanied by an ablation or control arm that retains the shared-autonomy intent inference and assistance but replaces the anisotropic guidance field with isotropic or non-directional impedance modulation. Without this comparison, it remains unclear whether the observed benefits arise specifically from the anisotropic field's role in communicating robot intent (the load-bearing assumption stated in the abstract) or from nonspecific changes in haptic dynamics or added feedback. This directly weakens attribution of the results to the proposed mechanism.
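One way to add the control arm the referee asks for, sketched under stated assumptions: a within-subjects design with three conditions (standard SA, a hypothetical isotropic-impedance SA arm, and IAGF-SA), counterbalanced with a cyclic Latin square so that each condition appears equally often in each session position. The condition labels and helper names below are illustrative, not taken from the paper.

```python
def cyclic_latin_square(conditions):
    """Row r is one condition order; every condition appears exactly once
    in each row and exactly once in each session position (column)."""
    n = len(conditions)
    return [[conditions[(row + col) % n] for col in range(n)] for row in range(n)]


def assign_orders(participant_ids, conditions):
    """Cycle participants through the Latin-square rows in enrollment order."""
    square = cyclic_latin_square(conditions)
    return {pid: square[i % len(square)] for i, pid in enumerate(participant_ids)}


# Hypothetical condition labels; "isotropic-SA" is the missing control arm.
orders = assign_orders(["p1", "p2", "p3"], ["SA", "isotropic-SA", "IAGF-SA"])
for pid, order in orders.items():
    print(pid, order)
```

For three conditions a cyclic square balances session position but not first-order carryover; a Williams design (six orders when the number of conditions is odd) would address that as well.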

Simulated Author's Rebuttal

Thank you for the constructive feedback on our manuscript. We appreciate the referee's careful reading and address the major comment below, making revisions where appropriate to clarify the experimental design and strengthen attribution of results.


Referee: User Studies section: the reported performance gains, improved agreement, and subjective scores with IAGF-SA are not accompanied by an ablation or control arm that retains the shared-autonomy intent inference and assistance but replaces the anisotropic guidance field with isotropic or non-directional impedance modulation. Without this comparison, it remains unclear whether the observed benefits arise specifically from the anisotropic field's role in communicating robot intent (the load-bearing assumption stated in the abstract) or from nonspecific changes in haptic dynamics or added feedback. This directly weakens attribution of the results to the proposed mechanism.

Authors: We thank the referee for highlighting this important consideration for isolating the contribution of anisotropy. Our user studies compare IAGF-SA against standard shared autonomy (which retains intent inference and assistance but provides no impedance modulation or embodied feedback channel). This baseline demonstrates the overall benefit of adding the proposed physically-grounded channel. We acknowledge that an isotropic impedance control arm would more precisely test whether directionality (rather than any haptic change) drives the gains in intent communication. In the revised manuscript, we have expanded the User Studies and Discussion sections to include a theoretical analysis of the guidance field: isotropic modulation applies uniform resistance without spatial bias and therefore cannot convey directional intent in the same manner as the anisotropic field. We have also explicitly noted the absence of this control as a limitation of the current evaluation and identified it as valuable future work. These additions clarify the design rationale without new experiments.

Revision: partial.

Circularity Check

No circularity: empirical validation of a proposed method


The paper proposes IAGF-SA as an extension of shared autonomy using an anisotropic guidance field inspired by impedance control. Its claims rest on user studies across three scenarios and two interfaces showing performance gains, without any derivation chain, fitted parameters renamed as predictions, or load-bearing self-citations. The method is presented as a novel paradigm and evaluated externally via human-subject experiments rather than reducing to its own inputs by construction.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: impedance control principles can be extended to create an anisotropic guidance field that communicates robot intent through physical interaction.

invented entities (1)

- Impedance-Driven Anisotropic Guidance Field (no independent evidence)

Lean theorems connected to this paper

- IndisputableMonolith.Cost ($J(x) = \tfrac{1}{2}(x + x^{-1}) - 1$), theorem washburn_uniqueness_aczel (relation: unclear). Paper excerpt: $d(\phi) = d_1 \pm d_2\, u(\phi)^\top v_r$ ... circle-like field where the radial length $d(\phi)$ in each direction depends on its angular alignment with $v_r$.

- IndisputableMonolith.Foundation (parameter-free constants chain), theorem reality_from_one_distinction (relation: unclear). Paper excerpt: $\ddot{x}_{d,t} = M^{-1}\left[K(x_{d,t} - x_t) + D(\dot{x}_{d,t} - \dot{x}_t) + f_{c,t}\right]$: Cartesian impedance controller with tuned gains $K_p = 80$ N/m, $K_d = 10$ N·s/m, $d_1 = 2$
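The two paper excerpts can be evaluated numerically. The sketch below is hedged on several assumptions not stated in the excerpts: $u(\phi) = (\cos\phi, \sin\phi)$, $v_r$ normalized to unit length, a hypothetical $d_2 = 1$ (with $d_2 < d_1$ so the radius stays positive), scalar per-axis gains standing in for the matrices $M$, $K$, $D$, $K_p$ and $K_d$ read as the equation's $K$ and $D$, and a hypothetical $M = 1$ kg. The helper names `guidance_radius` and `impedance_accel` are illustrative. Only the right-hand side of the impedance equation is evaluated; the truncated excerpt does not say how the acceleration is integrated, so no simulation loop is attempted.

```python
import math


def guidance_radius(phi, v_r, d1, d2, sign=1.0):
    """Radial length d(phi) = d1 + sign * d2 * u(phi)^T v_r of the guidance field.

    Assumes u(phi) = (cos phi, sin phi) and normalizes v_r to unit length.
    sign = +1 or -1 selects the "+" or "-" branch of the excerpt's "±".
    With d2 = 0 the field is an isotropic circle of radius d1.
    """
    norm = math.hypot(v_r[0], v_r[1])
    if norm == 0:
        raise ValueError("v_r must be non-zero")
    alignment = (math.cos(phi) * v_r[0] + math.sin(phi) * v_r[1]) / norm
    return d1 + sign * d2 * alignment


def impedance_accel(x_d, x, v_d, v, f_c, M, K, D):
    """Per-axis evaluation of M^-1 [K (x_d - x) + D (v_d - v) + f_c].

    Scalars stand in for the excerpt's matrices M, K, D (a diagonal,
    decoupled controller is assumed); v_d and v are the velocities of x_d and x.
    """
    return (K * (x_d - x) + D * (v_d - v) + f_c) / M


# d1 = 2 is the excerpt's value; d2 = 1 is a hypothetical choice.
for deg in (0, 90, 180):
    print(deg, round(guidance_radius(math.radians(deg), (1.0, 0.0), 2.0, 1.0), 3))

# K = 80 N/m and D = 10 N*s/m are the excerpt's gains; M = 1 kg is hypothetical.
print(impedance_accel(x_d=0.1, x=0.0, v_d=0.0, v=0.0, f_c=0.0, M=1.0, K=80.0, D=10.0))
```

With the "+" branch the radius is $d_1 + d_2 = 3$ along $v_r$, stays at $d_1 = 2$ perpendicular to it, and shrinks to $d_1 - d_2 = 1$ opposite it. Setting $d_2 = 0$ gives the same radius in every direction, which is the isotropic case discussed in the rebuttal.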

