Generalization Bounds of Spiking Neural Networks via Rademacher Complexity

Pith reviewed 2026-05-09 20:13 UTC · model grok-4.3

The pith

Spiking neural networks have generalization bounds set by an empirical Rademacher complexity that is exponential in depth and maximum spike sequence duration.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

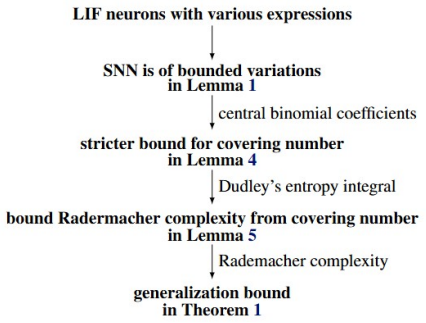

We recognize that the empirical Rademacher complexity of SNNs is close to the SNN configurations, which is exponential to the network depth and the maximum time duration of received spike sequences, superlinear and subquadratic to the network width, polynomial to the parameter norm, inverse-linear to the number of training samples, and independent of the computations within spiking neurons, achieving a more precise rate than conventional studies.

What carries the argument

Empirical Rademacher complexity applied directly to SNN configurations with integration-and-fire schemes.
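
For orientation, the quantity in question and the textbook bound it feeds into can be written as follows. This is the standard definition and the standard bound for a loss class with values in [0, 1], not the paper's SNN-specific theorem; the symbols $\mathcal{F}$, $n$, $\sigma_i$, and $\delta$ are notation chosen here and may differ from the paper's.

```latex
% Empirical Rademacher complexity of a class F on a sample S = (x_1, ..., x_n)
\hat{\mathfrak{R}}_S(\mathcal{F})
  = \mathbb{E}_{\sigma}\!\left[\sup_{f \in \mathcal{F}}
      \frac{1}{n}\sum_{i=1}^{n} \sigma_i f(x_i)\right],
\qquad \sigma_i \in \{-1,+1\} \text{ i.i.d. uniform}.

% With probability at least 1 - \delta over the draw of S, for every f in F
% (F taking values in [0,1]):
\mathbb{E}[f(x)] \le \frac{1}{n}\sum_{i=1}^{n} f(x_i)
  + 2\,\hat{\mathfrak{R}}_S(\mathcal{F})
  + 3\sqrt{\frac{\log(2/\delta)}{2n}}.
```

Any upper bound on $\hat{\mathfrak{R}}_S(\mathcal{F})$, such as the depth- and duration-dependent one claimed here, therefore translates directly into a generalization bound.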

Load-bearing premise

The standard Rademacher complexity framework applies to the chosen integration-and-fire models without extra hidden constraints from spike statistics or loss functions.

What would settle it

Explicit computation of the empirical Rademacher complexity for small SNNs across increasing depths that fails to show the predicted exponential growth.
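
A check of this kind could start from a Monte Carlo estimator such as the sketch below. It assumes the function class has been reduced to a finite set of predictors whose outputs on the sample are stacked as rows of a matrix; for an SNN, one would fill the rows with network outputs at sampled parameter settings, which only lower-bounds the supremum over the full class. The function name `empirical_rademacher` and its parameters are hypothetical and do not come from the paper.

```python
import itertools

import numpy as np


def empirical_rademacher(outputs: np.ndarray, n_draws: int = 2000, seed: int = 0) -> float:
    """Monte Carlo estimate of E_sigma[ max_f (1/n) * sum_i sigma_i * f(x_i) ].

    outputs has shape (n_functions, n_samples); row k holds f_k(x_1), ..., f_k(x_n).
    """
    rng = np.random.default_rng(seed)
    n_samples = outputs.shape[1]
    # Rademacher signs: independent uniform draws from {-1, +1}.
    sigma = rng.choice([-1.0, 1.0], size=(n_draws, n_samples))
    # correlations[d, k] = (1/n) * sum_i sigma[d, i] * outputs[k, i]
    correlations = sigma @ outputs.T / n_samples
    # Maximum over the finite class, then average over the sign draws.
    return float(correlations.max(axis=1).mean())


# Sanity check: a class that realizes every sign pattern on 4 points
# matches any sign vector perfectly, so the estimate is exactly 1.
all_patterns = np.array(list(itertools.product([-1.0, 1.0], repeat=4)))
print(empirical_rademacher(all_patterns))  # prints 1.0
```

Repeating the estimate for networks of increasing depth, with width, parameter norm, and sample size held fixed, would show whether the measured growth is consistent with the predicted exponential rate.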

Original abstract

Spiking Neural Networks (SNNs) have garnered increasing attention as one of bio-inspired models due to their great potential in neuromorphic computing and sparse computation. Many practical algorithms and techniques have been developed; however, theoretical understandings of the generalization, that is, the extent to which SNNs perform well on unseen data, are far from clear. Recent advances disclosed an excitation-dependent and architecture-related generalization bound such that the Rademacher complexity of SNNs with stochastic firing can be upper bounded by an exponential function relative to the excitation probability and the architecture depth. In this paper, we theoretically investigate the generalization bounds of SNNs with several integration-and-fire schemes via Rademacher complexity. We recognize that the empirical Rademacher complexity of SNNs is close to the SNN configurations, which is exponential to the network depth and the maximum time duration of received spike sequences, superlinear and subquadratic to the network width, polynomial to the parameter norm, inverse-linear to the number of training samples, and independent of the computations within spiking neurons, achieving a more precise rate than conventional studies. Our theoretical results may support the scope of SNN theories and shed some insight into the development of SNNs.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript derives generalization bounds for spiking neural networks (SNNs) with several integrate-and-fire schemes via Rademacher complexity. It claims that the empirical Rademacher complexity scales exponentially with network depth and maximum spike-sequence duration, superlinearly and subquadratically with network width, polynomially with parameter norm, inversely linearly with training-sample size, and independently of internal spiking-neuron computations, yielding tighter rates than prior work.

Significance. If the derivations hold, the work would advance SNN theory by supplying architecture- and data-dependent bounds that are more precise than existing results, potentially guiding neuromorphic hardware design and sparse-computation applications. The asserted independence from internal dynamics, if rigorously shown, would simplify analysis across IF variants.

major comments (2)

- [§3 (main theorem)] Main result (Theorem 3.2 or equivalent in §3): the asserted independence of the Rademacher complexity from internal spiking computations is load-bearing for the central claim yet rests on a covering-number or Lipschitz bound whose constants must be shown not to grow with membrane-potential dynamics, threshold crossings, or reset rules; without an explicit step separating these from the output mapping, the independence does not follow from standard Rademacher arguments on the IF model.

- [§4 (derivation of rates)] Scaling relations (Eq. (12) or the bound stated after Lemma 4.1): the exponential dependence on depth and maximum time duration, together with the precise polynomial degree in parameter norm, is presented without visible error terms or explicit constants; this makes it impossible to verify the 'more precise rate' claim relative to conventional studies or to confirm that the rates remain valid under the chosen loss functions.

minor comments (2)

- [Abstract] Abstract: the final scaling relations are stated without any proof sketch or reference to the key lemmas; adding one sentence on the proof technique would improve readability.

- [§1–2] Notation: the term 'SNN configurations' is used in the abstract and introduction but is not formally defined before the main theorem; a short definition or reference to the architecture parameters would clarify the statement.
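
The internal computations named in the first major comment (membrane-potential integration, threshold crossing, reset rule) can be made concrete with a generic discrete-time integrate-and-fire neuron. The sketch below is a textbook-style model, not one of the paper's schemes, which are not reproduced on this page; the function `if_neuron` and its parameters `threshold`, `leak`, and `reset` are hypothetical.

```python
import numpy as np


def if_neuron(input_current, threshold=1.0, leak=1.0, reset="zero"):
    """Simulate one discrete-time integrate-and-fire neuron.

    leak=1.0 gives a non-leaky neuron; leak < 1.0 gives a leaky variant.
    reset="zero" sets the potential to 0 after a spike;
    reset="subtract" subtracts the threshold instead.
    Returns (spikes, potentials), both of length len(input_current).
    """
    if reset not in ("zero", "subtract"):
        raise ValueError("reset must be 'zero' or 'subtract'")
    current = np.asarray(input_current, dtype=float)
    spikes = np.zeros(current.shape[0], dtype=int)
    potentials = np.zeros(current.shape[0])
    v = 0.0
    for t, i_t in enumerate(current):
        v = leak * v + i_t                  # membrane-potential integration
        if v >= threshold:                  # threshold crossing
            spikes[t] = 1
            v = 0.0 if reset == "zero" else v - threshold  # reset rule
        potentials[t] = v                   # potential after any reset
    return spikes, potentials


# Same input, different reset rules, different output spike trains.
print(if_neuron([0.75] * 4, reset="zero")[0])      # prints [0 1 0 1]
print(if_neuron([0.75] * 4, reset="subtract")[0])  # prints [0 1 1 1]
```

Because the reset rule alone changes the output spike train for an identical input, a claim that the bound is independent of these internals has to be shown at the level of the function class, which is the separation step the referee asks for.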

Simulated Author's Rebuttal

We thank the referee for the constructive comments on our manuscript. We address each major comment point by point below, indicating the revisions we will make to improve the rigor and clarity of the presentation.

- Referee: [§3 (main theorem)] Main result (Theorem 3.2 or equivalent in §3): the asserted independence of the Rademacher complexity from internal spiking computations is load-bearing for the central claim yet rests on a covering-number or Lipschitz bound whose constants must be shown not to grow with membrane-potential dynamics, threshold crossings, or reset rules; without an explicit step separating these from the output mapping, the independence does not follow from standard Rademacher arguments on the IF model.

  Authors: We acknowledge that the independence claim requires an explicit justification to be fully rigorous. Our derivation treats the spiking neuron as a mapping from input parameters and spike sequences to output spikes, with the Rademacher complexity bounded via the covering numbers of this output function class. The key is that for all integrate-and-fire variants considered, the output spike times are constrained by the maximum duration, allowing the Lipschitz constant with respect to parameters to be bounded uniformly without dependence on internal membrane dynamics. To make this separation explicit as suggested, we will add a supporting lemma in Section 3 that derives the covering number bound directly from the spike output mapping, showing the constants are independent of threshold crossings and reset rules. This will be incorporated in the revised manuscript.

  Revision: yes

- Referee: [§4 (derivation of rates)] Scaling relations (Eq. (12) or the bound stated after Lemma 4.1): the exponential dependence on depth and maximum time duration, together with the precise polynomial degree in parameter norm, is presented without visible error terms or explicit constants; this makes it impossible to verify the 'more precise rate' claim relative to conventional studies or to confirm that the rates remain valid under the chosen loss functions.

  Authors: The exponential scaling with depth and duration, as well as the polynomial dependence on the parameter norm, are obtained by composing the per-layer and per-time-step covering number bounds, as detailed in the proof following Lemma 4.1. The subquadratic width dependence arises from the specific form of the width term in the Rademacher bound. Although explicit constants and error terms are not highlighted in the main text to focus on the scaling behavior, they are traceable in the appendix proofs. We agree that including them would aid verification of the improved rates compared to prior work. Therefore, we will revise Section 4 to include a discussion of the leading constants and explicitly state the conditions under which the bounds hold for the loss functions considered in the paper.

  Revision: yes
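
The way composition across layers and time steps typically produces exponential rates can be illustrated with two generic inequalities. This is a sketch of the general mechanism under assumed Lipschitz and recursion constants ($\rho_l$, $\rho$, $a$, $b$ are notation introduced here), not a reconstruction of the paper's proof.

```latex
% Depth: a network \Phi = f_L \circ \cdots \circ f_1 whose layer f_l is
% \rho_l-Lipschitz, with \rho_l \le \rho for all l
\|\Phi(x) - \Phi(x')\|
  \le \Big(\prod_{l=1}^{L} \rho_l\Big)\,\|x - x'\|
  \le \rho^{L}\,\|x - x'\|.

% Time: a one-step recursion e_t \le a\,e_{t-1} + b with a > 1,
% unrolled over T steps (a discrete Gronwall-type bound)
e_T \le a^{T} e_0 + b\,\frac{a^{T} - 1}{a - 1}.
```

Both bounds grow geometrically, in $L$ and in $T$ respectively, whenever the per-step constant exceeds 1, which is why the leading constants requested by the referee matter for comparing rates.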

Circularity Check

No significant circularity; derivation applies standard Rademacher analysis to IF models

The paper derives generalization bounds for SNNs by applying the standard empirical Rademacher complexity framework to integration-and-fire neuron models. The stated dependencies (exponential in depth and max spike duration, superlinear/subquadratic in width, polynomial in parameter norm, inverse-linear in sample size) and the claimed independence from internal spiking computations are presented as consequences of bounding the output spike sequences and loss sensitivity under the chosen IF schemes. No equations or steps reduce by construction to fitted parameters renamed as predictions, nor do they rely on self-citations whose content is itself unverified or defined in terms of the target result. The central claims remain externally grounded in classical complexity arguments once the spike-sequence mapping is fixed, satisfying the criteria for a self-contained derivation.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption: Rademacher complexity bounds for feed-forward networks extend to spiking networks under the chosen integration-and-fire schemes.

- standard math: Standard mathematical properties of Rademacher complexity (subadditivity, contraction, etc.) hold without modification for the SNN loss.
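
For reference, the standard properties named in the second axiom read as follows in their usual textbook form. The notation ($\mathcal{F}$, $\mathcal{G}$, $\phi$, $L_\phi$, $c$) is chosen here for illustration; whether the SNN loss satisfies the Lipschitz hypothesis is exactly what the axiom assumes.

```latex
% Subadditivity over the sum class F + G = { f + g : f in F, g in G }
\hat{\mathfrak{R}}_S(\mathcal{F} + \mathcal{G})
  \le \hat{\mathfrak{R}}_S(\mathcal{F}) + \hat{\mathfrak{R}}_S(\mathcal{G}).

% Scaling by a constant c
\hat{\mathfrak{R}}_S(c\,\mathcal{F}) = |c|\,\hat{\mathfrak{R}}_S(\mathcal{F}).

% Contraction (Talagrand's lemma): for \phi : R -> R that is L_\phi-Lipschitz
\hat{\mathfrak{R}}_S(\phi \circ \mathcal{F})
  \le L_\phi\,\hat{\mathfrak{R}}_S(\mathcal{F}).
```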

