Recognition: unknown

Relation Reasoning with LLMs in Expensive Optimization

Pith reviewed 2026-05-09 20:36 UTC · model grok-4.3

The pith

A large language model fine-tuned to reason about solution relations can guide evolutionary optimization of expensive problems without retraining.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

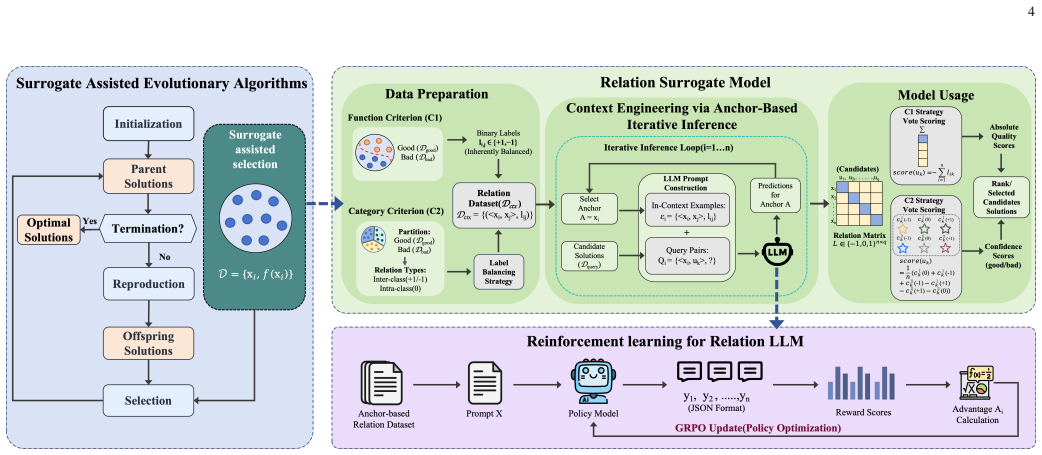

The paper establishes that relation-based surrogate modeling, cast as an in-context pairwise reasoning task inside a large language model, enables effective guidance within evolutionary algorithms for expensive black-box problems. Training the model on trajectories with GRPO, combined with anchor-based iterative context construction and voting-based aggregation of predicted relations into scores, produces improved relation prediction and state-of-the-art optimization results on single- and multi-objective benchmarks while allowing a zero-shot surrogate paradigm without per-generation retraining.

What carries the argument

The anchor-based iterative context construction strategy that reduces prompt complexity from quadratic to linear in population size, together with a voting-based aggregation scheme that converts predicted relations into scores for offspring selection.

Load-bearing premise

That the LLM's predictions of relations between candidate solutions, learned from evolutionary trajectories, will generalize reliably to new problems and supply accurate guidance for selection without any retraining during the search.

What would settle it

A test on held-out optimization benchmarks in which the R2SAEA algorithm produces final solution quality no better than strong SAEA baselines after the same evaluation budget, or in which the model's pairwise relation accuracy falls sharply on populations not seen during training.

Figures

read the original abstract

Expensive optimization problems (EOPs) are black-box tasks with costly objective evaluations and no gradient access, making the evaluation budget the key bottleneck. Surrogate-assisted evolutionary algorithms (SAEAs) reduce evaluations via surrogate predictions, but conventional surrogates often require frequent retraining as populations evolve, incurring overhead. This paper proposes R2SAEA, a reinforcement-trained relation-based large language model (LLM) surrogate assisted evolutionary algorithm. We cast relation-based surrogate modeling as an in-context pairwise reasoning task. To enable efficient inference in evolutionary loops, we develop an anchor-based iterative context construction strategy that reduces prompt complexity from quadratic to linear in population size, and a voting-based aggregation scheme that converts predicted relations into scores for offspring selection. We further build an RL pipeline from evolutionary trajectories and fine-tune Qwen2.5 with GRPO. Experiments on single- and multi-objective benchmarks show improved relation prediction and state-of-the-art optimization performance over strong SAEA baselines and general LLMs. Quantization also enables efficient edge deployment, supporting a zero-shot surrogate paradigm without per-generation retraining. Code and models are available at https://github.com/Septend9/R2SAEA.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes R2SAEA, a surrogate-assisted evolutionary algorithm (SAEA) for expensive optimization problems (EOPs) that replaces conventional surrogates with a relation-reasoning LLM. The LLM (Qwen2.5) is fine-tuned via GRPO on evolutionary trajectories to predict pairwise relations between candidate solutions. An anchor-based iterative context construction reduces prompt length from quadratic to linear in population size, and a voting aggregation converts relation predictions into scalar scores for offspring selection. The method is positioned as enabling a zero-shot surrogate paradigm without per-generation retraining. Experiments on single- and multi-objective benchmarks are reported to show improved relation prediction and state-of-the-art optimization performance relative to strong SAEA baselines and general LLMs; quantization for edge deployment is also demonstrated.

Significance. If the generalization claims hold, the work would be significant for the SAEA community by showing that relation-based LLM reasoning can serve as a reusable, low-overhead surrogate that avoids repeated retraining. The open release of code and models is a clear strength that supports reproducibility and follow-on research. The approach also opens a new direction for leveraging LLM reasoning capabilities in black-box optimization rather than direct value prediction.

major comments (2)

- [§4 (Training Pipeline)] §4 (Training Pipeline): The paper does not characterize the distribution of evolutionary trajectories used for GRPO fine-tuning (problem classes, dimensions, objective landscape features, or diversity metrics). This is load-bearing for the zero-shot generalization claim, because without such characterization it is impossible to determine whether reported gains on the test benchmarks reflect true out-of-distribution robustness or partial overlap with the training distribution.

- [§5 (Experimental Results)] §5 (Experimental Results): The optimization performance claims (SOTA over SAEA baselines) are presented without reported statistical tests, number of independent runs, or variance measures. In addition, the precise configuration of the “strong SAEA baselines” is not detailed enough to allow direct replication or assessment of whether the comparison is fair on evaluation budget and hyperparameter tuning.

minor comments (2)

- [Abstract] The abstract states “improved relation prediction” without naming the metric or reporting numerical gains; adding a brief quantitative statement would strengthen the summary.

- [§5] Figure captions and axis labels in the experimental plots should explicitly state the number of function evaluations used and whether shaded regions represent standard deviation or standard error.

Simulated Author's Rebuttal

We thank the referee for the constructive comments, which help strengthen the presentation of our work on R2SAEA. We address each major comment below and will incorporate revisions to improve clarity and rigor.

read point-by-point responses

-

Referee: [§4 (Training Pipeline)] The paper does not characterize the distribution of evolutionary trajectories used for GRPO fine-tuning (problem classes, dimensions, objective landscape features, or diversity metrics). This is load-bearing for the zero-shot generalization claim, because without such characterization it is impossible to determine whether reported gains on the test benchmarks reflect true out-of-distribution robustness or partial overlap with the training distribution.

Authors: We agree that a detailed characterization of the training trajectories is important for supporting the zero-shot generalization claims. In the revised manuscript, we will add a new subsection in §4 describing the distribution of evolutionary trajectories collected for GRPO fine-tuning. This will include the specific problem classes (e.g., CEC 2017/2020 single- and multi-objective benchmarks), dimension ranges (10D to 50D), objective landscape features (unimodal/multimodal, separable/non-separable), and diversity metrics such as average population variance and convergence statistics across the collected trajectories. revision: yes

-

Referee: [§5 (Experimental Results)] The optimization performance claims (SOTA over SAEA baselines) are presented without reported statistical tests, number of independent runs, or variance measures. In addition, the precise configuration of the “strong SAEA baselines” is not detailed enough to allow direct replication or assessment of whether the comparison is fair on evaluation budget and hyperparameter tuning.

Authors: We acknowledge that the current experimental reporting lacks sufficient statistical detail and baseline transparency. In the revised §5, we will explicitly state the number of independent runs (20 per benchmark instance), report mean performance with standard deviations, and include statistical significance tests (Wilcoxon rank-sum test with p-values) comparing R2SAEA against each baseline. We will also expand the baseline descriptions with a dedicated table listing exact configurations, including population sizes, surrogate hyperparameters, evaluation budgets per generation, and any tuning procedures used, to enable direct replication and confirm fairness of the comparisons. revision: yes

Circularity Check

No circularity; claims rest on independent benchmark evaluation after training on trajectories

full rationale

The paper trains the LLM surrogate via GRPO on evolutionary trajectories, then deploys the resulting model for relation prediction and offspring selection on separate single- and multi-objective benchmarks. The anchor-based context construction and voting aggregation are procedural engineering steps that convert LLM outputs into selection scores without defining the performance metric in terms of the training data itself. No equations, self-citations, or uniqueness theorems are invoked that reduce the reported SOTA gains to a fitted quantity or prior result by construction. Generalization to new EOPs is presented as an empirical outcome of the experiments rather than a definitional necessity.

Axiom & Free-Parameter Ledger

free parameters (1)

- GRPO and fine-tuning hyperparameters

axioms (2)

- domain assumption LLM pairwise relation predictions can be aggregated via voting into reliable scalar scores that improve evolutionary selection

- domain assumption Anchor-based iterative context construction retains sufficient relational information while reducing prompt complexity

Reference graph

Works this paper leans on

-

[1]

Evolutionary computation for ex- pensive optimization: A survey,

J.-Y . Li, Z.-H. Zhan, and J. Zhang, “Evolutionary computation for ex- pensive optimization: A survey,”Machine Intelligence Research, vol. 19, no. 1, pp. 3–23, 2022

2022

-

[2]

A survey on evolutionary neural architecture search,

Y . Liu, Y . Sun, B. Xue, M. Zhang, G. G. Yen, and K. C. Tan, “A survey on evolutionary neural architecture search,”IEEE Transactions on Neural Networks and Learning Systems, vol. 34, no. 2, pp. 550–570, 2023

2023

-

[3]

Derivative-free optimization via classifi- cation,

Y . Yu, H. Qian, and Y .-Q. Hu, “Derivative-free optimization via classifi- cation,” inProceedings of the AAAI Conference on Artificial Intelligence, vol. 30, no. 1, 2016

2016

-

[4]

Surrogate-assisted evolutionary computation: Recent advances and future challenges,

Y . Jin, “Surrogate-assisted evolutionary computation: Recent advances and future challenges,”Swarm and Evolutionary Computation, vol. 1, no. 2, pp. 61–70, 2011

2011

-

[5]

Dual relational surrogate-assisted evolution for expensive constrained multiobjective optimization,

S. Liu, P. Chen, and Q. Lin, “Dual relational surrogate-assisted evolution for expensive constrained multiobjective optimization,”IEEE Transac- tions on Evolutionary Computation, 2025. 13 TABLE IV: Performance of C1-Trained Models across Varying Parameter Sizes and Quantization Precisions on the Offline Test Set. 3B 7B Metric Qwen2.5-instruct ReLLM-C1 ReL...

2025

-

[6]

Comparison-based opti- mizers need comparison-based surrogates,

I. Loshchilov, M. Schoenauer, and M. Sebag, “Comparison-based opti- mizers need comparison-based surrogates,” inInternational conference on parallel problem solving from nature. Springer, 2010, pp. 364–373

2010

-

[7]

Large language models as surrogate models in evolutionary algorithms: A preliminary study,

H. Hao, X. Zhang, and A. Zhou, “Large language models as surrogate models in evolutionary algorithms: A preliminary study,”

-

[8]

Available: https://arxiv.org/abs/2406.10675

[Online]. Available: https://arxiv.org/abs/2406.10675

-

[9]

Expensive optimization via relation,

——, “Expensive optimization via relation,”IEEE Transactions on Evolutionary Computation, 2025

2025

-

[10]

DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models

Z. Shao, P. Wang, Q. Zhu, R. Xu, J. Song, X. Bi, H. Zhang, M. Zhang, Y . Li, Y . Wuet al., “Deepseekmath: Pushing the limits of mathematical reasoning in open language models,”arXiv preprint arXiv:2402.03300, 2024

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[11]

A review of surrogate-assisted evolutionary multi-objective optimization,

Q. Wang, Y . Zhang, D. Gong, X. Song, C. He, X. Ji, and F. Chu, “A review of surrogate-assisted evolutionary multi-objective optimization,” IEEE Transactions on Emerging Topics in Computational Intelligence, 2026

2026

-

[12]

Multiobjective evolutionary algorithms: A survey of the state of the art,

A. Zhou, B.-Y . Qu, H. Li, S.-Z. Zhao, P. N. Suganthan, and Q. Zhang, “Multiobjective evolutionary algorithms: A survey of the state of the art,”Swarm and evolutionary computation, vol. 1, no. 1, pp. 32–49, 2011

2011

-

[13]

Multiobjective optimization using coupled response surface model and evolutionary algorithm

Y . Lian and M.-S. Liou, “Multiobjective optimization using coupled response surface model and evolutionary algorithm.”AIAA journal, vol. 43, no. 6, pp. 1316–1325, 2005

2005

-

[14]

Surrogate-assisted co- operative swarm optimization of high-dimensional expensive problems,

C. Sun, Y . Jin, R. Cheng, J. Ding, and J. Zeng, “Surrogate-assisted co- operative swarm optimization of high-dimensional expensive problems,” IEEE Transactions on Evolutionary Computation, vol. 21, no. 4, pp. 644–660, 2017

2017

-

[15]

A gaussian process surrogate model assisted evolutionary algorithm for medium scale expensive optimization problems,

B. Liu, Q. Zhang, and G. G. Gielen, “A gaussian process surrogate model assisted evolutionary algorithm for medium scale expensive optimization problems,”IEEE Transactions on Evolutionary Computation, vol. 18, no. 2, pp. 180–192, 2013

2013

-

[16]

An approximated domination re- lationship based on binary classifiers for evolutionary multiobjective optimization,

H. Hao, A. Zhou, and H. Zhang, “An approximated domination re- lationship based on binary classifiers for evolutionary multiobjective optimization,” in2021 IEEE Congress on Evolutionary Computation (CEC). IEEE, 2021, pp. 2427–2434

2021

-

[17]

A classification- based surrogate-assisted evolutionary algorithm for expensive many- objective optimization,

L. Pan, C. He, Y . Tian, H. Wang, X. Zhang, and Y . Jin, “A classification- based surrogate-assisted evolutionary algorithm for expensive many- objective optimization,”IEEE Transactions on Evolutionary Computa- tion, vol. 23, no. 1, pp. 74–88, 2018

2018

-

[18]

Fuzzy-classification assisted solution preselection in evolutionary optimization,

A. Zhou, J. Zhang, J. Sun, and G. Zhang, “Fuzzy-classification assisted solution preselection in evolutionary optimization,” inProceedings of the AAAI conference on artificial intelligence, vol. 33, no. 01, 2019, pp. 2403–2410

2019

-

[19]

Binary relation learning and classifying for preselection in evolutionary algorithms,

H. Hao, J. Zhang, X. Lu, and A. Zhou, “Binary relation learning and classifying for preselection in evolutionary algorithms,”IEEE Transac- tions on Evolutionary Computation, vol. 24, no. 6, pp. 1125–1139, 2020

2020

-

[20]

Enhancing saeas with unevaluated solutions: a case study of relation model for expensive optimization,

H. Hao, X. Zhang, and A. Zhou, “Enhancing saeas with unevaluated solutions: a case study of relation model for expensive optimization,” Science China Information Sciences, vol. 67, no. 2, p. 120103, 2024

2024

-

[21]

Expensive multiobjective evolutionary op- timization assisted by dominance prediction,

Y . Yuan and W. Banzhaf, “Expensive multiobjective evolutionary op- timization assisted by dominance prediction,”IEEE Transactions on Evolutionary Computation, vol. 26, no. 1, pp. 159–173, 2021

2021

-

[22]

Expensive multiobjective optimization by relation learning and prediction,

H. Hao, A. Zhou, H. Qian, and H. Zhang, “Expensive multiobjective optimization by relation learning and prediction,”IEEE Transactions on Evolutionary Computation, vol. 26, no. 5, pp. 1157–1170, 2022

2022

-

[23]

Platemo: A matlab platform for evolutionary multi-objective optimization [educational forum],

Y . Tian, R. Cheng, X. Zhang, and Y . Jin, “Platemo: A matlab platform for evolutionary multi-objective optimization [educational forum],”IEEE Computational Intelligence Magazine, vol. 12, no. 4, pp. 73–87, 2017

2017

-

[24]

Evolutionary computation in the era of large language model: Survey and roadmap,

X. Wu, S. hao Wu, J. Wu, L. Feng, and K. C. Tan, “Evolutionary computation in the era of large language model: Survey and roadmap,” 2024

2024

-

[25]

Large language models as optimizers,

C. Yang, X. Wang, Y . Lu, H. Liu, Q. V . Le, D. Zhou, and X. Chen, “Large language models as optimizers,” 2024

2024

-

[26]

Evolution of heuristics: Towards efficient automatic algorithm design using large language mode,

F. Liu, X. Tong, M. Yuan, X. Lin, F. Luo, Z. Wang, Z. Lu, and Q. Zhang, “Evolution of heuristics: Towards efficient automatic algorithm design using large language mode,” 2024

2024

-

[27]

Algorithm evolution using large language model,

F. Liu, X. Tong, M. Yuan, and Q. Zhang, “Algorithm evolution using large language model,” 2023

2023

-

[28]

Large Language Models to Enhance Bayesian Optimization

T. Liu, N. Astorga, N. Seedat, and M. van der Schaar, “Large language models to enhance bayesian optimization,”arXiv preprint arXiv:2402.03921, 2024

-

[29]

Language model crossover: Variation through few-shot prompting

E. Meyerson, M. J. Nelson, H. Bradley, A. Moradi, A. K. Hoover, and J. Lehman, “Language model crossover: Variation through few-shot prompting,”arXiv preprint arXiv:2302.12170, 2023

-

[30]

Large language models as evolution strategies,

R. Lange, Y . Tian, and Y . Tang, “Large language models as evolution strategies,” inProceedings of the Genetic and Evolutionary Computation Conference Companion, 2024, pp. 579–582

2024

-

[31]

As-llm: When algorithm se- lection meets large language model,

X. Wu, Y . Zhong, J. Wu, and K. C. Tan, “As-llm: When algorithm se- lection meets large language model,”arXiv preprint arXiv:2311.13184, 2023

-

[32]

L-autoda: Leveraging large language models for automated decision-based adversarial attacks,

P. Guo, F. Liu, X. Lin, Q. Zhao, and Q. Zhang, “L-autoda: Leveraging large language models for automated decision-based adversarial attacks,” arXiv preprint arXiv:2401.15335, 2024

-

[33]

Optimus: Optimization modeling using MIP solvers and large language models

A. AhmadiTeshnizi, W. Gao, and M. Udell, “Optimus: Optimization modeling using mip solvers and large language models,”arXiv preprint arXiv:2310.06116, 2023

-

[34]

The openelm library: Leveraging progress in language models for novel evolutionary algorithms,

H. Bradley, H. Fan, T. Galanos, R. Zhou, D. Scott, and J. Lehman, “The openelm library: Leveraging progress in language models for novel evolutionary algorithms,” inGenetic Programming Theory and Practice XX. Springer, 2024, pp. 177–201

2024

-

[35]

Large language models as surrogate models in evolutionary algorithms: A preliminary study,

H. Hao, X. Zhang, and A. Zhou, “Large language models as surrogate models in evolutionary algorithms: A preliminary study,”Swarm and Evolutionary Computation, vol. 91, p. 101741, 2024

2024

-

[36]

Deep reinforcement learning from human preferences,

P. F. Christiano, J. Leike, T. Brown, M. Martic, S. Legg, and D. Amodei, “Deep reinforcement learning from human preferences,”Advances in neural information processing systems, 2017

2017

-

[37]

Learning to summarize with human feedback,

N. Stiennon, L. Ouyang, J. Wu, D. Ziegler, R. Lowe, C. V oss, A. Rad- ford, D. Amodei, and P. F. Christiano, “Learning to summarize with human feedback,”Advances in neural information processing systems, vol. 33, pp. 3008–3021, 2020

2020

-

[38]

Proximal Policy Optimization Algorithms

J. Schulman, F. Wolski, P. Dhariwal, A. Radford, and O. Klimov, “Prox- imal policy optimization algorithms,”arXiv preprint arXiv:1707.06347, 2017

work page internal anchor Pith review Pith/arXiv arXiv 2017

-

[39]

Z. Liu, X. Guo, Z. Yang, F. Lou, L. Zeng, M. Li, Q. Qi, Z. Liu, Y . Han, D. Cheng, X. Feng, H. J. Wang, C. Shi, and L. Zhang, “Fin-r1: A large language model for financial reasoning through reinforcement learning,” 2025. [Online]. Available: https://arxiv.org/abs/2503.16252

-

[40]

X. Dai, B. Xu, Z. Liu, Y . Yan, H. Xie, X. Yi, S. Wang, and G. Yu, “Legalδ: Enhancing legal reasoning in llms via reinforcement learning with chain-of-thought guided information gain,” 2025. [Online]. Available: https://arxiv.org/abs/2508.12281

-

[41]

Evaluating Test-Time Scaling LLMs for Legal Reasoning: OpenAI o1, DeepSeek-R1, and Beyond , url=

Y . Hu, Y . Yu, L. Gan, B. Wei, K. Kuang, and F. Wu, “Evaluating test-time scaling llms for legal reasoning: Openai o1, deepseek-r1, and beyond,” inFindings of the Association for Computational Linguistics: EMNLP 2025. Association for Computational Linguistics, 2025, p. 13759–13781. [Online]. Available: http://dx.doi.org/10.18653/v1/2025.findings-emnlp.742

-

[42]

K. Zhang, G. Xie, W. Yu, M. Xu, X. Tang, Y . Li, and J. Xu, “Legal mathematical reasoning with llms: Procedural alignment through two-stage reinforcement learning,” 2025. [Online]. Available: https://arxiv.org/abs/2504.02590 14

-

[43]

Z. Chu, S. Wang, J. Xie, T. Zhu, Y . Yan, J. Ye, A. Zhong, X. Hu, J. Liang, P. S. Yu, and Q. Wen, “Llm agents for education: Advances and applications,” 2025. [Online]. Available: https://arxiv.org/abs/2503.11733

-

[44]

S. Song, W. Liu, Y . Lu, R. Zhang, T. Liu, J. Lv, X. Wang, A. Zhou, F. Tan, B. Jiang, and H. Hao, “Cultivating helpful, personalized, and creative ai tutors: A framework for pedagogical alignment using reinforcement learning,” 2025. [Online]. Available: https://arxiv.org/abs/2507.20335

-

[45]

Benchmark functions for the cec’2008 special session and competition on high-dimensional real-parameter optimization,

K. Tang, X. Yao, P. Suganthan, C. MacNish, Y . Chen, C. Chen, and Z. Yang, “Benchmark functions for the cec’2008 special session and competition on high-dimensional real-parameter optimization,”Na- ture Inspired Computation and Applications Laboratory, USTC, Hefei, China, 2007

2008

-

[46]

Problem definitions and evaluation criteria for the cec 2010 competition on constrained real-parameter optimization,

R. Mallipeddi and P. N. Suganthan, “Problem definitions and evaluation criteria for the cec 2010 competition on constrained real-parameter optimization,”Nanyang Technological University, Singapore, vol. 24, p. 910, 2010

2010

-

[47]

A large population size can be unhelpful in evolutionary algorithms,

T. Chen, K. Tang, G. Chen, and X. Yao, “A large population size can be unhelpful in evolutionary algorithms,”Theoretical Computer Science, vol. 436, pp. 54–70, 2012

2012

-

[48]

Evolutionary algorithm with dynamic population size for constrained multiobjective optimiza- tion,

B.-C. Wang, Z.-Y . Shui, Y . Feng, and Z. Ma, “Evolutionary algorithm with dynamic population size for constrained multiobjective optimiza- tion,”Swarm and Evolutionary Computation, vol. 73, p. 101104, 2022

2022

-

[49]

The impact of population sizes and diversity on the adaptability of evolution strategies in dynamic environments,

L. Schonemann, “The impact of population sizes and diversity on the adaptability of evolution strategies in dynamic environments,” in Proceedings of the 2004 Congress on Evolutionary Computation (IEEE Cat. No. 04TH8753), vol. 2. IEEE, 2004, pp. 1270–1277

2004

-

[50]

An estimation of distribution algorithm with cheap and expensive local search methods,

A. Zhou, J. Sun, and Q. Zhang, “An estimation of distribution algorithm with cheap and expensive local search methods,”IEEE Transactions on Evolutionary Computation, vol. 19, no. 6, pp. 807–822, 2015

2015

-

[51]

On the robustness of a simple domain reduction scheme for simulation-based optimization,

N. Stander and K. J. Craig, “On the robustness of a simple domain reduction scheme for simulation-based optimization,”Engineering Com- putations, vol. 19, no. 4, pp. 431–450, 2002

2002

-

[52]

Efficient global optimiza- tion of expensive black-box functions,

D. R. Jones, M. Schonlau, and W. J. Welch, “Efficient global optimiza- tion of expensive black-box functions,”Journal of Global optimization, vol. 13, no. 4, pp. 455–492, 1998

1998

-

[53]

A surrogate-assisted multiswarm optimization algorithm for high-dimensional computationally expensive problems,

F. Li, X. Cai, L. Gao, and W. Shen, “A surrogate-assisted multiswarm optimization algorithm for high-dimensional computationally expensive problems,”IEEE transactions on cybernetics, vol. 51, no. 3, pp. 1390– 1402, 2020

2020

-

[54]

Hollander, D

M. Hollander, D. A. Wolfe, and E. Chicken,Nonparametric statistical methods. John Wiley & Sons, 2013

2013

-

[55]

Evolutionary programming made faster,

X. Yao, Y . Liu, and G. Lin, “Evolutionary programming made faster,” IEEE Transactions on Evolutionary computation, vol. 3, no. 2, pp. 82– 102, 1999

1999

-

[56]

A classification and pareto dom- ination based multiobjective evolutionary algorithm,

J. Zhang, A. Zhou, and G. Zhang, “A classification and pareto dom- ination based multiobjective evolutionary algorithm,” in2015 IEEE congress on evolutionary computation (CEC). IEEE, 2015, pp. 2883– 2890

2015

-

[57]

A surrogate-assisted reference vector guided evolutionary algorithm for computationally expensive many-objective optimization,

T. Chugh, Y . Jin, K. Miettinen, J. Hakanen, and K. Sindhya, “A surrogate-assisted reference vector guided evolutionary algorithm for computationally expensive many-objective optimization,”IEEE Trans- actions on Evolutionary Computation, vol. 22, no. 1, pp. 129–142, 2016

2016

-

[58]

A pairwise com- parison based surrogate-assisted evolutionary algorithm for expensive multi-objective optimization,

Y . Tian, J. Hu, C. He, H. Ma, L. Zhang, and X. Zhang, “A pairwise com- parison based surrogate-assisted evolutionary algorithm for expensive multi-objective optimization,”Swarm and Evolutionary Computation, vol. 80, p. 101323, 2023

2023

-

[59]

Solving multiobjective optimization problems using an artificial immune system,

C. A. C. Coello and N. C. Cort ´es, “Solving multiobjective optimization problems using an artificial immune system,”Genetic programming and evolvable machines, vol. 6, no. 2, pp. 163–190, 2005

2005

-

[60]

Scalable test problems for evolutionary multiobjective optimization,

K. Deb, L. Thiele, M. Laumanns, and E. Zitzler, “Scalable test problems for evolutionary multiobjective optimization,” inEvolutionary multiob- jective optimization: theoretical advances and applications. Springer, 2005, pp. 105–145

2005

-

[61]

A review of multiobjective test problems and a scalable test problem toolkit,

S. Huband, P. Hingston, L. Barone, and L. While, “A review of multiobjective test problems and a scalable test problem toolkit,”IEEE Transactions on Evolutionary Computation, vol. 10, no. 5, pp. 477–506, 2006

2006

-

[62]

A benchmark test suite for evolutionary many-objective optimization,

R. Cheng, M. Li, Y . Tian, X. Zhang, S. Yang, Y . Jin, and X. Yao, “A benchmark test suite for evolutionary many-objective optimization,” Complex & Intelligent Systems, vol. 3, no. 1, pp. 67–81, 2017

2017

-

[63]

J. H. Holland,Adaptation in natural and artificial systems: an intro- ductory analysis with applications to biology, control, and artificial intelligence. MIT press, 1992

1992

-

[64]

A. Hurst, A. Lerer, A. P. Goucher, A. Perelman, A. Ramesh, A. Clark, A. Ostrow, A. Welihinda, A. Hayes, A. Radfordet al., “Gpt-4o system card,”arXiv preprint arXiv:2410.21276, 2024

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[65]

G. Comanici, E. Bieber, M. Schaekermann, I. Pasupat, N. Sachdeva, I. Dhillon, M. Blistein, O. Ram, D. Zhang, E. Rosenet al., “Gem- ini 2.5: Pushing the frontier with advanced reasoning, multimodality, long context, and next generation agentic capabilities,”arXiv preprint arXiv:2507.06261, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[66]

Introducing Claude 4,

Anthropic, “Introducing Claude 4,” May 2025. [Online]. Available: https://www.anthropic.com/news/claude-4

2025

-

[67]

A. Yang, A. Li, B. Yang, B. Zhang, B. Hui, B. Zheng, B. Yu, C. Gao, C. Huang, C. Lvet al., “Qwen3 technical report,”arXiv preprint arXiv:2505.09388, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[68]

Qwen2.5 technical report,

Qwen, :, A. Yang, B. Yang, B. Zhang, B. Hui, B. Zheng, B. Yu, C. Li, D. Liu, F. Huang, H. Wei, H. Lin, J. Yang, J. Tu, J. Zhang, J. Yang, J. Yang, J. Zhou, J. Lin, K. Dang, K. Lu, K. Bao, K. Yang, L. Yu, M. Li, M. Xue, P. Zhang, Q. Zhu, R. Men, R. Lin, T. Li, T. Tang, T. Xia, X. Ren, X. Ren, Y . Fan, Y . Su, Y . Zhang, Y . Wan, Y . Liu, Z. Cui, Z. Zhang, ...

-

[69]

[Online]. Available: https://arxiv.org/abs/2412.15115

work page internal anchor Pith review Pith/arXiv arXiv

-

[70]

llama.cpp,

G. Gerganov, I. Kawrakow, and Contributors, “llama.cpp,” https://github.com/ggerganov/llama.cpp, 2023

2023

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.