Recognition: unknown

Enwar 3.0: An Agentic Multi-Modal LLM Orchestrator for Situation-Aware Beamforming, Blockage Prediction, and Handover Management

Pith reviewed 2026-05-08 01:52 UTC · model grok-4.3

The pith

An LLM orchestrates specialized agents to select beams, forecast blockages, and manage handovers in mmWave vehicular networks by first checking the health of camera, radar, LiDAR, and GPS inputs.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

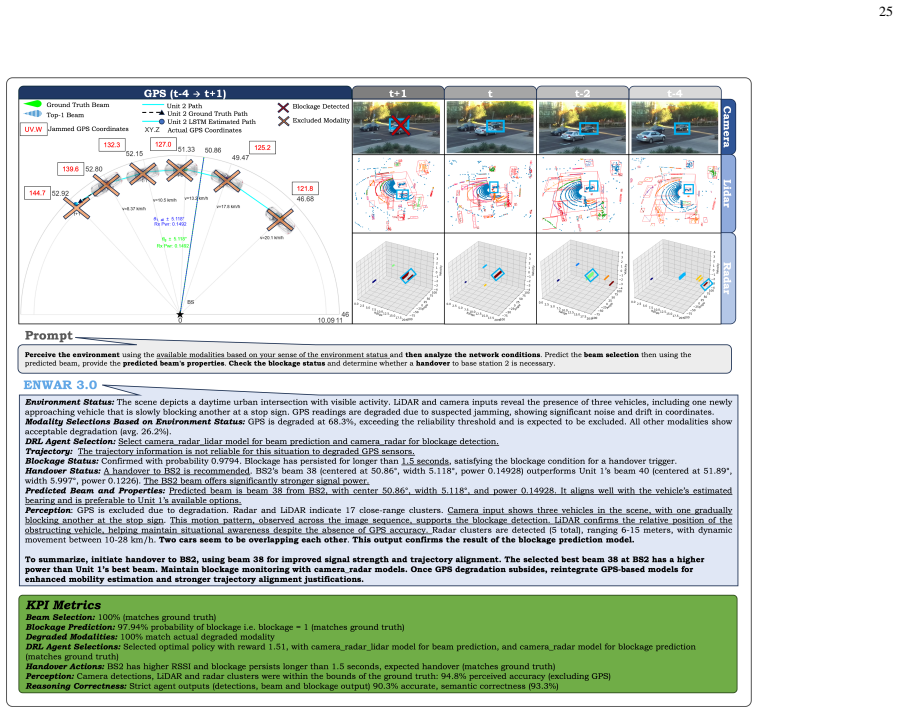

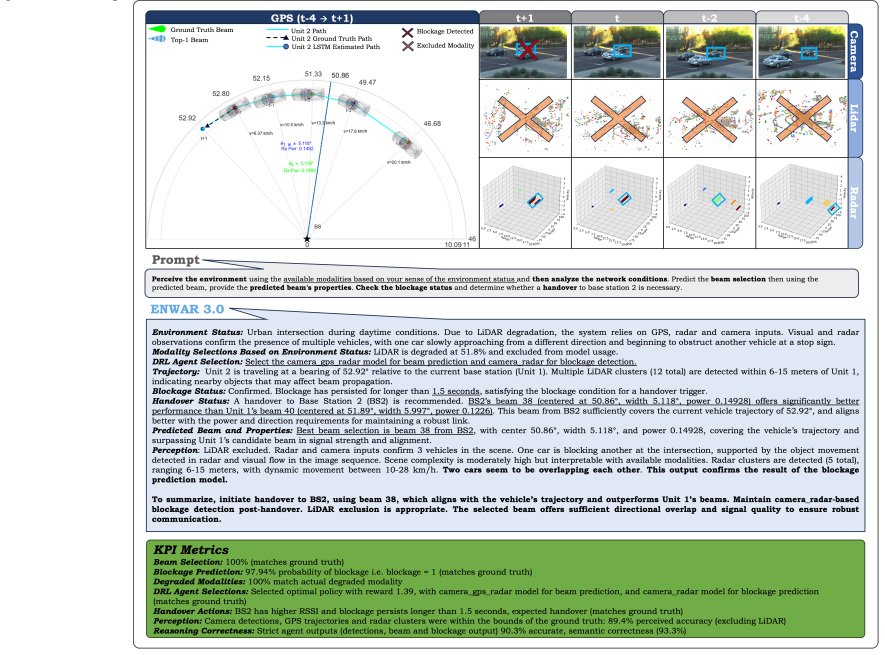

Enwar 3.0 unifies multi-modal sensing, a sensor-degradation classifier, and a primed LLM that orchestrates multiple agents through structured task-aware prompting, thereby enabling predictive beamforming, blockage detection, and handover management while dynamically selecting sensor-specific models according to environmental context and achieving the stated accuracy levels on the tested combinations.

What carries the argument

The agentic LLM orchestrator that receives sensor-health assessments from the degradation classifier and issues structured calls to specialized agents for beam selection, blockage forecasting, and handover management.

If this is right

- Real-time sensor-health classification allows the system to maintain connectivity when individual sensors fail or degrade.

- LLM-driven orchestration supplies interpretable reasoning for beam and handover choices on complex prompts.

- Context-driven model selection works across varied sensor input combinations without requiring separate training for each.

- The architecture provides a template for embedding language-model reasoning into other wireless adaptation loops.

Where Pith is reading between the lines

- The same orchestration pattern could be applied to non-vehicular high-frequency links where sensor reliability also changes rapidly.

- Reducing reliance on the synthetic training set by collecting limited real-world degradation examples would test whether the reported accuracies hold outside simulation.

- Integrating the LLM decisions with lower-latency edge hardware could make the full pipeline viable for sub-second adaptation cycles.

Load-bearing premise

The synthetic degradation pipeline used to train the sensor classifier accurately represents real-world impairments across camera, radar, LiDAR, and GPS, and the LLM orchestration generalizes beyond the fifteen tested sensor combinations to live deployment.

What would settle it

Running the deployed system on actual vehicles experiencing natural sensor degradations such as fog-obscured cameras or rain-induced radar interference and comparing measured beam-selection accuracy and blockage F1-scores against the reported figures without any retraining on real data.

Figures

read the original abstract

Maintaining robust millimeter-wave (mmWave) connectivity in vehicular networks requires real-time adaptation to environmental dynamics, sensor degradation, and link variability. This paper presents Enwar 3.0, an environment-aware reasoning framework that unifies multi-modal sensing, agentic large language models (LLMs), and context-driven model selection for predictive beamforming, blockage detection, and handover management. Building upon prior iterations of Enwar, the proposed architecture integrates a classifier-driven assessment of sensor health with a primed LLM that orchestrates multiple specialized agents through structured, task-aware prompting. A novel synthetic degradation pipeline enables the training of a sensor degradation classifier that detects real-time impairments across camera, radar, LiDAR, and GPS inputs, achieving over 99% accuracy. The LLM, trained via chain-of-thought (CoT) priming and human-in-the-loop feedback, coordinates agent calls for beam selection, blockage forecasting, and environment perception while dynamically loading sensor-specific models based on environmental context. Extensive evaluations across 15 sensor combinations demonstrate that Enwar 3.0 delivers state-of-the-art performance in both predictive accuracy and interpretability, with beam selection accuracy exceeding 88%, blockage F1-scores surpassing 98%, and reasoning correctness reaching 87% on complex decision prompts. This work establishes a scalable foundation for LLM-integrated wireless systems that reason, perceive, and adapt in real-time.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper presents Enwar 3.0, an agentic multi-modal LLM orchestrator for situation-aware beamforming, blockage prediction, and handover management in vehicular mmWave networks. It integrates multi-modal sensing, a classifier-driven sensor health assessment trained via a synthetic degradation pipeline (claimed >99% accuracy), and a primed LLM that orchestrates specialized agents through structured prompting for dynamic model selection. Evaluations across 15 sensor combinations are reported to achieve beam selection accuracy >88%, blockage F1-scores >98%, and 87% reasoning correctness on complex prompts, positioning the framework as a scalable foundation for LLM-integrated wireless systems.

Significance. If the empirical results prove robust under real-world conditions and the synthetic pipeline generalizes, the work could meaningfully advance LLM-orchestrated multi-agent approaches in dynamic wireless environments, offering a concrete example of context-aware model selection and interpretability in mmWave vehicular networks. The unification of sensing, reasoning, and adaptation is a timely direction, though its impact hinges on verifiable transfer beyond the reported synthetic evaluations.

major comments (2)

- [Abstract] Abstract: The abstract states beam selection accuracy exceeding 88%, blockage F1-scores surpassing 98%, and classifier accuracy over 99% across 15 sensor combinations, yet supplies no information on experimental setup, baselines, validation datasets, number of trials, or statistical significance. Without these details it is impossible to assess whether the data support the state-of-the-art claims.

- [Abstract] Abstract: The central performance numbers rest on a novel synthetic degradation pipeline used to train the multi-modal sensor health classifier, but the manuscript provides no quantitative evidence that the simulated impairments (fog, noise, occlusion, etc.) reproduce the joint statistics and temporal correlations of real vehicular mmWave sensor degradations. A substantial distribution shift would invalidate both the reported accuracies and the dynamic orchestration logic for live deployment.

minor comments (1)

- The description of the 15 sensor combinations and the precise definition of 'reasoning correctness' on complex decision prompts should be expanded for reproducibility.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our manuscript. We address each major comment point by point below, proposing revisions where they strengthen the presentation without altering the core contributions.

read point-by-point responses

-

Referee: [Abstract] Abstract: The abstract states beam selection accuracy exceeding 88%, blockage F1-scores surpassing 98%, and classifier accuracy over 99% across 15 sensor combinations, yet supplies no information on experimental setup, baselines, validation datasets, number of trials, or statistical significance. Without these details it is impossible to assess whether the data support the state-of-the-art claims.

Authors: We agree that the abstract's brevity limits immediate context for the reported metrics. The full manuscript details the experimental setup in Section 4 (including the simulation environment, sensor models, and 15 combinations), baselines in Section 5 (traditional beam selection and non-orchestrated LLM variants), validation on held-out synthetic test sets, multiple trials with variance reporting, and significance testing. To improve accessibility, we will revise the abstract to incorporate a concise clause on the evaluation methodology and dataset characteristics while respecting length constraints. revision: yes

-

Referee: [Abstract] Abstract: The central performance numbers rest on a novel synthetic degradation pipeline used to train the multi-modal sensor health classifier, but the manuscript provides no quantitative evidence that the simulated impairments (fog, noise, occlusion, etc.) reproduce the joint statistics and temporal correlations of real vehicular mmWave sensor degradations. A substantial distribution shift would invalidate both the reported accuracies and the dynamic orchestration logic for live deployment.

Authors: We acknowledge this as a substantive limitation. The pipeline models common impairments drawn from vehicular sensing literature, but the manuscript does not supply direct quantitative comparisons (e.g., distributional distances or correlation statistics) to real-world joint degradation statistics. This reflects practical constraints in acquiring labeled real mmWave sensor data at scale. In revision we will add an explicit limitations paragraph discussing potential distribution shift risks, their implications for the orchestration logic, and directions for future real-world validation, while clarifying that current results hold under the modeled conditions. revision: partial

Circularity Check

Minor self-citation to prior Enwar versions; central claims rest on independent empirical evaluations

full rationale

The paper references building upon prior Enwar iterations by the same authors, but this is not load-bearing for the reported results. All performance numbers (beam selection >88%, blockage F1 >98%, classifier >99%, reasoning 87%) are presented as outcomes of new experiments across 15 sensor combinations using a synthetic degradation pipeline for training and testing. No equations, derivations, or first-principles claims appear in the provided text that reduce by construction to fitted inputs or prior self-citations. The architecture description and model-selection logic are presented as engineering choices validated empirically rather than derived from self-referential premises.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption Multi-modal sensors provide complementary and useful information for real-time environment perception in vehicular scenarios.

- domain assumption Chain-of-thought priming and human-in-the-loop feedback enable LLMs to perform reliable task-aware coordination of specialized agents.

Reference graph

Works this paper leans on

-

[1]

Multi-modal sensor fusion for proactive blockage prediction in mmWave vehicular networks,

A. M. Nazar, A. Celik, M. Y . Selim, A. Abdallah, D. Qiao, and A. M. Eltawil, “Multi-modal sensor fusion for proactive blockage prediction in mmWave vehicular networks,” 2025. [Online]. Available: https://arxiv.org/abs/2507.15769

-

[2]

At the dawn of generative AI era: A tutorial-cum-survey on new frontiers in 6G wireless intelligence,

A. Celik and A. M. Eltawil, “At the dawn of generative AI era: A tutorial-cum-survey on new frontiers in 6G wireless intelligence,”IEEE Open Journal of the Comms. Soc., vol. 5, pp. 2433–2489, 2024

2024

-

[3]

H. Zou, Q. Zhao, L. Bariah, M. Bennis, and M. Debbah, “Wireless multi-agent generative AI: From connected intelligence to collective intelligence,” 2023. [Online]. Available: https://arxiv.org/abs/2307.02757

-

[4]

ENW AR 2.0: An agentic multimodal wireless LLM framework with reasoning, situation-aware explainability and beam tracking,

A. M. Nazar, A. Celik, M. Y . Selim, A. Abdallah, D. Qiao, and A. M. Eltawil, “ENW AR 2.0: An agentic multimodal wireless LLM framework with reasoning, situation-aware explainability and beam tracking,”IEEE Transactions on Mobile Computing, pp. 1–18, 2025

2025

-

[5]

ENW AR: A RAG-empowered multi-modal LLM framework for wireless environment perception,

——, “ENW AR: A RAG-empowered multi-modal LLM framework for wireless environment perception,”IEEE Comms. Magazine, 2026

2026

-

[6]

Encoders, roll out! A multi-modal sensor transfusion for proac- tive I2V beam prediction,

——, “Encoders, roll out! A multi-modal sensor transfusion for proac- tive I2V beam prediction,” 03 2025

2025

-

[7]

Large Multi-Modal Models (LMMs) as universal founda- tion models for AI-native wireless systems,

S. Xuet al., “Large Multi-Modal Models (LMMs) as universal founda- tion models for AI-native wireless systems,”IEEE Network, 2024

2024

-

[8]

TelecomRAG: Taming telecom standards with re- trieval augmented generation and LLMs,

G. M. Yilmaet al., “TelecomRAG: Taming telecom standards with re- trieval augmented generation and LLMs,”SIGCOMM Comput. Commun. Rev., Jan. 2025

2025

-

[9]

NextG-GPT: Leveraging GenAI for advancing wireless networks and communication research,

A. M. Nazar, M. Y . Selim, D. Qiao, and H. Zhang, “NextG-GPT: Leveraging GenAI for advancing wireless networks and communication research,” 2025. [Online]. Available: https://arxiv.org/abs/2505.19322

-

[10]

TelecomGPT: A framework to build telecom-specific large language models,

H. Zouet al., “TelecomGPT: A framework to build telecom-specific large language models,”IEEE Trans. on Machine Learning in Comms. and Networking, 2025

2025

-

[11]

Large language models empowered autonomous edge AI for connected intelligence,

Y . Shen, J. Shao, X. Zhang, Z. Lin, H. Pan, D. Li, J. Zhang, and K. B. Letaief, “Large language models empowered autonomous edge AI for connected intelligence,”IEEE Communications Magazine, 2024

2024

-

[12]

Large language model enhanced multi-agent systems for 6G communications,

F. Jianget al., “Large language model enhanced multi-agent systems for 6G communications,”IEEE Wireless Communications, 2024

2024

-

[13]

WirelessAgent: Large language model agents for intelligent wireless networks,

J. Tonget al., “WirelessAgent: Large language model agents for intelligent wireless networks,” 2025. [Online]. Available: https: //arxiv.org/abs/2505.01074 17

-

[14]

WirelessLLM: Empowering large language models towards wireless intelligence,

J. Shaoet al., “WirelessLLM: Empowering large language models towards wireless intelligence,”Journal of Comms. and Information Networks, 2024

2024

-

[15]

When large language model agents meet 6G networks: Perception, grounding, and alignment,

M. Xuet al., “When large language model agents meet 6G networks: Perception, grounding, and alignment,” 2024

2024

-

[16]

WirelessGPT: A generative pre-trained multi-task learning framework for wireless communication,

T. Yang, P. Zhang, M. Zheng, Y . Shi, L. Jing, J. Huang, and N. Li, “WirelessGPT: A generative pre-trained multi-task learning framework for wireless communication,”IEEE Network, 2025

2025

-

[17]

The llama 3 herd of models,

L. Team, “The llama 3 herd of models,” Jul 2024. [Online]. Available: https://ai.meta.com/research/publications/the-llama-3-herd-of-models/

2024

-

[18]

Visual instruction tuning,

H. Liu, C. Li, Q. Wu, and Y . J. Lee, “Visual instruction tuning,” inProc. Adv. Neural Inf. Process. Syst. (NeurIPS), 2023

2023

-

[19]

Vision-language models for vision tasks: A survey,

J. Zhang, J. Huang, S. Jin, and S. Lu, “Vision-language models for vision tasks: A survey,”IEEE Trans. on Pattern Analysis and Machine Intelligence, 2024

2024

-

[20]

Radar aided proactive blockage prediction in real-world millimeter wave systems,

U. Demirhan and A. Alkhateeb, “Radar aided proactive blockage prediction in real-world millimeter wave systems,” 2021. [Online]. Available: https://arxiv.org/abs/2111.14805

-

[21]

Millimeter-wave vehicular communication to support massive automotive sensing,

J. Choi, V . Va, N. Gonzalez-Prelcic, R. Daniels, C. R. Bhat, and R. W. Heath, “Millimeter-wave vehicular communication to support massive automotive sensing,”IEEE Communications Magazine, 2016

2016

-

[22]

Multi-agent deep reinforcement learning for beam training in cell-free RIS-aided systems,

A. Abdallah, A. Celik, M. M. Mansour, and A. M. Eltawil, “Multi-agent deep reinforcement learning for beam training in cell-free RIS-aided systems,”IEEE Transactions on Wireless Communications, 2024

2024

-

[23]

Multi-cell multi-beam prediction using auto-encoder LSTM for mmWave systems,

S. H. A. Shah and S. Rangan, “Multi-cell multi-beam prediction using auto-encoder LSTM for mmWave systems,”IEEE Transactions on Wireless Communications, vol. 21, no. 12, pp. 10 366–10 380, 2022

2022

-

[24]

Millimeter wave base stations with cameras: Vision aided beam and blockage prediction,

M. Alrabeiah, A. Hredzak, and A. Alkhateeb, “Millimeter wave base stations with cameras: Vision aided beam and blockage prediction,”

-

[25]

Available: https://arxiv.org/abs/1911.06255

[Online]. Available: https://arxiv.org/abs/1911.06255

-

[26]

LiDAR aided future beam prediction in real-world millimeter wave V2I communications,

S. Jiang, G. Charan, and A. Alkhateeb, “LiDAR aided future beam prediction in real-world millimeter wave V2I communications,”IEEE Wireless Communications Letters, pp. 1–1, 2022

2022

-

[27]

Vehicular blockage modelling and performance analysis for mmWave V2V com- munications,

K. Dong, M. Mizmizi, D. Tagliaferri, and U. Spagnolini, “Vehicular blockage modelling and performance analysis for mmWave V2V com- munications,” inIEEE International Conference on Comms., 2022

2022

-

[28]

Wireless agentic AI with retrieval-augmented multimodal semantic perception,

G. Liuet al., “Wireless agentic AI with retrieval-augmented multimodal semantic perception,”IEEE Communications Magazine, 2026

2026

-

[29]

Task-oriented semantic com- munication in large multimodal models-based vehicle networks,

B. Du, H. Du, D. Niyato, and R. Li, “Task-oriented semantic com- munication in large multimodal models-based vehicle networks,”IEEE Transactions on Mobile Computing, 2025

2025

-

[30]

Explainable and robust artificial intelligence for trustworthy resource management in 6G networks,

N. Khan, S. Coleri, A. Abdallah, A. Celik, and A. M. Eltawil, “Explainable and robust artificial intelligence for trustworthy resource management in 6G networks,”IEEE Communications Magazine, 2024

2024

-

[31]

Ex- plainable and robust millimeter wave beam alignment for AI-native 6G networks,

N. Khan, A. Abdallah, A. Celik, A. M. Eltawil, and S. Coleri, “Ex- plainable and robust millimeter wave beam alignment for AI-native 6G networks,”arXiv preprint arXiv:2501.17883, 2025

-

[32]

Explainable AI-aided feature selection and model reduction for DRL-based V2X resource allocation,

——, “Explainable AI-aided feature selection and model reduction for DRL-based V2X resource allocation,”IEEE Trans. on Comms., 2025

2025

-

[33]

Large wireless model (LWM): A foundation model for wireless channels,

S. Alikhani, G. Charan, and A. Alkhateeb, “Large wireless model (LWM): A foundation model for wireless channels,” 2025. [Online]. Available: https://arxiv.org/abs/2411.08872

-

[34]

A new paradigm of user-centric wireless communication driven by large language models,

K. Ding, C. Guo, Y . Yang, W. Hu, and Y . C. Eldar, “A new paradigm of user-centric wireless communication driven by large language models,”

-

[35]

Available: https://arxiv.org/abs/2504.11696

[Online]. Available: https://arxiv.org/abs/2504.11696

-

[36]

NetOrchLLM: Mastering wireless network orchestration with large language models,

A. Abdallah, A. Albaseer, A. Celik, M. Abdallah, and A. M. Eltawil, “NetOrchLLM: Mastering wireless network orchestration with large language models,”arXiv preprint arXiv:2412.10107, 2024

-

[37]

Agentic large language models: A survey.arXiv preprint arXiv:2503.23037, 2025

A. Plaat, M. van Duijn, N. van Stein, M. Preuss, P. van der Putten, and K. J. Batenburg, “Agentic large language models, a survey,” 2025. [Online]. Available: https://arxiv.org/abs/2503.23037

-

[38]

Chain-of-thought prompting elicits reasoning in large language models,

J. Weiet al., “Chain-of-thought prompting elicits reasoning in large language models,” inProc. Adv. Neural Inf. Process. Syst. (NeurIPS), Dec. 2022

2022

-

[39]

Language models are few-shot learners,

T. Brown, B. Mann, N. Ryder, M. Subbiah, J. D. Kaplan, P. Dhariwal, A. Neelakantan, P. Shyam, G. Sastry, A. Askellet al., “Language models are few-shot learners,” inProc. Adv. Neural Inf. Process. Syst. (NeurIPS), Dec. 2020

2020

-

[40]

5G 3GPP-like channel models for outdoor urban microcellular and macrocellular environments,

K. Hanedaet al., “5G 3GPP-like channel models for outdoor urban microcellular and macrocellular environments,” in2016 IEEE 83rd Vehicular Technology Conference (VTC Spring). IEEE, May 2016. [Online]. Available: http://dx.doi.org/10.1109/VTCSpring.2016.7503971

-

[41]

Proximal Policy Optimization Algorithms

J. Schulman, F. Wolski, P. Dhariwal, A. Radford, and O. Klimov, “Proximal policy optimization algorithms,” 2017. [Online]. Available: https://arxiv.org/abs/1707.06347

work page internal anchor Pith review arXiv 2017

-

[42]

Multi-modal beam prediction challenge 2022: Towards generalization,

G. Charan, U. Demirhan, J. Morais, A. Behboodi, H. Pezeshki, and A. Alkhateeb, “Multi-modal beam prediction challenge 2022: Towards generalization,” 2022. [Online]. Available: https://arxiv.org/abs/2209. 07519

2022

-

[43]

DeepSense 6G: A large-scale real-world multi-modal sensing and comm. dataset,

A. Alkhateeb, G. Charan, T. Osman, A. Hredzak, J. Morais, U. Demirhan, and N. Srinivas, “DeepSense 6G: A large-scale real-world multi-modal sensing and comm. dataset,”IEEE Comm. Mag., 2023

2023

-

[44]

LiDAR Filtering in 3D Object Detection Based on Improved RANSAC,

B. Wang, J. Lan, and J. Gao, “LiDAR Filtering in 3D Object Detection Based on Improved RANSAC,”Remote Sensing, 2022

2022

-

[45]

Pointnet: Deep learning on point sets for 3D classification and segmentation,

C. R. Qi, H. Su, K. Mo, and L. J. Guibas, “Pointnet: Deep learning on point sets for 3D classification and segmentation,” 2017. [Online]. Available: https://arxiv.org/abs/1612.00593

-

[46]

[Online]

OpenAI. [Online]. Available: https://chat.openai.com/chat

-

[47]

DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning

D. Guoet al., “DeepSeek-R1: Incentivizing reasoning capability in LLMs via reinforcement learning,” 2025. [Online]. Available: https://arxiv.org/abs/2501.12948

work page internal anchor Pith review arXiv 2025

-

[48]

A. Yanget al., “Qwen2.5 technical report,” 2025. [Online]. Available: https://arxiv.org/abs/2412.15115 Ahmad M. Nazar(Member, IEEE) is a Postdoc- toral Scholar in the Department of Electrical and Computer Engineer at Iowa State University (ISU), USA and a Lead Research Engineer at Gladio- lus Technological Institute, USA. He received a Ph.D. degree in Com...

work page internal anchor Pith review arXiv 2025

-

[49]

[11], [14]–[16] Integration of LLMs, GPTs, and AI in 6G architectures for intent-driven, intelligent network operations

Perspectives on large multi-modal models with causal reasoning and neuro-symbolic AI for 6G Networks [8]–[10] Introduces RAG LLM frameworks for wireless systems within domain-specific datasets for context-aware, real-time support in network and telecom domains. [11], [14]–[16] Integration of LLMs, GPTs, and AI in 6G architectures for intent-driven, intell...

-

[50]

LLaVa vision-language model

-

[51]

[20]–[26] Machine learning methods incorporating sensor-based blockage/beam prediction

Survey on vision-language models. [20]–[26] Machine learning methods incorporating sensor-based blockage/beam prediction. [27], [28] Task-oriented semantic communication using large multi-modal model, agents, and RAG for efficient bandwidth data exchange in vehicular environments. [29]–[31] Frameworks for explainable and robust AI solutions in 6G networks...

-

[52]

[33] LLM framework transforming user requests into intent-focused structured optimization tasks/queries for real-time wireless semantic communication systems

Large Wireless Model, a fine-tuned LLM for wireless communication-based solutions. [33] LLM framework transforming user requests into intent-focused structured optimization tasks/queries for real-time wireless semantic communication systems

-

[53]

Chain-of-Thought prompting techniques and how it improves reasoning in LLMs

-

[54]

Few-shot learning methods and its effects on LLMs

-

[55]

vehicle at 33.420, -111.929 heading NE at 12 km/h

Deep-learning based PPO algorithm. [40], [41] I2V dataset used within ENWAR3.0. [42], [43] LiDAR preprocessing, and PointNet architecture. APPENDIXB LLM PRIMING This section presents the prompt template used for LLM priming, along with a three-iteration example with reward- guided human feedback and iterative response refinement. A. Main Priming Template ...

-

[56]

Each frame passes through three convolutional layers with ReLU activa- tions, followed by flattening and a single-layer LSTM with 128 hidden units

Camera Encoder:The camera encoder extracts spa- tial–temporal features from RGB sequences. Each frame passes through three convolutional layers with ReLU activa- tions, followed by flattening and a single-layer LSTM with 128 hidden units. The final hidden state is used as the compact visual representation

-

[57]

The final hidden vector is projected through a fully connected layer to encode trajectory dynamics

GPS Encoder:The GPS encoder processes normalized displacement, velocity, and angular features using a two-layer LSTM (128 hidden units). The final hidden vector is projected through a fully connected layer to encode trajectory dynamics

-

[58]

Each point cloud frame is pro- cessed via three 1D convolutions (kernel size 1) with ReLU activations, followed by max pooling

LiDAR Encoder:The LiDAR encoder follows a PointNet-based design [43]. Each point cloud frame is pro- cessed via three 1D convolutions (kernel size 1) with ReLU activations, followed by max pooling. Processed frames are passed to a single-layer LSTM (128 hidden units) to capture temporal evolution

-

[59]

The final hidden state captures reflectivity and motion cues relevant to beam selection

Radar Encoder:The radar encoder transforms radar tensors into spatiotemporal embeddings using three fully con- nected layers with ReLU activations followed by an LSTM (128 hidden units). The final hidden state captures reflectivity and motion cues relevant to beam selection

-

[60]

Encoder out- puts are concatenated and passed through two fully connected layers with ReLU and dropout to produce a unified repre- sentation

Early Fusion:A key design element in this pipeline is early feature fusion pre-transformer processing. Encoder out- puts are concatenated and passed through two fully connected layers with ReLU and dropout to produce a unified repre- sentation. This early fusion enables the transformer to learn inter-modal dependencies from semantically aligned features

-

[61]

The output encodes high-level relationships across modalities

Transformer Block:The transformer block models cross-modal and temporal dependencies via multi-head self- attention, residual connections, layer normalization, dropout, and a two-layer feed-forward network. The output encodes high-level relationships across modalities

-

[62]

The beam with the highest score is selected as the optimal beam

Output Layer:A final fully connected layer maps the transformer output to aQ-dimensional beam score vector. The beam with the highest score is selected as the optimal beam. APPENDIXE DETAILEDPREDICTIONAGENTS’ MODELARCHITECTURE This section illustrates each prediction agent’s internal three stage architecture: 1) data preprocessing, 2) feature extraction a...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.