Contrastive Regularization for Accent-Robust ASR

Pith reviewed 2026-05-07 13:28 UTC · model grok-4.3

The pith

Utterance-level contrastive loss regularizes ASR encoders to reduce accent sensitivity without model changes or accent labels.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Supervised contrastive learning (SupCon) serves as a lightweight, accent-invariant auxiliary objective for CTC fine-tuning. An utterance-level contrastive loss regularizes encoder representations without architectural modification or explicit accent supervision. Experiments on the L2-ARCTIC benchmark show consistent WER reductions across multiple pretrained encoders, with up to 25-29% relative reduction under unseen-accent evaluation. Analysis using within-transcript cosine dispersion indicates that SupCon promotes more compact and stable representation geometry under accent variability.

What carries the argument

Utterance-level supervised contrastive loss (SupCon) applied during CTC fine-tuning to pull same-transcript encoder representations together and thereby increase accent invariance.
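As a rough illustration of the mechanism described above, the following NumPy sketch computes a supervised contrastive loss in which utterances sharing a transcript are treated as positives. It is not taken from the paper: the function name `supcon_loss`, the temperature default, the use of one pooled vector per utterance, and the averaging over anchors are all assumptions made for the example.

```python
import numpy as np

def supcon_loss(embeddings, transcript_ids, temperature=0.1):
    """Supervised contrastive loss over one batch of utterance embeddings.

    embeddings: (B, D) array, one pooled vector per utterance (assumed pooling).
    transcript_ids: length-B array; equal ids mark positive pairs.
    temperature: softmax temperature (hypothetical default, not from the paper).
    """
    z = np.asarray(embeddings, dtype=float)
    z = z / np.linalg.norm(z, axis=1, keepdims=True)  # cosine geometry
    ids = np.asarray(transcript_ids)
    not_self = ~np.eye(len(z), dtype=bool)
    positives = (ids[:, None] == ids[None, :]) & not_self

    n_pos = positives.sum(axis=1)
    has_pos = n_pos > 0
    if not has_pos.any():
        return 0.0  # no same-transcript pair in this batch

    # Log-softmax over all other utterances in the batch (self excluded).
    sim = np.where(not_self, z @ z.T / temperature, -np.inf)
    row_max = sim.max(axis=1, keepdims=True)
    log_denom = row_max + np.log(np.exp(sim - row_max).sum(axis=1, keepdims=True))
    log_prob = sim - log_denom

    # Mean log-probability of the positives, for anchors that have any.
    pos_log_prob = np.where(positives, log_prob, 0.0).sum(axis=1)
    return float(-(pos_log_prob[has_pos] / n_pos[has_pos]).mean())
```

Because positives are defined by transcript identity only, no accent label enters the computation, which matches the claim that the method needs no explicit accent supervision.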

If this is right

- Consistent word error rate reductions occur across multiple pretrained encoders when the contrastive objective is added to standard CTC fine-tuning.

- Relative WER improvements reach 25-29% specifically on accents held out from training.

- Within-transcript cosine dispersion decreases, indicating more compact representation clusters despite accent variation.

- The method applies without any change to model architecture or need for accent labels.

- It functions as a model-agnostic regularization step that can be inserted into existing self-supervised pretraining plus CTC pipelines.
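The dispersion claim in the list above can be made concrete with a small sketch. The paper's exact definition of within-transcript cosine dispersion is not given on this page, so the version below is an assumption: for each transcript, it takes the mean pairwise cosine distance (one minus cosine similarity) among that transcript's utterance embeddings, then averages over transcripts. The function name is hypothetical.

```python
import numpy as np

def within_transcript_dispersion(embeddings, transcript_ids):
    """Mean pairwise cosine distance among utterances sharing a transcript.

    This is one plausible definition, assumed for illustration only.
    Transcripts with a single utterance are skipped.
    """
    z = np.asarray(embeddings, dtype=float)
    z = z / np.linalg.norm(z, axis=1, keepdims=True)
    ids = np.asarray(transcript_ids)

    per_transcript = []
    for tid in np.unique(ids):
        group = z[ids == tid]
        n = len(group)
        if n < 2:
            continue
        cos = group @ group.T
        # Average over the n*(n-1) off-diagonal entries of the similarity matrix.
        mean_cos = (cos.sum() - np.trace(cos)) / (n * (n - 1))
        per_transcript.append(1.0 - mean_cos)

    return float(np.mean(per_transcript)) if per_transcript else float("nan")
```

Under this definition a lower value means the same sentence spoken in different accents lands closer together in embedding space. Note that the grouping is by transcript, the same grouping the loss uses for positives.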

Where Pith is reading between the lines

- The same regularization might also improve robustness to other uncontrolled factors such as background noise or speaking rate, since the loss operates on transcript identity rather than accent identity.

- Combining the contrastive term with existing data-augmentation strategies could produce additive gains on more challenging test distributions.

- Testing the approach on larger, multi-accent corpora would reveal whether the observed geometry changes scale with data diversity.

- Deployment in production systems serving international users could benefit from this lightweight addition if the WER gains hold on real-world traffic.

Load-bearing premise

That the within-transcript cosine dispersion metric reliably indicates accent invariance and that gains on the L2-ARCTIC benchmark generalize beyond the tested accents and models.

What would settle it

The claimed robustness benefit would be falsified if adding the contrastive loss produced no measurable drop in word error rate or within-transcript cosine dispersion when evaluated on a previously unseen set of accents or with a different family of pretrained encoders.

Original abstract

ASR systems based on self-supervised acoustic pretraining and CTC fine-tuning achieve strong performance on native speech but remain sensitive to accent variability. We investigate supervised contrastive learning (SupCon) as a lightweight, accent-invariant auxiliary objective for CTC fine-tuning. An utterance-level contrastive loss regularizes encoder representations without architectural modification or explicit accent supervision. Experiments on the L2-ARCTIC benchmark show consistent WER reductions across multiple pretrained encoders, with up to 25-29% relative reduction under unseen-accent evaluation. Analysis using within-transcript cosine dispersion indicates that SupCon promotes more compact and stable representation geometry under accent variability. Overall, SupCon provides an effective and model-agnostic regularization strategy for improving accent robustness.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims that an utterance-level supervised contrastive (SupCon) loss can be used as a lightweight auxiliary objective during CTC fine-tuning of pretrained ASR encoders to regularize representations for improved accent robustness, without architectural changes or explicit accent labels. On the L2-ARCTIC benchmark, this yields consistent WER reductions across multiple encoders, with relative gains up to 25-29% in unseen-accent evaluation settings. The authors further support the approach with an analysis showing reduced within-transcript cosine dispersion, indicating more compact representation geometry under accent variability.

Significance. If the empirical gains and mechanistic interpretation hold, the work provides a practical, model-agnostic regularization strategy for accent-robust ASR that requires no additional supervision. The consistent improvement across several pretrained encoders is a positive aspect that strengthens the case for broad applicability. However, the significance is tempered by the need for stronger validation that the gains reflect accent invariance rather than benchmark-specific effects.

major comments (2)

- [Analysis] Analysis section: The within-transcript cosine dispersion metric is computed using transcript identity to define groups, which is the identical grouping used to select positive pairs in the SupCon loss. This makes the metric confirmatory of the loss's direct objective rather than independent evidence that representations have become invariant to accent (as opposed to simply more compact within transcripts).

- [Experiments] Experiments section: The reported WER reductions lack accompanying details on statistical significance testing, exact hyperparameter values for the contrastive loss weighting coefficient, baseline configurations, and the precise unseen-accent data splits on L2-ARCTIC. These omissions make it difficult to assess whether the up to 25-29% relative gains are robust or reproducible.

minor comments (1)

- [Abstract] Abstract: The phrasing '25 -- 29% relative reduction' would benefit from a brief qualifier indicating the specific models or conditions under which the upper end is achieved.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our work. We address each major comment below and have made revisions to improve the manuscript's clarity, rigor, and reproducibility.

-

Referee: [Analysis] Analysis section: The within-transcript cosine dispersion metric is computed using transcript identity to define groups, which is the identical grouping used to select positive pairs in the SupCon loss. This makes the metric confirmatory of the loss's direct objective rather than independent evidence that representations have become invariant to accent (as opposed to simply more compact within transcripts).

Authors: We agree that the within-transcript cosine dispersion metric relies on the same transcript-based grouping as the SupCon loss, rendering it confirmatory of the loss objective rather than fully independent validation of accent invariance. The metric was included to demonstrate the geometric impact of regularization on content-matched utterances (which vary in accent within L2-ARCTIC). In the revised manuscript, we have updated the analysis section to explicitly acknowledge this relationship, tempered the interpretation to focus on compactness under content-matched conditions, and added a brief discussion of its limitations as standalone evidence for accent invariance. We also include a supplementary figure showing dispersion trends across different groupings to provide additional context.

revision: partial

-

Referee: [Experiments] Experiments section: The reported WER reductions lack accompanying details on statistical significance testing, exact hyperparameter values for the contrastive loss weighting coefficient, baseline configurations, and the precise unseen-accent data splits on L2-ARCTIC. These omissions make it difficult to assess whether the up to 25-29% relative gains are robust or reproducible.

Authors: We acknowledge these omissions in the original submission. The revised manuscript now incorporates: statistical significance testing via bootstrap resampling with reported p-values for all WER comparisons; the exact contrastive loss weighting coefficient (λ = 0.1); complete baseline configuration details including all pretrained encoders, fine-tuning schedules, and optimization settings; and the precise L2-ARCTIC unseen-accent splits (holding out Arabic, Mandarin, and Spanish speakers for evaluation while training on the remaining accents). These additions enable full reproducibility and allow assessment of the robustness of the reported relative gains.

revision: yes
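For readers unfamiliar with bootstrap testing of WER differences, the sketch below shows one common variant: a paired bootstrap over test utterances. The response above does not say which bootstrap procedure was used, so the pairing, the one-sided p-value, the function name, and all parameter defaults here are assumptions for illustration.

```python
import numpy as np

def paired_bootstrap_wer(errors_base, errors_sys, ref_words,
                         n_resamples=10_000, seed=0):
    """Paired bootstrap for the WER difference between two systems.

    errors_base, errors_sys: per-utterance word error counts for the baseline
        and the contrastively regularized system, on the same utterances.
    ref_words: per-utterance reference word counts.
    Returns (observed WER reduction, one-sided p-value), where the p-value is
    the fraction of resamples in which the system is not better than baseline.
    """
    errors_base = np.asarray(errors_base, dtype=float)
    errors_sys = np.asarray(errors_sys, dtype=float)
    ref_words = np.asarray(ref_words, dtype=float)

    rng = np.random.default_rng(seed)
    n = len(ref_words)
    # Each row is one resampled test set, drawn with replacement.
    idx = rng.integers(0, n, size=(n_resamples, n))

    words = ref_words[idx].sum(axis=1)
    delta = (errors_base[idx].sum(axis=1) - errors_sys[idx].sum(axis=1)) / words

    observed = (errors_base.sum() - errors_sys.sum()) / ref_words.sum()
    p_value = float(np.mean(delta <= 0.0))
    return float(observed), p_value
```

Resampling utterances jointly for both systems keeps the comparison paired, so utterance difficulty cancels out of the difference. Resampling by speaker instead of by utterance would be a stricter choice for a corpus with few speakers per accent.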

Circularity Check

No significant circularity; empirical application of standard loss with independent evaluation

The paper applies the standard supervised contrastive (SupCon) loss as an auxiliary objective during CTC fine-tuning of pretrained ASR encoders, without deriving new equations or claiming first-principles predictions. Reported gains consist of measured WER reductions on the L2-ARCTIC benchmark (including unseen-accent splits) plus post-hoc analysis of within-transcript cosine dispersion. These outcomes are obtained via direct experimentation rather than any reduction of results to fitted parameters, self-citations, or quantities defined by the inputs. The contrastive formulation is a known technique used here in a model-agnostic way; the evaluation metrics and benchmark results remain externally falsifiable and do not collapse to the training objective by construction. No load-bearing self-citation chains or ansatz smuggling appear in the provided claims.

Axiom & Free-Parameter Ledger

free parameters (1)

- contrastive loss weighting coefficient
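To show where this free parameter would enter, here is a sketch of the combined objective. The additive form is an assumption, since the page only states that SupCon is an auxiliary objective with a weighting coefficient; the SupCon term follows the standard formulation of Khosla et al., with positives defined by shared transcript as the page describes. The symbols $\lambda$, $\tau$, $z_i$, $P(i)$ and $A(i)$ are introduced here for illustration.

```latex
\mathcal{L} = \mathcal{L}_{\mathrm{CTC}} + \lambda \, \mathcal{L}_{\mathrm{SupCon}},
\qquad
\mathcal{L}_{\mathrm{SupCon}} = \sum_{i \in I} \frac{-1}{|P(i)|}
  \sum_{p \in P(i)} \log
  \frac{\exp(z_i \cdot z_p / \tau)}{\sum_{a \in A(i)} \exp(z_i \cdot z_a / \tau)}
```

Here $z_i$ is the normalized utterance-level embedding of utterance $i$, $A(i)$ is the set of all other utterances in the batch, $P(i) \subseteq A(i)$ contains those with the same transcript as $i$, and $\tau$ is a temperature. Strictly, $\tau$ would be a second free parameter alongside $\lambda$.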

axioms (1)

- domain assumption: Utterance-level representations from pretrained encoders can be made more accent-invariant through contrastive regularization without explicit accent labels.

Reference graph

Works this paper leans on

-

[1]

Introduction. Modern ASR systems based on self-supervised acoustic pretraining and CTC fine-tuning achieve strong performance on benchmarks dominated by native speech [1, 2, 3]. However, performance degrades substantially for non-native speech, particularly in low-resource or globally deployed settings, due to systematic pronunciation variability that ...

-

[2]

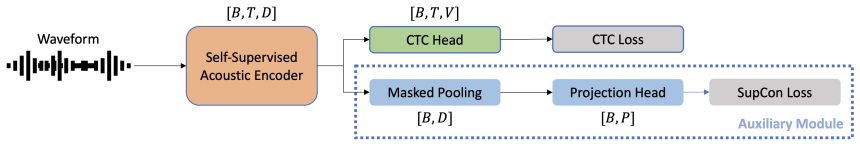

Problem Formulation. As illustrated in Figure 1, let $D = \{(x_i, y_i)\}_{i=1}^{N}$ denote a training dataset, where $x_i$ is a raw speech waveform and $y_i$ is the corresponding transcript

Methodology 2.1. Problem Formulation. As illustrated in Figure 1, let $D = \{(x_i, y_i)\}_{i=1}^{N}$ denote a training dataset, where $x_i$ is a raw speech waveform and $y_i$ is the corresponding transcript. Given an utterance $x_i$, a self-supervised pretrained acoustic encoder produces frame-level representations that are shared by the ASR and auxiliary contrastive obj...

-

[3]

Datasets We conduct experiments on the L2-ARCTIC [4], a widely used benchmark for non-native and multi-accent ASR

Experimental Setting 3.1. Datasets We conduct experiments on the L2-ARCTIC [4], a widely used benchmark for non-native and multi-accent ASR. The dataset consists of English speech from non-native speakers across six L1 backgrounds: Arabic, Mandarin, Hindi, Korean, Spanish, and Vietnamese. Each accent group includes four speakers (24 speakers in total), wi...

-

[4]

Main Results Table 2 reports word error rate (WER) on the L2-ARCTIC benchmark under unseen-transcript (UT) and unseen-accent (UA) evaluation settings

Results 4.1. Main Results. Table 2 reports word error rate (WER) on the L2-ARCTIC benchmark under unseen-transcript (UT) and unseen-accent (UA) evaluation settings. All results are obtained using identical CTC decoding with a 4-gram language model. The proposed supervised contrastive regularization consistently improves recognition performance over s...

-

[5]

Conclusion. This paper demonstrates that supervised contrastive learning is an effective utterance-level regularizer for ASR fine-tuning. Without modifying model architectures or pretraining procedures, the proposed approach improves accent robustness, stabilizes encoder representations, and yields consistent WER reductions across multiple self-super...

-

[6]

Acknowledgments. This research is supported by the National Research Foundation, Singapore, and the Civil Aviation Authority of Singapore, under the Aviation Transformation Programme. Any opinions, findings and conclusions or recommendations expressed in this material are those of the author(s) and do not reflect the views of National Research Foundation...

-

[7]

After using this tool, the author(s) reviewed and edited the content as needed and take(s) full responsibility for the content of the published article

Generative AI Use Disclosure During the preparation of this manuscript, the author(s) used ChatGPT to check grammar, spelling, and syntax errors. After using this tool, the author(s) reviewed and edited the content as needed and take(s) full responsibility for the content of the published article

-

[8]

wav2vec 2.0: A framework for self-supervised learning of speech representations,

A. Baevski, Y. Zhou, A. Mohamed, and M. Auli, “wav2vec 2.0: A framework for self-supervised learning of speech representations,” Advances in neural information processing systems, vol. 33, pp. 12449–12460, 2020

2020

-

[9]

Wavlm: Large-scale self-supervised pre-training for full stack speech processing,

S. Chen, C. Wang, Z. Chen, Y. Wu, S. Liu, Z. Chen, J. Li, N. Kanda, T. Yoshioka, X. Xiao et al., “Wavlm: Large-scale self-supervised pre-training for full stack speech processing,” IEEE Journal of Selected Topics in Signal Processing, vol. 16, no. 6, pp. 1505–1518, 2022

2022

-

[10]

Robust speech recognition via large-scale weak supervision,

A. Radford, J. W. Kim, T. Xu, G. Brockman, C. McLeavey, and I. Sutskever, “Robust speech recognition via large-scale weak supervision,” in International conference on machine learning. PMLR, 2023, pp. 28492–28518

2023

-

[11]

L2-arctic: A non-native english speech corpus,

G. Zhao, S. Sonsaat, A. Silpachai, I. Lucic, E. Chukharev-Hudilainen, J. Levis, and R. Gutierrez-Osuna, “L2-arctic: A non-native english speech corpus,” in Proc. Interspeech, 2018, pp. 2783–2787. [Online]. Available: http://dx.doi.org/10.21437/Interspeech.2018-1110

2018

-

[12]

End-to-end accented speech recognition

T. Viglino, P. Motlicek, and M. Cernak, “End-to-end accented speech recognition,” in Interspeech, 2019, pp. 2140–2144

2019

-

[13]

Joint training framework for accent and speech recognition based on conformer low-rank adaptation,

X. Zhuang, Y. Qian, S. Xu, and M. Wang, “Joint training framework for accent and speech recognition based on conformer low-rank adaptation,” in ICASSP 2025-2025 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2025, pp. 1–5

2025

-

[14]

Mixture of lora experts for low-resourced multi-accent automatic speech recognition,

R. Bagat, I. Illina, and E. Vincent, “Mixture of lora experts for low-resourced multi-accent automatic speech recognition,” in Interspeech, 2025, pp. 1143–1147

2025

-

[15]

Improving self-supervised pre-training using accent-specific codebooks,

D. Prabhu, A. Gupta, O. Nitsure, P. Jyothi, and S. Ganapathy, “Improving self-supervised pre-training using accent-specific codebooks,” in Interspeech, 2024, pp. 2310–2314

2024

-

[16]

End-to-end multi-accent speech recognition with unsupervised accent modelling,

S. Li, B. Ouyang, D. Liao, S. Xia, L. Li, and Q. Hong, “End-to-end multi-accent speech recognition with unsupervised accent modelling,” in ICASSP 2021-2021 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2021, pp. 6418–6422

2021

-

[17]

Unsupervised end-to-end accented speech recognition under low-resource conditions,

L. Li, Y. Li, D. Xu, and Y. Long, “Unsupervised end-to-end accented speech recognition under low-resource conditions,” IEEE Transactions on Audio, Speech and Language Processing, 2025

2025

-

[18]

Improving accented speech recognition using data augmentation based on unsupervised text-to-speech synthesis,

C.-T. Do, S. Imai, R. Doddipatla, and T. Hain, “Improving accented speech recognition using data augmentation based on unsupervised text-to-speech synthesis,” in 2024 32nd European Signal Processing Conference (EUSIPCO). IEEE, 2024, pp. 136–140

2024

-

[19]

Supervised contrastive learning,

P. Khosla, P. Teterwak, C. Wang, A. Sarna, Y. Tian, P. Isola, A. Maschinot, C. Liu, and D. Krishnan, “Supervised contrastive learning,” Advances in neural information processing systems, vol. 33, pp. 18661–18673, 2020

2020

-

[20]

Supervised contrastive learning for pre-trained language model fine-tuning,

B. Gunel, J. Du, A. Conneau, and V. Stoyanov, “Supervised contrastive learning for pre-trained language model fine-tuning,” arXiv, vol. abs/2011.01403, 2020

-

[21]

Supervised contrastive learning for accented speech recognition,

T. Han, H. Huang, Z. Yang, and W. Han, “Supervised contrastive learning for accented speech recognition,” arXiv, vol. abs/2107.00921, 2021

-

[22]

Scala: Supervised contrastive learning for end-to-end automatic speech recognition,

L. Fu, X. Li, R. Wang, Z. Zhang, Y. Wu, X. He, and B. Zhou, “Scala: Supervised contrastive learning for end-to-end automatic speech recognition,” in Interspeech, 2022, pp. 1006–1010

2022

-

[23]

Clustering-based hard negative sampling for supervised contrastive speaker verification,

P. Masztalski, M. Romaniuk, J. Żak, M. Matuszewski, and K. Kowalczyk, “Clustering-based hard negative sampling for supervised contrastive speaker verification,” in Interspeech, 2025, pp. 3698–3702

2025

-

[24]

On the Predictive Power of Representation Dispersion in Language Models

Y. Li, M. Li, K. Livescu, and J. Zhou, “On the predictive power of representation dispersion in language models,” arXiv, vol. abs/2506.24106, 2025
