
Stage-adaptive audio diffusion modeling

Pith reviewed 2026-05-08 17:16 UTC · model grok-4.3

The pith

Audio diffusion models train more efficiently by adapting guidance, timestep sampling, and regularization to the current training stage.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The authors show that a progress-based regime variable, derived from the training-time slope of an SSL-space discrepancy, can drive dynamic adjustment of three training components: SSL guidance is decayed, timestep sampling is adapted, and structure-aware regularization is activated at the appropriate stage. In both evaluated settings, this yields improved training behavior and metric gains over static approaches.

What carries the argument

The progress-based regime variable, derived from the slope of the SSL-space discrepancy. It detects the shift from semantic acquisition to refinement and controls when decayed guidance, adaptive sampling, and regularization are active.
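The sketch below shows one way such a regime variable could be computed. It assumes the SSL-space discrepancy is logged at regular training steps. Several choices are hypothetical illustrations, not the paper's definition:

- the least-squares slope over a sliding window;
- normalization by the slope of the first window;
- the function names `window_slope` and `regime_variable`.

```python
import numpy as np


def window_slope(steps, values):
    """Least-squares slope of `values` against `steps` (discrepancy per step)."""
    # np.polyfit with deg=1 returns the coefficients [slope, intercept].
    slope, _intercept = np.polyfit(np.asarray(steps, dtype=float),
                                   np.asarray(values, dtype=float), deg=1)
    return float(slope)


def regime_variable(steps, discrepancy, window=5, eps=1e-12):
    """Hypothetical regime variable r in [0, 1] from the SSL-discrepancy slope.

    r stays near 0 while the discrepancy still falls as steeply as in the
    first `window` logged points (semantic acquisition). It approaches 1 as
    the recent slope flattens (refinement). The normalization is an assumption.
    """
    if len(steps) < 2 * window:
        return 0.0  # too little history: treat as early training
    ref = abs(window_slope(steps[:window], discrepancy[:window]))
    cur = abs(window_slope(steps[-window:], discrepancy[-window:]))
    return float(np.clip(1.0 - cur / (ref + eps), 0.0, 1.0))


# Usage with a synthetic, exponentially decaying discrepancy (illustrative data).
steps = np.arange(0, 10_001, 500)
disc = np.exp(-steps / 2000.0)
print(regime_variable(steps[:6], disc[:6]))    # early: not enough history yet
print(regime_variable(steps[:10], disc[:10]))  # mid-training
print(regime_variable(steps, disc))            # late: curve has flattened
```

With a discrepancy that decays like exp(-t/2000), the late-training value of r approaches 1. The curve has flattened relative to its initial descent.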

If this is right

- Decayed SSL guidance in early training supports semantic bootstrapping without later interference.

- Self-adaptive timestep sampling aligns optimization emphasis with the current learning regime.

- Structure-aware regularization engages once convergent grouping appears in parameter space.

- Together these produce faster convergence and higher scores on primary generation and spectral metrics.

- Treating guidance, sampling, and regularization as stage-dependent yields better results than holding them fixed.
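The three stage-dependent mechanisms can be sketched as functions of a regime variable r in [0, 1]. Every component here is an assumption for illustration:

- the quadratic guidance decay;
- the logit-normal timestep sampler, whose mean slides from high-noise to low-noise timesteps;
- the threshold `tau`;
- the boolean `grouping_converged`, which stands in for the paper's parameter-space grouping test (not reproduced here).

```python
import numpy as np


def ssl_guidance_weight(r, lam0=0.5, power=2.0):
    """Hypothetical decayed SSL-guidance weight: lam0 at r = 0, zero at r = 1."""
    return lam0 * (1.0 - r) ** power


def sample_timesteps(r, n, rng, mu_early=0.8, mu_late=-0.8, scale=1.0):
    """Hypothetical regime-adaptive logit-normal timestep sampler.

    Assumed convention: t in (0, 1), where larger t means more noise. The
    mean of the underlying normal slides linearly from `mu_early` (noisier
    timesteps, coarse structure) to `mu_late` (cleaner timesteps, detail).
    """
    mu = (1.0 - r) * mu_early + r * mu_late
    z = rng.normal(loc=mu, scale=scale, size=n)
    return 1.0 / (1.0 + np.exp(-z))  # logistic map onto (0, 1)


def structure_reg_active(r, grouping_converged, tau=0.5):
    """Hypothetical gate: on only in the refinement regime (r >= tau) and once
    an external parameter-space grouping check reports convergence."""
    return bool(r >= tau and grouping_converged)


def total_loss(diff_loss, ssl_loss, struct_loss, r, grouping_converged,
               mu_struct=0.1):
    """Combine the diffusion loss with the two stage-dependent extra terms."""
    loss = diff_loss + ssl_guidance_weight(r) * ssl_loss
    if structure_reg_active(r, grouping_converged):
        loss += mu_struct * struct_loss
    return loss


# Usage: the same per-step losses weighted early vs. late in training.
rng = np.random.default_rng(0)
print(sample_timesteps(0.1, 5, rng), sample_timesteps(0.9, 5, rng))
print(total_loss(1.0, 1.0, 1.0, r=0.1, grouping_converged=False))
print(total_loss(1.0, 1.0, 1.0, r=0.9, grouping_converged=True))
```

Tying all three terms to one scalar r means a single monitoring signal drives the whole schedule. Holding r fixed recovers a static recipe: constant guidance weight, constant sampler, and a constant gate.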

Where Pith is reading between the lines

- The same regime-tracking idea could be tested on diffusion models for images or video where analogous semantic-to-detail transitions occur.

- A lighter proxy for the SSL discrepancy might eventually replace the external model used for monitoring.

- If thresholds prove stable across datasets, the method could support shorter overall training schedules while keeping quality.

- Extending the approach to additional audio tasks would test how general the detected stage boundaries are.

Load-bearing premise

The slope of the SSL-space discrepancy reliably tracks the shift from semantic acquisition to refinement so that activating the three mechanisms at slope-derived thresholds produces stable additive gains.

What would settle it

If replacing the slope-derived activation thresholds with randomly chosen ones preserves the reported gains in convergence and metrics, the claim that the regime variable drives the improvement would be falsified. If the gains disappear, the claim is supported.
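Below is a sketch of how this test might be run, under stated assumptions. It draws random activation steps for a set of control runs. It then asks what fraction of the controls match or beat the slope-driven run on a lower-is-better metric. The helper names, the uniform draw over 5% to 95% of training, and the +1-smoothed empirical p-value are illustrative choices, not a protocol from the paper.

```python
import numpy as np


def random_threshold_controls(total_steps, n_controls, rng,
                              min_frac=0.05, max_frac=0.95):
    """Draw one random activation step per control run, uniformly within
    [min_frac, max_frac) of training (hypothetical bounds)."""
    lo, hi = int(min_frac * total_steps), int(max_frac * total_steps)
    return rng.integers(lo, hi, size=n_controls)


def empirical_p_value(adaptive_score, control_scores, lower_is_better=True):
    """+1-smoothed fraction of controls that match or beat the adaptive run.

    Small values mean random thresholds rarely do as well as the slope-derived
    ones. Values near 1 mean arbitrary thresholds do about as well, i.e. the
    regime variable adds little.
    """
    control_scores = np.asarray(control_scores, dtype=float)
    if lower_is_better:
        hits = int(np.sum(control_scores <= adaptive_score))
    else:
        hits = int(np.sum(control_scores >= adaptive_score))
    return (hits + 1) / (len(control_scores) + 1)


rng = np.random.default_rng(0)
print(random_threshold_controls(100_000, 5, rng))
# Hypothetical lower-is-better scores, for illustration only.
print(empirical_p_value(2.1, [2.4, 2.6, 2.2, 2.9, 2.3]))  # (0 + 1) / (5 + 1) = 1/6
```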


Original abstract

Recent progress in diffusion-based audio generation and restoration has substantially improved performance across heterogeneous conditioning regimes, including text-conditioned audio generation and audio-conditioned super-resolution. However, training audio diffusion models remains computationally expensive, and most existing pipelines still rely on static optimization recipes that treat the relative importance of training signals as fixed throughout learning. In this work, we argue that a major source of inefficiency lies in the evolving balance between semantic acquisition and generation-oriented refinement. Early training places stronger emphasis on acquiring condition-aligned semantic structure and coarse global organization, whereas later training increasingly emphasizes temporal consistency, perceptual fidelity, and fine-detail refinement. To characterize this evolving balance, we introduce a progress-based regime variable derived from the training-time slope of an SSL-space discrepancy, which measures semantic progress during training. Based on this signal, we develop three complementary stage-aware mechanisms: decayed SSL guidance for early semantic bootstrapping, self-adaptive timestep sampling driven by the regime variable, and structure-aware regularization activated from convergent grouped organization in parameter space. We evaluate these mechanisms on text-conditioned audio generation and audio-conditioned super-resolution. Across both settings, the proposed stage-aware strategies improve convergence behavior and yield gains on the primary generation and spectral reconstruction metrics over standard static baselines. These results support the view that efficient audio diffusion training can benefit from treating external guidance, internal organization, and optimization emphasis as stage-dependent components rather than fixed ingredients.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims that audio diffusion training can be made more efficient by deriving a progress-based regime variable from the slope of an SSL-space discrepancy during training, then using this signal to activate three stage-aware mechanisms—decayed SSL guidance (early), self-adaptive timestep sampling, and structure-aware regularization (later)—yielding improved convergence and gains on generation and spectral reconstruction metrics for both text-conditioned audio generation and audio-conditioned super-resolution relative to static baselines.

Significance. If the empirical improvements hold with proper statistical support, the work offers a plausible route toward dynamic, progress-dependent training schedules that could reduce compute costs while improving quality in audio generative models. The data-driven regime variable is a constructive alternative to hand-tuned schedules, and the three complementary mechanisms address distinct aspects of the claimed semantic-to-refinement transition.

major comments (3)

- [Abstract] The central claim of improved convergence and metric gains is stated without any numerical values, error bars, ablation tables, or details on how regime-variable thresholds were selected or validated. This leaves the magnitude and reliability of the reported benefits unassessable from the summary alone.

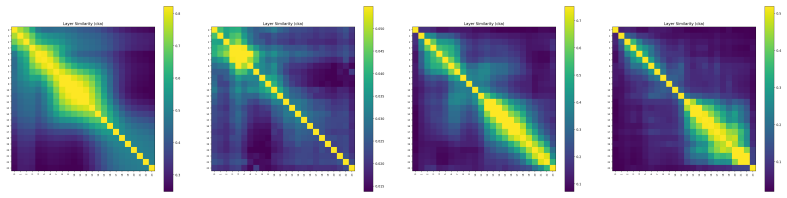

- [§3, regime variable definition] The progress signal is computed from the slope of a discrepancy in an external SSL embedding space rather than from the diffusion model's own loss landscape or parameter dynamics. Without correlation plots, ablations against alternatives (e.g., loss curvature or perceptual metrics), or a sensitivity analysis on the SSL backbone, it is unclear whether the slope reliably tracks the claimed transition from semantic acquisition to refinement or merely reflects dataset or SSL artifacts.

- [Experiments] The evaluation across the two conditioning regimes reports aggregate gains but supplies no per-mechanism ablations and no statistical significance tests. It also lacks a comparison of the full stage-adaptive recipe against each component applied in isolation or against random-threshold schedules. The additivity and necessity of the three mechanisms therefore remain unproven.
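The ablation requested here can be enumerated mechanically. This hypothetical sketch lists every on/off subset of the three mechanisms, from the static baseline to the full recipe, plus one random-threshold control. The configuration keys are invented names, not the paper's.

```python
from itertools import product

MECHANISMS = ("decayed_ssl_guidance", "adaptive_timesteps", "structure_reg")


def ablation_grid(include_random_threshold=True):
    """Enumerate hypothetical run configurations for the ablation study."""
    configs = []
    # Every on/off subset: 2**3 = 8 runs, from all-off (static baseline)
    # through single mechanisms in isolation up to the full recipe.
    for flags in product((False, True), repeat=len(MECHANISMS)):
        cfg = dict(zip(MECHANISMS, flags))
        cfg["thresholds"] = "slope" if any(flags) else "none"
        configs.append(cfg)
    if include_random_threshold:
        # Control: full recipe, but activation points drawn at random.
        control = {m: True for m in MECHANISMS}
        control["thresholds"] = "random"
        configs.append(control)
    return configs


for cfg in ablation_grid():
    print(cfg)
```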

minor comments (1)

- [§3] The notation for the regime variable and its slope threshold should be introduced with an explicit equation or pseudocode in the main text rather than only in the abstract description.

Simulated Author's Rebuttal

We thank the referee for their constructive feedback on our manuscript. We address each of the major comments below and describe the revisions we intend to incorporate to address the concerns raised.

Point-by-point responses

- Referee: [Abstract] The central claim of improved convergence and metric gains is stated without any numerical values, error bars, ablation tables, or details on how regime-variable thresholds were selected or validated. This leaves the magnitude and reliability of the reported benefits unassessable from the summary alone.

Authors: We agree that including quantitative results in the abstract would improve clarity and allow readers to better assess the claims. In the revised manuscript, we will update the abstract to include specific numerical improvements on key metrics, such as reductions in Fréchet audio distance (FAD) or spectral distance, reported with standard deviations. We will also reference the ablation studies presented in the experiments section and briefly describe how thresholds were selected on validation performance. This will make the benefits more concrete without exceeding the word limit. revision: yes

- Referee: [§3, regime variable definition] The progress signal is computed from the slope of a discrepancy in an external SSL embedding space rather than from the diffusion model's own loss landscape or parameter dynamics. Without correlation plots, ablations against alternatives (e.g., loss curvature or perceptual metrics), or a sensitivity analysis on the SSL backbone, it is unclear whether the slope reliably tracks the claimed transition from semantic acquisition to refinement or merely reflects dataset or SSL artifacts.

Authors: The use of an external SSL embedding space is intentional, as it provides a semantic signal decoupled from the diffusion model's training dynamics, which can be noisy early on. We will add correlation plots in the revised §3 showing the relationship between the regime variable and both the diffusion loss and perceptual metrics to validate the tracking of semantic progress. Additionally, we will include a sensitivity analysis across different SSL backbones and an ablation comparing the slope-based signal to alternatives like loss curvature. These additions will address concerns about reliability and potential artifacts. revision: partial

- Referee: [Experiments] The evaluation across the two conditioning regimes reports aggregate gains but supplies no per-mechanism ablations and no statistical significance tests. It also lacks a comparison of the full stage-adaptive recipe against each component applied in isolation or against random-threshold schedules. The additivity and necessity of the three mechanisms therefore remain unproven.

Authors: We acknowledge the value of detailed ablations and statistical analysis for establishing the contributions of each mechanism. In the revised experiments section, we will expand to include per-mechanism ablation results, showing performance when each is applied in isolation as well as in combination. We will also report statistical significance tests (e.g., paired t-tests or bootstrap confidence intervals) for the metric improvements and include comparisons against random-threshold schedules to demonstrate the benefit of the data-driven regime variable. These revisions will provide stronger evidence for the additivity and necessity of the proposed mechanisms. revision: yes
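For the promised significance testing, a paired percentile bootstrap is a common choice. The sketch assumes a per-clip metric, for example a per-clip spectral distance in super-resolution, scored for the baseline and the proposed model on the same clips. Set-level metrics such as Fréchet audio distance are not per-clip. They would instead require resampling the clip set and recomputing the metric. `paired_bootstrap_ci` is a hypothetical helper name.

```python
import numpy as np


def paired_bootstrap_ci(baseline, proposed, n_boot=10_000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for the mean per-clip difference (proposed - baseline).

    Clips are resampled with replacement, and each (baseline, proposed) pair
    stays together so that per-clip difficulty cancels out.
    """
    baseline = np.asarray(baseline, dtype=float)
    proposed = np.asarray(proposed, dtype=float)
    if baseline.shape != proposed.shape:
        raise ValueError("paired scores must have the same shape")
    diffs = proposed - baseline
    rng = np.random.default_rng(seed)
    # Each row of `idx` is one bootstrap resample of clip indices.
    idx = rng.integers(0, len(diffs), size=(n_boot, len(diffs)))
    boot_means = diffs[idx].mean(axis=1)
    lo, hi = np.quantile(boot_means, [alpha / 2, 1 - alpha / 2])
    return diffs.mean(), (lo, hi)


# Illustrative per-clip distances (lower is better); not results from the paper.
base = np.array([1.20, 1.05, 1.31, 0.98, 1.12, 1.25])
prop = np.array([1.10, 1.01, 1.22, 0.97, 1.05, 1.16])
mean_diff, (lo, hi) = paired_bootstrap_ci(base, prop)
print(mean_diff, lo, hi)
```

For a lower-is-better metric, an interval lying entirely below zero indicates that the proposed model improves on the baseline at the chosen level.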

Circularity Check

No significant circularity detected


The derivation introduces a regime variable computed from the slope of an external SSL-space discrepancy (independent of the diffusion model's own parameters or loss), then activates three mechanisms at thresholds derived from that signal. Reported gains on generation and spectral metrics are framed as empirical results from experiments rather than consequences forced by definition, fitting, or self-citation chains. No equations or steps reduce the central claim to its inputs by construction; the approach remains self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: the slope of the SSL-space discrepancy during training accurately reflects the evolving balance between semantic acquisition and generation-oriented refinement.

invented entities (1)

- Progress-based regime variable (no independent evidence)

