Guidelines for Designing AI Technologies to Support Adult Learning

Pith reviewed 2026-05-08 16:45 UTC · model grok-4.3

The pith

Analysis of real AI deployments produces 19 guidelines for adult learning technologies

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

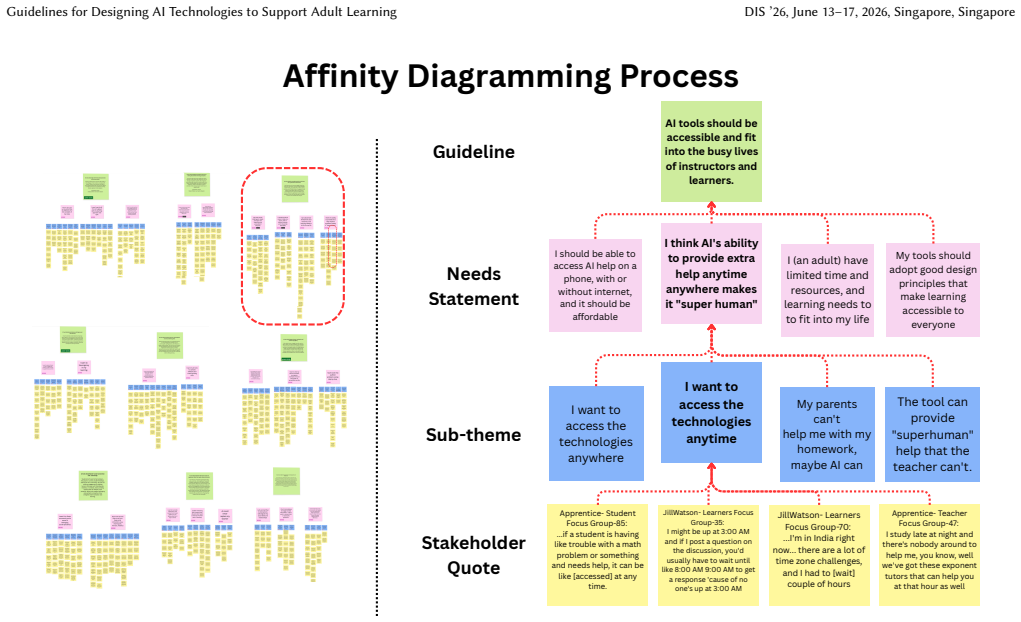

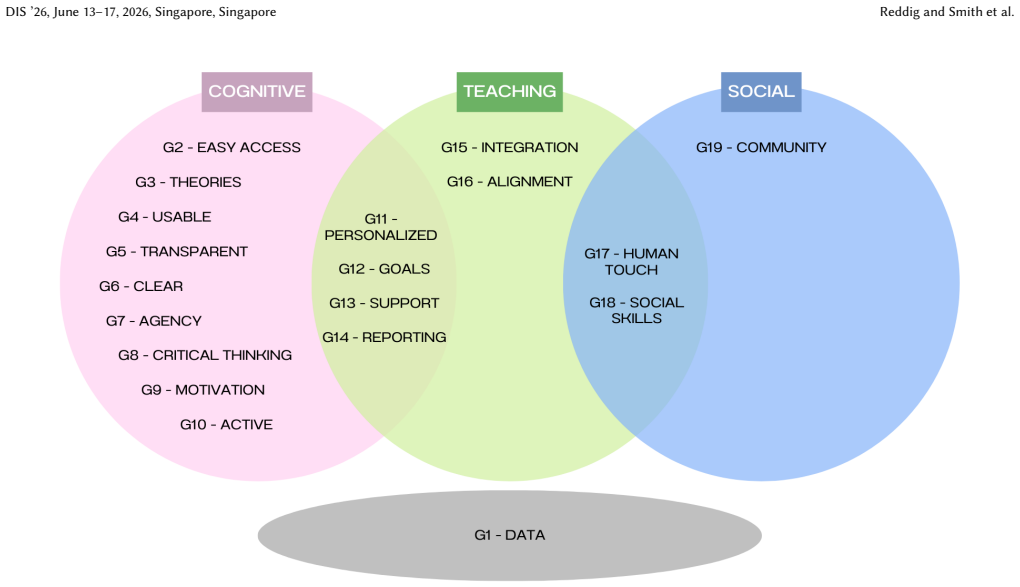

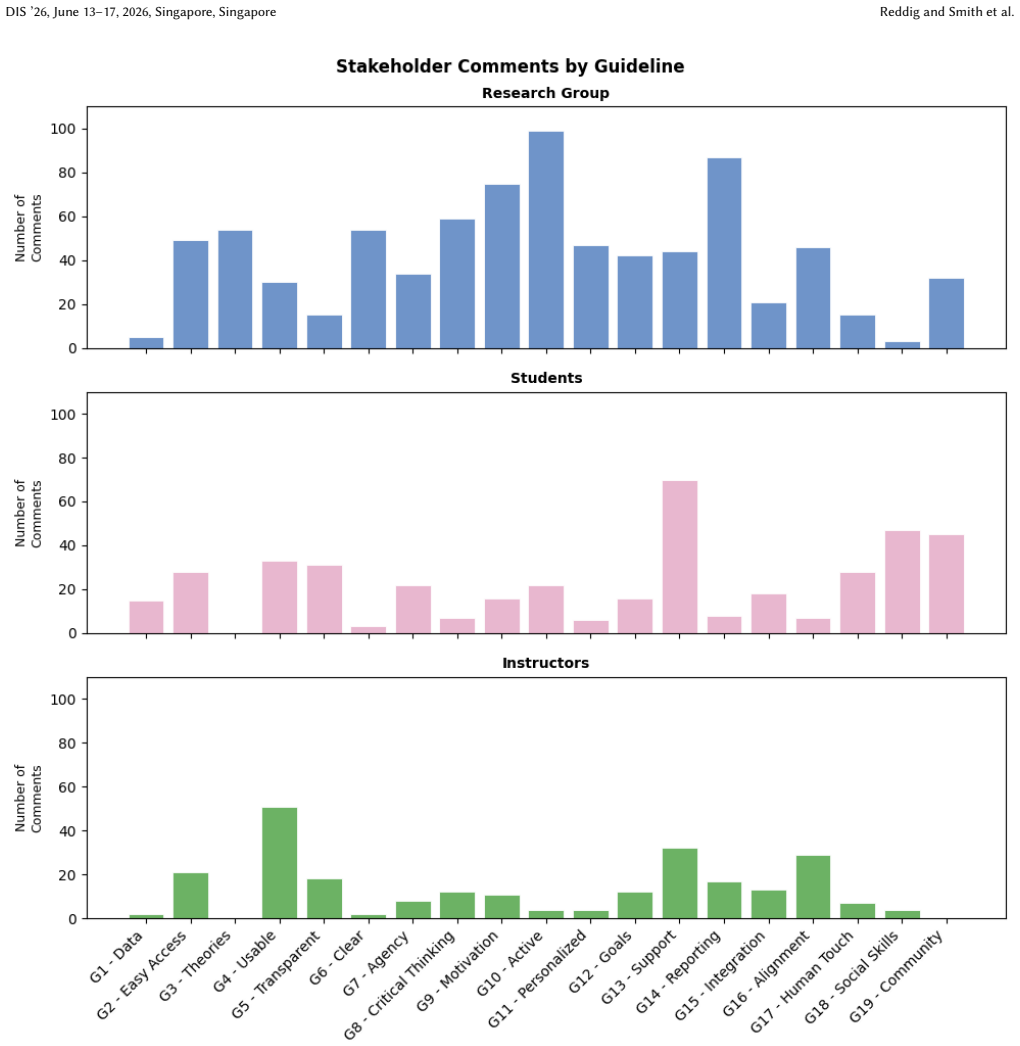

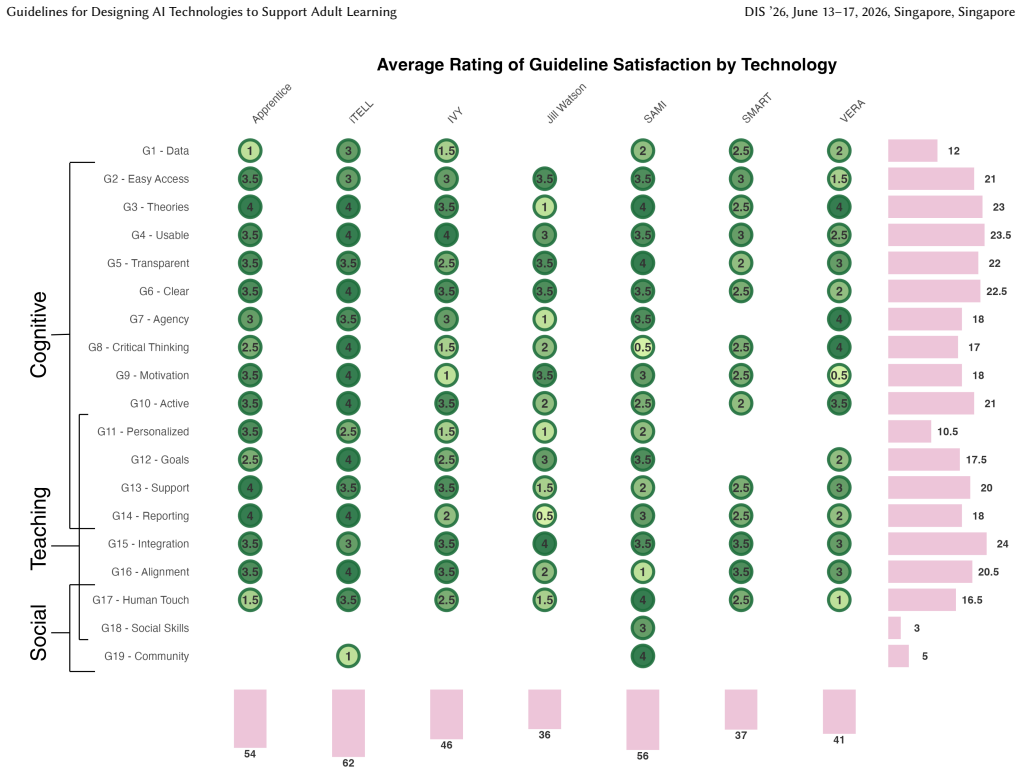

The paper establishes that reflexive thematic analysis of longitudinal deployment data from AI-supported adult learning systems can identify recurring challenges, which are then synthesized into a set of 19 design guidelines. These guidelines are demonstrated to be useful through heuristic evaluation of the systems, and a guideline exploration tool is presented to link the guidelines to stakeholder statements.

What carries the argument

Reflexive thematic analysis of deployment data to derive design guidelines for AI in adult learning

If this is right

- The 19 guidelines should inform the design of future AI-supported adult learning technologies.

- Heuristic evaluation provides a way to assess existing systems against the guidelines.

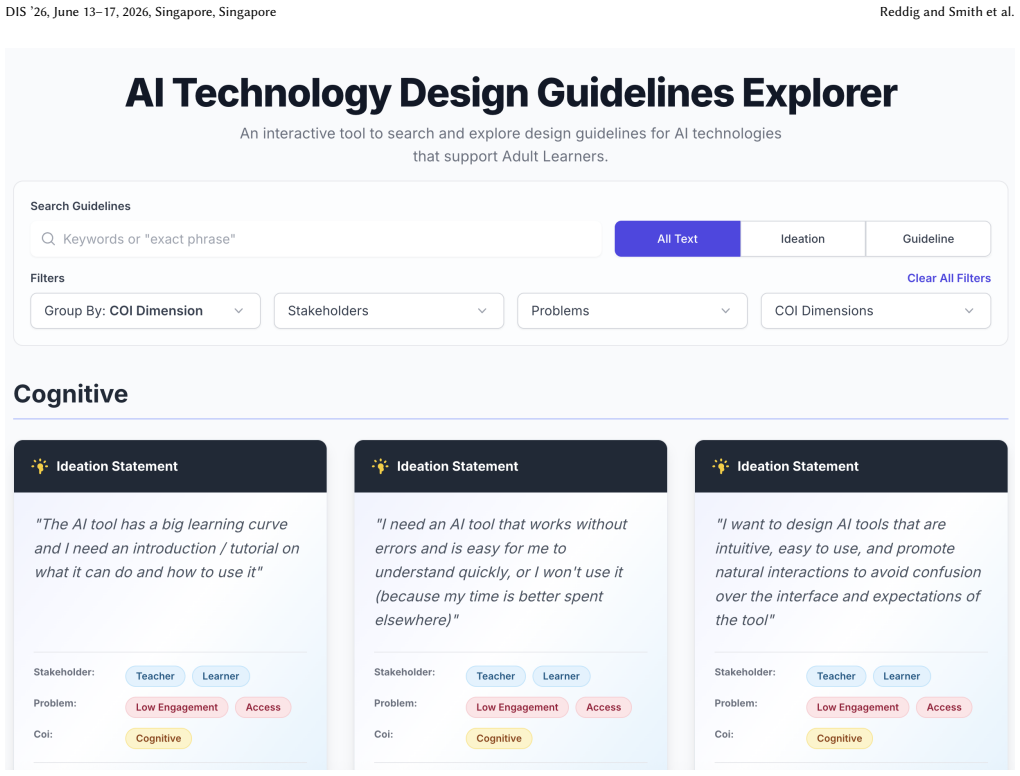

- The guideline exploration tool can assist in generating new technology ideas based on stakeholder needs.

- Adult learners benefit when AI tools account for their distinct constraints compared to younger students.

Where Pith is reading between the lines

- The guidelines may require testing and refinement when used in adult learning contexts different from the national institute studied.

- Following these guidelines could improve learner engagement and completion rates in adult education programs.

- Similar analysis methods could generate domain-specific guidelines for AI in other educational or training settings.

Load-bearing premise

The patterns of challenges found in the authors' own AI systems are general enough to guide design in other adult learning settings and technologies.

What would settle it

Deploying AI learning tools in other adult education programs that follow the 19 guidelines but still encounter significant unaddressed problems would indicate the guidelines are not broadly applicable.

Original abstract

AI-powered educational technologies have demonstrated measurable benefits for learners, but their design and evaluation have largely centered on K-12 contexts. As a result, many AI-supported learning systems remain poorly aligned with the needs, constraints, and goals of adult learners. To better understand how AI systems function in adult education, this paper examines the deployment of several AI learning technologies developed within a multidisciplinary, national research institute in the United States focused on adult learning and online education. Drawing on longitudinal deployment data, we conducted a reflexive thematic analysis to identify recurring challenges and design considerations across systems. These insights were synthesized into a set of 19 design guidelines intended to inform future AI-supported adult learning technologies. We demonstrate the utility of these guidelines through a heuristic evaluation of the deployed systems. Lastly, we present a guideline exploration tool that aids in the ideation of technologies by connecting the guidelines to stakeholder statements surfaced in the analysis process.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper reports on a reflexive thematic analysis of longitudinal deployment data from multiple AI-powered learning technologies developed at a US national research institute focused on adult and online education. Recurring challenges and design considerations are identified and synthesized into 19 guidelines for future AI-supported adult learning systems. Utility is demonstrated by applying these guidelines in a heuristic evaluation of the original deployed systems, and a guideline exploration tool is presented that links the guidelines to stakeholder statements from the analysis.

Significance. If the guidelines hold beyond the source context, the work would address a clear gap in AIED research, which has focused predominantly on K-12 settings, by offering adult-learner-specific design considerations grounded in actual deployment experience. The provision of a practical exploration tool that connects guidelines to real stakeholder statements is a concrete strength that could aid practitioners in ideation. The contribution is timely given increasing interest in lifelong and online learning technologies.

major comments (1)

- [Heuristic evaluation] Heuristic evaluation section: The utility of the 19 guidelines is demonstrated solely by re-applying them heuristically to the identical deployed systems that supplied the longitudinal data for the reflexive thematic analysis. This tests consistency with the authors' prior observations rather than independent generalizability, applicability to other adult-learning contexts, or value for novel AI designs. No external systems, prospective design exercises, or baseline comparisons are described, weakening support for the claim that the guidelines can inform future technologies.

minor comments (3)

- [Methods] Methods section: The reflexive thematic analysis description provides insufficient detail on the number of systems and data volume analyzed, the coding process (including number of analysts and any reliability checks), and how saturation was determined. These omissions make it difficult to assess the robustness and representativeness of the resulting 19 guidelines.

- [Results] Results and synthesis: The mapping from identified themes to the specific set of 19 guidelines is not transparent. A table or diagram showing how themes were consolidated into guidelines would improve clarity and allow readers to trace the derivation.

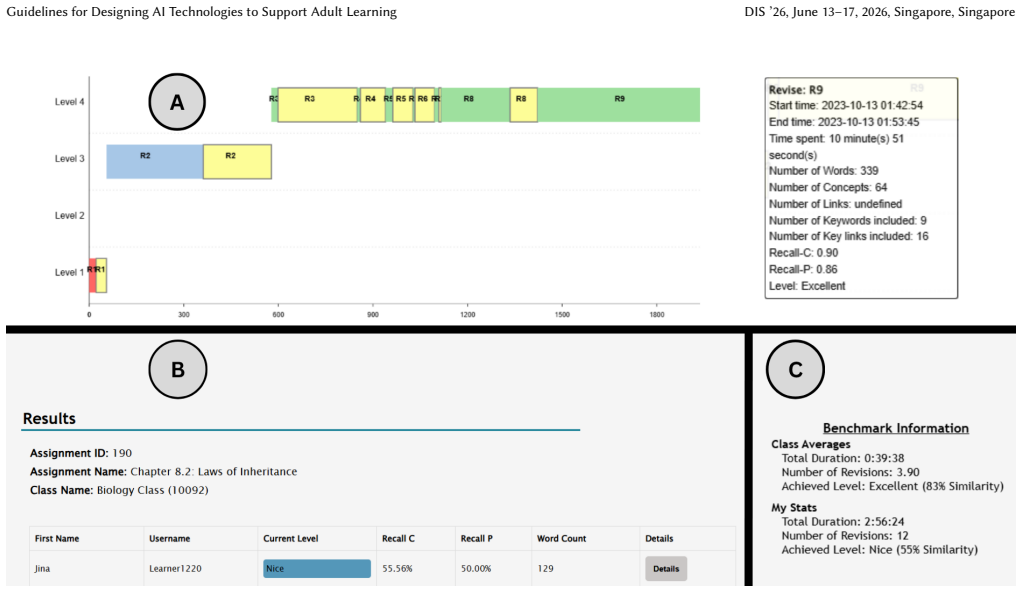

- [Guideline exploration tool] Guideline exploration tool: Additional information is needed on the tool's technical implementation, user interface, and any evaluation of its effectiveness in supporting ideation beyond the authors' own use.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed feedback. We address the major comment below and have revised the manuscript to better frame the scope and limitations of our demonstration.

Point-by-point responses

- Referee: Heuristic evaluation section: The utility of the 19 guidelines is demonstrated solely by re-applying them heuristically to the identical deployed systems that supplied the longitudinal data for the reflexive thematic analysis. This tests consistency with the authors' prior observations rather than independent generalizability, applicability to other adult-learning contexts, or value for novel AI designs. No external systems, prospective design exercises, or baseline comparisons are described, weakening support for the claim that the guidelines can inform future technologies.

  Authors: We acknowledge that the heuristic evaluation is performed on the same systems used to derive the guidelines via reflexive thematic analysis. This serves as an internal demonstration of how the guidelines can surface and organize design considerations from the original deployment data, rather than an independent test of generalizability. We agree this limits claims about applicability to entirely new contexts or novel designs. To address the concern, we have revised the manuscript as follows: (1) clarified in the heuristic evaluation section that the exercise illustrates practical utility and consistency within the source context; (2) added a dedicated Limitations subsection explicitly noting the absence of external validation and calling for future studies with other adult-learning systems and prospective design exercises; and (3) expanded the Discussion with concrete examples of how the guidelines, linked to stakeholder statements, could guide ideation for new AI technologies outside the original deployments. These changes better scope the contribution without overstating the validation provided.

  revision: yes

Circularity Check

Heuristic evaluation of the 19 guidelines performed only on the same deployed systems used to derive them via reflexive thematic analysis

specific steps

- other [Abstract]

  "Drawing on longitudinal deployment data, we conducted a reflexive thematic analysis to identify recurring challenges and design considerations across systems. These insights were synthesized into a set of 19 design guidelines intended to inform future AI-supported adult learning technologies. We demonstrate the utility of these guidelines through a heuristic evaluation of the deployed systems."

  The guidelines are synthesized directly from analysis of the authors' own deployed systems; the utility demonstration then re-applies the guidelines heuristically to those exact same systems. This reduces the 'demonstration' step to a consistency check on the input data rather than an independent test of usefulness for future technologies.

full rationale

The paper derives the guidelines from reflexive thematic analysis of longitudinal data on the authors' own deployed AI learning systems, then claims to demonstrate their utility by applying a heuristic evaluation to those identical systems. This creates a self-referential loop where the 'demonstration' largely confirms consistency with the original observations rather than providing independent evidence of generalizability or value for novel designs. No external systems, prospective applications, or baseline comparisons are described. However, the core synthesis of guidelines from qualitative data remains a valid contribution independent of the evaluation step, so the circularity is partial and does not render the entire paper equivalent to its inputs by construction.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption: Reflexive thematic analysis applied to longitudinal deployment data can reliably surface generalizable design challenges for AI adult learning systems.

Source paper: Reddig and Smith et al., DIS ’26, June 13–17, 2026, Singapore, Singapore
