UVMarvel: an Automated LLM-aided UVM Machine for Subsystem-level RTL Verification

Pith reviewed 2026-05-08 15:31 UTC · model grok-4.3

The pith

UVMarvel combines LLMs with an intermediate representation and a bus protocol library to build subsystem-level UVM testbenches automatically, reporting an average code coverage of 95.65 percent.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

UVMarvel is the first framework capable of automatically constructing subsystem-level UVM testbenches across mainstream bus protocols. It achieves an average code coverage of 95.65 percent by introducing an Intermediate Representation and a Bus Protocol Library to translate heterogeneous specifications into protocol-correct testbenches, and employs a Signal Tracker and a Verilog Patching Library to guide LLM-based stimuli refinement, reducing verification time from several human working days to a 4.5-hour automated execution.
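To put the time claim in concrete terms, a rough calculation can help. The sketch below assumes an 8-hour working day and treats the number of manual days as a free parameter, because the paper only says "several". The names `HOURS_PER_WORKDAY` and `speedup` are illustrative and do not come from the paper.

```python
AUTOMATED_RUN_HOURS = 4.5   # figure quoted in the core claim
HOURS_PER_WORKDAY = 8.0     # assumption: one human working day is 8 hours


def speedup(manual_days: float) -> float:
    """Ratio of manual effort (in hours) to the 4.5-hour automated run."""
    if manual_days <= 0:
        raise ValueError("manual_days must be positive")
    return manual_days * HOURS_PER_WORKDAY / AUTOMATED_RUN_HOURS


# "Several days" is not quantified in the paper, so a few values are tried.
for days in (2, 3, 5):
    print(f"{days} manual days -> {speedup(days):.1f}x less elapsed effort")
```

The comparison mixes human effort with machine wall-clock time, so the ratio is only an order-of-magnitude guide.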

What carries the argument

The central mechanism combines four components: an Intermediate Representation (IR) that translates heterogeneous specifications, a Bus Protocol Library that keeps the generated testbenches protocol-correct, and a Signal Tracker and a Verilog Patching Library that together guide LLM-based stimuli refinement toward high coverage.
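The paper's actual IR schema and library contents are not given on this page, so the following is only a minimal sketch of how an intermediate representation and a protocol library could interact. Every name here (`BusInterface`, `SubsystemIR`, `BUS_PROTOCOL_LIBRARY`, `protocol_gaps`) is hypothetical, and the signal sets are deliberately abbreviated.

```python
from dataclasses import dataclass, field

# Hypothetical, abbreviated protocol library: protocol name -> signals an
# interface is expected to expose. Real interfaces carry more signals.
BUS_PROTOCOL_LIBRARY = {
    "APB": {"PSEL", "PENABLE", "PWRITE", "PADDR", "PWDATA", "PRDATA", "PREADY"},
    "AXI-Lite": {"AWVALID", "AWREADY", "WVALID", "WREADY", "BVALID", "BREADY",
                 "ARVALID", "ARREADY", "RVALID", "RREADY"},
}


@dataclass
class BusInterface:
    name: str
    protocol: str
    ports: set = field(default_factory=set)


@dataclass
class SubsystemIR:
    name: str
    interfaces: list = field(default_factory=list)


def protocol_gaps(ir: SubsystemIR) -> dict:
    """Map each interface name to the library signals it does not expose."""
    gaps = {}
    for iface in ir.interfaces:
        if iface.protocol not in BUS_PROTOCOL_LIBRARY:
            raise ValueError(f"unknown protocol: {iface.protocol}")
        missing = sorted(BUS_PROTOCOL_LIBRARY[iface.protocol] - iface.ports)
        if missing:
            gaps[iface.name] = missing
    return gaps


# Illustrative subsystem whose APB port list lacks PREADY.
ir = SubsystemIR("timer_subsys", [
    BusInterface("cfg", "APB",
                 {"PSEL", "PENABLE", "PWRITE", "PADDR", "PWDATA", "PRDATA"}),
])
print(protocol_gaps(ir))  # {'cfg': ['PREADY']}
```

A check of this kind would run before any testbench code is generated, so that a specification mismatch is caught in the IR rather than in simulation.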

If this is right

- Subsystem-level RTL verification environments become generatable automatically for different bus protocols without repeated manual coding.

- Verification time for such subsystems drops from multiple human working days to a single automated run of 4.5 hours.

- Average code coverage of 95.65 percent is attainable through LLM-guided stimulus refinement without deep micro-architectural expertise per design.

- Heterogeneous specifications can be uniformly handled to produce reusable UVM structures across mainstream protocols.
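The LLM-guided stimulus refinement mentioned above can be pictured as a coverage feedback loop. The sketch below is a generic version of that idea, not the paper's algorithm: `simulate` stands in for an EDA simulation run and `propose` stands in for the LLM call, both replaced here by toy functions so the loop is runnable.

```python
from typing import Callable, List, Set, Tuple


def refine_stimuli(
    all_points: Set[str],
    simulate: Callable[[List[str]], Set[str]],
    propose: Callable[[Set[str]], List[str]],
    target: float = 0.95,
    max_rounds: int = 10,
) -> Tuple[List[str], float]:
    """Request new stimuli for uncovered points until the target is met."""
    if not all_points:
        raise ValueError("all_points must not be empty")
    covered: Set[str] = set()
    stimuli: List[str] = []
    for _ in range(max_rounds):
        if len(covered) / len(all_points) >= target:
            break
        new = propose(all_points - covered)  # an LLM call in a real flow
        if not new:
            break                            # nothing left to try
        stimuli.extend(new)
        covered |= simulate(new) & all_points
    return stimuli, len(covered) / len(all_points)


# Toy stand-ins: each stimulus "stim_<point>" hits exactly one coverage point,
# and the proposer targets at most two uncovered points per round.
points = {f"p{i}" for i in range(10)}
toy_simulate = lambda stims: {s.removeprefix("stim_") for s in stims}
toy_propose = lambda uncovered: [f"stim_{p}" for p in sorted(uncovered)[:2]]

stimuli, coverage = refine_stimuli(points, toy_simulate, toy_propose)
print(len(stimuli), coverage)  # 10 1.0
```

In a real flow the proposer would also receive signal-level hints about why a point is uncovered, which is the role the text attributes to the Signal Tracker.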

Where Pith is reading between the lines

- If the libraries generalize, the approach could shorten overall chip design cycles by allowing faster verification iterations.

- Similar guidance structures might apply to other verification flows such as coverage-driven closure or property generation.

- Integration into existing EDA tools could shift verification roles toward higher-level oversight rather than low-level coding.

Load-bearing premise

The framework assumes that the libraries and trackers together can guide LLMs to generate protocol-correct, high-coverage testbenches without substantial manual correction or expert oversight for each new design.

What would settle it

Running the framework on a new complex subsystem using a mainstream bus protocol and finding that the output testbench requires extensive manual fixes or delivers code coverage below 80 percent would falsify the central claim.
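The test described above can be written down as a simple pass/fail rule. This is a sketch under stated assumptions: the 80 percent floor comes from the text, while `max_fix_lines` is an invented stand-in for "extensive manual fixes", which the text does not quantify.

```python
COVERAGE_FLOOR_PCT = 80.0  # threshold named in the text


def claim_survives(coverage_pct: float, manual_fix_lines: int,
                   max_fix_lines: int = 50) -> bool:
    """Return True if a trial on a new subsystem does not falsify the claim.

    max_fix_lines is an assumed budget for hand-edited testbench lines.
    """
    if not 0.0 <= coverage_pct <= 100.0:
        raise ValueError("coverage_pct must be within 0..100")
    if manual_fix_lines < 0:
        raise ValueError("manual_fix_lines must be non-negative")
    return coverage_pct >= COVERAGE_FLOOR_PCT and manual_fix_lines <= max_fix_lines


print(claim_survives(95.65, 0))    # True
print(claim_survives(79.9, 0))     # False: coverage below the floor
print(claim_survives(90.0, 200))   # False: too many manual fixes
```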

Figures

read the original abstract

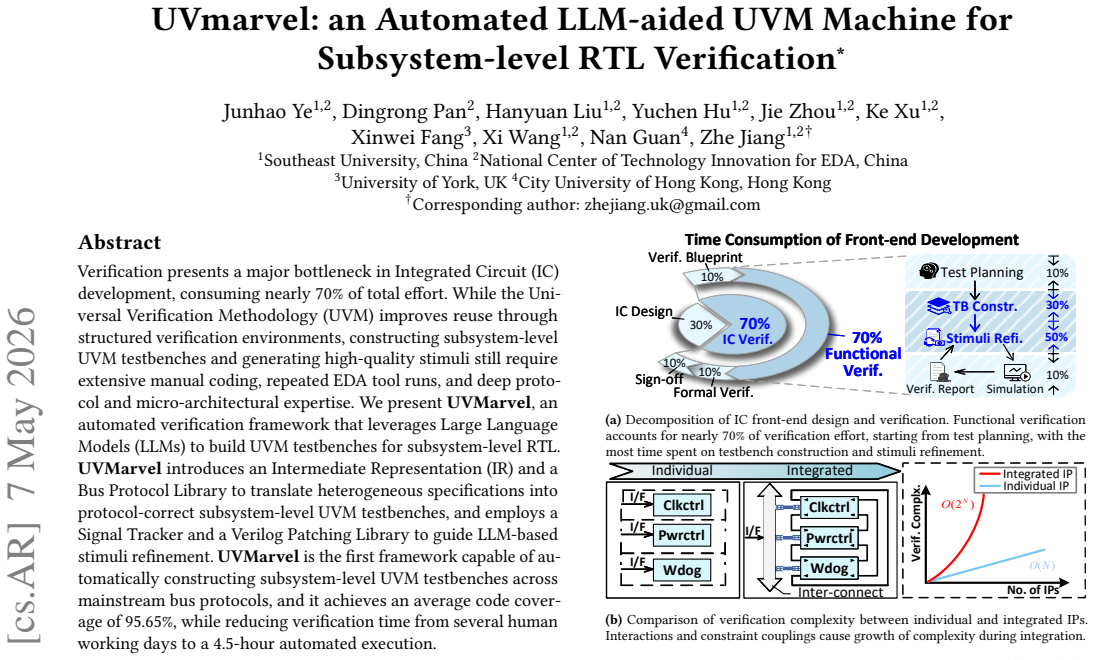

Verification presents a major bottleneck in Integrated Circuit (IC) development, consuming nearly 70% of total effort. While the Universal Verification Methodology (UVM) improves reuse through structured verification environments, constructing subsystem-level UVM testbenches and generating high-quality stimuli still require extensive manual coding, repeated EDA tool runs, and deep protocol and micro-architectural expertise. We present UVMarvel, an automated verification framework that leverages Large Language Models (LLMs) to build UVM testbenches for subsystem-level RTL. UVMarvel introduces an Intermediate Representation (IR) and a Bus Protocol Library to translate heterogeneous specifications into protocol-correct subsystem-level UVM testbenches, and employs a Signal Tracker and a Verilog Patching Library to guide LLM-based stimuli refinement. UVMarvel is the first framework capable of automatically constructing subsystem-level UVM testbenches across mainstream bus protocols, and it achieves an average code coverage of 95.65%, while reducing verification time from several human working days to a 4.5-hour automated execution.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper presents UVMarvel, an automated LLM-based framework for generating subsystem-level UVM testbenches from RTL designs. It introduces an Intermediate Representation (IR) and Bus Protocol Library to translate specifications for mainstream protocols (AXI, APB, AXI-Lite), plus a Signal Tracker and Verilog Patching Library to refine LLM-generated stimuli. The central claim is that this is the first such end-to-end automated system, delivering 95.65% average code coverage while reducing verification effort from multiple human days to a 4.5-hour run.

Significance. If the reported coverage and runtime results prove reproducible across a broader set of designs, the work could meaningfully address the verification bottleneck (cited as ~70% of IC effort) by reducing manual UVM coding and protocol expertise requirements. The engineering integration of IR, protocol libraries, and patching mechanisms offers a concrete, extensible template for LLM-assisted hardware verification that could accelerate subsystem-level sign-off in practice.

major comments (2)

- [Abstract and evaluation section] The abstract and evaluation section report 95.65% average code coverage and a 4.5-hour automated runtime but supply no details on the number or identity of evaluated RTL subsystems, the specific LLMs and prompting strategies used, baseline comparisons against manual UVM flows or prior tools, or any quantification of LLM failure modes and required manual corrections. These omissions make the performance claims impossible to assess for generalizability or robustness.

- [Section 3] Section 3 (pipeline description) presents the combination of IR, Bus Protocol Library, Signal Tracker, and Verilog Patching Library as sufficient to steer LLMs toward protocol-correct, high-coverage testbenches, yet provides no quantitative data on how often the LLM still produces incorrect protocol behavior or requires substantial human intervention for new designs outside the demonstrated AXI/APB/AXI-Lite cases.

minor comments (1)

- [Evaluation section] The manuscript would benefit from a dedicated table listing the exact designs, protocols, and coverage metrics per case to support the reported average.
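A per-design table also matters because an "average" can be computed in more than one way. The sketch below shows the difference between an unweighted mean of per-design percentages and a line-weighted mean. The rows are invented placeholders chosen to make the gap visible; they are not results from the paper.

```python
# Placeholder rows: (design, protocol, covered_lines, total_lines).
# These numbers are made up for illustration only.
rows = [
    ("design_a", "APB", 99, 100),
    ("design_b", "AXI", 800, 1000),
]


def coverage_summary(rows):
    """Return per-design percentages, the unweighted mean, and the weighted mean."""
    if not rows or any(total <= 0 for _, _, _, total in rows):
        raise ValueError("rows must be non-empty with positive totals")
    per_design = [(name, proto, 100.0 * hit / total)
                  for name, proto, hit, total in rows]
    unweighted = sum(pct for _, _, pct in per_design) / len(per_design)
    weighted = 100.0 * sum(r[2] for r in rows) / sum(r[3] for r in rows)
    return per_design, unweighted, weighted


per_design, unweighted, weighted = coverage_summary(rows)
for name, proto, pct in per_design:
    print(f"{name:10s} {proto:5s} {pct:6.2f}%")
print(f"unweighted mean: {unweighted:.2f}%")
print(f"line-weighted mean: {weighted:.2f}%")
```

With these placeholder rows the two averages differ by several points, which is why the per-case breakdown is needed to interpret a single headline figure.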

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our manuscript. We address each major comment below and will revise the paper to enhance the clarity and completeness of the reported results and methodology.

- Referee: [Abstract and evaluation section] The abstract and evaluation section report 95.65% average code coverage and a 4.5-hour automated runtime but supply no details on the number or identity of evaluated RTL subsystems, the specific LLMs and prompting strategies used, baseline comparisons against manual UVM flows or prior tools, or any quantification of LLM failure modes and required manual corrections. These omissions make the performance claims impossible to assess for generalizability or robustness.

  Authors: We agree that the abstract and evaluation section would benefit from greater specificity to support assessment of generalizability. In the revised manuscript, we will expand these sections to detail the number and identities of the evaluated RTL subsystems (including their protocol configurations and complexity), the specific LLMs and versions employed, the prompting strategies used, and quantitative baseline comparisons to manual UVM development in terms of time and coverage achieved. We will also add a table or subsection quantifying LLM failure modes, such as protocol violations or incomplete stimuli, along with the frequency and nature of required manual corrections based on our experimental records.

  Revision: yes

- Referee: [Section 3] Section 3 (pipeline description) presents the combination of IR, Bus Protocol Library, Signal Tracker, and Verilog Patching Library as sufficient to steer LLMs toward protocol-correct, high-coverage testbenches, yet provides no quantitative data on how often the LLM still produces incorrect protocol behavior or requires substantial human intervention for new designs outside the demonstrated AXI/APB/AXI-Lite cases.

  Authors: We acknowledge that Section 3 would be strengthened by quantitative evidence on robustness. We will revise the section to include data from our experiments on the frequency of incorrect protocol behavior generated by the LLM despite the IR, libraries, and trackers. This will encompass metrics on intervention rates for the demonstrated protocols. For new designs beyond AXI/APB/AXI-Lite, we will report additional case studies or extensions to quantify human intervention needs, highlighting both the framework's extensibility and any observed limitations.

  Revision: yes

Circularity Check

No significant circularity

The paper presents an engineering framework for LLM-assisted UVM testbench generation rather than a mathematical derivation chain. No equations, fitted parameters, or predictions that reduce to inputs by construction appear in the described pipeline (IR translation, Bus Protocol Library, Signal Tracker, Verilog Patching Library). Claims rest on empirical coverage results and runtime measurements from concrete AXI/APB/AXI-Lite examples; the components are introduced as design inputs, not derived outputs. No self-citation load-bearing steps or ansatz smuggling are present.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption: LLMs can generate syntactically and semantically correct UVM and Verilog code when provided with structured protocol information.

invented entities (4)

- Intermediate Representation (IR): no independent evidence

- Bus Protocol Library: no independent evidence

- Signal Tracker: no independent evidence

- Verilog Patching Library: no independent evidence

DAC 2026, July 2026, Long Beach, CA, USA. Authors: Junhao Ye, Dingrong Pan, Hanyuan Liu, Yuchen Hu, Jie Zhou, Ke Xu, Xinwei Fang, Xi Wang, Nan Guan, Zhe Jiang.
