Position: Embodied AI Requires a Privacy-Utility Trade-off

Pith reviewed 2026-05-08 17:53 UTC · model grok-4.3

The pith

Embodied AI systems create irreversible privacy risks when their stages are optimized independently, requiring privacy to be managed as a lifecycle-wide constraint.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

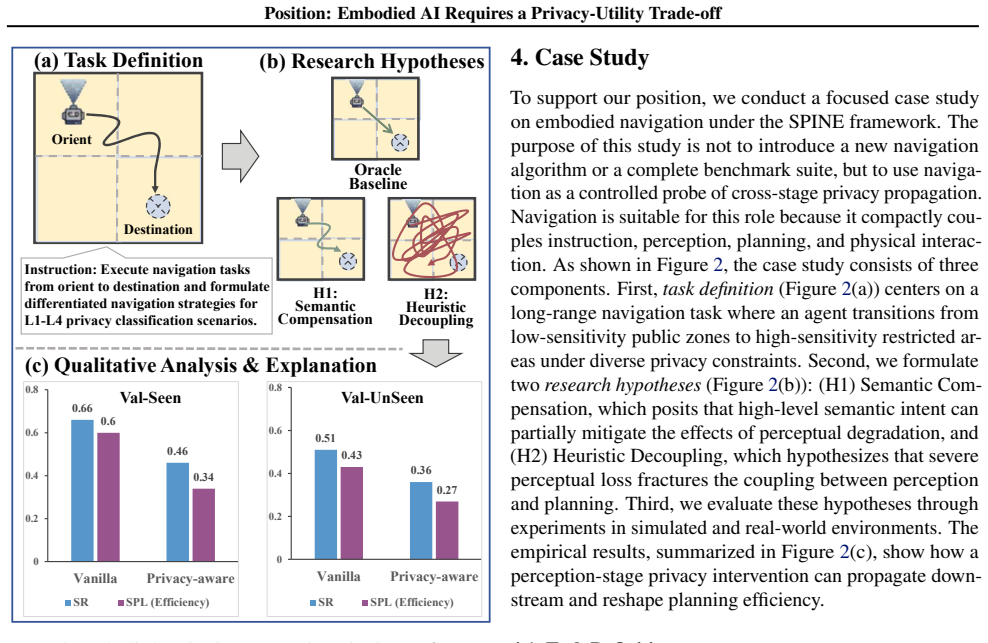

The paper claims that optimizing Embodied AI components independently creates a systemic privacy crisis in sensitive settings, and that privacy must therefore be treated as a life cycle-level architectural constraint rather than a stage-local feature. To support this, it proposes the SPINE framework, which decomposes the pipeline into stages, applies a multi-criterion privacy classification matrix, and treats privacy as a dynamic control signal governing cross-stage coupling. Preliminary simulation and real-world case studies illustrate how privacy constraints propagate downstream and reshape system behavior.

What carries the argument

The SPINE framework, which establishes a multi-criterion privacy classification matrix to orchestrate contextual sensitivity across EAI stage boundaries and uses privacy as a dynamic control signal.
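To make the cross-stage idea concrete, here is a minimal Python sketch. It is not the paper's implementation. The stage names come from the abstract, and the four criterion names are borrowed from the simulated rebuttal further down this page. The `Level` labels, the 0.4 and 0.7 thresholds, the max-based scoring in `classify`, and the "strictest level so far" rule in `propagate` are all illustrative assumptions.

```python
from dataclasses import dataclass
from enum import IntEnum

# The four stages named in the abstract, in pipeline order.
STAGES = ["instruction", "perception", "planning", "interaction"]


class Level(IntEnum):
    """Hypothetical privacy levels; higher means stricter handling."""
    PUBLIC = 0
    RESTRICTED = 1
    PRIVATE = 2


@dataclass(frozen=True)
class Scores:
    """Hypothetical per-stage criterion scores, each in [0, 1]."""
    sensitivity: float
    propagation_risk: float
    reversibility: float       # 1.0 means exposure is fully reversible
    context_dependency: float


def classify(s: Scores) -> Level:
    """Assumed rule: the worst single criterion decides the level."""
    risk = max(s.sensitivity, s.propagation_risk,
               1.0 - s.reversibility, s.context_dependency)
    if risk >= 0.7:
        return Level.PRIVATE
    if risk >= 0.4:
        return Level.RESTRICTED
    return Level.PUBLIC


def propagate(per_stage: dict) -> dict:
    """Carry the strictest level seen so far into every later stage."""
    signal = Level.PUBLIC
    out = {}
    for stage in STAGES:
        local = classify(per_stage[stage]) if stage in per_stage else Level.PUBLIC
        signal = max(signal, local)
        out[stage] = signal
    return out


scores = {
    "instruction": Scores(0.1, 0.1, 0.9, 0.1),
    "perception": Scores(0.8, 0.5, 0.2, 0.3),   # e.g. a camera frame of a bedroom
    "planning": Scores(0.1, 0.1, 1.0, 0.1),
}
result = propagate(scores)
for stage in STAGES:
    print(stage, result[stage].name)
```

In this toy run the planning stage would be rated `PUBLIC` if classified on its own, but it inherits `PRIVATE` from perception. That is one simple reading of "privacy as a control signal"; the paper may define the coupling differently.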

If this is right

- Privacy constraints propagate downstream to reshape system behavior in the EAI pipeline.

- Fragmented privacy patches applied to individual stages are insufficient for preventing systemic risks.

- In high-frequency domestic deployments, privacy leakage is often irreversible.

- Future research must target secure yet functional embodied AI systems that integrate privacy across the entire lifecycle.

Where Pith is reading between the lines

- Adopting SPINE could reduce the overall utility or performance of EAI systems as privacy constraints limit data sharing between stages.

- This approach might generalize to other AI systems that interact with physical environments, such as autonomous robots in public spaces.

- Developers of EAI might need new evaluation metrics that account for cumulative privacy exposure over time rather than per-module exposure.
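One way to see why cumulative exposure differs from a per-interaction figure is the sketch below. It assumes each interaction leaks independently with the same probability, which is a simplification chosen for illustration and not a model taken from the paper. The function name and the example numbers are hypothetical.

```python
def cumulative_exposure(p_per_interaction: float, n_interactions: int) -> float:
    """Probability of at least one leak across n independent interactions."""
    if not 0.0 <= p_per_interaction <= 1.0:
        raise ValueError("p_per_interaction must be in [0, 1]")
    if n_interactions < 0:
        raise ValueError("n_interactions must be non-negative")
    # Complement of "no leak in any of the n interactions".
    return 1.0 - (1.0 - p_per_interaction) ** n_interactions


# A 0.1% per-interaction leak rate looks small, but compounds with frequency.
for n in (1, 100, 1000):
    print(n, round(cumulative_exposure(0.001, n), 3))
```

Under these assumptions, a leak rate of 0.1% per interaction gives roughly a 63% chance of at least one leak after 1000 interactions, which is why a per-module or per-interaction metric can understate risk in frequent use.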

Load-bearing premise

That advancements in Embodied AI have been shown only in isolated stages without accounting for how their privacy implications couple together in frequent real-world use.

What would settle it

A demonstration of an Embodied AI system with independently optimized stages that shows no privacy leakage in high-frequency domestic deployments would challenge the claim.

Original abstract

Embodied AI (EAI) systems are rapidly transitioning from simulations into real-world domestic and other sensitive environments. However, recent EAI solutions have largely demonstrated advancements within isolated stages such as instruction, perception, planning and interaction, without considering their coupled privacy implications in high-frequency deployments where privacy leakage is often irreversible. This position paper argues that optimizing these components independently creates a systemic privacy crisis when deployed in sensitive settings, thereby advancing the position that privacy in EAI is a life cycle-level architectural constraint rather than a stage-local feature. To address these challenges, we propose Secure Privacy Integration in Next-generation Embodied AI (SPINE), a unified privacy-aware framework that treats privacy as a dynamic control signal governing cross-stage coupling throughout the entire EAI life cycle. SPINE decomposes the EAI pipeline into various stages and establishes a multi-criterion privacy classification matrix to orchestrate contextual sensitivity across stage boundaries. We conduct preliminary simulation and real-world case studies to conceptually validate how privacy constraints propagate downstream to reshape system behavior, illustrating the insufficiency of fragmented privacy patches and motivating future research directions into secure yet functional embodied AI systems. We detail the SPINE framework and case studies at https://github.com/rminshen03/EAI_Privacy_Position.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript is a position paper arguing that Embodied AI (EAI) systems advance by optimizing isolated stages (instruction, perception, planning, interaction) without accounting for coupled privacy implications; in high-frequency real-world deployments this produces a systemic, often irreversible privacy crisis. It advances the view that privacy must be treated as a life-cycle architectural constraint rather than a stage-local feature and proposes the SPINE framework, which decomposes the EAI pipeline and uses a multi-criterion privacy classification matrix to orchestrate contextual sensitivity as a dynamic control signal. Preliminary simulations and real-world case studies are offered to conceptually illustrate downstream propagation of privacy constraints and the inadequacy of fragmented patches.

Significance. If the central position is substantiated, the work could usefully redirect EAI research toward integrated privacy-utility architectures for domestic and sensitive environments. The SPINE matrix supplies a concrete organizing device that future systems could adopt. The preliminary studies provide an initial existence proof of cross-stage effects, but the absence of quantitative leakage metrics or controlled comparisons limits the immediate technical contribution.

major comments (3)

- [Abstract / Case Studies] Abstract and case-study description: the assertion that independent stage optimization produces an irreversible systemic crisis rests on conceptual validation only. No attack models, leakage metrics (mutual information, inference success rates), or head-to-head results are supplied showing that cross-stage orchestration outperforms stage-local patches while preserving utility.

- [SPINE Framework] SPINE framework description: the multi-criterion privacy classification matrix is introduced to govern cross-stage coupling, yet no formal definitions of the criteria, scoring procedure, or concrete orchestration rules across instruction-perception-planning-interaction boundaries are given, rendering the claim that privacy functions as a dynamic control signal difficult to evaluate or implement.

- [Introduction] Introduction / weakest assumption: the statement that recent EAI solutions have not considered coupled privacy implications in high-frequency deployments is asserted without citing specific prior systems or providing evidence that privacy leakage is tightly coupled across stages in a manner that renders local fixes insufficient.
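The two leakage metrics named in the first major comment can be estimated from paired samples. The following is a minimal sketch, assuming discrete labels for both the sensitive attribute and the observable stage output. The function names, the plug-in estimator, and the toy data are assumptions for illustration; the paper reports no such measurements.

```python
import math
from collections import Counter


def mutual_information(secrets, outputs) -> float:
    """Plug-in estimate of I(secret; output) in bits from paired samples."""
    n = len(secrets)
    if n == 0 or n != len(outputs):
        raise ValueError("need two non-empty sequences of equal length")
    joint = Counter(zip(secrets, outputs))
    p_s = Counter(secrets)
    p_o = Counter(outputs)
    mi = 0.0
    for (s, o), c in joint.items():
        # p(s,o) * log2( p(s,o) / (p(s) * p(o)) ), written with raw counts.
        mi += (c / n) * math.log2(c * n / (p_s[s] * p_o[o]))
    return mi


def inference_success_rate(secrets, outputs) -> float:
    """Accuracy of an attacker who guesses the most frequent secret per output."""
    if len(secrets) == 0 or len(secrets) != len(outputs):
        raise ValueError("need two non-empty sequences of equal length")
    best = {}
    for (o, s), c in Counter(zip(outputs, secrets)).items():
        best[o] = max(best.get(o, 0), c)
    return sum(best.values()) / len(secrets)


# Toy data: whether the resident is home, and the plan label a robot emits.
secret = ["home", "home", "away", "away"]
leaky_plan = ["quiet", "quiet", "vacuum", "vacuum"]   # plan reveals occupancy
blind_plan = ["quiet", "vacuum", "quiet", "vacuum"]   # plan independent of it

print(mutual_information(secret, leaky_plan), inference_success_rate(secret, leaky_plan))
print(mutual_information(secret, blind_plan), inference_success_rate(secret, blind_plan))
```

On the toy data the leaky plan carries one full bit about occupancy and lets the attacker guess correctly every time, while the independent plan carries zero bits and leaves the attacker at chance. A head-to-head comparison of the kind the comment asks for would report such numbers for stage-local patches and for cross-stage orchestration, alongside a task-utility score.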

minor comments (2)

- [Title] Title refers to a 'Privacy-Utility Trade-off' while the body emphasizes a privacy crisis; the manuscript would benefit from explicit discussion of how the SPINE matrix quantifies or balances utility loss.

- [Abstract] The GitHub repository is referenced for further details, but the manuscript should contain self-contained summaries of the simulation setup and case-study outcomes so that readers can assess the conceptual validation without external material.

Simulated Authors' Rebuttal

We thank the referee for the constructive feedback on our position paper. We respond to each major comment below, clarifying the conceptual scope of the work while committing to targeted revisions that improve rigor without shifting the paper from a position statement to an empirical study.


- Referee: [Abstract / Case Studies] Abstract and case-study description: the assertion that independent stage optimization produces an irreversible systemic crisis rests on conceptual validation only. No attack models, leakage metrics (mutual information, inference success rates), or head-to-head results are supplied showing that cross-stage orchestration outperforms stage-local patches while preserving utility.

  Authors: As a position paper, our objective is to articulate a systemic problem in current EAI practices and motivate a shift toward lifecycle privacy architectures, using preliminary simulations and case studies for illustration rather than exhaustive empirical validation. We agree that quantitative attack models and metrics are absent; the case studies serve to demonstrate downstream propagation conceptually. We will add a dedicated subsection outlining candidate quantitative metrics (e.g., cross-stage mutual information and inference success rates) and potential attack models for future work, while preserving the paper's focus on motivating such evaluations.

  Revision: partial

- Referee: [SPINE Framework] SPINE framework description: the multi-criterion privacy classification matrix is introduced to govern cross-stage coupling, yet no formal definitions of the criteria, scoring procedure, or concrete orchestration rules across instruction-perception-planning-interaction boundaries are given, rendering the claim that privacy functions as a dynamic control signal difficult to evaluate or implement.

  Authors: The SPINE framework is intentionally presented at a conceptual level to provide an organizing device for the community. We will revise the manuscript to supply formal definitions of the matrix criteria (sensitivity, propagation risk, reversibility, and context dependency), a high-level scoring procedure, and explicit orchestration rules for propagating the dynamic control signal across the four stages. Pseudocode and boundary examples will be added to the main text, with further implementation details referenced from the GitHub repository.

  Revision: yes

- Referee: [Introduction] Introduction / weakest assumption: the statement that recent EAI solutions have not considered coupled privacy implications in high-frequency deployments is asserted without citing specific prior systems or providing evidence that privacy leakage is tightly coupled across stages in a manner that renders local fixes insufficient.

  Authors: We will strengthen the introduction by citing concrete recent EAI systems that optimize stages independently (e.g., modular perception-planning pipelines in works such as RT-2 and PaLM-E derivatives) and by referencing prior studies on privacy propagation in multi-stage AI systems. The case studies will be explicitly linked to these citations to illustrate why stage-local patches are insufficient, thereby grounding the coupling claim more firmly.

  Revision: yes

Circularity Check

No circularity: position paper uses descriptive arguments and preliminary case studies without derivations or self-referential reductions


The paper is a position statement arguing that independent optimization of EAI stages creates systemic privacy risks, supported by conceptual observations and preliminary simulations rather than any equations, fitted parameters, or formal derivations. No load-bearing steps reduce to self-definitions, fitted inputs renamed as predictions, or self-citation chains; the SPINE framework is proposed as an architectural response without claiming uniqueness theorems or smuggling ansatzes. The central claim rests on external observations of current practices, making the reasoning self-contained and non-circular by the specified criteria.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption: privacy leakage is often irreversible in high-frequency deployments

- domain assumption: optimizing EAI components independently creates coupled privacy implications across stages

invented entities (1)

- SPINE framework: no independent evidence

Lean theorems connected to this paper

- Cost.FunctionalEquation (J = ½(x+x⁻¹)−1) · washburn_uniqueness_aczel · unclear · the privacy-utility trade-off in EAI is inherently non-linear: utility loss is not proportional to the strength of privacy protection

