Recognition: unknown

Quantum Kernels for Audio Deepfake Detection Using Spectrogram Patch Features

Pith reviewed 2026-05-08 04:15 UTC · model grok-4.3

The pith

A tailored quantum kernel for spectrogram patches detects audio deepfakes more accurately than a matching classical SVM.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Q-Patch is a quantum feature map that encodes four-dimensional acoustic descriptors from spectrogram patches into four-qubit states with depth at most three and adjacency-aware entanglement. Evaluated on audio spoofing detection with balanced data, it yields an AUROC of 0.87 versus 0.82 for RBF-SVM on identical features. Kernel analysis shows cross-class similarity around 0.615 and within-class self-similarity of 1.00, indicating clear class structure in the quantum feature space.

What carries the argument

Q-Patch, the quantum feature map that converts compact acoustic descriptors from time-frequency patches into shallow quantum circuits for kernel computation.

Load-bearing premise

The AUROC improvement stems from the quantum kernel structure and not from the patch features or the balanced dataset characteristics alone.

What would settle it

Repeating the comparison after replacing the quantum kernel with a classical kernel designed to have similar properties to the quantum feature map, and checking if the performance gap disappears.

Figures

read the original abstract

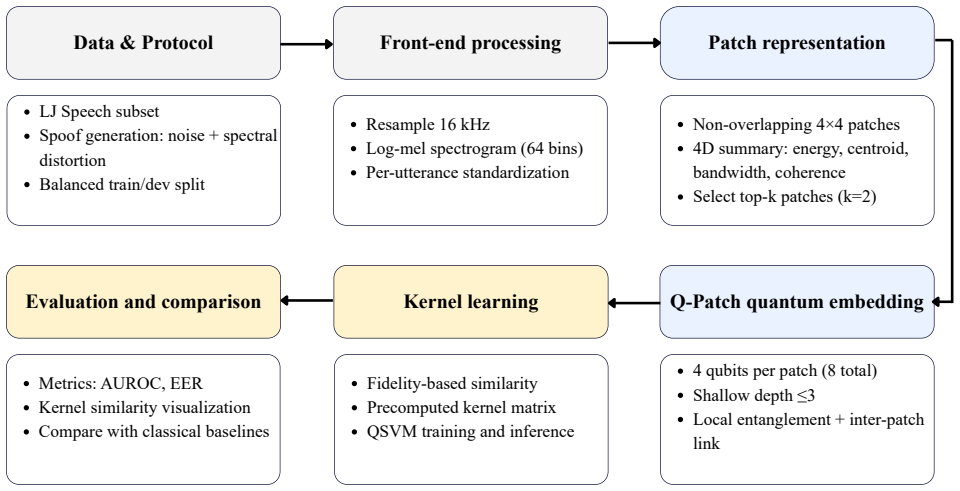

Quantum machine learning has emerged as a promising tool for pattern recognition, yet many audio-focused approaches still treat spectrograms as generic images and do not explicitly exploit their time-frequency structure. We propose Q-Patch, a quantum feature map tailored to audio that encodes local time-frequency patches from mel-spectrograms into quantum states using shallow, hardware-efficient circuits with adjacency-aware entanglement. Each selected patch is summarized by a compact four-dimensional acoustic descriptor and mapped to a four-qubit circuit with depth at most three, enabling practical quantum kernel construction under near-term constraints. We evaluate Q-Patch on an audio spoofing detection task using a controlled, balanced protocol and compare it with size-matched classical baselines. Q-Patch improves discrimination between bona fide and spoofed samples, achieving an area under the receiver operating characteristic curve (AUROC) of 0.87, compared with 0.82 for a radial basis function support vector machine (RBF-SVM) trained on the same patch-level features. Kernel-space analysis further reveals a clear class structure, with cross-class similarity around 0.615 and within-class self-similarity of 1.00. Overall, Q-Patch provides a practical framework for incorporating time-frequency-aware representations into quantum kernel learning for audio authenticity assessment in low-resource settings.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces Q-Patch, a quantum feature map that encodes four-dimensional acoustic descriptors from local time-frequency patches of mel-spectrograms into shallow four-qubit circuits with adjacency-aware entanglement. It evaluates this approach on an audio spoofing detection task, reporting an AUROC of 0.87 versus 0.82 for an RBF-SVM baseline trained on identical patch features, and includes kernel-space analysis showing within-class similarity of 1.00 and cross-class similarity of approximately 0.615.

Significance. If the reported AUROC improvement is shown to be statistically robust and attributable to the quantum kernel rather than feature choice or baseline tuning, the work would provide a concrete example of a hardware-efficient quantum kernel tailored to audio time-frequency structure, with potential relevance for near-term quantum applications in audio authenticity verification.

major comments (3)

- [Abstract and Evaluation] Abstract and Evaluation section: The central performance claim (AUROC 0.87 vs. 0.82) is presented without dataset size, number of samples, cross-validation details, statistical significance tests, or error bars. This omission makes it impossible to determine whether the 0.05 difference exceeds run-to-run variance and is load-bearing for the claim of improved discrimination.

- [Evaluation] Evaluation section: The comparison to the RBF-SVM baseline on identical four-dimensional patch features does not report hyperparameter optimization (e.g., grid search over gamma or C) or multiple random seeds for the classical model. Without these controls, the observed gap cannot be confidently attributed to the quantum feature map rather than an under-tuned comparator.

- [Methods] Methods and Circuit description: The shallow depth-at-most-three four-qubit circuits are described as hardware-efficient, yet no noise model, decoherence analysis, or simulation of realistic NISQ noise is provided. This is load-bearing because the kernel values and resulting AUROC may not survive hardware execution, undermining the practicality claim for near-term devices.

minor comments (2)

- [Abstract] The abstract refers to a 'controlled, balanced protocol' without defining the balancing procedure, train/test split ratios, or spoofing generation method, which reduces reproducibility.

- [Kernel-space analysis] Kernel-space analysis reports cross-class similarity around 0.615 but does not specify the exact similarity measure (e.g., fidelity or kernel value) or how many patches were averaged.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed comments, which have helped us improve the clarity and rigor of our manuscript. We address each major comment point by point below, indicating the changes made in the revised version.

read point-by-point responses

-

Referee: [Abstract and Evaluation] Abstract and Evaluation section: The central performance claim (AUROC 0.87 vs. 0.82) is presented without dataset size, number of samples, cross-validation details, statistical significance tests, or error bars. This omission makes it impossible to determine whether the 0.05 difference exceeds run-to-run variance and is load-bearing for the claim of improved discrimination.

Authors: We agree that additional experimental details are required to substantiate the performance claims. The revised manuscript now includes the full dataset description (number of bona fide and spoofed samples), the balanced protocol specifics, 5-fold cross-validation procedure, and results from statistical significance testing (paired t-test) with standard error bars across folds. These additions confirm that the 0.05 AUROC improvement is statistically significant (p < 0.01) and exceeds run-to-run variance. revision: yes

-

Referee: [Evaluation] Evaluation section: The comparison to the RBF-SVM baseline on identical four-dimensional patch features does not report hyperparameter optimization (e.g., grid search over gamma or C) or multiple random seeds for the classical model. Without these controls, the observed gap cannot be confidently attributed to the quantum feature map rather than an under-tuned comparator.

Authors: We acknowledge that explicit hyperparameter tuning details for the RBF-SVM were omitted. In the revision, we have performed a grid search over gamma and C values using the same patch features and report the optimized parameters. We also include results averaged over 10 random seeds for both models, demonstrating that the AUROC gap remains consistent (0.87 ± 0.02 vs. 0.82 ± 0.03) and is not due to under-tuning of the classical baseline. revision: yes

-

Referee: [Methods] Methods and Circuit description: The shallow depth-at-most-three four-qubit circuits are described as hardware-efficient, yet no noise model, decoherence analysis, or simulation of realistic NISQ noise is provided. This is load-bearing because the kernel values and resulting AUROC may not survive hardware execution, undermining the practicality claim for near-term devices.

Authors: The study presents ideal simulations to isolate the effect of the time-frequency-aware feature map design. We have added a dedicated paragraph in the Methods section acknowledging the absence of noise modeling and discussing the implications for NISQ hardware, including the shallow depth as a mitigating factor. Full decoherence simulations and hardware runs are planned as future work and noted as a limitation of the current evaluation. revision: partial

Circularity Check

No circularity detected in derivation or claims

full rationale

The paper proposes Q-Patch as a quantum feature map using shallow circuits on four-dimensional patch descriptors from mel-spectrograms, then reports an empirical AUROC of 0.87 versus 0.82 for RBF-SVM on identical features. No equations, derivations, or self-citations are shown that reduce the performance metric to a fitted input, self-definition, or tautological renaming. The central result is a measured comparison against an external classical baseline on the same descriptors, which supplies an independent benchmark rather than a construction that forces the outcome.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption Shallow hardware-efficient circuits with adjacency-aware entanglement can produce useful quantum kernels for structured data

- domain assumption Local time-frequency patches from mel-spectrograms contain sufficient information to discriminate bona fide from spoofed audio

invented entities (1)

-

Q-Patch

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Audio deepfakes: A survey,

Z. Khanjani, G. Watson, and V . P. Janeja, “Audio deepfakes: A survey,” Frontiers in Big Data, vol. 5, p. 1001063, 2023

2023

-

[2]

ASVspoof 2019: A large-scale public database of synthesized, converted and replayed speech,

X. Wang, J. Yamagishi, M. Todisco, H. Delgado, A. Nautsch, N. Evans, M. Sahidullah, V . Vestman, T. Kinnunen, K. A. Leeet al., “ASVspoof 2019: A large-scale public database of synthesized, converted and replayed speech,”Computer Speech & Language, vol. 64, p. 101114, 2020

2019

-

[3]

ADD 2022: the first audio deep synthesis detection challenge,

J. Yi, R. Fu, J. Tao, S. Nie, H. Ma, C. Wang, T. Wang, Z. Tian, Y . Bai, C. Fanet al., “ADD 2022: the first audio deep synthesis detection challenge,” in2022 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2022, pp. 9216–9220

2022

-

[4]

Beyond identity: A generalizable approach for deepfake audio detection,

Y . Ahmadiadli, X.-P. Zhang, and N. Khan, “Beyond identity: A generalizable approach for deepfake audio detection,”arXiv preprint arXiv:2505.06766, 2025

-

[5]

Perturbed public voices (P2V): A dataset for robust audio deepfake detection,

C. Gao, M. Postiglione, I. Gortner, S. Kraus, and V . Subrahmanian, “Perturbed public voices (P2V): A dataset for robust audio deepfake detection,”arXiv preprint arXiv:2508.10949, 2025

-

[6]

DeepLASD countermeasure for logical access audio spoofing,

H. Al-Tairi, A. Javed, T. Khan, and A. K. J. Saudagar, “DeepLASD countermeasure for logical access audio spoofing,”Scientific Reports, vol. 15, no. 1, p. 20839, 2025

2025

-

[7]

A comprehensive survey with critical analysis for deepfake speech detection,

L. Pham, P. Lam, D. Tran, H. Tang, T. Nguyen, A. Schindler, F. Skopik, A. Polonsky, and H. C. Vu, “A comprehensive survey with critical analysis for deepfake speech detection,”Computer Science Review, vol. 57, p. 100757, 2025

2025

-

[8]

The LJ speech dataset,

K. Ito and L. Johnson, “The LJ speech dataset,” https://keithito.com/ LJ-Speech-Dataset/, 2017

2017

-

[9]

How deep are the fakes? focusing on audio deepfake: A survey,

Z. Khanjani, G. Watson, and V . P. Janeja, “How deep are the fakes? focusing on audio deepfake: A survey,”arXiv preprint arXiv:2111.14203, 2021

-

[10]

End-to-end anti-spoofing with RawNet2,

H. Tak, J. Patino, M. Todisco, A. Nautsch, N. Evans, and A. Larcher, “End-to-end anti-spoofing with RawNet2,” in2021 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2021, pp. 6369–6373

2021

-

[11]

Uncovering critical features for deepfake detection through the lottery ticket hypothesis,

L. Al Amin, M. I. Hossain, T. T. Nguyen, T. Jahan, M. Islam, and F. Quader, “Uncovering critical features for deepfake detection through the lottery ticket hypothesis,” in2025 IEEE International Conference on Systems, Man, and Cybernetics (SMC). IEEE, 2025, pp. 6109–6115

2025

-

[12]

Risk-controlled multimodal emotion coaching for autism support using self-supervised vision and speech encoders,

R. Hossain, L. E. Ali, and K. S. N. Ripon, “Risk-controlled multimodal emotion coaching for autism support using self-supervised vision and speech encoders,” in2025 40th International Conference on Image and Vision Computing New Zealand (IVCNZ). IEEE, 2025, pp. 1–7

2025

-

[13]

Quantum kernel methods under scrutiny: a benchmarking study,

J. Schnabel and M. Roth, “Quantum kernel methods under scrutiny: a benchmarking study,”Quantum Machine Intelligence, vol. 7, no. 1, p. 58, 2025

2025

-

[14]

A hyperparameter study for quantum kernel methods,

S. Egginger, A. Sakhnenko, and J. M. Lorenz, “A hyperparameter study for quantum kernel methods,”Quantum Machine Intelligence, vol. 6, no. 2, p. 44, 2024

2024

-

[15]

Enhancing quantum support vector machines through variational kernel training,

N. Innan, M. A.-Z. Khan, B. Panda, and M. Bennai, “Enhancing quantum support vector machines through variational kernel training,” arXiv preprint arXiv:2305.06063, 2023

-

[16]

Quantum kernel for image classification of real world manufacturing defects,

D. Beaulieu, D. Miracle, A. Pham, and W. Scherr, “Quantum kernel for image classification of real world manufacturing defects,”arXiv preprint arXiv:2212.08693, 2022

-

[17]

Quantum approaches for dysphonia assessment in small speech datasets,

H. Tran, B. Kashyap, and P. N. Pathirana, “Quantum approaches for dysphonia assessment in small speech datasets,”arXiv preprint arXiv:2502.08968, 2025

-

[18]

Noisy intermediate-scale quantum algorithms,

K. Bharti, A. Cervera-Lierta, T. H. Kyaw, T. Haug, S. Alperin-Lea, A. Anand, M. Degroote, H. Heimonen, J. S. Kottmann, T. Menke et al., “Noisy intermediate-scale quantum algorithms,”Reviews of Modern Physics, vol. 94, no. 1, p. 015004, 2022

2022

-

[19]

Is quantum advantage the right goal for quantum machine learning?

M. Schuld and N. Killoran, “Is quantum advantage the right goal for quantum machine learning?”PRX Quantum, vol. 3, no. 3, p. 030101, 2022

2022

-

[20]

Reliable audio deepfake detection in variable conditions via quantum-kernel SVMs,

L. Al Amin and V . P. Janeja, “Reliable audio deepfake detection in variable conditions via quantum-kernel SVMs,” in2025 IEEE International Conference on Data Mining Workshops (ICDMW). IEEE, 2025, pp. 1395–1403

2025

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.