Recognition: unknown

RobotEQ: Transitioning from Passive Intelligence to Active Intelligence in Embodied AI

Pith reviewed 2026-05-08 09:07 UTC · model grok-4.3

The pith

The first benchmark for active intelligence shows embodied AI models still cannot reliably follow social norms without explicit instructions.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

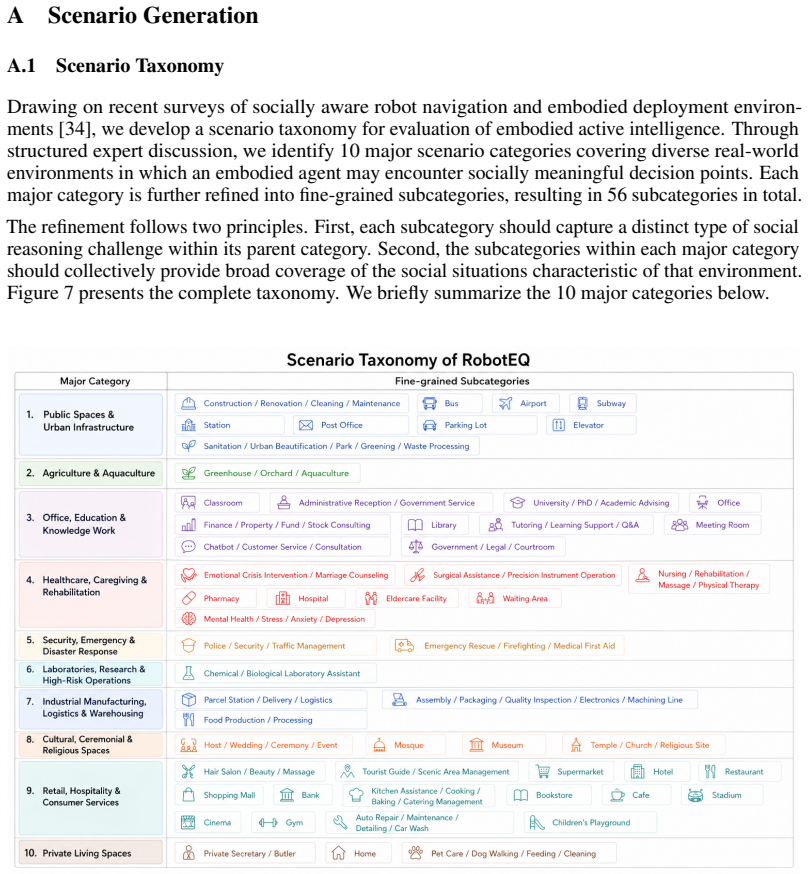

RobotEQ is introduced as the first benchmark for active intelligence, which enables robots to judge permissible actions based on social norms in embodied settings absent explicit instructions. The accompanying RobotEQ-Data contains 1,900 egocentric images across 10 categories and 56 subcategories, annotated with 5,353 action judgment questions and 1,286 spatial grounding questions. RobotEQ-Bench applies this to assess state-of-the-art models, finding they fall short particularly in spatial grounding while benefiting from retrieval-augmented generation with social norm knowledge.

What carries the argument

The RobotEQ benchmark, built on the RobotEQ-Data dataset of manually annotated egocentric images and questions about permissible robot actions and spatial grounding, together with the RobotEQ-Bench evaluation protocol.

If this is right

- Existing models cannot yet achieve reliable active intelligence in embodied scenarios.

- Performance is weakest on spatial grounding tasks that require understanding physical constraints in context.

- Incorporating external social norm knowledge via retrieval techniques generally improves adherence to permissible actions.

- This benchmark can facilitate the transition of robotics from user-guided passive manipulation to active social compliance.

Where Pith is reading between the lines

- Robots with effective active intelligence could manage unexpected situations in homes or public spaces with less human oversight.

- Expanding the benchmark to include dynamic video sequences or multi-turn interactions would better test real-time norm following.

- The spatial grounding weakness points to a broader need for tighter coupling between visual perception and normative reasoning in embodied models.

Load-bearing premise

The manually annotated action judgments and spatial questions in RobotEQ-Data accurately and comprehensively represent real-world social norms and permissible robot behaviors across diverse embodied scenarios.

What would settle it

A physical robot running a model that scores high on RobotEQ-Bench is deployed in varied human environments and observed for the frequency of actions that violate social norms when no instructions are given.

Figures

read the original abstract

Embodied AI is a prominent research topic in both academia and industry. Current research centers on completing tasks based on explicit user instructions. However, for robots to integrate into human society, they must understand which actions are permissible and which are prohibited, even without explicit commands. We refer to the user-guided AI as passive intelligence and the unguided AI as active intelligence. This paper introduces RobotEQ, the first benchmark for active intelligence, aiming to assess whether existing models can comprehend and adhere to social norms in embodied scenarios. First, we construct RobotEQ-Data, a dataset consisting of 1,900 egocentric images, spanning 10 representative embodied categories and 56 subcategories. Through extensive manual annotation, we provide 5,353 action judgment questions and 1,286 spatial grounding questions, specifying appropriate robot actions across diverse scenarios. Furthermore, we establish RobotEQ-Bench to evaluate the performance of state-of-the-art models on this task. Experimental results show that current models still fall short in achieving reliable active intelligence, particularly in spatial grounding. Meanwhile, we observe that leveraging RAG techniques to incorporate external social norm knowledge bases can generally enhance performance. This work can facilitate the transition of robotics from user-guided passive manipulation to active social compliance.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces RobotEQ as the first benchmark for 'active intelligence' in embodied AI, defined as the ability of robots to comprehend and adhere to social norms without explicit user instructions (contrasted with 'passive intelligence' for user-guided tasks). It constructs RobotEQ-Data from 1,900 egocentric images across 10 categories and 56 subcategories, providing 5,353 manually annotated action judgment questions and 1,286 spatial grounding questions. RobotEQ-Bench evaluates state-of-the-art models, reporting underperformance (especially in spatial grounding) that can be mitigated by RAG with external social norm knowledge bases. The work positions this as facilitating a transition to active social compliance in robotics.

Significance. If the annotations reliably capture generalizable social norms across embodied scenarios, RobotEQ could provide a valuable standardized benchmark for evaluating and improving social awareness in embodied AI, addressing a gap beyond explicit task completion. The empirical findings on model limitations and RAG benefits offer concrete directions for future work. The introduction of the 'active intelligence' framing, while novel, would benefit from stronger ties to existing literature on ethical robotics and value alignment.

major comments (2)

- [RobotEQ-Data construction] §3 (RobotEQ-Data construction): The dataset relies on 'extensive manual annotation' to create the 5,353 action judgment and 1,286 spatial grounding questions, but reports no inter-annotator agreement metrics, annotator demographics, or external validation against established ethical corpora or incident databases. This is load-bearing for the central claim that RobotEQ measures active intelligence, as social norms are culturally variable and the benchmark's validity as a faithful proxy depends on annotation reliability and generalizability.

- [RobotEQ-Bench evaluation] §4 (RobotEQ-Bench evaluation): The results claim that current models 'fall short' in active intelligence and that RAG 'can generally enhance performance,' but provide no specific quantitative metrics (e.g., accuracy or F1 scores per category), baseline model details, or error analysis across the question sets. This limits verification of the underperformance extent and the improvement magnitude, weakening the empirical support for the benchmark's utility.

minor comments (1)

- [Abstract and Introduction] The abstract and introduction assert RobotEQ is the 'first benchmark' for active intelligence without citing or contrasting against prior datasets on social norms, ethical decision-making, or value alignment in robotics/AI.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our manuscript. We address each major comment below, indicating revisions where appropriate to strengthen the work.

read point-by-point responses

-

Referee: The dataset relies on 'extensive manual annotation' to create the 5,353 action judgment and 1,286 spatial grounding questions, but reports no inter-annotator agreement metrics, annotator demographics, or external validation against established ethical corpora or incident databases. This is load-bearing for the central claim that RobotEQ measures active intelligence, as social norms are culturally variable and the benchmark's validity as a faithful proxy depends on annotation reliability and generalizability.

Authors: We agree that inter-annotator agreement metrics are essential to demonstrate annotation reliability, given the cultural variability of social norms. In the revised manuscript, we will report Fleiss' kappa scores computed on a 10% re-annotated subset of the questions. We will also add a description of annotator demographics, noting that the team consisted of researchers with expertise in robotics and AI ethics. For external validation, we will expand the discussion to explicitly map our 10 categories and 56 subcategories to established social norm frameworks from ethical robotics literature (e.g., value alignment studies), while acknowledging this as an area for future work rather than claiming full external corpus validation. revision: yes

-

Referee: The results claim that current models 'fall short' in active intelligence and that RAG 'can generally enhance performance,' but provide no specific quantitative metrics (e.g., accuracy or F1 scores per category), baseline model details, or error analysis across the question sets. This limits verification of the underperformance extent and the improvement magnitude, weakening the empirical support for the benchmark's utility.

Authors: We will revise §4 to include a detailed results table reporting accuracy and F1 scores broken down by the 10 categories (and where feasible, subcategories) for both action judgment and spatial grounding tasks. We will explicitly list the evaluated models (including versions and prompting details) and add a dedicated error analysis subsection identifying common failure modes, such as spatial mis-grounding and norm misinterpretation. These changes will provide verifiable quantitative support for the reported underperformance and RAG benefits. revision: yes

Circularity Check

No circularity: empirical benchmark construction with independent annotations

full rationale

The paper presents RobotEQ as an empirical benchmark for active intelligence via manual annotation of 1,900 images into 5,353 action judgments and 1,286 spatial questions across 10 categories. No mathematical derivations, equations, fitted parameters, or predictions appear in the provided text. The central claims rest on dataset construction and model evaluation, which do not reduce to self-citations, self-definitions, or inputs by construction. This is a standard benchmark-creation effort whose validity can be assessed externally against real-world norms or inter-annotator metrics, with no load-bearing step that collapses into its own inputs.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Social norms can be consistently defined and manually annotated as appropriate or inappropriate robot actions in embodied scenarios.

invented entities (1)

-

active intelligence

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Phi-4-Mini Technical Report: Compact yet Powerful Multimodal Language Models via Mixture-of-LoRAs

Abdelrahman Abouelenin, Atabak Ashfaq, Adam Atkinson, Hany Awadalla, Nguyen Bach, Jianmin Bao, Alon Benhaim, Martin Cai, Vishrav Chaudhary, Congcong Chen, et al. Phi-4-mini technical report: Compact yet powerful multimodal language models via mixture-of-loras. arXiv preprint arXiv:2503.01743, 2025

work page internal anchor Pith review arXiv 2025

-

[2]

Pravesh Agrawal, Szymon Antoniak, Emma Bou Hanna, Baptiste Bout, Devendra Chaplot, Jessica Chudnovsky, Diogo Costa, Baudouin De Monicault, Saurabh Garg, Theophile Gervet, Soham Ghosh, Amélie Héliou, Paul Jacob, Albert Q. Jiang, Kartik Khandelwal, Timothée Lacroix, Guillaume Lample, Diego Las Casas, Thibaut Lavril, Teven Le Scao, Andy Lo, William Marshall,...

2024

-

[3]

Reid, Stephen Gould, and Anton van den Hengel

Peter Anderson, Qi Wu, Damien Teney, Jake Bruce, Mark Johnson, Niko Sünderhauf, Ian D. Reid, Stephen Gould, and Anton van den Hengel. Vision-and-language navigation: Interpreting visually-grounded navigation instructions in real environments. In2018 IEEE Conference on Computer Vision and Pattern Recognition, CVPR 2018, Salt Lake City, UT, USA, June 18-22,...

2018

-

[4]

System Card: Claude Opus 4.6

Anthropic. System Card: Claude Opus 4.6. https://www-cdn.anthropic.com/0dd8650 75ad3132672ee0ab40b05a53f14cf5288.pdf, February 2026. Released February 5, 2026. 212 pages. Also available athttps://www.anthropic.com/system-cards

2026

-

[5]

System Card: Claude Opus 4.7

Anthropic. System Card: Claude Opus 4.7. https://www.anthropic.com/system-cards, April 2026. Released April 16, 2026. 232 pages. Download PDF from the System Cards page

2026

-

[6]

System Card: Claude Sonnet 4.6

Anthropic. System Card: Claude Sonnet 4.6. https://www-cdn.anthropic.com/78073 f739564e986ff3e28522761a7a0b4484f84.pdf, February 2026. Released February 2026. Also available athttps://www.anthropic.com/system-cards

2026

-

[7]

Qwen-vl: A versatile vision-language model for understanding, localization, text reading, and beyond, 2023

Jinze Bai, Shuai Bai, Shusheng Yang, Shijie Wang, Sinan Tan, Peng Wang, Junyang Lin, Chang Zhou, and Jingren Zhou. Qwen-vl: A versatile vision-language model for understanding, localization, text reading, and beyond, 2023

2023

-

[8]

Shuai Bai, Yuxuan Cai, Ruizhe Chen, Keqin Chen, Xionghui Chen, Zesen Cheng, Lianghao Deng, Wei Ding, Chang Gao, Chunjiang Ge, et al. Qwen3-vl technical report.arXiv preprint arXiv:2511.21631, 2025

work page internal anchor Pith review arXiv 2025

-

[9]

Qwen2.5-vl technical report, 2025

Shuai Bai, Keqin Chen, Xuejing Liu, Jialin Wang, Wenbin Ge, Sibo Song, Kai Dang, Peng Wang, Shijie Wang, Jun Tang, Humen Zhong, Yuanzhi Zhu, Mingkun Yang, Zhaohai Li, Jianqiang Wan, Pengfei Wang, Wei Ding, Zheren Fu, Yiheng Xu, Jiabo Ye, Xi Zhang, Tianbao Xie, Zesen Cheng, Hang Zhang, Zhibo Yang, Haiyang Xu, and Junyang Lin. Qwen2.5-vl technical report, 2025

2025

-

[10]

Seed 1.6 Technical Report

ByteDance Seed Team. Seed 1.6 Technical Report. https://seed.bytedance.com/en/se ed1_6, 2025. Chinese version:https://research.doubao.com/zh/seed1_6. 10

2025

-

[11]

Janus-pro: Unified multimodal understanding and generation with data and model scaling, 2025

Xiaokang Chen, Zhiyu Wu, Xingchao Liu, Zizheng Pan, Wen Liu, Zhenda Xie, Xingkai Yu, and Chong Ruan. Janus-pro: Unified multimodal understanding and generation with data and model scaling, 2025

2025

-

[12]

Gheorghe Comanici, Eric Bieber, Mike Schaekermann, Ice Pasupat, Noveen Sachdeva, Inderjit Dhillon, Marcel Blistein, Ori Ram, Dan Zhang, Evan Rosen, et al. Gemini 2.5: Pushing the frontier with advanced reasoning, multimodality, long context, and next generation agentic capabilities.arXiv preprint arXiv:2507.06261, 2025

work page internal anchor Pith review arXiv 2025

-

[13]

Aya vision: Advancing the frontier of multilingual multimodality, 2025

Saurabh Dash, Yiyang Nan, John Dang, Arash Ahmadian, Shivalika Singh, Madeline Smith, Bharat Venkitesh, Vlad Shmyhlo, Viraat Aryabumi, Walter Beller-Morales, Jeremy Pekmez, Jason Ozuzu, Pierre Richemond, Acyr Locatelli, Nick Frosst, Phil Blunsom, Aidan Gomez, Ivan Zhang, Marzieh Fadaee, Manoj Govindassamy, Sudip Roy, Matthias Gallé, Beyza Ermis, Ahmet Üst...

2025

-

[14]

Danny Driess, Fei Xia, Mehdi S. M. Sajjadi, Corey Lynch, Aakanksha Chowdhery, Brian Ichter, Ayzaan Wahid, Jonathan Tompson, Quan Vuong, Tianhe Yu, Wenlong Huang, Yevgen Chebotar, Pierre Sermanet, Daniel Duckworth, Sergey Levine, Vincent Vanhoucke, Karol Hausman, Marc Toussaint, Klaus Greff, Andy Zeng, Igor Mordatch, and Pete Florence. Palm-e: an embodied ...

2023

-

[15]

Rodriguez, Nicolas Chapados, David Vazquez, Adriana Romero-Soriano, Reihaneh Rabbany, Perouz Taslakian, Christopher Pal, Spandana Gella, and Sai Rajeswar

Aarash Feizi, Shravan Nayak, Xiangru Jian, Kevin Qinghong Lin, Kaixin Li, Rabiul Awal, Xing Han Lù, Johan Obando-Ceron, Juan A. Rodriguez, Nicolas Chapados, David Vazquez, Adriana Romero-Soriano, Reihaneh Rabbany, Perouz Taslakian, Christopher Pal, Spandana Gella, and Sai Rajeswar. Grounding computer use agents on human demonstrations, 2025

2025

-

[16]

Seedream 3.0 technical report, 2025

Yu Gao, Lixue Gong, Qiushan Guo, Xiaoxia Hou, Zhichao Lai, Fanshi Li, Liang Li, Xiaochen Lian, Chao Liao, Liyang Liu, Wei Liu, Yichun Shi, Shiqi Sun, Yu Tian, Zhi Tian, Peng Wang, Rui Wang, Xuanda Wang, Xun Wang, Ye Wang, Guofeng Wu, Jie Wu, Xin Xia, Xuefeng Xiao, Zhonghua Zhai, Xinyu Zhang, Qi Zhang, Yuwei Zhang, Shijia Zhao, Jianchao Yang, and Weilin Hu...

2025

-

[17]

Gemini 3 Pro Image Model Card

Google DeepMind. Gemini 3 Pro Image Model Card. https://storage.googleapis.com /deepmind-media/Model-Cards/Gemini-3-Pro-Image-Model-Card.pdf , November

-

[18]

Released November 20, 2025

2025

-

[19]

Gemini 3.1 Pro Model Card

Google DeepMind. Gemini 3.1 Pro Model Card. https://deepmind.google/models/mod el-cards/gemini-3-1-pro/ , February 2026. PDF version: https://storage.google apis.com/deepmind-media/Model-Cards/Gemini-3-1-Pro-Model-Card.pdf

2026

-

[20]

Navigating the digital world as humans do: Universal visual grounding for GUI agents

Boyu Gou, Ruohan Wang, Boyuan Zheng, Yanan Xie, Cheng Chang, Yiheng Shu, Huan Sun, and Yu Su. Navigating the digital world as humans do: Universal visual grounding for GUI agents. InThe Thirteenth International Conference on Learning Representations, 2025

2025

-

[21]

Aaron Grattafiori, Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Alex Vaughan, et al. The llama 3 herd of models.arXiv preprint arXiv:2407.21783, 2024

work page internal anchor Pith review arXiv 2024

-

[22]

Wenyi Hong, Wenmeng Yu, Xiaotao Gu, Guo Wang, Guobing Gan, Haomiao Tang, Jiale Cheng, Ji Qi, Junhui Ji, Lihang Pan, et al. Glm-4.5 v and glm-4.1 v-thinking: Towards versatile multimodal reasoning with scalable reinforcement learning.arXiv preprint arXiv:2507.01006, 2025

work page internal anchor Pith review arXiv 2025

-

[23]

Inner monologue: Em- bodied reasoning through planning with language models

Wenlong Huang, Fei Xia, Ted Xiao, Harris Chan, Jacky Liang, Pete Florence, Andy Zeng, Jonathan Tompson, Igor Mordatch, Yevgen Chebotar, Pierre Sermanet, Tomas Jackson, Noah Brown, Linda Luu, Sergey Levine, Karol Hausman, and brian ichter. Inner monologue: Em- bodied reasoning through planning with language models. In Karen Liu, Dana Kulic, and Jeff Ichnow...

2023

-

[24]

Joshi, Kyle Jeffrey, Rosario Jauregui Ruano, Jasmine Hsu, Keerthana Gopalakrishnan, Byron David, Andy Zeng, and Chuyuan Kelly Fu

brian ichter, Anthony Brohan, Yevgen Chebotar, Chelsea Finn, Karol Hausman, Alexander Herzog, Daniel Ho, Julian Ibarz, Alex Irpan, Eric Jang, Ryan Julian, Dmitry Kalashnikov, Sergey Levine, Yao Lu, Carolina Parada, Kanishka Rao, Pierre Sermanet, Alexander T Toshev, Vincent Vanhoucke, Fei Xia, Ted Xiao, Peng Xu, Mengyuan Yan, Noah Brown, Michael Ahn, Omar ...

2023

-

[25]

Building and better understanding vision-language models: insights and future directions, 2024

Hugo Laurençon, Andrés Marafioti, Victor Sanh, and Léo Tronchon. Building and better understanding vision-language models: insights and future directions, 2024

2024

-

[26]

LLaV A-onevision: Easy visual task transfer

Bo Li, Yuanhan Zhang, Dong Guo, Renrui Zhang, Feng Li, Hao Zhang, Kaichen Zhang, Peiyuan Zhang, Yanwei Li, Ziwei Liu, and Chunyuan Li. LLaV A-onevision: Easy visual task transfer. Transactions on Machine Learning Research, 2025

2025

-

[27]

Aligning cyber space with physical world: A comprehensive survey on embodied ai, 2025

Yang Liu, Weixing Chen, Yongjie Bai, Xiaodan Liang, Guanbin Li, Wen Gao, and Liang Lin. Aligning cyber space with physical world: A comprehensive survey on embodied ai, 2025

2025

-

[28]

Infigui-g1: Advancing gui grounding with adaptive exploration policy optimization

Yuhang Liu, Zeyu Liu, Shuanghe Zhu, Pengxiang Li, Congkai Xie, Jiasheng Wang, Xueyu Hu, Xiaotian Han, Jianbo Yuan, Xinyao Wang, et al. Infigui-g1: Advancing gui grounding with adaptive exploration policy optimization. InProceedings of the AAAI Conference on Artificial Intelligence, pages 32267–32275, 2026

2026

-

[29]

Advancing social intelligence in ai agents: Technical challenges and open questions

Leena Mathur, Paul Pu Liang, and Louis-Philippe Morency. Advancing social intelligence in ai agents: Technical challenges and open questions. InProceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, pages 20541–20560, 2024

2024

-

[30]

Human-level control through deep reinforcement learning.Nature, 518(7540):529–533, 2015

V olodymyr Mnih, Koray Kavukcuoglu, David Silver, Andrei A Rusu, Joel Veness, Marc G Bellemare, Alex Graves, Martin Riedmiller, Andreas K Fidjeland, Georg Ostrovski, et al. Human-level control through deep reinforcement learning.Nature, 518(7540):529–533, 2015

2015

-

[31]

Keane Ong, Wei Dai, Carol Li, Dewei Feng, Hengzhi Li, Jingyao Wu, Jiaee Cheong, Rui Mao, Gianmarco Mengaldo, Erik Cambria, et al. Human behavior atlas: Benchmarking unified psychological and social behavior understanding.arXiv preprint arXiv:2510.04899, 2025

-

[32]

GPT-4o System Card, 2024

OpenAI. GPT-4o System Card, 2024. Covers GPT-4o and GPT-4o-mini

2024

-

[33]

GPT-5.4 Thinking System Card

OpenAI. GPT-5.4 Thinking System Card. https://deploymentsafety.openai.com/gp t-5-4-thinking, March 2026. Released March 5, 2026

2026

-

[34]

GPT-5.5 System Card

OpenAI. GPT-5.5 System Card. https://deploymentsafety.openai.com/gpt-5-5 , April 2026. Released April 23, 2026

2026

-

[35]

Manso, Anaís Garrell, Alberto Sanfeliu, Anne Spalanzani, and Rachid Alami

Phani Teja Singamaneni, Pilar Bachiller-Burgos, Luis J. Manso, Anaís Garrell, Alberto Sanfeliu, Anne Spalanzani, and Rachid Alami. A survey on socially aware robot navigation: Taxonomy and future challenges.The International Journal of Robotics Research, 43(10):1533–1572, February 2024

2024

-

[36]

Gui-g2: Gaussian reward modeling for gui grounding, 2025

Fei Tang, Zhangxuan Gu, Zhengxi Lu, Xuyang Liu, Shuheng Shen, Changhua Meng, Wen Wang, Wenqi Zhang, Yongliang Shen, Weiming Lu, Jun Xiao, and Yueting Zhuang. Gui-g2: Gaussian reward modeling for gui grounding, 2025

2025

-

[37]

Gemma Team. Gemma 3 technical report.arXiv preprint arXiv:2503.19786, 2025

work page internal anchor Pith review arXiv 2025

-

[38]

GUI-actor: Coordinate-free visual grounding for GUI agents

Qianhui Wu, Kanzhi Cheng, Rui Yang, Chaoyun Zhang, Jianwei Yang, Huiqiang Jiang, Jian Mu, Baolin Peng, Bo Qiao, Reuben Tan, Si Qin, Lars Liden, Qingwei Lin, Huan Zhang, Tong Zhang, Jianbing Zhang, Dongmei Zhang, and Jianfeng Gao. GUI-actor: Coordinate-free visual grounding for GUI agents. InThe Thirty-ninth Annual Conference on Neural Information Processi...

2026

-

[39]

Deepseek-vl2: Mixture-of-experts vision-language models for advanced multimodal understanding, 2024

Zhiyu Wu, Xiaokang Chen, Zizheng Pan, Xingchao Liu, Wen Liu, Damai Dai, Huazuo Gao, Yiyang Ma, Chengyue Wu, Bingxuan Wang, Zhenda Xie, Yu Wu, Kai Hu, Jiawei Wang, Yaofeng Sun, Yukun Li, Yishi Piao, Kang Guan, Aixin Liu, Xin Xie, Yuxiang You, Kai Dong, Xingkai Yu, Haowei Zhang, Liang Zhao, Yisong Wang, and Chong Ruan. Deepseek-vl2: Mixture-of-experts visio...

2024

-

[40]

React: Synergizing reasoning and acting in language models

Shunyu Yao, Jeffrey Zhao, Dian Yu, Nan Du, Izhak Shafran, Karthik R Narasimhan, and Yuan Cao. React: Synergizing reasoning and acting in language models. InThe Eleventh International Conference on Learning Representations, 2023

2023

-

[41]

Social- iq: A question answering benchmark for artificial social intelligence

Amir Zadeh, Michael Chan, Paul Pu Liang, Edmund Tong, and Louis-Philippe Morency. Social- iq: A question answering benchmark for artificial social intelligence. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2019

2019

-

[42]

Tensor fusion network for multimodal sentiment analysis

Amir Zadeh, Minghai Chen, Soujanya Poria, Erik Cambria, and Louis-Philippe Morency. Tensor fusion network for multimodal sentiment analysis. InProceedings of the 2017 conference on empirical methods in natural language processing, pages 1103–1114, 2017

2017

-

[43]

Multimodal language analysis in the wild: Cmu-mosei dataset and interpretable dynamic fusion graph

AmirAli Bagher Zadeh, Paul Pu Liang, Soujanya Poria, Erik Cambria, and Louis-Philippe Morency. Multimodal language analysis in the wild: Cmu-mosei dataset and interpretable dynamic fusion graph. InProceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 2236–2246, 2018

2018

-

[44]

Internvl3: Exploring advanced training and test-time recipes for open-source multimodal models, 2025

Jinguo Zhu, Weiyun Wang, Zhe Chen, Zhaoyang Liu, Shenglong Ye, Lixin Gu, Hao Tian, Yuchen Duan, Weijie Su, Jie Shao, Zhangwei Gao, Erfei Cui, Xuehui Wang, Yue Cao, Yangzhou Liu, Xingguang Wei, Hongjie Zhang, Haomin Wang, Weiye Xu, Hao Li, Jiahao Wang, Nianchen Deng, Songze Li, Yinan He, Tan Jiang, Jiapeng Luo, Yi Wang, Conghui He, Botian Shi, Xingcheng Zh...

2025

-

[45]

Sanketi, Grecia Salazar, Michael S

Brianna Zitkovich, Tianhe Yu, Sichun Xu, Peng Xu, Ted Xiao, Fei Xia, Jialin Wu, Paul Wohlhart, Stefan Welker, Ayzaan Wahid, Quan Vuong, Vincent Vanhoucke, Huong Tran, Radu Soricut, Anikait Singh, Jaspiar Singh, Pierre Sermanet, Pannag R. Sanketi, Grecia Salazar, Michael S. Ryoo, Krista Reymann, Kanishka Rao, Karl Pertsch, Igor Mordatch, Henryk Michalewski...

2023

-

[46]

Non-verbal Signal Recognition: The ability to interpret non-verbal communicative cues, including gaze direction, hand gestures, body posture, head movements, pointing, beckoning, and other implicit signals such as chin-directed requests. 26

-

[47]

Proxemics & Spatial Norms: The ability to reason about personal space, appropriate pass- ing distance, queuing, yielding, spatial occlusion, positional relationships, and movement boundaries in shared environments

-

[48]

Role Boundary & Authority: The ability to recognize role-defined responsibilities and authority relations, including who may issue instructions, whether a request is legitimate, and whether an action oversteps age-, identity-, responsibility-, or organization-based boundaries

-

[49]

Timing & Interruption Norms: The ability to judge when to intervene, wait, interrupt, or yield, taking into account turn-taking conventions, ongoing interactions, sequential order, and the pacing of human activities

-

[50]

Contextual Volume & Behavioral Restraint: The ability to adjust voice volume, notifica- tion sounds, movement amplitude, and behavioral conspicuousness according to the social and environmental context

-

[51]

Resource & Ownership Norms: The ability to reason about ownership, borrowing, sharing, occupation rights, unattended belongings, and whether an object may be moved, used, returned, or left untouched

-

[52]

Priority & Protected Persons: The ability to identify people who require prioritized assistance or protection, such as children, elderly people, patients, vulnerable individuals, or people involved in emergency situations

-

[53]

Annotation methodology.We assign dimension labels through a two-stage process that combines LLM-based classification with human calibration

Culture-Specific Norms: The ability to recognize etiquette, taboos, ceremonial practices, religious norms, and behavioral boundaries that vary across cultural or occasion-specific contexts. Annotation methodology.We assign dimension labels through a two-stage process that combines LLM-based classification with human calibration. In the first stage, Gemini...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.