Constraint Decay: The Fragility of LLM Agents in Backend Code Generation

Pith reviewed 2026-05-08 08:44 UTC · model grok-4.3

The pith

Capable LLM agents for backend code generation lose about 30 points in assertion pass rate, on average, as structural constraints accumulate.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

As structural requirements accumulate in backend code generation tasks, agent performance declines substantially, a phenomenon the paper calls constraint decay: capable configurations lose 30 points on average in assertion pass rates from baseline to fully specified tasks. Agents succeed more readily in minimal, explicit frameworks such as Flask but perform substantially worse on average in convention-heavy environments such as FastAPI and Django. Error analysis identifies data-layer defects, including incorrect query composition and ORM runtime violations, as the leading root causes of failure.

What carries the argument

The argument rests on constraint decay: the measured decline in assertion pass rates as structural constraints (architecture patterns, databases, and object-relational mappings) are added to otherwise identical tasks. It is evaluated on a fixed API contract through a dual system of end-to-end behavioral tests and static verifiers.
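To make the measured quantity concrete, here is a minimal sketch that computes constraint decay as the difference, in points, between the assertion pass rate on baseline tasks and on fully specified tasks. The `RunResult` type, the function name, and all counts are illustrative assumptions, not code or values from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunResult:
    """Assertion counts for one configuration at one constraint level (hypothetical type)."""
    passed: int
    total: int

    @property
    def pass_rate(self) -> float:
        # Pass rate in percent; a run with no assertions counts as 0.
        return 100.0 * self.passed / self.total if self.total else 0.0


def constraint_decay(baseline: RunResult, fully_specified: RunResult) -> float:
    """Points lost between the baseline and the fully specified task variant."""
    return baseline.pass_rate - fully_specified.pass_rate


# Made-up counts, chosen only to illustrate a 30-point drop.
baseline = RunResult(passed=170, total=200)         # 85.0 %
fully_specified = RunResult(passed=110, total=200)  # 55.0 %
decay = constraint_decay(baseline, fully_specified)
print(f"constraint decay: {decay:.1f} points")
```

A positive value means the agent lost ground as constraints were added; zero or a negative value would indicate no decay for that configuration.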

If this is right

- Capable agent configurations lose approximately 30 points in assertion pass rates when moving from baseline to fully specified structural requirements.

- Agents achieve higher success rates in minimal, explicit frameworks such as Flask than in convention-heavy frameworks such as FastAPI and Django.

- Data-layer defects such as incorrect query composition and ORM runtime violations constitute the primary root causes of observed failures.

- Weaker agent configurations approach zero success rates on tasks that accumulate multiple structural constraints.
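As an illustration of what an incorrect query composition can look like, the sketch below uses Python's standard `sqlite3` module. The `tickets` table, its rows, and both queries are hypothetical and are not taken from the paper's tasks or error logs. The buggy query omits parentheses, so SQL's precedence rules (AND binds tighter than OR) let other owners' pending tickets leak into the result.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE tickets (id INTEGER PRIMARY KEY, owner TEXT, status TEXT)")
conn.executemany(
    "INSERT INTO tickets (id, owner, status) VALUES (?, ?, ?)",
    [(1, "alice", "open"), (2, "bob", "pending"), (3, "alice", "pending"), (4, "bob", "closed")],
)

# Buggy composition: parsed as (owner = ? AND status = 'open') OR status = 'pending',
# so the owner filter does not apply to pending tickets.
BUGGY = (
    "SELECT id FROM tickets "
    "WHERE owner = ? AND status = 'open' OR status = 'pending' ORDER BY id"
)
# Intended composition: the owner filter applies to both statuses.
FIXED = (
    "SELECT id FROM tickets "
    "WHERE owner = ? AND (status = 'open' OR status = 'pending') ORDER BY id"
)


def active_ticket_ids(query: str, owner: str) -> list[int]:
    """Run one of the queries above for a given owner and return the matching ids."""
    return [row[0] for row in conn.execute(query, (owner,))]


buggy_ids = active_ticket_ids(BUGGY, "alice")  # [1, 2, 3]: includes bob's ticket 2
fixed_ids = active_ticket_ids(FIXED, "alice")  # [1, 3]
print(buggy_ids, fixed_ids)
```

Both queries are syntactically valid and run without error, which is why a defect of this kind would only surface through a behavioral assertion on the returned data.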

Where Pith is reading between the lines

- Production backend development may need supplementary enforcement mechanisms because agents alone cannot reliably meet both functional and structural demands at scale.

- The decay pattern could appear in other constrained generation settings such as mobile or embedded systems, suggesting a broader limitation in current agent designs.

- Training regimes that progressively introduce structural rules alongside functional examples might reduce the severity of performance drops on complex tasks.

Load-bearing premise

The fixed unified API contract, the 80 greenfield plus 20 feature tasks, and the eight web frameworks sufficiently isolate structural complexity effects without confounding variations in task difficulty or framework-specific quirks.

What would settle it

An experiment showing that capable agents maintain or increase assertion pass rates on fully specified tasks relative to their baseline loose-specification performance would falsify the constraint decay claim.
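The falsification condition can be written as a simple check, sketched here under stated assumptions: each configuration is summarized by a hypothetical pair of baseline and fully specified pass rates, and the configuration names and numbers are invented for illustration.

```python
def decay_claim_falsified(rates: dict[str, tuple[float, float]]) -> bool:
    """Return True when every configuration holds or improves its pass rate.

    `rates` maps a configuration name to (baseline_rate, fully_specified_rate).
    An empty mapping provides no evidence, so it never falsifies the claim.
    """
    return bool(rates) and all(full >= base for base, full in rates.values())


# Invented numbers: the first set shows decay, the second would contradict it.
WITH_DECAY = {"config-a": (85.0, 55.0), "config-b": (78.0, 50.0)}
COUNTEREXAMPLE = {"config-a": (85.0, 86.0), "config-b": (78.0, 78.0)}

print(decay_claim_falsified(WITH_DECAY))      # False
print(decay_claim_falsified(COUNTEREXAMPLE))  # True
```

A real test would also need repeated runs and a variance estimate before treating a small difference as maintained performance.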

Original abstract

Large Language Model (LLM) agents demonstrate strong performance in autonomous code generation under loose specifications. However, production-grade software requires strict adherence to structural constraints, such as architectural patterns, databases, and object-relational mappings. Existing benchmarks often overlook these non-functional requirements, rewarding functionally correct but structurally arbitrary solutions. We present a systematic study evaluating how well agents handle structural constraints in multi-file backend generation. By fixing a unified API contract across 80 greenfield generation tasks and 20 feature-implementation tasks spanning eight web frameworks, we isolate the effect of structural complexity using a dual evaluation with end-to-end behavioral tests and static verifiers. Our findings reveal a phenomenon of constraint decay: as structural requirements accumulate, agent performance exhibits a substantial decline. Capable configurations lose 30 points on average in assertion pass rates from baseline to fully specified tasks, while some weaker configurations approach zero. Framework sensitivity analysis exposes significant performance disparities: agents succeed in minimal, explicit frameworks (e.g., Flask) but perform substantially worse on average in convention-heavy environments (e.g., FastAPI, Django). Finally, error analysis identifies data-layer defects (e.g., incorrect query composition and ORM runtime violations) as the leading root causes. This work highlights that jointly satisfying functional and structural requirements remains a key open challenge for coding agents.
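The abstract does not describe how the static verifiers are built. As one possible shape, the sketch below uses Python's standard `ast` module to flag a structural violation that a behavioral test would not catch: a route module that talks to the database directly. The rule (`FORBIDDEN_IN_ROUTES`), the function name, and the sample module are assumptions made for illustration. The sample source is only parsed, never executed, so Flask does not need to be installed.

```python
import ast

# Hypothetical layering rule: route modules must not import database drivers directly.
FORBIDDEN_IN_ROUTES = {"sqlite3", "sqlalchemy"}


def forbidden_imports(source: str, forbidden: set[str]) -> list[str]:
    """Return the sorted top-level package names from `forbidden` that `source` imports."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            names = [node.module or ""]  # module is None for "from . import x"
        else:
            continue
        for name in names:
            root = name.split(".")[0]
            if root in forbidden:
                found.add(root)
    return sorted(found)


# A handler that may well return correct responses yet breaks the layering rule.
ROUTE_MODULE = '''
import sqlite3
from flask import Flask, jsonify

app = Flask(__name__)

@app.get("/items")
def list_items():
    rows = sqlite3.connect("app.db").execute("SELECT id FROM items").fetchall()
    return jsonify([r[0] for r in rows])
'''

violations = forbidden_imports(ROUTE_MODULE, FORBIDDEN_IN_ROUTES)
print(violations)  # ['sqlite3']
```

Pairing a check like this with end-to-end tests is what lets an evaluation separate functionally correct output from structurally compliant output.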

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

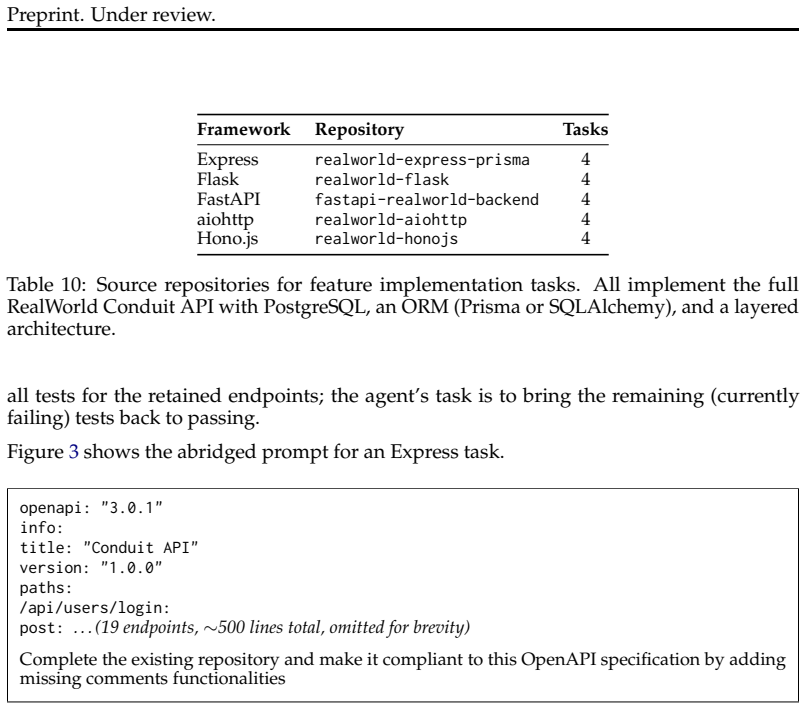

Summary. The paper claims that LLM agents for multi-file backend code generation suffer from 'constraint decay,' with capable configurations losing an average of 30 points in assertion pass rates as structural requirements (architectural patterns, databases, ORMs) accumulate from baseline to fully specified tasks. This is shown via 100 tasks (80 greenfield + 20 feature-implementation) spanning eight web frameworks under a fixed unified API contract, evaluated with both end-to-end behavioral tests and static verifiers. Additional results highlight framework sensitivity (strong performance on minimal frameworks like Flask, substantial drops on convention-heavy ones like FastAPI and Django) and data-layer defects (incorrect queries, ORM violations) as the dominant error category.

Significance. If the results hold after controlling for potential confounds, this would meaningfully advance understanding of LLM agent limitations in realistic software engineering settings. The systematic multi-framework design with dual verification and error analysis provides a useful empirical lens on why agents struggle to jointly meet functional and structural requirements, identifying data-layer issues as a concrete priority for future work. The fixed-API isolation attempt and scale (100 tasks) are strengths that could influence benchmark design if the attribution to constraint accumulation is robust.

major comments (2)

- [Abstract] The core attribution of performance decline to accumulating structural constraints (the 'constraint decay' phenomenon) is load-bearing but at risk due to the reported large framework disparities (Flask succeeds while FastAPI/Django fail substantially). Without explicit controls such as per-framework decline curves, task-difficulty metrics (e.g., required boilerplate lines or ORM entity counts), or balanced task sets across the 80+20 split, the 30-point drop cannot be cleanly separated from framework-specific quirks or inherent task variation.

- [Abstract] Evaluation setup (abstract and presumed §4 Results): The dual-evaluation protocol with tests and verifiers is described at a high level, but lacks details on statistical controls, exact agent configurations, pass-rate aggregation, or how framework disparities are accounted for in the average. This undermines verification of the central 30-point claim and the isolation of structural complexity effects.
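The per-framework decline curves requested in the first comment could be computed along the following lines. This is a sketch under assumptions: the record layout (framework, number of structural constraints, pass rate), the function name, and every number are invented, and only two of the eight frameworks are shown.

```python
from collections import defaultdict
from statistics import mean

# (framework, number of structural constraints, assertion pass rate in %): made-up values.
RECORDS = [
    ("flask", 0, 90.0), ("flask", 0, 86.0), ("flask", 3, 70.0), ("flask", 3, 74.0),
    ("django", 0, 80.0), ("django", 0, 76.0), ("django", 3, 40.0), ("django", 3, 36.0),
]


def decline_by_framework(records):
    """Return, per framework, the mean pass rate at the lowest constraint level
    minus the mean pass rate at the highest constraint level."""
    buckets = defaultdict(list)
    for framework, level, rate in records:
        buckets[(framework, level)].append(rate)

    curves = defaultdict(dict)  # framework -> {constraint level: mean pass rate}
    for (framework, level), rates in buckets.items():
        curves[framework][level] = mean(rates)

    return {fw: curve[min(curve)] - curve[max(curve)] for fw, curve in curves.items()}


declines = decline_by_framework(RECORDS)
print(declines)  # {'flask': 16.0, 'django': 40.0}
```

In this toy data the average decline is 28 points, but it hides a 16-point drop in one framework and a 40-point drop in the other, which is the kind of disparity a single pooled figure cannot show.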

minor comments (2)

- [Abstract] The phrase 'capable configurations lose 30 points on average' would benefit from a parenthetical note on the exact baseline vs. fully-specified comparison and number of runs.

- The manuscript would be strengthened by adding a short related-work paragraph contrasting this setup against prior code-generation benchmarks that omit structural constraints.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our manuscript. The comments highlight important aspects of attribution and methodological transparency that we address below. We have revised the paper to incorporate additional analyses and details while preserving the core experimental design.

- Referee: [Abstract] The core attribution of performance decline to accumulating structural constraints (the 'constraint decay' phenomenon) is load-bearing but at risk due to the reported large framework disparities (Flask succeeds while FastAPI/Django fail substantially). Without explicit controls such as per-framework decline curves, task-difficulty metrics (e.g., required boilerplate lines or ORM entity counts), or balanced task sets across the 80+20 split, the 30-point drop cannot be cleanly separated from framework-specific quirks or inherent task variation.

Authors: We appreciate the referee's point on potential confounds between framework effects and constraint accumulation. The unified API contract was specifically chosen to hold functional requirements constant, allowing structural constraints to vary while keeping task semantics aligned. Framework sensitivity is presented as a substantive finding rather than a confound. In the revision we add per-framework decline curves (showing consistent decay within each framework, albeit with different slopes) and task-difficulty metrics (average boilerplate lines and ORM entity counts per task category). These confirm that the 80 greenfield and 20 feature tasks are balanced in structural load. The reported 30-point average is now accompanied by framework-stratified results to clarify the separation of effects.

Revision: yes

- Referee: [Abstract] Evaluation setup (abstract and presumed §4 Results): The dual-evaluation protocol with tests and verifiers is described at a high level, but lacks details on statistical controls, exact agent configurations, pass-rate aggregation, or how framework disparities are accounted for in the average. This undermines verification of the central 30-point claim and the isolation of structural complexity effects.

Authors: We agree that greater detail is required for reproducibility. The revised manuscript expands the evaluation section to specify exact agent configurations (models, temperatures, and prompt structures), statistical controls (fixed seeds and reporting of any variance across runs), pass-rate aggregation (mean assertion pass rate per task, then macro-averaged), and framework accounting (overall mean plus per-framework breakdowns with the fixed API as the isolating mechanism). These additions directly support verification of the 30-point decline and the structural-complexity isolation.

Revision: yes
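The aggregation named in this response (a pass rate per task, then a macro-average across tasks) differs from pooling all assertions together. The following sketch contrasts the two on invented data; the task names, counts, and function names are assumptions for illustration only.

```python
# (task id, assertions passed, assertions total): made-up values.
TASKS = [("task-a", 9, 10), ("task-b", 1, 2), ("task-c", 30, 40)]


def macro_average(tasks) -> float:
    """Mean of per-task pass rates, in percent: every task weighs the same."""
    per_task = [passed / total for _, passed, total in tasks]
    return 100.0 * sum(per_task) / len(per_task)


def micro_average(tasks) -> float:
    """Pooled pass rate, in percent: every assertion weighs the same."""
    passed = sum(p for _, p, _ in tasks)
    total = sum(t for _, _, t in tasks)
    return 100.0 * passed / total


print(f"macro: {macro_average(TASKS):.2f}")  # macro: 71.67
print(f"micro: {micro_average(TASKS):.2f}")  # micro: 76.92
```

Macro-averaging keeps a task with many assertions from dominating the headline number, at the cost of giving tasks with very few assertions outsized influence.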

Circularity Check

No circularity: purely empirical measurement study

This paper reports direct experimental measurements of LLM agent performance on fixed backend code generation tasks. The 'constraint decay' observation is computed from assertion pass rates across baseline vs. fully-specified tasks; no equations, parameter fits, or predictions are derived that could reduce to inputs by construction. Framework disparities and error categorizations are likewise raw empirical outputs. No self-citations, uniqueness theorems, or ansatzes appear in the load-bearing claims. The derivation chain is therefore self-contained against the task executions themselves.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: The selected greenfield and feature tasks across eight frameworks isolate structural complexity without significant confounding from task difficulty variations.
