Recognition: unknown

ROSE: Rollout On Serving GPUs via Cooperative Elasticity for Agentic RL

Pith reviewed 2026-05-08 05:09 UTC · model grok-4.3

The pith

ROSE accelerates agentic RL by repurposing idle serving GPUs for rollouts while preserving SLOs.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

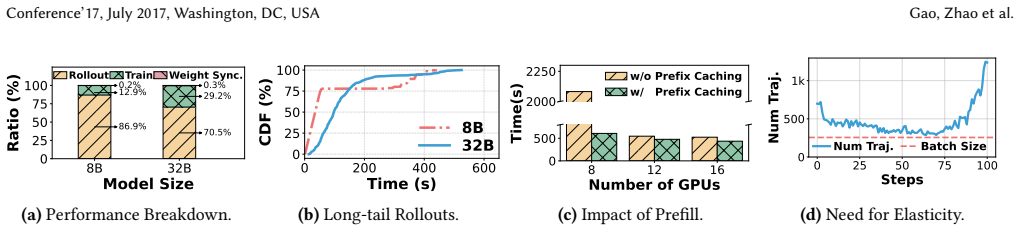

ROSE is a post-training system that safely harvests idle compute and memory on serving GPUs to execute agentic RL rollouts via an SLO-preserving co-serving executor, a cross-cluster weight transfer engine using shards and sparsity, and an elastic scheduler that dynamically allocates cooperative capacity, delivering 1.20-3.31x higher end-to-end throughput than resource-fixed or elastic baselines.

What carries the argument

Cooperative elasticity: an SLO-safe co-serving executor for GPU memory and compute sharing, combined with sparsity-leveraging weight transfer and dynamic rollout scheduling across serving and dedicated GPUs.

If this is right

- Agentic RL training throughput increases without adding dedicated GPUs.

- Overall cluster utilization rises by filling serving headroom with rollout work.

- Multi-turn rollout latency drops through dynamic capacity borrowing.

- Weight synchronization overhead stays low even across separate serving and training clusters.

Where Pith is reading between the lines

- The same sharing pattern could apply to other long-tail workloads such as search or planning agents.

- Operators might design unified GPU pools that mix serving and training jobs by default.

- Further gains may appear when extending the scheduler to predict traffic bursts more accurately.

Load-bearing premise

Production serving clusters routinely leave substantial GPU compute and memory headroom that can be safely repurposed for rollouts without violating serving SLOs under bursty traffic.

What would settle it

A measurement during sudden traffic bursts that checks whether co-locating RL rollouts on serving GPUs causes any service level objective violations.

Figures

read the original abstract

Agentic reinforcement learning (RL) has emerged as a key driver for improving the multi-step reasoning and tool-use capabilities of LLMs. However, its efficiency is bottlenecked by long-tail rollouts with multi-turn environment interactions, making static GPU provisioning a poor fit: overprovisioning wastes GPUs on stragglers, while underprovisioning increases contention and slows training. We observe that production serving clusters routinely leave substantial GPU compute and memory headroom. Based on this observation, we argue for cooperative elasticity: opportunistically repurposing underutilized serving GPUs to execute rollouts. Realizing cooperative elasticity is non-trivial because it must preserve serving Service Level Objectives (SLOs) under bursty traffic and minimize communication overhead. To address these challenges, we present ROSE, a cooperative, resource-elastic post-training system that safely harvests idle compute and memory on serving GPUs to accelerate agentic RL rollouts. ROSE consists of three components: (1) an SLO-safe co-serving executor that improves rollout throughput while preserving serving SLOs through efficient GPU memory and compute sharing; (2) a cross-cluster weight transfer engine that leverages weight shards and sparsity for fast weight synchronization across clusters; and (3) an elastic rollout scheduler that dynamically provisions cooperative capacity and routes trajectory rollouts across dedicated rollout GPUs and opportunistic serving GPUs. Experiments across multiple model sizes and cluster scales show that ROSE improves average end-to-end throughput by 1.20-3.31 x compared with state-of-the-art resource-fixed and elastic baselines.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces ROSE, a cooperative elasticity system for agentic RL that opportunistically repurposes underutilized serving GPUs for rollout execution. It consists of an SLO-safe co-serving executor for memory/compute sharing, a cross-cluster weight transfer engine leveraging shards and sparsity, and an elastic rollout scheduler. The central empirical claim is that ROSE achieves 1.20-3.31x average end-to-end throughput improvement over state-of-the-art resource-fixed and elastic baselines across multiple model sizes and cluster scales.

Significance. If the SLO-preservation result holds under realistic bursty workloads, ROSE could meaningfully improve GPU utilization for post-training by co-locating serving and RL workloads, reducing waste from static provisioning of long-tail rollouts. The approach is practically relevant for production clusters that already run LLM serving.

major comments (2)

- [Abstract] Abstract: The headline 1.20-3.31x throughput claim rests on the SLO-safe co-serving executor harvesting headroom without violating p99 latency or throughput SLOs under bursty traffic. The abstract asserts this via 'efficient GPU memory and compute sharing' but provides no description of preemption, eviction, or isolation mechanisms, nor any indication that experiments used high-CV production traces rather than synthetic low-variance loads. This assumption is load-bearing; if bursts cause activation eviction or delayed preemption, the reported gains are conditional on unvalidated traffic assumptions.

- [§5] Experimental evaluation (assumed §5): The abstract states results 'across multiple model sizes and cluster scales' and 'compared with state-of-the-art resource-fixed and elastic baselines' but supplies no concrete baselines, workload traces, SLO definitions (e.g., exact p99 targets), or statistical significance. Without these details the cross-scale claim cannot be assessed for robustness.

minor comments (1)

- [Abstract] Abstract: The phrase 'production serving clusters routinely leave substantial GPU compute and memory headroom' is presented as an observation but lacks a supporting citation or measurement; a brief reference to prior utilization studies would strengthen it.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback. We address each major comment below with clarifications from the manuscript and commit to revisions that improve the presentation of our mechanisms and experimental details.

read point-by-point responses

-

Referee: [Abstract] Abstract: The headline 1.20-3.31x throughput claim rests on the SLO-safe co-serving executor harvesting headroom without violating p99 latency or throughput SLOs under bursty traffic. The abstract asserts this via 'efficient GPU memory and compute sharing' but provides no description of preemption, eviction, or isolation mechanisms, nor any indication that experiments used high-CV production traces rather than synthetic low-variance loads. This assumption is load-bearing; if bursts cause activation eviction or delayed preemption, the reported gains are conditional on unvalidated traffic assumptions.

Authors: We appreciate the referee highlighting the load-bearing nature of the SLO claims. Section 3.1 of the manuscript details the SLO-safe co-serving executor, which uses activation paging for memory eviction, priority-based preemption with bounded delay, and isolation via per-tenant CUDA streams plus memory quotas. Section 5.1 further specifies that the evaluation employs production traces with CV > 2.0 to model bursty traffic, alongside synthetic loads. We will revise the abstract to concisely reference these mechanisms and the realistic workload characteristics. revision: yes

-

Referee: [§5] Experimental evaluation (assumed §5): The abstract states results 'across multiple model sizes and cluster scales' and 'compared with state-of-the-art resource-fixed and elastic baselines' but supplies no concrete baselines, workload traces, SLO definitions (e.g., exact p99 targets), or statistical significance. Without these details the cross-scale claim cannot be assessed for robustness.

Authors: We agree the abstract is high-level. The concrete elements appear in Section 5: baselines are vLLM (fixed) and Orca/FlexGen variants (elastic); traces are production serving logs detailed in §5.1; SLOs are p99 latency < 200 ms and >90% peak throughput; results report means with standard deviations over 5–10 runs and t-test significance. We will add a brief summary sentence to the abstract (or a footnote) listing these to make the claims self-contained without lengthening it excessively. revision: yes

Circularity Check

No circularity; empirical system evaluation with no derivation chain

full rationale

The paper describes an engineering system (ROSE) with three components for cooperative elasticity on serving GPUs, motivated by an observation about GPU headroom and validated through end-to-end throughput experiments across model sizes and scales. No mathematical derivations, first-principles predictions, fitted parameters renamed as outputs, or self-referential equations appear in the abstract or description. Claims reduce to measured benchmark results rather than any tautological reduction to inputs. Self-citations, if present, are not load-bearing for any derivation.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Alibaba Cloud. 2026. Creating a GPU function.https://www.alibabac loud.com/help/en/functioncompute/fc/user-guide/creating-a-gpu- function/. (2026). Accessed: 2026-04

2026

-

[2]

Li, Ryota Tomioka, and Milan Vojnovic

Dan Alistarh, Demjan Grubic, Jerry Z. Li, Ryota Tomioka, and Milan Vojnovic. 2017. QSGD: communication-efficient SGD via gradient quantization and encoding. InProceedings of the 31st International Conference on Neural Information Processing Systems (NIPS’17). Curran Associates Inc., Red Hook, NY, USA, 1707–1718

2017

-

[3]

Romil Bhardwaj, Zhengxu Xia, Ganesh Ananthanarayanan, Junchen Jiang, Yuanchao Shu, Nikolaos Karianakis, Kevin Hsieh, Paramvir Bahl, and Ion Stoica. 2022. Ekya: Continuous Learning of Video Analytics Models on Edge Compute Servers. In19th USENIX Symposium on Networked Systems Design and Implementation (NSDI 22). USENIX Association, Renton, WA, 119–135.http...

2022

-

[4]

Hegde, Connor Chen, Charlie Ruan, Tyler Griggs, Shu Liu, Eric Tang, Richard Liaw, Philipp Moritz, Matei Zaharia, Joseph E

Shiyi Cao, Dacheng Li, Fangzhou Zhao, Shuo Yuan, Sumanth R. Hegde, Connor Chen, Charlie Ruan, Tyler Griggs, Shu Liu, Eric Tang, Richard Liaw, Philipp Moritz, Matei Zaharia, Joseph E. Gonzalez, and Ion Stoica

-

[5]

Skyrl-agent: Efficient rl training for multi-turn llm agent.arXiv preprint arXiv:2511.16108, 2025

SkyRL-Agent: Efficient RL Training for Multi-turn LLM Agent. arXiv preprint arXiv:2511.16108(2025)

- [6]

-

[7]

Yihua Cheng, Yuhan Liu, Jiayi Yao, Yuwei An, Xiaokun Chen, Shaoting Feng, Yuyang Huang, Samuel Shen, Kuntai Du, and Junchen Jiang

- [8]

-

[9]

Jiangfei Duan, Runyu Lu, Haojie Duanmu, Xiuhong Li, Xingcheng Zhang, Dahua Lin, Ion Stoica, and Hao Zhang. 2024. MuxServe: Flexible Spatial-Temporal Multiplexing for Multiple LLM Serving. InICML

2024

-

[10]

Assaf Eisenman, Kiran Kumar Matam, Steven Ingram, Dheevatsa Mudigere, Raghuraman Krishnamoorthi, Krishnakumar Nair, Misha Smelyanskiy, and Murali Annavaram. 2022. Check-N-Run: A check- pointing system for training deep learning recommendation models. In19th USENIX Symposium on Networked Systems Design and Imple- mentation (NSDI 22). 929–943

2022

-

[11]

Farama Foundation. 2024. Gymnasium - FrozenLake Environment. https://gymnasium.farama.org/environments/toy_text/frozen_lake/. (2024). Accessed: 2025-09

2024

-

[12]

Jiawei Fei, Chen-Yu Ho, Atal N Sahu, Marco Canini, and Amedeo Sapio

-

[13]

InProceedings of the 2021 ACM SIGCOMM 2021 Conference

Efficient sparse collective communication and its application to 14 ROSE: Rollouts on Serving GPUs Conference’17, July 2017, Washington, DC, USA accelerate distributed deep learning. InProceedings of the 2021 ACM SIGCOMM 2021 Conference. 676–691

2017

-

[16]

Wei Fu, Jiaxuan Gao, Xujie Shen, Chen Zhu, Zhiyu Mei, Chuyi He, Shusheng Xu, Guo Wei, Jun Mei, Jiashu Wang, Tongkai Yang, Binhang Yuan, and Yi Wu. 2025. AReaL: A Large-Scale Asynchronous Rein- forcement Learning System for Language Reasoning.arXiv preprint arXiv:2505.10978(2025)

work page internal anchor Pith review arXiv 2025

-

[17]

Yao Fu, Leyang Xue, Yeqi Huang, Andrei-Octavian Brabete, Dmitrii Ustiugov, Yuvraj Patel, and Luo Mai. 2024. ServerlessLLM: Low- Latency Serverless Inference for Large Language Models. InOSDI’24

2024

-

[18]

Wei Gao, Zhuoyuan Ouyang, Peng Sun, Tianwei Zhang, and Yonggang Wen. 2025. IceFrog: A Layer-Elastic Scheduling System for Deep Learning Training in GPU Clusters.IEEE Transactions on Parallel and Distributed Systems36, 6 (2025), 1071–1086.https://doi.org/10.1109/ TPDS.2025.3553137

-

[19]

Wei Gao, Yuheng Zhao, Dakai An, Tianyuan Wu, Lunxi Cao, Shaopan Xiong, Ju Huang, Weixun Wang, Siran Yang, Wenbo Su, Jiamang Wang, Lin Qu, Bo Zheng, and Wei Wang. 2025. RollPacker: Mitigating Long- Tail Rollouts for Fast, Synchronous RL Post-Training.arXiv preprint arXiv:2509.21009(2025)

-

[20]

Wei Gao, Yuheng Zhao, Tianyuan Wu, Shaopan Xiong, Weixun Wang, Dakai An, Lunxi Cao, Dilxat Muhtar, Zichen Liu, Haizhou Zhao, Ju Huang, Siran Yang, Yongbin Li, Wenbo Su, Jiamang Wang, Lin Qu, Bo Zheng, and Wei Wang. 2025. RollArt: Scaling Agentic RL Training via Disaggregated Infrastructure.arXiv preprint arXiv:2512.22560(2025)

-

[21]

Mingcong Han, Hanze Zhang, Rong Chen, and Haibo Chen

-

[22]

In16th USENIX Symposium on Oper- ating Systems Design and Implementation (OSDI 22)

Microsecond-scale preemption for concurrent {GPU- accelerated} {DNN} inferences. In16th USENIX Symposium on Oper- ating Systems Design and Implementation (OSDI 22). 539–558

-

[23]

Zhenyu Han, Ansheng You, Haibo Wang, Kui Luo, Guang Yang, Wenqi Shi, Menglong Chen, Sicheng Zhang, Zeshun Lan, Chunshi Deng, Huazhong Ji, Wenjie Liu, Yu Huang, Yixiang Zhang, Chenyi Pan, Jing Wang, Xin Huang, Chunsheng Li, and Jianping Wu. 2025. AsyncFlow: An Asynchronous Streaming RL Framework for Efficient LLM Post- Training.arXiv preprint arXiv:2507.01...

- [24]

-

[25]

Mor Harchol-Balter, Cuihong Li, Takayuki Osogami, Alan Scheller- Wolf, and Mark S. Squillante. 2003. Cycle stealing under immediate dispatch task assignment. InProceedings of the Fifteenth Annual ACM Symposium on Parallel Algorithms and Architectures (SPAA ’03). As- sociation for Computing Machinery, New York, NY, USA, 274–285. https://doi.org/10.1145/777...

-

[26]

Eric Harper, Somshubra Majumdar, Oleksii Kuchaiev, Li Jason, Yang Zhang, Evelina Bakhturina, Vahid Noroozi, Sandeep Subramanian, Koluguri Nithin, Huang Jocelyn, Fei Jia, Jagadeesh Balam, Xuesong Yang, Micha Livne, Yi Dong, Sean Naren, and Boris Ginsburg. 2025. NeMo: a toolkit for Conversational AI and Large Language Models. (2025).https://github.com/NVIDIA/NeMo

2025

- [27]

- [28]

-

[29]

Ziheng Jiang, Haibin Lin, Yinmin Zhong, Qi Huang, Yangrui Chen, Zhi Zhang, Yanghua Peng, Xiang Li, Cong Xie, Shibiao Nong, Yulu Jia, Sun He, Hongmin Chen, Zhihao Bai, Qi Hou, Shipeng Yan, Ding Zhou, Yiyao Sheng, Zhuo Jiang, Haohan Xu, Haoran Wei, Zhang Zhang, Pengfei Nie, Leqi Zou, Sida Zhao, Liang Xiang, Zherui Liu, Zhe Li, Xiaoying Jia, Jianxi Ye, Xin J...

2024

-

[30]

SWE-bench: Can Language Models Resolve Real-World GitHub Issues?

Carlos E. Jimenez, John Yang, Alexander Wettig, Shunyu Yao, Kexin Pei, Ofir Press, and Karthik Narasimhan. 2024. SWE-bench: Can Language Models Resolve Real-World GitHub Issues?arXiv preprint arXiv:2310.06770(2024)

work page internal anchor Pith review arXiv 2024

-

[31]

Norm Jouppi, George Kurian, Sheng Li, Peter Ma, Rahul Nagarajan, Lifeng Nai, Nishant Patil, Suvinay Subramanian, Andy Swing, Brian Towles, et al. 2023. Tpu v4: An optically reconfigurable supercom- puter for machine learning with hardware support for embeddings. In Proceedings of the 50th annual international symposium on computer architecture. 1–14

2023

-

[32]

Gonzalez, Hao Zhang, and Ion Sto- ica

Woosuk Kwon, Zhuohan Li, Siyuan Zhuang, Ying Sheng, Lianmin Zheng, Cody Hao Yu, Joseph E. Gonzalez, Hao Zhang, and Ion Sto- ica. 2023. Efficient Memory Management for Large Language Model Serving with PagedAttention. InProceedings of the ACM SIGOPS 29th Symposium on Operating Systems Principles

2023

- [33]

-

[34]

Jiamin Li, Hong Xu, Yibo Zhu, Zherui Liu, Chuanxiong Guo, and Cong Wang. 2023. Lyra: Elastic Scheduling for Deep Learning Clusters. In Proceedings of the Eighteenth European Conference on Computer Systems. Association for Computing Machinery, New York, NY, USA, 835–850. https://doi.org/10.1145/3552326.3587445

- [35]

-

[36]

Zhuohan Li, Lianmin Zheng, Yinmin Zhong, Vincent Liu, Ying Sheng, Xin Jin, Yanping Huang, Zhifeng Chen, Hao Zhang, Joseph E Gon- zalez, et al. 2023. {AlpaServe}: Statistical multiplexing with model parallelism for deep learning serving. In17th USENIX Symposium on Operating Systems Design and Implementation (OSDI 23). 663–679

2023

-

[37]

Hwijoon Lim, Juncheol Ye, Sangeetha Abdu Jyothi, and Dongsu Han

-

[38]

InProceedings of the ACM SIGCOMM 2024 Con- ference

Accelerating model training in multi-cluster environments with consumer-grade gpus. InProceedings of the ACM SIGCOMM 2024 Con- ference. 707–720

2024

- [39]

-

[40]

Han Lu, Zichen Liu, Shaopan Xiong, Yancheng He, Wei Gao, Yanan Wu, Weixun Wang, Jiashun Liu, Yang Li, Haizhou Zhao, Ju Huang, Siran Yang, Xiaoyang Li, Yijia Luo, Zihe Liu, Ling Pan, Junchi Yan, Wei Wang, Wenbo Su, Jiamang Wang, Lin Qu, and Bo Zheng. 2025. Part II: ROLL Flash – Accelerating RLVR and Agentic Training with Asynchrony.arXiv preprint arXiv:251...

- [41]

-

[42]

Michael Luo, Sijun Tan, Roy Huang, Ameen Patel, Alpay Ariyak, Qingyang Wu, Xiaoxiang Shi, Rachel Xin, Colin Cai, Maurice We- ber, Ce Zhang, Li Erran Li, Raluca Ada Popa, and Ion Stoica. 2025. DeepCoder: A Fully Open-Source 14B Coder at O3-mini Level.https: 15 Conference’17, July 2017, Washington, DC, USA Gao, Zhao et al. //pretty-radio-b75.notion.site/Dee...

2025

- [43]

-

[44]

Philipp Moritz, Robert Nishihara, Stephanie Wang, Alexey Tumanov, Richard Liaw, Eric Liang, Melih Elibol, Zongheng Yang, William Paul, Michael I Jordan, et al. 2018. Ray: A distributed framework for emerg- ing {AI} applications. In13th USENIX symposium on operating systems design and implementation (OSDI 18). 561–577

2018

-

[45]

Aashiq Muhamed, Oscar Li, David Woodruff, Mona Diab, and Virginia Smith. 2024. Grass: Compute efficient low-memory llm training with structured sparse gradients. InProceedings of the 2024 Conference on Empirical Methods in Natural Language Processing. 14978–15003

2024

-

[46]

NVIDIA Corporation. 2024. NVIDIA Multi-Process Service (MPS) Documentation.https://docs.nvidia.com/deploy/mps/index.html. (2024)

2024

-

[47]

OpenPipe. 2025. Serverless RL. (2025).https://openpipe.ai/blog/serve rless-rl

2025

-

[48]

Pratyush Patel, Esha Choukse, Chaojie Zhang, Aashaka Shah, Íñigo Goiri, Saeed Maleki, and Ricardo Bianchini. 2025. Splitwise: Efficient Generative LLM Inference Using Phase Splitting. InProceedings of the 51st Annual International Symposium on Computer Architecture (ISCA ’24). IEEE Press, 118–132.https://doi.org/10.1109/ISCA59077.2024.000 19

-

[49]

Gon- zalez, Ion Stoica, and Harry Xu

Yifan Qiao, Shu Anzai, Shan Yu, Haoran Ma, Shuo Yang, Yang Wang, Miryung Kim, Yongji Wu, Yang Zhou, Jiarong Xing, Joseph E. Gon- zalez, Ion Stoica, and Harry Xu. 2025. ConServe: Fine-Grained GPU Harvesting for LLM Online and Offline Co-Serving.arXiv preprint arXiv:2410.01228(2025)

-

[50]

Ruoyu Qin, Weiran He, Weixiao Huang, Yangkun Zhang, Yikai Zhao, Bo Pang, Xinran Xu, Yingdi Shan, Yongwei Wu, and Mingxing Zhang

-

[51]

Seer: Online Context Learning for Fast Synchronous LLM Rein- forcement Learning.arXiv preprint arXiv:2511.14617(2025)

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[52]

Ruoyu Qin, Zheming Li, Weiran He, Jialei Cui, Heyi Tang, Feng Ren, Teng Ma, Shangming Cai, Yineng Zhang, Mingxing Zhang, et al. 2024. Mooncake: A kvcache-centric disaggregated architecture for llm serv- ing.ACM Transactions on Storage(2024)

2024

-

[53]

Haoran Qiu, Anish Biswas, Zihan Zhao, Jayashree Mohan, Alind Khare, Esha Choukse, Íñigo Goiri, Zeyu Zhang, Haiying Shen, Chetan Bansal, Ramachandran Ramjee, and Rodrigo Fonseca. 2025. ModServe: Modality- and Stage-Aware Resource Disaggregation for Scalable Mul- timodal Model Serving. InProceedings of the 2025 ACM Symposium on Cloud Computing (SoCC 2025). ...

2025

- [54]

- [55]

- [56]

-

[57]

SGLang Team. 2025. SGLang: Fast Serving Framework for Large Language Models.https://github.com/sgl-project/sglang. (2025). Version 0.4

2025

-

[58]

Zelei Shao, Vikranth Srivatsa, Sanjana Srivastava, Qingyang Wu, Al- pay Ariyak, Xiaoxia Wu, Ameen Patel, Jue Wang, Percy Liang, Tri Dao, Ce Zhang, Yiying Zhang, Ben Athiwaratkun, Chenfeng Xu, and Junx- iong Wang. 2025. Beat the long tail: Distribution-Aware Speculative Decoding for RL Training.arXiv preprint arXiv:2511.13841(2025)

-

[59]

Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Y Wu, et al. 2024. Deepseekmath: Pushing the limits of mathematical reasoning in open language models.arXiv preprint arXiv:2402.03300(2024)

work page internal anchor Pith review arXiv 2024

- [60]

-

[61]

Guangming Sheng, Chi Zhang, Zilingfeng Ye, Xibin Wu, Wang Zhang, Ru Zhang, Yanghua Peng, Haibin Lin, and Chuan Wu. 2024. Hy- bridFlow: A Flexible and Efficient RLHF Framework.arXiv preprint arXiv:2409.19256(2024)

work page internal anchor Pith review arXiv 2024

-

[62]

Guangming Sheng, Chi Zhang, Zilingfeng Ye, Xibin Wu, Wang Zhang, Ru Zhang, Yanghua Peng, Haibin Lin, and Chuan Wu. 2024. verl: Volcano Engine Reinforcement Learning for LLM.https://github.com /volcengine/verl. (2024)

2024

-

[63]

Mohammad Shoeybi, Mostofa Patwary, Raul Puri, Patrick LeGresley, Jared Casper, and Bryan Catanzaro. 2019. Megatron-lm: Training multi- billion parameter language models using model parallelism.arXiv preprint arXiv:1909.08053(2019)

work page internal anchor Pith review arXiv 2019

-

[64]

Mohit Shridhar, Xingdi Yuan, Marc-Alexandre Côté, Yonatan Bisk, Adam Trischler, and Matthew Hausknecht. 2021. ALFWorld: Aligning Text and Embodied Environments for Interactive Learning.arXiv preprint arXiv:2010.03768(2021)

work page internal anchor Pith review arXiv 2021

-

[65]

Joykirat Singh, Raghav Magazine, Yash Pandya, and Akshay Nambi

- [66]

-

[67]

Jovan Stojkovic, Chaojie Zhang, Íñigo Goiri, Josep Torrellas, and Esha Choukse. 2025. Dynamollm: Designing llm inference clusters for per- formance and energy efficiency. In2025 IEEE International Symposium on High Performance Computer Architecture (HPCA). IEEE, 1348–1362

2025

-

[68]

The Terminal-Bench Team. 2025. Terminal-Bench: A Benchmark for AI Agents in Terminal Environments. (2025).https://github.com/laude- institute/terminal-bench

2025

-

[69]

Thinking Machines AI. 2025. Tinker.https://thinkingmachines.ai/ti nker/. (2025). Accessed: 2026-02

2025

-

[70]

Jiahao Wang, Jinbo Han, Xingda Wei, Sijie Shen, Dingyan Zhang, Chenguang Fang, Rong Chen, Wenyuan Yu, and Haibo Chen. 2025. KVCache cache in the wild: characterizing and optimizing KVCache cache at a large cloud provider. InProceedings of the 2025 USENIX Conference on Usenix Annual Technical Conference (USENIX ATC ’25). USENIX Association, USA, Article 28...

2025

-

[71]

Weixun Wang, Shaopan Xiong, Gengru Chen, Wei Gao, Sheng Guo, Yancheng He, Ju Huang, Jiaheng Liu, Zhendong Li, Xiaoyang Li, Zichen Liu, Haizhou Zhao, Dakai An, Lunxi Cao, Qiyang Cao, Wanxi Deng, Feilei Du, Yiliang Gu, Jiahe Li, Xiang Li, Mingjie Liu, Yijia Luo, Zihe Liu, Yadao Wang, Pei Wang, Tianyuan Wu, Yanan Wu, Yuheng Zhao, Shuaibing Zhao, Jin Yang, Si...

-

[72]

Weixun Wang, XiaoXiao Xu, Wanhe An, Fangwen Dai, Wei Gao, Yancheng He, Ju Huang, Qiang Ji, Hanqi Jin, Xiaoyang Li, Yang Li, Zhongwen Li, Shirong Lin, Jiashun Liu, Zenan Liu, Tao Luo, Dilxat Muhtar, Yuanbin Qu, Jiaqiang Shi, Qinghui Sun, Yingshui Tan, Hao Tang, Runze Wang, Yi Wang, Zhaoguo Wang, Yanan Wu, Shaopan Xiong, Binchen Xu, Xander Xu, Yuchi Xu, Qip...

-

[73]

Yuxin Wang, Yuhan Chen, Zeyu Li, Xueze Kang, Yuchu Fang, Yeju Zhou, Yang Zheng, Zhenheng Tang, Xin He, Rui Guo, Xin Wang, Qiang Wang, Amelie Chi Zhou, and Xiaowen Chu. 2025. BurstGPT: A Real- world Workload Dataset to Optimize LLM Serving Systems.arXiv preprint arXiv:2401.17644(2025)

-

[74]

Zhuang Wang, Zhaozhuo Xu, Jingyi Xi, Yuke Wang, Anshumali Shri- vastava, and TS Eugene Ng. 2025. {ZEN}: Empowering Distributed Training with Sparsity-driven Data Synchronization. In19th USENIX Symposium on Operating Systems Design and Implementation (OSDI 25). 537–556

2025

-

[75]

Junde Wu, Jiayuan Zhu, Yuyuan Liu, Min Xu, and Yueming Jin. 2025. Agentic reasoning: A streamlined framework for enhancing llm rea- soning with agentic tools. InProceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers). 28489–28503

2025

-

[76]

Tianyuan Wu, Lunxi Cao, Yining Wei, Wei Gao, Yuheng Zhao, Dakai An, Shaopan Xiong, Zhiqiang Lv, Ju Huang, Siran Yang, Yinghao Yu, Jiamang Wang, Lin Qu, and Wei Wang. 2025. RollMux: Phase- Level Multiplexing for Disaggregated RL Post-Training.arXiv preprint arXiv:2512.11306(2025)

-

[77]

RLBoost: Harvesting Preemptible Resources for Cost-Efficient Reinforcement Learning on LLMs

Yongji Wu, Xueshen Liu, Haizhong Zheng, Juncheng Gu, Beidi Chen, Z. Morley Mao, Arvind Krishnamurthy, and Ion Stoica. 2025. RLBoost: Harvesting Preemptible Resources for Cost-Efficient Reinforcement Learning on LLMs.arXiv preprint arXiv:2510.19225(2025)

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[78]

Bingquan Xia, Bowen Shen, Cici, Dawei Zhu, Di Zhang, Gang Wang, Hailin Zhang, Huaqiu Liu, Jiebao Xiao, Jinhao Dong, Liang Zhao, Peid- ian Li, Peng Wang, Shihua Yu, Shimao Chen, Weikun Wang, Wenhan Ma, Xiangwei Deng, Yi Huang, Yifan Song, Zihan Jiang, Bowen Ye, Can Cai, Chenhong He, Dong Zhang, Duo Zhang, Guoan Wang, Hao Tian, Haochen Zhao, Heng Qu, Hongsh...

-

[79]

Yuxing Xiang, Xue Li, Kun Qian, Yufan Yang, Diwen Zhu, Wenyuan Yu, Ennan Zhai, Xuanzhe Liu, Xin Jin, and Jingren Zhou. 2025. Aegaeon: Effective GPU pooling for concurrent LLM serving on the market. In Proceedings of the ACM SIGOPS 31st Symposium on Operating Systems Principles. 1030–1045

2025

-

[80]

Wencong Xiao, Shiru Ren, Yong Li, Yang Zhang, Pengyang Hou, Zhi Li, Yihui Feng, Wei Lin, and Yangqing Jia. 2020. AntMan: Dynamic scaling on GPU clusters for deep learning. InUSENIX OSDI

2020

- [81]

-

[82]

An Yang, Anfeng Li, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chang Gao, Chengen Huang, Chenxu Lv, Chujie Zheng, Dayiheng Liu, Fan Zhou, Fei Huang, Feng Hu, Hao Ge, Haoran Wei, Huan Lin, Jialong Tang, Jian Yang, Jianhong Tu, Jianwei Zhang, Jianxin Yang, Jiaxi Yang, Jing Zhou, Jingren Zhou, Junyang Lin, Kai Dang, Keqin Bao, Kexin Yang, ...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.