A Geometry-Aware Residual Correction of Hagan's SABR Implied Volatility Formula

Pith reviewed 2026-05-08 02:59 UTC · model grok-4.3

The pith

A neural network trained on the residual error of Hagan's SABR formula, supplied with geometry-aware inputs from the model's SDEs, improves implied volatility accuracy and robustness.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

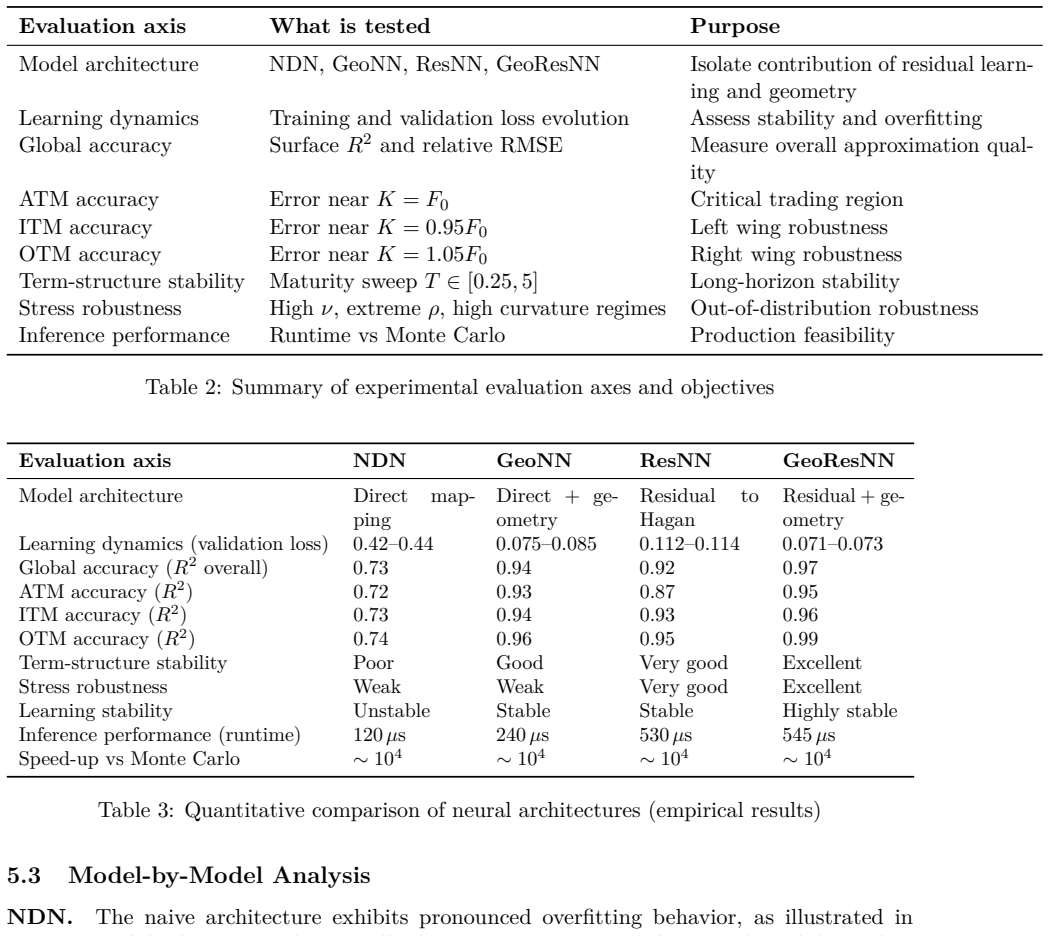

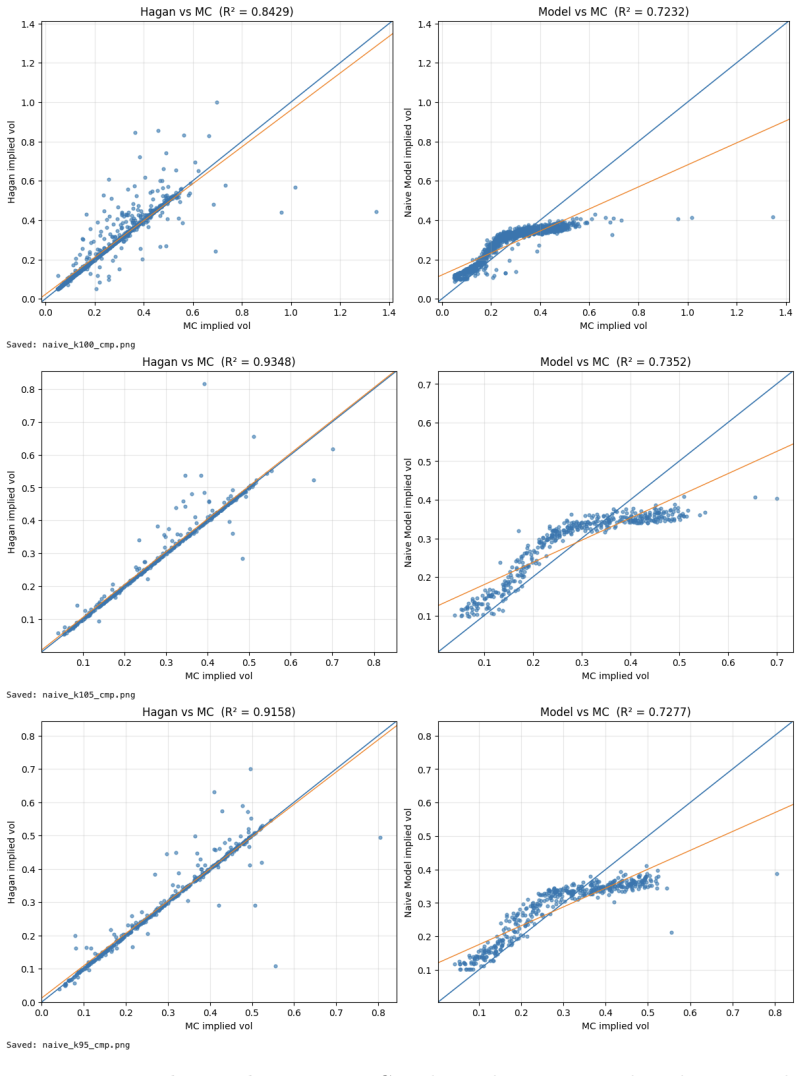

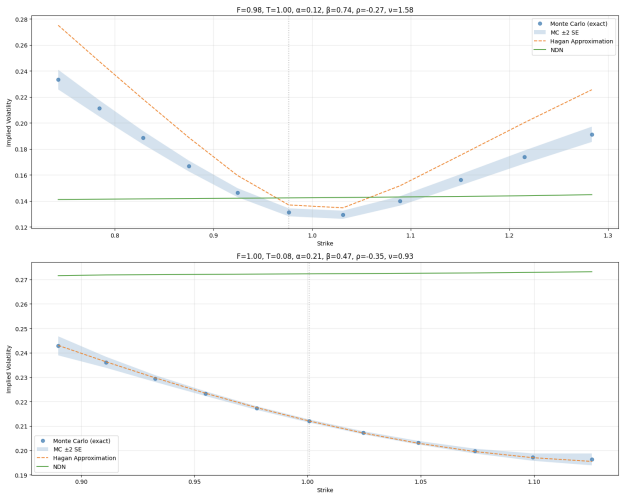

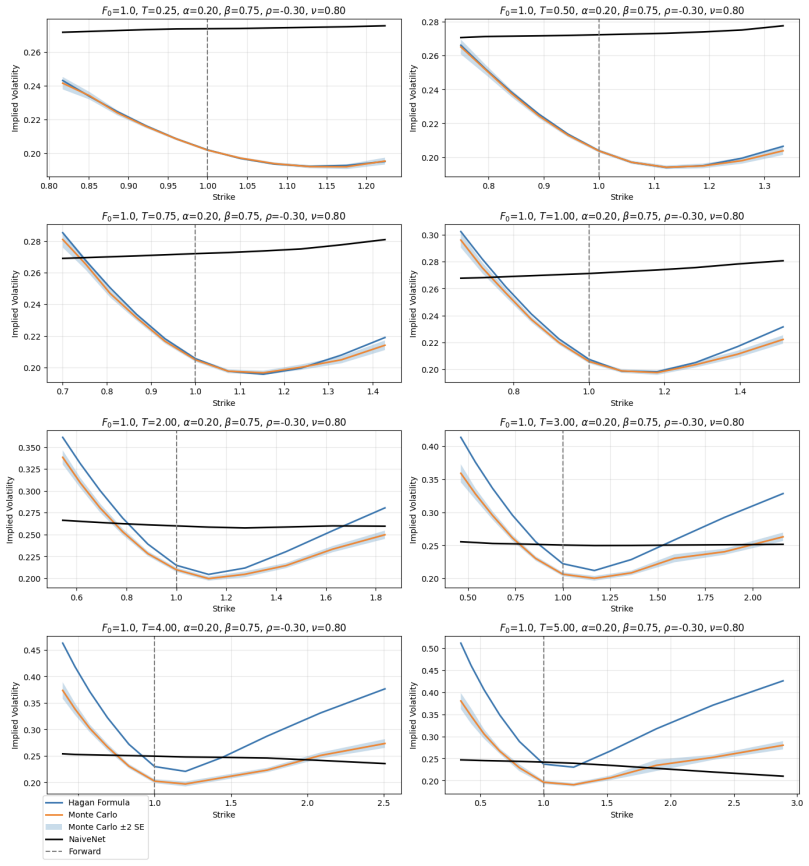

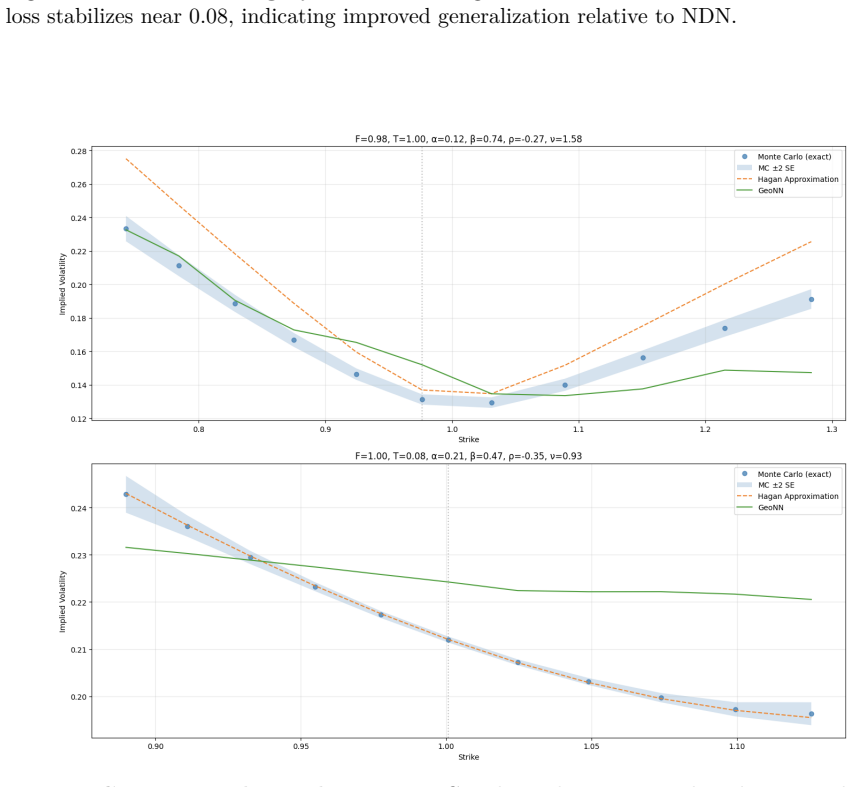

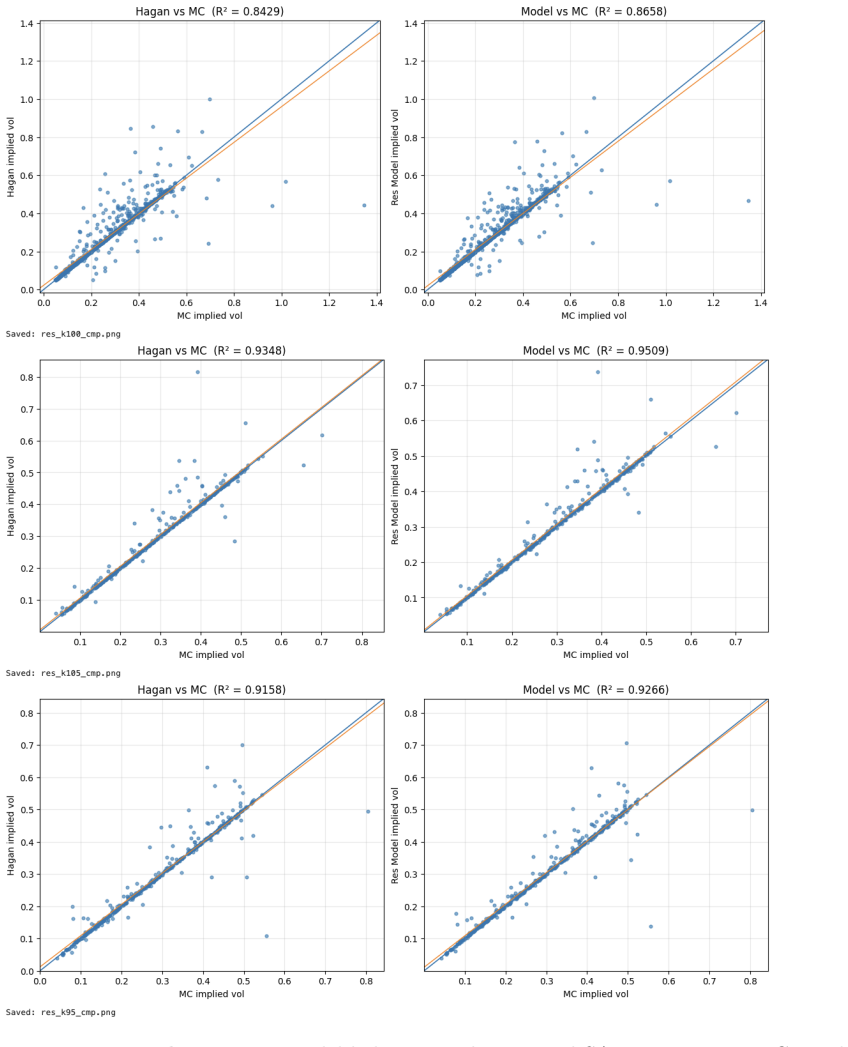

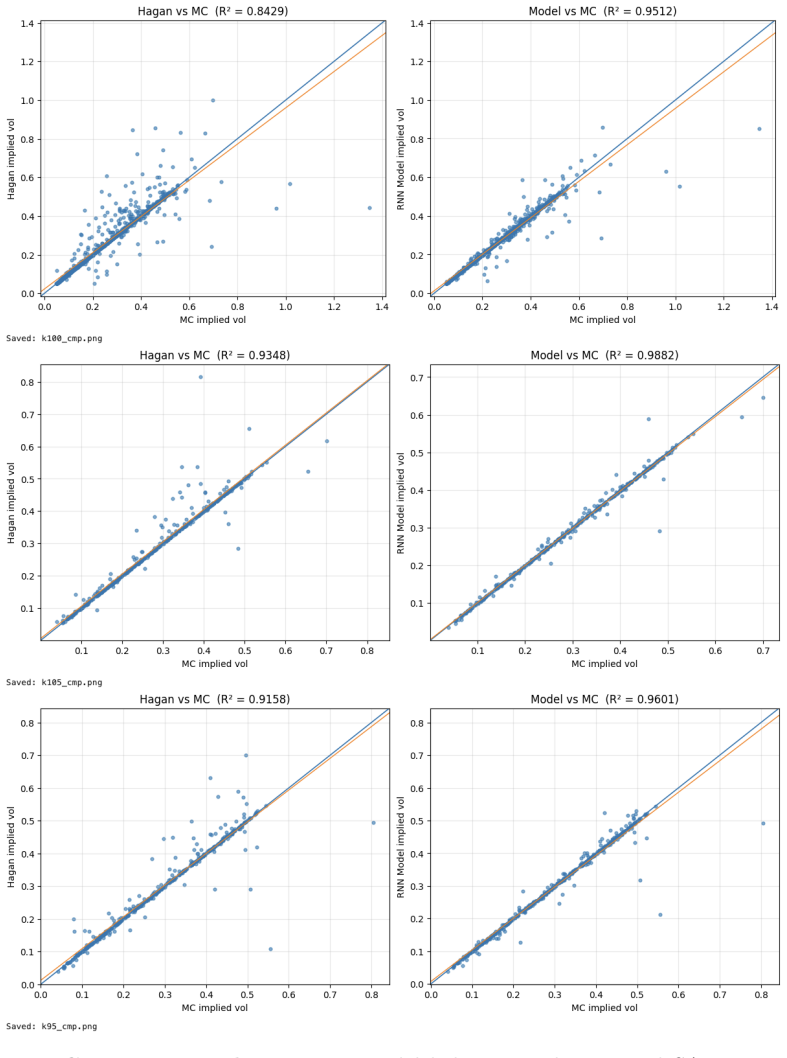

The central claim is that augmenting the input space of a neural network with geometry-aware features derived from the SABR SDEs and training the network to predict the residual between exact implied volatility and Hagan's closed-form expression produces a correction that is both more accurate and more robust than either the standalone analytical formula or a conventional neural network trained directly on volatility values.

What carries the argument

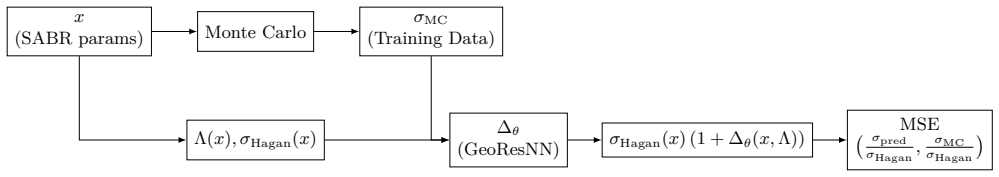

The geometry-aware residual correction: variables that reflect intrinsic properties of the SABR stochastic differential equations are concatenated to the network inputs, and the training target is the difference between the exact implied volatility and Hagan's approximation.
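In symbols, the construction can be written as below. The notation is an assumption made for illustration and is not taken from the paper: $x$ collects the SABR inputs, $g(x)$ stands for the geometry-aware features (which this page does not define), and $\mathcal{N}_\theta$ is the network with weights $\theta$.

```latex
% Assumed notation: x = (F, K, T, \alpha, \beta, \rho, \nu),
% g(x) = geometry-aware features, \mathcal{N}_\theta = neural network.
\[
\begin{aligned}
r(x) &= \sigma_{\mathrm{exact}}(x) - \sigma_{\mathrm{Hagan}}(x)
  && \text{(training target)} \\
\hat{\sigma}(x) &= \sigma_{\mathrm{Hagan}}(x) + \mathcal{N}_\theta\bigl(x,\, g(x)\bigr)
  && \text{(corrected volatility)}
\end{aligned}
\]
```

If the network output is zero, the corrected volatility reduces to Hagan's formula, which is the sense in which the analytical backbone is preserved.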

If this is right

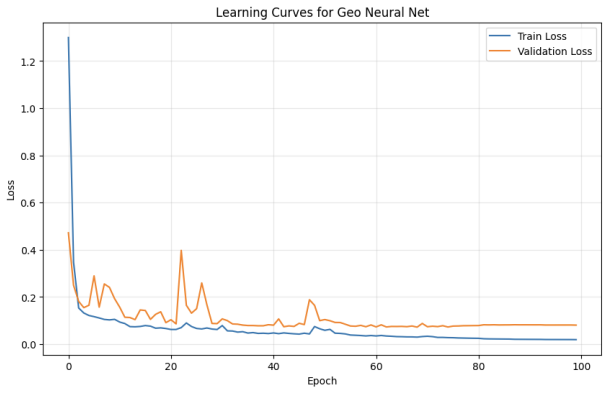

- The hybrid model delivers higher accuracy and robustness than either Hagan's formula alone or standard neural networks, across both realistic and stressed parameter domains.

- The correction remains lightweight and structurally consistent with the SABR model, preserving the analytical backbone.

- The framework supports real-time pricing and calibration tasks in practical trading environments.

Where Pith is reading between the lines

- The same residual-correction pattern could be applied to other asymptotic expansions that leave systematic higher-order errors.

- Training directly on observed market quotes instead of simulated paths might further align the correction with live conditions.

- Systematic testing on parameter values deliberately chosen to violate the geometry assumptions would map the practical limits of the approach.

Load-bearing premise

The geometry-aware variables extracted from the SABR stochastic equations encode the higher-order effects missing from the asymptotic expansion well enough for the residual network to generalize reliably outside the training parameter ranges.

What would settle it

If, on parameter sets drawn from stressed regimes lying outside the training distribution, the hybrid model's absolute error in implied volatility exceeds the error of the uncorrected Hagan formula, the claim of improved robustness would be falsified.
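A minimal sketch of that check, assuming the exact, Hagan, and hybrid implied volatilities have already been computed on an out-of-distribution stressed set. The function names and the use of mean absolute error as the aggregate are assumptions; the page does not say how the paper aggregates errors.

```python
def mean_abs_error(pred, exact):
    """Mean absolute error between predicted and exact implied volatilities."""
    return sum(abs(p - e) for p, e in zip(pred, exact)) / len(exact)


def max_abs_error(pred, exact):
    """Worst-case absolute error over the evaluation set."""
    return max(abs(p - e) for p, e in zip(pred, exact))


def robustness_check(exact, hagan, hybrid):
    """Compare hybrid and Hagan errors on a stressed, held-out set.

    'falsified' is True when the hybrid's mean absolute error exceeds
    that of the uncorrected Hagan formula (aggregate choice is an assumption).
    """
    report = {
        "hagan_mae": mean_abs_error(hagan, exact),
        "hybrid_mae": mean_abs_error(hybrid, exact),
        "hagan_max": max_abs_error(hagan, exact),
        "hybrid_max": max_abs_error(hybrid, exact),
    }
    report["falsified"] = report["hybrid_mae"] > report["hagan_mae"]
    return report


if __name__ == "__main__":
    # Toy numbers for illustration only; not results from the paper.
    exact = [0.20, 0.25, 0.30]
    hagan = [0.21, 0.27, 0.33]
    hybrid = [0.201, 0.251, 0.302]
    print(robustness_check(exact, hagan, hybrid))
```

Reporting the maximum error alongside the mean is a design choice: a correction can lower the average error while still failing badly at a few stressed points.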

Original abstract

This paper proposes a hybrid methodology to improve the approximation of SABR (Stochastic Alpha Beta Rho) implied volatility by combining analytical structure with machine learning. The approach augments the neural-network input representation with geometric features derived from the stochastic differential equations of the SABR model. Unlike approaches that fully replace analytical formulas with black-box models, the proposed framework preserves the analytical backbone of the model. The hybridization operates along two complementary dimensions. First, geometry-aware variables reflecting intrinsic properties of the SABR dynamics are used as structured inputs to the network. Second, the neural network is trained to learn the residual error relative to Hagan's closed-form approximation rather than implied volatility directly. The resulting model acts as a structured residual correction to the analytical formula, retaining interpretability while capturing higher-order effects that are not included in the asymptotic expansion. Numerical experiments conducted over realistic parameter domains, as well as stressed environments, show that the method improves accuracy and robustness compared with both analytical approximations and standard neural-network approaches. Because the correction remains lightweight and structurally consistent with the underlying model, the framework is well suited for real-time pricing and calibration in practical trading environments.
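For reference, the baseline that the residual is measured against can be sketched as follows. This is the lognormal implied-volatility formula as commonly stated from Hagan et al. (2002). The page does not say which variant the paper uses, so that choice, the function name `hagan_lognormal_vol`, and the small-`z` cutoff are all assumptions.

```python
import math


def hagan_lognormal_vol(f, k, t, alpha, beta, rho, nu):
    """Hagan et al. (2002) lognormal SABR implied volatility (common form).

    f: forward, k: strike, t: expiry in years,
    alpha: initial volatility, beta: elasticity,
    rho: correlation, nu: volatility of volatility.
    """
    one_m_b = 1.0 - beta
    fk_pow = (f * k) ** (one_m_b / 2.0)  # (F K)^((1 - beta) / 2)
    log_fk = math.log(f / k)

    # First-order-in-time correction term.
    time_corr = 1.0 + (
        one_m_b ** 2 / 24.0 * alpha ** 2 / fk_pow ** 2
        + 0.25 * rho * beta * nu * alpha / fk_pow
        + (2.0 - 3.0 * rho ** 2) / 24.0 * nu ** 2
    ) * t

    # Denominator expansion in log-moneyness.
    denom = fk_pow * (
        1.0
        + one_m_b ** 2 / 24.0 * log_fk ** 2
        + one_m_b ** 4 / 1920.0 * log_fk ** 4
    )

    # z / x(z), which tends to 1 as z -> 0 (at the money or nu = 0).
    z = nu / alpha * fk_pow * log_fk
    if abs(z) < 1e-8:  # assumed cutoff to avoid 0 / 0
        z_over_x = 1.0
    else:
        x = math.log(
            (math.sqrt(1.0 - 2.0 * rho * z + z * z) + z - rho) / (1.0 - rho)
        )
        z_over_x = z / x

    return alpha / denom * z_over_x * time_corr


if __name__ == "__main__":
    # Illustrative parameters only.
    print(hagan_lognormal_vol(100.0, 110.0, 1.0, 0.2, 1.0, 0.0, 0.3))
```

The residual that the network is trained on would then be an exact (for example, numerically computed) implied volatility minus the value this function returns for the same inputs.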

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. This paper presents a hybrid methodology for approximating the implied volatility in the SABR model. It retains Hagan's closed-form asymptotic expansion as the base and employs a neural network to learn and correct the residual error. The network inputs are augmented with geometry-aware features extracted from the SABR stochastic differential equations. The authors claim that this structured residual correction improves accuracy and robustness relative to both the standalone analytical formula and conventional neural network approaches, as evidenced by experiments across realistic and stressed parameter domains. The framework is positioned as suitable for real-time pricing and calibration due to its lightweight nature and retention of analytical structure.

Significance. Should the empirical results be substantiated with detailed metrics and reproducible protocols, this contribution would be significant for the field of computational finance. It exemplifies a principled way to integrate domain knowledge from stochastic processes with machine learning, avoiding the pitfalls of fully black-box models. By focusing on residual correction informed by geometric properties of the dynamics, the approach could lead to more reliable and interpretable enhancements to existing pricing formulas, with potential benefits for practitioners in volatility modeling and risk management.

major comments (3)

- Abstract: The assertion that 'Numerical experiments conducted over realistic parameter domains, as well as stressed environments, show that the method improves accuracy and robustness' lacks any accompanying quantitative data, such as error values, tables, or figures with comparisons. This is a load-bearing issue for the central claim, as the improvement cannot be assessed without metrics or baseline results.

- Section 3 (Methodology): The geometry-aware variables are stated to reflect 'intrinsic properties of the SABR dynamics' and are used as structured inputs, but the manuscript does not provide their explicit definitions or how they are derived from the SABR SDEs. This omission prevents verification of whether these features adequately address the higher-order effects absent in Hagan's expansion.

- Numerical Experiments section: There are no details on the training/test splits, the specific computation of geometry features, error bars, data exclusion rules, or out-of-distribution testing in stressed environments. These are necessary to support the generalization claims.

minor comments (1)

- Abstract: The description of the hybrid framework is clear, but adding a brief note on the dimensionality or typical ranges of the geometry-aware inputs would aid reader intuition without altering the technical content.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed feedback on our manuscript. The comments identify key areas where additional clarity and transparency will strengthen the presentation of our hybrid SABR approximation method. We have revised the manuscript accordingly and provide point-by-point responses below.

- Referee: Abstract: The assertion that 'Numerical experiments conducted over realistic parameter domains, as well as stressed environments, show that the method improves accuracy and robustness' lacks any accompanying quantitative data, such as error values, tables, or figures with comparisons. This is a load-bearing issue for the central claim, as the improvement cannot be assessed without metrics or baseline results.

  Authors: We agree that the abstract claim would be more compelling with explicit quantitative support. Although the Numerical Experiments section contains the relevant error metrics, tables, and baseline comparisons, we have revised the abstract to summarize key quantitative findings, including average error reductions relative to Hagan's formula and standard neural-network baselines across both realistic and stressed parameter regimes, with references to the supporting tables and figures.

  Revision: yes

-

Referee: Section 3 (Methodology): The geometry-aware variables are stated to reflect 'intrinsic properties of the SABR dynamics' and are used as structured inputs, but the manuscript does not provide their explicit definitions or how they are derived from the SABR SDEs. This omission prevents verification of whether these features adequately address the higher-order effects absent in Hagan's expansion.

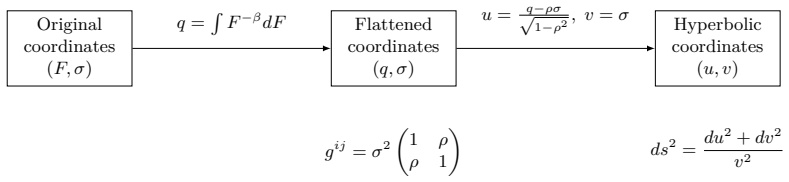

Authors: We appreciate the referee highlighting this omission. The geometry-aware inputs are derived from the SABR SDEs via the local volatility expression, the correlation-driven drift terms, and selected higher-order corrections arising from Ito's lemma applied to the forward price and volatility processes. In the revised Section 3 we now supply the explicit mathematical definitions, the step-by-step derivation from the SDEs, and the precise mapping from model parameters and state variables to each network input. This addition enables direct verification of how the features target effects beyond the leading-order terms retained in Hagan's expansion. revision: yes

- Referee: Numerical Experiments section: There are no details on the training/test splits, the specific computation of geometry features, error bars, data exclusion rules, or out-of-distribution testing in stressed environments. These are necessary to support the generalization claims.

  Authors: We acknowledge that greater experimental detail is required for reproducibility and to substantiate the generalization claims. The revised Numerical Experiments section now includes: (i) the precise training/test split ratios and stratified sampling procedure over the parameter domains; (ii) explicit formulas together with pseudocode for computing each geometry-aware feature from the SABR state and parameters; (iii) error bars obtained from five independent training runs with distinct random seeds; (iv) data exclusion rules (e.g., removal of samples yielding negative forwards or numerically unstable volatility paths); and (v) a dedicated out-of-distribution evaluation protocol using stressed parameter sets (high volatility-of-volatility, extreme correlations, and low forward prices) withheld from training. These additions directly address the concerns raised.

  Revision: yes
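Items (iii) to (v) of that response can be sketched roughly as follows. Everything concrete here is hypothetical: the threshold constants, the dictionary keys, and the function names are illustrative stand-ins, since the page gives neither the authors' stress definitions nor their exclusion rules in detail.

```python
import statistics

# Hypothetical stress thresholds; the authors' actual values are not given.
STRESS_NU = 1.0          # "high volatility-of-volatility"
STRESS_ABS_RHO = 0.9     # "extreme correlations"
STRESS_FORWARD = 0.005   # "low forward prices"


def is_valid(sample):
    """Exclusion rule: drop samples with non-positive forwards."""
    return sample["forward"] > 0.0


def is_stressed(sample):
    """Flag a parameter set as belonging to the stressed regime."""
    return (
        sample["nu"] > STRESS_NU
        or abs(sample["rho"]) > STRESS_ABS_RHO
        or sample["forward"] < STRESS_FORWARD
    )


def split_by_regime(samples):
    """Return (in_distribution, out_of_distribution) lists.

    Stressed samples are withheld from training and used only for
    out-of-distribution evaluation.
    """
    valid = [s for s in samples if is_valid(s)]
    in_dist = [s for s in valid if not is_stressed(s)]
    ood = [s for s in valid if is_stressed(s)]
    return in_dist, ood


def error_bar(per_seed_errors):
    """Mean and sample standard deviation over independent training runs."""
    return statistics.mean(per_seed_errors), statistics.stdev(per_seed_errors)


if __name__ == "__main__":
    # Toy inputs for illustration only; not data from the paper.
    samples = [
        {"forward": 0.03, "nu": 0.4, "rho": -0.3},
        {"forward": 0.03, "nu": 1.5, "rho": -0.3},
        {"forward": -0.01, "nu": 0.4, "rho": 0.0},
        {"forward": 0.001, "nu": 0.4, "rho": 0.0},
    ]
    in_dist, ood = split_by_regime(samples)
    print(len(in_dist), len(ood))
    print(error_bar([1.0, 2.0, 3.0, 4.0, 5.0]))
```

Splitting by regime before any random train/test split keeps stressed parameter sets out of training entirely, which is what makes the stressed evaluation a genuine out-of-distribution test.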

Circularity Check

No significant circularity in hybrid analytical-ML residual correction

The paper defines a hybrid method that takes Hagan's external closed-form SABR implied-volatility approximation as its analytical backbone and trains a neural network to predict only the residual correction, with additional inputs consisting of geometry-aware variables extracted directly from the SABR SDEs. No equation or claim reduces the target output to a quantity defined by the fitted parameters themselves, nor does any load-bearing step rely on a self-citation chain, uniqueness theorem imported from the authors' prior work, or an ansatz smuggled via citation. The improvement claim rests on numerical experiments conducted over stated parameter domains; these experiments are independent empirical checks rather than tautological predictions. The derivation chain therefore remains non-circular.

Axiom & Free-Parameter Ledger

free parameters (1)

- Neural network weights and hyperparameters

axioms (1)

- Domain assumption: SABR dynamics are described by the standard stochastic differential equations.
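For reference, the standard SABR dynamics under the forward measure are usually written as below. The symbol names ($\alpha$ for the initial volatility, $\beta$ for the elasticity, $\rho$ for the correlation, $\nu$ for the volatility of volatility) follow common usage and are assumed to match the paper's.

```latex
\[
\begin{aligned}
dF_t &= \sigma_t F_t^{\beta}\, dW_t, & F_0 &= F, \\
d\sigma_t &= \nu\, \sigma_t\, dZ_t, & \sigma_0 &= \alpha, \\
d\langle W, Z \rangle_t &= \rho\, dt.
\end{aligned}
\]
```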

discussion (0)

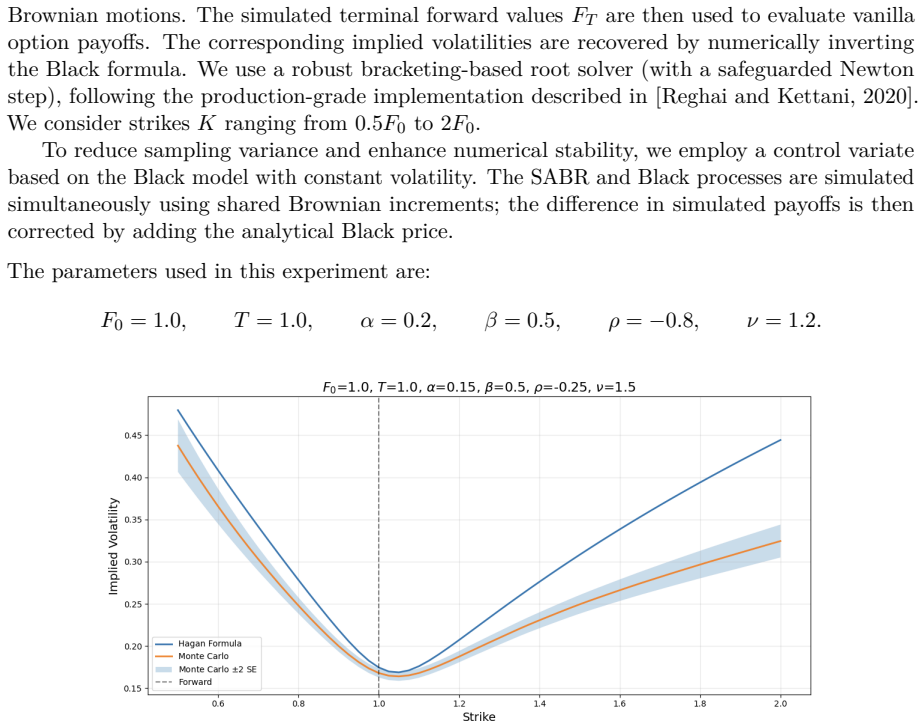

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.