Recognition: no theorem link

A Differentiable Bayesian Relaxation for Latent Partial-Order Inference

Pith reviewed 2026-05-11 01:14 UTC · model grok-4.3

The pith

A differentiable relaxation turns hard partial-order inference into a continuous model that supports gradient-based sampling from linear traces.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

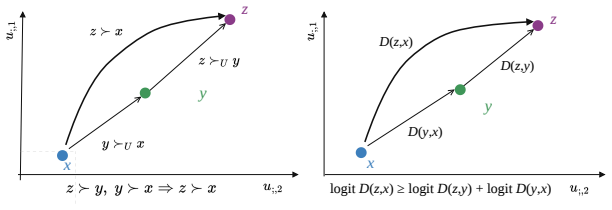

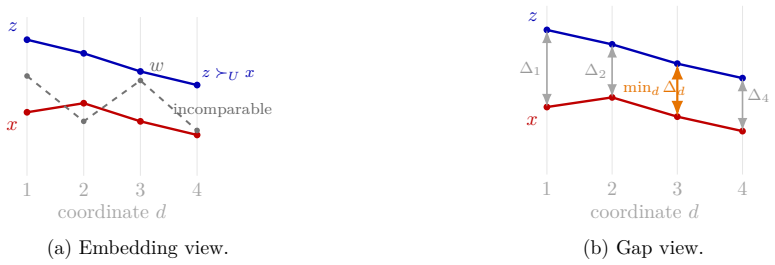

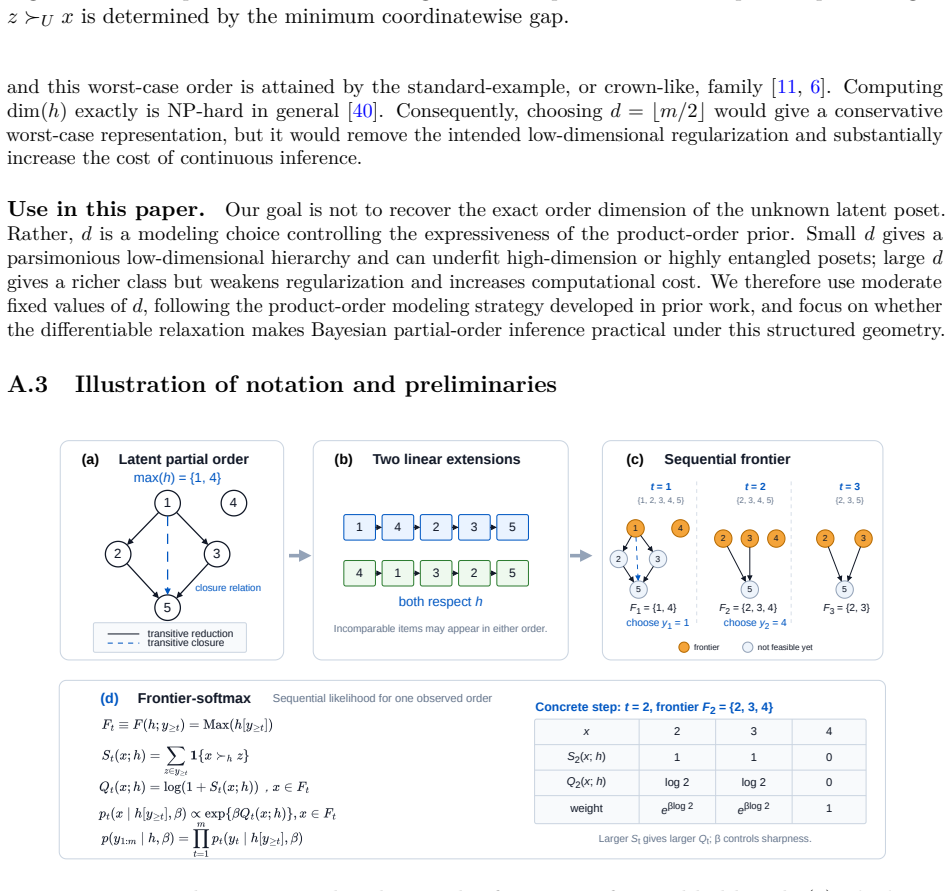

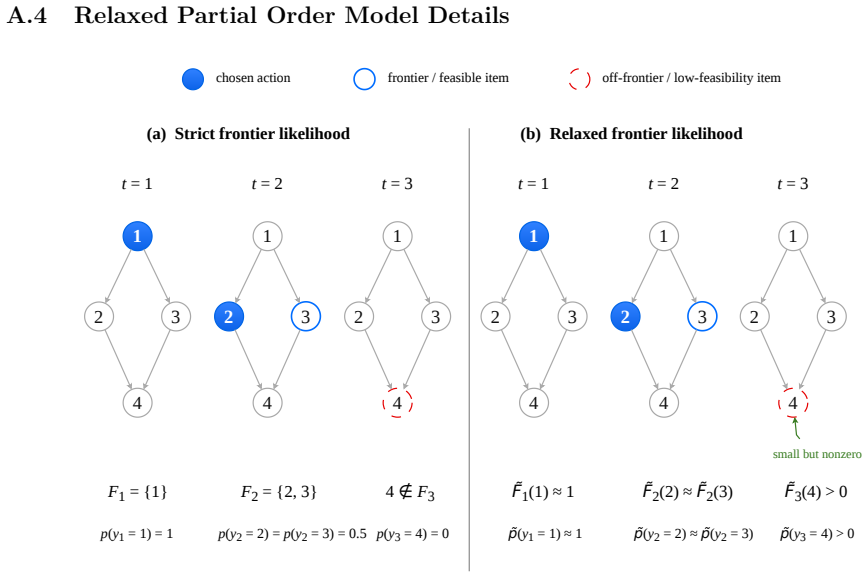

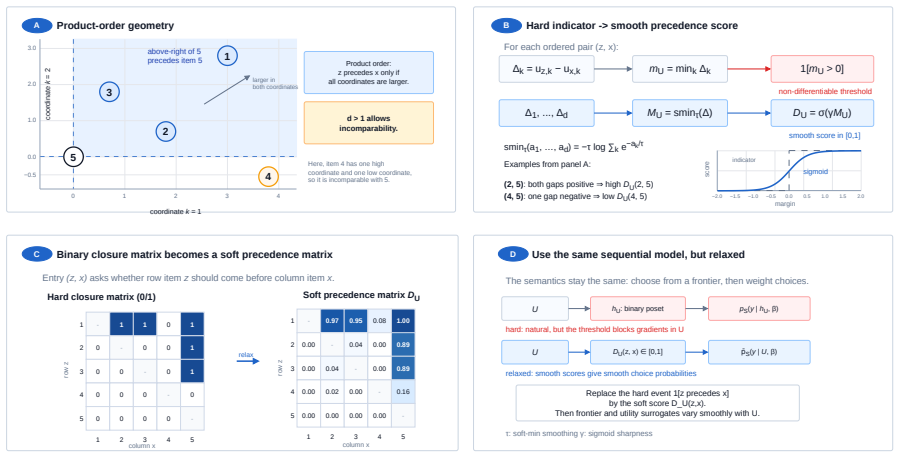

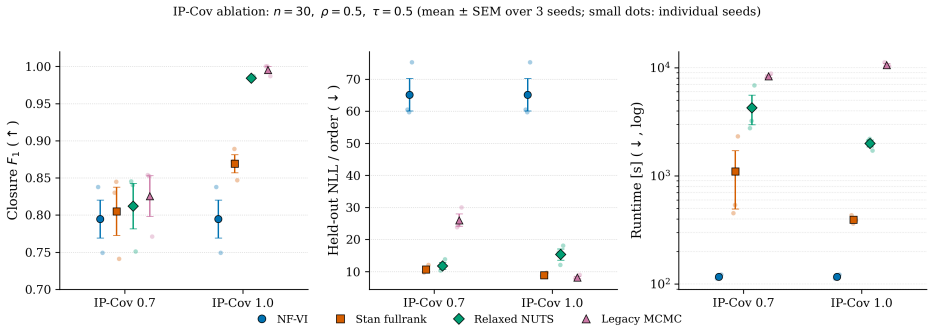

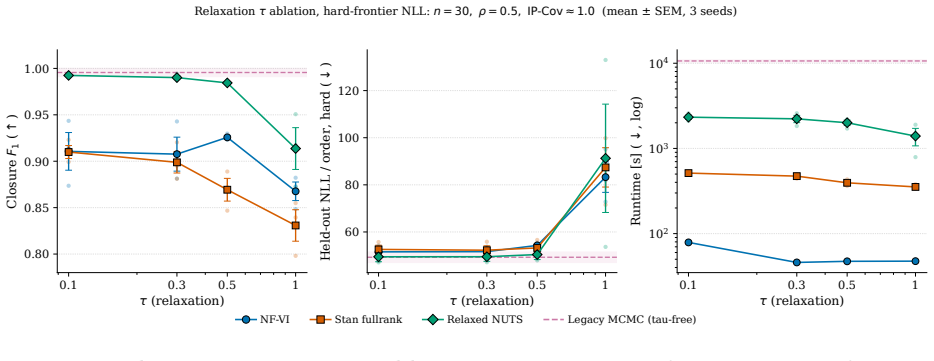

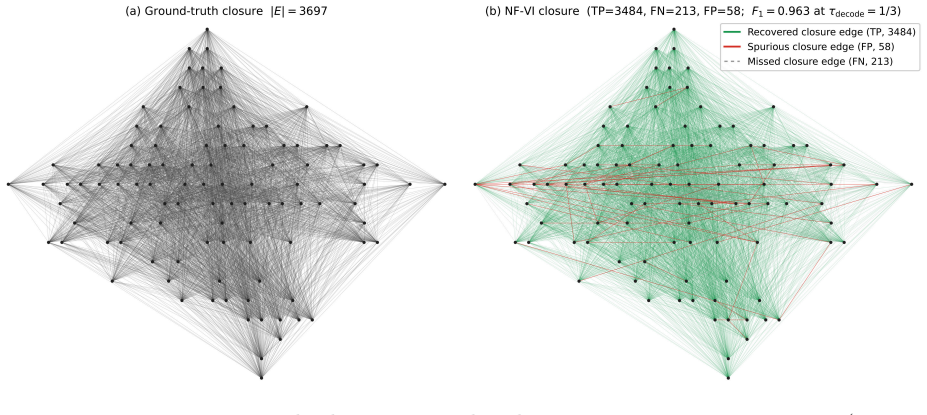

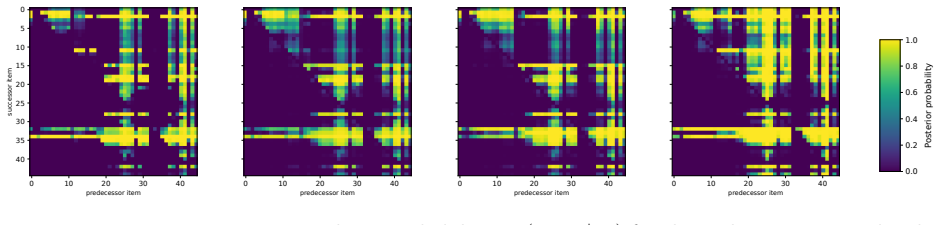

Starting from a hard frontier-constrained model of noisy linear extensions of an underlying partial order, the authors replace discontinuous product-order precedence and binary frontier feasibility with smooth surrogates. This yields a continuous posterior that preserves closure-level partial-order semantics and supports gradient-based MCMC and variational inference. They prove soft transitivity, sharp-limit frontier recovery, and convergence to the hard likelihood.

What carries the argument

The differentiable relaxation that replaces discontinuous precedence and frontier feasibility checks with smooth surrogates inside a frontier-constrained generative model of linear extensions.

If this is right

- The relaxed posterior converges to the exact hard likelihood as the relaxation parameter reaches its limit.

- Soft transitivity is preserved, so inferred relations remain consistent with partial-order axioms.

- Frontier recovery becomes sharp, allowing exact identification of feasible sets in the limit.

- Posterior samples on small problems match those from hard MCMC, while runtime improves on larger instances.

Where Pith is reading between the lines

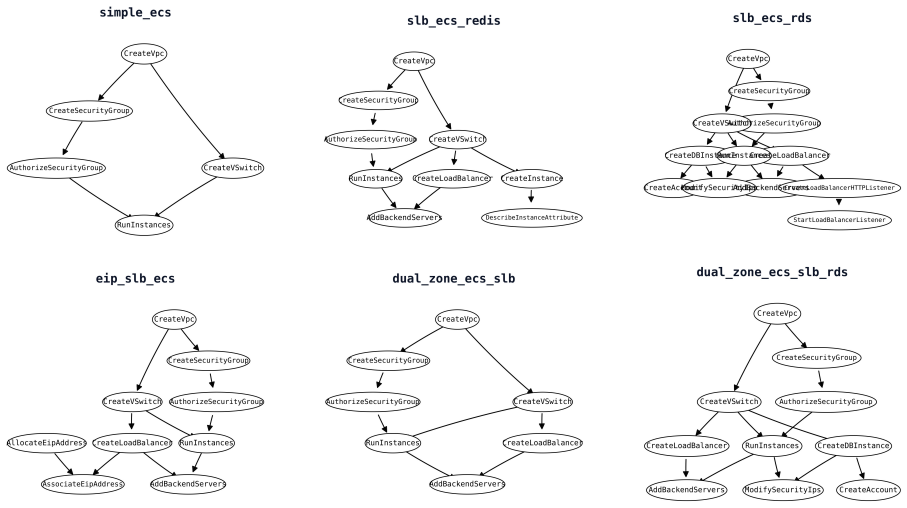

- The same smoothing technique could be tested on task-dependency data from software repositories or educational curricula to surface implicit prerequisites.

- If the relaxation generalizes, it offers a template for making other discrete combinatorial constraints differentiable in Bayesian models of sequences.

- A direct comparison on citation or workflow networks with known ground-truth partial orders would show whether the frontier assumption fits real data better than alternative generative stories.

Load-bearing premise

The observed linear traces are generated as noisy linear extensions of a latent partial order under frontier constraints.

What would settle it

Generate synthetic traces from a partial-order model that violates the frontier constraint and check whether the relaxed posterior still recovers the correct frontiers and relations in the sharp limit.

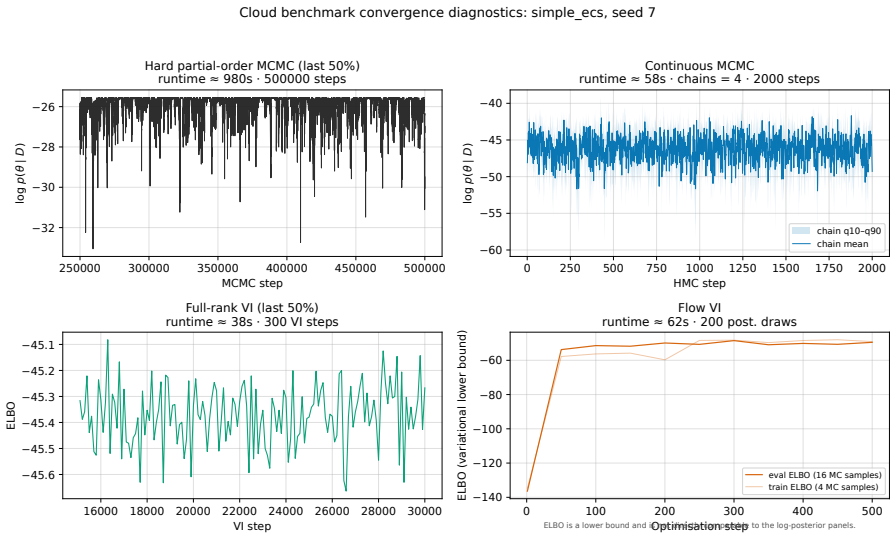

Figures

read the original abstract

Many ranking and agent trace datasets are recorded as linear orders even though their latent structure is only partially ordered. This is especially common in agent and workflow traces, where observed order may reflect arbitrary linearization rather than true prerequisites. We introduce a differentiable relaxation for latent partial-order inference from such traces. Starting from a hard frontier-constrained model of noisy linear extensions, we replace discontinuous product-order precedence and binary frontier feasibility with smooth surrogates, yielding a continuous posterior that preserves closure-level partial-order semantics and supports gradient-based MCMC and variational inference. We prove soft transitivity, sharp-limit frontier recovery, and convergence to the hard likelihood. Experiments on synthetic data, records of social dominance relations, and cloud-agent traces show close posterior fidelity to hard MCMC on small instances and improved runtime--accuracy trade-offs on larger problems.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

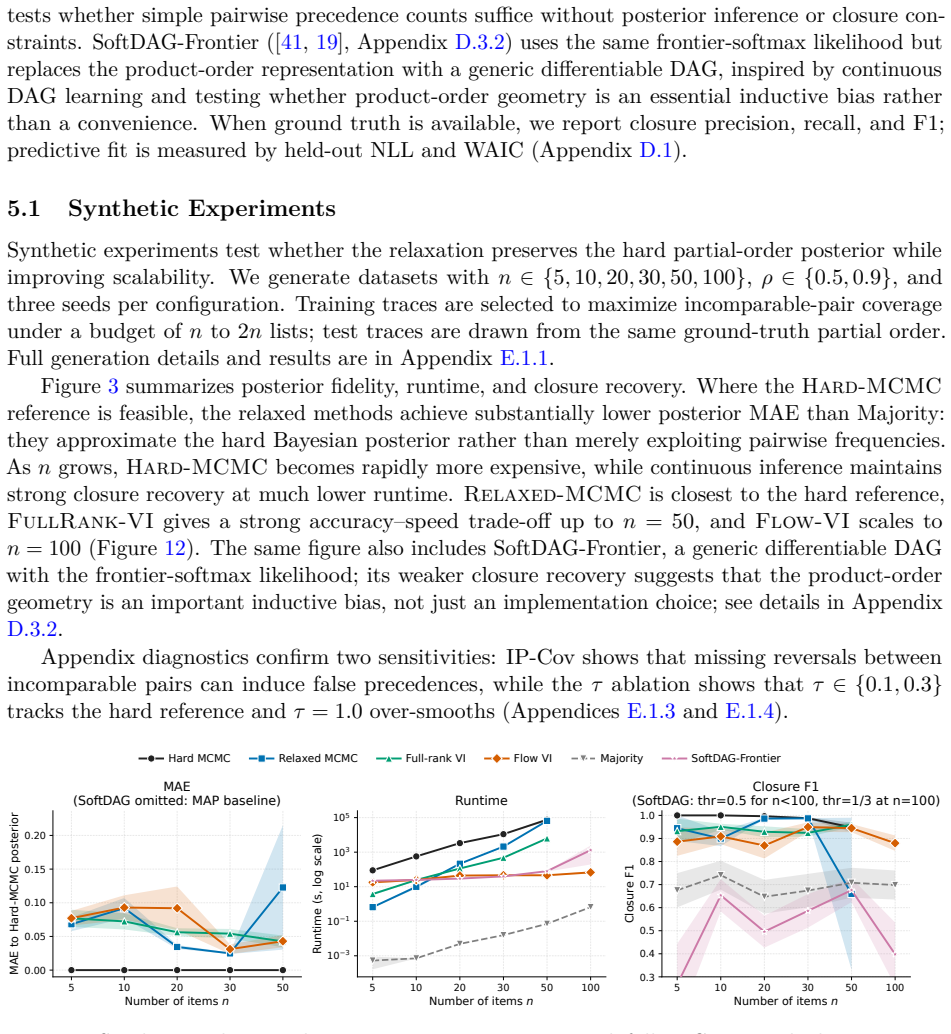

Summary. The paper introduces a differentiable Bayesian relaxation for latent partial-order inference from linear traces. Starting from a hard frontier-constrained generative model of noisy linear extensions, it replaces discontinuous precedence relations and binary frontier feasibility with smooth surrogates to obtain a continuous posterior amenable to gradient-based MCMC and variational inference. The authors prove soft transitivity, sharp-limit frontier recovery, and convergence to the hard likelihood; experiments on synthetic data, social dominance records, and cloud-agent traces report close posterior fidelity to hard MCMC on small instances together with improved runtime-accuracy trade-offs on larger problems.

Significance. If the proofs and empirical fidelity hold, the work supplies a practical bridge between discrete partial-order models and continuous inference methods, which is valuable for ranking, workflow, and agent-trace applications. Explicit recovery of the hard model in the limit and support for both MCMC and variational inference are clear strengths; the generative assumption is presented as a scoped modeling choice rather than a universal claim.

minor comments (3)

- The abstract asserts the existence of proofs and quantitative experimental results but supplies neither the surrogate definitions nor any numerical metrics or baselines; adding one representative equation and a brief numerical highlight would improve immediate accessibility without altering the technical content.

- The smoothing parameter is listed as the sole free parameter; its selection procedure, sensitivity analysis, and effect on posterior concentration should be stated explicitly (e.g., in the experimental or implementation section) so that readers can reproduce the reported fidelity and runtime gains.

- Notation for the soft frontier feasibility surrogate and the product-order relaxation should be introduced once with a clear mapping to the corresponding hard quantities; repeated re-definition across sections risks minor confusion for readers following the limit arguments.

Simulated Author's Rebuttal

We thank the referee for the positive and accurate summary of our manuscript, the recognition of its contributions, and the recommendation for minor revision. We are pleased that the work is viewed as providing a practical bridge between discrete partial-order models and continuous inference methods.

Circularity Check

No significant circularity identified

full rationale

The derivation begins from an external hard frontier-constrained generative model of noisy linear extensions and introduces smooth surrogates for precedence and feasibility. It then proves independent properties (soft transitivity, sharp-limit frontier recovery, and convergence to the hard likelihood) that recover the original model in the limit. This is a standard relaxation construction whose limit behavior is shown mathematically rather than assumed by definition or fit. No load-bearing self-citations, self-definitional steps, or fitted inputs renamed as predictions appear in the abstract or described chain. The posterior is defined via the relaxation and supports gradient-based inference without reducing to its inputs by construction.

Axiom & Free-Parameter Ledger

free parameters (1)

- smoothing parameter

axioms (1)

- domain assumption Observed linear traces are noisy linear extensions of a latent partial order under frontier constraints

invented entities (1)

-

soft frontier feasibility surrogate

no independent evidence

Reference graph

Works this paper leans on

-

[1]

BayesDAG: Gradient-based posterior inference for causal discovery

Yashas Annadani, Nick Pawlowski, Joel Jennings, Stefan Bauer, Cheng Zhang, and Wenbo Gong. BayesDAG: Gradient-based posterior inference for causal discovery. InAdvances in Neural Information Processing Systems, volume 36, 2023

work page 2023

-

[2]

Hierarchical density order embeddings

Ben Athiwaratkun and Andrew Gordon Wilson. Hierarchical density order embeddings. In International Conference on Learning Representations (ICLR), 2018

work page 2018

-

[3]

Statistical models of top-k partial orders

Amel Awadelkarim and Johan Ugander. Statistical models of top-k partial orders. InProceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, pages 39–48, 2024

work page 2024

-

[4]

Differentiable structure learning with partial orders

Taiyu Ban, Lyuzhou Chen, Xiangyu Wang, Xin Wang, Derui Lyu, and Huanhuan Chen. Differentiable structure learning with partial orders. InAdvances in Neural Information Processing Systems, volume 37, 2024. doi: 10.52202/079017-3728

-

[5]

Niko Beerenwinkel, Nicholas Eriksson, and Bernd Sturmfels. Conjunctive Bayesian networks. Bernoulli, 13(4):893–909, 2007. doi: 10.3150/07-BEJ6133

-

[6]

Kenneth P. Bogart. Maximal dimensional partially ordered sets I. Hiraguchi’s theorem.Discrete Mathematics, 5:21–31, 5 1973. ISSN 0012-365X. doi: 10.1016/0012-365X(73)90024-1

-

[7]

Counting linear extensions.Order, 8(3):225–242, 1991

Graham Brightwell and Peter Winkler. Counting linear extensions.Order, 8(3):225–242, 1991. doi: 10.1007/BF00383444. 11

-

[8]

Bob Carpenter, Andrew Gelman, Matthew D. Hoffman, Daniel Lee, Ben Goodrich, Michael Betancourt, Marcus Brubaker, Jiqiang Guo, Peter Li, and Allen Riddell. Stan: A probabilistic programming language.Journal of Statistical Software, 76(1):1–32, 2017. doi: 10.18637/jss. v076.i01. URLhttps://www.jstatsoft.org/article/view/v076i01

-

[9]

Proceso L. Fernandez, Lenwood S. Heath, Naren Ramakrishnan, Michael Tan, and John Paul C. Vergara. Mining posets from linear orders.Discrete Mathematics, Algorithms and Applications, 5(4):1350030, 2013

work page 2013

-

[10]

Andrew Gelman, Jessica Hwang, and Aki Vehtari. Understanding predictive information criteria for Bayesian models.Statistics and Computing, 24(6):997–1016, 2014. doi: 10.1007/ s11222-013-9416-2

work page 2014

-

[11]

Toshio Hiraguchi. On the dimension of partially ordered sets.The Science Reports of the Kanazawa University, 1(2):77–94, 1951

work page 1951

-

[12]

Matthew D. Hoffman and Andrew Gelman. The no-u-turn sampler: Adaptively setting path lengths in hamiltonian monte carlo.Journal of Machine Learning Research, 15(47):1593–1623,

-

[13]

URLhttps://jmlr.org/papers/v15/hoffman14a.html

-

[14]

Categorical reparameterization with Gumbel-Softmax

Eric Jang, Shixiang Gu, and Ben Poole. Categorical reparameterization with Gumbel-Softmax. InInternational Conference on Learning Representations, 2017. URLhttps://openreview. net/forum?id=rkE3y85ee

work page 2017

-

[15]

Chuxuan Jiang, Geoff K. Nicholls, and Jeong Eun Lee. Bayesian inference for vertex-series- parallel partial orders. InProceedings of the Thirty-Ninth Conference on Uncertainty in Artificial Intelligence, volume 216 ofProceedings of Machine Learning Research, pages 995–1004, 2023

work page 2023

-

[16]

Alp Kucukelbir, Dustin Tran, Rajesh Ranganath, Andrew Gelman, and David M. Blei. Auto- matic differentiation variational inference.Journal of Machine Learning Research, 18(14):1–45,

-

[17]

URLhttps://jmlr.org/papers/v18/16-107.html

-

[18]

Sander J. J. Leemans, Sebastiaan J. van Zelst, and Xixi Lu. Partial-order-based process mining: A survey and outlook.Knowledge and Information Systems, 65:1–29, 2023. doi: 10.1007/s10115-022-01777-3

-

[19]

Dongqing Li, Zheqiao Cheng, Geoff K. Nicholls, and Quyu Kong. De-linearizing agent traces: Bayesian inference of latent partial orders for efficient execution, 2026. URLhttps://arxiv. org/abs/2602.02806

-

[20]

Pseudo-mallows for efficient probabilistic preference learning, 2022

Qinghua Liu, Valeria Vitelli, Carlo Mannino, Arnoldo Frigessi, and Ida Scheel. Pseudo-mallows for efficient probabilistic preference learning, 2022. URLhttps://arxiv.org/abs/2205.13911

-

[21]

DiBS: Differentiable bayesian structure learning

Lars Lorch, Jonas Rothfuss, Bernhard Schölkopf, and Andreas Krause. DiBS: Differentiable bayesian structure learning. InAdvances in Neural Information Processing Systems, volume 34, pages 24111–24123, 2021

work page 2021

-

[22]

Duncan Luce.Individual Choice Behavior: A Theoretical Analysis

R. Duncan Luce.Individual Choice Behavior: A Theoretical Analysis. Wiley, New York, 1959

work page 1959

-

[23]

The concrete distribution: A continuous relaxationofdiscreterandomvariables

Chris J Maddison, Andriy Mnih, and Yee Whye Teh. The concrete distribution: A continuous relaxationofdiscreterandomvariables. InInternational Conference on Learning Representations (ICLR), 2017. 12

work page 2017

-

[24]

Colin L Mallows. Non-null ranking models. i.Biometrika, 44(1/2):114–130, 1957

work page 1957

-

[25]

Finding total and partial orders from data for seriation

Heikki Mannila. Finding total and partial orders from data for seriation. In Jean-François Boulicaut, Michael R. Berthold, and Tamás Horváth, editors,Discovery Science, 11th Interna- tional Conference, DS 2008, Budapest, Hungary, October 13–16, 2008, Proceedings, volume 5255 ofLecture Notes in Computer Science, pages 16–25, Berlin, Heidelberg, 2008. Sprin...

-

[26]

Globalpartialordersfromsequentialdata

HeikkiMannilaandChristopherMeek. Globalpartialordersfromsequentialdata. InProceedings of the Sixth ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, KDD’00, page161–168, NewYork, NY,USA,2000.AssociationforComputingMachinery. ISBN 1581132336. doi: 10.1145/347090.347122. URLhttps://doi.org/10.1145/347090.347122

-

[27]

Bayesian Plackett–Luce mixture models for partially ranked data.Psychometrika, 82(2):442–458, 2017

Cristina Mollica and Luca Tardella. Bayesian Plackett–Luce mixture models for partially ranked data.Psychometrika, 82(2):442–458, 2017. doi: 10.1007/s11336-016-9530-0

-

[28]

Smooth minimization of non-smooth functions.Mathematical Programming, 103(1):127–152, 2005

Yurii Nesterov. Smooth minimization of non-smooth functions.Mathematical Programming, 103(1):127–152, 2005

work page 2005

-

[29]

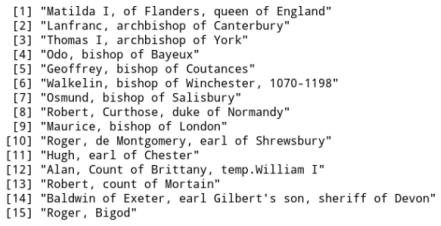

Partial order models for episcopal social status in 12th century England.IWSM 2011, page 437, 2011

Geoff K Nicholls and Alexis Muir Watt. Partial order models for episcopal social status in 12th century England.IWSM 2011, page 437, 2011

work page 2011

-

[30]

Nicholls, Jeong Eun Lee, Nicholas Karn, David Johnson, Rukuang Huang, and Alexis Muir-Watt

Geoff K. Nicholls, Jeong Eun Lee, Nicholas Karn, David Johnson, Rukuang Huang, and Alexis Muir-Watt. Bayesian inference for partial orders from random linear extensions: Power relations from 12th century royal acta.The Annals of Applied Statistics, 19(2):1663–1690, 2025. doi: 10.1214/24-AOAS2002

-

[31]

Teppo Niinimäki, Pekka Parviainen, and Mikko Koivisto. Structure discovery in bayesian networks by sampling partial orders.Journal of Machine Learning Research, 17(57):1–47, 2016

work page 2016

-

[32]

Robin L. Plackett. The analysis of permutations.Journal of the Royal Statistical Society. Series C (Applied Statistics), 24(2):193–202, 1975. doi: 10.2307/2346567

-

[33]

Variational inference with normalizing flows

Danilo Jimenez Rezende and Shakir Mohamed. Variational inference with normalizing flows. InProceedings of the 32nd International Conference on Machine Learning, volume 37 of Proceedings of Machine Learning Research, pages 1530–1538. PMLR, 2015. URLhttps: //proceedings.mlr.press/v37/rezende15.html

work page 2015

-

[34]

Seshadri.Learning preferences from choices and rankings

A. Seshadri.Learning preferences from choices and rankings. PhD thesis, Stanford University Department of Electrical Engineering, 2021. URLhttps://purl.stanford.edu/zv003vd9732

work page 2021

-

[35]

Arjun Seshadri, Stephen Ragain, and Johan Ugander. Learning rich rankings. InAdvances in Neural Information Processing Systems, volume 33, pages 9435–9446. Curran Associates, Inc., 2020

work page 2020

-

[36]

Carpenter, Hugh Doherty, Mark Hagger, and Nicholas Karn

Richard Sharpe, David X. Carpenter, Hugh Doherty, Mark Hagger, and Nicholas Karn. The charters of William II and Henry I, 2014. URLhttps://actswilliam2henry1.wordpress. com/the-charters/. Online database; accessed 1 October 2022

work page 2014

-

[37]

The mixing of markov chains on linear extensions in practice

Topi Talvitie, Teppo Niinimäki, and Mikko Koivisto. The mixing of markov chains on linear extensions in practice. InProceedings of the Twenty-Sixth International Joint Conference on Artificial Intelligence, pages 524–530. IJCAI, 2017. doi: 10.24963/ijcai.2017/74. 13

-

[38]

Order -embeddings of images and language[J]

Ivan Vendrov, Ryan Kiros, Sanja Fidler, and Raquel Urtasun. Order-embeddings of images and language. InInternational Conference on Learning Representations, 2016. URLhttps: //arxiv.org/abs/1511.06361. ICLR 2016 Oral

-

[39]

Probabilistic embedding of knowledge graphs with box lattice measures

Luke Vilnis, Xiang Li, Shikhar Murty, and Andrew McCallum. Probabilistic embedding of knowledge graphs with box lattice measures. InProceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 263–272, Melbourne, Australia, 2018. Association for Computational Linguistics. doi: 10.18653/v1/P18-1025

-

[40]

Sumio Watanabe. Asymptotic equivalence of Bayes cross validation and widely applicable information criterion in singular learning theory.Journal of Machine Learning Research, 11 (116):3571–3594, 2010. URLhttps://www.jmlr.org/papers/v11/watanabe10a.html

work page 2010

-

[41]

Random orders.Order, 1(4):317–331, 1985

Peter Winkler. Random orders.Order, 1(4):317–331, 1985

work page 1985

-

[42]

Mihalis Yannakakis. The complexity of the partial order dimension problem.SIAM Journal on Algebraic Discrete Methods, 3(3):351–358, 1982

work page 1982

-

[43]

Xun Zheng, Bryon Aragam, Pradeep K. Ravikumar, and Eric P. Xing. DAGs with NO TEARS: Continuous optimization for structure learning. InAdvances in Neural Information Processing Systems, volume 31, 2018. A Partial Order Basics A.1 Notation reference Sets, traces, and hard partial orders M= [M]Universe of items. BM Set of all nonempty subsets ofM. HS Class ...

work page 2018

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.