
Partitioning Neural Co-Variability

Pith reviewed 2026-05-11 01:32 UTC · model grok-4.3

The pith

A new model partitions neural variability into single-neuron and shared population components, showing that shared co-variability peaks in primary visual cortex and declines in higher visual areas.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

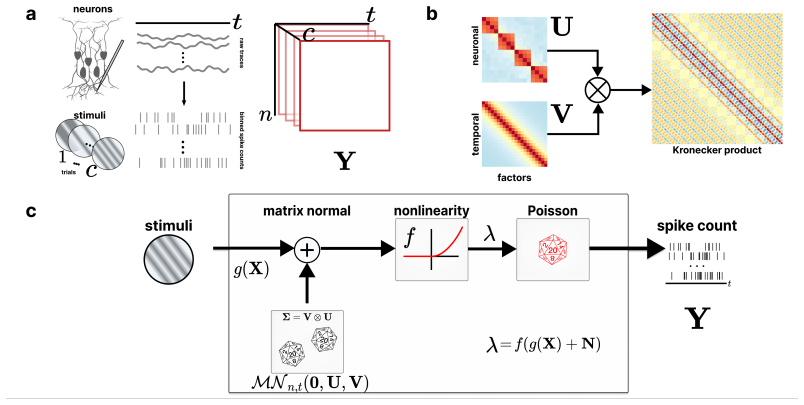

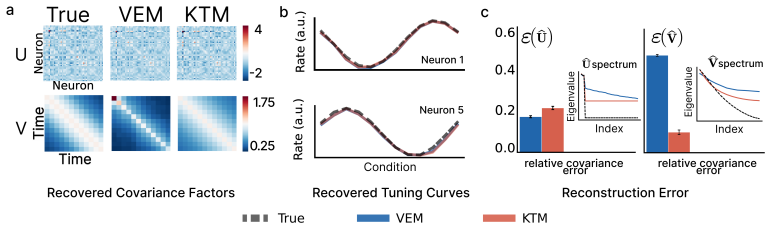

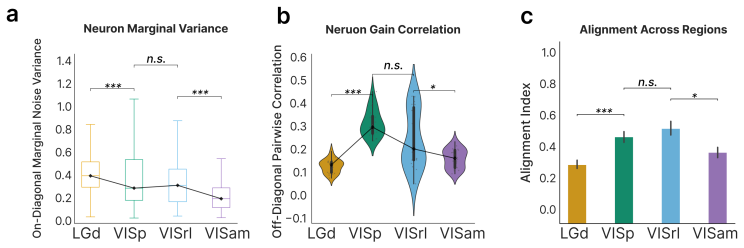

The PMNLV model extends single-neuron overdispersion to populations by placing a matrix-normal prior with Kronecker-factored covariance over the latent gain matrix. Spike counts are Poisson-distributed, with a rate obtained by passing the sum of a per-neuron stimulus tuning term and the matrix-normal gain through a quadratic soft-rectifying link. Variational EM recovers dense Kronecker factors without structural assumptions. Applied to recordings across the mouse visual hierarchy, the model replicates the finding that single-neuron marginal variability changes little between areas, and shows that shared population co-variability peaks in primary visual cortex and declines in higher visual areas.
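A minimal NumPy sketch of the generative process just described, under stated assumptions: a single trial, a zero-mean gain, and the link g(u) = softplus(u)² as one concrete reading of "quadratic soft-rectifying link". The function name, tuning values, and covariance factors are hypothetical and chosen only for illustration.

```python
import numpy as np

def softplus(x):
    # Numerically stable log(1 + exp(x))
    return np.logaddexp(0.0, x)

def sample_pmnlv(tuning, U, V, rng):
    """Draw one N x T spike-count matrix from a PMNLV-style generative model (sketch).

    tuning : (N, T) per-neuron stimulus tuning term
    U      : (N, N) inter-neuron covariance factor
    V      : (T, T) temporal covariance factor
    """
    N, T = tuning.shape
    A = np.linalg.cholesky(U)           # U = A A^T
    B = np.linalg.cholesky(V)           # V = B B^T
    Z = rng.standard_normal((N, T))     # i.i.d. standard normal entries
    G = A @ Z @ B.T                     # G ~ MN(0, U, V), i.e. vec(G) ~ N(0, V kron U)
    rate = softplus(tuning + G) ** 2    # assumed quadratic soft-rectifying link
    counts = rng.poisson(rate)          # conditionally independent Poisson counts
    return counts, rate, G

# Hypothetical example: 5 neurons sharing one common gain mode, 20 time bins
rng = np.random.default_rng(1)
N, T = 5, 20
U = 0.5 * np.eye(N) + 0.5 * np.ones((N, N))         # equicorrelated neurons
t = np.arange(T)
V = np.exp(-np.abs(t[:, None] - t[None, :]) / 3.0)   # exponential (OU) temporal kernel
counts, rate, G = sample_pmnlv(np.ones((N, T)), U, V, rng)
```

Sampling through Cholesky factors avoids ever forming the NT x NT covariance, which is the practical payoff of the Kronecker structure.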

What carries the argument

The matrix-normal prior with Kronecker-factored covariance in the PMNLV model, which places a joint distribution over the population gain matrix and factors its covariance into separate inter-neuron and temporal components.
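For reference, the standard matrix-normal identities behind this factorization, written in our own notation (an assumption, not the paper's): G is the N x T gain matrix, U the inter-neuron factor, V the temporal factor, and vec stacks columns.

```latex
G \sim \mathcal{MN}_{N \times T}(M, U, V)
\quad\Longleftrightarrow\quad
\operatorname{vec}(G) \sim \mathcal{N}\!\left(\operatorname{vec}(M),\; V \otimes U\right)

\log p(G) = -\tfrac{NT}{2}\log(2\pi) - \tfrac{T}{2}\log\lvert U\rvert - \tfrac{N}{2}\log\lvert V\rvert
  - \tfrac{1}{2}\operatorname{tr}\!\left[V^{-1}(G - M)^{\top} U^{-1}(G - M)\right]
```

The Kronecker form needs N(N+1)/2 + T(T+1)/2 covariance parameters instead of NT(NT+1)/2, and it is identified only up to scale, since (cU, V/c) yields the same V ⊗ U for any c > 0.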

If this is right

- Structured population gain covariance becomes estimable from simultaneously recorded spike counts without assuming neuron independence.

- Variational EM recovers dense inter-neuron and temporal covariance factors directly from data.

- Single-neuron marginal variability can be shown to be roughly constant while population co-variability varies across cortical areas.

- The framework applies to any simultaneously recorded population where structured gain covariance is of interest.
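How the variational EM recovers the dense factors is not spelled out here. As a simpler, clearly different stand-in, the sketch below is the classical flip-flop maximum-likelihood estimator for Kronecker factors given fully observed, zero-mean gain matrices. It is not the paper's VEM, which works from Poisson counts through a matrix-normal posterior; in an EM setting, updates of this shape would typically use posterior expectations of the outer products instead. The function name and simulated data are hypothetical.

```python
import numpy as np

def flip_flop(Gs, n_iter=100, tol=1e-10):
    """Flip-flop MLE of U (N x N) and V (T x T) from zero-mean samples Gs of shape (K, N, T)."""
    K, N, T = Gs.shape
    U, V = np.eye(N), np.eye(T)
    for _ in range(n_iter):
        # U update: average of G V^{-1} G^T, divided by T
        U_new = np.einsum("kit,ts,kjs->ij", Gs, np.linalg.inv(V), Gs) / (K * T)
        # V update: average of G^T U^{-1} G, divided by N
        V_new = np.einsum("kit,ij,kjs->ts", Gs, np.linalg.inv(U_new), Gs) / (K * N)
        done = np.allclose(np.kron(V_new, U_new), np.kron(V, U), rtol=0.0, atol=tol)
        U, V = U_new, V_new
        if done:
            break
    c = np.trace(V) / T          # resolve the (cU, V/c) scale ambiguity by fixing trace(V) = T
    return U * c, V / c

# Hypothetical check: simulate gains from known factors and re-estimate them
rng = np.random.default_rng(0)
K, N, T = 2000, 4, 6
U_true = 0.5 * np.eye(N) + 0.5 * np.ones((N, N))
t = np.arange(T)
V_true = np.exp(-np.abs(t[:, None] - t[None, :]) / 2.0)   # unit diagonal, so trace(V_true) = T
Gs = np.linalg.cholesky(U_true) @ rng.standard_normal((K, N, T)) @ np.linalg.cholesky(V_true).T
U_hat, V_hat = flip_flop(Gs)
```

Only the product V ⊗ U is identifiable, so comparisons across areas should use a fixed normalization (here trace(V) = T) or scale-free summaries such as correlations derived from U.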

Where Pith is reading between the lines

- The observed decline in shared co-variability may reflect a shift from correlated early sensory processing to more independent representations in higher areas.

- Similar partitioning in other sensory modalities could test whether the pattern of decreasing population co-variability is general to cortical hierarchies.

- Linking the recovered covariance factors to specific stimulus features or behavioral variables could reveal computational roles for the shared gain modulation.

Load-bearing premise

The matrix-normal prior with Kronecker-factored covariance and the quadratic soft-rectifying link together capture the true network statistics of gain modulation without extra unmodeled structure.

What would settle it

Re-fitting the model to the same Neuropixel recordings and finding either no systematic decline in shared covariance across areas or substantial residual structure not explained by the Kronecker factors would falsify the reported partitioning.
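One generic way to quantify "residual structure not explained by the Kronecker factors", assuming an estimate of the full NT x NT gain covariance is available (for example, from many trials), is the Van Loan–Pitsianis rearrangement: a covariance equals V ⊗ U exactly when the rearranged matrix has rank one. This diagnostic is our assumption, not a procedure described in the paper; the function name and test matrices are hypothetical, and with noisy estimates the score would need to be compared against a null distribution rather than against exactly 1.

```python
import numpy as np

def kronecker_energy_fraction(Sigma, N, T):
    """Share of ||Sigma||_F^2 captured by the nearest Kronecker product V kron U.

    Sigma is the (N*T) x (N*T) covariance of vec(G), with vec stacking columns,
    so its (t, s) block of size N x N equals V[t, s] * U when Sigma is separable.
    """
    blocks = Sigma.reshape(T, N, T, N).transpose(0, 2, 1, 3)   # indices (t, s, i, j)
    R = blocks.reshape(T * T, N * N)                           # Van Loan-Pitsianis rearrangement
    sv = np.linalg.svd(R, compute_uv=False)
    return sv[0] ** 2 / np.sum(sv ** 2)                        # equals 1 iff Sigma = V kron U

N, T = 3, 4
t = np.arange(T)
V_ou = np.exp(-np.abs(t[:, None] - t[None, :]) / 2.0)
separable = np.kron(V_ou, np.eye(N))
non_separable = np.kron(np.eye(T), np.eye(N)) + np.kron(V_ou, np.ones((N, N)))  # sum of two Kronecker terms
print(kronecker_energy_fraction(separable, N, T), kronecker_energy_fraction(non_separable, N, T))
```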

Original abstract

Trial-to-trial variability of neural responses has been linked to important aspects of neural computation and is essential for understanding how neuronal populations respond. While current overdispersion models treat each neuron's gain as independent of the others, this assumption fails to capture the network statistics of neuronal populations. As no existing model can capture overdispersed, structured spiking gain modulation across a neural population, network-level gain covariance remains largely unstudied. We thus present the Poisson matrix-normal latent variable (PMNLV) model, which extends single-neuron overdispersion to neural populations by placing a matrix-normal prior over the latent gain with a Kronecker-factored covariance. Spike counts are Poisson-distributed with a rate equal to the sum of a per-neuron stimulus tuning term and a matrix-normal gain, passed through a quadratic soft-rectifying link. We derive two complementary estimation algorithms: a variational EM (VEM) with a matrix-normal posterior that recovers dense Kronecker factors without structural assumptions, and a Kernel Tournament Method (KTM) that performs data-driven selection over a biologically motivated kernel dictionary using a composite likelihood. On simulated data, both algorithms recover the inter-neuron and temporal covariance factors as well as accurate tuning curves. Applying VEM to Neuropixel recordings across four cortical regions of the mouse visual hierarchy, we replicate a previous finding that single-neuron marginal variability changes little across cortical areas. We then show that shared population co-variability, which is invisible to scalar summaries such as the Fano factor, peaks in primary visual cortex and declines in higher visual areas. The PMNLV framework is applicable to any simultaneously recorded population where structured gain covariance is of scientific interest.
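Because the link is nonlinear, the expected Poisson log-likelihood that a VEM step must evaluate has no closed form. A minimal sketch, assuming g(u) = softplus(u)² and using the fact that each entry of a matrix-normal posterior q(G) = MN(M, S_U, S_V) is Gaussian with variance (S_U)_ii (S_V)_tt, evaluates that expectation by Gauss–Hermite quadrature. The function name and inputs are hypothetical; this is one standard numerical route, not a transcription of the paper's derivation.

```python
import numpy as np
from numpy.polynomial.hermite import hermgauss

def softplus(x):
    return np.logaddexp(0.0, x)

def expected_poisson_loglik(y, b, m, s, deg=30):
    """Gauss-Hermite estimate of E_q[y log(lam) - lam], lam = softplus(b + g)^2, g ~ N(m, s^2).

    The -log(y!) term is omitted because it does not depend on the variational parameters.
    """
    y, b, m, s = (np.asarray(a, dtype=float) for a in (y, b, m, s))
    x, w = hermgauss(deg)                                # rule for integrals against exp(-x^2)
    g = m[..., None] + np.sqrt(2.0) * s[..., None] * x  # change of variables to N(m, s^2)
    lam = softplus(b[..., None] + g) ** 2
    return np.sum(w * (y[..., None] * np.log(lam) - lam), axis=-1) / np.sqrt(np.pi)

# Hypothetical single entry: y = 3 spikes, tuning 0.5, posterior mean 0.0 and sd 1.0
print(expected_poisson_loglik(3, 0.5, 0.0, 1.0))
```

The inputs broadcast, so the same call can score a whole N x T count matrix against matching arrays of tuning values, posterior means, and posterior standard deviations.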

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces the Poisson matrix-normal latent variable (PMNLV) model to capture structured, population-level gain modulation in neural spike counts. A matrix-normal prior with Kronecker-factored covariance is placed on latent gains; rates are formed by adding a fixed per-neuron tuning term to the gain and passing the sum through a quadratic soft-rectifying link, with Poisson observations. Two estimators are derived: variational EM (VEM) that recovers dense Kronecker factors and a Kernel Tournament Method (KTM) that selects from a kernel dictionary. On Neuropixel recordings from four areas of the mouse visual hierarchy, marginal single-neuron variability is shown to be stable while shared population co-variability peaks in V1 and declines in higher areas.

Significance. If the partitioning of variability is robust to the modeling assumptions, the work supplies a principled framework for studying network-level gain covariance that scalar statistics such as the Fano factor cannot access. The empirical hierarchy result would then provide new evidence that co-variability, rather than marginal variability, changes systematically along the visual pathway.
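To see why the Fano factor cannot access this structure, the sketch below simulates two hypothetical populations whose neurons have identical marginal gain variance but different shared gain correlation (rho = 0 versus rho = 0.8). Per-neuron Fano factors should come out nearly the same, while pairwise noise correlations differ. All parameters, and the softplus² link, are illustrative assumptions.

```python
import numpy as np

def softplus(x):
    return np.logaddexp(0.0, x)

def simulate_counts(rho, n_neurons=10, n_trials=5000, baseline=2.0, sigma=0.5, seed=0):
    """Single-bin spike counts driven by an equicorrelated Gaussian gain (hypothetical setup)."""
    rng = np.random.default_rng(seed)
    U = sigma**2 * ((1 - rho) * np.eye(n_neurons) + rho * np.ones((n_neurons, n_neurons)))
    g = rng.multivariate_normal(np.zeros(n_neurons), U, size=n_trials)  # (trials, neurons)
    rate = softplus(baseline + g) ** 2
    return rng.poisson(rate)

def summarize(counts):
    """Mean per-neuron Fano factor and mean pairwise noise correlation."""
    fano = counts.var(axis=0, ddof=1) / counts.mean(axis=0)
    corr = np.corrcoef(counts, rowvar=False)
    off_diag = corr[~np.eye(corr.shape[0], dtype=bool)]
    return fano.mean(), off_diag.mean()

for rho in (0.0, 0.8):
    fano, r_sc = summarize(simulate_counts(rho))
    print(f"rho={rho}: mean Fano={fano:.2f}, mean pairwise noise correlation={r_sc:.2f}")
```

Since each neuron's marginal gain distribution does not depend on rho, any scalar per-neuron statistic is blind to the difference; only joint statistics such as the off-diagonal of U reveal it.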

major comments (3)

- [Model definition, abstract and §2] The quadratic soft-rectifying link, together with area-dependent mean rates, can induce mean-variance coupling that is absorbed into the Kronecker factors. Because V1 responses are typically stronger, this coupling risks inflating the inferred shared covariance in V1 relative to higher areas. No alternative link functions or mean-matched simulations are reported to test whether the observed decline is an artifact of this interaction.

- [Simulation section] Parameter recovery is demonstrated only on data generated under the exact PMNLV assumptions. Because the central empirical claim rests on Kronecker factors estimated from real data, the absence of misspecification experiments (non-separable gain, alternative rectification, or area-specific mean-variance relationships) leaves open whether the V1 peak is recoverable under plausible violations.

- [Results on Neuropixel data] The reported decline in shared co-variability is obtained solely from the VEM fit; no cross-validation against the KTM estimator or against simpler baselines (e.g., independent gain models or factorized covariance without the quadratic link) is provided to confirm that the hierarchy is not an artifact of the particular estimator or prior.
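The mean-variance concern in the first major comment can be made concrete. Holding the gain standard deviation fixed, the sketch compares how the rate's squared coefficient of variation, Var[rate]/E[rate]², depends on the baseline under an exponential link and under an assumed softplus² link. For the exponential link this ratio is exactly exp(σ²) − 1 at every baseline; for softplus² it shrinks as the baseline grows, so areas with different mean rates can show different rate variability from identical gain covariance. Baselines and σ are hypothetical values.

```python
import numpy as np
from numpy.polynomial.hermite import hermgauss

def softplus(x):
    return np.logaddexp(0.0, x)

def rate_moments(link, baseline, sigma, deg=60):
    """Mean and variance of link(baseline + g) for g ~ N(0, sigma^2), via Gauss-Hermite quadrature."""
    x, w = hermgauss(deg)
    lam = link(baseline + np.sqrt(2.0) * sigma * x)
    mean = np.sum(w * lam) / np.sqrt(np.pi)
    second = np.sum(w * lam**2) / np.sqrt(np.pi)
    return mean, second - mean**2

links = {"exp": np.exp, "softplus^2": lambda u: softplus(u) ** 2}
sigma = 0.5
for name, link in links.items():
    for b in (-1.0, 0.0, 1.0, 2.0):
        m, v = rate_moments(link, b, sigma)
        print(f"{name:10s} b={b:+.1f}  E[rate]={m:6.3f}  Var/E^2={v / m**2:.3f}")
```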

minor comments (2)

- [Abstract] The abstract states that 'no existing model can capture overdispersed structured spiking gain-modulation'; a brief comparison table or paragraph situating PMNLV against existing population overdispersion models (e.g., those with independent or low-rank gain) would clarify the precise novelty.

- [Model definition] Notation for the Kronecker factors (neuron vs. time) and the precise form of the quadratic soft-rectifier should be introduced with an equation number at first use to aid readability.

Simulated Author's Rebuttal

We thank the referee for the constructive comments, which highlight important considerations for validating the robustness of the PMNLV model and its empirical findings. We address each major point below, agreeing that additional checks will strengthen the manuscript, and outline the revisions we plan to incorporate.

Point-by-point responses

- Referee: Model definition (abstract and §2): the quadratic soft-rectifying link together with area-dependent mean rates can induce mean-variance coupling that is absorbed into the Kronecker factors; because V1 responses are typically stronger, this coupling risks inflating the inferred shared covariance in V1 relative to higher areas. No alternative link functions or mean-matched simulations are reported to test whether the observed decline is an artifact of this interaction.

Authors: We acknowledge this potential interaction between the link function and area-specific mean rates. The quadratic soft-rectifier was chosen to maintain non-negativity and induce overdispersion consistent with neural data. However, to rule out artifactual inflation of V1 shared covariance, we will add mean-matched simulations across areas (holding marginal rates fixed while varying only the gain covariance structure) and results using an alternative exponential link function. These will be included in a new subsection of the revised manuscript to confirm the hierarchy persists. revision: yes

- Referee: Simulation section: parameter recovery is demonstrated only under data generated from the exact PMNLV assumptions. Because the central empirical claim rests on the estimated Kronecker factors from real data, the absence of misspecification experiments (non-separable gain, alternative rectification, or area-specific mean-variance relationships) leaves open whether the V1 peak is recoverable under plausible violations.

Authors: We agree that demonstrating recovery under misspecification is essential for trusting the real-data Kronecker factor estimates. In the revision we will expand the simulation section with experiments generating data from non-separable gain models, alternative rectification functions, and area-specific mean-variance relationships, then show that both VEM and KTM still recover the V1 peak in shared co-variability when it is present in the ground truth. revision: yes

- Referee: Results on Neuropixel data: the reported decline in shared co-variability is obtained solely from the VEM fit; no cross-validation against the KTM estimator or against simpler baselines (e.g., independent gain models or factorized covariance without the quadratic link) is provided to confirm that the hierarchy is not an artifact of the particular estimator or prior.

Authors: We recognize the value of estimator and baseline comparisons for the Neuropixel results. Although KTM targets kernel selection rather than dense factors, we will apply it to the same recordings for qualitative comparison of selected kernels. We will also add fits of simpler baselines (independent per-neuron gains and factorized covariance without the quadratic link) and report that the V1-to-higher-area decline in shared co-variability remains specific to the full PMNLV model. revision: yes
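The mean-matched simulations promised in the first response can be set up by solving, per neuron, for the baseline that yields a target mean rate given that neuron's marginal gain variance. Under a matrix-normal gain that marginal variance is U_ii V_tt, so fixing baselines this way pins the marginal mean rates while leaving the off-diagonal (shared) structure of U free to vary. The sketch assumes the softplus² link and uses bisection; function names and the target rate are hypothetical.

```python
import numpy as np
from numpy.polynomial.hermite import hermgauss

def softplus(x):
    return np.logaddexp(0.0, x)

def mean_rate(baseline, sigma, deg=40):
    """E[softplus(baseline + g)^2] for g ~ N(0, sigma^2), via Gauss-Hermite quadrature."""
    x, w = hermgauss(deg)
    return np.sum(w * softplus(baseline + np.sqrt(2.0) * sigma * x) ** 2) / np.sqrt(np.pi)

def matched_baseline(target_rate, sigma, lo=-20.0, hi=20.0, tol=1e-10):
    """Bisection for the baseline b with mean_rate(b, sigma) == target_rate.

    Valid because mean_rate is increasing in b; the bracket [lo, hi] must contain the answer.
    """
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if mean_rate(mid, sigma) < target_rate:
            lo = mid
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)

# Hypothetical: hold the mean rate at 5 spikes/bin while the marginal gain sd varies
for sigma in (0.25, 0.5, 1.0):
    b = matched_baseline(5.0, sigma)
    print(f"sigma={sigma}: baseline={b:.4f}, mean rate={mean_rate(b, sigma):.6f}")
```

Because softplus² is convex, a larger gain variance raises the mean rate at a fixed baseline, so the matched baseline has to fall as σ grows.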

Circularity Check

No circularity: empirical co-variability hierarchy is output of model fit, not tautological reduction


The paper introduces the PMNLV model by placing a matrix-normal prior with Kronecker-factored covariance on the latent gain, defines rates as per-neuron tuning plus gain passed through a quadratic soft-rectifier, and derives the VEM and KTM estimators. These are applied to Neuropixel recordings to report that shared population co-variability (extracted from the fitted Kronecker factors) peaks in V1 and declines higher in the hierarchy, while replicating the finding that marginal variability is stable. No equation or claim reduces the reported hierarchy to a fitted input by construction, nor does any self-citation or ansatz smuggle in the result; the central observation is a post-fit statistic on real data under stated assumptions. The derivation chain is self-contained and can be checked against external benchmarks.

Axiom & Free-Parameter Ledger

free parameters (1)

- Kronecker covariance factors

axioms (1)

- Domain assumption: spike counts follow a Poisson distribution conditional on the rate.

invented entities (1)

- Matrix-normal latent gain (no independent evidence)

