Functional-prior-based Bayesian PDE-constrained inversion using PINNs

Pith reviewed 2026-05-11 02:14 UTC · model grok-4.3

The pith

Functional priors integrate into Bayesian PINN inversion for PDE-constrained problems through weight-space learning or direct function-space inference.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

We introduce a unified framework, termed functional-prior-based approaches to Bayesian PDE-constrained inversion using physics-informed neural networks (fpBPINN), to incorporate functional priors into Bayesian PINN-based inversion. Two complementary approaches are considered: the functional-prior-informed Bayesian PINN (FPI-BPINN), in which a neural network weight prior is learned to be consistent with a prescribed functional prior and Bayesian inference is performed in weight space, and function-space particle-based variational inference for PINNs (fParVI-PINN), which performs Bayesian estimation using ParVI directly in function space. Random Fourier features play an important role in both.

What carries the argument

The fpBPINN framework consisting of FPI-BPINN (learning a weight prior consistent with a functional prior) and fParVI-PINN (direct function-space ParVI), supported by random Fourier features for representing Gaussian functional priors in neural networks.
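How random Fourier features can carry a Gaussian functional prior is easiest to see in a small numerical sketch. The example below assumes a squared-exponential covariance and a single linear layer with standard-normal weights on top of fixed cosine features; the function names `rff_features` and `sample_rff_prior`, the length scale, and the feature count are illustrative assumptions and are not taken from the paper.

```python
import numpy as np

def rff_features(x, omega, phase):
    """Map inputs x of shape (n, d) to random Fourier features of shape (n, n_feat)."""
    n_feat = omega.shape[0]
    return np.sqrt(2.0 / n_feat) * np.cos(x @ omega.T + phase)

def sample_rff_prior(x, n_feat=2000, length_scale=0.2, n_samples=5, seed=0):
    """Draw approximate GP prior samples via a linear model on RFF (assumed setup)."""
    rng = np.random.default_rng(seed)
    d = x.shape[1]
    # The spectral density of the squared-exponential kernel is Gaussian
    # with standard deviation 1 / length_scale.
    omega = rng.normal(0.0, 1.0 / length_scale, size=(n_feat, d))
    phase = rng.uniform(0.0, 2.0 * np.pi, size=n_feat)
    phi = rff_features(x, omega, phase)
    # Standard-normal weights give function draws with covariance phi @ phi.T.
    weights = rng.normal(0.0, 1.0, size=(n_feat, n_samples))
    return phi @ weights, phi

x = np.linspace(0.0, 1.0, 50)[:, None]
samples, phi = sample_rff_prior(x)

# Compare the induced covariance with the exact squared-exponential kernel.
k_rff = phi @ phi.T
k_exact = np.exp(-0.5 * (x - x.T) ** 2 / 0.2 ** 2)
max_err = np.abs(k_rff - k_exact).max()
print(f"max covariance error: {max_err:.3f}")
```

The covariance error shrinks roughly as the inverse square root of the feature count, which is one simple way to gauge how faithfully such a representation matches a target Gaussian prior.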

If this is right

- Both FPI-BPINN and fParVI-PINN accurately estimate posterior distributions for PDE-constrained inverse problems.

- FPI-BPINN provides flexibility in prior specification while fParVI-PINN offers higher accuracy in posterior approximation.

- Physically interpretable functional priors replace opaque weight-space priors in Bayesian PINN inversion.

- Random Fourier features improve representation of Gaussian functional priors and posterior quality.

- The approaches apply to seismic traveltime tomography and Darcy-flow permeability inversion.

Where Pith is reading between the lines

- The framework could extend naturally to other geophysical or fluid-mechanics inverse problems where priors derive from known physical correlations.

- Direct function-space inference may reduce discretization artifacts that arise when mapping functional assumptions onto network weights.

- Hybrid use of the two approaches might be tested to trade off computational flexibility against posterior fidelity in larger-scale settings.

- The emphasis on random Fourier features suggests examining whether other basis expansions could further tighten the link between functional priors and network representations.

Load-bearing premise

Neural networks with random Fourier features can sufficiently represent the prescribed functional priors and the subsequent Bayesian inference in weight space or function space converges to accurate posteriors without significant approximation error.

What would settle it

A test case in which the true posterior is known from an independent high-fidelity method and the fpBPINN posteriors deviate substantially in mean, variance, or credible intervals from that reference.
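A check of this kind could be sketched as follows, assuming both the approximate posterior and the reference posterior are available as sample arrays evaluated on the same grid. The function `posterior_discrepancy` and the synthetic data are hypothetical and only illustrate the comparison of mean, spread, and credible intervals.

```python
import numpy as np

def posterior_discrepancy(samples, ref_samples, level=0.95):
    """Compare two sample sets of shape (n_samples, n_points) pointwise."""
    # Largest pointwise gap between the two posterior means.
    mean_err = np.abs(samples.mean(axis=0) - ref_samples.mean(axis=0)).max()
    # Ratio of pointwise standard deviations (1.0 means matching spread).
    std_ratio = samples.std(axis=0) / ref_samples.std(axis=0)
    # Central credible band of the approximate posterior at each point.
    lo, hi = np.quantile(samples, [(1 - level) / 2, (1 + level) / 2], axis=0)
    # Fraction of reference draws that fall inside that band.
    coverage = ((ref_samples >= lo) & (ref_samples <= hi)).mean()
    return mean_err, std_ratio, coverage

# Synthetic illustration: a matching approximation and an overconfident one.
rng = np.random.default_rng(0)
ref = rng.normal(size=(4000, 10))
good = rng.normal(size=(4000, 10))
narrow = 0.5 * rng.normal(size=(4000, 10))

mean_err_good, ratio_good, cov_good = posterior_discrepancy(good, ref)
mean_err_narrow, ratio_narrow, cov_narrow = posterior_discrepancy(narrow, ref)
print(cov_good, cov_narrow)
```

A coverage value well below the nominal level, as in the overconfident case, would be the kind of deviation in credible intervals described above.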

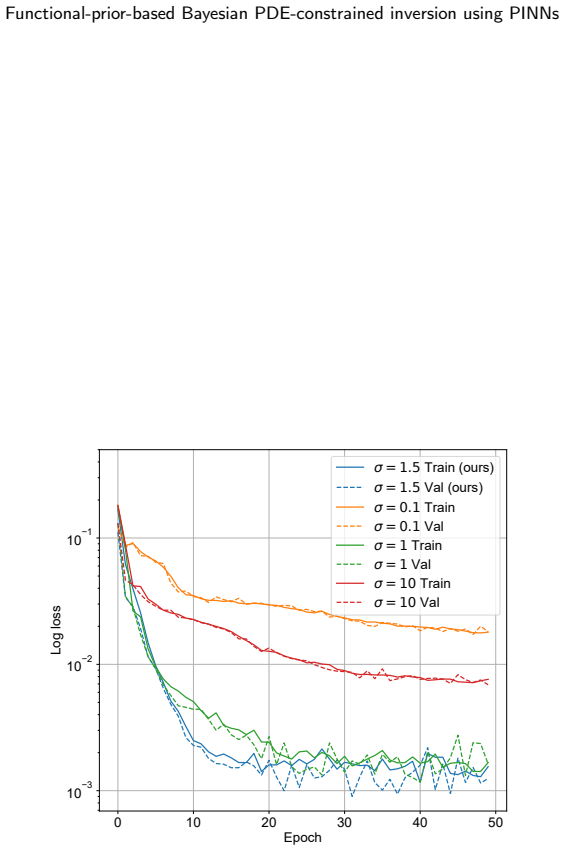

Original abstract

Physics-informed neural networks (PINNs) provide a mesh-free framework for solving PDE-constrained inverse problems, but their extension to Bayesian inversion still faces a fundamental difficulty: prior distributions are typically defined in the weight space of neural networks, whereas physically meaningful prior assumptions are more naturally expressed in function space. In this study, we introduce a unified framework, termed functional-prior-based approaches to Bayesian PDE-constrained inversion using physics-informed neural networks (fpBPINN), to incorporate functional priors into Bayesian PINN-based inversion. We consider two complementary approaches. The first is a functional-prior-informed Bayesian PINN (FPI-BPINN), in which a neural network weight prior is learned to be consistent with a prescribed functional prior, and Bayesian inference is subsequently performed in weight space. The second is function-space particle-based variational inference for PINNs (fParVI-PINN), which performs Bayesian estimation using ParVI directly in function space. We also show that random Fourier features (RFF) play an important role in representing Gaussian functional priors with neural networks and in improving posterior approximation. We applied the proposed approaches to one-dimensional seismic traveltime tomography and two-dimensional Darcy-flow permeability inversion. These numerical experiments showed that both approaches accurately estimated posterior distributions, highlighting the significance of introducing physically interpretable functional priors into Bayesian PINN-based inverse problems. We also identified the contrasting advantages of FPI-BPINN and fParVI-PINN, namely flexibility and accuracy, respectively.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces a unified fpBPINN framework for Bayesian PDE-constrained inversion with PINNs to address the mismatch between weight-space priors and physically meaningful function-space priors. It proposes two complementary methods: FPI-BPINN, which learns a weight-space prior consistent with a prescribed functional prior (using RFF for Gaussian processes) before weight-space Bayesian inference, and fParVI-PINN, which performs particle-based variational inference directly in function space. The approaches are tested on 1D seismic traveltime tomography and 2D Darcy-flow permeability inversion, with the claim that both yield accurate posterior estimates and that RFF improves prior representation and posterior approximation.
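For readers unfamiliar with particle-based variational inference, the sketch below shows one well-known ParVI method, Stein variational gradient descent (SVGD), on a one-dimensional Gaussian target. It is a generic parameter-space illustration under assumed settings (RBF kernel, median-heuristic bandwidth, fixed step size), not the paper's fParVI-PINN algorithm, which applies the particle interaction to function values rather than to raw parameters.

```python
import numpy as np

def svgd_step(particles, grad_log_p, step=0.05):
    """One SVGD update for particles of shape (n, d) with an RBF kernel."""
    n = particles.shape[0]
    diff = particles[:, None, :] - particles[None, :, :]  # diff[i, j] = x_i - x_j
    sq = (diff ** 2).sum(axis=-1)
    # Median heuristic for the kernel bandwidth (a common default choice).
    h = np.median(sq) / np.log(n + 1.0) + 1e-12
    k = np.exp(-sq / h)                                   # k[i, j] = k(x_i, x_j)
    # Attractive term: kernel-weighted average of the score at all particles.
    attract = k @ grad_log_p(particles)
    # Repulsive term: sum_j grad_{x_j} k(x_j, x_i) = sum_j (2/h) (x_i - x_j) k[i, j].
    repulse = (2.0 / h) * (k[:, :, None] * diff).sum(axis=1)
    return particles + step * (attract + repulse) / n

# Hypothetical target: Gaussian with mean 2.0 and standard deviation 0.5.
def grad_log_p(x):
    return -(x - 2.0) / 0.25

rng = np.random.default_rng(0)
particles = rng.normal(size=(50, 1))
for _ in range(2000):
    particles = svgd_step(particles, grad_log_p)

print(particles.mean(), particles.std())
```

The repulsive term is what keeps the particles from collapsing onto the posterior mode, so the final particle spread serves as an estimate of posterior uncertainty.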

Significance. If validated, the work would be significant for enabling interpretable functional priors in Bayesian PINN inversions, a key gap in mesh-free PDE-constrained inverse problems common in geophysics. The two methods provide contrasting strengths (flexibility vs. accuracy), and the RFF emphasis is a useful technical contribution. The numerical demonstrations on two distinct problems are a positive step, but the absence of quantitative validation metrics and baselines substantially reduces the strength of the central claim.

major comments (3)

- [Abstract and numerical experiments] Abstract and numerical experiments section: the central claim that 'both approaches accurately estimated posterior distributions' is supported only by qualitative statements with no quantitative metrics (KL divergence, MMD, posterior coverage, or predictive checks), no comparison to gold-standard function-space MCMC, and no error bounds on the RFF approximation of the functional prior. This leaves the accuracy assertion weakly supported and load-bearing for the paper's conclusions.

- [Methods (RFF and prior embedding)] RFF representation of functional priors (methods section): the assertion that random Fourier features 'play an important role in representing Gaussian functional priors with neural networks' lacks any quantitative fidelity assessment (e.g., covariance matching or MMD between the RFF-NN prior and the target GP), which is required to confirm that the subsequent Bayesian inference is not biased by representation error.

- [fParVI-PINN method] fParVI-PINN formulation: the function-space particle variational inference lacks analysis of convergence to the true posterior or approximation error bounds relative to the neural-network parametrization, making it unclear whether the reported posteriors are free of optimization or representation bias.
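One of the metrics named in these comments, the maximum mean discrepancy (MMD), can be estimated directly from two sets of samples. The sketch below assumes an RBF kernel with a hand-picked bandwidth and uses the biased V-statistic estimator; the function names and the synthetic data are illustrative only. For function-valued posteriors, each row could hold one sampled function evaluated on a fixed grid.

```python
import numpy as np

def rbf_gram(a, b, bandwidth):
    """RBF kernel matrix between rows of a (n, d) and b (m, d)."""
    sq = (a ** 2).sum(axis=1)[:, None] + (b ** 2).sum(axis=1)[None, :] - 2.0 * a @ b.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * bandwidth ** 2))

def mmd2(x, y, bandwidth=1.0):
    """Biased (V-statistic) estimate of the squared MMD between two sample sets."""
    k_xx = rbf_gram(x, x, bandwidth).mean()
    k_yy = rbf_gram(y, y, bandwidth).mean()
    k_xy = rbf_gram(x, y, bandwidth).mean()
    return k_xx + k_yy - 2.0 * k_xy

# Synthetic illustration: identical distributions versus a shifted one.
rng = np.random.default_rng(0)
x = rng.normal(size=(500, 2))
y = rng.normal(size=(500, 2))
z = rng.normal(size=(500, 2)) + 2.0

mmd_same = mmd2(x, y)
mmd_shift = mmd2(x, z)
print(mmd_same, mmd_shift)
```

The estimate is sensitive to the bandwidth, so a report would normally state how it was chosen or show results over a range of values.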

minor comments (2)

- [Introduction] The acronym fpBPINN is used in the title and abstract but its precise expansion is not restated clearly at first use in the main text.

- [Figures in numerical experiments] Figure captions for the tomography and Darcy results should explicitly state the quantitative metrics (if any) used to assess posterior accuracy rather than relying on visual inspection alone.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed comments on our manuscript. We have carefully addressed each major point below and revised the manuscript to strengthen the quantitative support for our claims where feasible.

Point-by-point responses

- Referee: Abstract and numerical experiments section: the central claim that 'both approaches accurately estimated posterior distributions' is supported only by qualitative statements with no quantitative metrics (KL divergence, MMD, posterior coverage, or predictive checks), no comparison to gold-standard function-space MCMC, and no error bounds on the RFF approximation of the functional prior. This leaves the accuracy assertion weakly supported and load-bearing for the paper's conclusions.

Authors: We agree that the original presentation relied primarily on visual and qualitative assessments, which limits the strength of the accuracy claim. In the revised manuscript we have added quantitative metrics including KL divergence and MMD to reference distributions (where computable), posterior coverage probabilities, and predictive checks. For the 1D seismic traveltime tomography example we now include a direct comparison against a gold-standard function-space MCMC sampler. Error bounds on the RFF approximation are quantified via covariance discrepancy and MMD in a new appendix. The abstract has been updated to reflect these additions. For the 2D Darcy-flow case a full MCMC comparison remains computationally prohibitive. revision: yes

- Referee: RFF representation of functional priors (methods section): the assertion that random Fourier features 'play an important role in representing Gaussian functional priors with neural networks' lacks any quantitative fidelity assessment (e.g., covariance matching or MMD between the RFF-NN prior and the target GP), which is required to confirm that the subsequent Bayesian inference is not biased by representation error.

Authors: We appreciate this observation. The revised methods section now contains a quantitative fidelity analysis of the RFF embedding, reporting both covariance-function matching errors and MMD distances between the RFF-induced neural-network prior and the target Gaussian process. These metrics demonstrate that the representation error is small and does not materially bias the subsequent inference, thereby supporting the role of RFF. revision: yes

- Referee: fParVI-PINN formulation: the function-space particle variational inference lacks analysis of convergence to the true posterior or approximation error bounds relative to the neural-network parametrization, making it unclear whether the reported posteriors are free of optimization or representation bias.

Authors: We acknowledge the absence of formal convergence analysis in the original submission. The revised manuscript expands the fParVI-PINN section with a discussion of convergence properties drawn from the ParVI literature, together with empirical diagnostics (evolution of the variational objective and stability of posterior statistics across particle counts and iterations). While deriving rigorous approximation-error bounds for the neural-network parametrization lies beyond the present scope, we have added sensitivity experiments to network width, depth, and particle number to assess representation bias. revision: partial

- A direct comparison against gold-standard function-space MCMC for the 2D Darcy-flow permeability inversion, which is computationally intractable at the problem size considered.

Circularity Check

No significant circularity; algorithmic proposal validated by experiments

full rationale

The paper proposes two algorithmic frameworks (FPI-BPINN and fParVI-PINN) for incorporating functional priors into Bayesian PINN inversion, using RFF to represent Gaussian priors, and evaluates them via numerical experiments on 1D traveltime tomography and 2D Darcy inversion. No load-bearing step reduces by construction to a fitted parameter, self-definition, or self-citation chain; the central claims rest on empirical posterior estimation rather than tautological reductions. The methods are self-contained algorithmic contributions whose performance is assessed externally through simulation results.

Axiom & Free-Parameter Ledger

free parameters (2)

- Random Fourier feature hyperparameters

- PINN architecture and training hyperparameters

axioms (2)

- domain assumption: The governing PDE and boundary conditions are known exactly.

- ad hoc to paper: Neural networks with RFF can faithfully approximate the chosen functional prior.

invented entities (2)

- FPI-BPINN: no independent evidence

- fParVI-PINN: no independent evidence

