A Decomposed Retrieval-Edit-Rerank Framework for Chord Generation

Pith reviewed 2026-05-11 01:55 UTC · model grok-4.3

The pith

Decomposing chord generation into retrieval, editing and reranking stages improves the balance between musical diversity and theoretical feasibility over end-to-end models.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

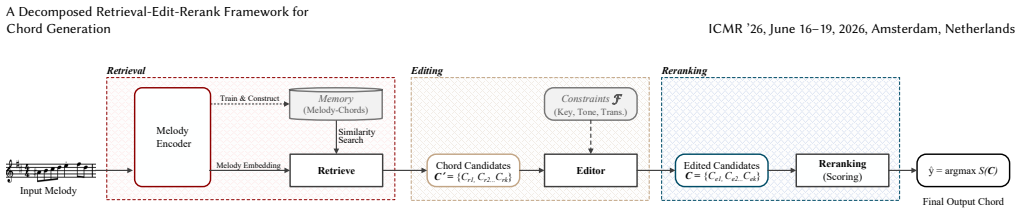

The paper's core claim is that a Retrieval-Edit-Rerank framework yields a controllable and interpretable pipeline by explicitly separating three concerns: defining a stylistically plausible candidate space, enforcing music-theoretic feasibility through minimal modifications, and resolving soft preferences. According to the paper, this pipeline outperforms end-to-end chord generation baselines in balancing chord diversity and music-theoretic feasibility.

What carries the argument

The Retrieval-Edit-Rerank (RER) framework that decomposes chord generation into three stages: retrieval for style, editing for constraints, and reranking for preferences.
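The three-stage decomposition can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration and not the paper's implementation: the function name `rer_generate`, the generic `context` input, and the toy stages (a fixed library for retrieval, an allow-list for editing, and a distinct-chord count for reranking) are all hypothetical. The point is only that each stage is a separate, swappable function.

```python
from typing import Callable, List

Chord = str
Progression = List[Chord]


def rer_generate(
    context: object,
    retrieve: Callable[[object], List[Progression]],
    edit: Callable[[object, Progression], Progression],
    score: Callable[[object, Progression], float],
) -> Progression:
    """Run the three stages in order; each stage is an independent callable."""
    candidates = retrieve(context)                       # stage 1: style
    feasible = [edit(context, c) for c in candidates]    # stage 2: constraints
    return max(feasible, key=lambda c: score(context, c))  # stage 3: preferences


# Toy stand-ins for the three stages (hypothetical, for illustration only).
LIBRARY: List[Progression] = [
    ["C", "F", "G", "C"],
    ["C", "X", "G", "C"],   # "X" plays the role of an infeasible chord
    ["Am", "F", "C", "G"],
]
ALLOWED = {"C", "F", "G", "Am"}


def toy_retrieve(context: object) -> List[Progression]:
    # A real retriever would search a corpus; here the library is returned as-is.
    return [list(p) for p in LIBRARY]


def toy_edit(context: object, prog: Progression) -> Progression:
    # Replace any chord outside the allow-list with "C", leaving the rest untouched.
    return [ch if ch in ALLOWED else "C" for ch in prog]


def toy_score(context: object, prog: Progression) -> float:
    # Soft preference: favor progressions with more distinct chords.
    return float(len(set(prog)))


best = rer_generate(None, toy_retrieve, toy_edit, toy_score)
print(best)
```

Because the stages only meet through their function signatures, replacing `toy_score` with a different preference function changes the final choice without touching retrieval or editing.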

If this is right

- Each stage can be adjusted independently to tune the output characteristics without retraining the whole system.

- The separation makes the generation process more interpretable, as the contribution of each component to the final chord is clear.

- Ablation studies indicate that all three stages play complementary roles in achieving creative exploration while satisfying constraints.

- Subjective evaluations confirm the objective improvements in perceived quality and adherence to musical rules.

Where Pith is reading between the lines

- The modular structure could extend to other constrained music tasks such as melody or rhythm generation where style and rules must be balanced.

- Explicit stages allow easier insertion of domain knowledge or user controls specifically into the editing or reranking steps.

- Learned components could replace any one stage while retaining the overall decomposition benefits.

Load-bearing premise

That the retrieval, editing, and reranking stages can be implemented separately without their combination introducing inconsistencies or new trade-offs that cancel out the benefits of decomposition.

What would settle it

A well-tuned single end-to-end model that matches or exceeds the decomposed system on objective diversity and feasibility scores and on listener ratings, using the same test sets, would refute the advantage of explicit separation.

Original abstract

Chord generation is an inherently constrained creative task that requires balancing stylistic diversity with music-theoretic feasibility. Existing approaches typically entangle candidate generation and constraint enforcement within a single model, making the diversity-feasibility trade-off difficult to control and interpret. In this work, we approach chord generation from a system-level perspective, introducing a Retrieval-Edit-Rerank (RER) framework that decomposes the task into three explicit stages: i) retrieval, which defines a stylistically plausible candidate space; ii) editing, which enforces music-theoretic feasibility through minimal modifications; and iii) reranking, which resolves soft preferences among feasible candidates. This separation provides a controllable pipeline, where each component addresses a distinct aspect of the generation process, thereby enhancing both the interpretability and adjustability of the output chords. Through objective metrics and subjective evaluation, our decomposed system outperforms all end-to-end chord generation baselines in balancing chord diversity and music-theoretic feasibility. Ablation studies further confirm the complementary roles of each stage in creative exploration and constraint satisfaction.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces a Retrieval-Edit-Rerank (RER) framework for chord generation that decomposes the task into three stages: retrieval to define a stylistically plausible candidate space, editing to enforce music-theoretic feasibility via minimal modifications, and reranking to resolve soft preferences among feasible candidates. The central claim is that this explicit decomposition provides better control and interpretability than entangled end-to-end models, leading to superior performance in balancing chord diversity and feasibility. The authors support this with objective metrics, subjective human evaluation, and ablation studies demonstrating the complementary contributions of each stage.

Significance. If the empirical results hold, the work offers a meaningful advance in controllable music generation by treating the diversity-feasibility trade-off as a system-level design problem rather than an implicit optimization target. The decomposition into stylistically grounded retrieval, constraint-focused editing, and preference-based reranking improves interpretability and adjustability, which could generalize to other constrained creative tasks. The inclusion of ablation studies that isolate stage roles is a positive methodological feature that strengthens the case for the framework's utility.

Minor comments (2)

- The abstract states that the system 'outperforms all end-to-end chord generation baselines' but does not name the specific baselines or report the magnitude of improvements on the objective metrics; adding these details would strengthen the summary of results.

- The description of the editing stage as enforcing feasibility 'through minimal modifications' would benefit from a brief concrete example or pseudocode in the methods section to illustrate how the minimal-change criterion is operationalized.
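To make the second comment concrete, the following Python sketch shows one way a minimal-change criterion could be operationalized. It is not taken from the paper: the chord vocabulary `VOCAB`, the feasibility rule (the chord must contain the melody note's pitch class), and the cost (size of the symmetric difference between pitch-class sets) are all assumptions chosen for illustration.

```python
from typing import Dict, FrozenSet

# Hypothetical vocabulary: triads as sets of pitch classes (C = 0, ..., B = 11).
VOCAB: Dict[str, FrozenSet[int]] = {
    "C": frozenset({0, 4, 7}),
    "Dm": frozenset({2, 5, 9}),
    "Em": frozenset({4, 7, 11}),
    "F": frozenset({5, 9, 0}),
    "G": frozenset({7, 11, 2}),
    "Am": frozenset({9, 0, 4}),
}


def is_feasible(chord: str, melody_pc: int) -> bool:
    # Assumed hard rule: the chord must contain the melody's pitch class.
    return melody_pc in VOCAB[chord]


def edit_cost(a: str, b: str) -> int:
    # Number of pitch classes that differ between the two chords.
    return len(VOCAB[a] ^ VOCAB[b])


def minimal_edit(chord: str, melody_pc: int) -> str:
    """Return the feasible chord closest to `chord`, or `chord` itself if already feasible."""
    if is_feasible(chord, melody_pc):
        return chord
    feasible = [c for c in VOCAB if is_feasible(c, melody_pc)]
    if not feasible:
        return chord  # no feasible option in this vocabulary; leave unchanged
    # Ties on cost are broken alphabetically so the result is deterministic.
    return min(feasible, key=lambda c: (edit_cost(chord, c), c))


print(minimal_edit("C", 4))  # already feasible: melody E is in C major
print(minimal_edit("C", 9))  # melody A: nearest feasible chord to C
print(minimal_edit("G", 5))  # melody F: nearest feasible chord to G
```

Under these assumptions a C chord against a melody A is edited to Am, which shares two of its three pitch classes with C, rather than to F or Dm, which share fewer.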

Simulated Author's Rebuttal

We thank the referee for the positive and constructive review. The summary accurately reflects the motivation and contributions of the RER framework, and we appreciate the recognition of its potential for controllable music generation and the value of the ablation studies. The minor revision recommendation is noted.

Circularity Check

No significant circularity

The paper introduces a high-level system architecture decomposing chord generation into retrieval (candidate space), editing (feasibility via minimal changes), and reranking (preference resolution) stages. All performance claims rest on empirical comparisons to baselines, objective metrics, subjective evaluations, and ablation studies confirming complementary stage roles. No equations, derivations, fitted parameters renamed as predictions, or self-citation chains appear in the provided text; the construction is self-contained against external benchmarks with no reduction of outputs to inputs by definition.

