
GRASP -- Graph-Based Anomaly Detection Through Self-Supervised Classification

Pith reviewed 2026-05-11 02:59 UTC · model grok-4.3

The pith

GRASP detects anomalous processes by learning to predict their executables from two-hop provenance graph neighborhoods and flagging prediction errors.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

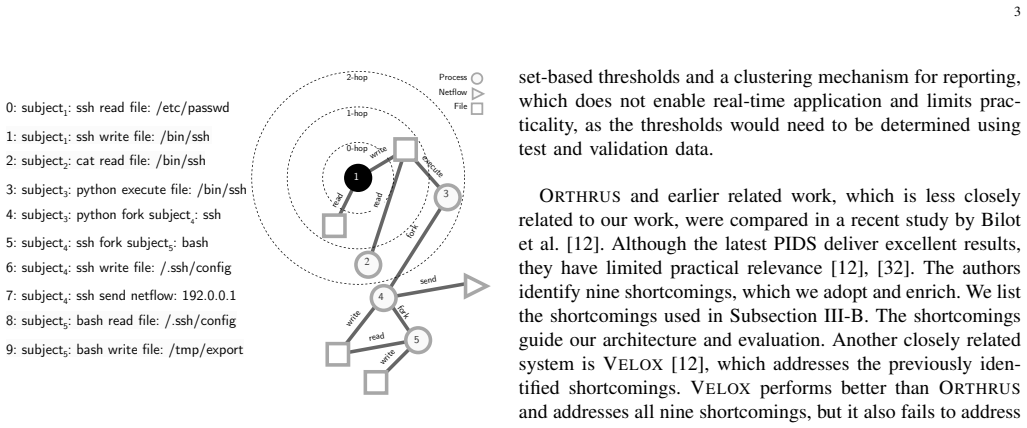

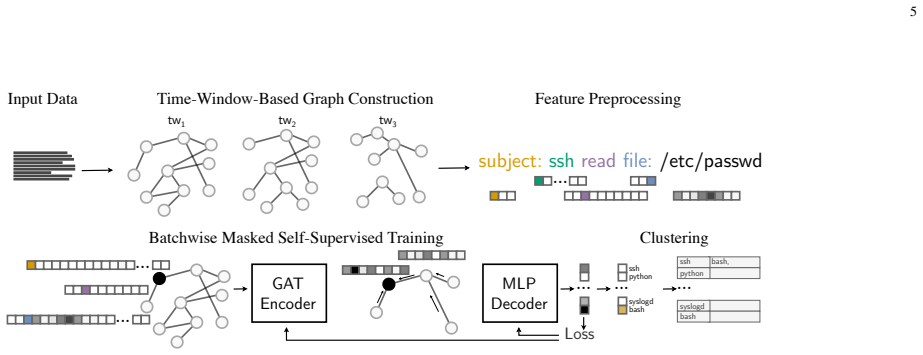

GRASP masks the executable information of process nodes in provenance graphs and learns to infer the correct executable from each process's two-hop neighborhood. Processes whose executables the trained model misclassifies are marked as anomalies. This removes the need for predefined or subset-determined thresholds, letting the system capture normal behavior patterns while remaining robust to interference and unknown activities. On the DARPA TC and OpTC datasets, the approach detects all documented attacks on those datasets where executable behavior is learnable. It also identifies potentially malicious anomalies that the ground truth does not record as attacks.

What carries the argument

Masked self-supervised classification that predicts a process executable from its two-hop provenance neighborhood, with misclassifications treated as anomalies.
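The following is a minimal Python sketch of this mechanism. It illustrates the idea under stated assumptions and is not GRASP's implementation. The paper trains a graph neural network; the sketch substitutes a multinomial naive Bayes classifier over a bag of neighborhood tokens so that it runs on the standard library alone. The graph schema (node `type` and `name` attributes), every helper name, and the toy file and socket names are hypothetical. The sketch also hides every process executable in the tokens, not only the target's, so that labels cannot leak through neighboring processes. This page does not say whether GRASP does the same.

```python
import math
from collections import Counter, defaultdict


def build_graph(edges):
    """Undirected adjacency sets from (u, v) pairs (edge direction ignored for simplicity)."""
    adj = defaultdict(set)
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    return adj


def token(attrs, node, hop):
    """Describe a neighbor by hop, type and name; process executables are always hidden."""
    kind = attrs[node]["type"]
    return (hop, kind, None if kind == "process" else attrs[node]["name"])


def two_hop_tokens(adj, attrs, v):
    """Bag of tokens for the one- and two-hop neighborhood of v (v's executable is masked)."""
    one = set(adj[v])
    two = set().union(*(adj[u] for u in one)) - one - {v}
    return Counter([token(attrs, u, 1) for u in one] + [token(attrs, w, 2) for w in two])


class NeighborhoodNB:
    """Multinomial naive Bayes over neighborhood tokens (a stand-in for the paper's GNN)."""

    def fit(self, samples):
        self.priors = Counter(label for _, label in samples)
        self.counts = defaultdict(Counter)
        for toks, label in samples:
            self.counts[label].update(toks)
        self.vocab = {t for toks, _ in samples for t in toks}
        return self

    def predict(self, toks):
        n, size = sum(self.priors.values()), len(self.vocab)

        def score(label):  # log prior + Laplace-smoothed log likelihood of the tokens
            total = sum(self.counts[label].values())
            return math.log(self.priors[label] / n) + sum(
                k * math.log((self.counts[label][t] + 1) / (total + size)) for t, k in toks.items())

        return max(self.priors, key=score)


def detect(model, adj, attrs):
    """Flag every process whose masked executable is predicted incorrectly (no threshold)."""
    flagged = []
    for v, a in attrs.items():
        if a["type"] == "process":
            pred = model.predict(two_hop_tokens(adj, attrs, v))
            if pred != a["name"]:
                flagged.append((v, a["name"], pred))
    return flagged


def proc(exe):
    return {"type": "process", "name": exe}


OBJECTS = {
    "cfg": {"type": "file", "name": "/etc/ssh/sshd_config"},
    "p22": {"type": "socket", "name": "0.0.0.0:22"},
    "rc": {"type": "file", "name": "/home/u/.bashrc"},
    "tmp": {"type": "file", "name": "/tmp/x"},
}

# Training graph: normal behavior only.
train_attrs = {**OBJECTS, "p1": proc("sshd"), "p2": proc("sshd"), "p3": proc("bash"), "p4": proc("bash")}
train_adj = build_graph([("p1", "cfg"), ("p1", "p22"), ("p2", "cfg"), ("p2", "p22"),
                         ("p3", "rc"), ("p3", "tmp"), ("p4", "rc"), ("p4", "tmp")])
model = NeighborhoodNB().fit([(two_hop_tokens(train_adj, train_attrs, v), a["name"])
                              for v, a in train_attrs.items() if a["type"] == "process"])

# Test graph: q2 claims to be bash but behaves like sshd.
test_attrs = {**OBJECTS, "q1": proc("bash"), "q2": proc("bash"), "q3": proc("sshd")}
test_adj = build_graph([("q1", "rc"), ("q1", "tmp"), ("q2", "cfg"), ("q2", "p22"),
                        ("q3", "cfg"), ("q3", "p22")])
flagged = detect(model, test_adj, test_attrs)
print(flagged)  # [('q2', 'bash', 'sshd')]
```

On this toy data, one test process is labeled `bash` but reads the SSH daemon's configuration and listens on port 22. The model predicts `sshd` for it, so it is flagged. The other two test processes are classified correctly. No score threshold is involved: the only decision is whether the prediction matches the observed executable.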

If this is right

- The system identifies all documented attacks on datasets where executable behaviors are learnable.

- GRASP surfaces potentially malicious anomalies beyond those labeled as attacks in existing documentation.

- Detection remains stable without reliance on manually chosen thresholds.

- Performance exceeds prior provenance-based systems on the evaluated DARPA TC and OpTC traces.

Where Pith is reading between the lines

- The two-hop neighborhood restriction may limit detection of longer-range or multi-stage attack chains that require wider context.

- If new executables appear after training, the model would need retraining or an explicit out-of-distribution mechanism to avoid false positives.

- The approach could extend to other graph-structured security logs where entity identity prediction serves as a proxy for normality.
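The out-of-distribution point can be made concrete. A closed-set classifier can only output executables it saw during training. A process running a new executable is therefore misclassified by construction and would always be flagged. The sketch below separates that case from an ordinary misclassification. It is a hypothetical extension, not part of GRASP, and the function names and outcome labels are illustrative.

```python
from collections import Counter


def triage(true_exe, predicted_exe, known_exes):
    """Three-way outcome for one process (hypothetical extension, not part of GRASP)."""
    if true_exe not in known_exes:
        # A closed-set classifier can never predict an executable it was not trained on,
        # so without this branch such a process would always count as an anomaly.
        return "unknown-executable"
    return "normal" if predicted_exe == true_exe else "anomaly"


def triage_all(records, known_exes):
    """Count outcomes over (true_exe, predicted_exe) pairs."""
    return Counter(triage(t, p, known_exes) for t, p in records)


known = {"bash", "sshd"}
result = triage_all([("bash", "bash"), ("bash", "sshd"), ("newtool", "bash")], known)
print(result)
```

Processes in the `unknown-executable` bucket could then be reviewed or used to trigger retraining instead of being reported as anomalies.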

Load-bearing premise

That normal executable behavior is stable enough to be learned from two-hop neighborhoods, and that prediction errors reliably indicate anomalous activity rather than merely noisy or incomplete activity.

What would settle it

Either of two outcomes would settle it. The first is a controlled experiment in which a known benign executable, subjected to documented interference that does not make it malicious, is nonetheless misclassified by the model. The second is a documented attack whose processes perfectly mimic normal neighborhood patterns, so that their executables are predicted correctly and the attack goes unflagged.

Original abstract

Advanced persistent threat (APT) attacks remain difficult to detect due to their stealth, adaptability, and use of legitimate system components. Provenance-based intrusion detection systems (PIDS) offer a promising defense by capturing detailed relationships between system components and actions. However, current PIDS rely on predefined or subset-determined thresholds, which limit detection stability and the ability to detect any anomalous behavior in general. Furthermore, related work often neglects the role of process executables, which describe system activity by interacting through a process with files, network components, and other processes. We introduce GRASP, a PIDS based on masked self-supervised classification. GRASP masks the executable information of processes and learns to infer it from their two-hop provenance graph neighborhood, marking misclassified processes as anomalies. It captures behavior patterns for the learned executables without thresholding, making it robust against interference and unknown activities. Evaluations on the DARPA TC and OpTC datasets demonstrate that GRASP consistently detects anomalous behavior, including known attack-related activities, outperforming existing systems. Our PIDS identifies all documented attacks on datasets where the behavior of executables is learnable. In addition, compared to existing systems, GRASP uncovers potentially malicious anomalous behavior not labeled as an attack in the documentation.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

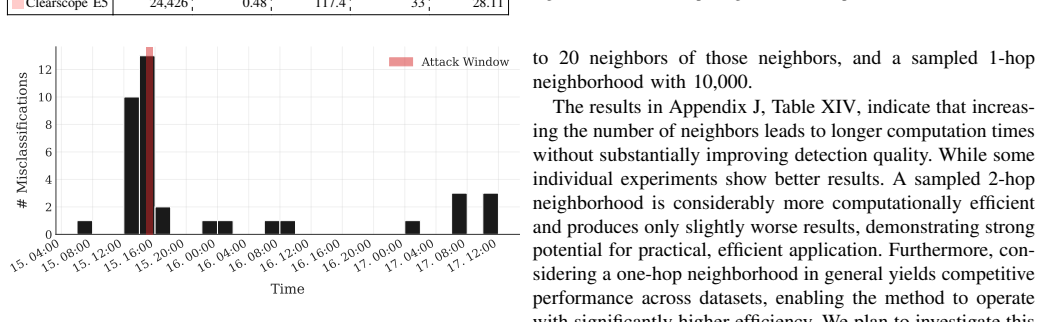

Summary. GRASP is a provenance-based intrusion detection system (PIDS) that masks the executable label of processes and trains a self-supervised classifier to infer it from the two-hop neighborhood in the provenance graph; misclassifications are treated as anomalies. The approach is presented as threshold-free and robust to interference. On the DARPA TC and OpTC datasets the paper claims consistent outperformance over prior systems, detection of all documented attacks on datasets where executable behavior is learnable, and discovery of additional unlabeled malicious anomalies.

Significance. If the core assumption holds—that normal executable behaviors produce stable, high-accuracy predictions from two-hop provenance neighborhoods—the method would remove the threshold-tuning problem that limits many current PIDS and provide a more generalizable detector. The self-supervised formulation avoids circularity with anomaly labels, which is a methodological strength. However, the practical significance cannot be assessed without evidence that the classifier actually achieves reliable accuracy on normal data and that neighborhood overlap does not produce excessive false positives.

major comments (3)

- [Abstract and Evaluation section] The claims of 'consistent outperformance,' 'identifies all documented attacks,' and discovery of additional malicious anomalies are stated without any reported classification accuracy, precision/recall, or false-positive rate on the normal subsets of the DARPA TC and OpTC datasets, leaving the central learnability assumption unverified.

- [Method section] The self-supervised task is defined as predicting the masked executable from the two-hop neighborhood, yet no analysis of neighborhood uniqueness, overlap between distinct executables, or robustness to interference is provided; without such analysis it is unclear whether misclassification reliably signals anomaly rather than normal variation.

- [Evaluation section] No model architecture, loss function, hyperparameters, training procedure, or accuracy metrics on held-out normal data are reported, which prevents assessment of whether the classifier learns stable mappings as required by the detection mechanism.

minor comments (2)

- [Abstract] The acronym PIDS is introduced in the abstract before its expansion; a parenthetical definition on first use would improve readability.

- A diagram showing an example provenance graph, the masking step, and the two-hop neighborhood used for prediction would clarify the core mechanism.

Simulated Author's Rebuttal

We thank the referee for the detailed and constructive feedback on our manuscript. We address each major comment point by point below, providing clarifications and committing to revisions where the manuscript can be strengthened without misrepresenting our results.

Point-by-point responses

Referee: [Abstract and Evaluation section] The claims of 'consistent outperformance,' 'identifies all documented attacks,' and discovery of additional malicious anomalies are stated without any reported classification accuracy, precision/recall, or false-positive rate on the normal subsets of the DARPA TC and OpTC datasets, leaving the central learnability assumption unverified.

Authors: We agree that explicit reporting of classification accuracy, precision, recall, and false-positive rates on normal data subsets would more directly verify the learnability assumption underlying our detection mechanism. The outperformance and attack-detection claims in the abstract and evaluation rest on two things. The first is the number of documented attacks identified (all of them, on datasets where executable behavior is learnable). The second is the additional unlabeled anomalies found relative to prior PIDS. In the revised manuscript, we will add these metrics, computed on held-out normal portions of the DARPA TC and OpTC datasets, to the Evaluation section. This will strengthen support for the assumption that normal behaviors produce stable, high-accuracy predictions. revision: yes
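The sketch below shows one way the promised metrics could be computed. It assumes the held-out split is attack-free and that the classifier's predictions are available as `(true_exe, predicted_exe)` pairs; both are assumptions, and the function name is hypothetical. Because the split contains no attacks, every misclassification there is a false alarm. The process-level false-positive rate is therefore one minus accuracy. Per-executable precision and recall show which executables are hard to learn.

```python
def normal_data_report(pairs):
    """Metrics on a held-out, attack-free split.

    pairs: (true_exe, predicted_exe) for every process in the split. Since the split is
    assumed to contain no attacks, each misclassification is a false positive.
    """
    accuracy = sum(t == p for t, p in pairs) / len(pairs)
    per_exe = {}
    for exe in sorted({t for t, _ in pairs} | {p for _, p in pairs}):
        tp = sum(t == exe and p == exe for t, p in pairs)
        fp = sum(t != exe and p == exe for t, p in pairs)
        fn = sum(t == exe and p != exe for t, p in pairs)
        per_exe[exe] = {
            "precision": tp / (tp + fp) if tp + fp else 0.0,
            # On attack-free data, recall equals the per-executable accuracy.
            "recall": tp / (tp + fn) if tp + fn else 0.0,
        }
    return {"accuracy": accuracy, "false_positive_rate": 1 - accuracy, "per_executable": per_exe}


report = normal_data_report([("bash", "bash"), ("bash", "bash"), ("bash", "sshd"), ("sshd", "sshd")])
print(report["accuracy"], report["false_positive_rate"])  # 0.75 0.25
```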

Referee: [Method section] The self-supervised task is defined as predicting the masked executable from the two-hop neighborhood, yet no analysis of neighborhood uniqueness, overlap between distinct executables, or robustness to interference is provided; without such analysis it is unclear whether misclassification reliably signals anomaly rather than normal variation.

Authors: The referee is correct that the Method section does not include quantitative analysis of two-hop neighborhood uniqueness, overlap across executables, or robustness to interference. Our approach relies on the empirical observation that two-hop provenance neighborhoods enable reliable executable prediction for normal behaviors, as demonstrated by the detection results. To address this gap, we will incorporate an analysis subsection (in Methods or a new Evaluation subsection) that quantifies neighborhood overlap between distinct executables and evaluates robustness via controlled graph perturbations, clarifying the conditions under which misclassifications indicate anomalies. revision: yes
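The sketch below shows one simple way to quantify the neighborhood overlap discussed in this response. It assumes each masked two-hop neighborhood is summarized by a hashable signature, such as a frozenset of `action:object` tokens. The signature format, token strings, and function name are hypothetical. Suppose a signature is produced by more than one executable. Then no classifier that sees only that signature can resolve it, so processes with that signature will be misclassified, and flagged, whether or not they are malicious. Exact-set signatures are coarser than what a GNN sees, so this is only a proxy for what the model can distinguish.

```python
from collections import defaultdict
from itertools import combinations


def overlap_report(samples):
    """samples: (signature, executable) pairs, one per process; a signature is any hashable
    summary of a masked two-hop neighborhood, e.g. a frozenset of tokens."""
    sigs_by_exe = defaultdict(set)  # executable -> signatures it produced
    exes_by_sig = defaultdict(set)  # signature -> executables that produced it
    for sig, exe in samples:
        sigs_by_exe[exe].add(sig)
        exes_by_sig[sig].add(exe)
    # Pairwise Jaccard overlap of the signature sets of distinct executables.
    jaccard = {
        (a, b): len(sigs_by_exe[a] & sigs_by_exe[b]) / len(sigs_by_exe[a] | sigs_by_exe[b])
        for a, b in combinations(sorted(sigs_by_exe), 2)
    }
    # Signatures that cannot be resolved by looking at the signature alone.
    ambiguous = {sig: exes for sig, exes in exes_by_sig.items() if len(exes) > 1}
    return jaccard, ambiguous


jaccard, ambiguous = overlap_report([
    (frozenset({"read:/etc/passwd"}), "login"),
    (frozenset({"read:/etc/passwd"}), "sshd"),
    (frozenset({"listen:22", "read:/etc/passwd"}), "sshd"),
    (frozenset({"read:~/.bashrc"}), "bash"),
])
print(jaccard[("login", "sshd")])  # 0.5
```

Here `login` and `sshd` both produce the `read:/etc/passwd` neighborhood, which gives those two executables a Jaccard overlap of 0.5 and makes that signature ambiguous.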

Referee: [Evaluation section] No model architecture, loss function, hyperparameters, training procedure, or accuracy metrics on held-out normal data are reported, which prevents assessment of whether the classifier learns stable mappings as required by the detection mechanism.

Authors: We acknowledge that the Evaluation section lacks these implementation and validation details, which are important for assessing stable learning and enabling reproducibility. The original manuscript emphasized the self-supervised formulation and empirical detection outcomes rather than low-level model specifics. In the revision, we will add a dedicated subsection describing the graph neural network architecture, the cross-entropy loss for the executable prediction task, all hyperparameters, the training procedure on normal data, and accuracy metrics on held-out normal subsets to confirm that the classifier learns stable mappings. revision: yes
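This page does not specify GRASP's architecture. The sketch below is a hedged illustration of the kind of description the response promises. It uses GraphSAGE-style mean aggregation, chosen here only as a common example. It masks the target process's own features and stacks two layers so that the receptive field is exactly two hops. It then scores the masked node with softmax cross-entropy. The weights, feature encoding, and function names are hypothetical. Training, which would minimize this loss over normal processes by gradient descent, is omitted.

```python
import math


def sage_layer(adj, h, w_self, w_neigh):
    """Mean-aggregation layer: h'_v = relu(W_self h_v + W_neigh * mean of h_u over neighbors u)."""
    dim = len(next(iter(h.values())))
    out = {}
    for v, hv in h.items():
        nbrs = adj.get(v, [])
        mean = [sum(h[u][i] for u in nbrs) / len(nbrs) if nbrs else 0.0 for i in range(dim)]
        out[v] = [
            max(0.0, sum(a * x for a, x in zip(rs, hv)) + sum(b * x for b, x in zip(rn, mean)))
            for rs, rn in zip(w_self, w_neigh)
        ]
    return out


def masked_cross_entropy(adj, feats, target, label, params):
    """Mask the target's features, apply the layers (two layers = two-hop receptive field),
    and return the softmax cross-entropy of the true executable index `label`."""
    h = dict(feats)
    h[target] = [0.0] * len(feats[target])  # masking step: hide the target's own features
    for w_self, w_neigh in params["layers"]:
        h = sage_layer(adj, h, w_self, w_neigh)
    logits = [sum(a * x for a, x in zip(row, h[target])) for row in params["w_out"]]
    top = max(logits)
    log_norm = top + math.log(sum(math.exp(z - top) for z in logits))  # stable log-sum-exp
    return log_norm - logits[label]


# Toy chain a - b - c - d with 2-dimensional features and 3 executable classes.
CHAIN = {"a": ["b"], "b": ["a", "c"], "c": ["b", "d"], "d": ["c"]}
FEATS = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 1.0], "d": [1.0, 0.0]}
IDENTITY = [[1.0, 0.0], [0.0, 1.0]]
PARAMS = {
    "layers": [(IDENTITY, [[0.5, 0.5], [0.5, -0.5]]), (IDENTITY, IDENTITY)],
    "w_out": [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]],
}

loss = masked_cross_entropy(CHAIN, FEATS, "a", 0, PARAMS)
loss_far_change = masked_cross_entropy(CHAIN, dict(FEATS, d=[0.0, 5.0]), "a", 0, PARAMS)
print(loss == loss_far_change)  # True: node d is three hops from a
```

The last lines check the two-hop property directly. Node `d` is three hops from the target `a`, and changing its features leaves the loss unchanged.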

Circularity Check

No significant circularity in GRASP's self-supervised anomaly detection chain

Full rationale

The paper's derivation defines a masked self-supervised classification task on provenance graphs independently of any anomaly labels: the executable is masked and the model learns to infer it from the two-hop neighborhood, with anomalies identified directly as misclassifications. This does not reduce to its inputs by construction, as the training objective and error-based detection rule are not equivalent to any fitted parameter or prior result; the classifier acquires patterns from data, and errors are assessed on instances outside the training objective. No equations, self-citations, uniqueness theorems, or ansatzes are shown to be load-bearing in a way that collapses the central claim. The method is a standard application of self-supervised learning for anomaly detection and remains self-contained against the DARPA datasets.
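The separation described above can be written in symbols. The notation is ours, not the paper's:

- P is the set of process nodes in the training graph.
- y_v is the observed executable of process v.
- E is the set of executables seen in training.
- N_2(v) is the masked two-hop neighborhood of v.

Attack labels appear in neither the training objective nor the detection rule. The rule compares an argmax with the observed label, so no threshold is tuned.

```latex
\begin{aligned}
\mathcal{L}(\theta) &= -\frac{1}{|P|} \sum_{v \in P} \log p_\theta\big(y_v \mid \mathcal{N}_2(v)\big) \\
\hat{y}_v &= \operatorname*{arg\,max}_{c \in \mathcal{E}} \, p_\theta\big(c \mid \mathcal{N}_2(v)\big) \\
\operatorname{anomaly}(v) &= \mathbb{1}\big[\hat{y}_v \neq y_v\big]
\end{aligned}
```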

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: the two-hop neighborhood in a provenance graph contains enough information to predict the executable for normal processes.

Appendix A: Neighborhoods

A higher neighborhood consideration increases the amount of available information for classification. Within GNNs, oversmoothing occurs when neighborhoods become too large. Table VIII shows how neighborhoods behave as neighborhood determination increases. It shoul...
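The neighborhood-size trade-off in this appendix excerpt can be illustrated by how quickly the receptive fields of two different processes converge as the hop count grows. Heavily overlapping receptive fields are a precondition for oversmoothing, where node embeddings become indistinguishable, although overlap alone does not measure oversmoothing. The toy graph and function names are hypothetical and are not taken from Table VIII.

```python
def k_hop(adj, v, k):
    """All nodes within k hops of v, including v itself (breadth-first expansion)."""
    seen, frontier = {v}, {v}
    for _ in range(k):
        frontier = {u for w in frontier for u in adj[w]} - seen
        seen |= frontier
    return seen


def receptive_overlap(adj, a, b, max_k):
    """(k, |N_k(a)|, |N_k(b)|, Jaccard overlap of N_k(a) and N_k(b)) for k = 1..max_k."""
    rows = []
    for k in range(1, max_k + 1):
        na, nb = k_hop(adj, a, k), k_hop(adj, b, k)
        rows.append((k, len(na), len(nb), len(na & nb) / len(na | nb)))
    return rows


# Hypothetical graph: two processes sharing a parent and a library, each with its own file,
# and each file also touched by another process.
TOY_GRAPH = {
    "init": ["p1", "p2"], "libc": ["p1", "p2"],
    "p1": ["init", "libc", "f1"], "p2": ["init", "libc", "f2"],
    "f1": ["p1", "q1"], "f2": ["p2", "q2"],
    "q1": ["f1"], "q2": ["f2"],
}
rows = receptive_overlap(TOY_GRAPH, "p1", "p2", 4)
for row in rows:
    print(row)
```

On this toy graph, the two processes still differ at two hops (overlap 0.5) but cover exactly the same nodes at four hops (overlap 1.0).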
