Recognition: no theorem link

Zero-determinant Strategy for Moving Target Defense: Existence, Performance, and Computation

Pith reviewed 2026-05-11 02:14 UTC · model grok-4.3

The pith

Zero-determinant strategies achieve the performance upper bound of strong Stackelberg equilibria in repeated moving target defense games while requiring substantially lower computation.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

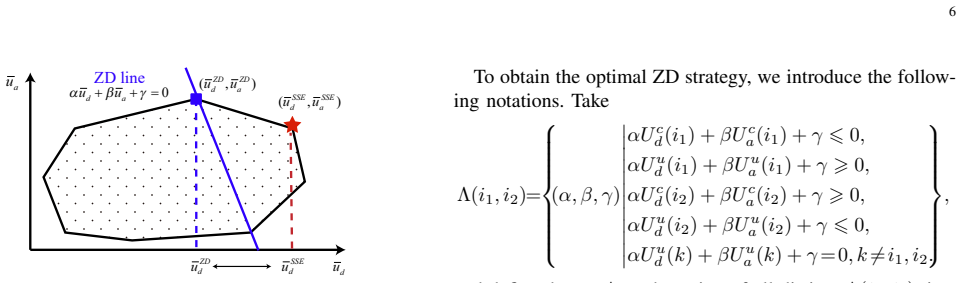

In repeated games that model moving target defense, a zero-determinant strategy exists if and only if a linear condition on the payoff matrices holds; under that condition the strategy can unilaterally enforce any feasible linear relation between the defender’s and attacker’s long-run average payoffs, and the best such relation attainable by the defender yields exactly the same expected payoff as the strong Stackelberg equilibrium.

What carries the argument

The zero-determinant strategy, which enforces a fixed linear combination of the two players’ expected payoffs independent of the opponent’s choices.

If this is right

- Optimal ZD parameters can be recovered by solving one of the two formulated programs, depending on whether the existence condition is strict or degenerate.

- The algorithm that computes these ZD strategies runs in lower time complexity than standard methods for finding the strong Stackelberg equilibrium.

- The performance guarantee extends directly to any practical MTD instance whose payoff structure satisfies the derived existence condition.

- Numerical validation on two application domains demonstrates that the computed ZD policies achieve the predicted upper-bound performance.

Where Pith is reading between the lines

- Because the existence condition is stated directly on the payoff matrices, it can be checked once per MTD application before any online computation begins.

- The same ZD construction may be reusable across related security domains that admit repeated-game formulations, such as resource allocation against persistent attackers.

- Lower per-instance computation opens the possibility of recomputing ZD parameters whenever the set of targets or their values changes.

Load-bearing premise

The defender-attacker interaction can be modeled as an infinitely repeated game in which the defender can enforce a zero-determinant strategy.

What would settle it

A concrete repeated MTD instance whose payoff matrices satisfy the stated existence condition yet whose best zero-determinant strategy yields a strictly lower defender payoff than the strong Stackelberg equilibrium value.

Figures

read the original abstract

Moving Target Defense (MTD) is commonly formulated as a repeated security game to mitigate persistent threats. Although the strong Stackelberg equilibrium (SSE) characterizes the defender's optimal strategy in the leader-follower framework, computing the SSE often incurs high computational complexity, which significantly limits its practical deployment in MTD problems with multiple targets. This paper proposes adopting a zero-determinant (ZD) strategy for constructing an MTD strategy that achieves both high defensive performance and substantially low computational complexity. We first derive a necessary and sufficient condition for the existence of ZD strategies and investigate the performance of ZD strategies, which shows their upper-bound performance matches that of the SSE strategy. We then formulate two programs to find the optimal ZD strategy parameters under different conditions. Moreover, we design an algorithm to compute the proposed ZD strategies, along with the computational complexity analysis in comparison with the traditional SSE computation. Finally, we conduct experiments on two practical applications to verify our results.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes zero-determinant (ZD) strategies for Moving Target Defense (MTD) formulated as repeated security games. It derives a necessary and sufficient condition for the existence of ZD strategies, shows that the upper-bound performance of ZD strategies matches that of the strong Stackelberg equilibrium (SSE), formulates two optimization programs to compute optimal ZD parameters, presents an algorithm for ZD strategy computation together with a complexity comparison to SSE, and validates the results via experiments on two practical MTD applications.

Significance. If the derivations hold, the work is significant for providing a lower-complexity alternative to SSE computation while preserving the same performance upper bound in multi-target MTD settings. The explicit existence condition, performance-matching result, and complexity analysis are strengths that could enable more practical deployment; the experiments on real applications further support applicability.

minor comments (3)

- [§3] §3 (existence condition): the theorem statement would benefit from an explicit enumeration of all symbols and payoff-matrix assumptions immediately before or within the statement to improve readability.

- [§5] §5 (optimization programs): clarify whether the two programs correspond to different feasibility regimes or different objective functions, and state the relationship between the resulting ZD parameters and the SSE payoff explicitly.

- [Experiments] Experiments section: the description of the two practical applications should include the concrete payoff matrices or at least the ranges used, so that the performance numbers can be reproduced from the stated ZD parameters.

Simulated Author's Rebuttal

We thank the referee for the positive review, the assessment of significance, and the recommendation for minor revision. We appreciate the recognition that the existence condition, performance equivalence to strong Stackelberg equilibrium, complexity analysis, and experimental validation on practical MTD applications constitute strengths of the work.

Circularity Check

No significant circularity; derivation self-contained

full rationale

The paper derives a necessary and sufficient condition for ZD strategy existence in the repeated MTD game and shows via analysis that ZD upper-bound performance equals the SSE value. These are presented as independent mathematical steps (existence condition, performance investigation, optimization programs, complexity comparison) rather than reductions to fitted inputs, self-definitions, or self-citation chains. The repeated-game modeling is standard and externally motivated; no load-bearing step reduces by construction to its own outputs or prior author results in a circular manner. The central claims retain independent content from the stated assumptions and derivations.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Towards a theory of moving target defense,

R. Zhuang, S. A. DeLoach, and X. Ou, “Towards a theory of moving target defense,” inProceedings of the first ACM Workshop on Moving Target Defense, 2014, pp. 31–40

work page 2014

-

[2]

A signaling game model for moving target defense,

X. Feng, Z. Zheng, D. Cansever, A. Swami, and P. Mohapatra, “A signaling game model for moving target defense,” inProceedings of IEEE Conference on Computer Communications. IEEE, 2017, pp. 1– 9

work page 2017

-

[3]

An SDN-enabled proactive defense framework for DDoS mitigation in IoT networks,

Y . Zhou, G. Cheng, and S. Yu, “An SDN-enabled proactive defense framework for DDoS mitigation in IoT networks,”IEEE Transactions on Information Forensics and Security, vol. 16, pp. 5366–5380, 2021

work page 2021

-

[4]

Assessing the effectiveness of moving target defenses using security models,

J. B. Hong and D. S. Kim, “Assessing the effectiveness of moving target defenses using security models,”IEEE Transactions on Dependable and Secure Computing, vol. 13, no. 2, pp. 163–177, 2015

work page 2015

-

[5]

A survey of moving target defenses for network security,

S. Sengupta, A. Chowdhary, A. Sabur, A. Alshamrani, D. Huang, and S. Kambhampati, “A survey of moving target defenses for network security,”IEEE Communications Surveys & Tutorials, vol. 22, no. 3, pp. 1909–1941, 2020

work page 1909

-

[6]

Y . Yue, S. Tan, Y . Tao, N. Liu, and J. L ¨u, “A non-Markovian game approach on labeled attack graphs for security decision-making in industrial control systems,”IEEE Transactions on Information Forensics and Security, 2025

work page 2025

-

[7]

Y . Shen, C. Shepherd, C. M. Ahmed, S. Shen, and S. Yu, “SGD3QN: Joint stochastic games and dueling double deep Q-networks for de- fending malware propagation in edge intelligence-enabled Internet of Things,”IEEE Transactions on Information Forensics and Security, vol. 19, pp. 6978–6990, 2024

work page 2024

-

[8]

Rl and fingerprinting to select moving target defense mechanisms for zero-day attacks in IoT,

A. H. Celdr ´an, P. M. S. S´anchez, J. von der Assen, T. Schenk, G. Bovet, G. M. P´erez, and B. Stiller, “Rl and fingerprinting to select moving target defense mechanisms for zero-day attacks in IoT,”IEEE Transactions on Information Forensics and Security, vol. 19, pp. 5520–5529, 2024

work page 2024

-

[9]

Herd accountability of privacy- preserving algorithms: A Stackelberg game approach,

Y .-T. Yang, T. Zhang, and Q. Zhu, “Herd accountability of privacy- preserving algorithms: A Stackelberg game approach,”IEEE Transac- tions on Information Forensics and Security, 2025

work page 2025

-

[10]

Consistency of Stackelberg and Nash equilibria in three-player leader-follower games,

G. Xu, G. Chen, Z. Cheng, Y . Hong, and H. Qi, “Consistency of Stackelberg and Nash equilibria in three-player leader-follower games,” IEEE Transactions on Information Forensics and Security, vol. 19, pp. 5330–5344, 2024

work page 2024

-

[11]

H. Fang, L. Xu, and X. Wang, “Coordinated multiple-relays based physical-layer security improvement: A single-leader multiple-followers stackelberg game scheme,”IEEE Transactions on Information Forensics and Security, vol. 13, no. 1, pp. 197–209, 2017

work page 2017

-

[12]

D. Korzhyk, Z. Yin, C. Kiekintveld, V . Conitzer, and M. Tambe, “Stackelberg vs. Nash in security games: An extended investigation of interchangeability, equivalence, and uniqueness,”Journal of Artificial Intelligence Research, vol. 41, pp. 297–327, 2011

work page 2011

-

[13]

Computing Stackelberg equilibria in discounted stochastic games,

Y . V orobeychik and S. Singh, “Computing Stackelberg equilibria in discounted stochastic games,” inProceedings of the AAAI Conference on Artificial Intelligence, vol. 26, no. 1, 2012, pp. 1478–1484

work page 2012

-

[14]

Single-leader-multiple-followers Stackelberg security game with hypergame framework,

Z. Cheng, G. Chen, and Y . Hong, “Single-leader-multiple-followers Stackelberg security game with hypergame framework,”IEEE Trans- actions on Information Forensics and Security, vol. 17, pp. 954–969, 2022

work page 2022

-

[15]

A Stackelberg game and markov modeling of moving target defense,

X. Feng, Z. Zheng, P. Mohapatra, and D. Cansever, “A Stackelberg game and markov modeling of moving target defense,” inProceedings of the International Conference on Decision and Game Theory for Security. Springer, 2017, pp. 315–335

work page 2017

-

[16]

Optimal timing of moving target defense: A Stackelberg game model,

H. Li and Z. Zheng, “Optimal timing of moving target defense: A Stackelberg game model,” inProceedings of the IEEE Military Com- munications Conference. IEEE, 2019, pp. 1–6

work page 2019

-

[17]

Robust moving target defense against unknown attacks: A meta- reinforcement learning approach,

——, “Robust moving target defense against unknown attacks: A meta- reinforcement learning approach,” inProceedings of the International Conference on Decision and Game Theory for Security. Springer, 2022, pp. 107–126

work page 2022

-

[18]

EI-MTD: Moving target defense for edge intelligence against 11 adversarial attacks,

Y . Qian, Y . Guo, Q. Shao, J. Wang, B. Wang, Z. Gu, X. Ling, and C. Wu, “EI-MTD: Moving target defense for edge intelligence against 11 adversarial attacks,”ACM Transactions on Privacy and Security, vol. 25, no. 3, pp. 1–24, 2022

work page 2022

-

[19]

Complexity of branch-and-bound and cutting planes in mixed-integer optimization,

A. Basu, M. Conforti, M. Di Summa, and H. Jiang, “Complexity of branch-and-bound and cutting planes in mixed-integer optimization,” Mathematical Programming, vol. 198, no. 1, pp. 787–810, 2023

work page 2023

-

[20]

Complexity of optimizing over the integers,

A. Basu, “Complexity of optimizing over the integers,”Mathematical Programming, vol. 200, no. 2, pp. 739–780, 2023

work page 2023

-

[21]

Information complexity of mixed-integer convex optimization,

A. Basu, H. Jiang, P. Kerger, and M. Molinaro, “Information complexity of mixed-integer convex optimization,”Mathematical Programming, vol. 210, no. 1, pp. 3–45, 2025

work page 2025

-

[22]

Iterated prisoner’s dilemma contains strategies that dominate any evolutionary opponent,

W. H. Press and F. J. Dyson, “Iterated prisoner’s dilemma contains strategies that dominate any evolutionary opponent,”Proceedings of the National Academy of Sciences, vol. 109, no. 26, pp. 10 409–10 413, 2012

work page 2012

-

[23]

Extortion can outperform generosity in the iterated prisoner’s dilemma,

Z. Wang, Y . Zhou, J. W. Lien, J. Zheng, and B. Xu, “Extortion can outperform generosity in the iterated prisoner’s dilemma,”Nature Communications, vol. 7, no. 1, pp. 1–7, 2016

work page 2016

-

[24]

Evolution of extortion in iterated prisoner’s dilemma games,

C. Hilbe, M. A. Nowak, and K. Sigmund, “Evolution of extortion in iterated prisoner’s dilemma games,”Proceedings of the National Academy of Sciences, vol. 110, no. 17, pp. 6913–6918, 2013

work page 2013

-

[25]

Solving the crowdsourc- ing dilemma using the zero-determinant strategies,

Q. Hu, S. Wang, X. Cheng, L. Ma, and R. Bie, “Solving the crowdsourc- ing dilemma using the zero-determinant strategies,”IEEE Transactions on Information Forensics and Security, vol. 15, pp. 1778–1789, 2019

work page 2019

-

[26]

Strategic signaling for utility control in audit games,

J. Chen, Q. Hu, and H. Jiang, “Strategic signaling for utility control in audit games,”Computers & Security, vol. 118, p. 102721, 2022

work page 2022

-

[27]

Cooperation and optimization of multi-pool mining game with zero determinant alliance,

C. Tang, B. Yang, Y . Zhang, F. Lin, and G. Chen, “Cooperation and optimization of multi-pool mining game with zero determinant alliance,” IEEE Transactions on Network Science and Engineering, vol. 11, no. 5, pp. 4965–4978, 2024

work page 2024

-

[28]

Zero-determinant incentive strategy for transaction trading in blockchain system,

L. Feng, C. Hua, and J. Hong, “Zero-determinant incentive strategy for transaction trading in blockchain system,”IEEE Transactions on Network and Service Management, vol. 22, no. 3, pp. 2311–2322, 2025

work page 2025

-

[29]

Moving target defense for internet of things based on the zero-determinant theory,

S. Wang, H. Shi, Q. Hu, B. Lin, and X. Cheng, “Moving target defense for internet of things based on the zero-determinant theory,”IEEE Internet of Things Journal, vol. 7, no. 1, pp. 661–668, 2019

work page 2019

-

[30]

Stackelberg security games: Looking beyond a decade of success,

A. Sinha, F. Fang, B. An, C. Kiekintveld, and M. Tambe, “Stackelberg security games: Looking beyond a decade of success,” inProceedings of the Twenty-Seventh International Joint Conference on Artificial Intel- ligence. IJCAI, 2018, pp. 5494–5501

work page 2018

-

[31]

Modeling and analysis of leaky deception using signaling games with evidence,

J. Pawlick, E. Colbert, and Q. Zhu, “Modeling and analysis of leaky deception using signaling games with evidence,”IEEE Transactions on Information Forensics and Security, vol. 14, no. 7, pp. 1871–1886, 2018

work page 2018

-

[32]

Spatial-temporal moving target defense: A Markov Stackelberg game model,

H. Li, W. Shen, and Z. Zheng, “Spatial-temporal moving target defense: A Markov Stackelberg game model,” inProceedings of the International Conference on Autonomous Agents and MultiAgent Systems, 2020, pp. 717–725

work page 2020

-

[33]

Deception in finitely repeated security games,

T. H. Nguyen, Y . Wang, A. Sinha, and M. P. Wellman, “Deception in finitely repeated security games,” inProceedings of the AAAI Conference on Artificial Intelligence, vol. 33, no. 01, 2019, pp. 2133–2140

work page 2019

-

[34]

Zero-determinant strategies in repeated multi- player social dilemmas with discounted payoffs,

A. Govaert and M. Cao, “Zero-determinant strategies in repeated multi- player social dilemmas with discounted payoffs,”IEEE Transactions on Automatic Control, vol. 66, no. 10, pp. 4575–4588, 2021

work page 2021

-

[35]

Extortion strategies resist disciplining when higher competitiveness is rewarded with extra gain,

L. Becks and M. Milinski, “Extortion strategies resist disciplining when higher competitiveness is rewarded with extra gain,”Nature communi- cations, vol. 10, no. 1, p. 783, 2019

work page 2019

-

[36]

From extortion to generosity, evolu- tion in the iterated prisoner’s dilemma,

A. J. Stewart and J. B. Plotkin, “From extortion to generosity, evolu- tion in the iterated prisoner’s dilemma,”Proceedings of the National Academy of Sciences, vol. 110, no. 38, pp. 15 348–15 353, 2013

work page 2013

-

[37]

Comparing strategic secrecy and Stackelberg commitment in security games,

Q. Guo, B. An, B. Bo ˇsansk´y, and C. Kiekintveld, “Comparing strategic secrecy and Stackelberg commitment in security games,” inProceedings of the International Joint Conference on Artificial Intelligence, 2017, pp. 3691–3699

work page 2017

-

[38]

A game theoretical framework on intrusion detection in heterogeneous networks,

L. Chen and J. Leneutre, “A game theoretical framework on intrusion detection in heterogeneous networks,”IEEE Transactions on Information Forensics and Security, vol. 4, no. 2, pp. 165–178, 2009

work page 2009

-

[39]

Zero-determinant strategy in stochas- tic Stackelberg asymmetric security game,

Z. Cheng, G. Chen, and Y . Hong, “Zero-determinant strategy in stochas- tic Stackelberg asymmetric security game,”Scientific Reports, vol. 13, no. 1, p. 11308, 2023

work page 2023

-

[40]

Sta- tionary strong Stackelberg equilibrium in discounted stochastic games,

V . B. L ´opez, E. Della Vecchia, A. Jean-Marie, and F. Ordonez, “Sta- tionary strong Stackelberg equilibrium in discounted stochastic games,” IEEE Transactions on Automatic Control, 2022

work page 2022

-

[41]

Imitative attacker deception in Stackelberg security games,

T. Nguyen and H. Xu, “Imitative attacker deception in Stackelberg security games,” inProceedings of the International Joint Conference on Artificial Intelligence, 2019, pp. 528–534

work page 2019

-

[42]

Payoff control in repeated games,

R. Tan, Q. Su, B. Wu, and L. Wang, “Payoff control in repeated games,” inProceedings of the Chinese Control and Decision Conference. IEEE, 2021, pp. 997–1005

work page 2021

-

[43]

On the complexity of computing Markov perfect equilibrium in general-sum stochastic games,

X. Deng, N. Li, D. Mguni, J. Wang, and Y . Yang, “On the complexity of computing Markov perfect equilibrium in general-sum stochastic games,”National Science Review, vol. 10, no. 1, p. nwac256, 2023

work page 2023

-

[44]

Finding correlated equilibrium of constrained Markov game: A primal-dual approach,

Z. Chen, S. Ma, and Y . Zhou, “Finding correlated equilibrium of constrained Markov game: A primal-dual approach,”Advances in Neural Information Processing Systems, vol. 35, pp. 25 560–25 572, 2022

work page 2022

-

[45]

Chameleonsoft: A moving target defense system,

M. Azab, R. Hassan, and M. Eltoweissy, “Chameleonsoft: A moving target defense system,” inProceedings of International Conference on Collaborative Computing: Networking, Applications and Worksharing. IEEE, 2011, pp. 241–250

work page 2011

-

[46]

Understanding malicious behavior in crowdsourcing platforms: The case of online surveys,

U. Gadiraju, R. Kawase, S. Dietze, and G. Demartini, “Understanding malicious behavior in crowdsourcing platforms: The case of online surveys,” inProceedings of the annual ACM conference on human factors in computing systems, 2015, pp. 1631–1640

work page 2015

-

[47]

S. Gao, X. Chen, J. Zhu, X. Dong, and J. Ma, “Trustworker: A trustworthy and privacy-preserving worker selection scheme for blockchain-based crowdsensing,”IEEE Transactions on Services Com- puting, vol. 15, no. 6, pp. 3577–3590, 2021

work page 2021

-

[48]

M. L. Puterman,Markov Decision Processes: Discrete Stochastic Dy- namic Programming. John Wiley & Sons, 2014. APPENDIX A. Proof of Lemma 1 Supposeπ a ∈BR(π d). For anyπ d, takeR a(a|i, j) =P d πd(d|i, j)ua(d, a)as the immediate profit by attacking tar- geta∈[K]. According to [48], there existV ∗ a andQ ∗ : [K]× [K]→Rsatisfying the Bellman optimality equat...

work page 2014

-

[49]

Also, fork=K,−ϕ min −k =−ϕ K−1 ⩽U u d (K) +βU u a (K) +γand ϕmax −ϕ max −k

Also, fork= 1,ϕ min −1 =ϕ K = 0andϕ max −ϕ max.1 = ϕ1 −ϕ max,1 = 2 K−1P k=2 ϕk −ϕ max,2 +|αU u d (1) +βU u a (1) + γ|+|αU c d(1) +βU c a(1) +γ|⩾αU u d (1) +βU u a (1) +γ. Also, fork=K,−ϕ min −k =−ϕ K−1 ⩽U u d (K) +βU u a (K) +γand ϕmax −ϕ max −k . Therefore, for anyk, −ϕmin −k ⩽αU u d (k) +βU u a (k) +γ⩽ϕ max −ϕ max −k . Thus,{ϕ k}K k=1 is a solution of (...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.