Honest Reporting in Scored Oversight: True-KL0 Property via the Prekopa Principle

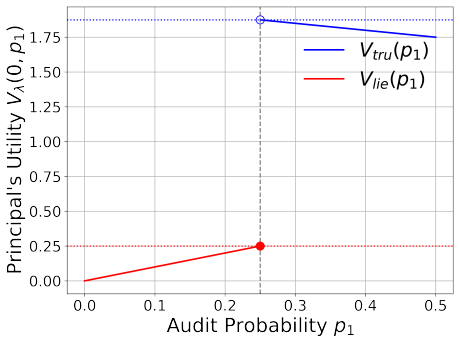

The exact gain formula $G=-R\,U$, together with log-concavity of the loss integral, proves unconditional incentive compatibility for every $M>1$.

Abstract


We prove the True-KL$_0$ property for a parametric family of heterogeneous scoring rules arising in scored elicitation mechanisms (AI oversight, forecasting competitions, expert surveys). A $d$-dimensional agent with private type $M>1$ reports to a principal who evaluates via a power-$p$ pseudospherical scoring rule, $p \in (d,d+1)$; $M$ captures the agent's information quality relative to a reference. An exact formula $G(M,M') = -R(M,p,d)\,U(M \mid M)$ establishes dominant-strategy incentive compatibility (DSIC) unconditionally: honest reporting maximises expected score for every $M>1$, without distributional assumptions. True-KL$_0$, the property that $R(M,p,d)<1$ for all $M>1$, $d \in \{2,3,4\}$ and $p \in (d,d+1)$, gives an explicit gain-magnitude bound: the best misreport is always worse than the honest score itself.

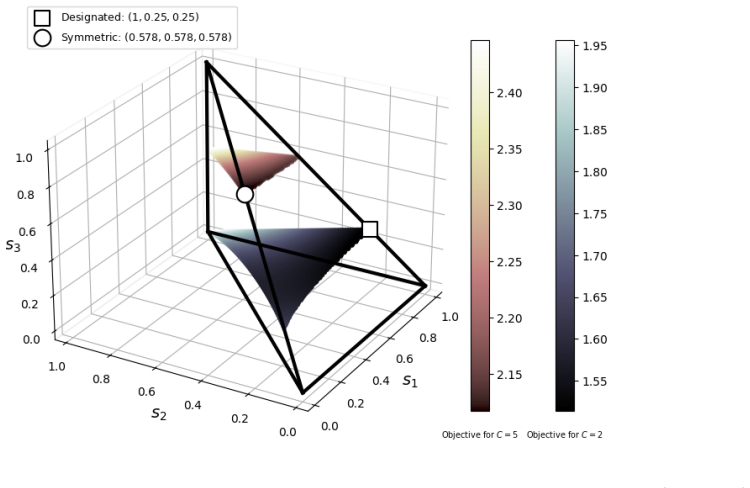

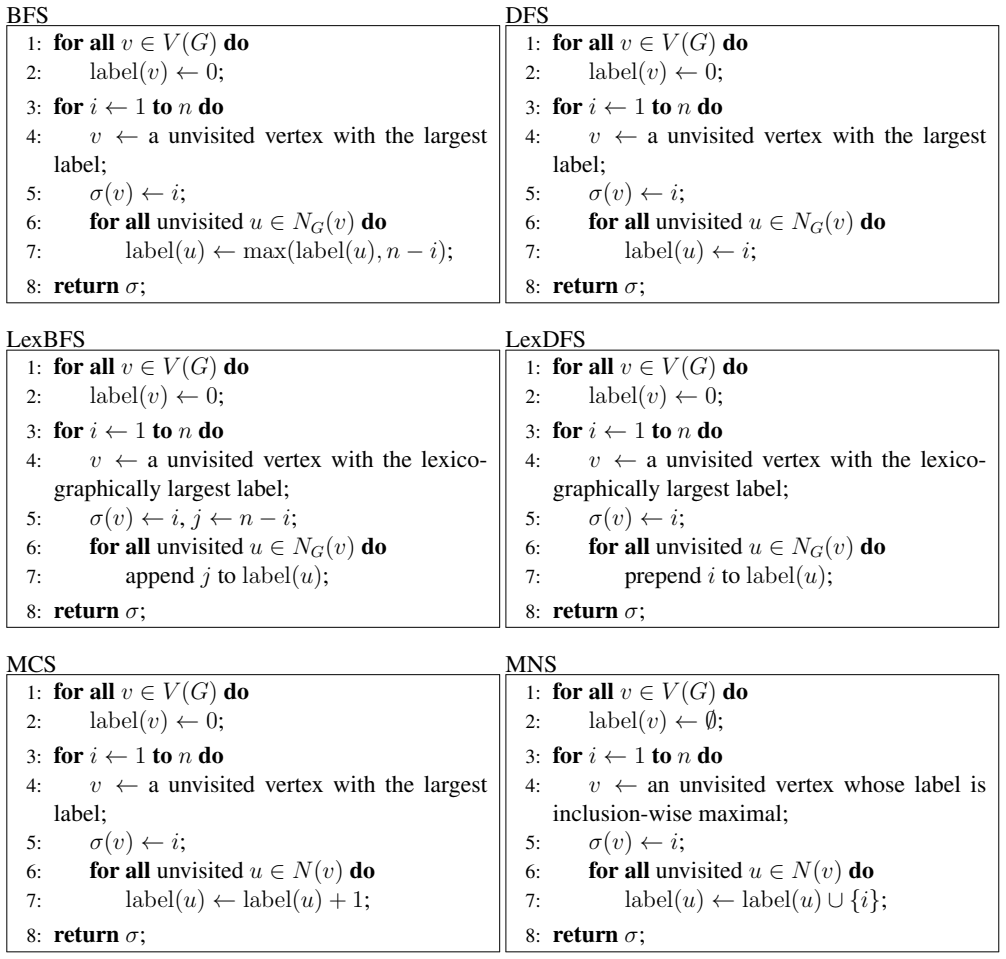

Two structural tools drive the proof: (i) a substitution $y=(x+1)/(x-1)$ rewrites the loss integral $I_L$ as $\int_1^M F(y)(M^2-y^2)^{d/2} dy$ with $M$-independent weight $F(y)>0$, isolating all $M$-dependence in a single convex factor; (ii) Prekopa's theorem on log-concavity preservation establishes that $I_L$ is log-concave in $M$, the key step in the unimodality proof for $R$. For $d=2$ the log-concavity proof is fully algebraic. For $d \in \{3,4\}$ the Prekopa argument (analytic, covering $M \le M_{cut}(d,p) \le 20$) combines with a certified high-precision numerical step on the residual region $M \in [M_{cut}, 20]$, closed by a large-$M$ asymptotic for $M>20$.
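As a reading aid for tools (i) and (ii), the elementary facts behind them can be written out. This is only a sketch under stated assumptions, not the paper's argument: the symbols $f$, $g$, $u$, $v$ are introduced here for the generic statement of Prekopa's theorem, and log-concavity of $F$ is a hypothesis added for illustration, since the abstract does not say on which range it holds.

$$
\begin{aligned}
y=\frac{x+1}{x-1} &\iff x=\frac{y+1}{y-1}, \qquad \frac{dy}{dx}=-\frac{2}{(x-1)^2}<0 \quad (x>1),\\
f \text{ jointly log-concave on } \mathbb{R}^{n}\times\mathbb{R}^{m} &\implies g(u)=\int_{\mathbb{R}^{m}} f(u,v)\,dv \text{ is log-concave on } \mathbb{R}^{n},\\
\log\,(M^2-y^2)^{d/2} &= \tfrac{d}{2}\bigl[\log(M-y)+\log(M+y)\bigr] \quad (1\le y<M).
\end{aligned}
$$

The first line shows that the substitution is a decreasing involution of $(1,\infty)$, so the upper limit $y=M$ corresponds to $x=(M+1)/(M-1)$. The third line is a sum of logarithms of positive affine functions of $(M,y)$, hence jointly concave, and the region $\{1\le y\le M\}$ is convex. Wherever $F$ is log-concave in $y$, the integrand $F(y)(M^2-y^2)^{d/2}$ restricted to that region is therefore jointly log-concave, and the second line yields log-concavity of $I_L$ in $M$.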

We also characterise the dimensional boundary: True-KL$_0$ holds unconditionally for all $p \in (d,d+1)$ when $d \le 4$, but fails above a critical threshold $p_{crit}(d) \in (d,d+1)$ for $d \ge 5$; for $d=5$ we locate $p_{crit}(5) \in (5.5718, 5.5750)$ via high-precision mpmath evaluation (half-width 0.0016, not interval-certified).
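The quoted bracket for $p_{crit}(5)$ can be sanity-checked with exact decimal arithmetic. The snippet below is a minimal sketch that only verifies the midpoint and half-width implied by the two endpoints given above; it does not reproduce the mpmath evaluation, and the variable names are illustrative.

```python
from decimal import Decimal

# Endpoints of the bracket for p_crit(5), copied from the abstract.
lo = Decimal("5.5718")
hi = Decimal("5.5750")

# Midpoint and half-width of the bracket, computed exactly in decimal.
midpoint = (lo + hi) / 2
half_width = (hi - lo) / 2

# The threshold is stated to lie in (d, d+1) with d = 5.
d = 5
inside_range = d < lo and hi < d + 1

print(midpoint)      # 5.5734
print(half_width)    # 0.0016
print(inside_range)  # True
```

The half-width of 0.0016 matches the figure quoted in the text, and the bracket sits strictly inside $(5,6)$, consistent with $p_{crit}(d) \in (d,d+1)$.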
