On Observation Time for Recovering Latent Hawkes Networks

Pith reviewed 2026-05-12 01:10 UTC · model grok-4.3

The pith

For stationary Hawkes processes with sparse, weak interactions, an observation time of order log d is both sufficient and necessary for exact recovery of the latent network.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

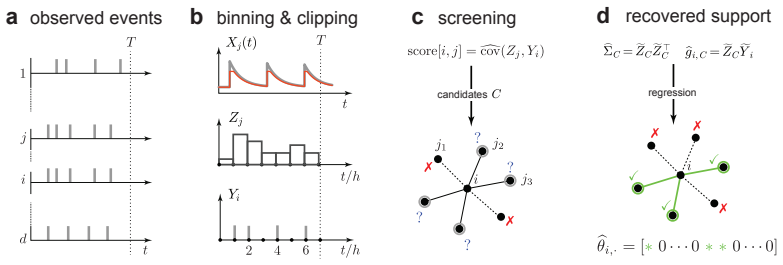

For a class of stationary Hawkes processes with sparse, weak interactions, an observation time of order log d is sufficient and necessary to exactly recover the underlying network. For the upper bound we construct a two-stage estimator that uses clipped and binned event data for screening, followed by a least-squares refinement, and apply concentration bounds derived from the Poisson cluster representation. For the lower bound we combine Fano's inequality with Jacod's Girsanov formula for point processes on a suitable subclass of networks.

What carries the argument

Two-stage estimator (clipped-and-binned screening followed by least-squares refinement) with Poisson-cluster concentration bounds for the upper bound, and Fano's inequality combined with Jacod's Girsanov formula for the lower bound.
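
The two-stage idea can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the paper's Algorithm 1: the lag-one covariance statistic, the thresholds `tau` and `min_coef`, the small `ridge` term, and all function names are hypothetical choices made here to keep the example self-contained.

```python
def bin_and_clip(events, d, T, delta, clip=1):
    """Count events per (bin, node) and cap each count at `clip`."""
    n_bins = int(T / delta)
    X = [[0] * d for _ in range(n_bins)]
    for t, j in events:
        k = int(t / delta)
        if 0 <= k < n_bins:
            X[k][j] = min(X[k][j] + 1, clip)
    return X

def screen(X, tau):
    """Stage 1 (assumed statistic): keep j as a candidate parent of i when the
    empirical covariance between X[k][j] and X[k+1][i] exceeds tau."""
    n, d = len(X) - 1, len(X[0])
    mean_now = [sum(X[k][j] for k in range(n)) / n for j in range(d)]
    mean_next = [sum(X[k + 1][i] for k in range(n)) / n for i in range(d)]
    cand = []
    for i in range(d):
        row = []
        for j in range(d):
            cross = sum(X[k][j] * X[k + 1][i] for k in range(n)) / n
            if cross - mean_now[j] * mean_next[i] > tau:
                row.append(j)
        cand.append(row)
    return cand

def solve(M, b):
    """Solve M x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [row[:] + [b[r]] for r, row in enumerate(M)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(aug[r][c]))
        aug[c], aug[p] = aug[p], aug[c]
        for r in range(c + 1, n):
            f = aug[r][c] / aug[c][c]
            for k in range(c, n + 1):
                aug[r][k] -= f * aug[c][k]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(aug[r][k] * x[k] for k in range(r + 1, n))
        x[r] = (aug[r][n] - tail) / aug[r][r]
    return x

def refine(X, cand, i, ridge=1e-9):
    """Stage 2: least squares of X[k+1][i] on an intercept and X[k][j], j in cand."""
    n, p = len(X) - 1, len(cand) + 1
    feats = [[1.0] + [float(X[k][j]) for j in cand] for k in range(n)]
    y = [float(X[k + 1][i]) for k in range(n)]
    G = [[sum(f[a] * f[b] for f in feats) + (ridge if a == b else 0.0)
          for b in range(p)] for a in range(p)]
    g = [sum(f[a] * yk for f, yk in zip(feats, y)) for a in range(p)]
    return dict(zip(cand, solve(G, g)[1:]))  # drop the intercept

def two_stage(events, d, T, delta, tau, min_coef):
    """Return, for each node i, the estimated list of parents j."""
    X = bin_and_clip(events, d, T, delta)
    cand = screen(X, tau)
    return [[j for j, c in refine(X, cand[i], i).items() if c > min_coef]
            for i in range(d)]
```

Screening first keeps each regression small: the least-squares step only sees the candidates that survive the threshold, which is what makes a refinement stage affordable when d is large.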

If this is right

- Exact recovery of the interaction network is possible with observation times that grow only logarithmically in the number of entities.

- Event streams alone suffice for structure learning without requiring continuous or high-resolution measurements.

- The result applies whenever interactions remain weak and sparse; in that regime the required observation time depends on the dimension d only through log d.

- It supplies a theoretical benchmark for algorithmic work on Hawkes network inference in large systems.
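
To make the logarithmic scaling concrete, the short sketch below evaluates a horizon of the form c log d for a few network sizes. The constant `c` and the function name `horizon` are hypothetical; the paper's constants are not given on this page.

```python
import math

def horizon(d, c=1.0):
    """Observation time of the form c * log(d); c is a placeholder constant."""
    return c * math.log(d)

# Growing d by a factor of 10,000 only triples the horizon.
for d in (10**2, 10**4, 10**6):
    print(d, round(horizon(d), 2))
```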

Where Pith is reading between the lines

- If interaction strengths grow with d rather than staying weak, the required observation time may grow polynomially in d rather than logarithmically.

- The screening-plus-refinement strategy could be tested on non-stationary variants by allowing slowly varying baselines.

- Empirical checks on real event data from seismology or finance could reveal whether the predicted log d threshold appears in practice.

- Analogous logarithmic scaling may hold for other classes of self-exciting point processes with comparable sparsity.

Load-bearing premise

The processes must be stationary Hawkes processes whose interactions are sparse and weak, with the lower bound holding for a suitable subclass.
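
For orientation, the premise can be written in the standard notation for linear multivariate Hawkes processes. This is a sketch under assumed notation: the symbols $\mu_i$, $h_{ij}$, $H$ and $s$, and the exact form of the sparsity and weakness conditions, are not given on this page and may differ from the paper's definitions.

```latex
% Conditional intensity of node i given the past of all d nodes
\lambda_i(t) = \mu_i + \sum_{j=1}^{d} \int_{(-\infty,\,t)} h_{ij}(t-s)\,\mathrm{d}N_j(s),
\qquad \mu_i > 0,\quad h_{ij} \ge 0 .

% Latent network: an edge j -> i exists when the kernel h_{ij} is not identically zero;
% sparsity bounds the number of parents of each node by s
j \to i \iff h_{ij} \not\equiv 0,
\qquad \bigl|\{\, j : h_{ij} \not\equiv 0 \,\}\bigr| \le s .

% Classical sufficient condition for a stationary version:
% the matrix of integrated kernels has spectral radius below one
\rho(H) < 1, \qquad H_{ij} = \int_0^{\infty} h_{ij}(t)\,\mathrm{d}t .
```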

What would settle it

Construct or simulate a stationary Hawkes network with sparse weak interactions and show that after observation time c log d for sufficiently small constant c, no estimator recovers the exact adjacency matrix with probability bounded away from zero.
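
A simulation-based check of this kind needs a sampler. The sketch below draws events from a linear Hawkes process through its Poisson cluster (immigrant and offspring) representation. The exponential kernel, the function names, and the parameters `mu`, `A` and `beta` are illustrative assumptions. Starting from an empty history at time 0 is only approximately stationary, so a burn-in period would be needed in practice.

```python
import random

def poisson(rng, lam):
    """Sample Poisson(lam) by counting rate-lam exponential arrivals in [0, 1]."""
    if lam <= 0:
        return 0
    n, t = 0, rng.expovariate(lam)
    while t <= 1.0:
        n += 1
        t += rng.expovariate(lam)
    return n

def simulate_hawkes(mu, A, beta, T, seed=0):
    """Return a time-sorted list of (time, node) events on [0, T].

    mu[i]   : baseline (immigrant) rate of node i
    A[i][j] : expected number of direct offspring on node i per event on node j
    beta    : decay rate of the assumed kernel A[i][j] * beta * exp(-beta * t)
    """
    rng = random.Random(seed)
    d = len(mu)
    pending = []
    # Immigrants: a homogeneous Poisson process of rate mu[i] on each node.
    for i in range(d):
        if mu[i] <= 0:
            continue
        t = rng.expovariate(mu[i])
        while t <= T:
            pending.append((t, i))
            t += rng.expovariate(mu[i])
    events = []
    # Offspring: each event on node j spawns Poisson(A[i][j]) children on node i,
    # each delayed by an independent Exp(beta) waiting time.
    while pending:
        t, j = pending.pop()
        events.append((t, j))
        for i in range(d):
            for _ in range(poisson(rng, A[i][j])):
                child = t + rng.expovariate(beta)
                if child <= T:
                    pending.append((child, i))
    events.sort()
    return events
```

Running an estimator on many such draws for horizons T = c log d, over a range of d and c, is one way to probe empirically where exact recovery starts to fail.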

Original abstract

Dynamics of interacting systems in engineering, society, and nature often evolve over latent networks that govern which entities can interact. We study the problem of inferring these networks from event-based observations, which arise naturally in finance, seismology, and neuroscience. While there is substantial algorithmic work addressing this important problem, theoretical results are scarce. In this paper we ask the following fundamental question: what is the minimum time that one must observe the dynamics in order to exactly recover the underlying network, as a function of the number $d$ of interacting entities? For a class of stationary Hawkes processes with sparse, weak interactions, we prove that an observation time of order $\log d$ is sufficient and necessary. For the upper bound we construct a two-stage estimator that uses clipped and binned event data for screening, followed by a least-squares refinement, and apply concentration bounds derived from the Poisson cluster representation. For the lower bound we combine Fano's inequality with Jacod's Girsanov formula for point processes on a suitable subclass of networks.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper addresses the fundamental question of the minimal observation time required to exactly recover the latent interaction network from event data generated by a class of stationary Hawkes processes with sparse, weak interactions. It proves that an observation time of order log d (where d denotes the number of entities) is both sufficient and necessary. Sufficiency is established by constructing a two-stage estimator that first performs screening via clipped and binned event counts and then refines via least-squares, with concentration bounds derived from the Poisson cluster representation of the process. Necessity is shown via an information-theoretic argument combining Fano's inequality with Jacod's Girsanov change-of-measure formula applied to a suitable subclass of such networks.
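
The shape of the necessity argument can be illustrated with a generic Fano calculation. This is a sketch under stated assumptions, not the paper's proof: the number of hypotheses $M$, the per-unit-time divergence bound $\kappa$, and the constant $c_0$ are hypothetical symbols introduced here.

```latex
% Fano's inequality for M candidate networks G_1, ..., G_M, with P_k^T the law
% of the events observed on [0, T] under G_k:
\inf_{\hat G}\ \max_{k}\ \mathbb{P}_k\bigl(\hat G \ne G_k\bigr)
  \;\ge\; 1 - \frac{\max_{k \ne l} \mathrm{KL}\bigl(P_k^T \,\|\, P_l^T\bigr) + \log 2}{\log M} .

% Change of measure for point processes (likelihood ratio in terms of intensities):
\mathrm{KL}\bigl(P_k^T \,\|\, P_l^T\bigr)
  = \mathbb{E}_k \int_0^T \sum_{i=1}^{d}
    \Bigl[ \lambda_i^{(l)}(t) - \lambda_i^{(k)}(t)
         + \lambda_i^{(k)}(t) \log \frac{\lambda_i^{(k)}(t)}{\lambda_i^{(l)}(t)} \Bigr] \mathrm{d}t .

% Assumption: the integrand has expectation at most \kappa, and \log M \ge c_0 \log d. Then
\inf_{\hat G}\ \max_{k}\ \mathbb{P}_k\bigl(\hat G \ne G_k\bigr)
  \;\ge\; 1 - \frac{\kappa T + \log 2}{c_0 \log d}
  \;\ge\; \frac{1}{2}
  \quad \text{whenever} \quad
  T \le \frac{c_0 \log d - 2 \log 2}{2\kappa} .
```

Under these assumptions, any estimator errs with probability at least 1/2 on some network in the subclass unless T is at least of order log d.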

Significance. If the bounds hold, the result supplies sharp, non-asymptotic sample-complexity guarantees for exact network recovery in a canonical class of point-process models. This is significant for applications in neuroscience, seismology, and finance, where Hawkes processes model event interactions and the number of observed entities d is often large. The paper earns credit for employing standard, externally validated tools (Poisson-cluster concentration inequalities, Fano's inequality, Jacod-Girsanov) without circularity and for delivering matching upper and lower bounds rather than one-sided results.

minor comments (3)

- §2.2: the precise definition of the 'weak interaction' regime (the constants controlling the spectral radius of the kernel matrix) should be stated explicitly before the main theorems, as it is used in both the upper- and lower-bound arguments.

- §4.1, Algorithm 1: the clipping threshold and bin width are introduced without a displayed formula relating them to the model parameters; a short display equation would improve readability.

- The proof of the lower bound (Theorem 3) invokes a 'suitable subclass' of networks; a brief paragraph clarifying which networks are excluded and why the subclass still captures the essential difficulty would help readers assess the tightness of the log d scaling.
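
The first comment concerns the spectral radius of the kernel matrix. A numerical check of a subcriticality condition of that type can be sketched as follows, assuming the matrix `A` holds the integrated kernels (a hypothetical input, as are the function names). The code uses the fact that the k-th root of the maximum absolute row sum of A^k is an upper bound on the spectral radius for every k, so a value below 1 certifies subcriticality.

```python
def matmul(A, B):
    """Product of two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def radius_upper_bound(A, k=1):
    """Upper bound on the spectral radius of A: (max row sum of |A^k|) ** (1/k)."""
    P = A
    for _ in range(k - 1):
        P = matmul(P, A)
    norm = max(sum(abs(x) for x in row) for row in P)
    return norm ** (1.0 / k)

def is_subcritical(A, k=8):
    """Sufficient check: True only if the bound certifies spectral radius < 1."""
    return radius_upper_bound(A, k) < 1.0
```

Because the bound is one-sided, a `False` result does not prove that the spectral radius is at least 1; a larger `k` tightens the bound.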

Simulated Author's Rebuttal

We thank the referee for their positive assessment of our work on the minimal observation time for exact recovery of latent Hawkes networks. We are grateful for the recommendation to accept the manuscript and for recognizing the significance of the matching upper and lower bounds.

Circularity Check

No significant circularity

The central claims rest on a two-stage estimator (clipped/binned screening followed by least-squares) whose concentration bounds are derived from the Poisson cluster representation of Hawkes processes, together with Fano's inequality and Jacod's Girsanov change-of-measure applied to a delimited subclass. These are standard external tools from point-process theory; the log d scaling is obtained by direct analysis of the model parameters (sparsity, weak interactions, stability) rather than by fitting or by self-referential definition. No step equates a derived quantity to its own input by construction, and no load-bearing premise reduces to a prior self-citation.

Axiom & Free-Parameter Ledger

axioms (2)

- standard math: Fano's inequality applied to network distinguishability

- standard math: Jacod's Girsanov formula for point processes
