Mechanism Design Is Not Enough: Prosocial Agents for Cooperative AI

Pith reviewed 2026-05-12 00:55 UTC · model grok-4.3

The pith

Incomplete contracts create welfare losses no mechanism can eliminate, but prosocial agents can close the gap.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

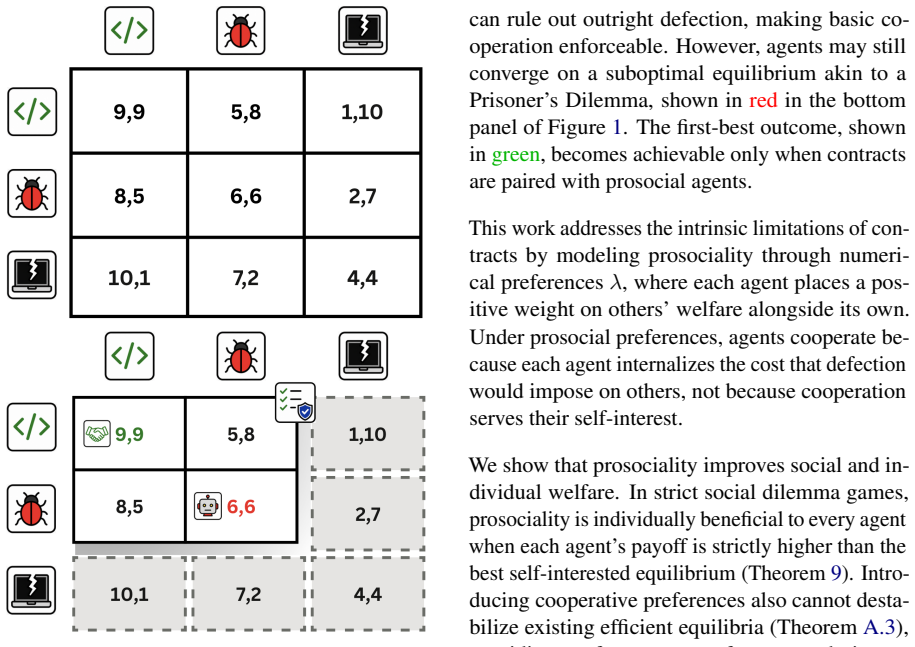

Drawing on incomplete contract theory, the paper argues that when contracts cannot distinguish all relevant future contingencies, there is a strictly positive welfare loss that no realistic mechanism can eliminate. Prosocial agents, who weigh others' welfare alongside their own, can close this gap and achieve outcomes that are both socially superior and individually beneficial.

What carries the argument

Incomplete contract theory applied to multi-agent LLM interactions, with prosocial weighting in agent objectives to recover the lost welfare.
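To make "prosocial weighting in agent objectives" concrete, here is a minimal sketch on a two-player Prisoner's Dilemma. It is an illustration only: the payoff numbers, the weight name `alpha`, and the linear blend `(1 - alpha) * own + alpha * other` are assumptions chosen for this example, not the paper's stated formulation, which this page does not reproduce.

```python
from itertools import product

# Hypothetical Prisoner's Dilemma payoffs: (row player, column player).
# "C" = cooperate, "D" = defect. These numbers are illustrative only.
PAYOFFS = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}
ACTIONS = ("C", "D")


def prosocial_utility(own, other, alpha):
    """Blend own and other payoff (assumed linear form).

    alpha = 0 is purely selfish; alpha = 0.5 weighs both players equally.
    """
    return (1 - alpha) * own + alpha * other


def pure_nash_equilibria(alpha):
    """Return all pure-strategy Nash equilibria when both players use weight alpha."""

    def u(player, profile):
        payoff = PAYOFFS[profile]
        return prosocial_utility(payoff[player], payoff[1 - player], alpha)

    equilibria = []
    for a_row, a_col in product(ACTIONS, repeat=2):
        # A profile is an equilibrium if neither player gains by deviating alone.
        row_ok = all(u(0, (a_row, a_col)) >= u(0, (d, a_col)) for d in ACTIONS)
        col_ok = all(u(1, (a_row, a_col)) >= u(1, (a_row, d)) for d in ACTIONS)
        if row_ok and col_ok:
            equilibria.append((a_row, a_col))
    return equilibria


if __name__ == "__main__":
    for alpha in (0.0, 0.5):
        print(alpha, pure_nash_equilibria(alpha))
```

With these assumed payoffs, selfish agents (`alpha = 0.0`) end up at mutual defection, while equal weighting (`alpha = 0.5`) makes mutual cooperation the only pure equilibrium. Whether the same shift appears with LLM agents is the empirical question the paper's experiments address.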

Load-bearing premise

The formal model of incomplete contracts transfers directly to LLM agents without additional frictions or instabilities in prosocial weighting.

What would settle it

A demonstration that some mechanism achieves full efficiency despite incomplete contingencies, or that prosocial agents show no welfare gain in LLM-powered resource allocation and dilemma tasks.

Original abstract

Ensuring that AI agents behave safely and beneficially when interacting with other parties has emerged as one of the central challenges of modern AI safety. While mechanism design, as the theory of designing rules to align individual and collective objectives, can incentivize cooperative behavior, it is still an open question whether it alone is sufficient to maximize LLM agents' social welfare. This work proves that the answer is negative: drawing from incomplete contract theory, we formally show that when contracts cannot distinguish all relevant future contingencies, there is a strictly positive welfare loss that no realistic mechanism can eliminate. We show that prosocial agents, who weigh others' welfare alongside their own, can close this gap and achieve outcomes that are socially superior and individually beneficial. Experimentally, we show that in multi-agent resource-allocation environments and canonical social dilemmas where agents are powered by large language models, prosociality is beneficial. The implication for AI safety is clear: to enable cooperative interactions at scale, designing adequate mechanisms is not sufficient; agents must be built to be intrinsically prosocial.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims that mechanism design alone is insufficient to maximize social welfare among LLM agents. Its argument draws on incomplete contract theory: because contracts cannot specify all future contingencies, there is a strictly positive welfare loss that no realistic mechanism can eliminate. The paper formally shows that prosocial agents, who internalize others' welfare, can close this gap while still benefiting individually. Experiments in multi-agent resource-allocation and social-dilemma environments with LLM agents are presented to demonstrate the practical benefits of prosociality. The stated implication for AI safety is that agents must be built to be intrinsically prosocial rather than relying solely on external rules.

Significance. If the core theoretical transfer and experimental results hold, the work bridges incomplete contract theory with cooperative AI, providing a clear argument that prosocial preferences are a necessary complement to mechanism design for scalable multi-agent interactions. The formal result from established theory and the experimental demonstration in LLM settings offer a falsifiable path forward for AI safety research, though its impact hinges on addressing the applicability of unverifiability assumptions in engineered AI environments.

major comments (2)

- [§3 (Theoretical Model)] The central claim that a strictly positive welfare loss persists under any realistic mechanism rests on the incomplete-contract assumption of unverifiable contingencies. The manuscript does not specify the admissible mechanism class for LLM agents or demonstrate why protocols conditioning on full conversation histories, tool outputs, or cryptographic commitments are excluded; if such protocols are admissible, the residual loss may be eliminable by mechanism design alone, undermining the necessity of prosocial weighting.

- [§5 (Experiments)] The reported benefits of prosocial agents in resource-allocation and social-dilemma settings lack explicit baselines (e.g., purely selfish LLM agents under standard mechanisms), control conditions, number of independent runs, and statistical tests. Without these, it is impossible to assess effect sizes or rule out that observed improvements stem from prompt engineering rather than prosociality per se.

minor comments (2)

- [§2 (Preliminaries)] The notation for prosocial weighting (e.g., the parameter balancing self- and other-welfare) should be defined explicitly in the main text rather than deferred to an appendix, to improve readability for readers outside economics.

- [§5 (Experiments)] Figure 3 (social dilemma results) would benefit from error bars and a clearer legend distinguishing prosocial vs. baseline conditions.

Simulated Author's Rebuttal

We thank the referee for their constructive and insightful comments, which highlight important areas for clarification and strengthening. We address each major comment below, indicating planned revisions where appropriate. Our responses aim to preserve the core contribution while improving rigor and precision.

Referee: The central claim that a strictly positive welfare loss persists under any realistic mechanism rests on the incomplete-contract assumption of unverifiable contingencies. The manuscript does not specify the admissible mechanism class for LLM agents or demonstrate why protocols conditioning on full conversation histories, tool outputs, or cryptographic commitments are excluded; if such protocols are admissible, the residual loss may be eliminable by mechanism design alone, undermining the necessity of prosocial weighting.

Authors: We appreciate this observation on the need for greater precision in the theoretical setup. Our model adopts the standard incomplete-contracts framework (Hart and Moore, 1988, and subsequent literature), in which the welfare loss arises precisely because certain payoff-relevant contingencies are unverifiable by any third-party enforcer, even when they are observable to the contracting parties. For LLM agents, full conversation histories and tool outputs are observable but remain unverifiable in the contractual sense when they involve subjective interpretations, ambiguous natural-language states, or complex multi-turn reasoning that cannot be reduced to an objective, court-enforceable signal without residual ambiguity. Cryptographic commitments can render certain facts verifiable, yet they cannot eliminate all unverifiable contingencies in open-ended environments; any remaining unverifiable component preserves the strictly positive loss result. In the revision we will (i) explicitly define the admissible mechanism class as contracts that condition solely on verifiable information and (ii) add a short discussion clarifying why histories and commitments do not remove the incompleteness assumption in realistic LLM settings.

Revision: partial
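The disagreement in this exchange turns on what a contract is allowed to condition on. The sketch below is a toy illustration, not the paper's model: the state names, probabilities, welfare numbers, and the helper names `first_best_welfare` and `best_contract_welfare` are all hypothetical. It also deliberately ignores incentive constraints, message games, and renegotiation, which the formal literature treats at length. It shows only that if two payoff-relevant states map to the same verifiable signal, the best signal-contingent contract falls short of the first best, and that the gap disappears once the signal separates the states.

```python
from itertools import product

# Hypothetical environment: two equally likely states with different best actions.
STATES = {"calm": 0.5, "storm": 0.5}
ACTIONS = ("harvest", "rest")
WELFARE = {  # assumed total welfare for each (state, action) pair
    ("calm", "harvest"): 10, ("calm", "rest"): 4,
    ("storm", "harvest"): 2, ("storm", "rest"): 6,
}


def first_best_welfare():
    """Expected welfare when the action can depend on the true state."""
    return sum(p * max(WELFARE[(s, a)] for a in ACTIONS) for s, p in STATES.items())


def best_contract_welfare(signal_of_state):
    """Best expected welfare when the action may depend only on the verifiable signal.

    signal_of_state maps each state to the signal an enforcer can verify.
    """
    signals = sorted(set(signal_of_state.values()))
    best = float("-inf")
    # Enumerate every contract, i.e. every assignment of one action per signal.
    for assignment in product(ACTIONS, repeat=len(signals)):
        contract = dict(zip(signals, assignment))
        welfare = sum(
            p * WELFARE[(s, contract[signal_of_state[s]])] for s, p in STATES.items()
        )
        best = max(best, welfare)
    return best


if __name__ == "__main__":
    pooled = {"calm": "v0", "storm": "v0"}     # enforcer cannot tell the states apart
    separated = {"calm": "v0", "storm": "v1"}  # enforcer can tell them apart
    print(first_best_welfare())                # 8.0
    print(best_contract_welfare(pooled))       # 6.0
    print(best_contract_welfare(separated))    # 8.0
```

Read this way, the referee's question is whether conversation histories, tool outputs, or cryptographic commitments move a realistic LLM setting from the pooled case to the separated one; the authors' position is that some pooling always remains.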

Referee: The reported benefits of prosocial agents in resource-allocation and social-dilemma settings lack explicit baselines (e.g., purely selfish LLM agents under standard mechanisms), control conditions, number of independent runs, and statistical tests. Without these, it is impossible to assess effect sizes or rule out that observed improvements stem from prompt engineering rather than prosociality per se.

Authors: We agree that the experimental reporting requires additional rigor. The original runs used 10 independent trials per condition with fixed random seeds, but these details and formal statistical comparisons were omitted. In the revised manuscript we will: (a) add explicit selfish-LLM baselines under identical mechanisms, (b) describe all control conditions (including neutral and selfish prompt variants), (c) report the exact number of independent runs and seeds, and (d) include statistical tests (paired t-tests and effect-size calculations) comparing prosocial versus selfish conditions. These additions will allow readers to evaluate whether gains are attributable to prosocial weighting rather than prompt engineering alone.

Revision: yes
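As a rough sketch of the kind of comparison promised in (d), the following computes a paired t statistic and a paired-samples effect size (Cohen's d_z) using only the Python standard library. It assumes that run i of the prosocial condition and run i of the selfish condition share the same random seed, so that pairing is meaningful; the rebuttal mentions fixed seeds but does not confirm this pairing. The function name and the welfare scores in the usage example are made up for illustration and are not results from the paper.

```python
import math
import statistics


def paired_t_and_effect_size(prosocial, selfish):
    """Return (t statistic, Cohen's d_z, degrees of freedom) for paired samples.

    Both sequences must have the same length, with index i referring to the
    same seed in each condition (an assumption of this sketch).
    """
    if len(prosocial) != len(selfish) or len(prosocial) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    diffs = [p - s for p, s in zip(prosocial, selfish)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    if sd_diff == 0:
        raise ValueError("all paired differences are identical; t is undefined")
    n = len(diffs)
    t_stat = mean_diff / (sd_diff / math.sqrt(n))
    cohens_dz = mean_diff / sd_diff
    return t_stat, cohens_dz, n - 1


if __name__ == "__main__":
    # Made-up welfare scores for four seeds, purely to exercise the function.
    prosocial_scores = [8.0, 9.0, 7.0, 10.0]
    selfish_scores = [6.0, 6.0, 6.0, 6.0]
    t_stat, d_z, dof = paired_t_and_effect_size(prosocial_scores, selfish_scores)
    print(f"t = {t_stat:.3f}, d_z = {d_z:.3f}, df = {dof}")
```

Turning the t statistic into a p-value requires the Student t distribution, which the standard library does not provide; a statistics package such as SciPy (`scipy.stats.ttest_rel`) would be one option.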

Circularity Check

Derivation applies external incomplete-contract theory without self-referential reductions

The paper's core argument draws from established incomplete contract theory (external to the authors) to assert a strictly positive welfare loss under unverifiable contingencies that survives any admissible mechanism, then shows prosocial weighting can close the gap in LLM settings. No equations, definitions, or steps reduce this loss to a fitted parameter, self-citation chain, or ansatz imported from the authors' prior work; the formal claim is presented as a direct application of the cited economic framework rather than a construction equivalent to its own inputs. The subsequent experimental validation on resource allocation and social dilemmas is independent of the theoretical derivation. This yields a self-contained chain with no load-bearing circular steps.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: contracts cannot distinguish all relevant future contingencies (incomplete contract theory).

