Sinkhorn Treatment Effects: A Causal Optimal Transport Measure

Pith reviewed 2026-05-12 01:25 UTC · model grok-4.3

The pith

The Sinkhorn treatment effect measures divergence between entire counterfactual distributions via entropic optimal transport and admits debiased estimators for valid tests.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

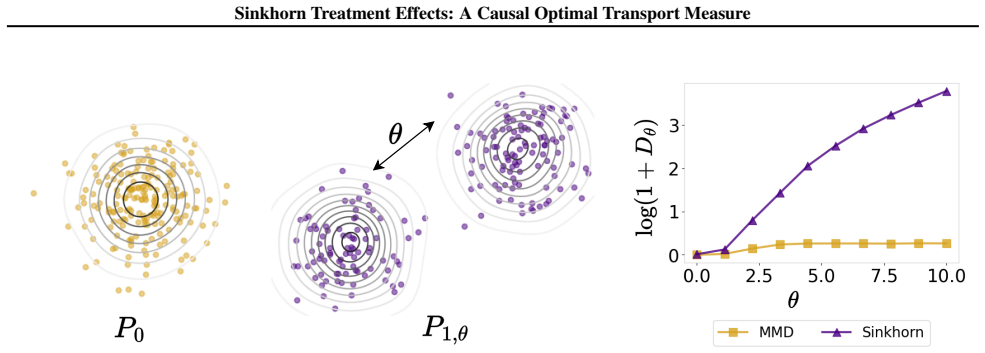

The Sinkhorn treatment effect is introduced as the entropic optimal transport divergence between counterfactual distributions. This functional is shown to equal a smooth map applied to the counterfactual mean embeddings under an appropriate kernel. The smoothness yields first-order pathwise differentiability in general and second-order pathwise differentiability under the null of equal counterfactual distributions. These properties allow construction of debiased estimators that are asymptotically normal, thereby delivering asymptotically valid tests for distributional treatment effects at any fixed entropic regularization parameter. An aggregated test is further proposed that pools evidence across a grid of regularization values.
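For orientation, the standard definitions behind this claim can be written out as follows. This is a sketch under assumptions: the cost $c$, the counterfactual laws $P_{Y(1)}$ and $P_{Y(0)}$, and the symbol $\mathrm{STE}_\varepsilon$ are illustrative notation, and the paper's own normalization may differ.

```latex
% Entropic optimal transport cost with regularization \varepsilon > 0
\mathrm{OT}_\varepsilon(\mu,\nu)
  = \min_{\pi \in \Pi(\mu,\nu)} \int c(y,y')\,d\pi(y,y')
    + \varepsilon\,\mathrm{KL}\bigl(\pi \,\|\, \mu \otimes \nu\bigr)

% Debiased (Sinkhorn) divergence, which vanishes when \mu = \nu
S_\varepsilon(\mu,\nu)
  = \mathrm{OT}_\varepsilon(\mu,\nu)
    - \tfrac12\,\mathrm{OT}_\varepsilon(\mu,\mu)
    - \tfrac12\,\mathrm{OT}_\varepsilon(\nu,\nu)

% Assumed notation for the treatment-effect functional
\mathrm{STE}_\varepsilon = S_\varepsilon\bigl(P_{Y(1)},\, P_{Y(0)}\bigr)
```

Because $S_\varepsilon(\mu,\mu) = 0$, the functional sits at zero under the null of equal counterfactual distributions, which is consistent with the summary's separate second-order analysis in that case.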

What carries the argument

The Sinkhorn treatment effect, defined as the entropic optimal transport divergence between counterfactual outcome distributions and represented as a smooth functional of their mean embeddings.
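As a rough illustration of the quantity being discussed, the sketch below computes a plug-in Sinkhorn divergence between two empirical samples with log-domain Sinkhorn iterations. It assumes a squared Euclidean cost, uniform sample weights, and a fixed iteration count; the function names are hypothetical, and this is not the paper's debiased estimator, which also has to handle confounding.

```python
import numpy as np


def _logsumexp(a, axis):
    # Numerically stable log-sum-exp along one axis.
    m = np.max(a, axis=axis, keepdims=True)
    return np.squeeze(m, axis=axis) + np.log(np.sum(np.exp(a - m), axis=axis))


def entropic_ot(x, y, eps, n_iter=500):
    """Dual value of entropic OT between uniform empirical measures.

    Assumptions (not from the paper): squared Euclidean cost, uniform
    weights, and a fixed number of Sinkhorn iterations.
    """
    n, m = len(x), len(y)
    cost = np.sum((x[:, None, :] - y[None, :, :]) ** 2, axis=-1)
    log_a = np.full(n, -np.log(n))
    log_b = np.full(m, -np.log(m))
    f = np.zeros(n)
    g = np.zeros(m)
    for _ in range(n_iter):
        # Alternating soft-min updates of the two dual potentials.
        f = -eps * _logsumexp((g[None, :] - cost) / eps + log_b[None, :], axis=1)
        g = -eps * _logsumexp((f[:, None] - cost) / eps + log_a[:, None], axis=0)
    return float(f.mean() + g.mean())


def sinkhorn_divergence(x, y, eps, n_iter=500):
    """Debiased divergence: OT(x, y) - OT(x, x) / 2 - OT(y, y) / 2."""
    cross = entropic_ot(x, y, eps, n_iter)
    self_x = entropic_ot(x, x, eps, n_iter)
    self_y = entropic_ot(y, y, eps, n_iter)
    return cross - 0.5 * self_x - 0.5 * self_y
```

Calling `sinkhorn_divergence(x, x, eps)` returns zero by construction, while samples from visibly different distributions give a positive value. The iteration count is a convergence knob, so small `eps` values may need more iterations than the default used here.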

If this is right

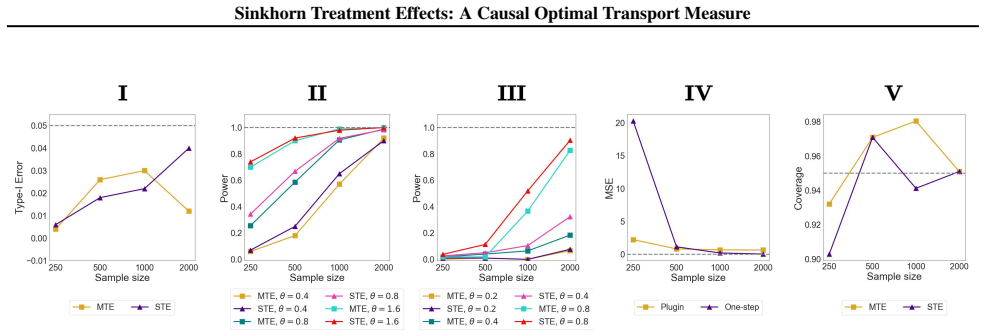

- Debiased estimators for the Sinkhorn treatment effect are asymptotically normal.

- Hypothesis tests for equality of counterfactual distributions control type-I error asymptotically at a fixed regularization level.

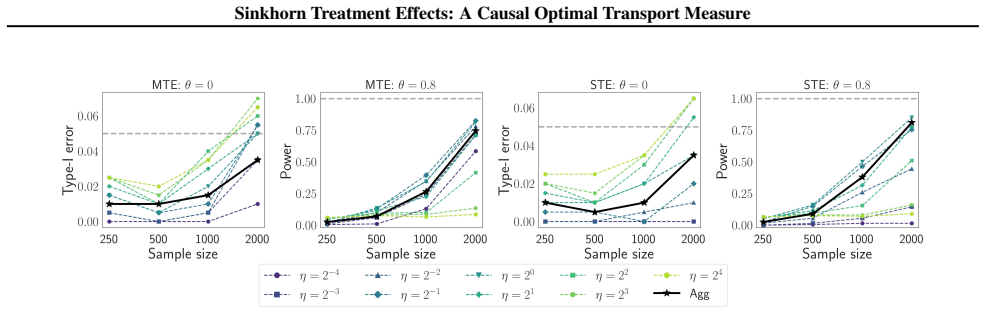

- An aggregated test over a grid of regularization values combines evidence without requiring knowledge of the optimal level in advance.

- The procedure detects distributional shifts on both simulated data and real image datasets.
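The aggregation step in the list above can be sketched as follows. This summary does not state the paper's aggregation rule, so the example substitutes a plain Bonferroni correction over the grid as a conservative stand-in; the function name and the example grid are hypothetical.

```python
def aggregate_over_grid(p_values_by_eps, alpha=0.05):
    """Bonferroni-combine p-values from fixed-epsilon tests.

    p_values_by_eps maps each regularization value to the p-value of the
    test run at that value. Bonferroni is an assumption standing in for
    the paper's (unspecified here) aggregation rule.
    Returns (adjusted p-value, reject flag, epsilon with smallest p-value).
    """
    if not p_values_by_eps:
        raise ValueError("need at least one regularization value")
    best_eps = min(p_values_by_eps, key=p_values_by_eps.get)
    adjusted = min(1.0, len(p_values_by_eps) * p_values_by_eps[best_eps])
    return adjusted, adjusted <= alpha, best_eps


# Example with made-up p-values on a three-point grid.
grid = {0.1: 0.20, 1.0: 0.004, 10.0: 0.50}
adjusted_p, reject, best_eps = aggregate_over_grid(grid)
```

Bonferroni controls the type-I error for any dependence between the per-epsilon tests, at the price of some power when the grid is large. A rule that exploits the joint distribution of the statistics could be less conservative.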

Where Pith is reading between the lines

- The same kernel-embedding route may extend inference to other causal functionals that involve optimal transport distances.

- The method supplies a concrete way to test for treatment effects when only shape or tail differences are expected rather than mean shifts.

- Data-driven aggregation rules could replace the fixed grid while preserving asymptotic control.

Load-bearing premise

Counterfactual mean embeddings must exist in a reproducing kernel Hilbert space so that the entropic divergence becomes a differentiable functional of those embeddings.
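To make the premise concrete, here is a minimal sketch of empirical counterfactual mean embeddings and the squared RKHS distance between them. It assumes a Gaussian kernel, a binary treatment, and known propensity scores with normalized inverse-propensity weights; all names are hypothetical, and this plug-in estimate is not the debiased estimator the paper constructs.

```python
import numpy as np


def gaussian_kernel(x, y, bandwidth=1.0):
    # Bounded, continuous, characteristic kernel on R^d.
    sq_dist = np.sum((x[:, None, :] - y[None, :, :]) ** 2, axis=-1)
    return np.exp(-sq_dist / (2.0 * bandwidth ** 2))


def embedding_distance_sq(outcomes, treated, propensity, bandwidth=1.0):
    """Squared RKHS distance between weighted mean embeddings.

    The embedding of arm a is sum_i w_a[i] * k(., outcomes[i]), with
    normalized inverse-propensity weights (an assumption made for this
    illustration). The distance reduces to a quadratic form in the
    kernel matrix.
    """
    w1 = treated / propensity
    w0 = (1.0 - treated) / (1.0 - propensity)
    w1 = w1 / w1.sum()
    w0 = w0 / w0.sum()
    diff = w1 - w0
    gram = gaussian_kernel(outcomes, outcomes, bandwidth)
    return float(diff @ gram @ diff)
```

With a characteristic kernel, the population version of this distance is zero only when the two counterfactual distributions coincide, which is why the existence of the embeddings carries so much of the argument.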

What would settle it

A large-sample simulation in which the two counterfactual distributions are identical yet the test rejects the null at a rate exceeding the nominal level would refute the claim of asymptotic validity.
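A simulation of this kind could be organized as in the sketch below, which estimates the rejection rate of a test when both samples come from the same distribution. The harness accepts any test that returns a p-value; the permutation test on the difference in means is only a stand-in for the paper's Sinkhorn-based test, and the two-sample setup ignores confounding. All names are hypothetical.

```python
import numpy as np


def mean_diff_permutation_test(x, y, rng, n_perm=99):
    # Stand-in test (an assumption): permutation p-value for |mean(x) - mean(y)|.
    observed = abs(x.mean() - y.mean())
    pooled = np.concatenate([x, y])
    exceed = 0
    for _ in range(n_perm):
        shuffled = rng.permutation(pooled)
        stat = abs(shuffled[:len(x)].mean() - shuffled[len(x):].mean())
        if stat >= observed:
            exceed += 1
    return (1 + exceed) / (1 + n_perm)


def sample_identical(rng, n=30):
    # Both arms are drawn from the same distribution, so the null holds.
    return rng.normal(size=n), rng.normal(size=n)


def null_rejection_rate(test, sample_null, n_rep, alpha, rng):
    """Monte Carlo estimate of the type-I error and its standard error."""
    rejections = 0
    for _ in range(n_rep):
        x, y = sample_null(rng)
        rejections += int(test(x, y, rng) <= alpha)
    rate = rejections / n_rep
    std_error = float(np.sqrt(rate * (1.0 - rate) / n_rep))
    return rate, std_error
```

A rejection rate that stays several standard errors above `alpha` as the sample size grows would be the kind of evidence described above. A rate within a couple of standard errors of `alpha` is consistent with asymptotic validity but does not prove it.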

Figures

Original abstract

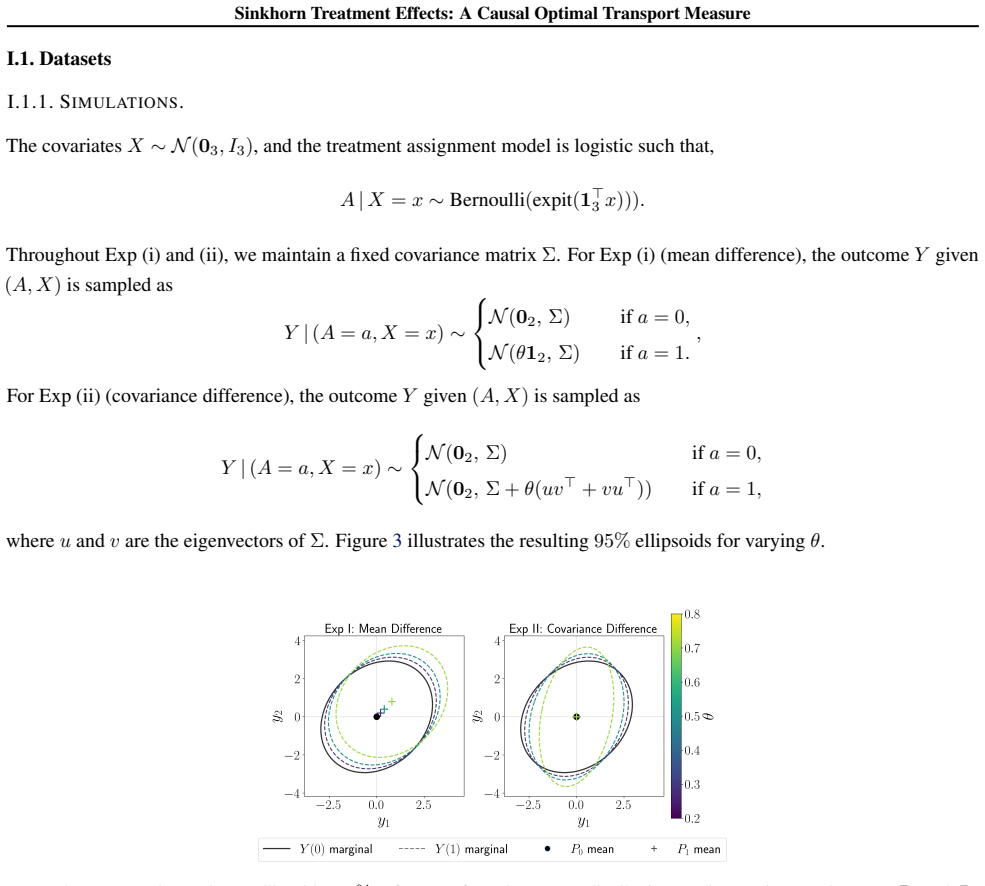

We introduce the Sinkhorn treatment effect, an entropic optimal transport measure of divergence between counterfactual distributions. Unlike classical quantities such as the average treatment effect, this measure captures differences across entire distributions. We analyze this divergence as a statistical functional and show it can be written as a smooth transformation of counterfactual mean embeddings with an appropriate kernel. This characterization allows us to establish first-order pathwise differentiability in general, and second-order pathwise differentiability under the null hypothesis of equal counterfactual distributions. Leveraging this smoothness, we construct debiased estimators and use them to obtain asymptotically valid tests for distributional treatment effects with a fixed entropic regularization parameter. Because the power of the test depends on this unknown parameter, we further propose an aggregated test that combines evidence across a grid of regularization choices. Experiments on simulated and image data demonstrate the practical advantages of our estimator and testing procedure.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces the Sinkhorn treatment effect, an entropic optimal transport divergence between counterfactual distributions, as a measure of distributional treatment effects. It characterizes the quantity as a smooth transformation of counterfactual mean embeddings under a suitable kernel, establishes first-order pathwise differentiability in general and second-order pathwise differentiability under the null of equal counterfactual distributions, constructs debiased estimators, and derives asymptotically valid tests for fixed entropic regularization. An aggregated test over a grid of regularization values is proposed to mitigate power dependence on the unknown parameter. The approach is illustrated on simulated data and image data.

Significance. If the differentiability and asymptotic results hold, the work supplies a computationally tractable, kernel-based causal OT functional that enables rigorous inference on full distributional shifts rather than moments alone. The explicit treatment of the regularization parameter via aggregation and the construction of debiased estimators are practical strengths that could support applications in causal machine learning where testing equality of counterfactual laws is required.

major comments (1)

- The abstract asserts first- and second-order pathwise differentiability together with asymptotic validity of the debiased estimators, yet the provided text supplies no explicit conditions on the kernel, no error bounds, and no verification steps for the second-order expansion under the null. Without these details the support for the central claims on differentiability and test validity cannot be fully assessed.

minor comments (2)

- The dependence of test power on the regularization parameter is acknowledged, but the precise aggregation procedure (weights, grid construction) would benefit from an explicit algorithmic statement.

- Notation for the counterfactual mean embeddings and the Sinkhorn divergence should be introduced with a self-contained definition before the differentiability arguments.

Simulated Author's Rebuttal

We thank the referee for the careful reading and constructive feedback. We have revised the manuscript to supply the missing explicit conditions, error bounds, and verification steps for the differentiability claims.

Point-by-point responses

- Referee: The abstract asserts first- and second-order pathwise differentiability together with asymptotic validity of the debiased estimators, yet the provided text supplies no explicit conditions on the kernel, no error bounds, and no verification steps for the second-order expansion under the null. Without these details the support for the central claims on differentiability and test validity cannot be fully assessed.

  Authors: We agree that the original submission did not provide sufficient explicit conditions or verification details. In the revised manuscript we have added a dedicated subsection (Section 3.2) stating the required kernel assumptions (bounded, continuous, and characteristic kernels with finite RKHS norm), derived explicit first- and second-order pathwise derivative bounds under these conditions, and included a full verification of the second-order expansion under the null (Appendix B.3). These additions directly support the asymptotic validity of the debiased estimators and the proposed tests.

  Revision: yes

Circularity Check

No significant circularity; derivation is self-contained

Full rationale

The paper defines the Sinkhorn treatment effect directly as an entropic OT divergence on counterfactual distributions, represents it as a smooth functional of mean embeddings via an appropriate kernel, and derives first- and second-order pathwise differentiability from that representation using standard functional analysis. Debiased estimators and asymptotic tests follow from the differentiability, with the fixed regularization parameter explicitly acknowledged and addressed via a separate aggregation proposal. No load-bearing step reduces a claimed result to a fitted input, self-citation chain, or definitional tautology; all steps rest on external OT and statistical functional theory.

Axiom & Free-Parameter Ledger

axioms (2)

- Domain assumption: existence of an appropriate positive definite kernel that induces the mean embeddings of counterfactual distributions.

- Domain assumption: standard regularity conditions for pathwise differentiability of the statistical functional.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel (J uniqueness) · unclear

  We define the Sinkhorn treatment effect ... $S(P) = S_\varepsilon \circ J \circ \Psi(P)$ ... first-order pathwise differentiability ... second-order ... under the null ... $\ddot{S}_P = [I - (I - (I - P_X) P_{A|X}) P_{Y|A,X}]^{\otimes 2} g_P$ with $g_P = \omega_P^{\otimes 2} \odot k_{P1}$

- IndisputableMonolith/Foundation/RealityFromDistinction.lean · reality_from_one_distinction · unclear

  $K_\mu = \varepsilon (I - T_\mu^2)^{-1} H_\mu$ ... Hadamard operator of Sinkhorn divergence ... bilinear form $\gamma_1(K_\mu \gamma_2)$

