FLARE: One-Shot PE-Level Fault Localization in Systolic Arrays via Algebraic Test Vectors

Pith reviewed 2026-05-12 01:34 UTC · model grok-4.3

The pith

Pairwise coprime test inputs produce a unique divisibility signature that identifies the faulty row in a systolic array column after one test pass.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

By assigning pairwise coprime integers as test-input entries, a permanent weight-register fault produces a deviation whose divisibility signature uniquely identifies the faulty row. Under a general bounded error model, a single test pass localizes the faulty row with high probability. For INT16 arithmetic, this covers array sizes up to 256 by 256 with localization probability above 0.98, at a test cost under 1 percent of one inference GEMM tile. When one round is insufficient, a second pass using a ratio computation achieves exact localization; for single-bit errors, odd coprime entries guarantee exact localization in one round.

What carries the argument

The divisibility signature extracted from the output deviation when test vectors contain pairwise coprime integers; each row maps to a distinct combination of prime factors that survives bounded arithmetic errors.
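A minimal sketch of how such a signature could be read off in software, assuming the fault adds an integer error `e` to one weight so that the column output deviates by `x_k * e`. The function name `candidate_rows`, the 4-row column, and all numeric values are hypothetical illustrations, not taken from the paper.

```python
from itertools import combinations
from math import gcd


def candidate_rows(test_inputs, deviation):
    """Return the rows whose test input divides the observed output deviation."""
    return [i for i, x in enumerate(test_inputs) if deviation % x == 0]


# Hypothetical 4-row column; the entries are pairwise coprime.
test_inputs = [7, 11, 13, 15]
assert all(gcd(a, b) == 1 for a, b in combinations(test_inputs, 2))

weights = [3, -2, 5, 4]        # fault-free weights (illustrative values)
faulty = list(weights)
faulty[2] += 6                 # assumed additive error e = 6 in row 2

golden = sum(x * w for x, w in zip(test_inputs, weights))
observed = sum(x * w for x, w in zip(test_inputs, faulty))
deviation = observed - golden  # equals test_inputs[2] * 6 = 78

print(candidate_rows(test_inputs, deviation))  # [2]
```

With `e = 14` instead, the deviation is 182, which 7 also divides, so rows 0 and 2 both match. That is the kind of ambiguity a bound on the error magnitude is meant to make rare.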

If this is right

- A single test pass suffices for greater than 0.98 localization probability on INT16 arrays up to 256 by 256.

- A second pass that computes output ratios can deliver exact localization when the first pass is inconclusive.

- Odd coprime entries alone guarantee exact one-pass localization for single-bit errors.

- The method applies to a broader bounded-error fault class than prior dataflow-aware tests that focused mainly on specific error patterns.
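The single-bit and second-pass claims in the list above can be illustrated with a short sketch. It assumes a single-bit fault changes the weight by plus or minus a power of two, and it takes one plausible reading of "ratio computation": a second coprime test vector is applied and the ratio of the two deviations is compared with the ratio of the two test inputs per row. The paper's actual procedure may differ. The names `ratio_match` and `second_inputs` and all values are hypothetical.

```python
from itertools import combinations
from math import gcd


def candidate_rows(test_inputs, deviation):
    """Return the rows whose test input divides the observed deviation."""
    return [i for i, x in enumerate(test_inputs) if deviation % x == 0]


def ratio_match(first_inputs, second_inputs, dev1, dev2, candidates):
    """Keep candidates k with dev2 / dev1 == second_inputs[k] / first_inputs[k].

    Cross-multiplication keeps the comparison in exact integer arithmetic.
    """
    return [k for k in candidates
            if dev2 * first_inputs[k] == dev1 * second_inputs[k]]


# Odd, pairwise coprime entries (hypothetical values).
odd_inputs = [3, 5, 7, 11, 13, 17, 19, 23]
assert all(gcd(a, b) == 1 for a, b in combinations(odd_inputs, 2))

# Single-bit errors: e = +/- 2**bit. An odd x_j > 1 never divides a power of
# two, so only the faulty row's entry divides the deviation x_k * e.
for k, x_k in enumerate(odd_inputs):
    for bit in range(16):
        for sign in (1, -1):
            assert candidate_rows(odd_inputs, sign * x_k * (1 << bit)) == [k]

# Ambiguous first pass: e = 15 in row 2 is also divisible by 3 and 5.
e, k = 15, 2
dev1 = odd_inputs[k] * e
first = candidate_rows(odd_inputs, dev1)          # rows 0, 1 and 2
second_inputs = [29, 31, 37, 41, 43, 47, 53, 59]  # assumed second test vector
dev2 = second_inputs[k] * e                       # assumes the same e recurs
print(first, ratio_match(odd_inputs, second_inputs, dev1, dev2, first))
# [0, 1, 2] [2]
```

The ratio step only separates rows whose per-row ratios `second_inputs[j] / first_inputs[j]` are distinct, and it relies on the permanent fault producing the same error in both passes. Both are assumptions of this sketch.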

Where Pith is reading between the lines

- The algebraic test approach could be adapted to localize faults inside other matrix-multiply accelerators that use regular dataflow.

- The sub-1-percent overhead makes periodic online testing during live inference workloads feasible without dedicated test hardware.

- Software-only row identification may complement existing hardware redundancy schemes and reduce overall silicon cost for reliable AI chips.

- Dynamic selection of coprime sets sized to the current array dimensions could extend the technique to variable-size or reconfigurable arrays.

Load-bearing premise

The faults are permanent weight-register faults and the resulting errors remain small enough that the divisibility signatures stay unambiguous and unique to each row.

What would settle it

Apply the coprime test vector to a known single-row fault in a physical or simulated 256 by 256 array and observe whether the measured deviation's prime-factor set matches only the expected row or matches multiple rows.
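A software-only version of this experiment might look like the sketch below. It models one 256-row column as an exact integer dot product, injects one additive weight error per row, and checks which test inputs divide the deviation. The choice of test inputs (the 256 largest primes below 2^15), the error range, the random seed, and the absence of accumulator overflow are all assumptions made for illustration. This is not the paper's setup.

```python
import random


def primes_below(limit):
    """Sieve of Eratosthenes: all primes strictly below limit."""
    sieve = bytearray([1]) * limit
    sieve[0:2] = b"\x00\x00"
    for p in range(2, int(limit ** 0.5) + 1):
        if sieve[p]:
            sieve[p * p::p] = bytearray(len(range(p * p, limit, p)))
    return [i for i, flag in enumerate(sieve) if flag]


N = 256
test_inputs = primes_below(1 << 15)[-N:]  # assumed: 256 largest primes < 2**15
rng = random.Random(0)
weights = [rng.randint(-(1 << 15), (1 << 15) - 1) for _ in range(N)]
golden = sum(x * w for x, w in zip(test_inputs, weights))

ambiguous = 0
for k in range(N):
    # Assumed error model: nonzero additive error with |e| < 2**16.
    e = rng.choice([-1, 1]) * rng.randint(1, (1 << 16) - 1)
    faulty = list(weights)
    faulty[k] += e
    observed = sum(x * w for x, w in zip(test_inputs, faulty))
    deviation = observed - golden
    matches = [i for i, x in enumerate(test_inputs) if deviation % x == 0]
    assert k in matches  # the faulty row's entry always divides the deviation
    if matches != [k]:
        ambiguous += 1

print(f"{N - ambiguous}/{N} injected faults matched exactly one row")
```

A run that reports any multi-row match shows the failure mode directly. Repeating it with many errors per row would give an empirical localization rate to set against the claimed 0.98.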

Figures

read the original abstract

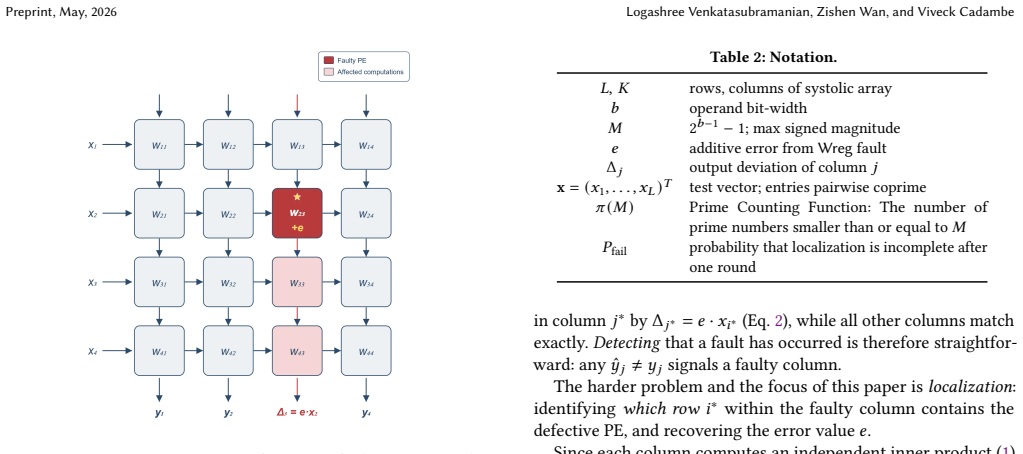

Systolic arrays are the dominant compute fabric for neural network inference. Prior work has addressed column-level fault detection efficiently with uniform test patterns, but row-level (PE-level) fault localization within a faulty column remains open without resorting to hardware redundancy. The fundamental obstacle is that uniform test inputs destroy per-row signatures: any test that activates every row equally cannot distinguish which row is the source of an observed deviation. In this paper, we propose a lightweight, purely algorithmic remedy based on coprime test vectors. By assigning pairwise coprime integers as test-input entries, a permanent weight-register fault produces a deviation whose divisibility signature uniquely identifies the faulty row. Under a general bounded error model, a single test pass localizes the faulty row with high probability. This error model covers a broader class of faults than what prior dataflow-aware testing work has primarily emphasized. When one round is insufficient, a second pass using a ratio computation achieves exact localization; for the special case of single-bit errors, odd coprime entries guarantee exact localization in one round. For INT16 arithmetic, a single test pass covers array sizes up to $256{\times}256$ with localization probability above $0.98$, at a test cost under $1\%$ of one inference GEMM tile.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript presents FLARE, a method for one-shot PE-level (row-level) fault localization in systolic arrays for permanent weight-register faults. It assigns pairwise coprime integers as test-input entries so that the divisibility signature of the observed deviation uniquely identifies the faulty row. Under a general bounded error model, a single test pass achieves localization with high probability (>0.98 for up to 256×256 arrays in INT16 arithmetic) at low overhead (<1% of one GEMM tile); a second pass or odd-coprime choice for single-bit errors yields exact localization.

Significance. If the central claims are substantiated, the work provides a lightweight algorithmic solution to a previously open problem in systolic-array testing, extending column-level detection to PE-level localization without hardware redundancy. The number-theoretic approach (coprime test vectors) is elegant and could influence fault-tolerance techniques in other dataflow architectures. The reported low test cost and coverage for practical array sizes make the result potentially impactful for reliable neural-network accelerators.

major comments (2)

- [Abstract] The claim that a single test pass localizes the faulty row with probability above 0.98 for 256×256 arrays under INT16 arithmetic is stated without any derivation, explicit sequence of pairwise-coprime integers, or precise definition of the bounded error model (in particular, the allowable magnitude of the error e relative to the chosen a_i values). This is load-bearing because the uniqueness of the divisibility signature fails whenever a_j | e for some j ≠ k.

- [Abstract] The general bounded error model is asserted to cover a broader class of faults than prior dataflow-aware testing, yet no formal statement of the model, no proof that collisions remain below 2%, and no explicit bound on |e| are supplied. Without these, the probability guarantee cannot be verified.

minor comments (1)

- [Abstract] The abstract would be clearer if it briefly indicated the construction used to generate the pairwise-coprime test vector (e.g., consecutive primes or a specific number-theoretic sequence).
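The failure condition named in the first major comment can be written out as a short derivation. This is a sketch under stated assumptions, not the paper's theorem: the fault in row $k$ adds a nonzero integer error $e$ to the weight, the output deviation is therefore $a_k e$, and $e$ is taken as uniform over the nonzero integers with $|e| \le M$. The symbol $M$ and the uniformity assumption are introduced here for illustration.

```latex
\begin{align*}
\Delta &= y_{\text{observed}} - y_{\text{golden}} = a_k\, e,
  \qquad e \neq 0,\ |e| \le M \\
\gcd(a_j, a_k) = 1 \;&\Longrightarrow\;
  \bigl( a_j \mid \Delta \iff a_j \mid e \bigr)
  \qquad (j \neq k) \\
\Pr\bigl[\exists\, j \neq k : a_j \mid e\bigr]
  &\le \sum_{j \neq k} \frac{\lfloor M / a_j \rfloor}{M}
\end{align*}
```

The last line is a union bound: exactly $2\lfloor M/a_j \rfloor$ of the $2M$ admissible errors are multiples of $a_j$. If every $a_j$ exceeds $M$, the sum vanishes and the signature is always unique, which is why the size of $|e|$ relative to the $a_i$ decides whether the guarantee holds.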

Simulated Author's Rebuttal

We thank the referee for the careful reading and for identifying areas where the abstract could more explicitly support its central claims. We agree that greater precision on the bounded error model, error bound, and probability derivation would improve verifiability. We will revise the abstract to include a concise definition of the model, an explicit bound on |e|, and a reference to the supporting analysis while preserving brevity. The full formal statements, coprime sequence construction, and collision-probability proof appear in the manuscript body. We address each major comment below.

- Referee: [Abstract] The claim that a single test pass localizes the faulty row with probability above 0.98 for 256×256 arrays under INT16 arithmetic is stated without any derivation, explicit sequence of pairwise-coprime integers, or precise definition of the bounded error model (in particular, the allowable magnitude of the error e relative to the chosen a_i values). This is load-bearing because the uniqueness of the divisibility signature fails whenever a_j | e for some j ≠ k.

  Authors: We agree that the abstract would benefit from additional context. The manuscript supplies an explicit construction of the pairwise-coprime test-vector sequence (first N primes scaled for INT16 range), a precise definition of the bounded error model, and the derivation showing that the probability of an unintended divisibility (a_j | e for j ≠ k) remains below 2% when |e| is bounded relative to the smallest a_i. In the revision we will expand the abstract to state the error bound explicitly and note that the chosen bound precludes the failure case with the reported probability. The complete sequence and step-by-step derivation remain in the body for readers who wish to verify the arithmetic.

  Revision: yes

- Referee: [Abstract] The general bounded error model is asserted to cover a broader class of faults than prior dataflow-aware testing, yet no formal statement of the model, no proof that collisions remain below 2%, and no explicit bound on |e| are supplied. Without these, the probability guarantee cannot be verified.

  Authors: The manuscript contains the formal statement of the bounded error model (any permanent weight-register fault producing an additive output deviation e whose magnitude is bounded by a constant determined by the arithmetic precision), the proof that the collision probability stays below 2% for arrays up to 256×256, and the explicit bound on |e|. These elements establish both the broader fault coverage and the >0.98 localization probability. We will revise the abstract to summarize the model definition, the |e| bound, and the collision-probability result so that the guarantee is verifiable from the abstract itself.

  Revision: yes
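To see how a collision bound of this kind could be checked numerically, the sketch below evaluates a union bound for one assumed construction. It does not reproduce the manuscript's sequence or its proof. The test inputs (the 256 largest primes below 2^15), the error bound `M = 2**16 - 1`, the uniform error distribution, and the helper names are all assumptions.

```python
def is_prime(n):
    """Trial-division primality test, adequate for 16-bit values."""
    if n < 2:
        return False
    i = 2
    while i * i <= n:
        if n % i == 0:
            return False
        i += 1
    return True


def largest_primes_below(limit, count):
    """Return the `count` largest primes strictly below `limit`, ascending."""
    found = []
    n = limit - 1
    while len(found) < count and n >= 2:
        if is_prime(n):
            found.append(n)
        n -= 1
    return found[::-1]


def union_bound(test_inputs, faulty_row, max_error):
    """Upper bound on Pr[some other row's input divides e], e uniform and nonzero."""
    hits = sum(max_error // x
               for i, x in enumerate(test_inputs) if i != faulty_row)
    return hits / max_error


N = 256
M = (1 << 16) - 1                                 # assumed bound on |e|
test_inputs = largest_primes_below(1 << 15, N)    # assumed construction
worst = max(union_bound(test_inputs, k, M) for k in range(N))
print(f"worst-case union bound: {worst:.4f}")     # 0.0078
```

Under these assumptions every test input lies between M/3 and M/2, so each contributes exactly two multiples and the bound is 510/65535. Smaller test inputs or a larger error range would raise it, which is why the referee asks for the explicit bound on |e|.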

Circularity Check

No significant circularity; method rests on external number theory

The derivation assigns pairwise coprime integers to test inputs and relies on the resulting divisibility signatures to identify faulty rows under a bounded error model. This uses standard external facts from number theory (coprime integers and divisibility) rather than any self-referential definition, fitted parameter renamed as prediction, or load-bearing self-citation. No equations in the abstract or described claims reduce to their own inputs by construction; the probability bound for 256×256 arrays is presented as a consequence of the model and coprimality properties, not derived circularly from the paper's own data or prior self-citations. The approach is self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption: Pairwise coprime integers produce unique divisibility signatures that identify individual rows

- domain assumption: Faults are permanent weight-register errors whose magnitude stays within a bounded model

A. Exact Probability via Inclusion-Exclusion

The bound of Theorem 2 applies the union bound, summing $\lfloor M/x_k \rfloor$ over all rows $k \neq i^*$. This can overcount when $e$ is simultaneously divisible by more than on...
